Meta-Harness: End-to-End Optimization of Model Harnesses

This presentation explores Meta-Harness, a breakthrough system that automatically discovers optimal harness code for LLM agents by giving coding agents full access to execution traces, logs, and diagnostic history. Unlike prior text optimizers that compress feedback into summaries, Meta-Harness exploits multi-megatoken diagnostic footprints to perform causal reasoning across long horizons, achieving 4 to 5 times lower context costs while outperforming hand-engineered baselines by up to 8.6 points. The talk demonstrates how agentic file-system access and code-space search unlock robust generalization across text classification, retrieval-augmented reasoning, and terminal-based coding tasks.

Script

Harness engineering can swing agent performance by a factor of 6, yet we still write these orchestration programs by hand. What if a coding agent could read execution traces, inspect hundreds of prior attempts, and evolve better harnesses automatically?

The problem is that traditional optimizers condition only on scores or short summaries. They throw away the execution traces, the logs, the full diagnostic history that reveals why a harness failed. Without this context, you cannot assign credit across complex interventions or trace downstream failures to earlier decisions.

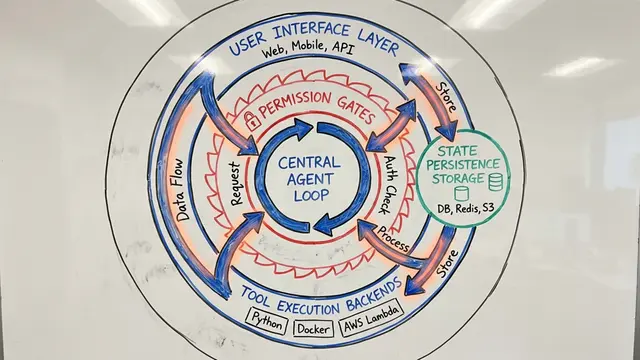

Meta-Harness takes a fundamentally different path by treating harness search as an agentic coding task.

The coding agent explores a directory structure containing every prior harness, every execution trace, every log file. It selectively reads what it needs using grep and cat, inspecting over 20 prior candidates per iteration. This is not Markovian reinforcement learning; this is full-history causal reasoning at a scale that dwarfs prior optimizers by orders of magnitude.
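To make the selective reading concrete, here is a minimal Python sketch of grep-style and cat-style access over an archive of prior candidates. The directory layout (`candidate_000/trace.log`, `harness.py`), the log line format, and the helper names `grep` and `cat` are all assumptions for illustration; the talk does not specify how the archive is organized on disk.

```python
import tempfile
from pathlib import Path
from typing import List, Tuple


def grep(root: Path, needle: str, pattern: str = "*.log") -> List[Tuple[str, int, str]]:
    """Return (relative path, line number, line) for every line containing needle."""
    hits = []
    for path in sorted(root.rglob(pattern)):
        for lineno, line in enumerate(path.read_text().splitlines(), start=1):
            if needle in line:
                hits.append((path.relative_to(root).as_posix(), lineno, line))
    return hits


def cat(path: Path) -> str:
    """Read one file in full, as the agent would after a grep hit."""
    return path.read_text()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        # Hypothetical layout: one directory per prior candidate.
        for name, trace in [
            ("candidate_000", "task=geometry status=fail\ntask=algebra status=pass\n"),
            ("candidate_001", "task=geometry status=pass\n"),
        ]:
            (root / name).mkdir()
            (root / name / "trace.log").write_text(trace)
            (root / name / "harness.py").write_text(f"# harness for {name}\n")

        # Search first, then open only the harness behind each failing trace.
        for rel_path, lineno, line in grep(root, "status=fail"):
            print(f"{rel_path}:{lineno}: {line}")
            print(cat((root / rel_path).parent / "harness.py"))
```

The point of the sketch is the access pattern: search across the whole history, then read only the few files that matter, rather than loading every trace into context.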

Here is how it works. The agent proposes a harness, the system evaluates it on real tasks, and all artifacts go straight into the file system. On the next iteration, the agent can read any subset of that history to inform its next proposal. This selective access is the key. The agent does not just see a score. It sees which retrieval strategy failed on geometry problems, which prompt structure wasted tokens on easy examples, and how environment bootstrapping reduced wasted turns in terminal tasks.
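The propose, evaluate, and archive cycle described here can be sketched in a few lines of Python. This is an illustrative outline under stated assumptions, not the Meta-Harness implementation: `run_search`, `toy_propose`, `toy_evaluate`, the `candidate_NNN` directory naming, and the file names are all hypothetical, and the toy functions stand in for a real coding agent and a real task suite.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, Tuple


def run_search(
    propose: Callable[[Path], str],
    evaluate: Callable[[str], Tuple[float, str]],
    root: Path,
    iterations: int,
) -> Path:
    """Propose, evaluate, and archive candidates; return the best candidate's directory."""
    root.mkdir(parents=True, exist_ok=True)
    for i in range(iterations):
        # The proposer is handed the whole archive directory, not a summary.
        harness_code = propose(root)
        score, trace = evaluate(harness_code)

        # Every artifact goes to disk so later iterations can inspect it.
        cand = root / f"candidate_{i:03d}"
        cand.mkdir()
        (cand / "harness.py").write_text(harness_code)
        (cand / "trace.log").write_text(trace)
        (cand / "score.json").write_text(json.dumps({"score": score}))

    def score_of(directory: Path) -> float:
        return json.loads((directory / "score.json").read_text())["score"]

    # On ties, max() keeps the earliest candidate in sorted order.
    return max(sorted(root.glob("candidate_*")), key=score_of)


def toy_propose(root: Path) -> str:
    # Stand-in for the coding agent: raises one setting per archived candidate.
    n_prior = len(list(root.glob("candidate_*")))
    return f"RETRIES = {n_prior}\n"


def toy_evaluate(harness_code: str) -> Tuple[float, str]:
    # Stand-in for running real tasks: score grows with RETRIES, capped at 1.0.
    retries = int(harness_code.split("=")[1])
    score = min(retries, 2) / 2
    return score, f"retries={retries} score={score}\n"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        best = run_search(toy_propose, toy_evaluate, Path(tmp) / "archive", iterations=4)
        print(best.name, (best / "score.json").read_text())
```

The design choice worth noticing is that `propose` receives a path rather than a score: whatever the proposer wants to know about earlier attempts, it has to go and read.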

The results are striking. On text classification, Meta-Harness reaches the accuracy ceiling in 4 evaluations while prior methods require dozens. On retrieval-augmented math reasoning, discovered policies improve pass at 1 by 4.7 points and generalize to unseen models. On terminal coding, the discovered harness beats the hand-engineered baseline and ranks at the top of the leaderboard. Ablations confirm that raw execution trace access is the critical ingredient, effectively doubling median accuracy compared to score-only variants.

Meta-Harness proves that when you give a coding agent full diagnostic access, it does not just optimize, it engineers. The harnesses it discovers generalize out of distribution, transfer across model variants, and reveal intervention strategies no human would have written by hand. To explore this work in depth and create your own research videos, visit EmergentMind.com.