AIRS-Bench: A Suite of Tasks for Frontier AI Research Science Agents

This presentation introduces AIRS-Bench, a rigorous benchmark designed to evaluate AI agents on authentic machine learning research tasks. Unlike existing benchmarks, AIRS-Bench challenges agents to complete the entire research workflow, from hypothesis to implementation to analysis, on 20 unsolved problems from recent publications, with no baseline code provided. The benchmark reveals a stark performance gap: agents achieve only 23% of human performance on average, with most struggling to even produce valid submissions. Through systematic evaluation of 14 agent configurations, the work identifies critical factors for research automation success and charts a path toward truly autonomous scientific discovery.

Script

Can AI agents truly conduct original machine learning research from scratch, or are we still far from autonomous scientific discovery? Today we explore a benchmark that puts that question to the ultimate test by asking agents to replicate cutting-edge research without any code to start from.

Building on that question, let's examine why evaluating research agents has been so problematic.

Previous evaluation approaches have three critical flaws. They leak solutions through training data, they only test isolated research steps rather than complete workflows, and they lack the experimental rigor needed for meaningful agent comparison.

To address these gaps, the authors constructed AIRS-Bench around 20 genuinely challenging tasks from state-of-the-art papers. Agents receive only a problem description, a dataset, and an evaluation metric, forcing them to generate, execute, and refine working code completely independently.

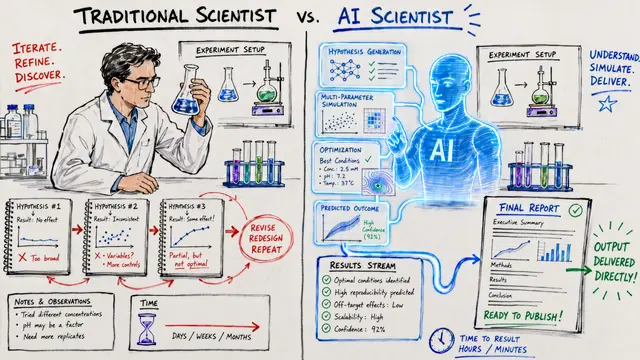

The benchmark architecture separates three key components: the language model provides reasoning, the scaffold orchestrates solution search through algorithms like tree search or evolutionary methods, and the harness manages environment interaction and resource constraints. This modular design enables fair comparison across fundamentally different agentic approaches while maintaining experimental control.
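To make that separation concrete, here is a minimal Python sketch. It is not the benchmark's actual code. Every name in it (`Node`, `Harness`, `greedy_tree_search`, and the `propose` and `evaluate` callables) is hypothetical, a plain function stands in for the language model, and we assume a higher metric value is better.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical interfaces, assumed for illustration only.
# propose(task description, parent code or None) -> candidate code (the "language model")
ProposeFn = Callable[[str, Optional[str]], str]
# evaluate(candidate code) -> metric value, or None when the submission is invalid
EvaluateFn = Callable[[str], Optional[float]]


@dataclass
class Node:
    code: str
    score: Optional[float]  # None marks an invalid submission
    children: List["Node"] = field(default_factory=list)


class Harness:
    """Mediates environment interaction and enforces an evaluation budget."""

    def __init__(self, evaluate: EvaluateFn, max_evaluations: int):
        self.evaluate = evaluate
        self.remaining = max_evaluations

    def run(self, code: str) -> Optional[float]:
        if self.remaining <= 0:
            raise RuntimeError("evaluation budget exhausted")
        self.remaining -= 1
        return self.evaluate(code)


def best_valid(nodes: List[Node]) -> Optional[Node]:
    """Return the highest-scoring valid node, or None if every node is invalid."""
    valid = [n for n in nodes if n.score is not None]
    return max(valid, key=lambda n: n.score) if valid else None


def greedy_tree_search(task: str, propose: ProposeFn, harness: Harness,
                       n_drafts: int, n_steps: int) -> Optional[Node]:
    """Scaffold: draft a few solutions, then repeatedly refine the current best."""
    nodes: List[Node] = []
    for _ in range(n_drafts):
        code = propose(task, None)
        nodes.append(Node(code, harness.run(code)))
    for _ in range(n_steps):
        parent = best_valid(nodes)
        # With no valid parent yet, fall back to drafting from scratch.
        code = propose(task, parent.code if parent else None)
        child = Node(code, harness.run(code))
        if parent is not None:
            parent.children.append(child)
        nodes.append(child)
    return best_valid(nodes)


# Toy stand-ins so the sketch runs end to end.
def toy_propose(task: str, parent_code: Optional[str]) -> str:
    return "1" if parent_code is None else str(int(parent_code) + 1)


def toy_evaluate(code: str) -> Optional[float]:
    return float(code)
```

Because the scaffold only sees the `propose` callable and the `Harness`, a different search strategy or a different model can be swapped in without touching the other two pieces, which is the property that makes comparisons across agents fair.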

With this rigorous framework established, what did the evaluation reveal about current agent capabilities?

The results paint a sobering picture. Nearly half of all agent attempts fail to produce valid submissions, and even successful runs achieve less than a quarter of the progress toward human expert performance. However, when agents do exceed state-of-the-art, it's almost always through parallel search strategies that explore multiple solution paths simultaneously.
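One way to read "progress toward human expert performance" is as a score rescaled between a reference baseline and the published state of the art. The Python sketch below illustrates that reading. The linear formula, the `baseline` reference point, and the function names are assumptions made for illustration, not necessarily the normalization the authors use.

```python
from typing import Optional, Sequence, Tuple


def normalized_progress(agent: float, baseline: float, sota: float) -> float:
    """Assumed linear rescaling: 0.0 at the baseline, 1.0 at state of the art.

    The ratio also works for lower-is-better metrics, because numerator and
    denominator change sign together.
    """
    if sota == baseline:
        raise ValueError("baseline and state of the art must differ")
    return (agent - baseline) / (sota - baseline)


def summarize(runs: Sequence[Optional[float]], baseline: float,
              sota: float) -> Tuple[float, float]:
    """Return (valid submission rate, mean progress over valid runs).

    A run recorded as None produced no valid submission.
    """
    if not runs:
        raise ValueError("at least one run is required")
    valid = [r for r in runs if r is not None]
    valid_rate = len(valid) / len(runs)
    if not valid:
        return valid_rate, 0.0
    mean_progress = sum(normalized_progress(r, baseline, sota) for r in valid) / len(valid)
    return valid_rate, mean_progress
```

Under this assumed scheme, an agent that exceeds the state of the art scores above 1.0, and the valid submission rate is reported separately so that failed runs do not silently drag down or inflate the average.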

This ranking visualization places all tested agents on a unified skill scale, with human state-of-the-art included as a comparison point. The gap is striking: the best performing agents, which pair greedy tree search with large language models, still fall considerably short of human researchers. The ordering also suggests that model scale and scaffold sophistication both matter enormously for research automation.

Despite the overall difficulty, agents occasionally achieved genuine breakthroughs. In four cases, they exceeded the published state-of-the-art results by discovering solution strategies, such as sophisticated model ensembles with meta-learning, that weren't present in the original papers. This demonstrates genuine creative potential when the right scaffolding meets sufficient computational search.

AIRS-Bench reveals we're still in the early stages of autonomous research. The benchmark's unsaturated state provides clear targets for advancement, particularly in developing more sophisticated scaffolds that can effectively use test-time computation. The methodology itself offers a template for rigorous, contamination-controlled evaluation that the community can build upon.

While today's agents show flashes of creativity, the journey to truly autonomous scientific discovery has only just begun. To explore the full benchmark, detailed results, and ongoing developments in AI research automation, visit EmergentMind.com.