Bridging Feature Learning and Matrix Recovery

This presentation explores how Linear Recursive Feature Machines (lin-RFM) provide theoretical guarantees for recovering low-rank matrices through an explicit feature learning mechanism. We'll examine how these machines connect gradient-based feature learning to classical sparse recovery methods, offering both theoretical insights and practical algorithms with provable dimensionality reduction properties.Script

What if we could peek inside the black box of feature learning and understand exactly how neural networks discover low-dimensional structure in high-dimensional data? This paper introduces Linear Recursive Feature Machines, offering the first theoretical guarantees that an explicit feature learning procedure can provably recover low-rank matrices.

Building on this mystery, the authors tackle a fundamental challenge in machine learning theory. While deep networks routinely succeed in high dimensions, we lack rigorous understanding of how they learn to focus on relevant features while ignoring noise.

Let's dive into how Recursive Feature Machines offer a solution to this theoretical gap.

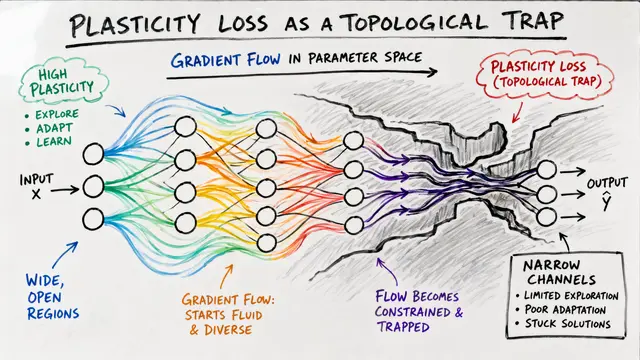

The key insight is remarkably elegant: Recursive Feature Machines alternate between two steps that mirror how we intuitively think about learning. First, they train a predictor on filtered inputs, then update the filter based on which directions the gradients tell us are most important.

When the authors restrict to linear predictors, something beautiful happens: the complex dynamics become analytically tractable. The linear restriction isn't just for convenience—it reveals the essential mechanism while maintaining the core feature learning behavior.

Now we arrive at the paper's central theoretical contribution.

This is where the magic happens: the authors prove that lin-RFM fixed points are exactly the critical points of a well-defined optimization problem. This connection transforms an iterative algorithm into a principled approach with clear theoretical foundations.

Perhaps most remarkably, the authors show that lin-RFM is actually a reparameterization of well-known algorithms like IRLS. This means decades of convergence theory for classical sparse recovery methods now applies to feature learning approaches.

The theory gets even more exciting when we connect to deep neural networks.

The authors provide the first rigorous proof of something called the Neural Feature Ansatz. They show that deep linear networks trained with gradient flow exhibit weight covariances that exactly match specific powers of the gradient outer product matrix.

This insight leads to deep lin-RFM, a multi-layer variant where each layer has its own feature filter. The beautiful result is that fixed points of this system match the implicit bias of deep linear networks, giving us explicit control over what was previously implicit.

Theory is powerful, but implementation matters too.

The authors solve a key computational bottleneck by developing an SVD-free implementation. For certain choices of the phi function, they can maintain the filter matrix squared and update it directly, avoiding expensive singular value decompositions.

For matrix completion specifically, clever engineering makes the algorithm highly practical. By solving the linear system row-wise and exploiting the sparsity pattern, the runtime scales much more favorably than naive implementations.

Let's see how theory translates to practice.

The experimental results are striking. This figure shows that lin-RFM with alpha equals one-half substantially outperforms both deep linear networks and nuclear norm minimization. The algorithm needs thousands fewer observations to achieve consistent low error rates.

The experimental validation spans multiple settings, from sparse regression to noisy matrix completion. Most remarkably, the log-determinant penalty corresponding to alpha equals one-half consistently achieves the strongest empirical performance across different scenarios.

This visualization reveals the algorithm's convergence landscape, showing how different initializations lead to various recovery outcomes. The basin of attraction analysis helps us understand when and why the method succeeds in finding low-rank solutions.

Every breakthrough has boundaries that point toward future discoveries.

The authors are transparent about current limitations. While experiments often work well with epsilon equals zero, the theory requires a small positive epsilon. They also acknowledge that rigorous recovery guarantees for matrix completion remain an open theoretical challenge.

Beyond the immediate results, this work opens exciting new directions. The framework provides a template for analyzing other feature learning mechanisms, while demonstrating that explicit approaches can outperform both classical methods and implicit deep network training.

This paper elegantly bridges the gap between classical optimization and modern feature learning, providing both theoretical insights and practical algorithms for low-rank matrix recovery. The authors show that explicit feature learning mechanisms can achieve what deep networks do implicitly, but with provable guarantees and superior empirical performance. To dive deeper into this fascinating intersection of theory and practice, head over to EmergentMind.com to explore more cutting-edge research.