Max Likelihood RL: Bridging Supervised and Reinforcement Learning Optimization

This presentation explores MaxRL, a principled framework that unifies supervised learning and reinforcement learning by optimizing the true likelihood of correct outputs rather than expected reward. By treating standard RL as a first-order approximation of that likelihood and introducing a harmonic mixture of pass@k gradients, MaxRL addresses coverage collapse, poor sample efficiency, and the optimization failures endemic to standard RL post-training. The talk demonstrates how this approach achieves Pareto improvements across mathematical reasoning, code generation, and navigation tasks, while cutting the number of samples needed at inference by up to 20x through maintained diversity and stable coverage.

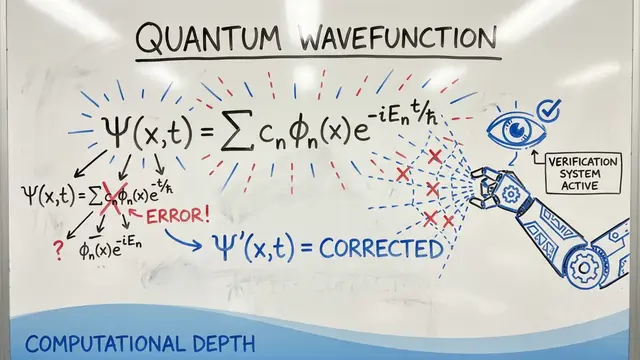

What if the fundamental objective driving reinforcement learning in language models is mathematically incomplete? The authors of this work reveal that standard RL optimizes only a first-order approximation of what we actually care about: the true likelihood of generating correct outputs.

Building on that tension, let's examine why this approximation matters so much in practice.

The researchers demonstrate that conventional reinforcement learning objectives optimize expected reward, which captures only the first-order behavior of the true likelihood. This approximation causes RL to focus disproportionately on easy examples while starving hard problems of gradient signal, leading to the coverage collapse widely observed in practice.
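As a worked step, let $p$ denote the probability that the model produces a correct output for a given prompt, so the expected 0/1 reward is $\mathbb{E}[r] = p$ and the likelihood objective is $\log p$. The expansion below is standard calculus offered as an illustration of the first-order claim, not a reproduction of the paper's own derivation:

```latex
\log p \;=\; \log\bigl(1-(1-p)\bigr) \;=\; -\sum_{k=1}^{\infty} \frac{(1-p)^k}{k}
       \;=\; (p-1) \;-\; \frac{(1-p)^2}{2} \;-\; \frac{(1-p)^3}{3} \;-\; \cdots,
       \qquad 0 < p \le 1

\nabla_\theta \log p \;=\; \frac{\nabla_\theta p}{p}
\qquad\text{versus}\qquad
\nabla_\theta \, \mathbb{E}[r] \;=\; \nabla_\theta p
```

Keeping only the first term gives $p - 1$, which is expected reward up to a constant. In gradient terms, expected-reward RL drops the $1/p$ factor, so a hard prompt with $p = 0.01$ is weighted 100 times less than maximum likelihood would weight it.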

This brings us to the elegant solution the authors propose.

MaxRL exploits a beautiful mathematical insight: the gradient of log-likelihood equals a weighted sum of pass@k gradients with harmonic coefficients. As you increase the sampling budget, you simultaneously reduce variance and move the objective closer to exact likelihood, creating a compute-controllable bridge between RL and supervised learning.
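The harmonic identity can be checked numerically for a single prompt. This is a minimal sketch under stated assumptions, not the authors' code: it takes pass@k(p) = 1 - (1 - p)^k, mixes the pass@1 through pass@T gradients with weights 1/k, and assumes the truncation order T stands in for the sampling budget. Each term contributes (1 - p)^(k-1) times the gradient of p, so the mixture collapses to a geometric series whose limit is the maximum-likelihood factor 1/p.

```python
"""Numerical check of the harmonic pass@k identity (illustrative sketch).

Assumptions: one prompt with success probability p, and
pass@k(p) = 1 - (1 - p)**k, the chance that at least one of k
independent samples is correct. T is a hypothetical truncation order
standing in for the sampling budget.
"""


def dpass_at_k_dp(p: float, k: int) -> float:
    """Derivative of pass@k with respect to p: k * (1 - p)**(k - 1)."""
    return k * (1.0 - p) ** (k - 1)


def truncated_weight(p: float, T: int) -> float:
    """Coefficient on grad(p) after mixing pass@1..pass@T gradients with weights 1/k."""
    return sum(dpass_at_k_dp(p, k) / k for k in range(1, T + 1))


def closed_form_weight(p: float, T: int) -> float:
    """Geometric-series closed form of the same sum: (1 - (1 - p)**T) / p."""
    return (1.0 - (1.0 - p) ** T) / p


if __name__ == "__main__":
    p = 0.05
    for T in (1, 4, 16, 64, 256):
        print(f"T={T:4d}  series={truncated_weight(p, T):8.4f}  "
              f"closed form={closed_form_weight(p, T):8.4f}  1/p={1 / p:.1f}")
```

With T = 1 the weight is exactly 1, which is plain expected-reward RL; as T grows the weight climbs toward 1/p = 20, the maximum-likelihood weight for this prompt.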

When we view these methods through the lens of weight functions, the distinction becomes crystal clear. Standard RL and GRPO apply relatively uniform weighting and cannot improve their objective alignment regardless of compute, while MaxRL progressively approaches the ideal 1 over p weighting of maximum likelihood as rollouts increase.
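To see the weight-function picture in numbers, the sketch below compares per-prompt weights on the gradient of the pass rate p. The forms are assumptions for illustration: 1 for expected-reward RL, (1 - (1 - p)^T) / p for MaxRL truncated at a hypothetical budget T, and 1/p for maximum likelihood. GRPO is left out because its effective weight depends on normalization details. Dividing each weight by 1/p shows how much of the maximum-likelihood weight a method recovers.

```python
"""Per-prompt gradient weights as a function of pass rate p (illustrative sketch).

Each weight multiplies grad(p) for one prompt. Assumed forms:
  expected-reward RL : w(p) = 1
  MaxRL, budget T    : w(p) = (1 - (1 - p)**T) / p
  maximum likelihood : w(p) = 1 / p
"""


def w_rl(p: float) -> float:
    """Expected-reward RL: the same weight for every prompt."""
    return 1.0


def w_maxrl(p: float, T: int) -> float:
    """Harmonic pass@k mixture truncated at a hypothetical budget T."""
    return (1.0 - (1.0 - p) ** T) / p


def w_ml(p: float) -> float:
    """Exact maximum likelihood: gradient of log p equals grad(p) / p."""
    return 1.0 / p


def fraction_of_ml(weight: float, p: float) -> float:
    """Share of the maximum-likelihood weight recovered: weight / (1 / p)."""
    return weight * p


if __name__ == "__main__":
    for p in (0.001, 0.01, 0.1, 0.5, 0.9):
        rl = fraction_of_ml(w_rl(p), p)
        maxrl = "  ".join(
            f"T={T}:{fraction_of_ml(w_maxrl(p, T), p):.3f}" for T in (4, 64, 1024)
        )
        print(f"p={p:<6} ML weight={w_ml(p):8.1f}  RL:{rl:.3f}  MaxRL {maxrl}")
```

Under these forms the RL fraction equals p no matter how many rollouts are spent, while the MaxRL fraction equals pass@T and approaches 1 as T grows; the gap is widest on hard prompts with small p.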

In controlled experiments on image classification, the authors validate this theoretical prediction. With a ResNet model on ImageNet, MaxRL with sufficient rollouts nearly replicates exact maximum likelihood training via cross-entropy. Meanwhile, standard RL fails to escape low initial pass rates even with orders of magnitude more samples, precisely because it lacks the gradient reweighting necessary to learn from hard examples.
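A toy version of this setup helps show why reweighting matters. The sketch below is hypothetical and is not the paper's ResNet/ImageNet pipeline: a softmax over 1000 classes acts as the policy, a sampled label earns reward 1 only if it is the correct class, and the pass rate is p = pi[c]. It compares REINFORCE, which divides by all N samples, with a success-averaged estimator that divides by the number of correct samples K. Treating that second estimator as MaxRL-style is an assumption; its expectation is pass@N times the gradient of log p, which matches the truncated weight with T = N.

```python
"""Classification as one-step RL: gradient estimators on the logits (toy sketch).

Hypothetical setup: policy pi = softmax(z), action = sampled label y,
reward 1 if y == c. The score grad_z log pi[y] equals onehot(y) - pi.
"""
import math
import random


def softmax(z):
    """Numerically stable softmax over a list of logits."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def score(pi, y):
    """Gradient of log pi[y] with respect to the logits: onehot(y) - pi."""
    return [(1.0 if j == y else 0.0) - pj for j, pj in enumerate(pi)]


def successful_score_sum(pi, c, labels):
    """Sum of score vectors over sampled labels that earned reward 1, plus their count."""
    total = [0.0] * len(pi)
    k = 0
    for y in labels:
        if y == c:
            total = [a + b for a, b in zip(total, score(pi, y))]
            k += 1
    return total, k


def rl_estimate(pi, c, labels):
    """REINFORCE with 0/1 reward: divide by all N samples (expectation p * grad log p)."""
    total, _ = successful_score_sum(pi, c, labels)
    return [v / len(labels) for v in total]


def success_averaged_estimate(pi, c, labels):
    """Assumed MaxRL-style estimator: divide by the number of successes K instead of N."""
    total, k = successful_score_sum(pi, c, labels)
    if k == 0:
        return [0.0] * len(pi)  # no correct sample, so no signal from this example
    return [v / k for v in total]


def norm(v):
    """Euclidean norm of a list of floats."""
    return math.sqrt(sum(x * x for x in v))


if __name__ == "__main__":
    rng = random.Random(0)
    num_classes, c = 1000, 7  # hypothetical 1000-way problem with class 7 correct
    pi = softmax([rng.gauss(0.0, 1.0) for _ in range(num_classes)])
    ce = score(pi, c)  # exact cross-entropy ascent direction, grad_z log pi[c]
    for n in (16, 256, 4096):
        labels = rng.choices(range(num_classes), weights=pi, k=n)
        rl = rl_estimate(pi, c, labels)
        mx = success_averaged_estimate(pi, c, labels)
        gap = norm([a - b for a, b in zip(mx, ce)])
        print(f"N={n:5d} K={labels.count(c):3d} |RL|/|CE|={norm(rl) / norm(ce):.4f} "
              f"|success-avg - CE|={gap:.2e}")
```

Because every correct sample has the same score vector in this toy problem, the success-averaged estimate equals the cross-entropy gradient exactly whenever at least one label is correct, while the RL estimate is that gradient shrunk by K/N, roughly p. With too few samples, K is often zero and neither estimator gets any signal.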

These dynamics translate into substantial practical advantages across multiple domains.

On billion-parameter language models trained for mathematical reasoning, MaxRL achieves Pareto improvements over the state of the art across 4 benchmarks. Critically, by preventing coverage collapse, it reduces the number of samples needed at inference by up to 20 times when a verifier is available, directly translating theoretical elegance into deployment efficiency.
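The link between coverage and inference cost can be sketched with a simple model. The assumptions here are illustrative and the numbers are hypothetical, not the paper's measurements: samples are independent with per-problem pass rate p, and a verifier recognizes any correct sample, so the chance of success within k tries is pass@k = 1 - (1 - p)^k. The block also includes the standard unbiased pass@k estimator, 1 - C(n - c, k) / C(n, k), widely used in code-generation evaluation.

```python
"""Inference budget with a verifier (illustrative sketch, hypothetical numbers).

Assumptions: independent samples, each correct with per-problem pass rate p,
and a verifier that accepts any correct sample.
"""
import math


def pass_at_k(p: float, k: int) -> float:
    """Probability that at least one of k independent samples is correct."""
    return 1.0 - (1.0 - p) ** k


def samples_needed(p: float, target: float) -> float:
    """Smallest k with pass@k >= target; infinite if coverage has collapsed to p = 0."""
    if p <= 0.0:
        return math.inf
    if p >= 1.0:
        return 1
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p))


def unbiased_pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k from n samples with c correct: 1 - C(n - c, k) / C(n, k)."""
    if n - c < k:
        return 1.0  # every size-k subset contains a correct sample
    return 1.0 - math.comb(n - c, k) / math.comb(n, k)


if __name__ == "__main__":
    # Hypothetical pass rates on one hard problem under three policies.
    for name, p in (("collapsed", 0.0), ("low coverage", 0.005), ("higher coverage", 0.05)):
        print(f"{name:16s} p={p:<6} samples for 90% success: {samples_needed(p, 0.9)}")
    print(unbiased_pass_at_k(n=100, c=3, k=10))
```

The design point is that a policy whose pass rate on a problem has collapsed to zero cannot be rescued by any inference budget, while even a modest nonzero pass rate makes verifier-guided sampling tractable.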

The mechanism behind these gains is visible in the training dynamics. Throughout optimization, MaxRL generates at least one correct solution for a substantially larger fraction of training prompts than the alternatives do. This broader exploration prevents premature convergence and extracts a richer learning signal from the same data, addressing a fundamental bottleneck in self-improvement protocols.
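The coverage statistic described here is easy to compute from rollout rewards. The sketch below assumes binary rewards stored as one list of 0/1 values per prompt; the function names are hypothetical. Under a 0/1 reward, a prompt whose rollouts all fail contributes zero policy-gradient signal, so this fraction tracks how much of a batch is actually producing learning signal. If rollouts are independent with pass rate p_i, the expected coverage with N rollouts is the mean of 1 - (1 - p_i)^N.

```python
"""Training-time coverage: share of prompts with at least one correct rollout.

Sketch under assumptions: binary rewards, one list of 0/1 values per prompt,
and independent rollouts when computing the expected value.
"""


def empirical_coverage(rewards_per_prompt):
    """Share of prompts where any rollout earned reward 1."""
    if not rewards_per_prompt:
        return 0.0
    hits = sum(1 for rewards in rewards_per_prompt if any(r == 1 for r in rewards))
    return hits / len(rewards_per_prompt)


def expected_coverage(pass_rates, n_rollouts):
    """Expected share of prompts with at least one success among n_rollouts samples."""
    return sum(1.0 - (1.0 - p) ** n_rollouts for p in pass_rates) / len(pass_rates)


if __name__ == "__main__":
    batch = [[0, 0, 1, 0], [0, 0, 0, 0], [1, 1, 0, 1], [0, 0, 0, 0]]
    print(empirical_coverage(batch))  # 0.5: two of the four prompts are covered
    print(expected_coverage([0.5, 0.01, 0.9, 0.0], n_rollouts=16))
```

Tracking this number during training gives a direct view of the effect described above: a method that keeps more prompts covered keeps more of the dataset contributing gradient.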

Maximum Likelihood Reinforcement Learning shows us that coverage collapse is not an inevitable artifact of RL, but a consequence of objective misspecification we can now correct. To dive deeper into the math, experiments, and open directions, visit EmergentMind.com.