Retrieval-Aware Distillation for Transformer-SSM Hybrids

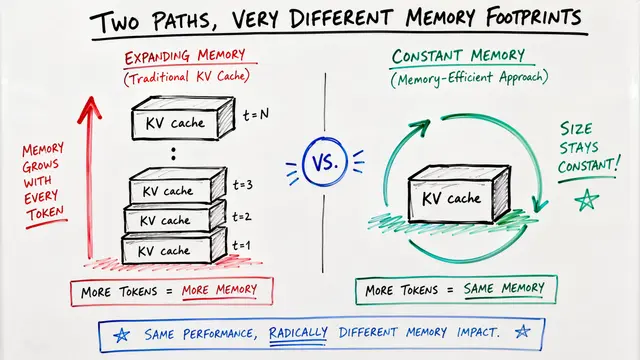

This presentation explores a breakthrough architectural distillation framework that compresses pretrained Transformers into efficient state-space model hybrids. By identifying and preserving only the small subset of attention heads critical for retrieval tasks, researchers achieve 95% performance recovery with just 2% of attention heads, reducing memory footprint by 5-6x while maintaining knowledge-focused capabilities. The work demonstrates that retrieval capacity in Transformers is concentrated rather than distributed, enabling principled hybrid design for resource-constrained deployment.Script

What if we could pinpoint exactly which parts of a Transformer are doing the heavy lifting for memory retrieval, and replace everything else with something far more efficient? That precise question drives this breakthrough work on retrieval-aware distillation.

Building on that tension, we face a fundamental trade-off. The authors observed that while state-space models scale beautifully, they fail at associative recall precisely because they lack the specialized attention heads Transformers use for retrieval.

So how do we bridge this gap intelligently?

The method unfolds in two elegant phases. They first identify which attention heads actually matter for retrieval by measuring performance drops when each is removed, then surgically preserve only those critical heads while converting everything else to efficient recurrent units.

This data-driven approach fundamentally differs from prior work. Where traditional hybrids scatter attention throughout the architecture hoping to cover all bases, retrieval-aware distillation precisely targets the handful of heads that empirically drive recall performance.

The experimental validation reveals striking efficiency gains.

Testing on Llama and Qwen model families, the results are remarkable. The hybrid students achieve near-teacher performance on retrieval-heavy tasks while slashing memory requirements, and they do so with a tiny fraction of the attention resources previous methods required.

Deeper analysis reveals why this works. The authors demonstrate that retrieval operations genuinely concentrate in specific attention heads, a pattern that reproduces consistently across architectures, and that most SSM capacity in pure models is merely compensating for missing retrieval primitives rather than capturing useful linguistic structure.

These findings open exciting practical and theoretical pathways. The work enables efficient deployment of capable language models on resource-limited hardware, while raising fascinating questions about architectural modularity, whether patterns hold at massive scale, and how we might further specialize components for distinct computational roles.

Retrieval-aware distillation shows us that intelligence in neural architectures can be surgically preserved rather than uniformly distributed, a principle that may reshape how we build efficient models. Visit EmergentMind.com to explore the full paper and dive deeper into this research.