Bootstrapping Life-Inspired Machine Intelligence: From Chemistry to Cognition

This presentation explores a radical reimagining of artificial intelligence by looking beyond brain-centric models to the full spectrum of biological problem-solving. The paper introduces the cognitive light cone as a formal framework for measuring intelligence across diverse agents. It proposes five design principles derived from living systems (autonomy, multiscale self-assemblage, continuous reconstruction, embodiment exploitation, and pervasive signaling) that could enable machines to achieve the flexibility, scalability, and creativity observed in nature.

Script

What if the secret to creating truly intelligent machines isn't hidden in brains at all, but scattered across every living system from bacteria to human societies? This paper argues that biology's full repertoire of problem-solving, not just neural computation, holds the blueprint for flexible, creative artificial intelligence.

Building on that insight, let's examine why current AI falls short.

The authors identify four critical shortcomings. Today's systems optimize the objectives we give them, but they can't set their own goals or protect them. They're trained once and then deployed, unable to rebuild themselves the way living tissue does. And they rarely leverage the computational affordances of their physical substrate the way organisms do.

To address these limitations, the paper introduces a powerful new formalism.

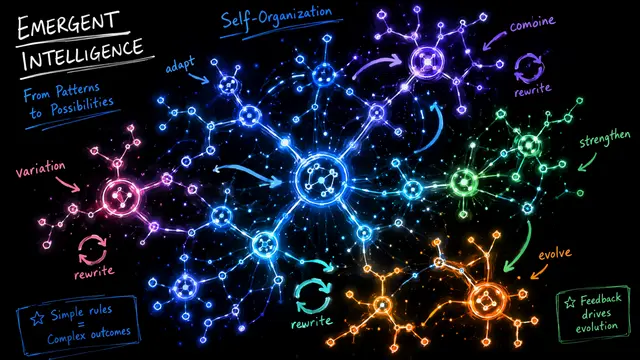

This concept, called the cognitive light cone, provides a unified metric for intelligence. It defines the maximum extent in space and time over which an agent can independently set, represent, and pursue goals. A bacterium's light cone is narrow and local, while human societies extend theirs across generations and continents.

With this framework established, the authors extract five core principles from living systems.

These first two principles address goal-setting and scalability. Living systems generate and protect their own objectives through autonomous agency, while achieving scale through compositional hierarchies where smaller agents coordinate within larger collectives, each maintaining local control while contributing to shared goals.

Turning to adaptation, the third principle emphasizes continuous reconstruction at multiple timescales, from cellular repair to behavioral learning, treating instability as a resource rather than a threat. The fourth principle reframes embodiment not as a limitation but as an opportunity: organisms exploit physics, biomechanics, and environmental structure to offload and enhance cognition.

The fifth principle addresses coordination through pervasive signaling. Biological systems use diverse communication channels to establish flexible hierarchies where high-level objectives propagate downward and are realized through local adaptation, achieving global coherence without rigid centralization, a capability today's AI architectures fundamentally lack.

These principles aren't speculative; they're grounded in extensive evidence. The authors synthesize findings from evolutionary biology, developmental science, and cognitive neuroscience, demonstrating that even simple organisms exhibit sophisticated problem-solving through these mechanisms, providing a readily observable template for engineering.

Looking forward, these insights open transformative research directions. Imagine robots that set their own exploratory goals, modular AI systems that scale through nested autonomy, and intelligence measured not by benchmark scores but by the scope of problems an agent can independently formulate and solve. Pursuing these directions will also demand careful attention to the ethical implications of truly autonomous systems.

By adopting biology's multiscale strategies for flexible problem-solving, we may finally bridge the gap between narrow task optimization and the open-ended creativity that defines intelligence. Visit EmergentMind.com to explore more cutting-edge AI research.