AI Co-Mathematician: Agents That Think Like Research Groups

This presentation introduces an agentic AI system designed to act as a genuine collaborative partner in mathematical research. Unlike traditional theorem-proving tools, the AI co-mathematician orchestrates multiple specialized agents in a persistent workspace that mirrors how human mathematicians actually work: iteratively exploring conjectures, managing uncertainty, preserving failed attempts, and integrating computational experiments with formal reasoning. Through case studies and benchmark results, we examine how this architecture reached 48% accuracy on the challenging FrontierMath Tier 4 benchmark and helped professional mathematicians resolve open problems.

Script

Most AI math tools try to solve problems in one shot, but real mathematical research is messy, collaborative, and iterative. The AI co-mathematician recasts AI as a team of specialized agents that work the way mathematicians actually do, managing uncertainty and learning from dead ends.

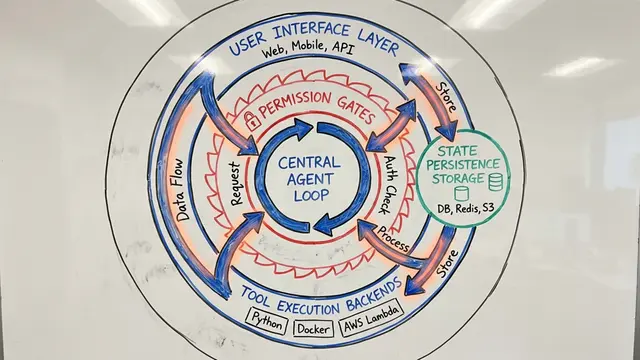

At its core, the system organizes agents like a research group. A project coordinator interfaces with the human mathematician, delegating goals to workstream coordinators that spin up specialized sub-agents for literature review, computational experiments, or proof construction, all working in parallel.
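The delegation hierarchy described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the system's actual implementation: the class names, the role keys, and the three sub-agent functions are hypothetical stubs that return strings where a real system would call a model or external tools. Parallelism is modeled with a thread pool.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical sub-agents: stubs that return a short report string.
# A real system would invoke a language model or external tools here.
def literature_review(goal: str) -> str:
    return f"literature notes for: {goal}"

def computational_experiment(goal: str) -> str:
    return f"experiment results for: {goal}"

def proof_construction(goal: str) -> str:
    return f"proof sketch for: {goal}"

SUB_AGENTS: Dict[str, Callable[[str], str]] = {
    "literature": literature_review,
    "experiment": computational_experiment,
    "proof": proof_construction,
}

@dataclass
class WorkstreamCoordinator:
    """Owns one goal and fans it out to specialized sub-agents in parallel."""
    goal: str
    roles: List[str]

    def run(self) -> Dict[str, str]:
        with ThreadPoolExecutor(max_workers=max(1, len(self.roles))) as pool:
            # Submit every sub-agent at once, then collect results by role.
            futures = {role: pool.submit(SUB_AGENTS[role], self.goal) for role in self.roles}
            return {role: fut.result() for role, fut in futures.items()}

@dataclass
class ProjectCoordinator:
    """Interfaces with the mathematician and delegates goals to workstreams."""
    workstreams: List[WorkstreamCoordinator] = field(default_factory=list)

    def delegate(self, goal: str, roles: List[str]) -> WorkstreamCoordinator:
        ws = WorkstreamCoordinator(goal, roles)
        self.workstreams.append(ws)
        return ws

    def run_all(self) -> Dict[str, Dict[str, str]]:
        return {ws.goal: ws.run() for ws in self.workstreams}

# Usage: one goal from the mathematician, split across three specialist roles.
coordinator = ProjectCoordinator()
coordinator.delegate("bound the invariant", ["literature", "experiment", "proof"])
reports = coordinator.run_all()
```

Keeping the project coordinator separate from the workstream coordinators means the human only talks to one agent, while each workstream can scale its own pool of sub-agents independently.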

Each workstream follows a structured trajectory: querying databases, running code, generating reports, and integrating feedback. Crucially, the system preserves every failed attempt, not just successes, building a durable record of what doesn't work that prevents wasted effort.
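A record that keeps failures alongside successes can be sketched as an append-only log that the coordinator consults before retrying an approach. This is a hypothetical illustration: the `Attempt`, `AttemptLog`, and `run_step` names, the step labels, and the skip-if-already-failed policy are assumptions, not details confirmed by the source.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List

class Outcome(Enum):
    SUCCESS = "success"
    FAILURE = "failure"

@dataclass(frozen=True)
class Attempt:
    step: str        # hypothetical step label: "query", "compute", "report", "feedback"
    approach: str    # short description of what was tried
    outcome: Outcome
    note: str = ""   # why it failed, or what it produced

@dataclass
class AttemptLog:
    """Append-only record: failed attempts are kept, never discarded."""
    entries: List[Attempt] = field(default_factory=list)

    def record(self, attempt: Attempt) -> None:
        self.entries.append(attempt)

    def already_failed(self, approach: str) -> bool:
        return any(a.approach == approach and a.outcome is Outcome.FAILURE
                   for a in self.entries)

    def failures(self) -> List[Attempt]:
        return [a for a in self.entries if a.outcome is Outcome.FAILURE]

def run_step(log: AttemptLog, step: str, approach: str, ok: bool, note: str = "") -> bool:
    """Skip an approach already known to fail; otherwise record its outcome."""
    if log.already_failed(approach):
        return False
    log.record(Attempt(step, approach, Outcome.SUCCESS if ok else Outcome.FAILURE, note))
    return ok

# Usage: a failed computation is logged, so a later identical retry is skipped.
log = AttemptLog()
run_step(log, "compute", "brute-force search up to n=10", ok=False, note="timed out")
run_step(log, "query", "look up known values in a database", ok=True)
retried = run_step(log, "compute", "brute-force search up to n=10", ok=True)
```

Matching on an exact approach string is deliberately simple; a practical system would need a looser notion of "the same approach" to get real value from its record of dead ends.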

On the FrontierMath Tier 4 benchmark, the AI co-mathematician solved 48% of problems, more than doubling Gemini 3.1 Pro's 19%. The gain did not come from raw reasoning power alone; it came from orchestrating tools, managing multiple solution paths, and enforcing multi-agent review.
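The "more than doubling" claim follows directly from the two reported accuracies, which differ by 29 percentage points:

$$\frac{48\%}{19\%} \approx 2.53 > 2, \qquad 48\% - 19\% = 29 \text{ percentage points}$$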

The system isn't without risks. Iterative agent review can trap the system in death spirals, where reviewers and provers revise endlessly without converging. More subtly, a flawed argument can pass review when the agents settle into a local equilibrium that feels convincing but hides errors.
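One generic way to bound such a loop is sketched below: alternate review and revision, and stop on acceptance, on a repeated set of objections, or at a round cap. This is a hypothetical mitigation, not the system's documented mechanism; `review_loop`, the prover and reviewer callables, and the status strings are all illustrative assumptions. Note that the "accepted" exit only means the agents agree, which is exactly the local-equilibrium risk described above.

```python
from typing import Callable, FrozenSet, List, Tuple

# Hypothetical agent interfaces: the prover revises a draft given objections;
# the reviewer returns the objections it still has (empty set = accepted).
Prover = Callable[[str, FrozenSet[str]], str]
Reviewer = Callable[[str], FrozenSet[str]]

def review_loop(draft: str, prove: Prover, review: Reviewer,
                max_rounds: int = 5) -> Tuple[str, str]:
    """Return (final_draft, status), status being "accepted", "cycle", or "cap"."""
    seen: List[FrozenSet[str]] = []
    for _ in range(max_rounds):
        objections = review(draft)
        if not objections:
            # Agreement between agents, not a guarantee of correctness.
            return draft, "accepted"
        if objections in seen:
            # The same objections came back: revisions are going in circles.
            return draft, "cycle"
        seen.append(objections)
        draft = prove(draft, objections)
    # Round budget exhausted without convergence.
    return draft, "cap"

# Usage 1: the prover ignores the objection, so the loop detects a cycle.
_, stuck = review_loop("draft", lambda d, o: d, lambda d: frozenset({"gap in step 2"}))

# Usage 2: one revision satisfies the reviewer.
fixed, ok = review_loop(
    "draft",
    lambda d, o: d + " fixed",
    lambda d: frozenset() if "fixed" in d else frozenset({"gap in step 2"}),
)

# Usage 3: every revision triggers a new objection, so the round cap stops it.
capped, capped_status = review_loop(
    "d", lambda d, o: d + "x", lambda d: frozenset({f"issue {len(d)}"}), max_rounds=3
)
```

A "cycle" or "cap" result is a natural point to escalate to the human mathematician rather than spend more rounds.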

By treating AI as a collaborative team rather than an oracle, the AI co-mathematician offers a practical blueprint for accelerating mathematical discovery. If you want to explore more systems reshaping research with AI, visit EmergentMind.com to dive deeper and create your own presentation.