- The paper introduces a whole-body yaw maneuver strategy that maximizes geometric constraints and reduces pose error in feature-deprived scenarios.

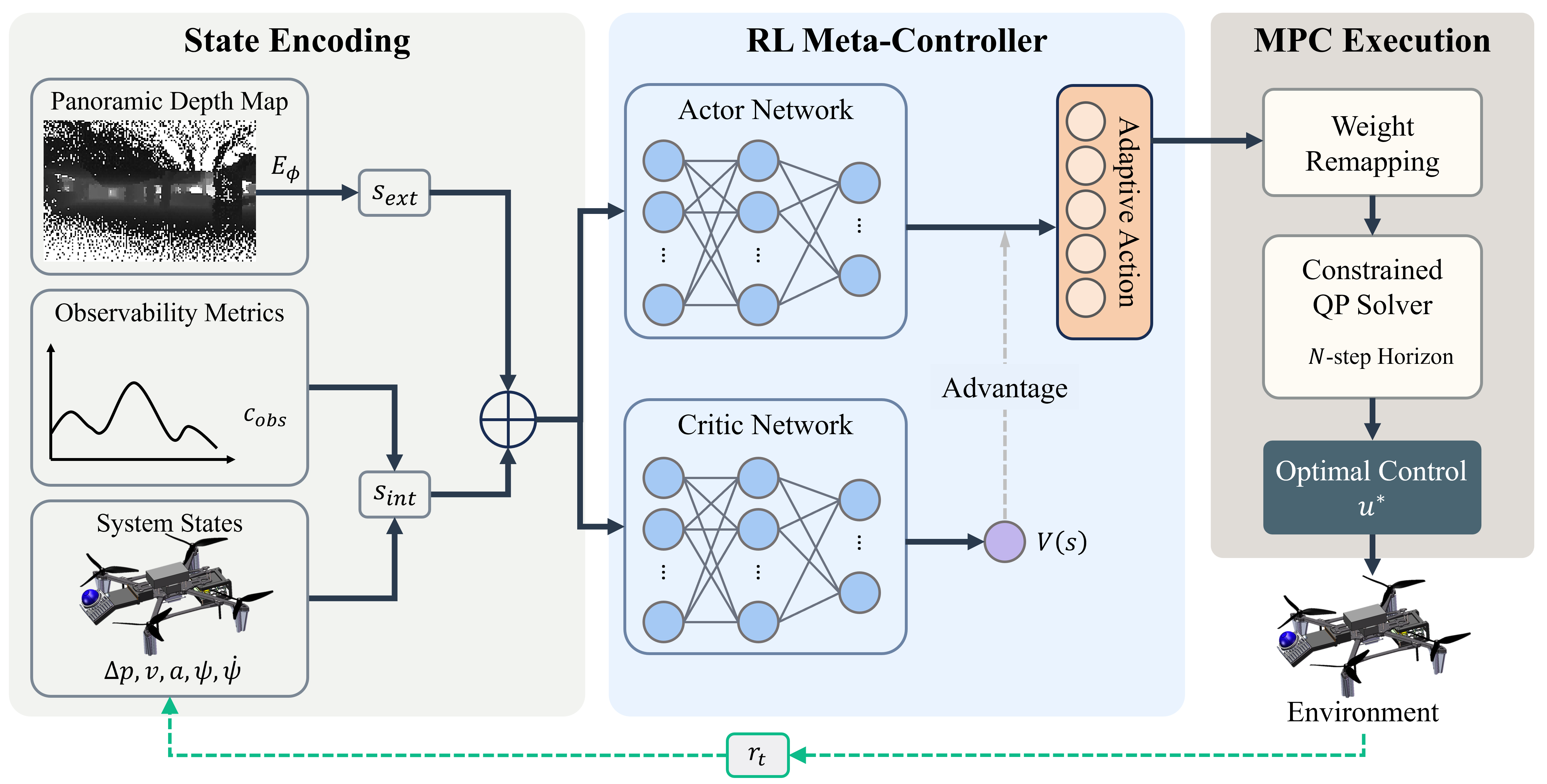

- The paper develops a hybrid RL-MPC architecture that fuses panoramic depth mapping with Fisher Information-based observability analysis for adaptive control.

- The paper demonstrates significant improvements in localization accuracy, reducing median pose error by 27.8% in simulations and achieving low-drift real-world performance.

Adaptive Whole-body Active Rotating Control for Enhanced LiDAR-Inertial Odometry under Human-in-the-Loop Interaction

Introduction and Motivation

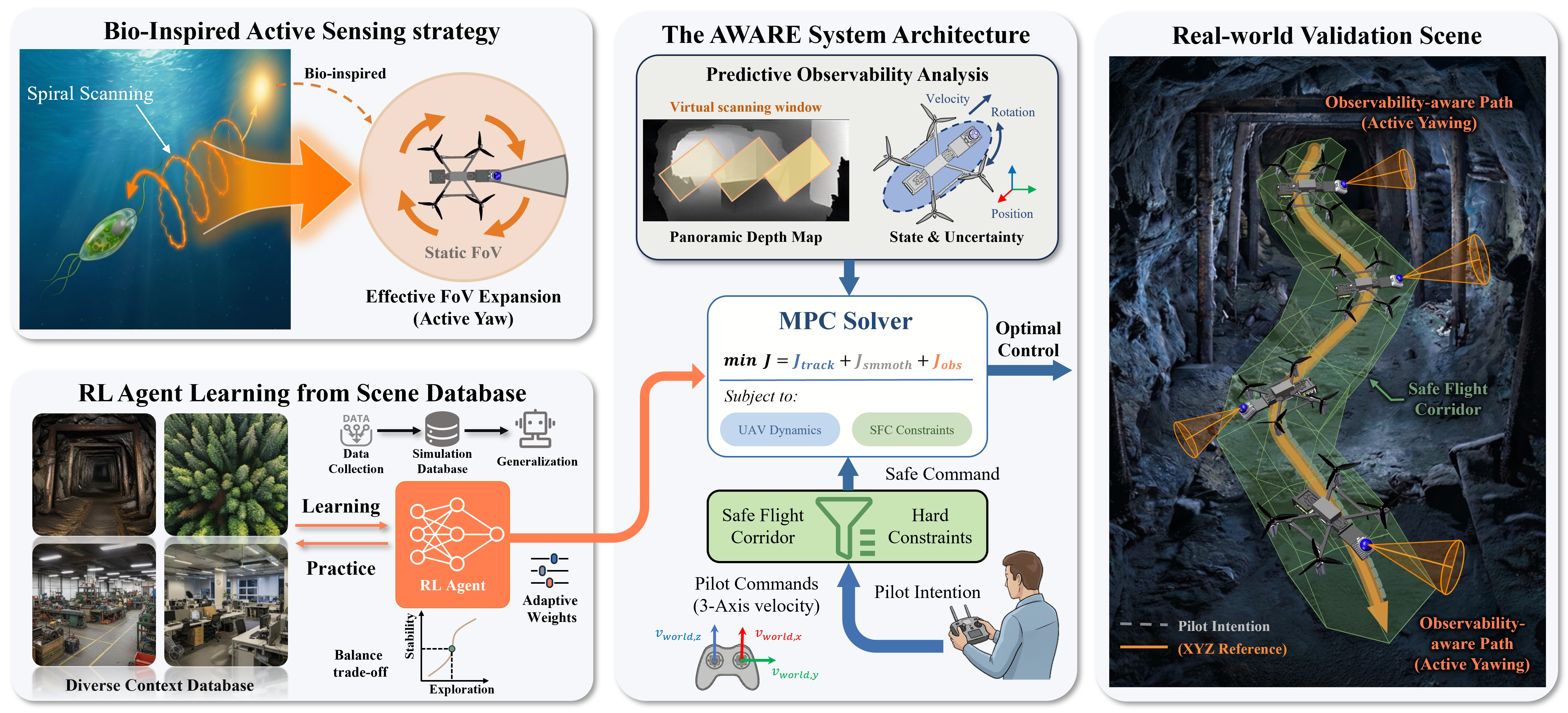

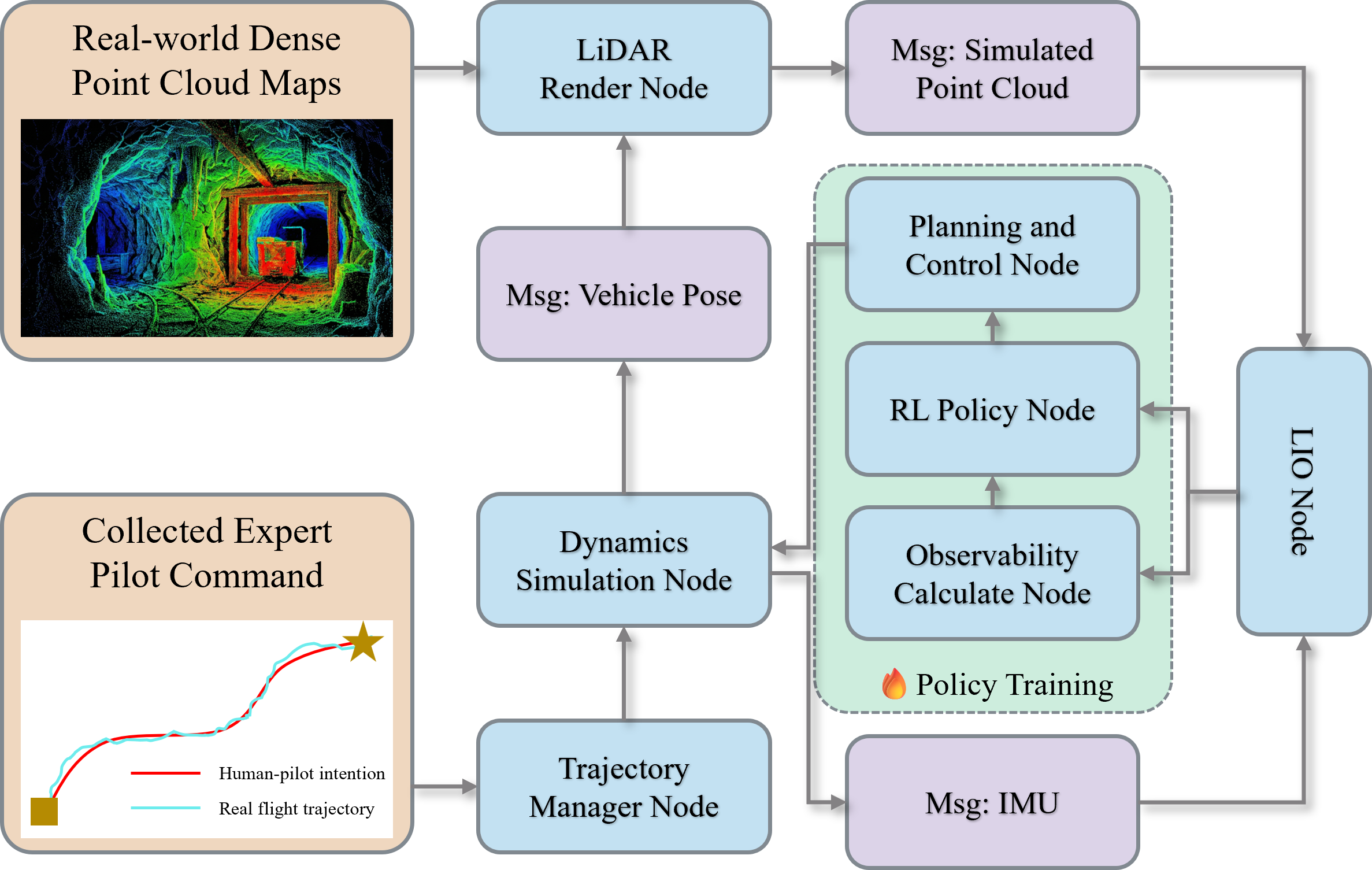

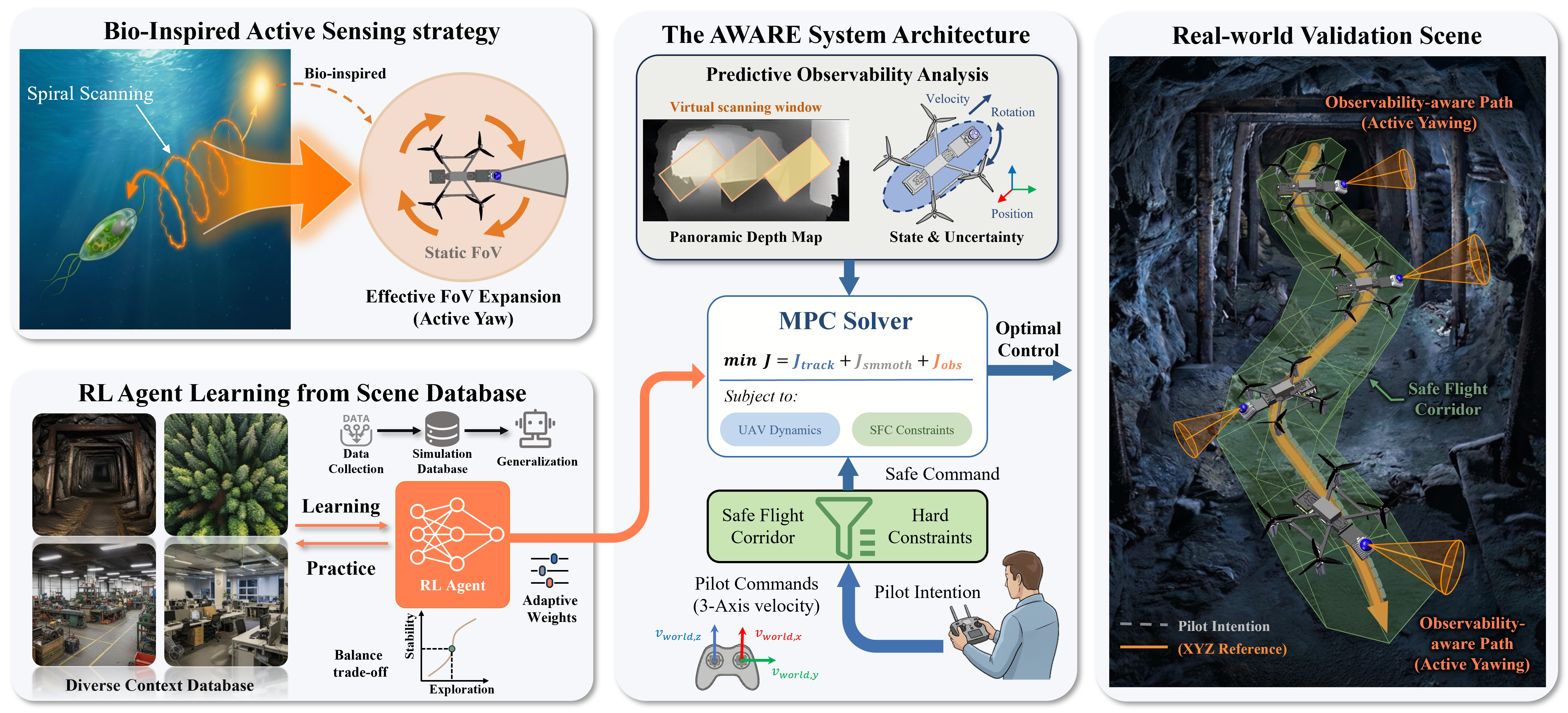

The AWARE framework addresses critical limitations in UAV-based LiDAR-Inertial Odometry (LIO), specifically the effect of narrow field-of-view (FoV) sensors on localization robustness in geometrically degenerate or low-feature scenarios. Unlike hardware-based active sensing solutions that increase payload and complexity, AWARE uses the UAV's intrinsic rotational agility to perform bio-inspired, whole-body yaw maneuvers, maximizing the observable geometric constraints without additional actuators. This approach is tightly integrated within a human-in-the-loop (HITL) paradigm: operator-provided translational commands are decoupled from autonomous, observability-optimized yaw planning, and the two are mediated by a hybrid reinforcement learning (RL) and model predictive control (MPC) architecture.

Figure 1: The AWARE framework integrates active sensing, RL-based cost adaptation, predictive observability analysis, hybrid MPC, HITL interaction, and safe corridor enforcement in both simulation and real-world validation.

Unified Perception and Observability-Driven Control

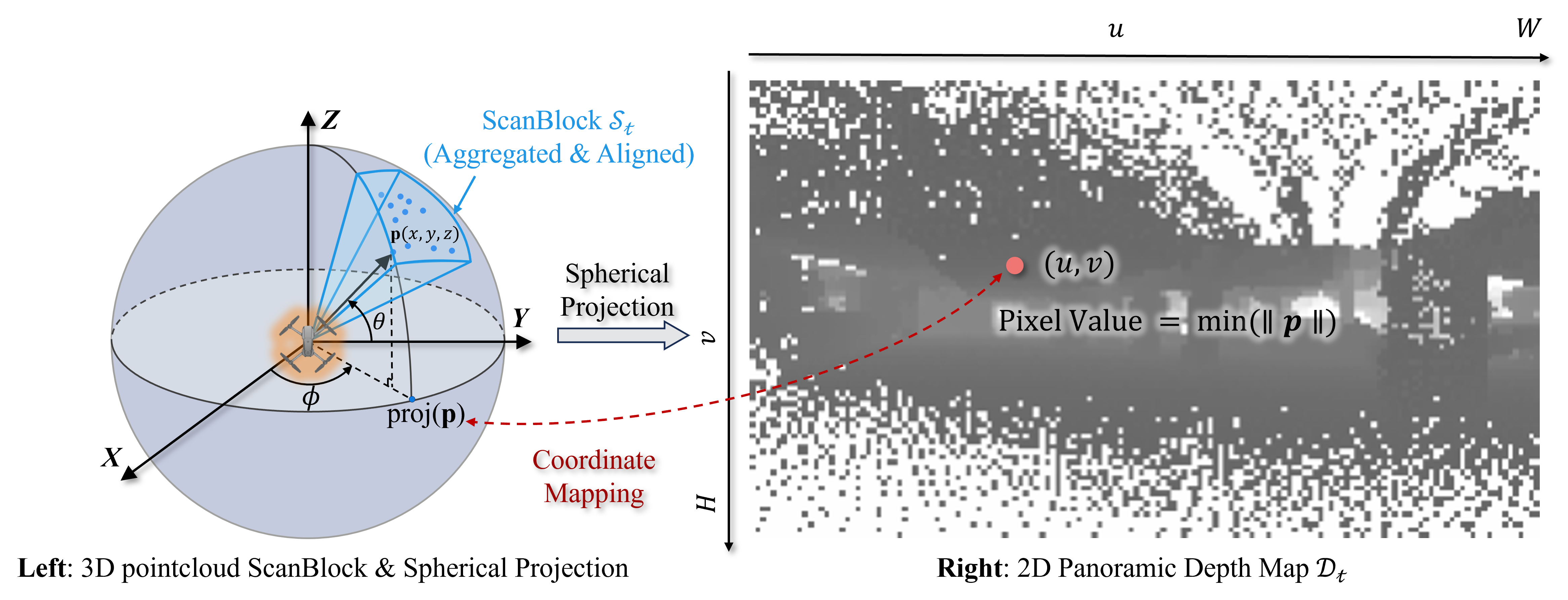

Panoramic Scene Encoding

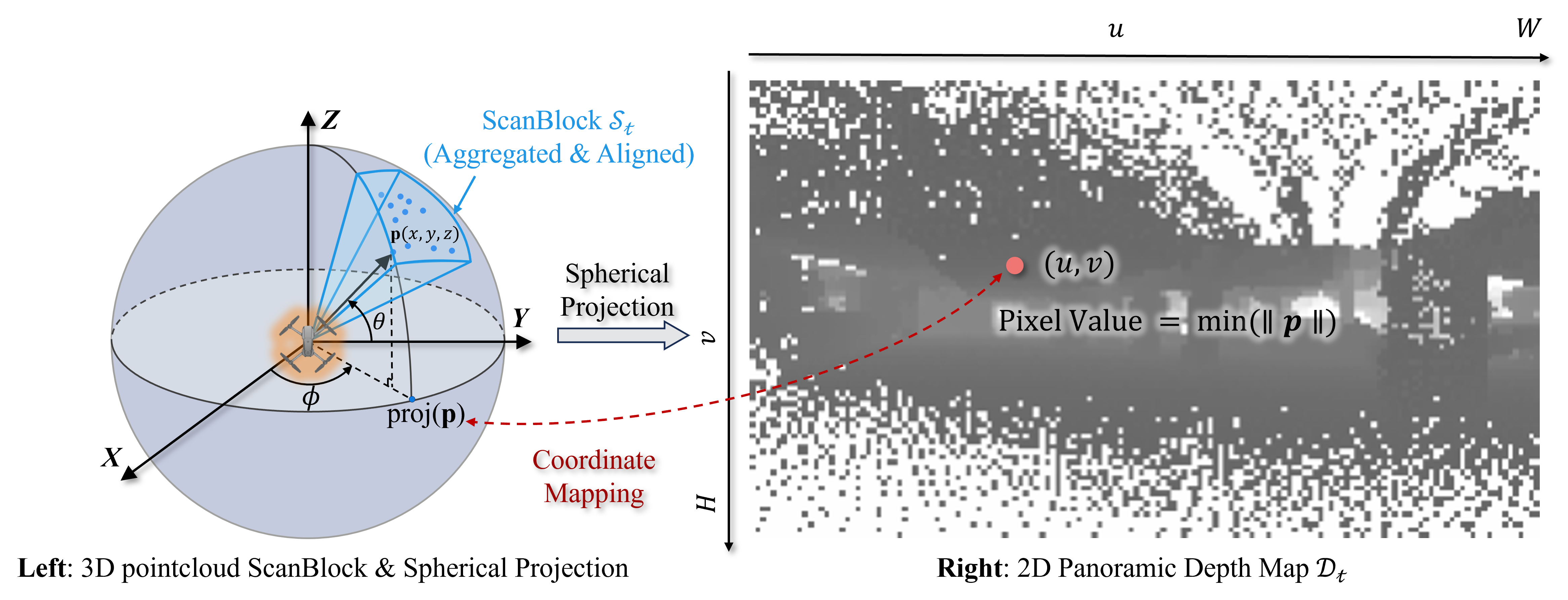

AWARE introduces a unified panoramic spherical depth map representation derived from aggregated LiDAR scanblocks. This dense, angle-preserving pseudo-image retains spatial geometry required for reliable occlusion handling and downstream observability reasoning using a tractable 2D structure.

Figure 2: The aggregated scanblock is projected onto a discretized spherical map, forming a compact panoramic depth tensor for perception and control.
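The projection described above can be sketched as follows. This is a minimal illustration, not the paper's implementation: the function name `spherical_depth_map`, the 32x64 grid resolution, the 50 m range cutoff, and the convention that empty cells hold 0 are all assumptions made for the example.

```python
import numpy as np

def spherical_depth_map(points, height=32, width=64, max_range=50.0):
    """Project an (N, 3) point cloud in the sensor frame onto a panoramic depth image.

    Rows index elevation (top row = straight up), columns index azimuth.
    Grid size and range cutoff are illustrative assumptions.
    """
    points = np.asarray(points, dtype=float)
    r = np.linalg.norm(points, axis=1)
    valid = (r > 1e-6) & (r <= max_range)
    p, r = points[valid], r[valid]

    azimuth = np.arctan2(p[:, 1], p[:, 0])                    # in [-pi, pi]
    elevation = np.arcsin(np.clip(p[:, 2] / r, -1.0, 1.0))    # in [-pi/2, pi/2]

    col = np.floor((azimuth + np.pi) / (2 * np.pi) * width).astype(int) % width
    row = np.floor((np.pi / 2 - elevation) / np.pi * height).astype(int)
    row = np.clip(row, 0, height - 1)

    depth = np.full((height, width), np.inf)
    np.minimum.at(depth, (row, col), r)   # nearest return wins, which handles occlusion
    depth[np.isinf(depth)] = 0.0          # assumption: 0 marks cells with no return
    return depth
```

Keeping the nearest return per cell is one simple way to respect occlusion in the 2D structure; the resulting array can be treated as a single-channel image by downstream modules.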

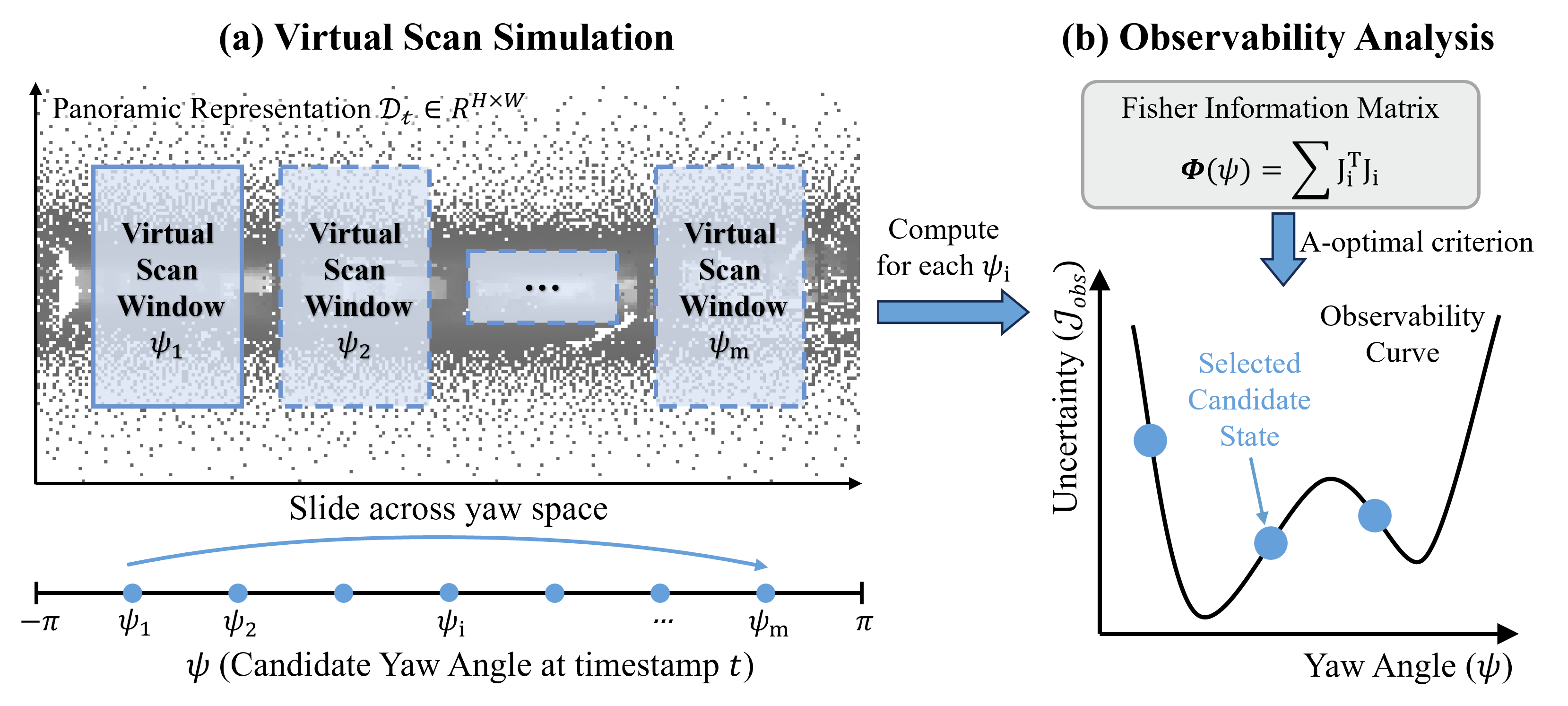

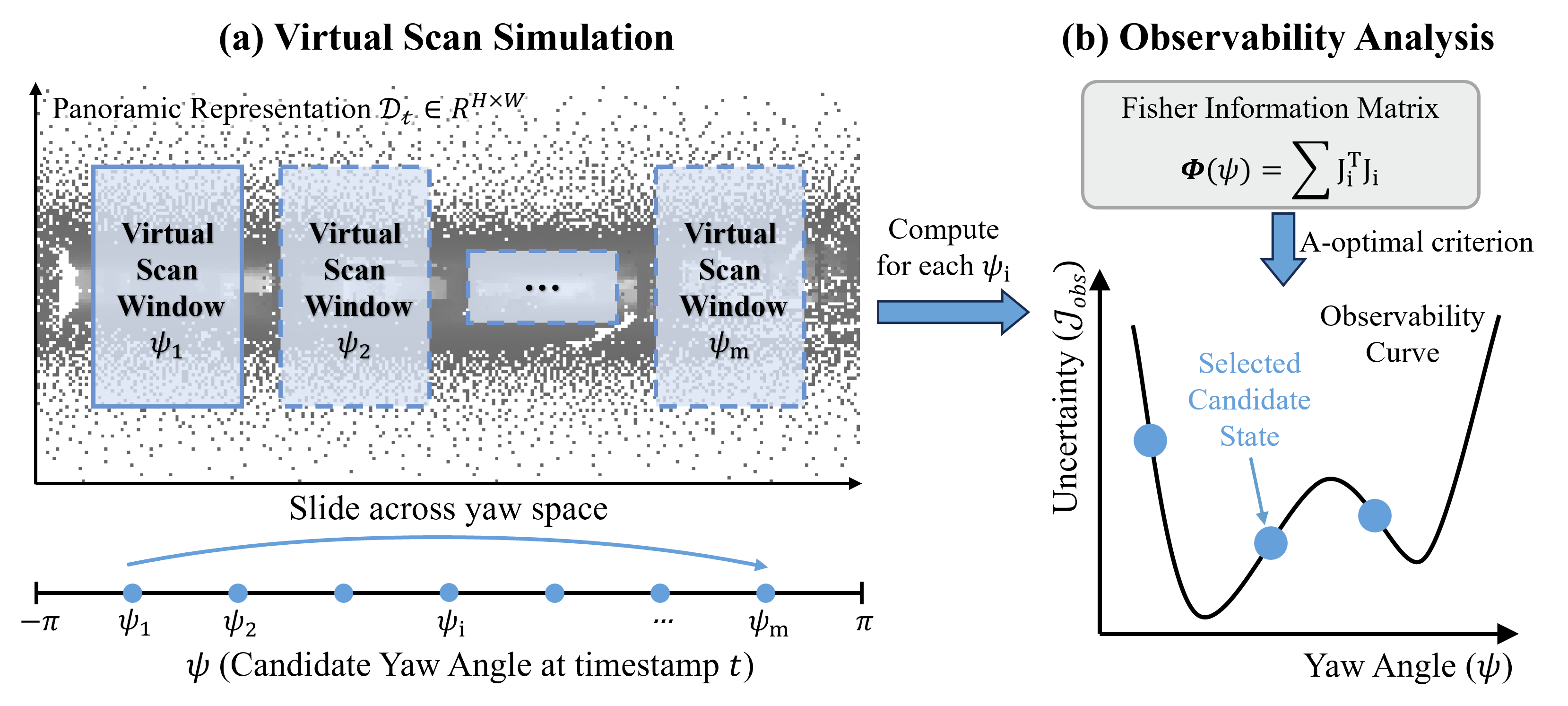

To quantify the geometric information available for LIO, AWARE computes a yaw-dependent Fisher Information Matrix (FIM) on synthetic scans simulated for candidate view directions. The A-optimality criterion, $J_{\text{obs}}(\psi) = \operatorname{Tr}\left(\Phi(\psi)^{-1}\right)$, scores pose uncertainty for each yaw, supplying an explicit metric for control optimization.

Figure 3: For each candidate yaw, virtual scans and associated FIM values are computed, forming a yaw/observability curve for controller integration.
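A rough sketch of how such a yaw/observability curve could be computed is given below. It assumes a point-to-plane residual model (a common choice in LIO, not confirmed by the source), approximates the "virtual scan" by selecting points whose azimuth falls inside a horizontal FoV centered on the candidate yaw, and adds a small regularizer so the inverse always exists. The function names, the 70 degree FoV, and the noise level `sigma` are hypothetical. Lower scores mean a better-constrained pose, since the trace of the inverse FIM sums the lower bounds on the pose variances.

```python
import numpy as np

def fim_point_to_plane(points, normals, sigma=0.05):
    """6x6 FIM for a pose [rotation, translation] under point-to-plane residuals (assumed model)."""
    J = np.hstack([np.cross(points, normals), normals])   # one Jacobian row per point, shape (N, 6)
    return J.T @ J / sigma**2

def a_optimality(fim, eps=1e-6):
    """Tr(FIM^-1) with a small regularizer so degenerate scans give a large finite score."""
    return float(np.trace(np.linalg.inv(fim + eps * np.eye(fim.shape[0]))))

def observability_curve(points, normals, yaws, fov=np.deg2rad(70.0)):
    """Score each candidate yaw using only the points inside a horizontal FoV around it."""
    azimuth = np.arctan2(points[:, 1], points[:, 0])
    scores = []
    for psi in yaws:
        diff = np.angle(np.exp(1j * (azimuth - psi)))      # angular difference wrapped to (-pi, pi]
        visible = np.abs(diff) <= fov / 2
        scores.append(a_optimality(fim_point_to_plane(points[visible], normals[visible])))
    return np.array(scores)
```

In a corridor with a single end wall, for example, a yaw facing the end wall sees surfaces whose normals span all three axes, while the opposite yaw sees only side walls and floor, leaving translation along the corridor unconstrained and the score correspondingly large.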

Hybrid RL-MPC Architecture and HITL Control

RL-MPC Cost Modulation

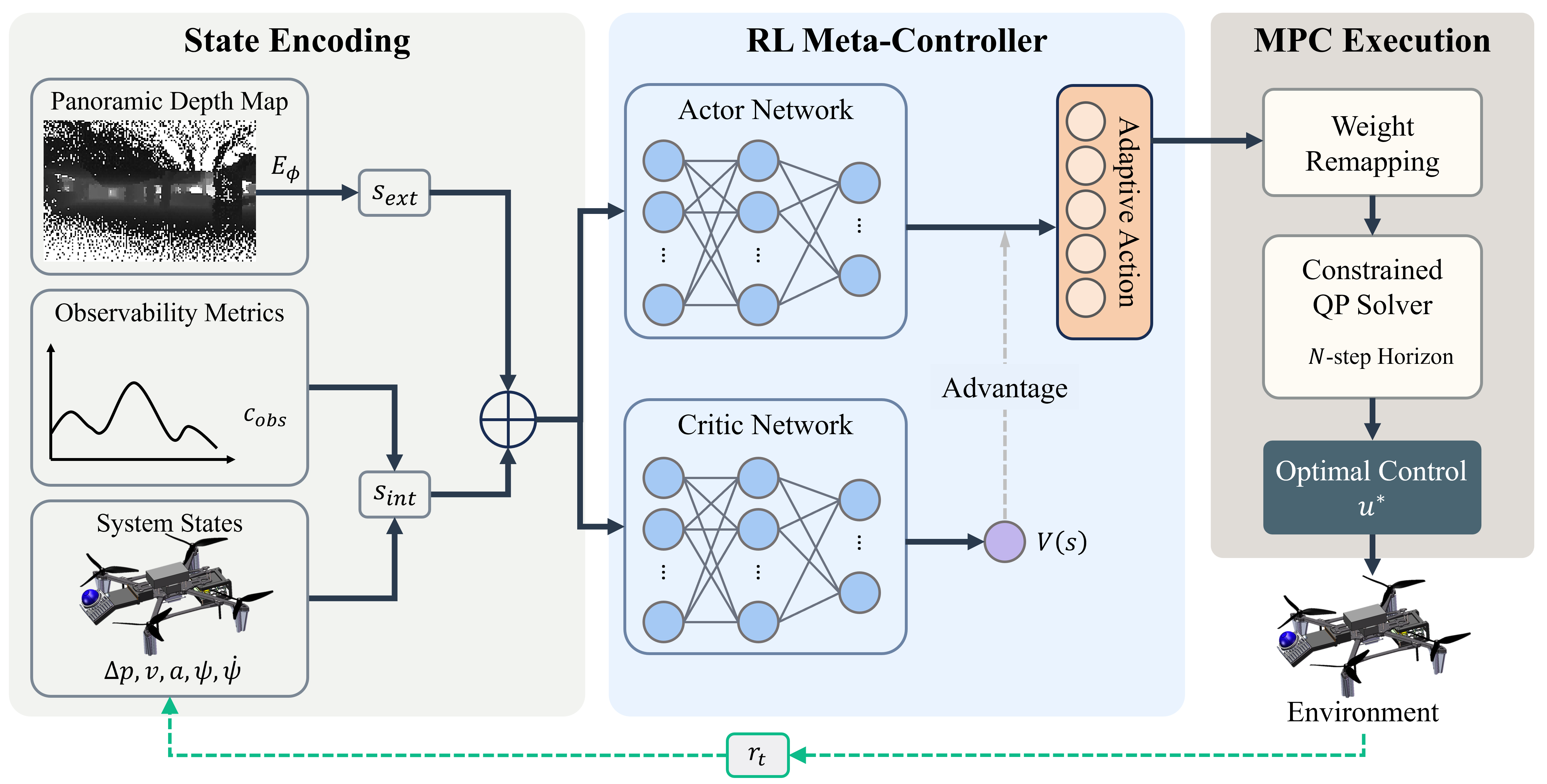

AWARE’s control system fuses explicit observability, UAV state, and panoramic scene context into a joint state encoding. This encoding is processed by a lightweight PPO-based RL agent that outputs adaptive MPC weights (including the weight on the yaw observability term), enabling scene-dependent regulation of flight stability versus exploration.

Figure 4: The architecture fuses kinematic, geometric, and observability information, modulating MPC weights adaptively in real time via RL.
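One simple way to turn a policy output into usable MPC weights is sketched below. The source does not specify the mapping, so everything here is an assumption: the policy action is taken to lie in [-1, 1] per weight, and each weight is interpolated in log space between hypothetical bounds `w_min` and `w_max`, which keeps the weights strictly positive.

```python
import numpy as np

def action_to_mpc_weights(action, w_min, w_max):
    """Map a policy action in [-1, 1] to positive MPC cost weights (hypothetical mapping).

    Log-space interpolation: -1 gives w_min, 0 gives the geometric mean, +1 gives w_max.
    """
    action = np.clip(np.asarray(action, dtype=float), -1.0, 1.0)
    log_min, log_max = np.log(w_min), np.log(w_max)
    return np.exp(log_min + 0.5 * (action + 1.0) * (log_max - log_min))
```

Bounding the weights this way is a design choice that lets a learned policy shift emphasis between tracking, smoothness, and observability without ever producing a negative or unbounded cost term.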

The MPC then solves a constrained QP, balancing reference tracking (from pilot input), control effort, smoothness, safety (via Safe Flight Corridor constraints), and active observability maximization. Observability terms are incorporated using a quadratic local approximation, allowing efficient, differentiable integration into the QP even when direct analytic gradients are unavailable.
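The quadratic local approximation can be illustrated with a least-squares fit of sampled observability scores around the current yaw. This is a sketch under stated assumptions rather than the paper's procedure: the function name, the 45 degree fitting window, and the curvature floor `min_curv` (used here to keep the term convex) are hypothetical.

```python
import numpy as np

def quadratic_observability_term(yaws, scores, psi0, window=np.deg2rad(45.0), min_curv=1e-3):
    """Fit J(psi) ~ a*d**2 + b*d + c with d = psi - psi0, using samples near psi0.

    Returns (a, b, c). The curvature a is floored at min_curv (an assumption)
    so that the term stays convex when added to a QP cost.
    """
    d = np.angle(np.exp(1j * (np.asarray(yaws, dtype=float) - psi0)))   # wrapped yaw offset
    mask = np.abs(d) <= window
    a, b, c = np.polyfit(d[mask], np.asarray(scores, dtype=float)[mask], deg=2)
    a = max(float(a), min_curv)
    return a, float(b), float(c)
```

The coefficients `a` and `b` then define a cost term `a*d**2 + b*d` in the yaw decision variable, which a QP solver can handle directly; no analytic gradient of the observability metric is required, only sampled scores.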

Safe Flight Corridor and HITL Decoupling

Critically, AWARE decouples operator translational (XYZ) intent from autonomous yaw planning. Pilot commands are kept collision-free by dynamically computed Safe Flight Corridor (SFC) constraints, while the MPC independently adapts heading for optimal state estimation.
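Corridor constraints of this kind are commonly written as a convex polytope of half-spaces, A p <= b. The sketch below shows only that geometric form: it computes how far a commanded displacement can be followed before leaving the corridor. It is not the paper's method, since AWARE enforces the corridor inside the MPC; the function name and the box-shaped corridor in the usage note are hypothetical.

```python
import numpy as np

def max_safe_step(A, b, p, d):
    """Largest t in [0, 1] such that A @ (p + t * d) <= b, assuming p is already inside."""
    slack = b - A @ p      # distance to each face, non-negative when p is inside
    rate = A @ d           # how quickly the step d approaches each face
    t = 1.0
    for s, r in zip(slack, rate):
        if r > 1e-12:      # only faces the step moves toward can be violated
            t = min(t, s / r)
    return max(t, 0.0)
```

For a unit box corridor (`A = np.vstack([np.eye(3), -np.eye(3)])`, `b = np.ones(6)`) and a vehicle at the origin, a commanded displacement of 2 m along x is admissible only up to half its length, while yaw remains unconstrained by the corridor and free for the observability term.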

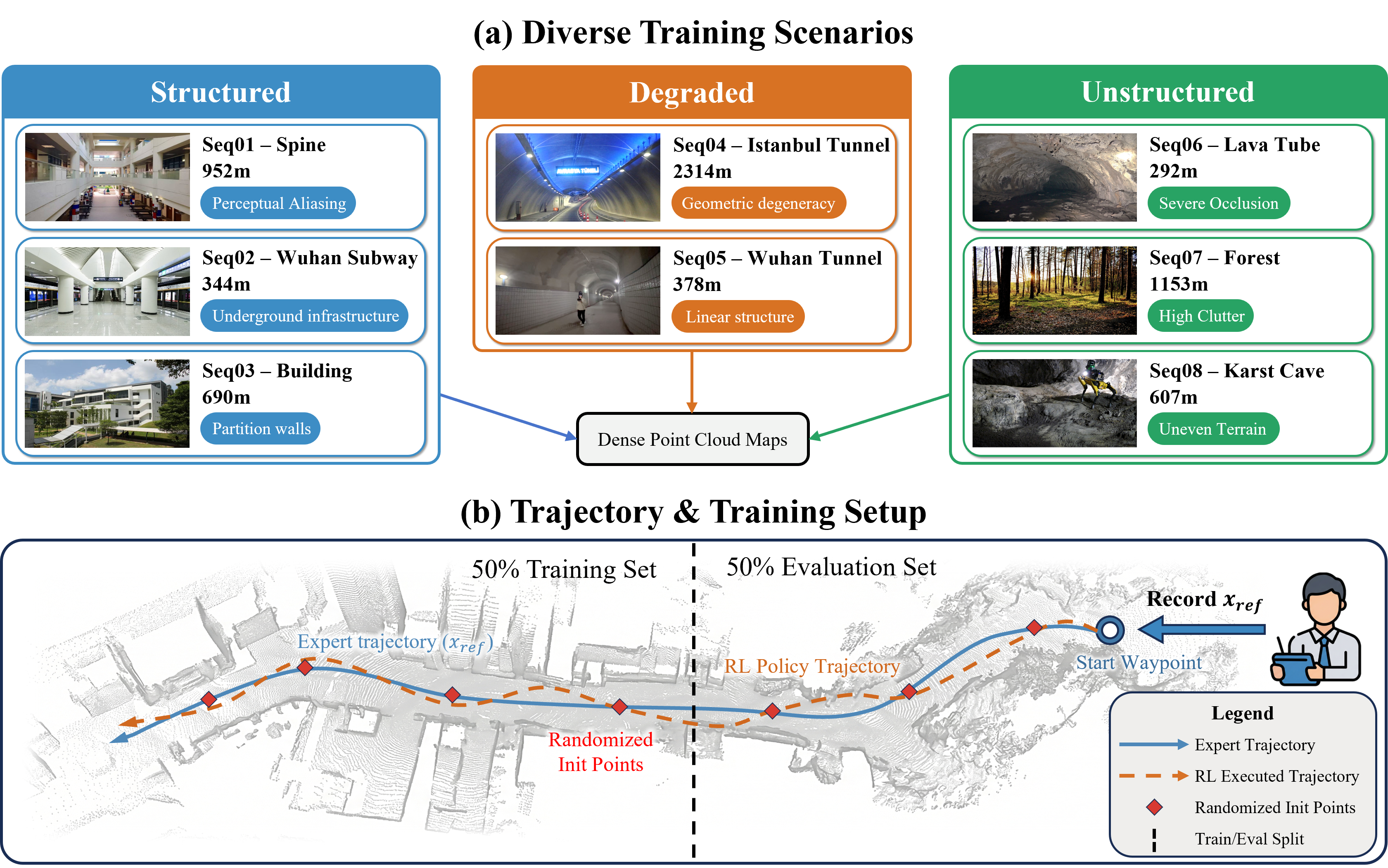

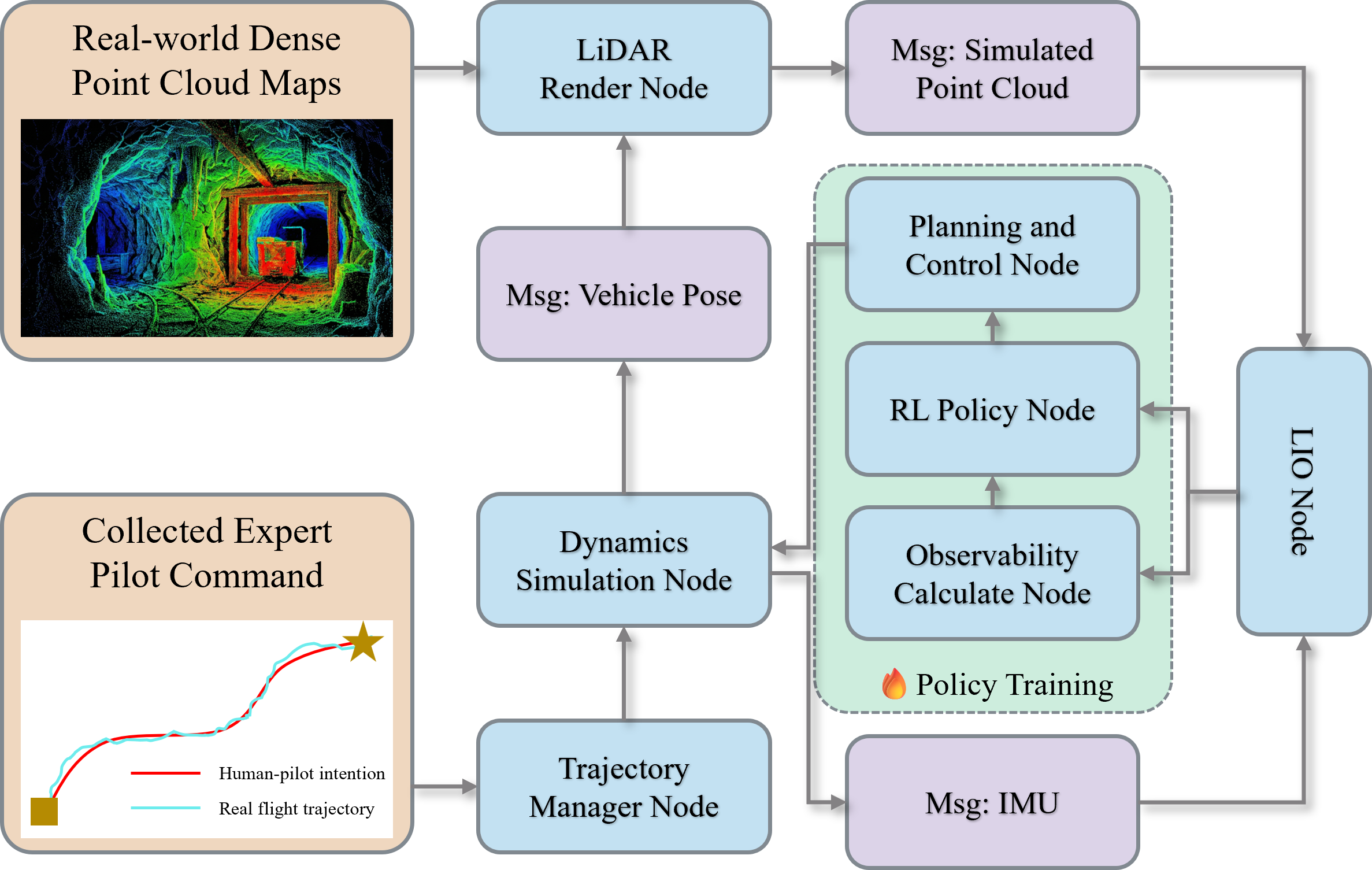

Simulation and Training

A high-fidelity, closed-loop simulator using real-world point clouds and expert trajectories drives both data generation and RL policy training, supporting robust sim2real transfer.

Figure 5: Simulation pipelines leverage real-world point clouds and human reference data to train closed-loop active sensing policies.

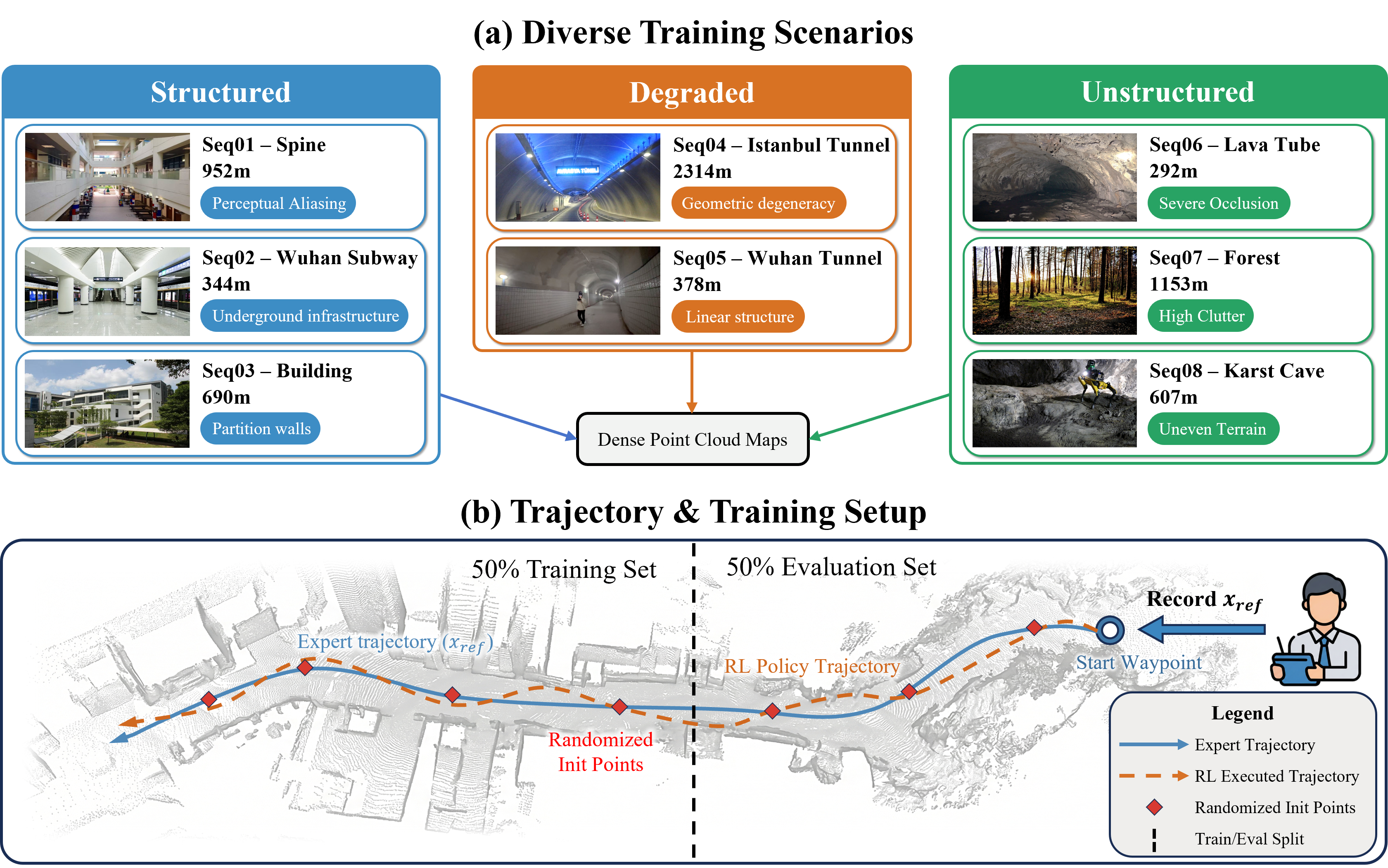

Figure 6: Diverse scenes, expert trajectories, and randomization strategies ensure broad generalization of the trained RL-MPC policy.

Experimental Analysis

Simulation Results

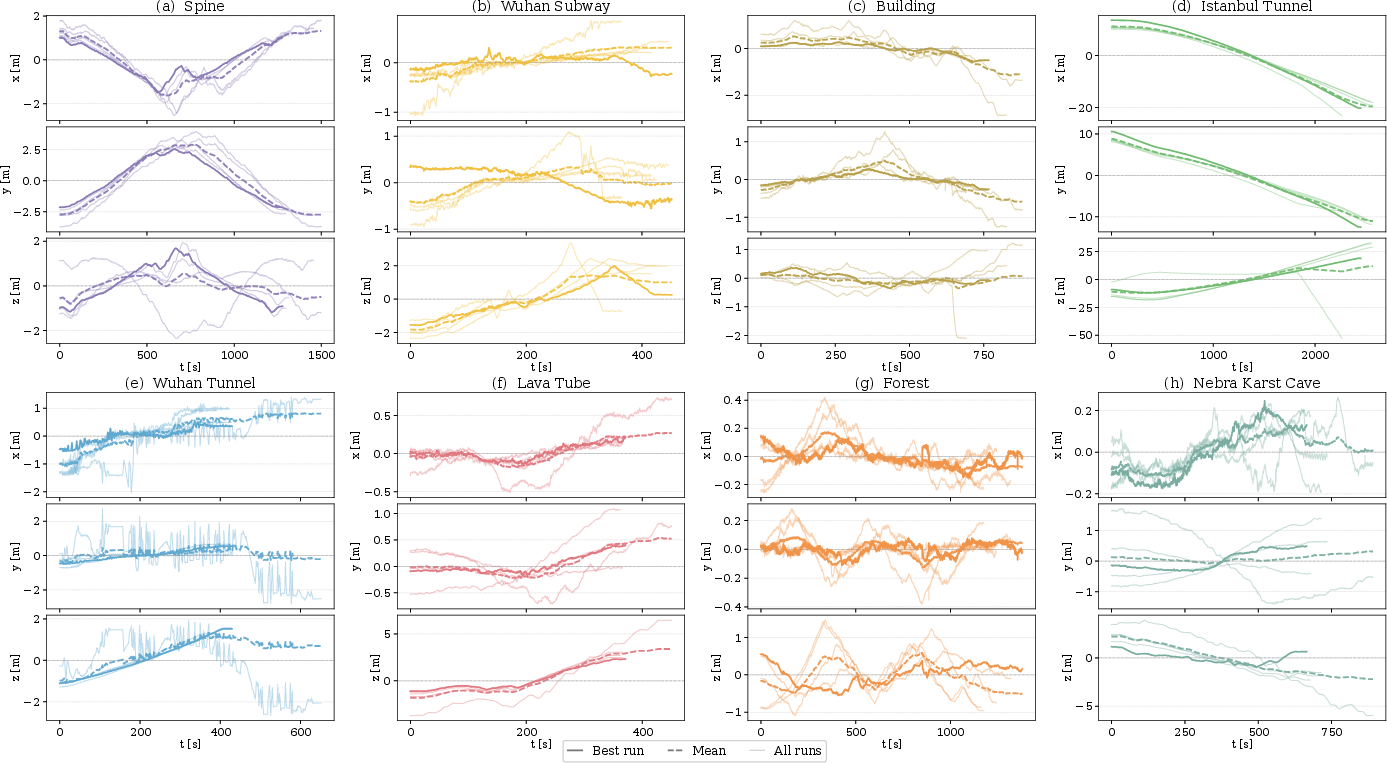

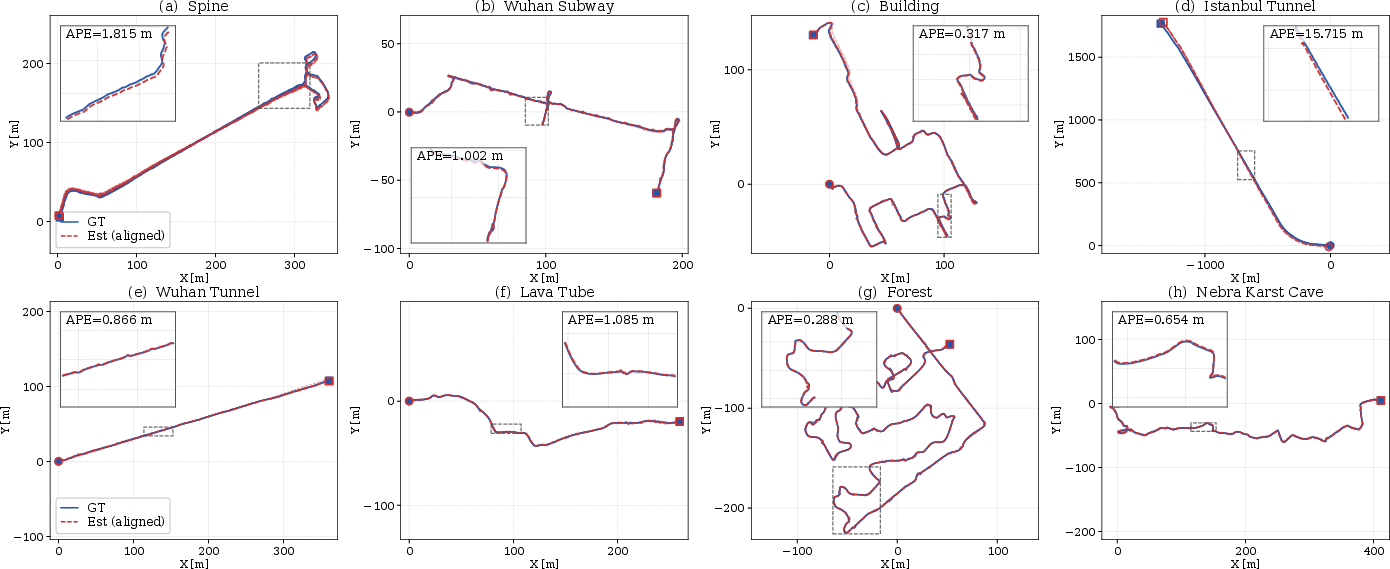

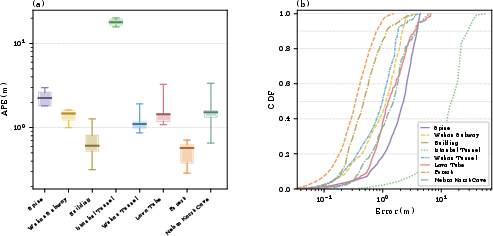

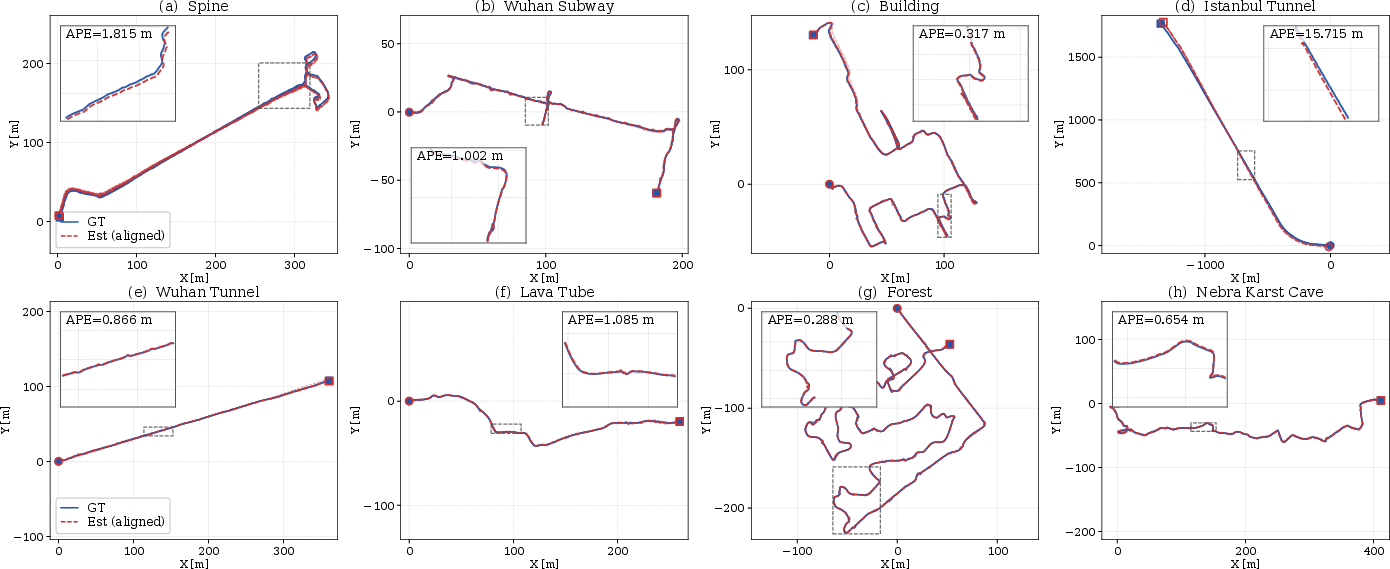

AWARE demonstrates consistent, substantial improvements over passive fixed-rate scanning and static-MPC controllers across eight structurally heterogeneous environments.

- Median Absolute Pose Error (APE) is reduced by 27.8% relative to the best static baseline and by 84.9% relative to passive scanning.

- AWARE adapts effectively: no single fixed baseline weight setting is competitive across all scenarios, confirming the necessity and effectiveness of RL-based cost adaptation.

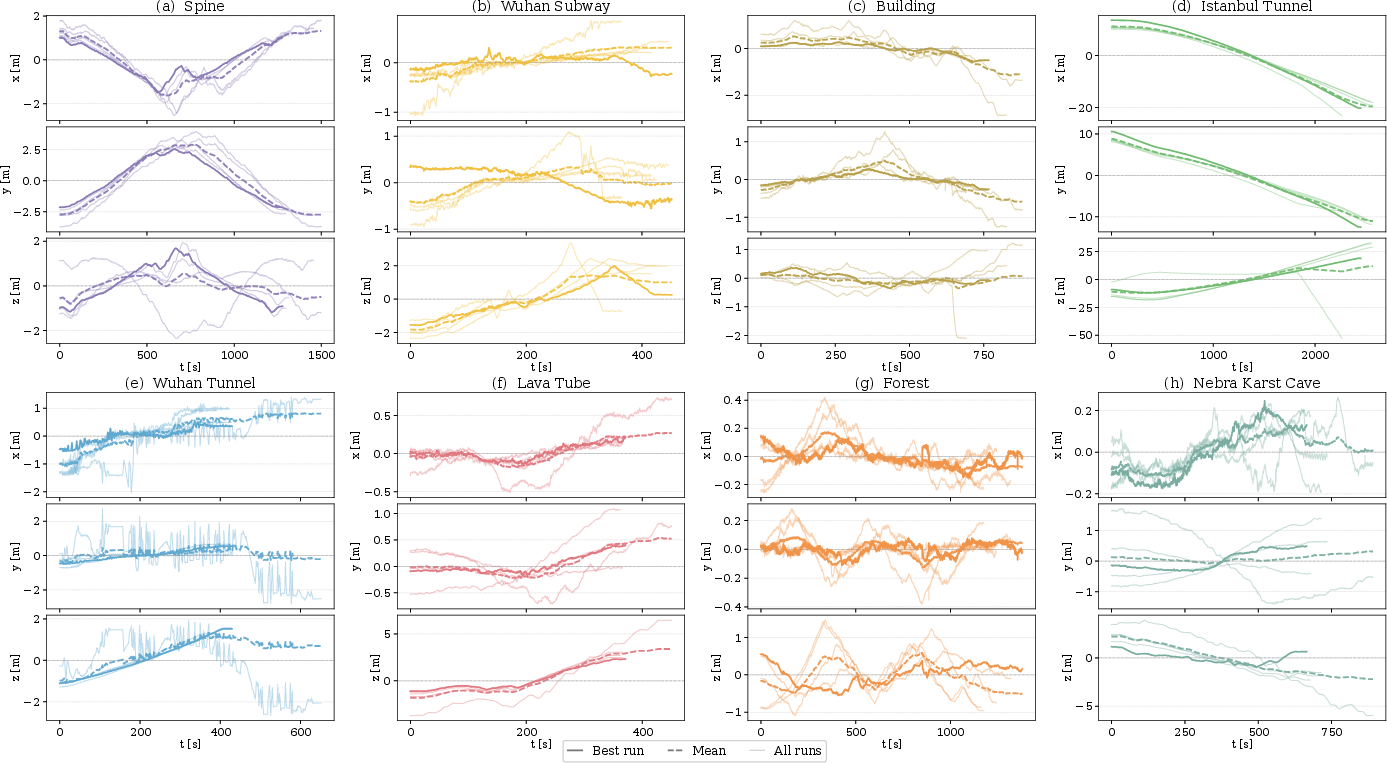

Figure 7: Sequence-wise trajectory errors in simulation confirm the stability and robustness of AWARE across independent trials.

Figure 8: Active trajectories closely match ground truth, minimizing catastrophic drift even under degenerate geometry.

Figure 9: Per-sequence error distributions and CDFs show the strong error contraction of AWARE compared to all baselines.

Real-World Deployment

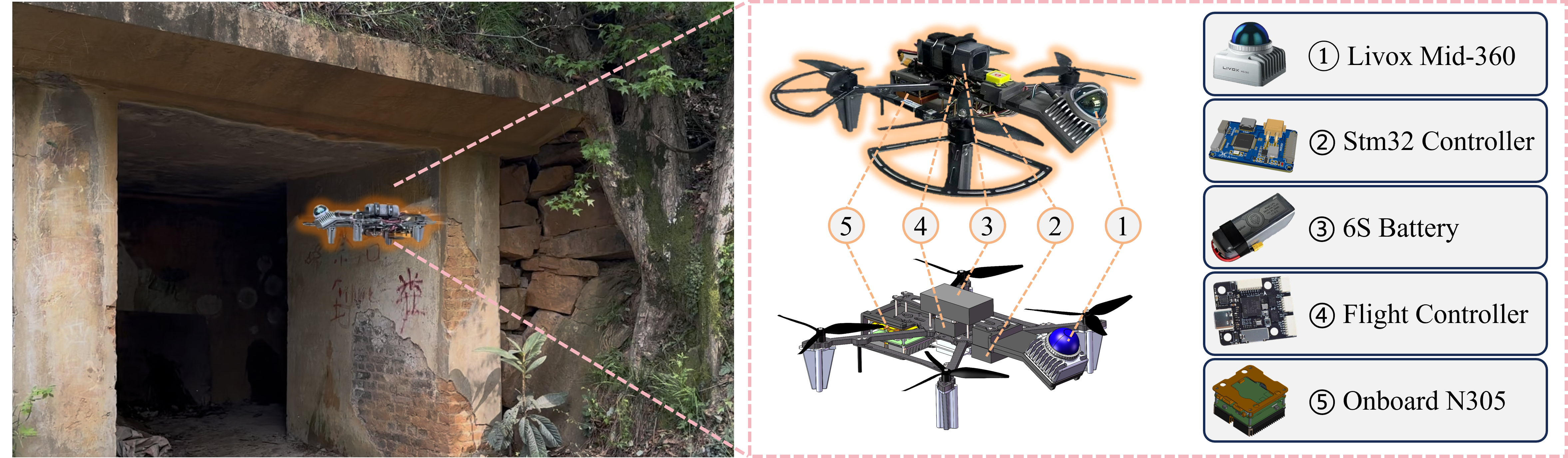

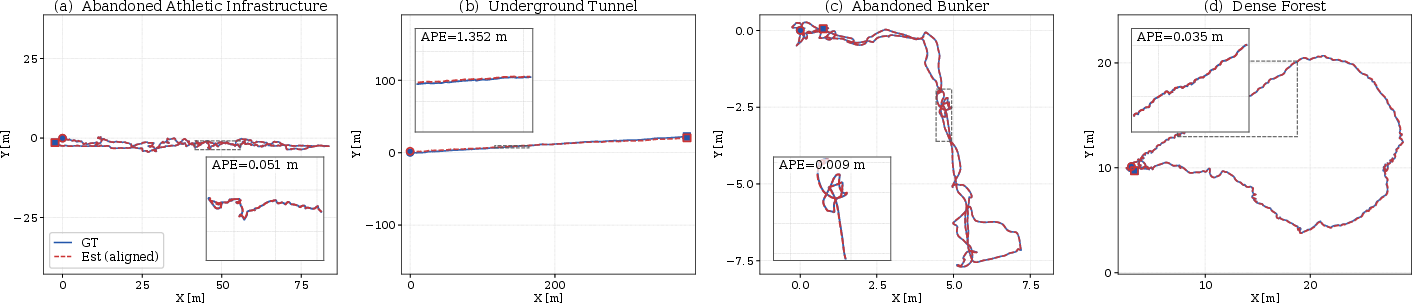

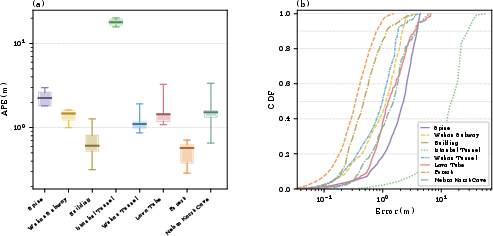

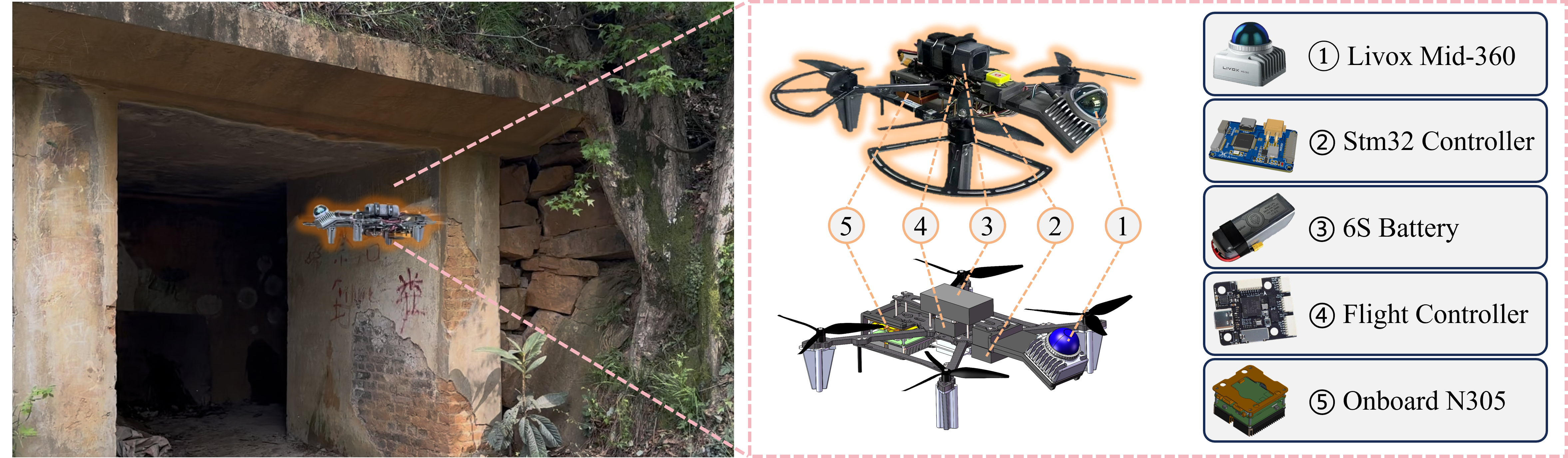

On a custom quadrotor (10 Hz LiDAR, IMU, Intel-N305), AWARE achieves low-drift LIO in multiple previously unseen real-world scenarios.

Figure 10: The onboard compute stack efficiently executes the AWARE stack in real time.

Figure 11: Real-world testing environments include tunnels, abandoned infrastructure, bunkers, and dense forests.

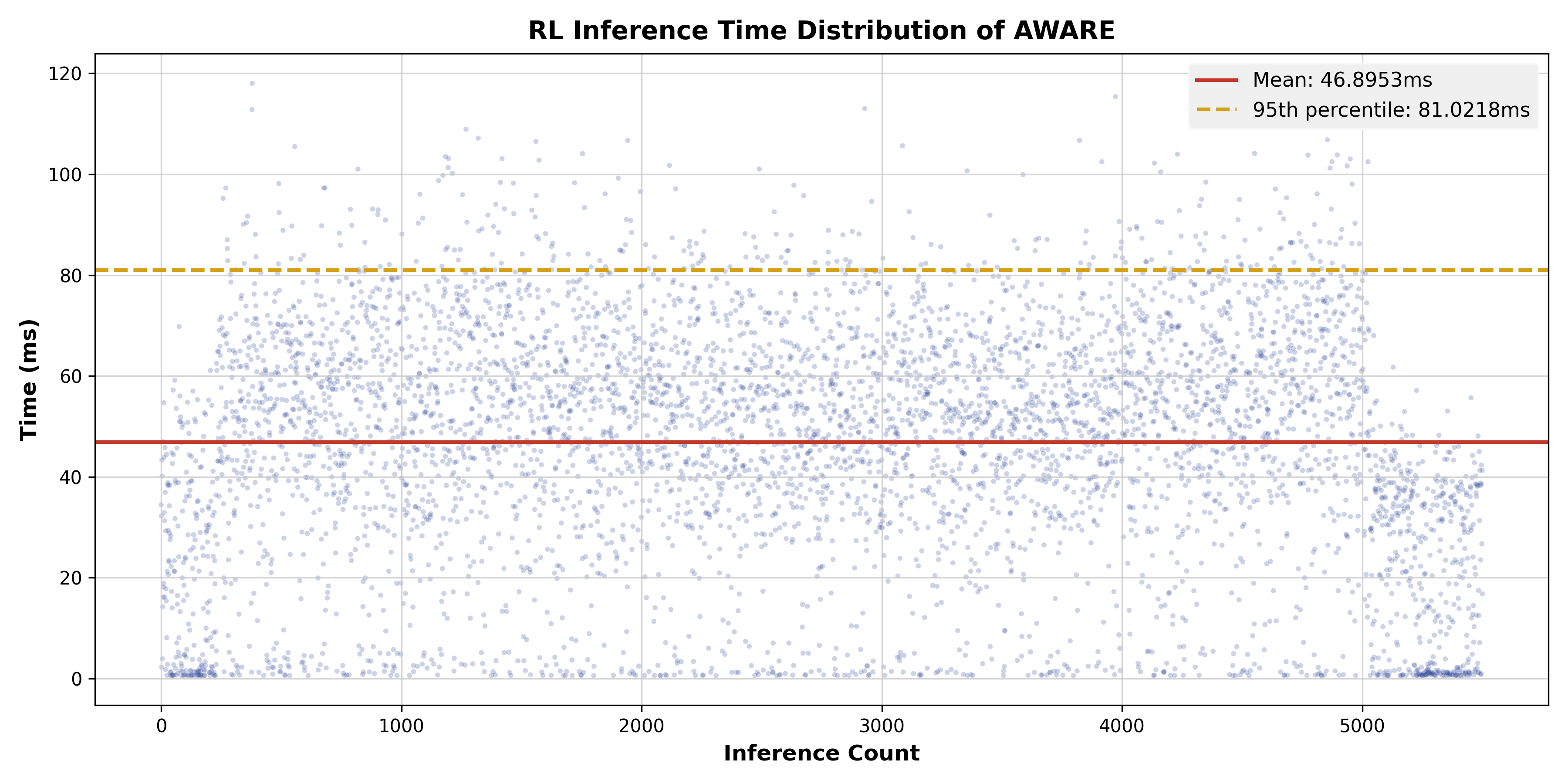

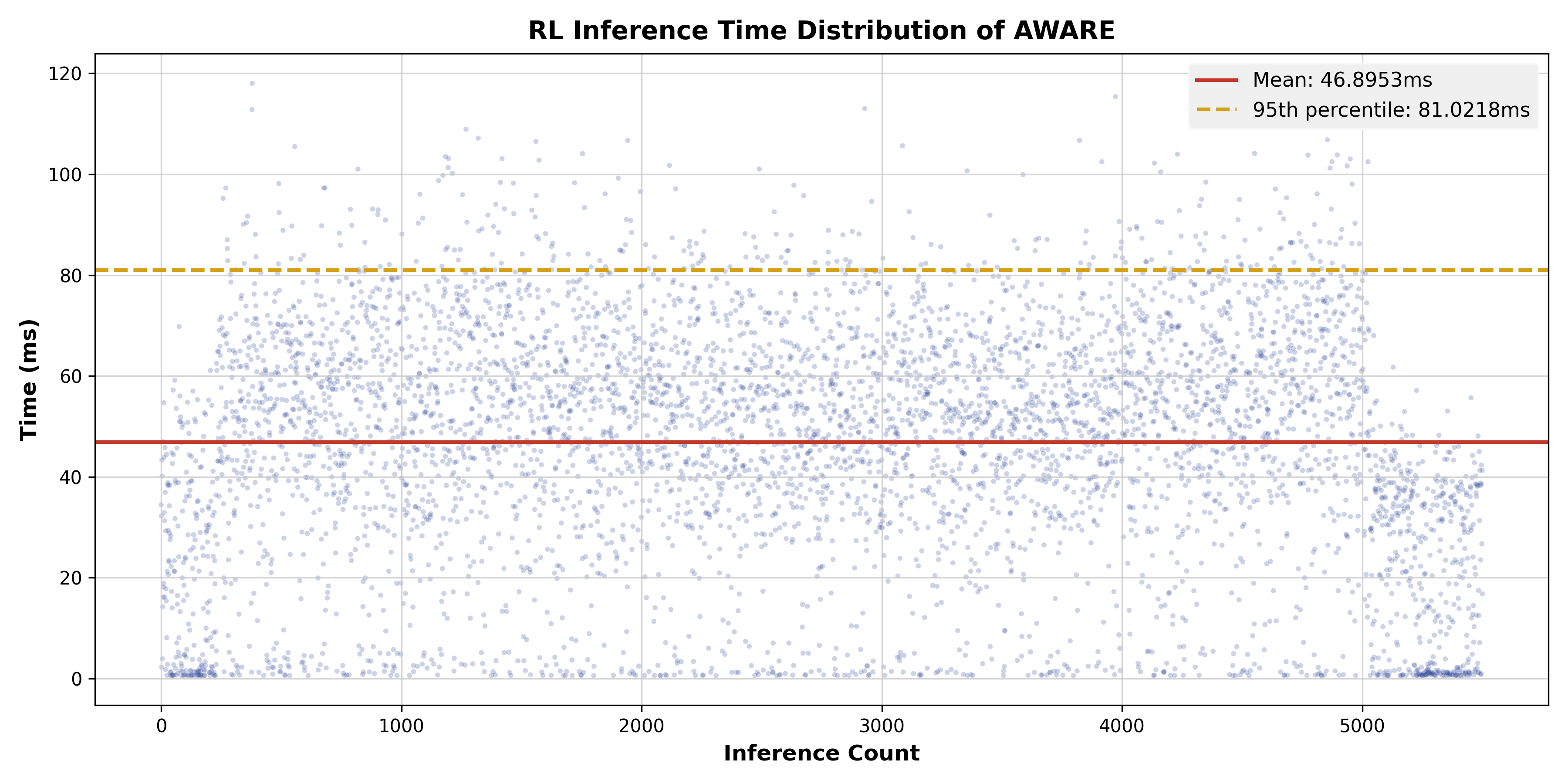

Timing and System Efficiency

Figure 13: End-to-end decision loop latency (mean 46.9 ms, 95th percentile 81 ms) stays within the 100 ms cycle of the 10 Hz perception rate, supporting real-time operation.

Analysis of Scene-Adaptive Behavior

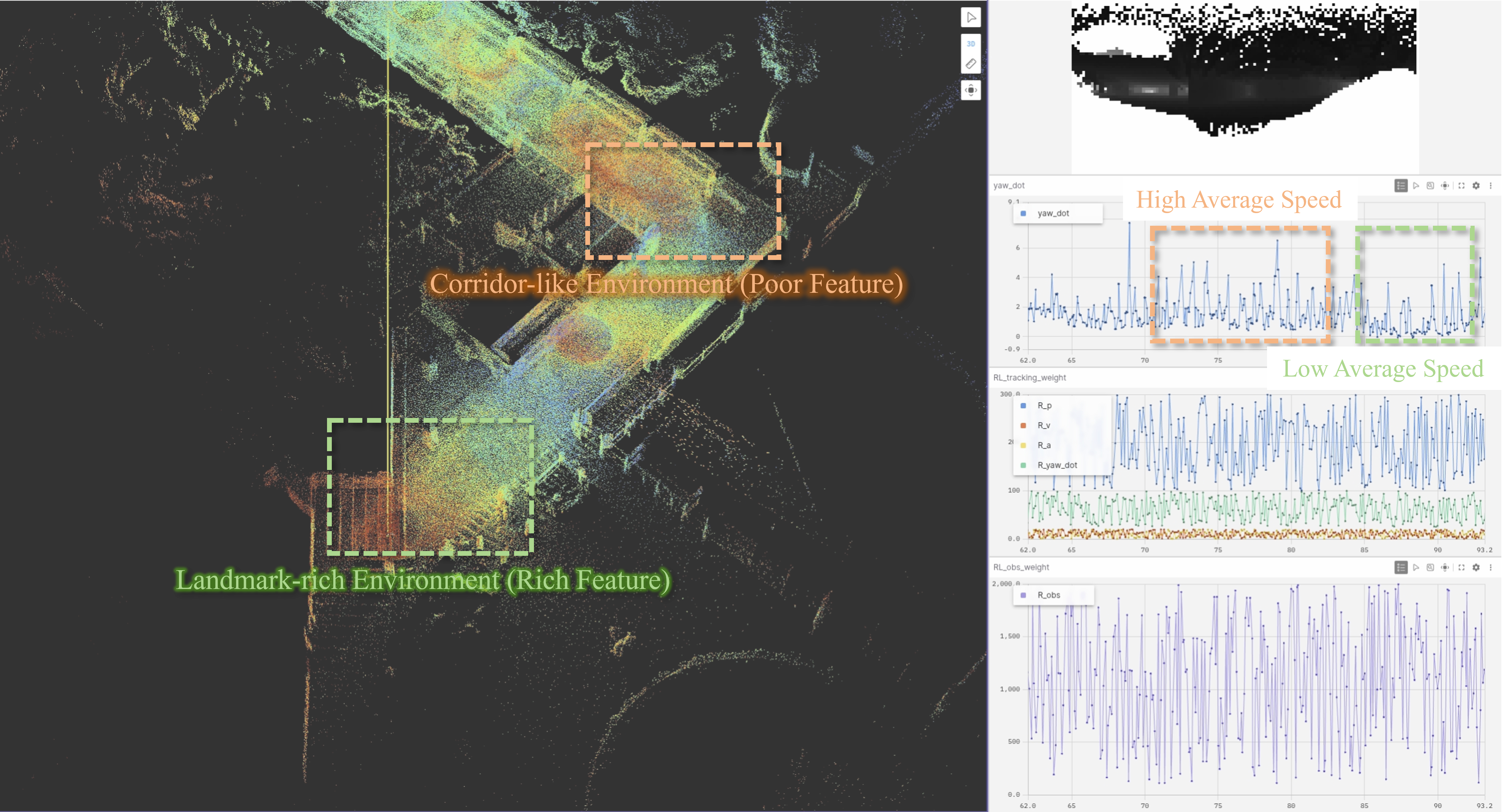

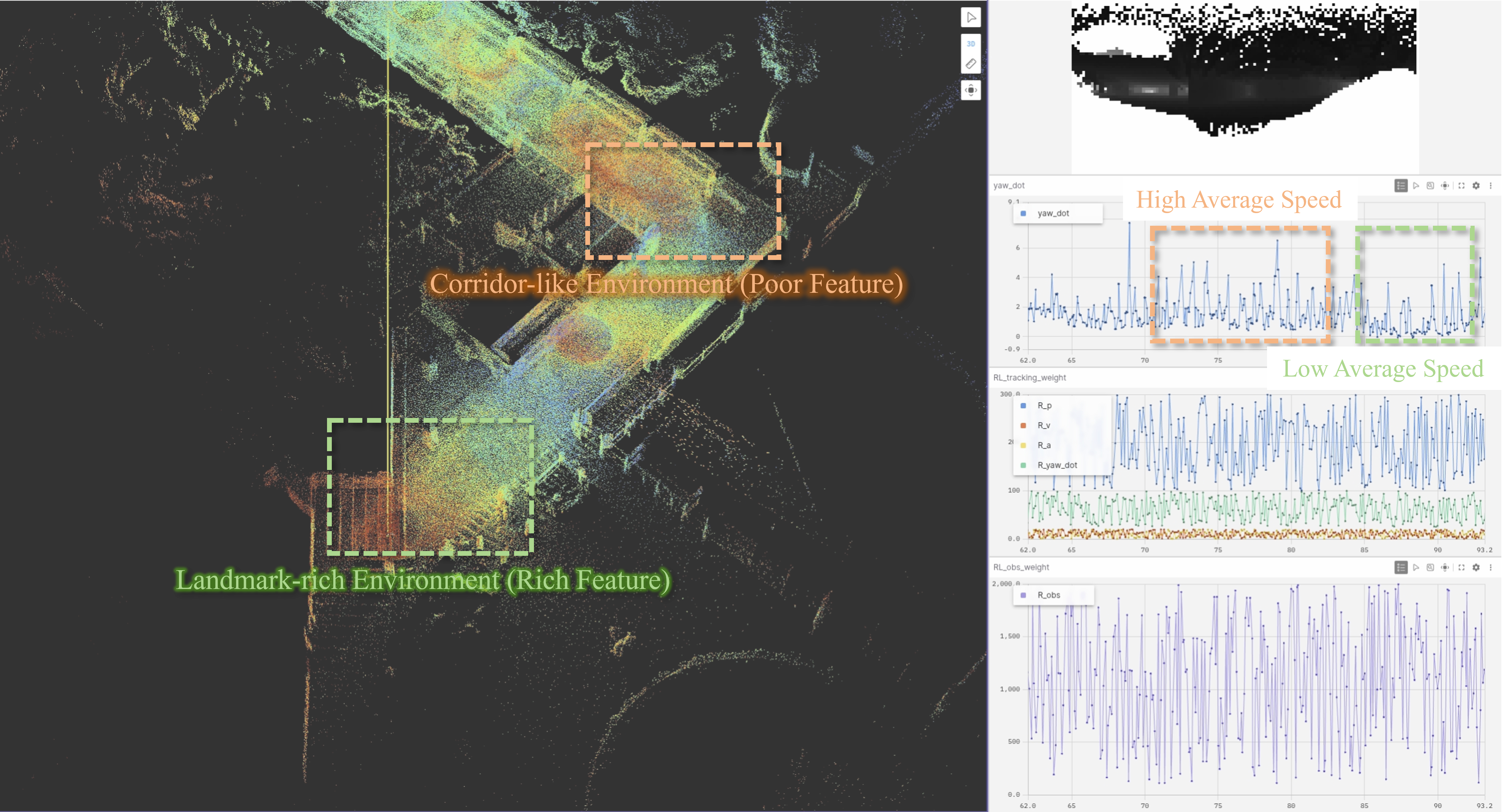

Yaw effort (commanded yaw rate ψ̇) and RL-modulated observability weights are both strongly scene-dependent. AWARE rotates aggressively in feature-deprived regions (e.g., tunnels, cluttered bunkers) and relaxes in feature-rich environments (e.g., forests), reflecting physically meaningful, geometry-aware policy modulation.

Figure 14: Adaptive commanded yaw rates increase in degenerate regions and decrease in feature-rich environments, demonstrating informed control modulation.

Implications and Future Directions

The presented framework establishes a general-purpose template for software-centric, active perceptual control under realistic human-machine cooperation and hardware constraints. By fusing explicit metric-based reasoning, RL-based adaptation, and HITL-compatible constraints, AWARE achieves robust, efficient, and interpretable active perception.

Practically, AWARE enables high-quality aerial surveying, inspection, and rapid deployment in feature-limited or cluttered domains without heavy hardware. Theoretically, it formalizes an interpretable, adaptive coupling between scene geometry, control, and information gain. The modularity of the hybrid RL-MPC approach makes it extensible to richer forms of cooperative autonomy, multi-agent observability sharing, and immersive human interaction paradigms (e.g., VR-guided surveying).

Conclusion

AWARE provides an effective, bio-inspired, whole-body active yawing solution for enhanced LiDAR-inertial odometry in UAVs under human-in-the-loop control (2604.10598). Its integration of unified scene encoding, explicit observability metrics, hybrid RL-MPC control, and SFC-based HITL safety yields quantifiably superior localization accuracy, strong real-world transfer, and real-time operational efficiency. The methodology sets a strong foundation for future developments in adaptive, perception-aware UAV autonomy and collaborative active sensing architectures.