- The paper introduces a degeneracy-aware odometry approach that fuses deep learning-based inertial velocity estimation into an Error-State Kalman Filter (ESKF) to maintain state consistency in challenging environments.

- A bi-GRU network predicts velocity and its covariance; during degeneracy, this prediction constrains the update along poorly observed axes, reducing drift and oscillations.

- The method outperforms classical LIO techniques across various platforms including vehicles, drones, and handheld systems, demonstrating improved robustness and reduced error accumulation.

Degeneracy-Aware LiDAR-Inertial Odometry: The ALIVE-LIO Framework

Problem Context and Motivation

LiDAR-Inertial Odometry (LIO) is fundamental for reliable state estimation in autonomous systems navigating complex environments. While LiDAR excels at reconstructing 3D environments, its effectiveness is undermined in geometrically degenerate scenarios, where spatial constraints are unidirectional or limited (e.g., narrow corridors, tunnels, or long untextured walls). In such cases, state estimation becomes ill-posed, conventional ICP-based solutions become unstable, and even tightly coupled LIO that leverages an IMU succumbs to drift accumulated from bias and noise.

To address the brittleness of classical LIO in degenerate conditions, recent work has incorporated auxiliary sensors, heuristic pose priors, or deep learning for motion prediction. However, these solutions do not adequately address consistency and the probabilistic coupling between state variables, particularly when integrating learned inertial motion priors. Existing methods often either trust the learned outputs outright or apply heuristic weightings without a full probabilistic treatment, which undermines robustness and consistency during prolonged degeneracy.

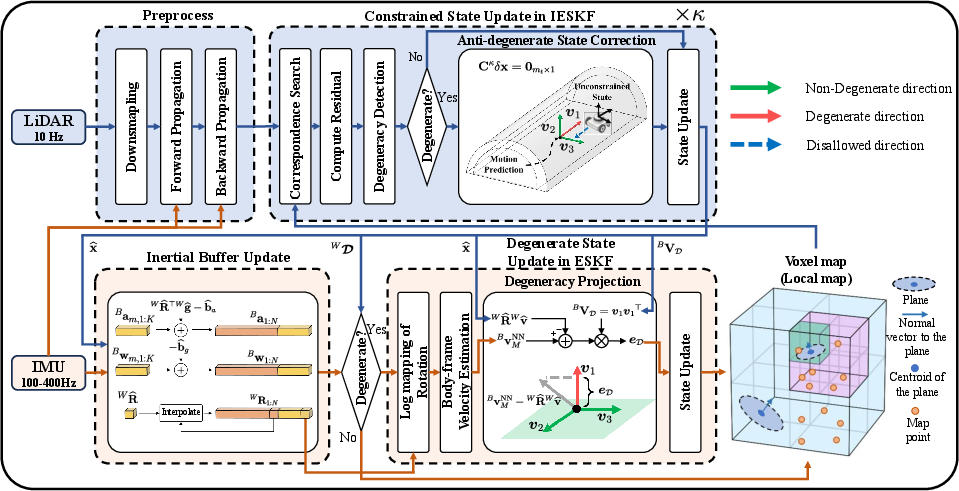

ALIVE-LIO: System Overview and Architecture

ALIVE-LIO introduces a principled, degeneracy-aware approach for LIO by tightly integrating deep learning-based inertial velocity estimation into an Error-State Kalman Filter (ESKF) framework. The architecture of ALIVE-LIO is structured as a sequential pipeline:

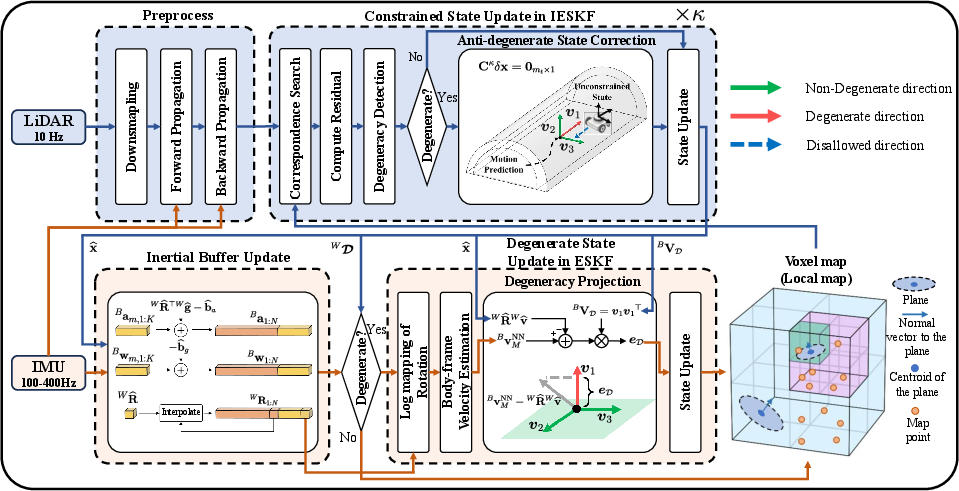

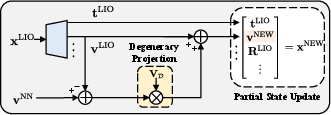

Figure 1: Pipeline of ALIVE-LIO.

- Degeneracy Detection and Constrained Update: The system continuously monitors geometric degeneracy through eigendecomposition of the ICP-derived Hessian, identifying axes with insufficient spatial constraints. When degeneracy is detected, a constrained iterated ESKF (IESKF) optimization suppresses updates along the degenerate directions and relies on motion priors there instead.

- Learning-Based Velocity Estimation: Buffered IMU sequences are transformed into the body frame and fed to a recurrent (bi-GRU) network—adapted from AirIO—that computes velocity and its covariance over the degenerate interval using only local inertial features. Bias and gravity components estimated by the filter are excluded from the learning signal to maximize generalization.

- Probabilistic Fusion via ESKF: When degeneracy is detected, the predicted body-frame velocity (and its uncertainty) is injected into the ESKF update, but strictly along the degenerate axes. The full state, including biases, orientation, and gravity, is then updated through the cross-correlations maintained in the ESKF covariance, balancing data-driven and model uncertainty.

ALIVE-LIO thereby maintains rigorous probabilistic coupling between all state variables, fully leverages information in well-constrained directions, and admits learned priors only where classical observability fails.
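The degeneracy check and constrained update described above can be illustrated with a minimal sketch. It assumes point-to-plane residuals and looks only at the translational block of the Hessian; the function names, the `eig_threshold` parameter, and the simple eigenvalue threshold are illustrative assumptions, not the criterion used in the paper.

```python
import numpy as np

def detect_degenerate_axes(J, eig_threshold):
    """Split translation space into degenerate and well-constrained directions.

    J: (N, 3) stacked point-to-plane Jacobians w.r.t. translation
       (each row is a plane normal).
    eig_threshold: hypothetical tuning value; eigenvalues below it are
       treated as degenerate.
    """
    H = J.T @ J                               # Gauss-Newton Hessian approximation
    eigvals, eigvecs = np.linalg.eigh(H)      # ascending eigenvalues, orthonormal columns
    degenerate = eigvals < eig_threshold
    V_deg = eigvecs[:, degenerate]            # (3, k) degenerate directions
    V_ok = eigvecs[:, ~degenerate]            # (3, 3 - k) well-constrained directions
    return V_deg, V_ok

def constrain_update(delta, V_ok):
    """Keep only the part of the update lying in the well-constrained subspace."""
    return V_ok @ (V_ok.T @ delta)

# Corridor running along x: walls and floor provide normals only along y and z.
J = np.vstack([np.tile([0.0, 1.0, 0.0], (50, 1)),
               np.tile([0.0, 0.0, 1.0], (50, 1))])
V_deg, V_ok = detect_degenerate_axes(J, eig_threshold=1.0)
print(V_deg.ravel())                                        # direction along the corridor axis
print(constrain_update(np.array([1.0, 2.0, 3.0]), V_ok))    # x component is suppressed
```

In this toy corridor, the only degenerate direction is the corridor axis, so the LiDAR-driven update keeps its y and z components and leaves x to be determined by a motion prior.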

Comparison to Prior Degeneracy Mitigation Strategies

Classical approaches to degeneracy mitigation in LIO either exploit richer geometric features (e.g., intensity cues [coin_lio], point-to-point metrics [genzicp]) or fuse with additional sensors (cameras, wheel encoders) [fast_livo2, switch_slam]. These, however, are either hardware-dependent or insufficient in multi-modal-degenerate settings. Pose-prior techniques, such as X-ICP [x_icp] and constrained optimization [relead], can suppress drift short-term but are vulnerable to IMU bias accumulation over long durations.

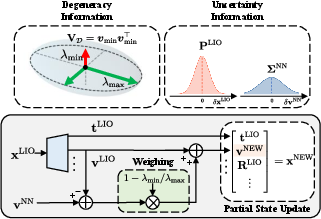

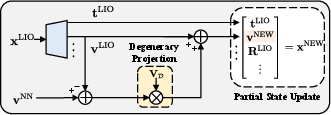

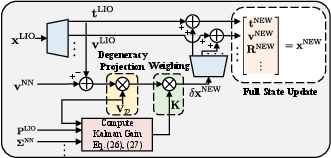

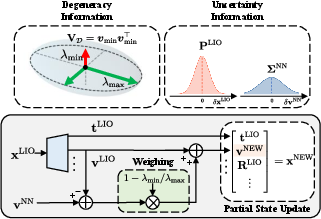

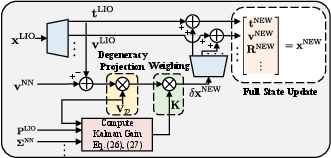

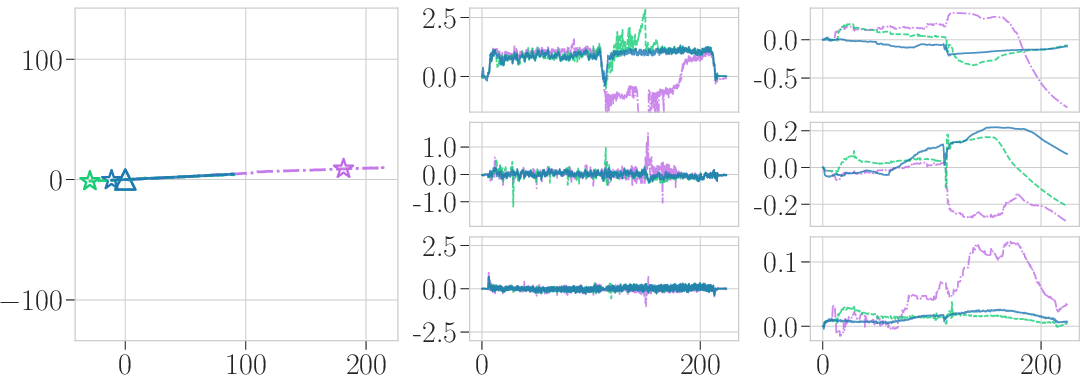

Data-driven methods like ININ-LIO [inin_lio] and the approach of Liao et al. [zongbo_tits] introduce deep inertial motion priors for degenerate axes. ININ-LIO projects the neural estimate directly without integrating its uncertainty, which degrades performance when the model is unreliable. The method of Liao et al. weights the IMU prior by a heuristic degeneracy score, but it also lacks a principled uncertainty coupling. ALIVE-LIO instead integrates the learned velocity estimates into the ESKF, combining the uncertainty of the system model with that of the learned measurement, and updates the complete state (not only velocity) through the correct cross-covariance structure (Figure 2).

Figure 2: Comparison of state update methods. (a): Degeneracy ratio-based weighted update (Liao et al.); (b): Direct projection onto the degenerate space (ININ-LIO); (c): ESKF-based update in ALIVE-LIO, updating the full state using both system and learning-based uncertainty.
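To make the ESKF-based update in (c) concrete, the following sketch applies a Kalman measurement update in which a learned body-frame velocity is observed only along the degenerate directions. It is a simplified illustration under stated assumptions: the state is reduced to position, velocity, and accelerometer bias, the orientation `R_wb` is treated as known, and all names (`degenerate_velocity_update`, `V_deg`, `cov_body_pred`) are hypothetical rather than taken from the paper.

```python
import numpy as np

def degenerate_velocity_update(x, P, R_wb, v_body_pred, cov_body_pred, V_deg):
    """Kalman update with a learned velocity, restricted to degenerate axes.

    x: (9,) reduced state [p(3), v(3), b_a(3)], with p and v in the world frame.
    P: (9, 9) error-state covariance.
    R_wb: (3, 3) body-to-world rotation, assumed known in this sketch
          (a full ESKF would also carry attitude error in the state).
    v_body_pred, cov_body_pred: learned body-frame velocity and its covariance.
    V_deg: (3, k) degenerate directions expressed in the body frame.
    """
    select_v = np.hstack([np.zeros((3, 3)), np.eye(3), np.zeros((3, 3))])
    H = V_deg.T @ R_wb.T @ select_v           # (k, 9) measurement Jacobian
    z = V_deg.T @ v_body_pred                 # measurement along degenerate axes
    z_hat = V_deg.T @ (R_wb.T @ x[3:6])       # predicted measurement
    R = V_deg.T @ cov_body_pred @ V_deg       # projected measurement noise
    S = H @ P @ H.T + R                       # innovation covariance
    K = np.linalg.solve(S, H @ P).T           # equals P H^T S^-1 (S and P symmetric)
    dx = K @ (z - z_hat)                      # correction for the full state
    I_KH = np.eye(9) - K @ H
    P_new = I_KH @ P @ I_KH.T + K @ R @ K.T   # Joseph form keeps P symmetric PSD
    return x + dx, P_new

# Toy example: velocity along x is correlated with the x accelerometer bias.
P = np.diag([1.0] * 3 + [1.0] * 3 + [0.01] * 3)
P[3, 6] = P[6, 3] = -0.05
x_new, P_new = degenerate_velocity_update(
    np.zeros(9), P, np.eye(3),
    np.array([1.0, 5.0, 5.0]), 0.01 * np.eye(3),
    np.array([[1.0], [0.0], [0.0]]))
print(x_new[3:6])   # only the x velocity is pulled toward the prediction
print(x_new[6:9])   # the x bias also moves, via the cross-covariance
```

The point of the toy example is that the gain `K` has nonzero rows wherever the covariance couples a state to velocity along the degenerate axis, so the bias is corrected together with the velocity, while velocity components along well-constrained axes are left to the LiDAR update.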

A key insight in ALIVE-LIO is the body-frame representation of all inertial data and estimated velocities. Empirical evaluations demonstrate that excluding gravity and bias estimates from the learning input yields better generalization across platforms and environments, while frame-aligned orientation histories (represented in the Lie algebra) minimize state representation mismatch and reduce drift artifacts.

IMU signals, pre-compensated for the latest bias and gravity estimates from the ESKF, are aggregated over multi-second intervals to capture temporal dependencies. The chosen neural architecture (bi-GRU) predicts both mean velocity and a full covariance, enabling dynamic weighting of the learning-based update in the filter.
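One common way for a network to output a full covariance that is guaranteed to be valid is to predict the entries of a Cholesky factor and train with a Gaussian negative log-likelihood. The sketch below illustrates that idea only; the six-parameter layout, the function names, and the loss are assumptions for illustration and are not confirmed as the parameterization used in ALIVE-LIO or AirIO.

```python
import numpy as np

def cholesky_params_to_cov(params):
    """Map 6 unconstrained numbers to a 3x3 symmetric positive-definite covariance.

    The parameters fill a lower-triangular factor L row by row; the diagonal is
    exponentiated so it stays strictly positive, and the covariance is L @ L.T.
    """
    L = np.zeros((3, 3))
    L[np.tril_indices(3)] = params
    diag = np.diag_indices(3)
    L[diag] = np.exp(L[diag])
    return L @ L.T

def gaussian_nll(v_true, v_pred, cov):
    """Gaussian negative log-likelihood, up to an additive constant."""
    r = v_true - v_pred
    _, logdet = np.linalg.slogdet(cov)
    return 0.5 * (r @ np.linalg.solve(cov, r) + logdet)

# A confident prediction (small covariance) is penalized heavily when it is wrong.
params_confident = np.array([-2.0, 0.0, -2.0, 0.0, 0.0, -2.0])
params_cautious = np.zeros(6)
v_true = np.array([1.0, 0.0, 0.0])
v_pred = np.array([0.0, 0.0, 0.0])
print(gaussian_nll(v_true, v_pred, cholesky_params_to_cov(params_confident)))
print(gaussian_nll(v_true, v_pred, cholesky_params_to_cov(params_cautious)))
```

A covariance produced this way can be passed directly to the filter as measurement noise, so a prediction the network is unsure about receives a smaller Kalman gain.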

Experimental Evaluation

ALIVE-LIO was extensively benchmarked against state-of-the-art LIO pipelines, degeneracy-robust methods, and learning-integrated baselines across diverse conditions: ground vehicles, drones, and handheld platforms traversing synthetic and real-world degenerate environments.

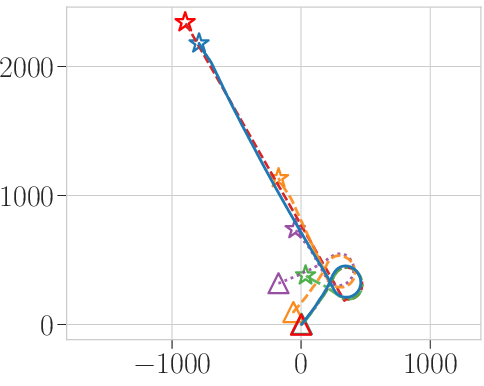

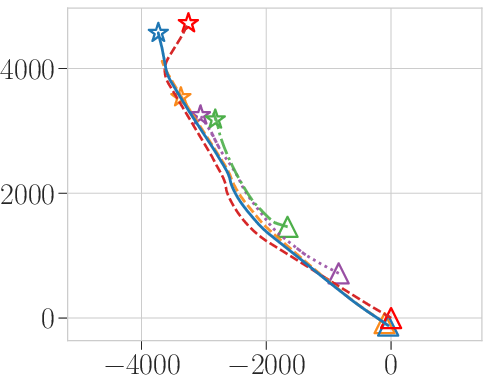

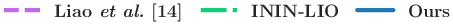

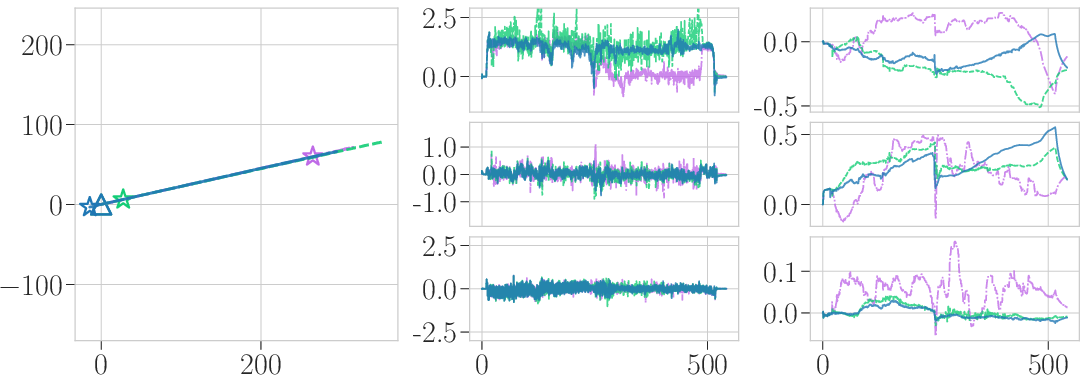

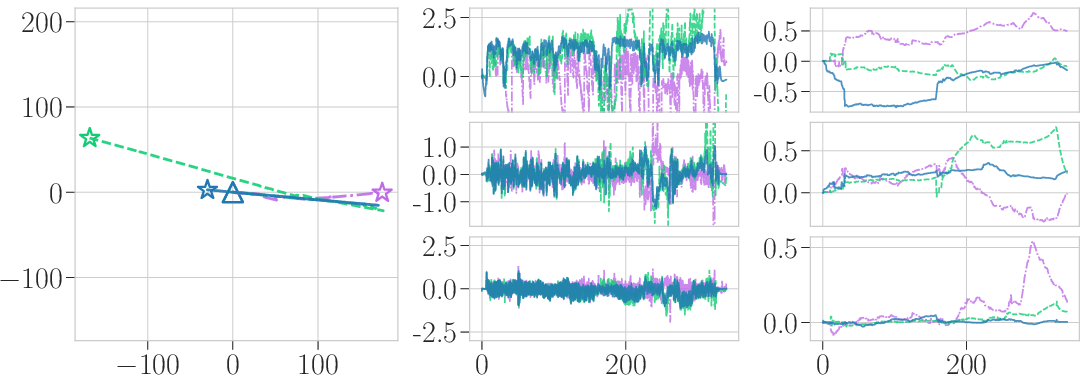

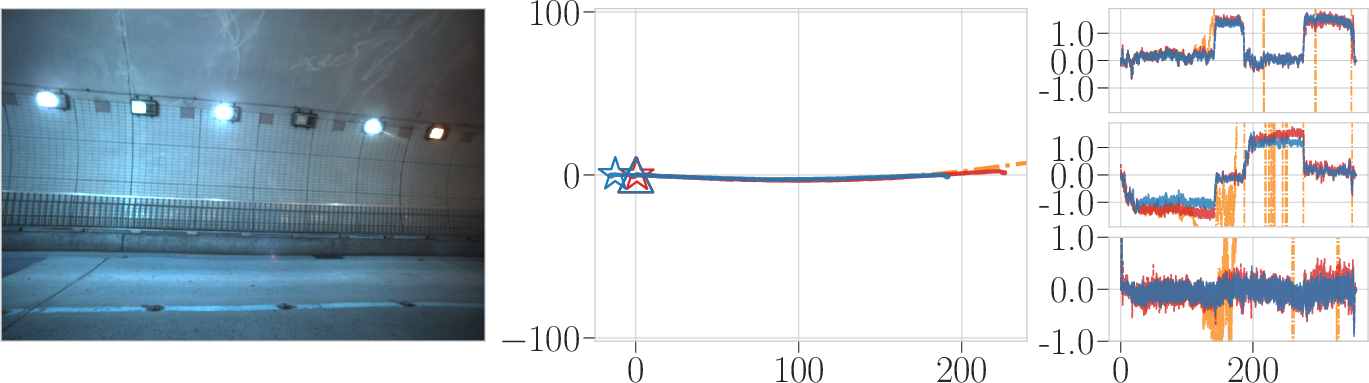

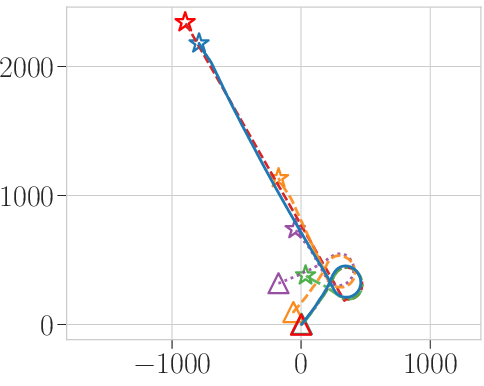

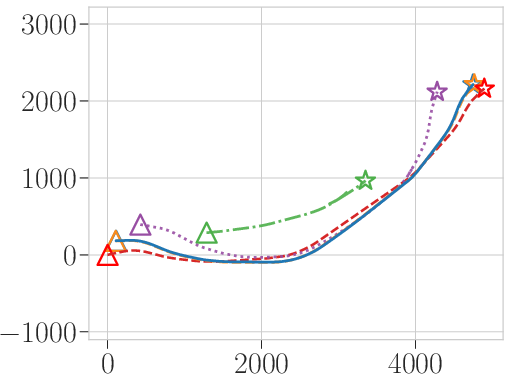

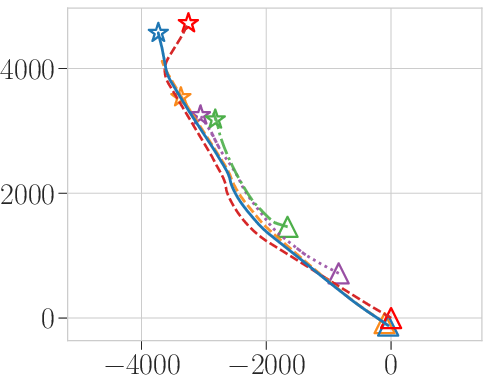

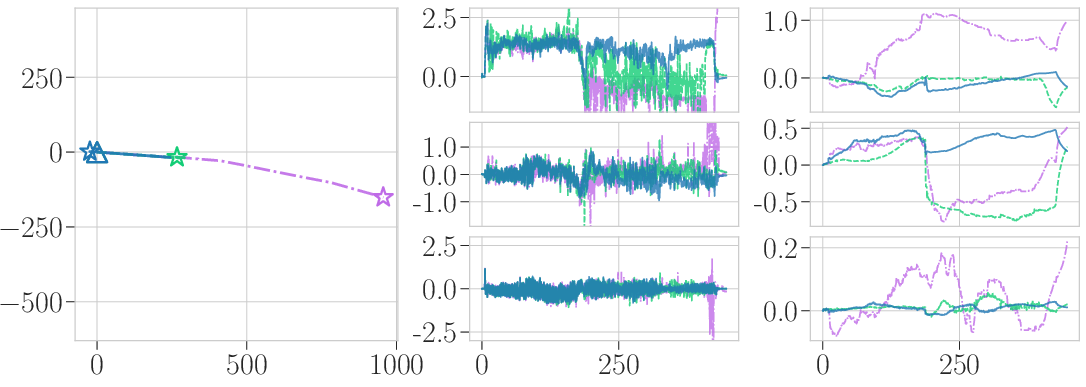

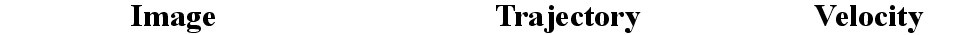

ALIVE-LIO exhibited substantially reduced pose drift in long tunnels, featureless corridors, and environments scanned with narrow-FOV LiDARs, outperforming all baselines in 22 of 32 public and private dataset sequences. In particular, on challenging automotive tunnel runs, classical LIO methods diverged rapidly under degeneracy, while ALIVE-LIO maintained bounded drift (Figure 3).

Figure 3: Vehicle motion: Estimated trajectory visualization under degenerate conditions (X–Y plane). The proposed method maintains consistent estimation when competing methods diverge or drift.

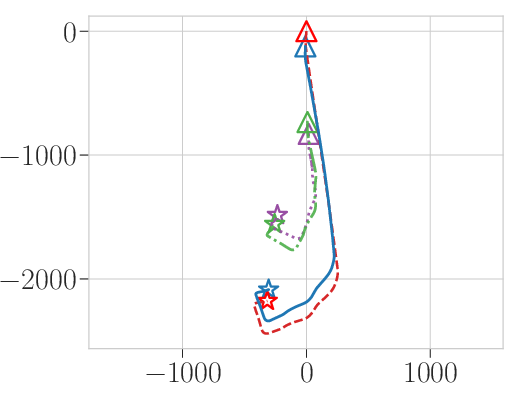

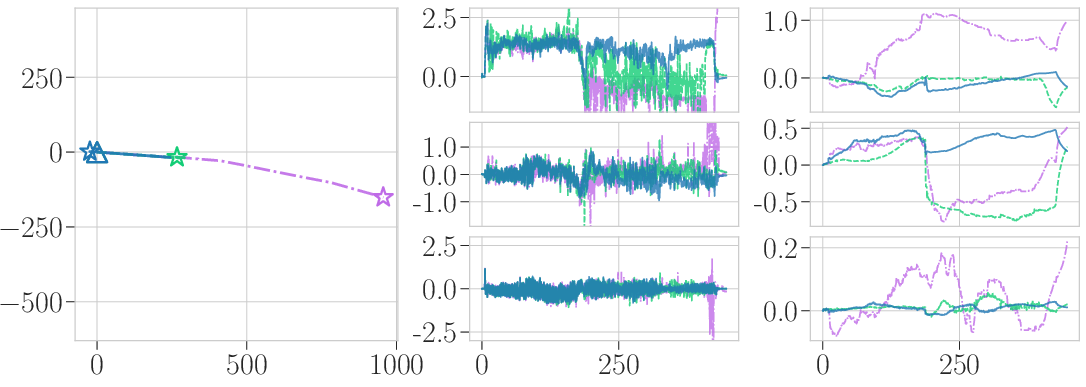

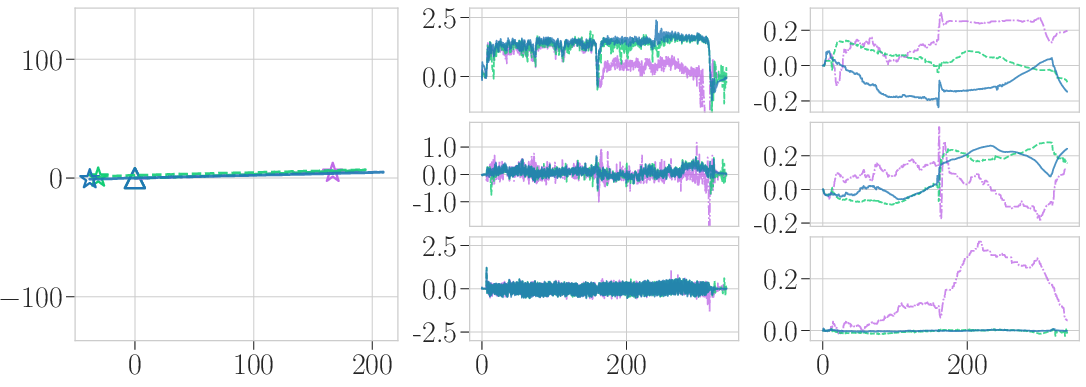

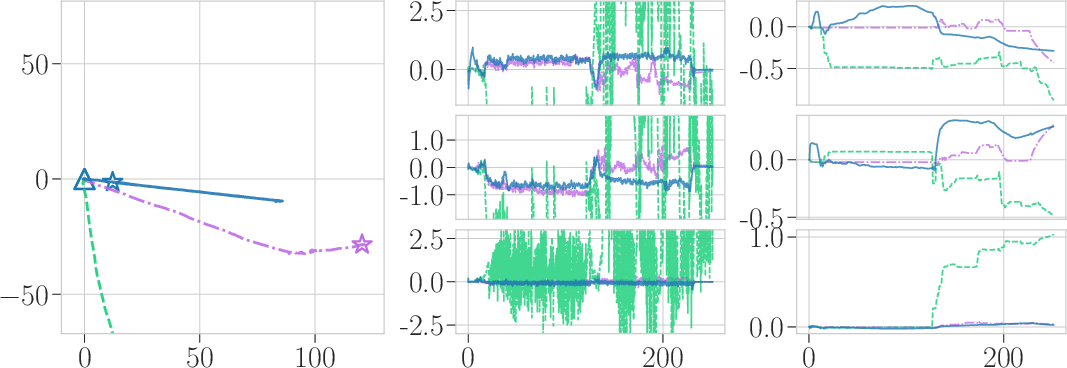

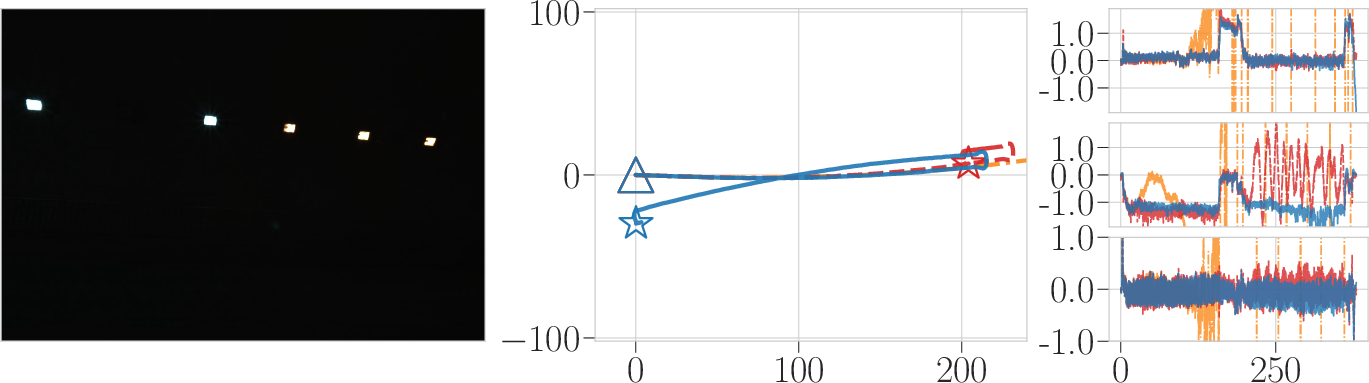

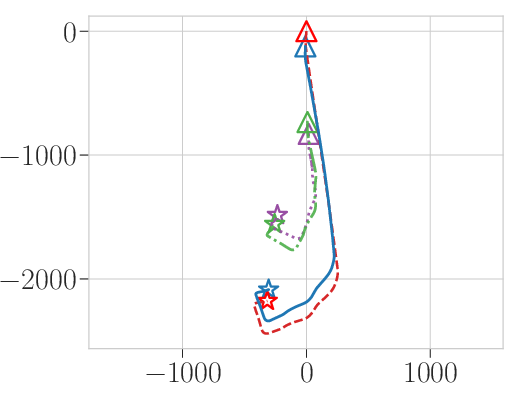

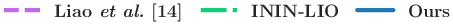

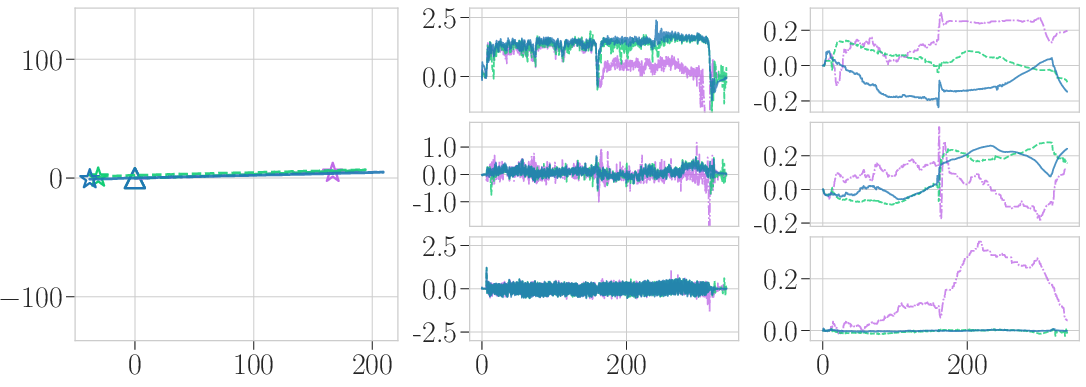

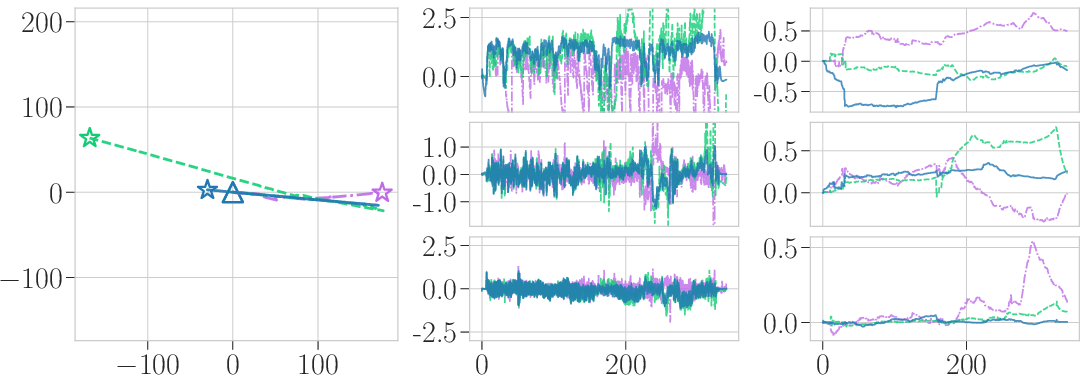

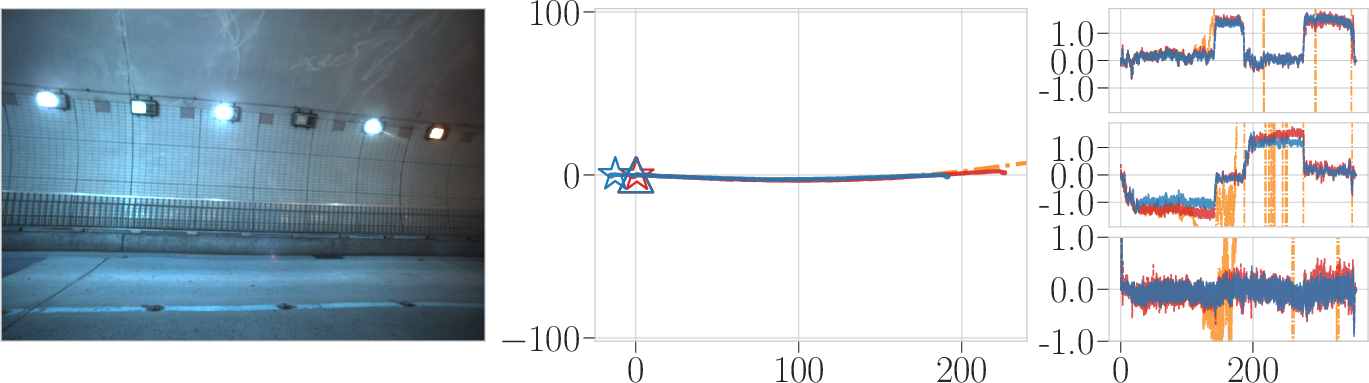

For handheld and aerial platforms, the classical methods collapsed in prolonged degeneracy, whereas ALIVE-LIO stably fused neural velocity predictions, suppressing non-physical reversals in velocity and maintaining trackability even as IMU drift mounted. However, aggressive rotational motion and failure to detect all degenerate axes limit effectiveness and are identified as primary failure cases.

Comprehensive ablation shows that full-state fusion in the ESKF, propagated through cross-covariances and weighted by predicted uncertainties, dramatically reduces error compared to prior art (Table: Comparison Between Odometry Updates) and prevents the oscillations and discontinuities typical of direct or naïvely weighted neural updates. Qualitative plots verify that, even under extended degeneracy and IMU bias, ALIVE-LIO’s trajectories remain smooth, velocity estimation is stable, and bias estimates recover faster when normal observability resumes (Figure 4).

Figure 4: Plots of the estimated trajectories, velocities, and acceleration biases in degenerate handheld tunnel scenarios. ESKF-based fusion in ALIVE-LIO yields stable velocity and bias estimates, suppressing the oscillations observed in other methods.

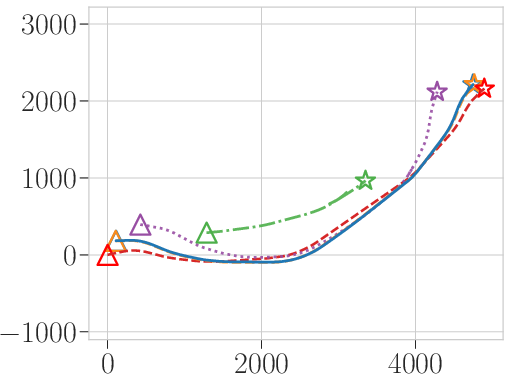

Applicability in Featureless and Multi-Modal-Degenerate Environments

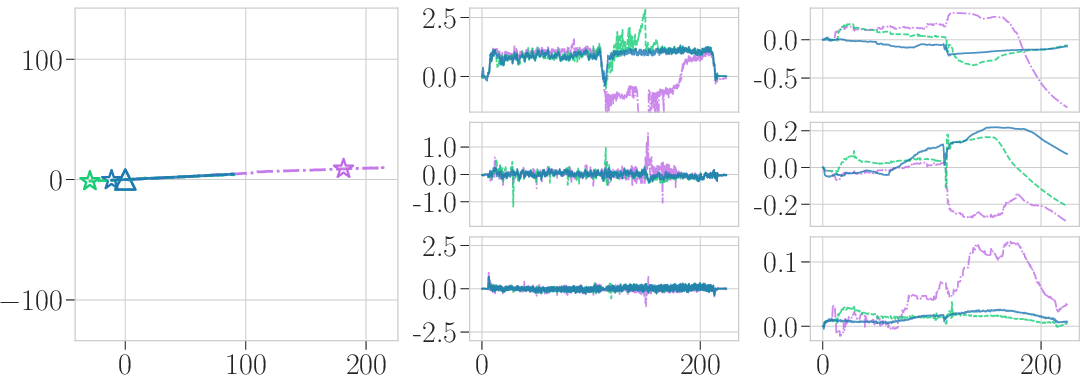

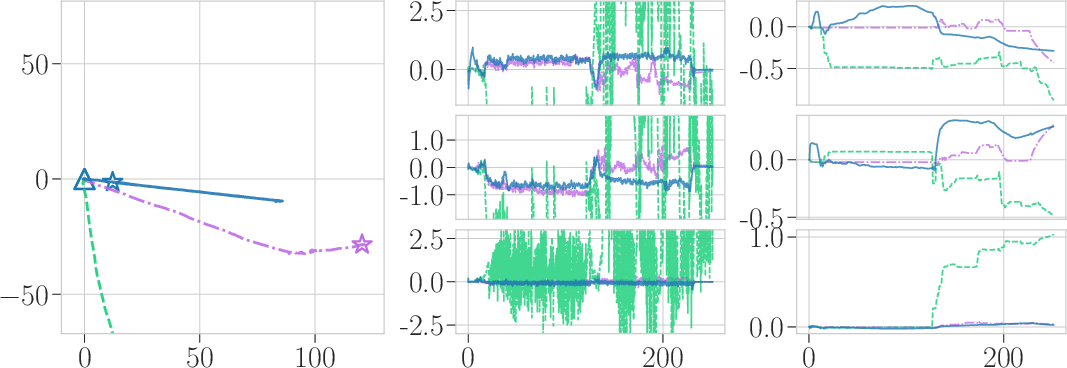

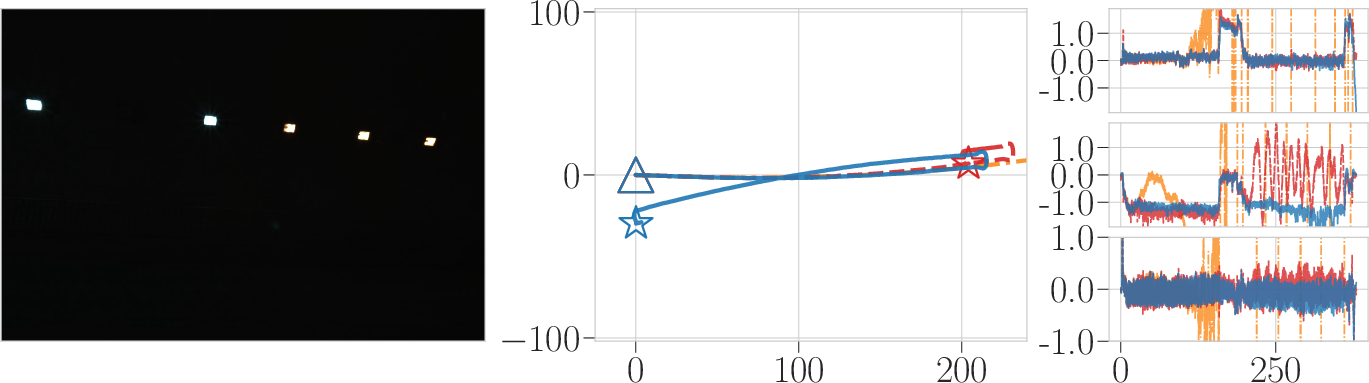

ALIVE-LIO serves as a robust fallback in photometrically and geometrically sparse environments where both LiDAR and camera cues are unreliable (Figure 5). In private datasets with minimal visual and geometric structure, the vision-aided FAST-LIVO2 failed, whereas ALIVE-LIO continued to produce usable odometry: drift still accumulated but did not diverge, making it a practical alternative in scenarios that break LiDAR-visual-inertial odometry (LVIO).

Figure 5: Performance comparison under abundant and sparse visual features. ALIVE-LIO provides stable odometry when visual and geometric cues are insufficient for LVIO systems.

Removing estimated gravity and bias terms from neural network inputs demonstrably improves cross-device and cross-motion generalization. This effect is more pronounced in scenarios with complex, multi-axis rotations and non-linear motion.

Implications, Limitations, and Future Prospects

The ALIVE-LIO system represents a methodological advance in probabilistic sensor fusion under partial observability and a step toward modular SLAM pipelines that remain robust to various forms of environmental degeneracy. By directly modeling uncertainty and coupling learning-based priors within a classical filtering framework, it offers a template for integrating data-driven dynamics into SLAM. Practically, ALIVE-LIO is relevant for field robotics, search-and-rescue, autonomous vehicles operating in large-scale, poorly structured infrastructure, and mapping in visually deprived or featureless environments.

Limitations include performance degradation in highly dynamic rotational regimes, dependence on reliable degeneracy detection, and slow recovery when normal observability is restored. Addressing these may require joint learning of rotational and translational motion, adaptive degeneracy thresholds, or meta-learning methods for out-of-distribution detection.

Future research could pursue self-supervised learning for online adaptation to changing sensor configurations and environments, end-to-end differentiable filtering, or extension of the degeneracy-aware paradigm to multi-modal SLAM beyond LiDAR-IMU (e.g., radar, event cameras).

Conclusion

ALIVE-LIO establishes a framework for degeneracy-aware LiDAR-Inertial Odometry that fuses learning-based velocity estimation into the ESKF. By explicitly modeling degeneracy, tightly coupling uncertainties, and updating the entire state vector, it substantially improves robustness and reliability in previously intractable scenarios. The approach is validated across platforms and datasets, motivating further exploration of uncertainty-driven, learning-enhanced state estimation for next-generation autonomous systems.