- The paper introduces internal circuit metrics, notably Dependency Depth Bias, to quantify model generalization in Vision Transformers.

- It uses Canonical Correlation Analysis (CCA) and EAP-IG for structural analysis, distinguishing robust deep-layer connectivity from superficial shortcuts through shallow layers.

- The proposed Circuit Shift Score accurately predicts post-deployment performance shifts, outperforming traditional confidence-based approaches.

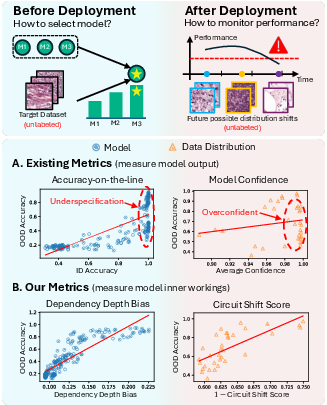

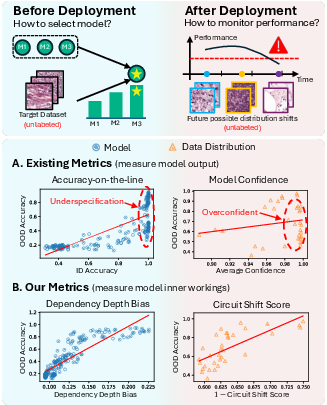

Evaluating the generalization of vision models, particularly Vision Transformers (ViTs), under distribution shift is central to deployment in real-world, high-stakes scenarios where labeled target data is often scarce. Traditional approaches rely on output-based proxy metrics, such as in-distribution (ID) accuracy or output confidence, which can be unreliable because of phenomena like underspecification and overconfidence. This paper introduces a fundamentally different perspective: using a model's internal computational circuits as a predictive signal of generalization, targeting two practical scenarios, pre-deployment model selection and post-deployment performance monitoring.

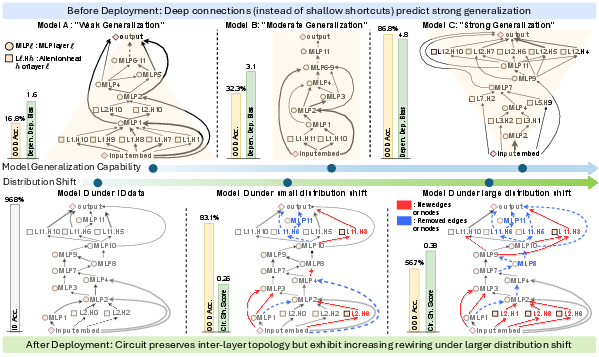

Figure 1: Overview of two challenges (model selection pre-deployment, performance monitoring post-deployment), highlighting the shortcomings of existing metrics and superior correlation of circuit-based metrics.

Circuit Discovery and Structural Analysis

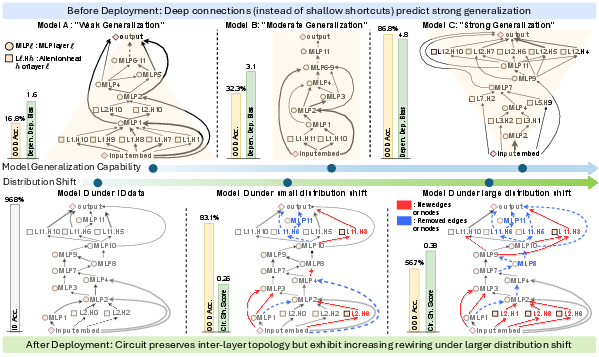

The approach is grounded in mechanistic interpretability. A ViT's computation is modeled as a directed graph at sub-layer granularity, with individual attention heads and MLP layers as atomic nodes. A circuit is formalized as a mapping from edges to weights, where each weight reflects the causal importance of that edge for preserving the model's output distribution, assessed by mean ablation. Edge Attribution Patching with Integrated Gradients (EAP-IG) is identified as an efficient and faithful circuit discovery method for ViTs.
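To make edge-level mean ablation concrete, here is a minimal sketch on a hypothetical toy graph (two compute nodes `A` and `B` on a residual stream plus an output head), not a real ViT. It scores each edge exactly: the sender's contribution along that one edge is replaced by its batch mean, and the KL divergence between the clean and ablated output distributions is measured. EAP-IG approximates these same ablation effects with integrated gradients, so that all edges can be scored from a few backward passes instead of one forward pass per edge. The node names, random weights, and top-3 selection are illustrative assumptions, not the paper's implementation.

```python
import numpy as np

rng = np.random.default_rng(0)
D, C, N = 8, 4, 64  # toy width, number of classes, batch size

# Hypothetical toy graph: input x feeds nodes A and B; all three feed the output head.
W_a = rng.normal(size=(D, D)) / np.sqrt(D)
W_b = rng.normal(size=(D, D)) / np.sqrt(D)
W_o = rng.normal(size=(C, D)) / np.sqrt(D)
EDGES = [("x", "A"), ("x", "B"), ("A", "B"), ("x", "out"), ("A", "out"), ("B", "out")]


def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def forward(x, ablate=None, means=None):
    """Run the toy graph; if ablate == (src, dst), that one edge carries means[src] instead."""
    def edge(src, dst, value):
        if ablate == (src, dst):
            return np.broadcast_to(means[src], value.shape)
        return value

    acts = {"x": x}
    acts["A"] = np.tanh(edge("x", "A", x) @ W_a.T)
    acts["B"] = np.tanh((edge("x", "B", x) + edge("A", "B", acts["A"])) @ W_b.T)
    resid = edge("x", "out", x) + edge("A", "out", acts["A"]) + edge("B", "out", acts["B"])
    return resid @ W_o.T, acts


def edge_scores(x):
    """Exact mean-ablation importance of every edge: batch-mean KL(clean || ablated)."""
    logits, acts = forward(x)
    means = {name: a.mean(axis=0) for name, a in acts.items()}
    p = softmax(logits)
    scores = {}
    for e in EDGES:
        q = softmax(forward(x, ablate=e, means=means)[0])
        scores[e] = float(np.mean(np.sum(p * (np.log(p) - np.log(q)), axis=-1)))
    return scores


x = rng.normal(size=(N, D))
scores = edge_scores(x)
circuit = sorted(scores, key=scores.get, reverse=True)[:3]  # keep the top-3 edges
```

Ablating one edge at a time keeps every other path intact, which is what distinguishes edge-level circuits from node-level ones; the cost is one extra forward pass per edge, which motivates gradient-based approximations such as EAP-IG.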

Figure 2: Visualization of circuits pre- and post-deployment, showing distinct structural motifs correlated with generalization and rewiring dynamics under shift.

Pre-Deployment: Dependency Depth Bias and Model Selection

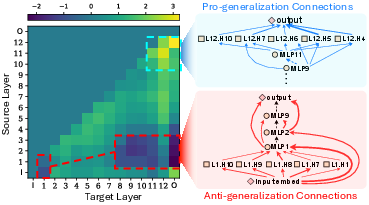

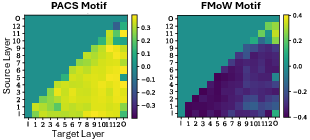

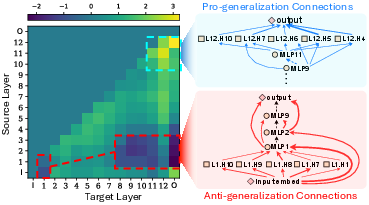

Canonical Correlation Analysis (CCA) over circuit-induced inter-layer dependency matrices reveals a universal generalization motif: models with stronger out-of-distribution (OOD) robustness exhibit deeper layer connectivity (a “∇”-like structure), whereas weaker models rely on shortcuts through shallow layers (a “Δ”-like structure). To quantify this motif, the Dependency Depth Bias (DDB) metric captures the ratio of deep to shallow layer dependencies and is instantiated in global, deep, and output variants.
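The exact DDB formulas are not reproduced in this summary, so the following is only a hypothetical sketch of the idea. It aggregates absolute edge weights from a discovered circuit into an L x L inter-layer dependency matrix `M[src, dst]`, then divides the weight on dependencies that originate in the deep half of the network by the weight originating in the shallow half. Restricting the target columns gives rough analogues of the deep and output variants. The midpoint split, the choice to classify dependencies by source layer, and all function names are assumptions.

```python
import numpy as np


def layer_dependency_matrix(edge_weights, num_layers):
    """Sum |weight| of circuit edges into M[src_layer, dst_layer]."""
    M = np.zeros((num_layers, num_layers))
    for (src, dst), w in edge_weights.items():
        M[src, dst] += abs(w)
    return M


def dependency_depth_bias(M, split=None, dst=None, eps=1e-12):
    """Hypothetical DDB: deep-source weight divided by shallow-source weight.

    split: first layer counted as "deep" (defaults to the midpoint).
    dst:   optional target-layer selection (e.g. deep layers or the last layer)
           to mimic the deep/output variants; None uses all targets (global).
    """
    num_layers = M.shape[0]
    split = num_layers // 2 if split is None else split
    sub = M if dst is None else M[:, dst]
    return float(sub[split:].sum() / (sub[:split].sum() + eps))


# Toy circuit on a 4-layer model (layers 0..3); edge weights are made up.
M = layer_dependency_matrix({(0, 1): 1.0, (1, 3): 2.0, (2, 3): 3.0, (3, 3): 1.0}, num_layers=4)
ddb_global = dependency_depth_bias(M)           # all target layers
ddb_out = dependency_depth_bias(M, dst=[3])     # only edges into the last layer
```

A ratio above 1 means the circuit leans on deep-layer dependencies (the “∇”-like pattern); a ratio below 1 indicates shallow-layer reliance.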

Figure 3: CCA identifies the universal generalization motif, highlighting layer dependencies positively/negatively correlated with performance.
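With a single scalar target such as OOD accuracy, the first canonical direction of CCA reduces to `w = inv(S_xx) @ S_xy` up to scale, so the analysis can be sketched in a few lines of NumPy. Each row of `X` is one model's flattened inter-layer dependency matrix; reshaping `w` back to L x L shows which layer-to-layer dependencies correlate positively or negatively with performance. The ridge term `reg` is an assumption added because there are usually fewer models than matrix entries, and the paper's exact CCA configuration may differ. The data at the bottom is synthetic and only demonstrates the call.

```python
import numpy as np


def cca_motif(dep_mats, ood_acc, reg=1e-3):
    """Regularized CCA between flattened dependency matrices and a scalar score.

    dep_mats: (n_models, L, L) inter-layer dependency matrices.
    ood_acc:  (n_models,) OOD accuracy of each model.
    Returns the canonical weights reshaped to (L, L) and the canonical correlation.
    """
    n, L, _ = dep_mats.shape
    X = dep_mats.reshape(n, L * L)
    X = X - X.mean(axis=0)
    y = ood_acc - ood_acc.mean()
    S_xx = X.T @ X / (n - 1) + reg * np.eye(L * L)
    S_xy = X.T @ y / (n - 1)
    w = np.linalg.solve(S_xx, S_xy)           # first canonical direction (up to scale)
    rho = float(np.corrcoef(X @ w, y)[0, 1])  # canonical correlation
    return w.reshape(L, L), rho


# Synthetic illustration: accuracy depends only on the layer-1 -> layer-2 dependency.
rng = np.random.default_rng(0)
mats = rng.random((200, 3, 3))
acc = 0.5 + 0.3 * mats[:, 1, 2] + 0.01 * rng.normal(size=200)
weights, rho = cca_motif(mats, acc)
```

Positive cells in `weights` correspond to dependencies associated with better OOD accuracy and negative cells to dependencies associated with worse accuracy, which is the kind of map Figure 3 visualizes.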

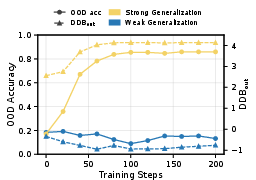

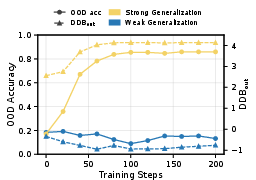

DDB metrics consistently show superior predictive power across the PACS, Camelyon17, and Terra Incognita datasets, reaching an R² of up to 0.766 with OOD performance and outperforming baselines by 13.4% on average. DDB also reflects training dynamics: in robust models, DDB rises in step with OOD accuracy, confirming its utility not only for model selection but also for tracking generalization in real time as training progresses.
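The reported R² values measure how well a label-free metric explains OOD accuracy across a pool of candidate models. A minimal sketch, assuming R² is the coefficient of determination of a one-variable least-squares fit (equivalently, the squared Pearson correlation) and that selection simply picks the candidate with the highest DDB. The example values are made up to show the calls and are not results from the paper.

```python
import numpy as np


def r2_metric_vs_ood(metric, ood_acc):
    """R^2 of a least-squares line predicting OOD accuracy from a metric."""
    metric = np.asarray(metric, dtype=float)
    ood_acc = np.asarray(ood_acc, dtype=float)
    slope, intercept = np.polyfit(metric, ood_acc, deg=1)
    residuals = ood_acc - (slope * metric + intercept)
    ss_res = np.sum(residuals ** 2)
    ss_tot = np.sum((ood_acc - ood_acc.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


def select_model(metric):
    """Label-free selection: pick the candidate with the highest metric (e.g. DDB)."""
    return int(np.argmax(metric))


# Made-up values for five candidate models, for illustration only.
ddb = [0.8, 1.1, 1.3, 0.9, 1.6]
ood = [0.61, 0.66, 0.70, 0.60, 0.74]
r2 = r2_metric_vs_ood(ddb, ood)
best = select_model(ddb)
```

Only `select_model` is usable before deployment, since it needs no labels; `r2_metric_vs_ood` is the offline check that the metric is worth trusting. Applying the same fit to DDB values logged at training checkpoints gives the training-dynamics view in Figure 4.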

Figure 4: Alignment of OOD accuracy with the DDB dynamic during training, distinguishing robust and weak generalizers.

Post-Deployment: Circuit Shift Score for Performance Monitoring

After deployment, however, a static inter-layer topology no longer correlates reliably with performance: CCA reveals generalization motifs that contradict each other across shifts (e.g., PACS vs. FMoW).

Figure 5: Contradictory post-deployment generalization motifs, illustrating the breakdown of consistent layerwise signals.

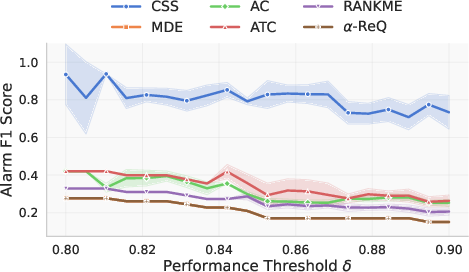

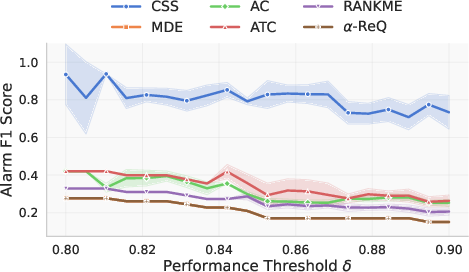

The Circuit Shift Score (CSS) is therefore introduced to quantify the relative deviation between the circuits induced by ID and OOD data, using either vector-based or graph-based distances. Fine-grained vector-based measures, especially Spearman's rank correlation coefficient (SRCC), prove most effective. CSS metrics reach an R² of up to 0.811 with OOD performance, exceeding the strongest baseline by 0.341, and enable early alarms for performance degradation, with a 45% gain in F1 detection scores in clinically relevant ranges.
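A minimal sketch of an SRCC-based shift score, assuming the circuit is represented as a vector of edge scores (for example, from EAP-IG) computed once on ID data and once on unlabeled target data. The score here is defined as one minus their Spearman rank correlation, so 0 means the edge ranking is unchanged and larger values mean more rewiring. The paper's exact "relative" normalization is not spelled out in this summary; this definition and the helper names are illustrative assumptions.

```python
import numpy as np


def average_ranks(a):
    """Ranks 1..n, with tied values assigned their average rank."""
    a = np.asarray(a, dtype=float)
    order = np.argsort(a, kind="mergesort")
    ranks = np.empty(len(a))
    ranks[order] = np.arange(1, len(a) + 1)
    _, inverse = np.unique(a, return_inverse=True)
    sums = np.bincount(inverse, weights=ranks)
    counts = np.bincount(inverse)
    return (sums / counts)[inverse]


def spearman(a, b):
    """Spearman rank correlation = Pearson correlation of the rank vectors."""
    return float(np.corrcoef(average_ranks(a), average_ranks(b))[0, 1])


def circuit_shift_score(id_edge_scores, ood_edge_scores):
    """Hypothetical CSS: 0 if the edge ranking is unchanged, up to 2 if fully reversed."""
    return 1.0 - spearman(id_edge_scores, ood_edge_scores)


id_scores = [0.9, 0.5, 0.3, 0.1]
css_same = circuit_shift_score(id_scores, [1.8, 1.0, 0.6, 0.2])  # same ranking: 0 up to rounding
css_flip = circuit_shift_score(id_scores, [0.1, 0.3, 0.5, 0.9])  # reversed ranking: 2 up to rounding
```

Using ranks rather than raw scores makes the comparison insensitive to an overall rescaling of attribution magnitudes between domains, which may be one reason rank-based measures work well here.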

Figure 6: F1 score for alarms on Camelyon17, with CSS outperforming baselines within clinically valid performance thresholds.
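The alarm evaluation can be read as a binary detection problem: for each deployment shift, an alarm fires when the shift score exceeds a threshold, and the alarm is correct when the true OOD accuracy has fallen below an acceptable level. The sketch below computes F1 under that reading. Both thresholds (`css_threshold`, `min_acc`) are hypothetical parameters, and the example values are invented for illustration.

```python
import numpy as np


def alarm_f1(css, ood_acc, css_threshold, min_acc):
    """F1 of 'CSS > css_threshold' as a detector of 'accuracy < min_acc'."""
    css = np.asarray(css, dtype=float)
    ood_acc = np.asarray(ood_acc, dtype=float)
    alarm = css > css_threshold
    degraded = ood_acc < min_acc
    tp = np.sum(alarm & degraded)
    fp = np.sum(alarm & ~degraded)
    fn = np.sum(~alarm & degraded)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return float(2 * precision * recall / (precision + recall))


# Invented example: four deployment shifts.
f1 = alarm_f1(css=[0.1, 0.6, 0.8, 0.3], ood_acc=[0.92, 0.71, 0.65, 0.80],
              css_threshold=0.5, min_acc=0.75)
```

Sweeping `min_acc` over a range of accuracy levels that are acceptable in a given clinical setting would produce a curve of F1 against the degradation threshold, similar in spirit to Figure 6.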

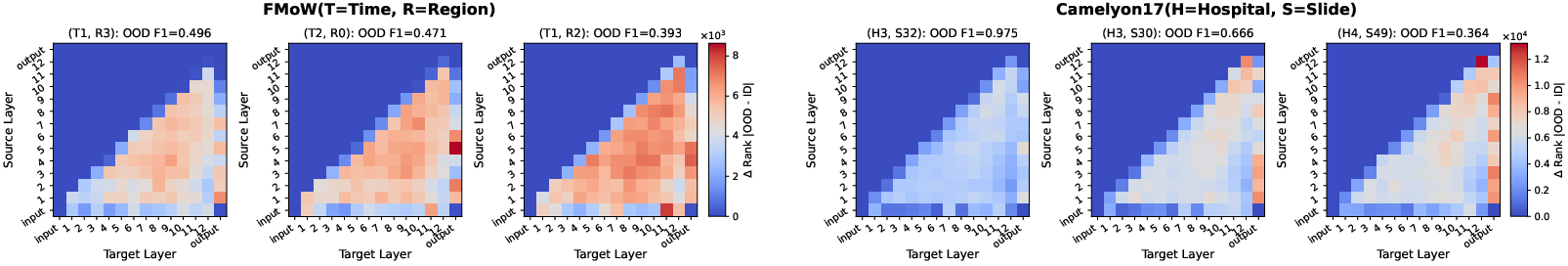

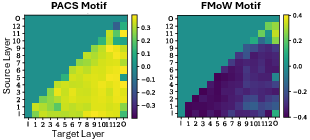

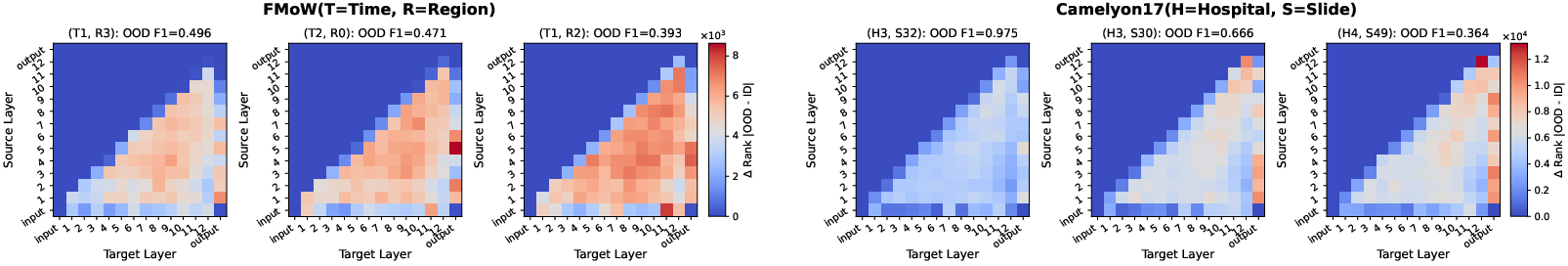

Visualization of circuit rank changes across domains provides qualitative insight into the nature of performance degradation: FMoW experiences widespread cross-layer changes, while Camelyon17 shifts are concentrated in deeper layers.

Figure 7: Heatmaps depicting circuit rank changes across source-target layers under distribution shift for FMoW and Camelyon17.
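Heatmaps of this kind can be sketched as follows. Each edge is ranked by its score under ID data and under shifted data, the absolute rank change is computed per edge, and these changes are averaged within each (source layer, target layer) cell. The edge-to-layer bookkeeping and the averaging choice are assumptions for illustration. Ties are broken by position rather than averaged.

```python
import numpy as np


def ranks(scores):
    """Rank 0 = most important edge (ties broken by position)."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    r = np.empty(len(order), dtype=int)
    r[order] = np.arange(len(order))
    return r


def rank_change_heatmap(edges, id_scores, ood_scores, num_layers):
    """Mean |rank change| of edges grouped by (source layer, target layer).

    edges: list of (src_layer, dst_layer) pairs, aligned with the score vectors.
    Cells with no edges are left as NaN.
    """
    delta = np.abs(ranks(id_scores) - ranks(ood_scores))
    total = np.zeros((num_layers, num_layers))
    count = np.zeros((num_layers, num_layers))
    for (src, dst), d in zip(edges, delta):
        total[src, dst] += d
        count[src, dst] += 1
    heat = np.full((num_layers, num_layers), np.nan)
    np.divide(total, count, out=heat, where=count > 0)
    return heat


# Toy example: only the two deepest edges swap places under the shift.
edges = [(0, 1), (0, 2), (1, 2), (2, 2)]
heat = rank_change_heatmap(edges, [0.9, 0.7, 0.4, 0.1], [0.9, 0.7, 0.1, 0.4], num_layers=3)
```

In this toy case the nonzero cells sit in the deeper rows and columns. A widespread pattern of nonzero cells would instead indicate cross-layer rewiring.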

Practical and Theoretical Implications

The findings demonstrate that internal circuit structure in ViTs robustly predicts generalization, both for label-free model selection and monitoring. This establishes mechanistic interpretability-based circuit discovery as a new paradigm for generalization evaluation, supplementing and sometimes surpassing output-based proxies. The methodology is readily applicable to a wide spectrum of vision benchmarks and is extensible to other domains (e.g., NLP, clinical imaging). Furthermore, the use of circuit dynamics offers a pathway to optimizing models directly for robust generalization motifs, influencing future training protocols and architectural search.

The main limitation is computational overhead: circuit discovery currently incurs substantially greater cost than confidence-based metrics due to repeated gradient estimation. However, the overhead is primarily in the backward pass and can be mitigated by adopting lighter methods (e.g., EAP), batch parallelization, or zeroth-order approximations.

Conclusion

This work establishes the predictive utility of circuit-based metrics for generalization in ViTs across both pre-deployment and post-deployment scenarios. Dependency Depth Bias and Circuit Shift Score surpass conventional metrics in correlation strength and alarm efficacy, validating the practical value of mechanistic interpretability in high-stakes deployment. Future directions include developing scalable circuit discovery algorithms and explicitly optimizing circuit metrics during training to induce robust and generalizable computational pathways.