- The paper introduces NC-Diffusion, a framework that constrains the diffusion process to quantization noise so that reconstruction is deterministic.

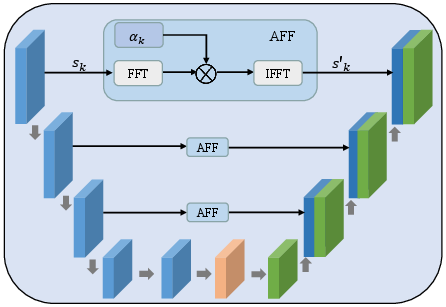

- It employs a U-Net with Adaptive Frequency-Domain Filtering (AFF) to preserve high-frequency image details and reduce artifacts.

- Extensive experiments demonstrate lower BD-rate, improved FID and LPIPS metrics, and order-of-magnitude faster inference compared to prior methods.

Noise-Constrained Diffusion for High-Fidelity Image Compression

Introduction

Conventional learned image compression frameworks, typically built on deep neural networks, optimize an end-to-end rate-distortion trade-off but often fail to reconstruct high-frequency details because of quantization in the latent space. Recent work applying diffusion models, especially Denoising Diffusion Probabilistic Models (DDPMs), to generative compression has improved perceptual quality, but it introduces stochasticity because the diffusion process is driven by random Gaussian noise. The mismatch between the quantization noise a codec actually produces and the Gaussian noise the diffusion model assumes leads to suboptimal fidelity and longer inference times.

The paper "A Noise Constrained Diffusion (NC-Diffusion) Framework for High Fidelity Image Compression" (2604.06568) addresses these limitations by introducing a noise-constrained diffusion paradigm that explicitly models quantization-induced noise as the starting distribution for the reverse diffusion process. This enables deterministic, faithful reconstruction with improved perceptual quality and inference efficiency.

Problem Characterization and Motivation

Traditional diffusion-based image compression methods initiate the generative process from Gaussian noise, which favors sample diversity but compromises faithfulness to the input. The authors identify and experimentally validate a noise mismatch problem: the structured, image-dependent quantization noise differs substantially from random Gaussian noise in both signal statistics and spatial structure.

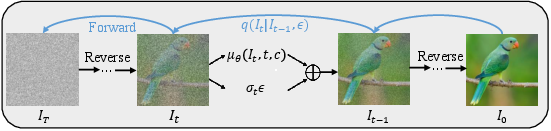

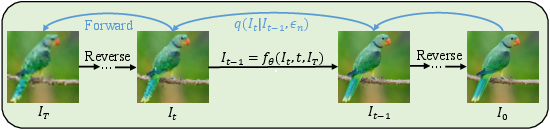

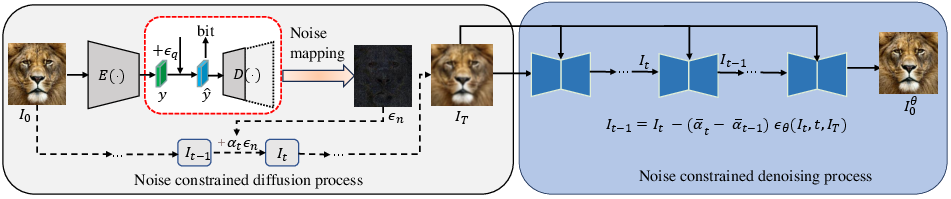

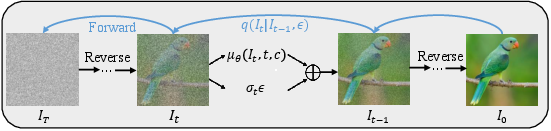

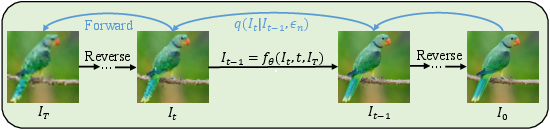

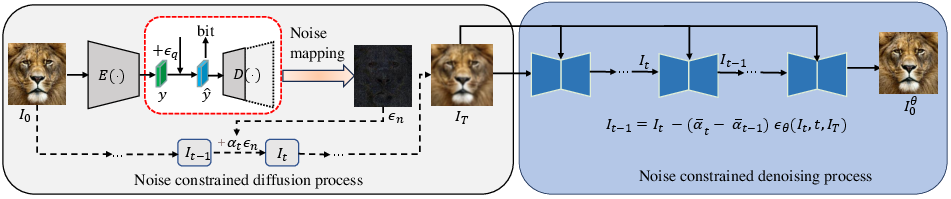

Figure 1: A comparison of inference processes for existing diffusion-based image compression and the proposed NC-Diffusion framework, with the latter leveraging quantization-induced noise for deterministic reconstruction.

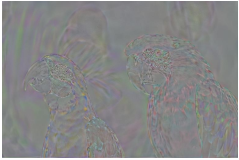

This mismatch injects random artifacts into the reconstructions, which runs counter to the goal of compression: faithful, high-fidelity reconstruction. Figure 2 from the paper compares normalized quantization noise with Gaussian noise and shows structured patterns along edges in the quantization noise that are absent from the Gaussian noise.

Figure 2: Normalized quantization noise (left) exhibits strong structure near edges, in contrast to the spatially white Gaussian noise (right), underscoring the noise mismatch in existing approaches.
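To make the mismatch concrete, here is a minimal plain-Python sketch, assuming simple element-wise rounding as the quantizer (real codecs quantize multi-channel latents and may use learned offsets). It illustrates two properties the argument relies on: rounding noise is bounded by half a quantization step and is a deterministic function of the latent, whereas sampled Gaussian noise is unbounded and changes from draw to draw. The toy latent values and function names are invented for illustration.

```python
import random


def quantization_noise(latent):
    """Noise added by scalar rounding: q(y) - y, with q(y) = round(y)."""
    return [round(y) - y for y in latent]


def gaussian_noise(n, sigma, seed):
    """Zero-mean Gaussian noise, as used to initialise standard DDPM sampling."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, sigma) for _ in range(n)]


# Hypothetical 1-D latent: a gentle ramp with a sharp "edge" between index 7 and 8.
latent = [0.3 * i for i in range(8)] + [5.0 + 0.3 * i for i in range(8)]

q_first = quantization_noise(latent)
q_second = quantization_noise(latent)
# sigma ~ sqrt(1/12), the standard deviation of uniform rounding noise on [-0.5, 0.5].
g_first = gaussian_noise(len(latent), sigma=0.29, seed=0)
g_second = gaussian_noise(len(latent), sigma=0.29, seed=1)

# Rounding noise never exceeds half a quantization step and is fully reproducible.
print(max(abs(e) for e in q_first) <= 0.5)  # True
print(q_first == q_second)                  # True
# Independent Gaussian draws differ and carry no information about the latent.
print(g_first == g_second)                  # False
```

Matching only the variance (sigma of about 0.29) does not make the two noises interchangeable: the rounding residual is fixed once the latent is known, which is what a noise-constrained process can exploit.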

The NC-Diffusion Framework

The core innovation of this work is a noise-constrained diffusion process in which the forward and reverse processes are matched to the quantization noise statistics of neural codecs. Instead of injecting synthetic Gaussian noise, the process starts from the quantized latent code, treats the noise as arising solely from quantization, and runs the diffusion model deterministically at inference time.
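The summary does not give the paper's exact update rule, so the following is only an illustrative sketch under a stated assumption: the forward process is taken to be a deterministic, cold-diffusion-style linear interpolation from the clean latent y (t = 0) to the quantized latent y_hat = round(y) (t = T), so the only corruption ever present is the quantization residual. The reverse process starts from y_hat, which the decoder already has, rather than from a Gaussian sample. `forward`, `reverse`, and the denoiser callable are hypothetical names; `oracle_denoiser` stands in for the trained U-Net.

```python
def forward(y, y_hat, t, T):
    """Hypothetical noise-constrained forward process.

    Linearly moves the clean latent y (t = 0) towards the quantized latent
    y_hat (t = T), so the only corruption ever added is y_hat - y.
    """
    return [a + (t / T) * (b - a) for a, b in zip(y, y_hat)]


def reverse(y_hat, denoise, T):
    """Deterministic reverse process that starts from y_hat instead of Gaussian noise.

    Cold-diffusion-style update: x_{t-1} = x_t - D(x0, t) + D(x0, t-1),
    where x0 = denoise(x_t, t) is the predicted clean latent and D = forward.
    No random numbers are drawn, so the same y_hat always decodes the same way.
    """
    x = list(y_hat)
    for t in range(T, 0, -1):
        x0_pred = denoise(x, t)
        now = forward(x0_pred, y_hat, t, T)
        before = forward(x0_pred, y_hat, t - 1, T)
        x = [xi - a + b for xi, a, b in zip(x, now, before)]
    return x


# Toy example with invented numbers.
y = [0.2, 1.7, -0.6, 3.4]               # clean latent (unknown to the decoder)
y_hat = [float(round(v)) for v in y]    # quantized latent the decoder receives


def oracle_denoiser(x, t):
    """Stand-in for the trained U-Net: a perfect predictor, for illustration only."""
    return y


print([round(v, 6) for v in reverse(y_hat, oracle_denoiser, T=4)])  # [0.2, 1.7, -0.6, 3.4]
print(reverse(y_hat, oracle_denoiser, T=1) == reverse(y_hat, oracle_denoiser, T=1))  # True
```

With a perfect denoiser any number of steps recovers y exactly; with a real network, reconstruction quality depends on how well the denoiser predicts y from x_t. Because no noise is sampled, two decodes of the same bitstream are identical.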

Figure 3: Overview of the NC-Diffusion compression framework, showcasing the noise-constrained forward and reverse diffusion using quantization noise.

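Key aspects of the framework:

- Noise-Constrained Diffusion: The forward and reverse processes are built on quantization-induced noise rather than synthetic Gaussian noise, and inference starts from the quantized latent code.

- Adaptive Frequency-Domain Filtering (AFF): The U-Net denoiser uses AFF to preserve high-frequency image details and reduce artifacts.

- High-Frequency Loss: An additional training loss targets high-frequency fidelity; its contribution is measured in the ablations.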

Experimental Evaluation

Quantitative Assessment

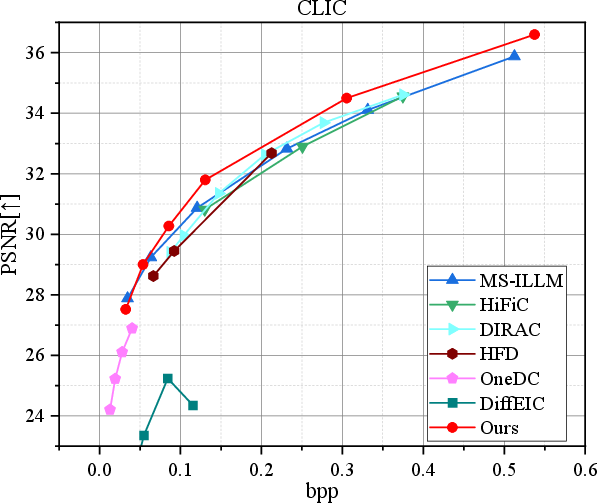

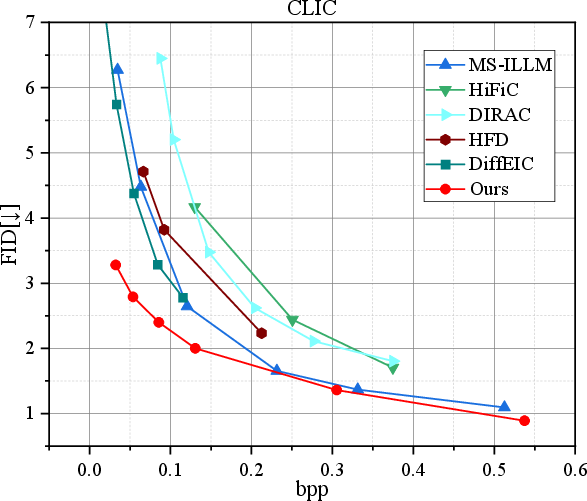

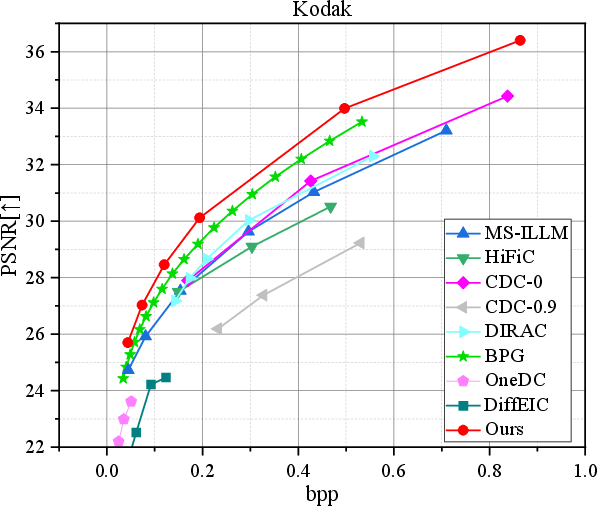

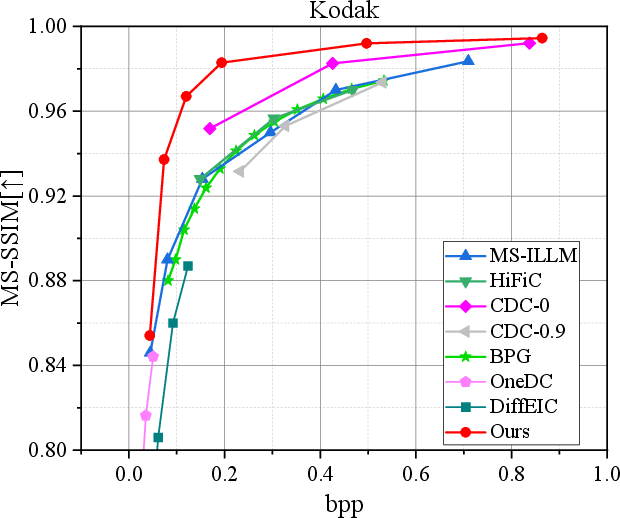

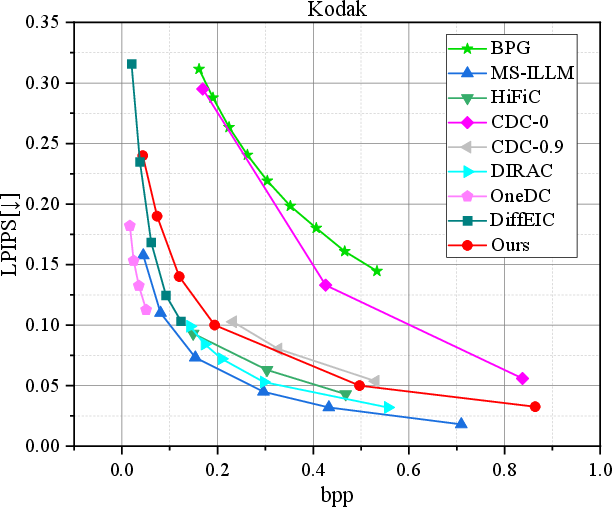

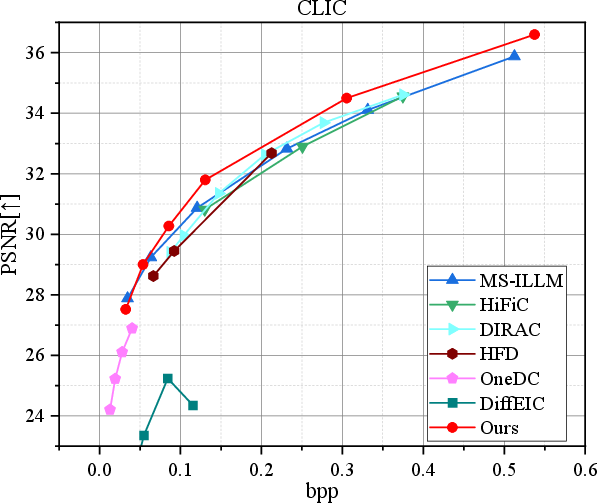

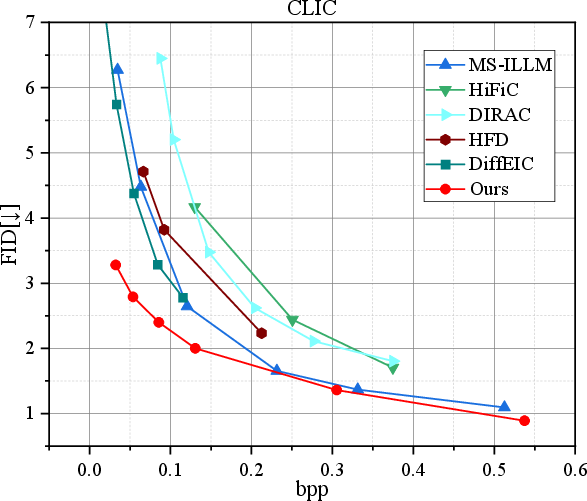

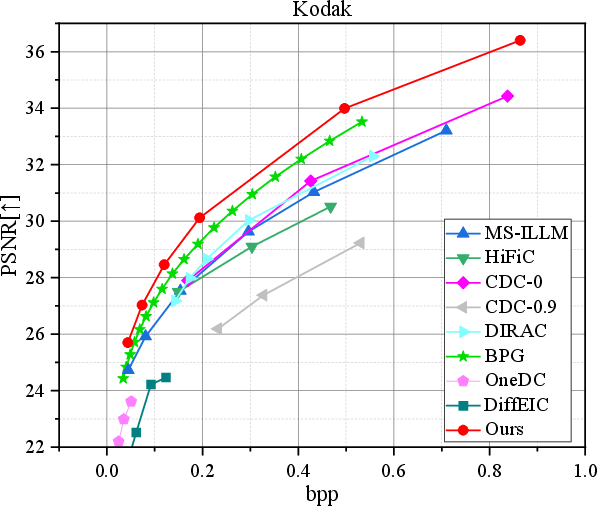

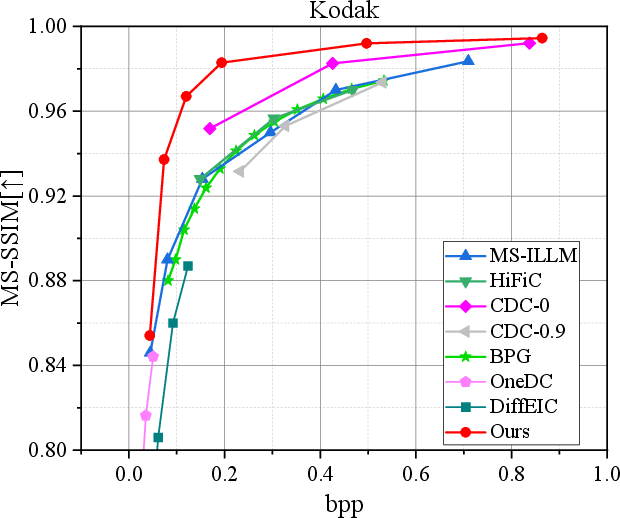

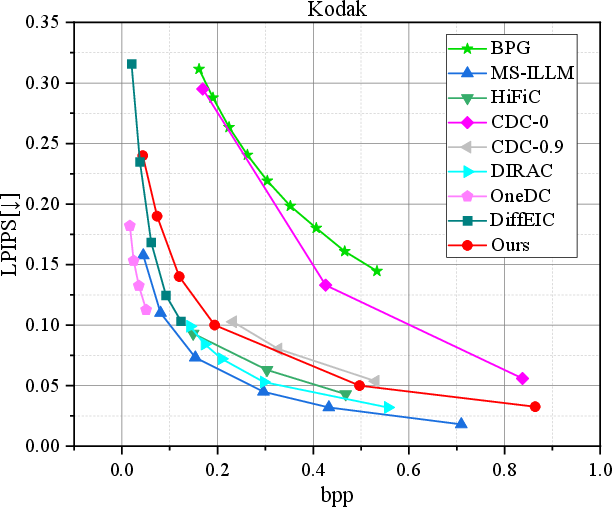

Comprehensive evaluations on the CLIC2020 and Kodak datasets show that NC-Diffusion achieves better rate-distortion and rate-perception trade-offs than state-of-the-art generative compression baselines, including HiFiC, MS-ILLM, and recent diffusion-based codecs (CDC, DiffEIC).

Numerical Claims:

- Rate-Distortion/Perception: Significant BD-rate savings and lower FID and LPIPS at matched or lower bit-rates compared to prior methods.

- Inference Efficiency: Decoding is an order of magnitude faster than in previous diffusion-based approaches, which is attributable to deterministic inference that starts from the quantized latent rather than from random noise.
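BD-rate (Bjøntegaard delta rate) is the average bitrate difference between two rate-distortion curves at equal quality; a negative value means the test codec needs fewer bits. The sketch below follows the classic recipe: fit a cubic to log-rate as a function of PSNR for each codec, integrate both fits over the overlapping PSNR range, and convert the mean log difference into a percentage. It is a plain-Python illustration, not the authors' evaluation script; the function names and rate/PSNR points are invented, and some tools use piecewise-cubic interpolation instead of a single cubic, so published numbers can differ slightly.

```python
import math


def polyfit3(xs, ys):
    """Least-squares cubic fit y ~ c0 + c1*x + c2*x^2 + c3*x^3 via the normal equations."""
    n = 4
    A = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, n):
            f = A[r][col] / A[col][col]
            for k in range(col, n):
                A[r][k] -= f * A[col][k]
            b[r] -= f * b[col]
    # Back substitution.
    c = [0.0] * n
    for i in range(n - 1, -1, -1):
        c[i] = (b[i] - sum(A[i][k] * c[k] for k in range(i + 1, n))) / A[i][i]
    return c


def mean_log_rate(rates, psnrs, lo, hi):
    """Average of the fitted log-rate curve over the PSNR interval [lo, hi]."""
    shift = sum(psnrs) / len(psnrs)  # centre PSNR values to keep the fit well conditioned
    c = polyfit3([p - shift for p in psnrs], [math.log(r) for r in rates])
    a, b = lo - shift, hi - shift
    area = sum(ck * (b ** (k + 1) - a ** (k + 1)) / (k + 1) for k, ck in enumerate(c))
    return area / (hi - lo)


def bd_rate(anchor_rates, anchor_psnrs, test_rates, test_psnrs):
    """Bjontegaard delta rate in percent (negative = test codec saves bits)."""
    lo = max(min(anchor_psnrs), min(test_psnrs))
    hi = min(max(anchor_psnrs), max(test_psnrs))
    diff = (mean_log_rate(test_rates, test_psnrs, lo, hi)
            - mean_log_rate(anchor_rates, anchor_psnrs, lo, hi))
    return (math.exp(diff) - 1.0) * 100.0


# Invented rate (bpp) / PSNR (dB) points; the test codec uses 10% fewer bits at every quality.
anchor_bpp = [0.10, 0.20, 0.40, 0.80]
psnr = [28.0, 31.0, 34.0, 37.0]
test_bpp = [0.9 * r for r in anchor_bpp]
print(round(bd_rate(anchor_bpp, psnr, test_bpp, psnr), 4))  # -10.0
```

The same procedure applies to perceptual curves by replacing PSNR with another quality axis, although for metrics where lower is better (FID, LPIPS) the axis orientation has to be handled consistently.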

Figure 5: Rate-distortion comparison demonstrating superior performance of NC-Diffusion on CLIC2020 (left).

Figure 6: Rate-distortion and rate-perception trade-offs on Kodak, reflecting improvements in both objective and perceptual metrics.

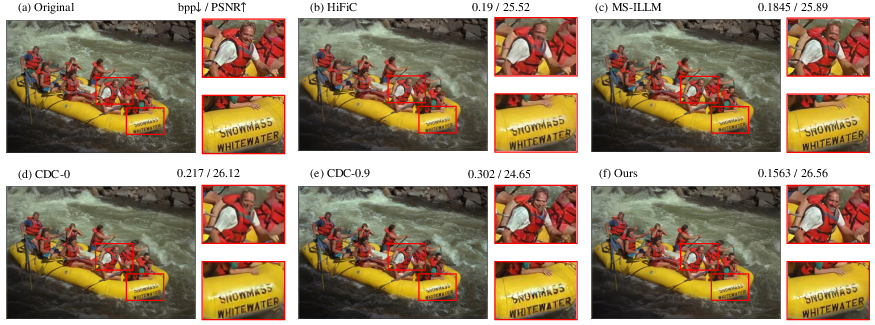

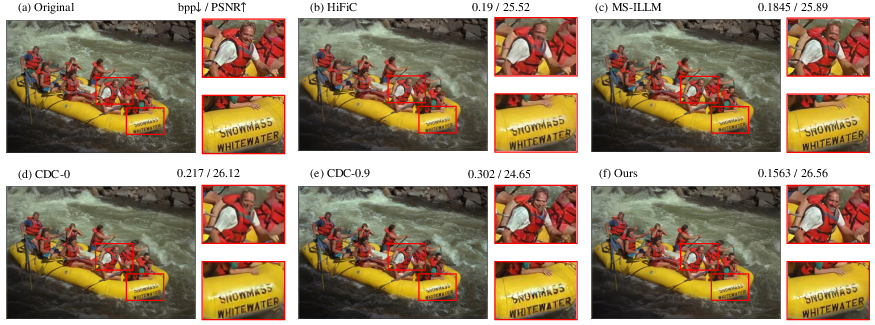

Figure 7: Visual comparison (Kodak kodim14) showing NC-Diffusion achieves better detail preservation with fewer artifacts at lower bitrates.

Notable Result: Unlike other generative codecs, NC-Diffusion does not sacrifice PSNR dramatically for perceptual gains, challenging the common assumption that better perceptual quality must come at a steep cost in distortion.

Ablation and Analysis

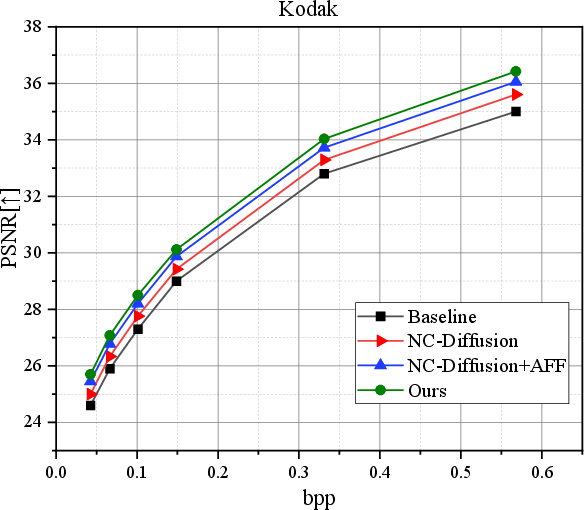

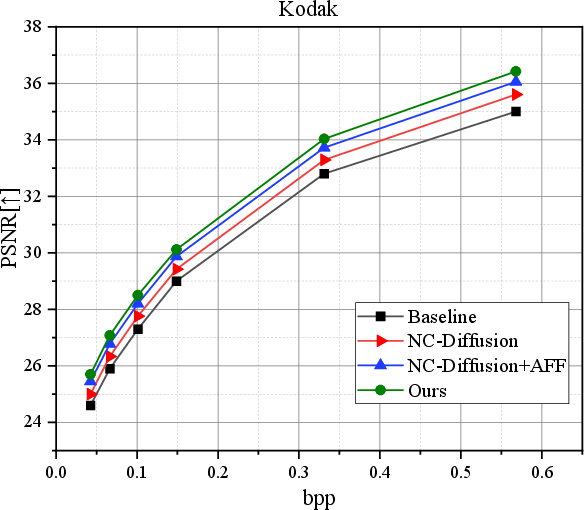

Ablations confirm that the largest performance gains come from constraining the diffusion noise to quantization statistics; AFF and the high-frequency loss add further improvements.
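The summary does not state the form of the high-frequency loss. As a purely hypothetical illustration of the idea, the sketch below compares the Laplacian (high-pass) responses of the original and reconstructed images with an L1 penalty, so a uniform brightness shift costs essentially nothing while a softened edge is penalized. The 3x3 kernel, the L1 norm, and the function names are assumptions, not the paper's definitions.

```python
def laplacian(img):
    """3x3 Laplacian (kernel [[0,1,0],[1,-4,1],[0,1,0]]) on interior pixels: a simple high-pass filter."""
    h, w = len(img), len(img[0])
    return [
        [img[i - 1][j] + img[i + 1][j] + img[i][j - 1] + img[i][j + 1] - 4 * img[i][j]
         for j in range(1, w - 1)]
        for i in range(1, h - 1)
    ]


def high_frequency_loss(original, reconstruction):
    """Hypothetical HF loss: mean absolute difference of the Laplacian responses."""
    ho, hr = laplacian(original), laplacian(reconstruction)
    diffs = [abs(a - b) for row_o, row_r in zip(ho, hr) for a, b in zip(row_o, row_r)]
    return sum(diffs) / len(diffs)


# 5x5 toy image with a sharp vertical edge (values invented for illustration).
sharp = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
brighter = [[v + 0.1 for v in row] for row in sharp]     # uniform shift: no new high frequencies
blurred = [[0.0, 0.5, 0.5, 1.0, 1.0] for _ in range(5)]  # softened edge

print(round(high_frequency_loss(sharp, brighter), 6))  # 0.0
print(round(high_frequency_loss(sharp, blurred), 4))   # 1.1667
```

In training, a term like this would be added to the usual distortion loss so that the denoiser is explicitly rewarded for keeping edges and texture, which plain pixel-wise losses tend to smooth away.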

Figure 8: Ablations illustrate additive contributions from NC-Diffusion, AFF, and high-frequency preservation on performance curves.

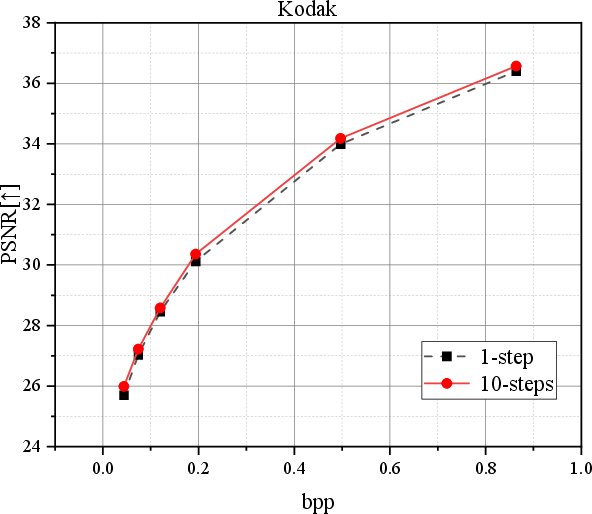

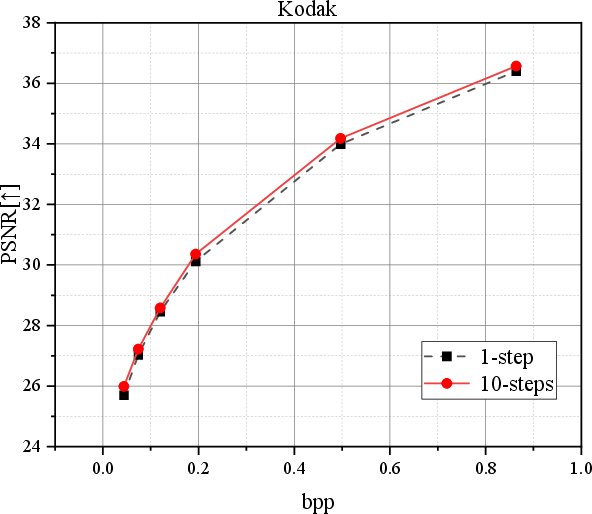

An analysis of performance versus the number of diffusion steps further supports the efficiency of deterministic inference: a single step already achieves strong results, and additional iterations yield only marginal gains.

Figure 9: PSNR vs. inference steps reveals diminishing returns for additional steps, evidencing the efficiency of NC-Diffusion.

Theoretical and Practical Implications

The findings have substantial consequences:

- Compression-Faithful Diffusion: The framework demonstrates that diffusion processes can be reconfigured for compression tasks—where faithfulness trumps diversity—by replacing Gaussian noise with structured quantization noise.

- Efficiency and Determinism: Deterministic inference and rapid convergence become feasible, enabling the use of diffusion models in practical low-latency, high-fidelity image codecs.

- Module Generality: The adaptive frequency-domain filtering (AFF) can be extended to other signal restoration/generation contexts requiring enhanced high-frequency fidelity.
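The summary gives no architectural details for AFF. One way to picture adaptive frequency-domain filtering: transform a signal with a DFT, scale each frequency bin by a gain, and transform back. The sketch below does this for a 1-D signal in plain Python, with a hypothetical `high_boost_gains` profile standing in for whatever gains the network would predict; in a real model the gains would come from learned layers and be applied to 2-D feature maps with an FFT.

```python
import cmath


def dft(x):
    """Naive O(n^2) discrete Fourier transform (an FFT would be used in practice)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n)) for k in range(n)]


def idft(spectrum):
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def frequency_filter(signal, gains):
    """Multiply every frequency bin by its gain and return to the signal domain."""
    filtered = [g * c for g, c in zip(gains, dft(signal))]
    return [v.real for v in idft(filtered)]


def high_boost_gains(n, boost):
    """Hypothetical gain profile: leave low frequencies alone, amplify the upper band.

    gains[k] == gains[n - k], so a real input produces a real output.
    """
    return [1.0 + boost if min(k, n - k) >= n // 4 else 1.0 for k in range(n)]


step = [0.0] * 4 + [1.0] * 4
sharpened = frequency_filter(step, high_boost_gains(len(step), boost=1.0))
print([round(v, 3) for v in sharpened])
```

Keeping the DC gain at 1 preserves the signal's mean, while boosting the upper band accentuates the edge; making the gains depend on the input is what would turn this fixed filter into an adaptive one.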

Limitations and Future Work:

- The framework is closely tied to the statistics of the initial neural codec; generalization to extreme compression rates or other modalities (e.g., video, audio) merits further investigation.

- Extension to conditional or semantic compression regimes, as alluded to in recent ultra-low bitrate generative pipelines, offers an avenue for integrating semantic fidelity explicitly in the loss.

Conclusion

The NC-Diffusion paradigm (2604.06568) represents a shift from stochastic, diversity-favoring diffusion in compression to a process strictly grounded in the statistics of quantization noise. The approach achieves a strong balance between fidelity and perceptual quality without the randomness inherent in standard diffusion models. With efficient inference, strong quantitative and visual results, and extensibility via frequency-domain filtering, NC-Diffusion is well placed to influence the next generation of learned compression algorithms and broader applications that demand faithful, high-frequency-preserving signal reconstruction.