- The paper establishes a structured compliance architecture for AI agents under EU law by mapping key regulatory requirements and obligations.

- It develops a detailed taxonomy linking AI agent use cases to specific regulatory triggers across frameworks like the GDPR, CRA, and DSA.

- It proposes a practical 12-step compliance sequence, integrating QMS, risk management, and cybersecurity measures in line with harmonised standards.

AI Agents Under EU Law: A Compliance Architecture for AI Providers

Introduction

The paper "AI Agents Under EU Law" (2604.04604) explores the regulatory challenges and solutions for AI agents within the framework of the European Union's AI Act. AI agents are systems that autonomously perform sequences of actions with reduced human involvement. They are being deployed across various sectors, including customer service, healthcare, and critical infrastructure management.

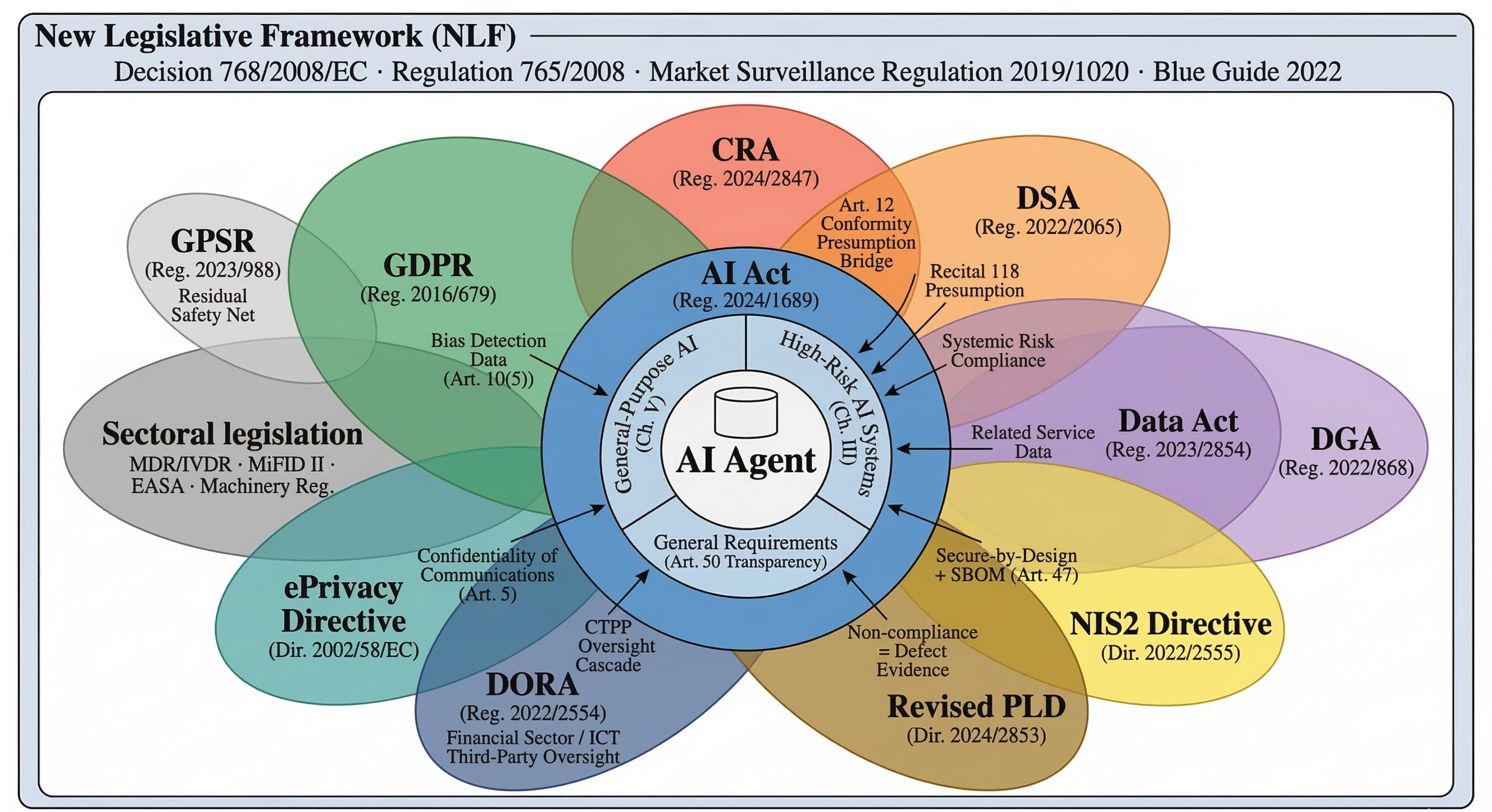

The EU AI Act regulates these systems through a risk-based framework, which integrates obligations from multiple legislative instruments, including the GDPR, Cyber Resilience Act, and Digital Services Act. This paper provides a systematic regulatory mapping for AI agent providers, focusing on draft harmonised standards and sector-specific legislation.

Regulatory Challenges and Taxonomy

AI agents present unique regulatory challenges due to their autonomous tool invocation and adaptive behavior. The absence of a formal legal definition of "agent" in the EU AI Act does not diminish their regulatory significance. Instead, these systems amplify risks associated with cybersecurity, oversight, transparency, and behavioral drift.

The paper establishes a practical taxonomy of AI agent use cases that maps concrete actions to regulatory triggers, such as transparency obligations under Article 50 of the EU AI Act and GDPR obligations where personal data is processed. This taxonomy serves as a foundation for developing hierarchical action ontologies for runtime governance.

Figure 1: Non-exhaustive taxonomy of AI Agent Use Cases and Actions, detailing the concrete tasks performed across different domains using a shared LLM-based architecture.
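To make the idea of a hierarchical action ontology more concrete, the following is a minimal sketch, not the paper's implementation. It assumes that each action is a node in a tree and that a child action inherits the regulatory triggers of its ancestors. The class `ActionNode`, the function `triggers_for`, the action names, and the trigger labels are all hypothetical and carry no legal weight.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ActionNode:
    """One node in a hypothetical action ontology (illustrative only)."""
    name: str
    triggers: frozenset = frozenset()
    parent: Optional["ActionNode"] = None

    def effective_triggers(self) -> frozenset:
        """Return this node's triggers plus those inherited from all ancestors."""
        inherited = self.parent.effective_triggers() if self.parent else frozenset()
        return self.triggers | inherited


# Illustrative labels; assigning them to real actions requires legal analysis.
ARTICLE_50 = "AI Act Art. 50 transparency"
GDPR = "GDPR"

# Assumed example hierarchy: emailing a customer is a kind of interaction with a person.
interact = ActionNode("interact_with_person", frozenset({ARTICLE_50}))
send_email = ActionNode("send_email_to_customer", frozenset({GDPR}), parent=interact)
read_sensor = ActionNode("read_sensor_value")


def triggers_for(action: ActionNode) -> list:
    """Sorted list of triggers that a runtime governance layer could check."""
    return sorted(action.effective_triggers())


if __name__ == "__main__":
    print(triggers_for(send_email))
    print(triggers_for(read_sensor))
```

Under these assumptions, a runtime governance layer could call `triggers_for` before an agent executes an action and route the result to the relevant control, for example a disclosure notice or a data-protection check.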

High-Risk AI Agent Classification and GPAI Model Obligations

Under the AI Act, AI agents are classified as high-risk based on application domain rather than architecture. An agent that manages critical infrastructure or is used in recruitment or healthcare, among other domains, is high-risk because of what it is used for, not because it is agentic. Providers must also assess the risks arising from reasonably foreseeable misuse.

Most AI agents are built on general-purpose AI (GPAI) models. Obligations for GPAI model providers (Chapter V of the AI Act) are distinct from system-level obligations. Model providers are responsible for documentation, copyright compliance, training data transparency, and adversarial testing for systemic risk.

Compliance Architecture: Harmonised Standards and Implementation

The paper outlines compliance using harmonised standards developed under Standardisation Request M/613, addressing the essential requirements of the AI Act. Key standards relevant to AI agent providers include:

- prEN 18286 (QMS): Integrates essential requirements and post-market monitoring.

- prEN 18228 (Risk Management): Covers continuous lifecycle risk management, including fundamental rights.

- prEN 18229-1/2 (Trustworthiness Framework): Addresses logging, transparency, human oversight, accuracy, and robustness.

- prEN 18282 (Cybersecurity): AI-specific cybersecurity measures for threat mitigation.

The standards are interdependent and form a dependency graph, so compliance requires an integrated approach rather than treating each standard in isolation. Providers should use the QMS as the coordinating framework.
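One way to reason about such a dependency graph is to order the work so that each standard is addressed after the ones it draws on. The sketch below uses Python's standard-library `graphlib.TopologicalSorter` for this. The edges in `ASSUMED_DEPENDENCIES` are placeholders chosen for illustration: they are not the dependencies stated in the paper or in the draft standards, and the only idea taken from the text is that the QMS sits at the point where the other standards come together.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical edges: each key maps to the standards whose outputs it is
# ASSUMED to consume. Replace with the real dependencies before relying on it.
ASSUMED_DEPENDENCIES = {
    "prEN 18228": set(),                        # risk management
    "prEN 18229-1/2": {"prEN 18228"},           # trustworthiness framework
    "prEN 18282": {"prEN 18228"},               # cybersecurity
    "prEN 18286": {"prEN 18228", "prEN 18229-1/2", "prEN 18282"},  # QMS
}


def work_order(dependencies: dict) -> list:
    """Return an order in which every standard follows its dependencies.

    Raises ValueError if the graph contains a cycle, since no linear
    order exists in that case.
    """
    try:
        return list(TopologicalSorter(dependencies).static_order())
    except CycleError as exc:
        raise ValueError(f"circular dependency between standards: {exc.args[1]}") from exc


if __name__ == "__main__":
    for step, standard in enumerate(work_order(ASSUMED_DEPENDENCIES), start=1):
        print(step, standard)
```

With these placeholder edges, risk management comes first and the QMS last. If the real dependencies turn out to be circular, a linear order does not exist and the affected standards would have to be worked on together, which is consistent with treating the QMS as the coordinating framework.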

Regulatory Perimeter: Beyond the AI Act

The AI Act is one layer in a multi-layer regulatory ecosystem. AI agent providers must navigate additional instruments:

- GDPR: Applies broadly due to personal data processing.

- Cyber Resilience Act (CRA): Imposes cybersecurity requirements for products with digital elements.

- Digital Services Act (DSA): Affects agents operating as intermediaries or on online platforms.

- Sectoral legislation: Includes MDR/IVDR for healthcare and MiFID II for finance.

These instruments must be applied simultaneously based on the agent's specific context.

Figure 2: The Multi-Layer Compliance Architecture for AI under EU Law, demonstrating that the AI Act is embedded within a complex regulatory ecosystem.
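The layered perimeter described above can be sketched as a simple screening function. This is a rough illustration under stated assumptions, not a legal test: the `AgentContext` fields, the `SECTORAL` lookup, and the function `applicable_instruments` are hypothetical names, and each boolean stands in for a legal assessment that in practice is far more involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentContext:
    """Hypothetical description of where and how an agent is deployed."""
    processes_personal_data: bool = False
    is_product_with_digital_elements: bool = False
    operates_on_online_platform: bool = False
    sector: str = ""  # e.g. "healthcare" or "finance"


# Sectoral instruments named in the text; the mapping itself is an assumption.
SECTORAL = {"healthcare": "MDR/IVDR", "finance": "MiFID II"}


def applicable_instruments(ctx: AgentContext) -> list:
    """List the instruments to review for this context, AI Act first."""
    instruments = ["AI Act"]
    if ctx.processes_personal_data:
        instruments.append("GDPR")
    if ctx.is_product_with_digital_elements:
        instruments.append("CRA")
    if ctx.operates_on_online_platform:
        instruments.append("DSA")
    if ctx.sector in SECTORAL:
        instruments.append(SECTORAL[ctx.sector])
    return instruments


if __name__ == "__main__":
    triage_agent = AgentContext(processes_personal_data=True, sector="healthcare")
    print(applicable_instruments(triage_agent))
```

The function returns a list rather than a single answer because the instruments apply cumulatively: a match on one does not displace the others.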

Practical Compliance Sequence

The paper proposes a practical twelve-step compliance sequence that integrates the QMS, risk management, and cybersecurity measures in line with harmonised standards.

Conclusion

This analysis highlights the intricacies of deploying AI agents under EU law, emphasizing the importance of comprehensive regulatory mapping and adherence to both AI-specific standards and broader legislative requirements. The implications for AI agent providers extend beyond compliance; they contribute to shaping the governance of advanced AI systems in Europe.

Each regulatory challenge identified in the paper is addressed through a structured compliance strategy that supports the safe deployment and operation of AI agents within the EU. As AI systems continue to evolve, providers will need to track regulatory developments to remain compliant while delivering the societal benefits these systems promise.