- The paper presents a framework where adaptive densification and graph-based optimization significantly reduce memory usage while preserving rendering fidelity.

- It employs an ELBO-inspired loss for automatic point densification and opacity-aware pruning, reducing the point count to roughly 12.5% of the original while achieving higher PSNR.

- Graph-based Spatial Distribution Optimization enforces spatial coherence through MLP embedding and unsupervised regularizers, ensuring artifact-free, real-time view synthesis.

GS²: Graph-based Spatial Distribution Optimization for Compact 3D Gaussian Splatting

Introduction

This paper presents GS², a framework that makes 3D Gaussian Splatting (3DGS) more compact for real-time novel view synthesis and rendering. While 3DGS has shown substantial promise in photorealistic scene reconstruction and interactive graphics, its deployment is hindered by high memory requirements, a consequence of using millions of Gaussian points to model complex scenes. Existing pruning and compression methods often degrade spatial continuity, resulting in noticeable rendering artifacts. GS² introduces an adaptive, spatially coherent solution that substantially reduces memory usage without sacrificing rendering fidelity, and in many cases improves it.

Methodology

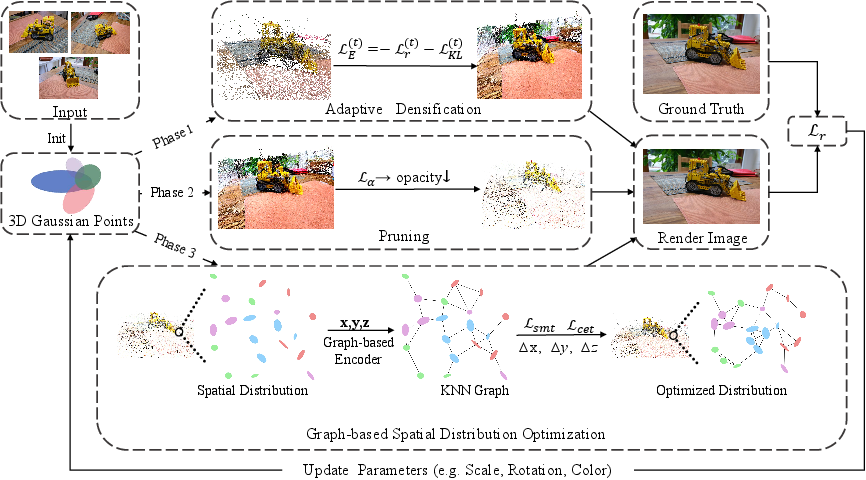

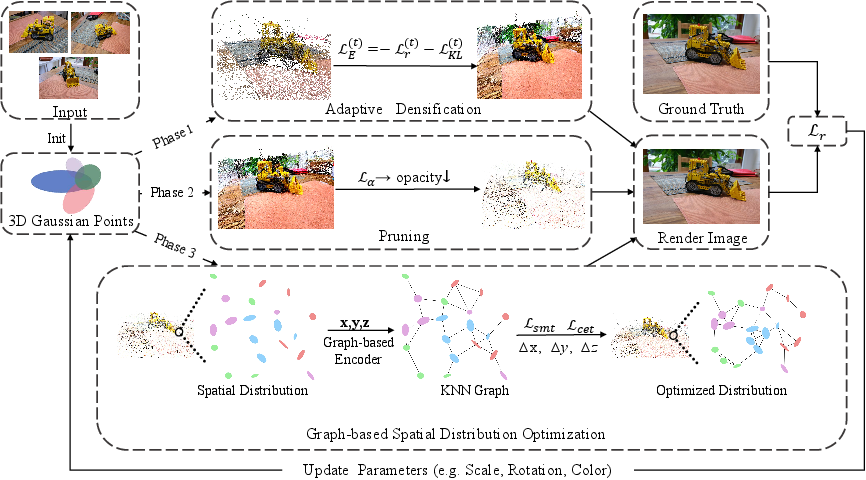

GS² comprises two orthogonal modules: Adaptive Densification and Pruning (ADP), and Graph-based Spatial Distribution Optimization (GSDO).

Adaptive Densification and Pruning (ADP)

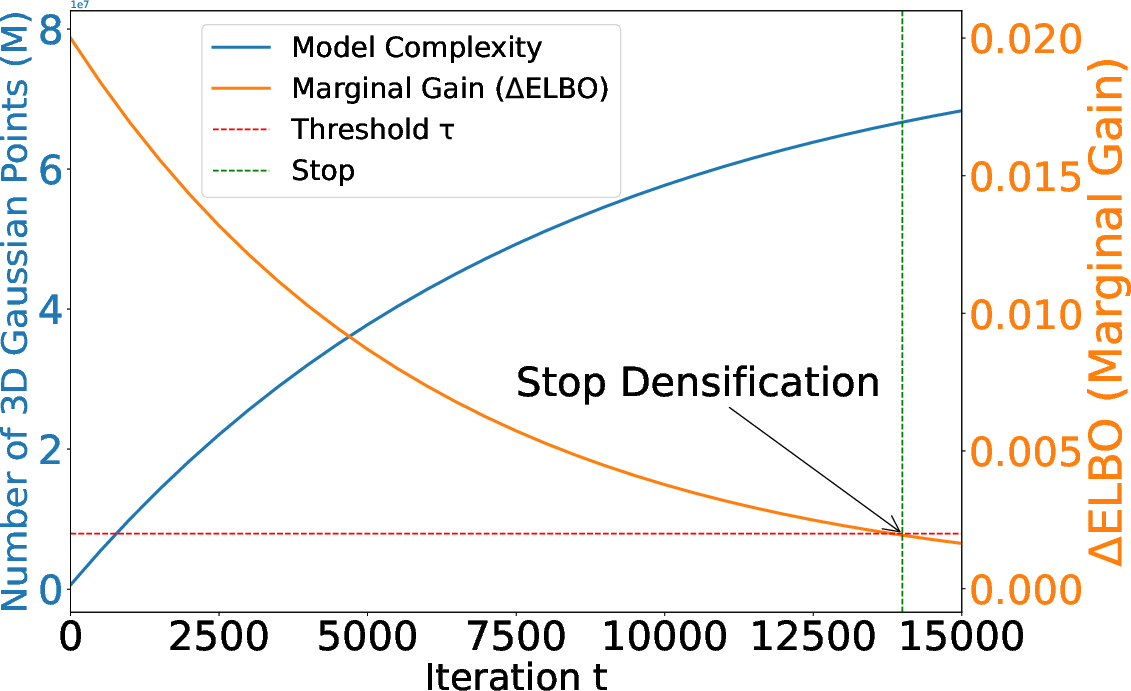

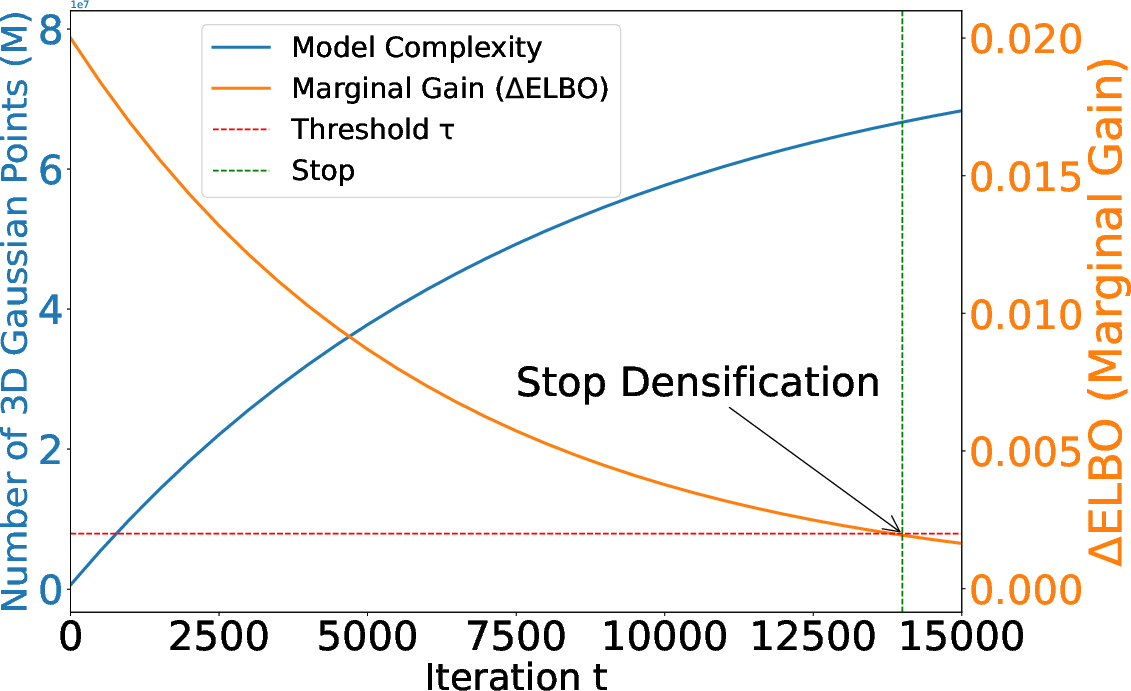

Unlike prior approaches relying on hand-tuned heuristics for point densification, GS² leverages an Evidence Lower Bound (ELBO)-guided strategy. Densification halts automatically when the marginal gain in rendering quality becomes negligible relative to increasing model complexity. The ELBO-inspired loss incorporates rendering error and 3DGS point density, smoothing short-term fluctuations via an exponential moving average. This dynamic adaptation prevents the proliferation of redundant points in low-detail regions and addresses the over-parameterization endemic in 3DGS (Figure 1).

Figure 1: ELBO-guided adaptive densification.
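The stopping rule described above can be illustrated with a minimal sketch. It assumes the score is simply rendering error plus a weighted point count; the function names, `complexity_weight`, `decay`, and `tol` are hypothetical and do not come from the paper, whose exact loss is not reproduced here.

```python
def elbo_style_score(render_error, num_points, complexity_weight=1e-7):
    """Hypothetical objective: rendering error plus a point-count penalty."""
    return render_error + complexity_weight * num_points


def densification_stop_step(history, decay=0.9, tol=1e-4):
    """Return the first step where the smoothed score improves by less than tol.

    history is a list of (render_error, num_points) pairs, one per check.
    Returns None if the smoothed score is still improving at the end.
    """
    ema = None
    for step, (render_error, num_points) in enumerate(history):
        score = elbo_style_score(render_error, num_points)
        if ema is None:
            ema = score  # initialise the moving average with the first score
            continue
        new_ema = decay * ema + (1.0 - decay) * score
        if ema - new_ema < tol:
            return step  # marginal gain no longer justifies more points
        ema = new_ema
    return None


# Error keeps dropping at a fixed point count: keep densifying.
improving = [(0.5 - 0.01 * i, 1000) for i in range(10)]
# Error is flat while the point count grows: stop at the first check.
stalled = [(0.1, 1_000_000 + 200_000 * i) for i in range(5)]

print(densification_stop_step(improving))
print(densification_stop_step(stalled))
```

The exponential moving average lags behind the raw score, so a larger `decay` gives a more stable but slower stopping decision.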

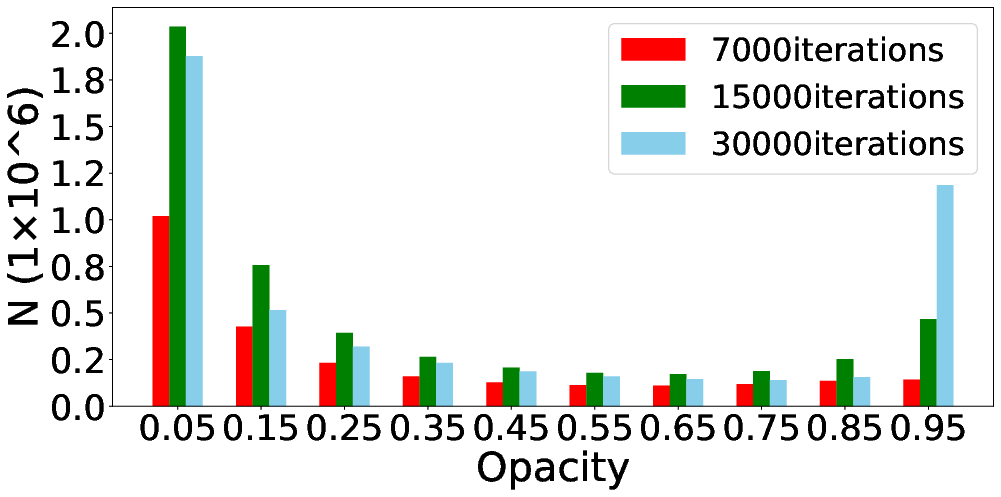

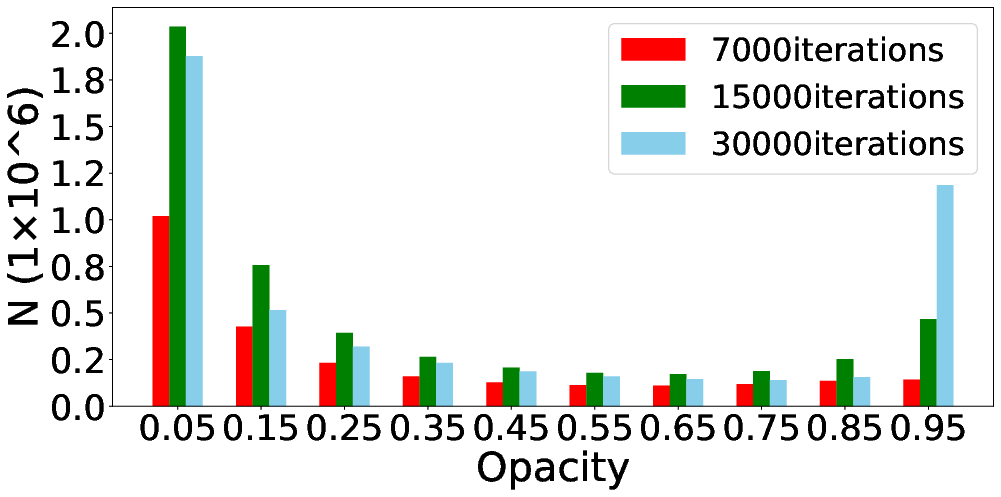

After densification, GS² applies opacity-based progressive pruning that iteratively eliminates low-importance points. Pruning uses an opacity-aware loss with higher-order penalties to suppress both low-opacity Gaussians and artifact-inducing high-opacity Gaussians more aggressively. This phase yields very compact representations, around 12.5% of the point count required by conventional 3DGS, while retaining scene structure (Figure 2).

Figure 2: Low-opacity Gaussians typically constitute 40% of points, justifying strict pruning strategies.
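As a rough illustration of progressive pruning, the sketch below removes the lowest-opacity points over several rounds until a target fraction remains. The geometric schedule, the `rounds` parameter, and the function name are assumptions made for illustration; the opacity-aware loss itself is not modelled, and in real training opacities would be re-optimized between rounds.

```python
def progressive_opacity_prune(opacities, target_ratio=0.125, rounds=3):
    """Return sorted indices of the points kept after `rounds` pruning passes."""
    total = len(opacities)
    keep = list(range(total))
    final_count = max(1, round(target_ratio * total))
    for r in range(1, rounds + 1):
        # Hypothetical geometric schedule: each round moves closer to the target.
        frac = target_ratio ** (r / rounds)
        count = max(final_count, round(frac * total))
        # Keep the `count` most opaque points among those still alive.
        keep.sort(key=lambda i: opacities[i], reverse=True)
        keep = keep[:count]
    return sorted(keep)


# 16 points with opacities 0/16, 1/16, ..., 15/16.
opacities = [i / 16 for i in range(16)]
kept = progressive_opacity_prune(opacities)
print(kept, len(kept) / len(opacities))
```

Pruning in stages rather than in one pass gives the remaining Gaussians a chance to adapt before the next reduction, which is the motivation for a progressive scheme.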

Graph-based Spatial Distribution Optimization (GSDO)

Pruning, while efficient, introduces local discontinuities and spatial incoherence in the retained point cloud. GS² directly addresses this with a lightweight GSDO encoder: Gaussians are embedded into a latent graph space according to their local and global geometric context. Each point acquires a feature via MLP-based embedding, k-NN aggregation, local residual pooling, and concatenation with a scene-level global feature.
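The four encoder stages named above can be sketched in plain Python. This is an assumption-laden toy, not the paper's architecture: it uses a single linear-plus-ReLU layer as the "MLP", brute-force k-NN, max pooling for both the local residual and the global feature, and hand-picked weights. All function names are hypothetical.

```python
import math


def linear_relu(points, weights, bias):
    """One-layer MLP stand-in: relu(x . w_j + b_j) for each output channel j."""
    return [
        [max(0.0, sum(x * w for x, w in zip(p, col)) + b)
         for col, b in zip(weights, bias)]
        for p in points
    ]


def knn_indices(points, k):
    """Brute-force k nearest neighbours of each point, excluding itself."""
    result = []
    for i, p in enumerate(points):
        order = sorted(
            (j for j in range(len(points)) if j != i),
            key=lambda j: math.dist(p, points[j]),
        )
        result.append(order[:k])
    return result


def encode(points, weights, bias, k=2):
    """Return per-point features: [own embedding | local residual | global]."""
    feats = linear_relu(points, weights, bias)
    neighbours = knn_indices(points, k)
    dim = len(bias)
    # Scene-level global feature: channel-wise max over all points (assumed).
    global_feat = [max(f[c] for f in feats) for c in range(dim)]
    encoded = []
    for i, f in enumerate(feats):
        # Local residual pooling: channel-wise max of (neighbour - centre).
        local = [max(feats[j][c] - f[c] for j in neighbours[i])
                 for c in range(dim)]
        encoded.append(f + local + global_feat)
    return encoded


points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 1.0, 1.0)]
weights = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]  # two output channels
bias = [0.0, 0.1]
encoded = encode(points, weights, bias)
print(len(encoded), len(encoded[0]))
```

Each point ends up with a feature three times the embedding width, combining its own embedding, a summary of how its neighbours differ from it, and a scene-wide context vector shared by all points.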

Spatial consistency is enforced using two unsupervised regularizers:

- Centroid alignment loss: Ensures the mean of latent features aligns with the geometric centroid of the pruned population.

- Local smoothness loss: Penalizes pairwise geometric-feature discrepancies within neighborhoods.

The result is a marked improvement in spatial continuity, minimizing migration artifacts and yielding a coherent, high-quality rendering even under extreme compression.
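The two regularizers can be written down in a few lines under simplifying assumptions. Here the latent features are assumed to live in the same 3D space as the positions, and the smoothness term is assumed to compare feature distances with geometric distances over neighbour pairs; the paper's exact formulations may differ, and the function names are hypothetical.

```python
import math


def centroid(vectors):
    """Channel-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[c] for v in vectors) / n for c in range(len(vectors[0]))]


def centroid_alignment_loss(latents, positions):
    """Squared distance between the latent mean and the geometric centroid."""
    return math.dist(centroid(latents), centroid(positions)) ** 2


def local_smoothness_loss(latents, positions, neighbours):
    """Mean squared gap between feature and geometric distances of neighbours."""
    total, pairs = 0.0, 0
    for i, nbrs in enumerate(neighbours):
        for j in nbrs:
            gap = (math.dist(latents[i], latents[j])
                   - math.dist(positions[i], positions[j]))
            total += gap * gap
            pairs += 1
    return total / max(pairs, 1)


positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
neighbours = [[1, 2], [0, 2], [0, 1]]  # hand-written neighbour lists
# Latents that are a rigid shift of the positions by one unit along x.
shifted = [(x + 1.0, y, z) for x, y, z in positions]

print(centroid_alignment_loss(shifted, positions))
print(local_smoothness_loss(shifted, positions, neighbours))
```

A rigid shift is penalized only by the centroid term, while a distortion that changes neighbour distances is penalized by the smoothness term, so the two losses constrain different failure modes.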

Figure 3: Overview of GS² pipeline: ADP adaptively densifies and prunes points, while GSDO refines spatial distribution through feature-guided shifting and joint regularization.

Experimental Results

GS² was evaluated on the Mip-NeRF 360 and Tanks & Temples datasets—standard, high-complexity benchmarks—and compared against contemporary SOTA methods including EAGLES, LightGaussian, Mini-Splatting, MaskGaussian, and CompGS.

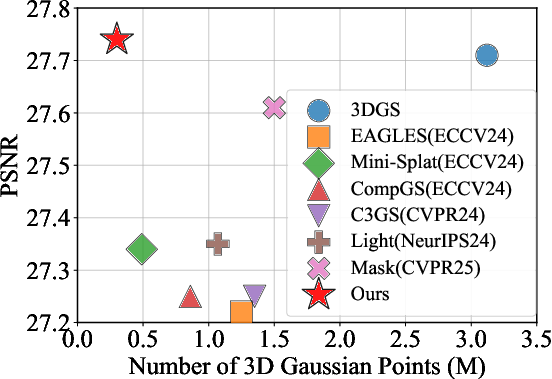

Quantitative Results:

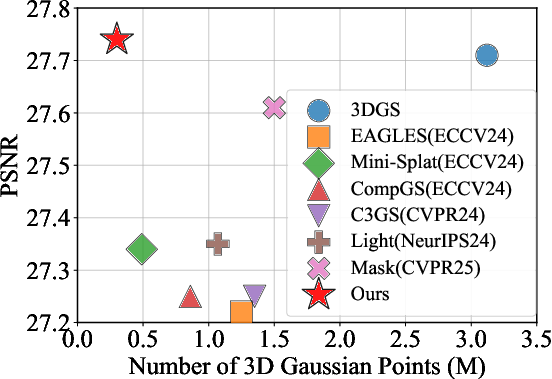

GS² exceeds classical 3DGS in PSNR while requiring only about 12.5% of the point budget on average (0.30M vs. 3.12M on Mip-NeRF 360, roughly 9.6%; 0.24M vs. 1.57M on Tanks & Temples, roughly 15.3%). It consistently outperformed all baselines in fidelity metrics and memory usage, with improvements especially pronounced in indoor scenes, where geometric constraints favor the GSDO module's optimization process.

Figure 4: Plot of PSNR vs. number of Gaussian points on Mip-NeRF 360, demonstrating GS²'s superior quality-versus-compression tradeoff.

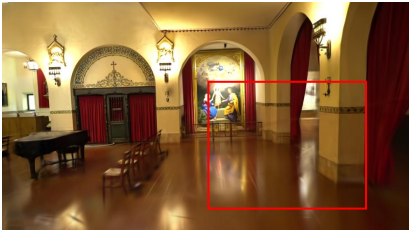

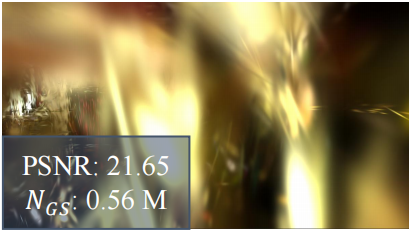

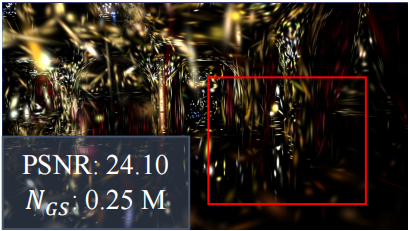

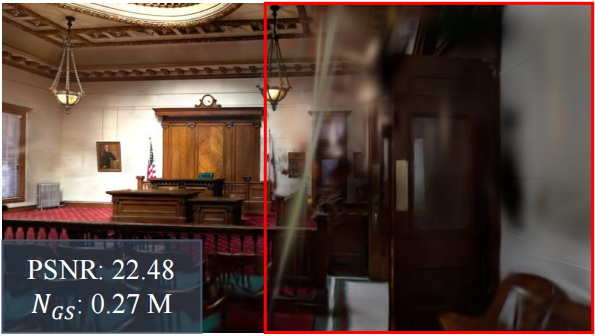

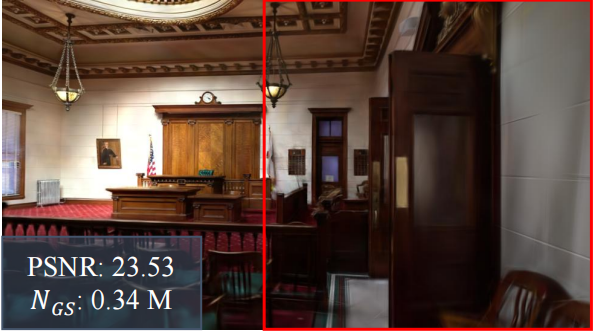

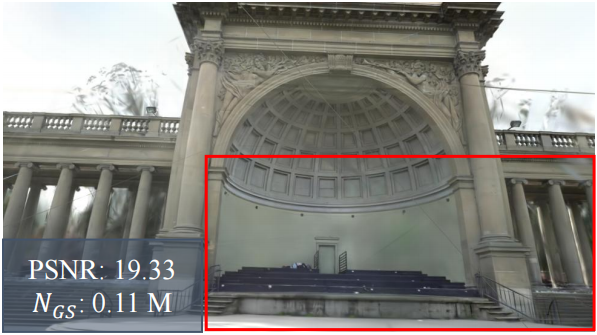

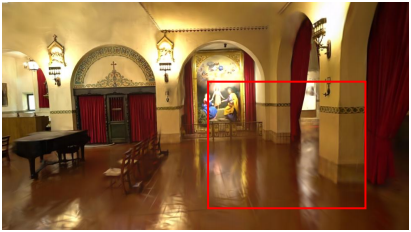

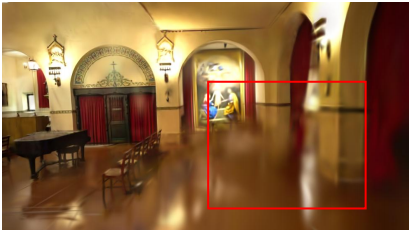

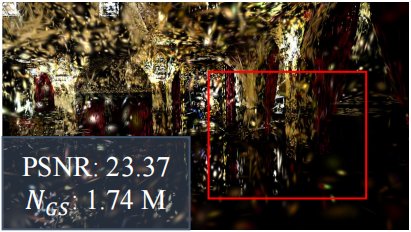

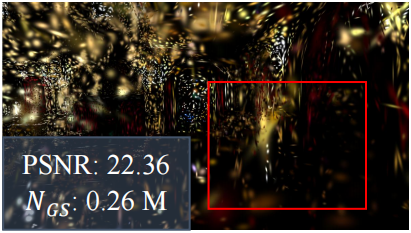

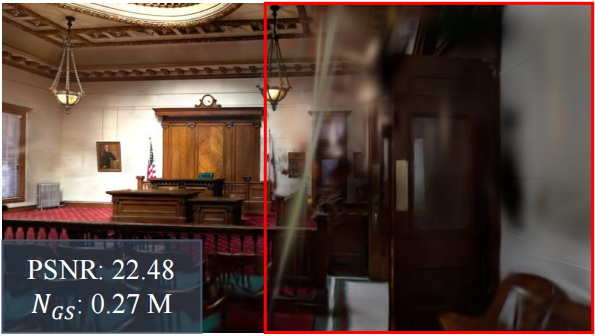

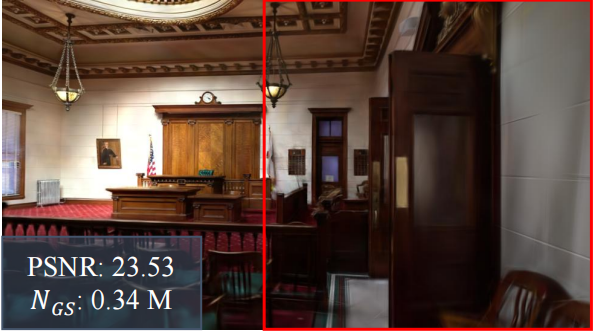

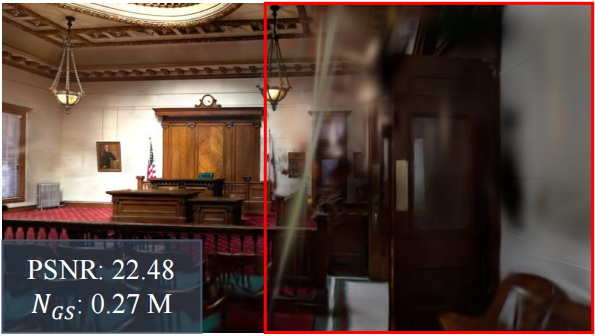

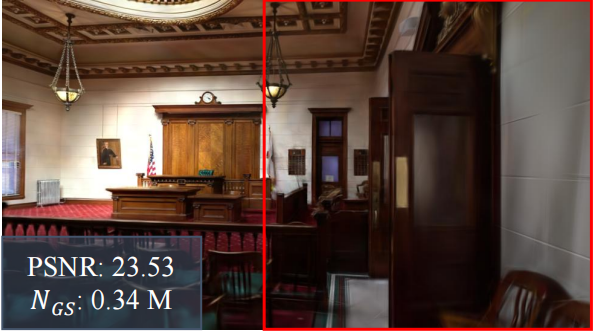

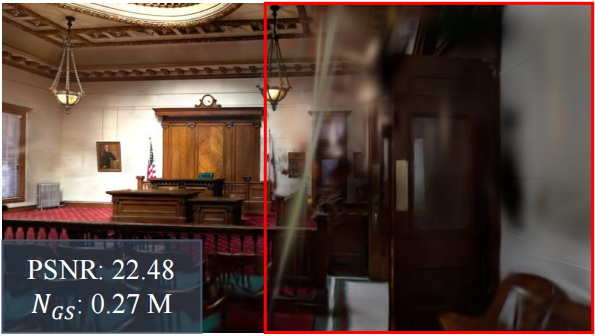

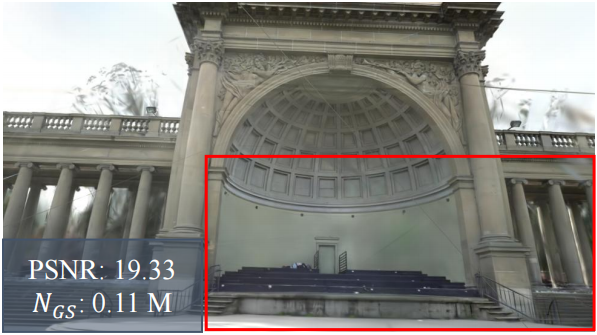

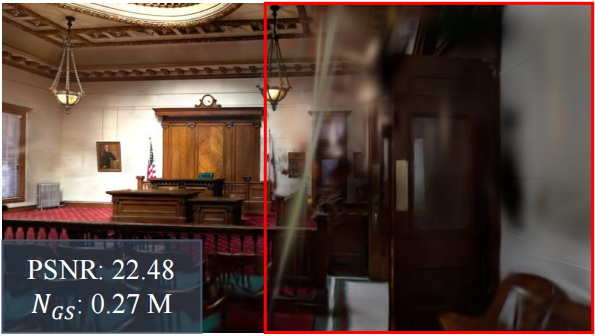

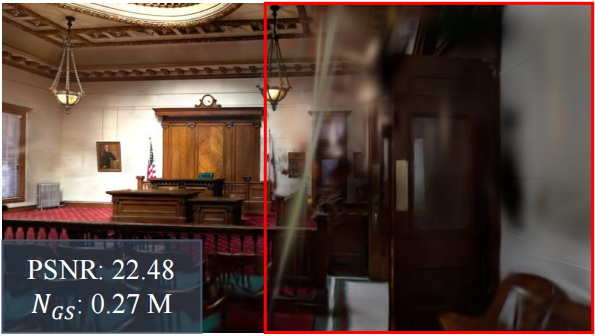

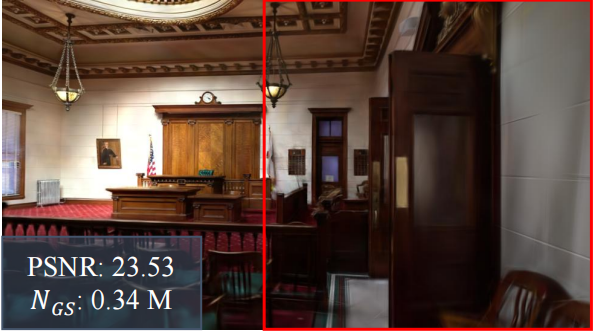

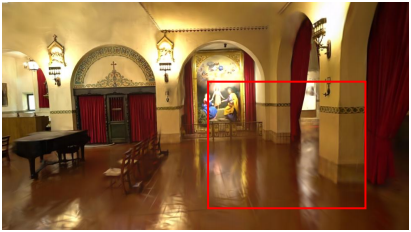

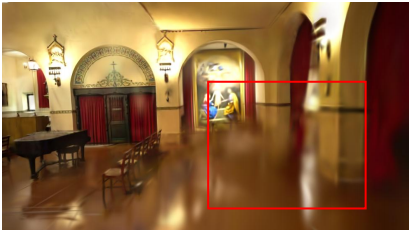

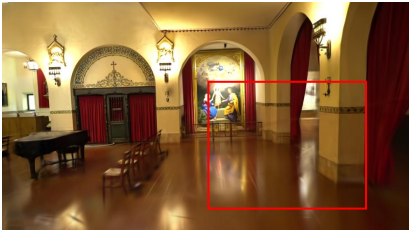

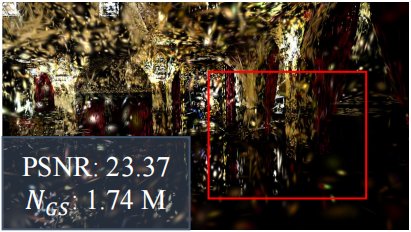

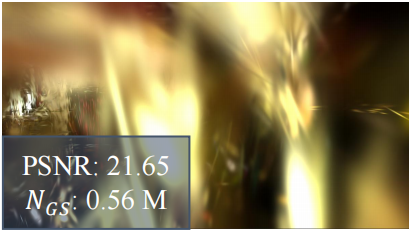

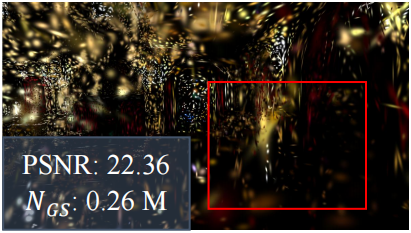

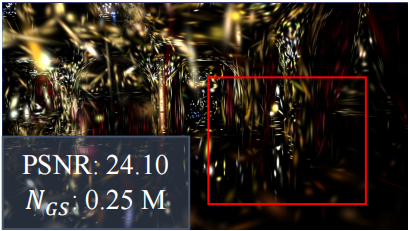

Qualitative Results:

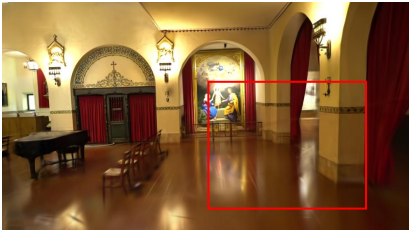

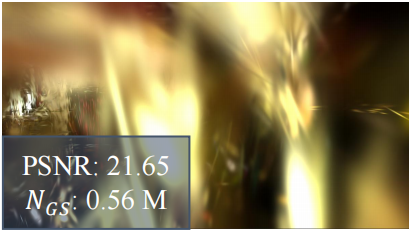

Side-by-side visual comparisons demonstrate the spatial coherence attained by GS². Competing methods exhibit substantial artifacts—particularly in textureless, low-contrast, or object boundary regions after aggressive pruning—whereas GS² preserves edge detail and scene geometry.

Figure 5: Room scene example. GS² shows higher PSNR with far fewer points and an artifact-free, coherent spatial distribution compared to LightGaussian.

Figure 6: Qualitative comparison across Room, Palace, Temple, Lighthouse, and Courtroom scenes; GS² produces visually accurate, uniformly distributed reconstructions at a fraction of the point count.

Ablation Studies:

Component analyses confirm that both ADP and GSDO contribute substantial gains. Neither longer training nor naïve mask-based pruning recovers the quality lost to severe point reduction. Integrating GSDO with the LightGaussian and MaskGaussian baselines improves their PSNR and SSIM considerably without changing model size.

Figure 7: Pruning analysis on Church scene illustrates the tradeoff between spatial continuity and aggressive compression.

Efficiency:

Despite the added graph encoding stage, GS² matches or exceeds baseline runtime efficiency, rendering at over 500 FPS on Tanks & Temples (second only to Mini-Splatting).

Implications and Future Directions

Practical Impact:

GS² drastically reduces geometry memory overhead while maintaining, or even enhancing, rendering quality, which makes high-fidelity 3DGS viable for resource-constrained deployments. This extends real-time photorealistic view synthesis to settings such as embedded AR systems, mobile robotics, and large-scale scene rendering in cloud environments.

Theoretical Significance:

This work shows that aggressive pruning, historically assumed to be at odds with high-quality view synthesis, can be reconciled with it through spatial regularization in feature space. The results suggest that the tradeoff between memory footprint and rendering quality is not inevitable, provided spatial continuity is properly encoded and enforced after pruning.

Future Work:

Several directions are evident:

- Dynamic and Non-static Scenes: Integration of temporal or scene-consistency modules to extend compact representation to video or 4D capture settings.

- Neural-Guided Graph Construction: Learnable neighborhoods and adaptively structured latent spaces may further refine the compactness/quality envelope.

- Cross-modal Extensions: Joint optimization with semantic, physics, or material attributes for real-time multipurpose scene understanding.

Conclusion

GS² introduces a principled, efficient approach to compact 3D Gaussian Splatting that achieves state-of-the-art rendering quality at high compression ratios. Its combination of ELBO-based adaptive densification and graph-based spatial distribution optimization addresses the limitations of naively pruned representations and advances the field toward scalable, memory-efficient, photorealistic real-time synthesis.