- The paper introduces entropy-based adaptive sampling to allocate 3D Gaussian primitives according to local scene information, cutting redundancy and computational cost.

- It employs a KNN-driven local attribute regression with geo-aware attention layers to predict essential Gaussian parameters, enhancing rendering fidelity.

- Experimental results show significant improvements in efficiency and robustness, achieving high visual quality with far fewer primitives across diverse datasets.

SparseSplat: Efficient Feed-Forward 3D Gaussian Splatting with Pixel-Unaligned Prediction

Introduction

SparseSplat departs from prior feed-forward 3D Gaussian Splatting (3DGS) architectures by explicitly discarding the pixel-aligned mapping and uniform primitive allocation that dominate earlier models. It instead combines content-adaptive, entropy-based sampling with locality-aware attribute regression, yielding a scene representation that is highly compact yet competitive in visual fidelity. This makes it well suited to real-time robotics, AR/VR, and SLAM, where the compute and memory overheads of pixel-aligned or naïvely voxel-aligned approaches are prohibitive.

Methodology

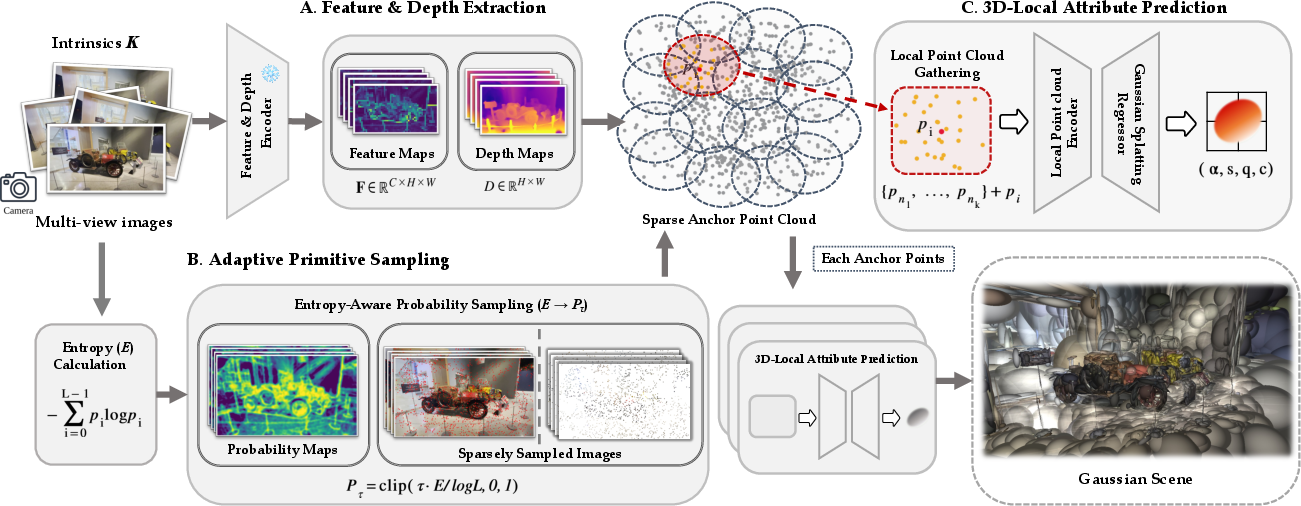

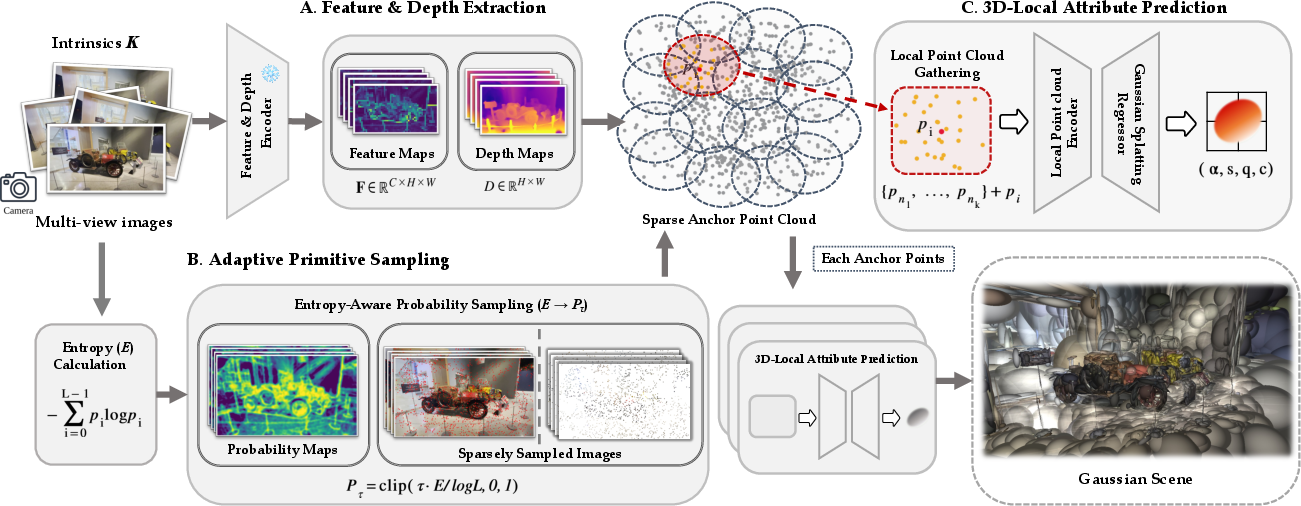

The SparseSplat pipeline consists of three major stages: (1) backbone-based image and depth feature extraction, (2) probabilistic, entropy-guided adaptive primitive sampling, and (3) local-neighborhood-based 3D Gaussian parameter regression.

Figure 1: Overall pipeline of SparseSplat, emphasizing entropy-based adaptive primitive sampling and local attribute prediction for highly compact and efficient 3DGS scene construction.

Adaptive Primitive Sampling

To break away from rigid, redundant representations, SparseSplat introduces an entropy-based mechanism for quantifying scene information richness at the per-pixel level. The method computes local Shannon entropy maps on grayscale versions of the input images, normalizes them, and modulates them with a user-controllable temperature parameter τ to yield a per-pixel sampling probability mask. This mask governs the stochastic selection of pixels for further processing, concentrating Gaussians in regions with richer structural or textural information while allowing very sparse coverage in uniform areas.
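The sampling step can be sketched as follows. This is a minimal illustration rather than the paper's implementation: the window size, histogram bin count, the power-law form of the temperature, and the `budget` parameter (target fraction of pixels kept) are all assumptions introduced here, since the summary does not give the exact formula.

```python
import numpy as np

def local_entropy(gray, window=9, bins=16):
    """Shannon entropy (bits) of the intensity histogram around each pixel.

    gray: (H, W) array of 8-bit intensities. `window` (odd) and `bins`
    are illustrative choices, not values taken from the paper.
    """
    h, w = gray.shape
    # Quantize intensities into `bins` levels.
    q = np.clip((gray.astype(np.float64) / 256.0 * bins).astype(np.int64), 0, bins - 1)
    pad = window // 2
    qp = np.pad(q, pad, mode="reflect")
    # All window x window patches, shape (H, W, window, window).
    patches = np.lib.stride_tricks.sliding_window_view(qp, (window, window))
    ent = np.zeros((h, w))
    n = window * window
    for b in range(bins):
        p = (patches == b).sum(axis=(-1, -2)) / n   # per-pixel probability of level b
        nz = p > 0
        ent[nz] -= p[nz] * np.log2(p[nz])
    return ent

def sampling_mask(gray, tau=1.0, budget=0.1, rng=None):
    """Draw a stochastic pixel mask whose density follows local entropy.

    Assumed temperature form: prob is proportional to norm_entropy ** (1 / tau),
    so a small tau concentrates samples on high-entropy pixels and a large
    tau flattens the distribution. `budget` is a hypothetical knob.
    """
    rng = np.random.default_rng() if rng is None else rng
    ent = local_entropy(gray)
    span = ent.max() - ent.min()
    norm = (ent - ent.min()) / span if span > 0 else np.full_like(ent, 0.5)
    weights = norm ** (1.0 / tau)
    # Rescale so the expected kept fraction is about `budget` (before clipping).
    prob = np.clip(weights * budget / max(weights.mean(), 1e-12), 0.0, 1.0)
    mask = rng.random(gray.shape) < prob
    return mask, prob
```

On an image that is flat on one side and textured on the other, the flat side receives a sampling probability near zero, which is the behavior the text describes for uniform areas.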

Pixels are then back-projected into 3D anchor points using depth and camera pose. This ensures that the resulting primitives are spatially consistent and distributed according to true geometric context.
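Back-projection under a standard pinhole model might look like the sketch below. The conventions are assumptions, not details from the paper: depth is z-depth along the optical axis, pixel coordinates are (u, v) = (column, row), `K` is a 3x3 intrinsics matrix, and the pose is a 4x4 camera-to-world transform.

```python
import numpy as np

def backproject(pixels_uv, depth, K, cam_to_world):
    """Lift sampled pixels to 3D anchor points in world coordinates.

    pixels_uv:    (N, 2) integer pixel coordinates, (u, v) = (column, row)
    depth:        (H, W) z-depth map
    K:            (3, 3) pinhole intrinsics
    cam_to_world: (4, 4) camera-to-world pose (assumed convention)
    """
    u, v = pixels_uv[:, 0], pixels_uv[:, 1]
    z = depth[v, u]
    fx, fy, cx, cy = K[0, 0], K[1, 1], K[0, 2], K[1, 2]
    # Invert the pinhole projection in the camera frame.
    x = (u - cx) / fx * z
    y = (v - cy) / fy * z
    pts_cam = np.stack([x, y, z], axis=1)
    # Rigid transform into the world frame.
    R, t = cam_to_world[:3, :3], cam_to_world[:3, 3]
    return pts_cam @ R.T + t
```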

3D-Local Attribute Prediction

SparseSplat directly addresses the receptive field mismatch of previous networks by employing a localized attribute regression design. For each sampled anchor, its K-nearest neighbors (KNN) in 3D are gathered (using FAISS-based fast search). Each anchor and neighbor is encoded using dual streams: one for geometric cues (position, normals, rays) and another for backbone-extracted appearance features, projected and concatenated for unified representation.
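The neighborhood gathering can be illustrated with a brute-force search in NumPy. This stands in for the FAISS-based search mentioned above and is only practical for small anchor sets; excluding each anchor from its own neighbor list and returning relative offsets are assumptions made for this sketch.

```python
import numpy as np

def knn_indices(anchors, k):
    """Indices of the k nearest other anchors, sorted by distance.

    anchors: (N, 3) points, with k < N. Brute force, O(N^2) memory.
    """
    anchors = np.asarray(anchors, dtype=np.float64)
    d2 = ((anchors[:, None, :] - anchors[None, :, :]) ** 2).sum(-1)
    np.fill_diagonal(d2, np.inf)  # assumption: an anchor is not its own neighbor
    # Pick the k smallest distances per row, then order those k by distance.
    idx = np.argpartition(d2, k - 1, axis=1)[:, :k]
    order = np.argsort(np.take_along_axis(d2, idx, axis=1), axis=1)
    return np.take_along_axis(idx, order, axis=1)

def gather_neighborhood(anchors, k):
    """Return neighbor indices (N, k) and offsets neighbor - anchor (N, k, 3)."""
    anchors = np.asarray(anchors, dtype=np.float64)
    idx = knn_indices(anchors, k)
    rel = anchors[idx] - anchors[:, None, :]
    return idx, rel
```

The relative offsets are one plausible input to the geometric stream; appearance features would be gathered with the same index array.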

Splat attribute regression is realized by a geo-aware attention aggregation: point-transformer-style layers contextually blend anchor and KNN features, informed by both appearance and spatial arrangement. The output is a lightweight prediction of Gaussian opacity, scale (size), orientation (quaternion), and RGB spherical harmonics coefficients.
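One way to picture the aggregation is a single vector-attention layer in the style of Point Transformer, sketched below with random weights. This is an assumption-laden illustration: the layer sizes, the linear (rather than MLP) mappings, and the `init_weights` helper are hypothetical, and the paper's actual layer may differ.

```python
import numpy as np

def softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def init_weights(c, rng):
    """Random linear maps standing in for learned layers (hypothetical helper)."""
    return {
        "q": rng.normal(size=(c, c)) / np.sqrt(c),
        "k": rng.normal(size=(c, c)) / np.sqrt(c),
        "v": rng.normal(size=(c, c)) / np.sqrt(c),
        "pos": rng.normal(size=(3, c)),             # encodes relative position
        "attn": rng.normal(size=(c, c)) / np.sqrt(c),
    }

def geo_attention(feat, pos, nbr_idx, W):
    """Aggregate neighbor features per anchor with position-aware vector attention.

    feat: (N, C) anchor features, pos: (N, 3) anchor positions,
    nbr_idx: (N, K) neighbor indices into the same arrays.
    """
    q = feat @ W["q"]                         # (N, C)
    k = (feat @ W["k"])[nbr_idx]              # (N, K, C)
    v = (feat @ W["v"])[nbr_idx]              # (N, K, C)
    delta = (pos[:, None, :] - pos[nbr_idx]) @ W["pos"]   # (N, K, C)
    # Per-channel attention logits from feature difference plus geometry.
    logits = (q[:, None, :] - k + delta) @ W["attn"]
    attn = softmax(logits, axis=1)            # normalize over the K neighbors
    return (attn * (v + delta)).sum(axis=1)   # (N, C)
```

A regression head would then map each aggregated feature to opacity, scale, quaternion, and spherical harmonics coefficients; that head is omitted here.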

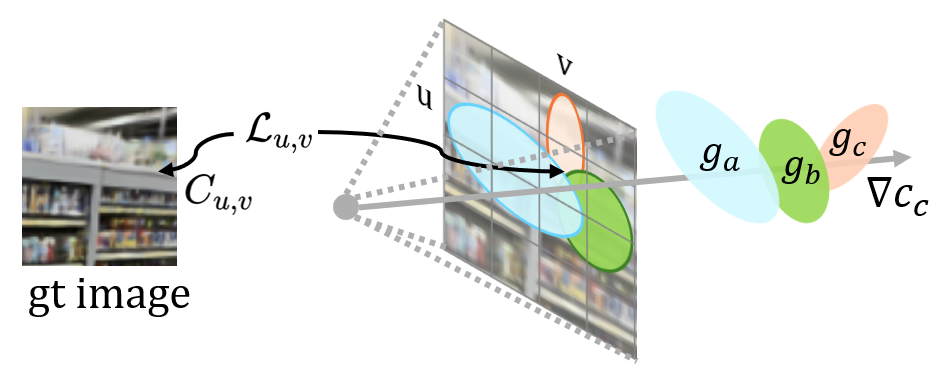

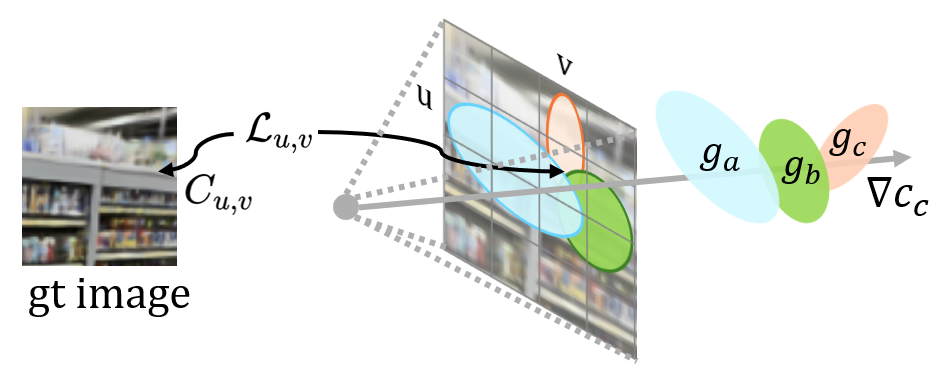

Analysis of Locality and Optimization

SparseSplat's architectural commitments are grounded in the observation that 3DGS scene optimization and gradient flows are inherently local: each 3D Gaussian mainly influences the pixels it projects onto, with direct and indirect (neighbor) modulation only within overlapping regions.

Figure 2: Schematic illustration demonstrating the localized influence and gradient flow of individual and overlapping 3D Gaussians during optimization.

By explicitly processing KNN neighborhoods rather than individual pixels, the network’s receptive field aligns with the spatial locality of the underlying 3DGS representation. This is shown empirically to improve robustness, particularly in regions prone to geometric estimation error, because local structure and appearance context can dampen artifacts that would otherwise propagate.

Experimental Results

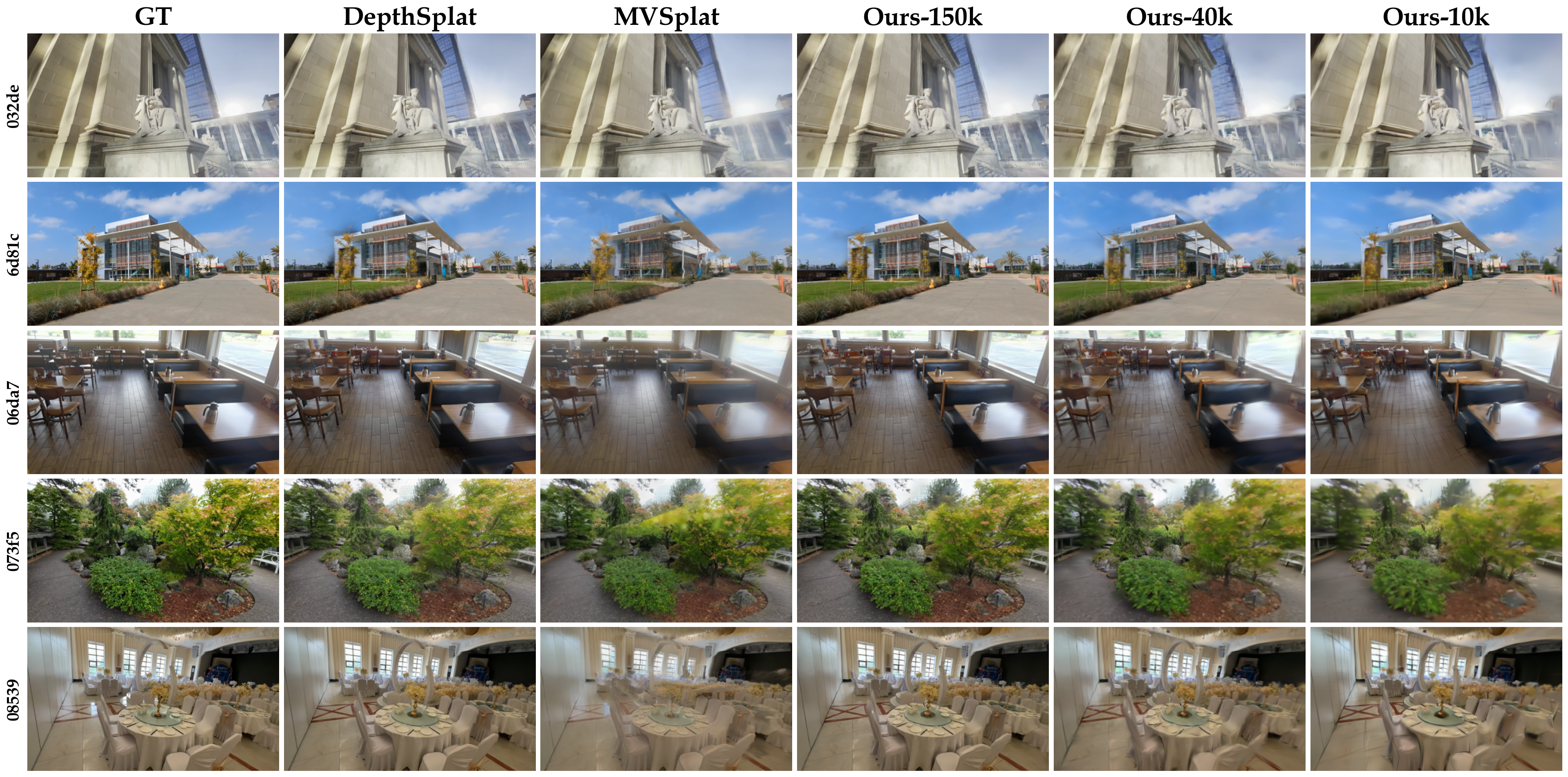

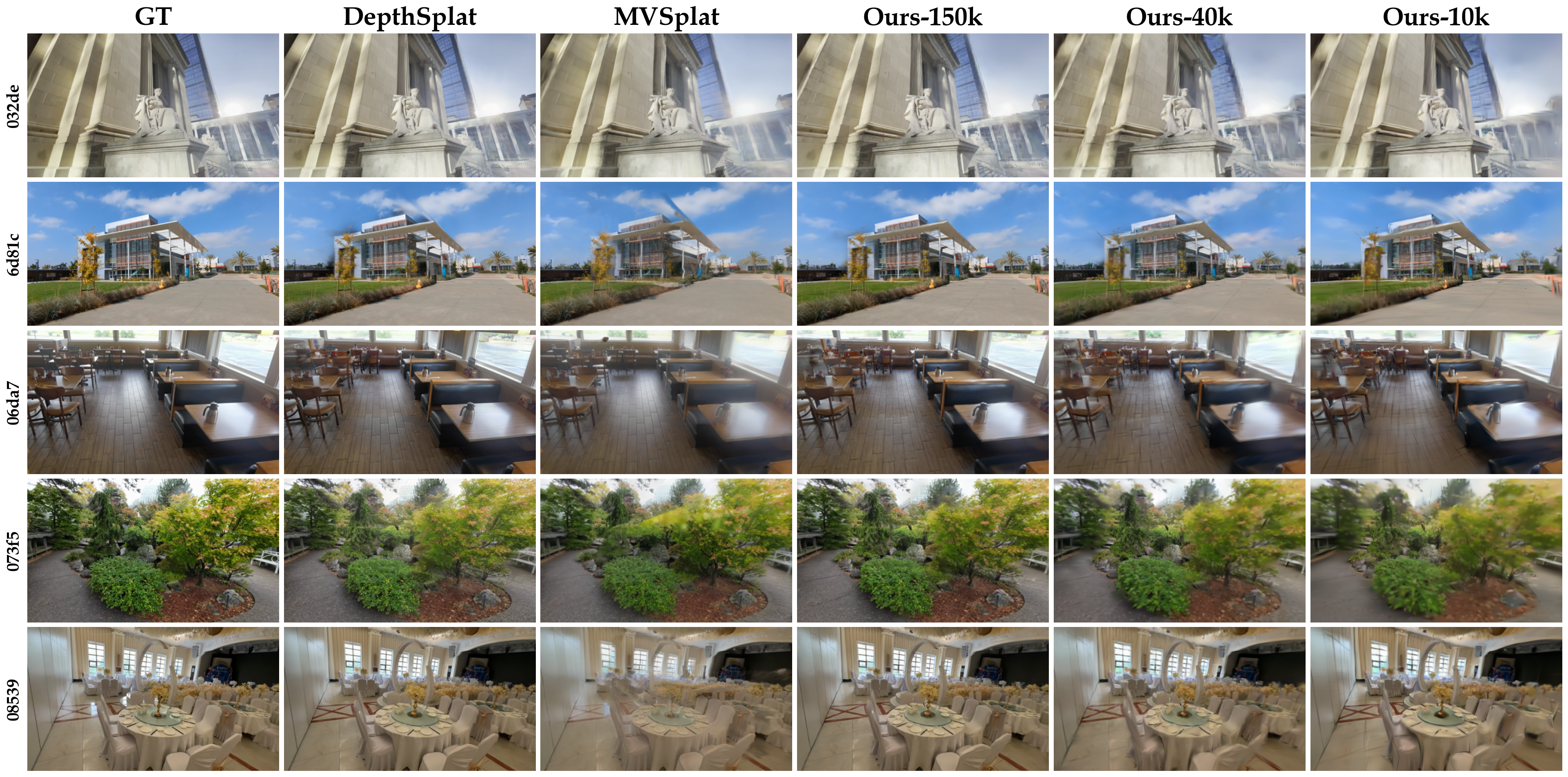

SparseSplat demonstrates that adaptive, local, and sparse design choices can support both high-fidelity rendering and efficient representation.

On the challenging DL3DV dataset, SparseSplat achieves a PSNR of 24.2 dB and an SSIM of 0.817 using only 150k Gaussians, matching DepthSplat’s visual quality with less than 22% of its primitive count (688k). Reducing the budget further (down to 10–40k Gaussians) degrades visual quality gracefully rather than catastrophically, which supports real-time mapping scenarios. Rendering and inference benchmarks show speedups proportional to the reduction in Gaussians, and the model generalizes well to an unseen domain (the Replica dataset), often exceeding prior baselines.

Figure 3: Qualitative comparison on DL3DV scenes; SparseSplat maintains sharp, artifact-free reconstructions using far fewer Gaussians than pixel-aligned methods, with controlled blurring at extreme sparsity.

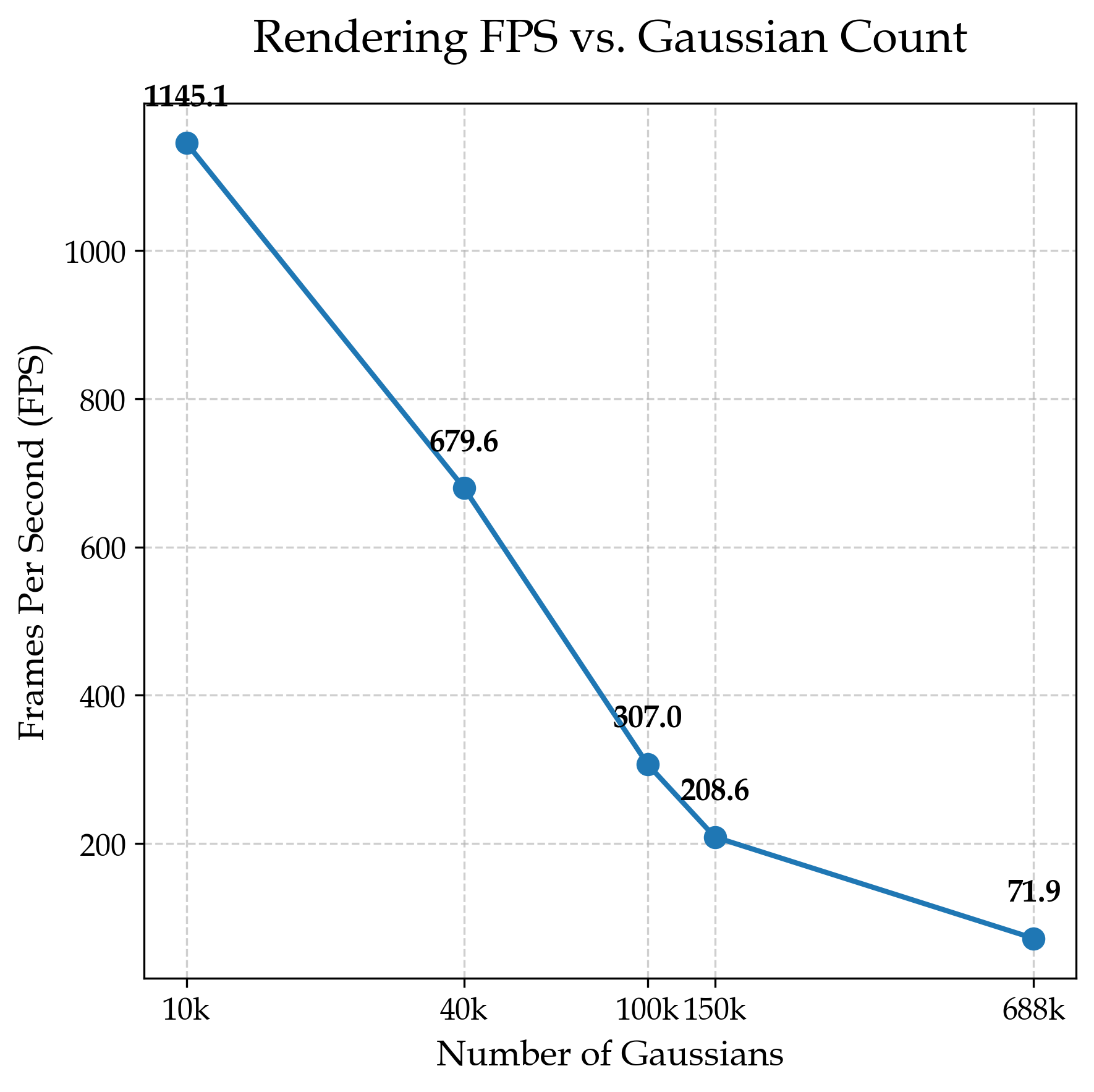

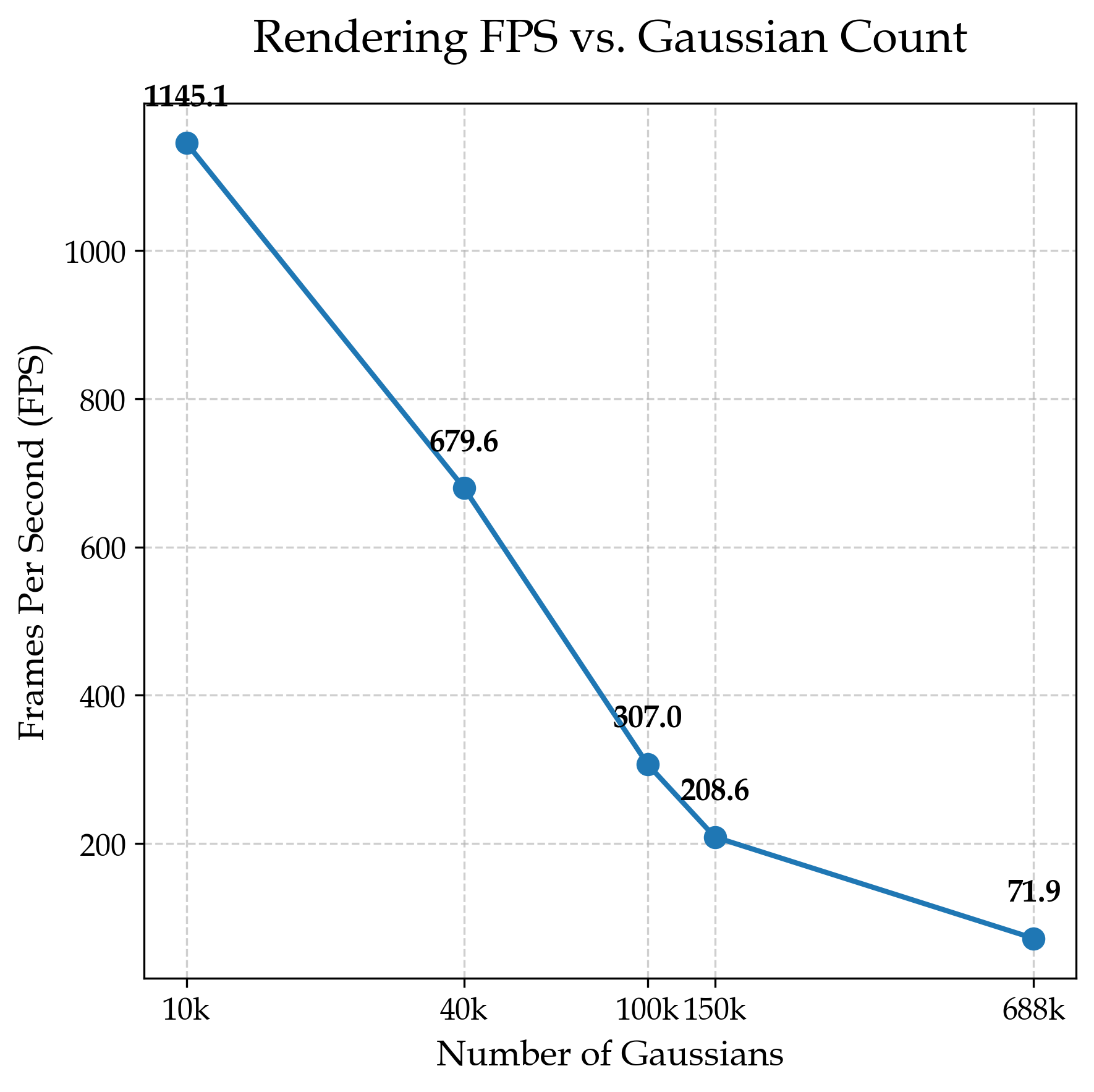

Efficiency and Scalability

Rendering efficiency analysis (FPS vs. number of Gaussians) confirms that SparseSplat unlocks >3x speedup for common AR/VR settings and achieves >600 FPS at extreme sparsity, a threshold suitable for real-time control in robotics environments.

Figure 4: Rendering frame rate as a function of the Gaussian primitive budget, depicting SparseSplat’s large speed advantage at comparable or improved visual quality.

The adaptive, sparse regime also resolves a core limitation in SLAM and online mapping, where the rapid accumulation of redundant primitives previously led to memory blowup and latency. In contrast, SparseSplat naturally scales with frame count and scene complexity.

Ablation and Design Validation

Ablation studies confirm the superiority of entropy-based sampling over random or edge-based alternatives, and demonstrate the necessity of KNN-conditioned attribute prediction: performance consistently improves with neighborhood size, saturating at K≈20. The geo-aware attention head outperforms both pooling and MLP baselines, with PointNet-style architectures failing outright to converge given the task structure.

Limitations and Future Directions

SparseSplat’s KNN-based context aggregation is robust to modest depth errors but may be suboptimal in the presence of severe occlusion artifacts, where 2D co-visibility-based neighborhoods may better capture semantically or optically correlated structure. Additionally, while computation scales with anchor count, the attention-based aggregation could be further optimized for deployment.

Implications and Outlook

SparseSplat closes the efficiency gap for feed-forward 3DGS, making real-time SLAM, AR/VR, and simulation practical on constrained devices. Its content-aware, sparse-by-design representation uncouples fidelity from rigid allocation, and its locality-driven prediction sets a new baseline for efficient downstream integration. In broader terms, the paradigm shift from rigid alignment to adaptive composition is promising for general scene representation, particularly for lifelong robotics, scalable simulators, and high-throughput synthetic environments.

Conclusion

SparseSplat introduces adaptive primitive sampling and local attribute regression to feed-forward 3DGS, achieving rendering quality on par with DepthSplat on DL3DV while using roughly a fifth of the primitives and correspondingly less computation. These advances support the deployment of 3DGS in bandwidth- and memory-constrained environments while retaining compatibility with established multi-view stereo backbones. Future work will likely focus on increasing robustness to depth estimation failures via improved neighborhood selection and on integrating dynamic update strategies for incremental mapping.