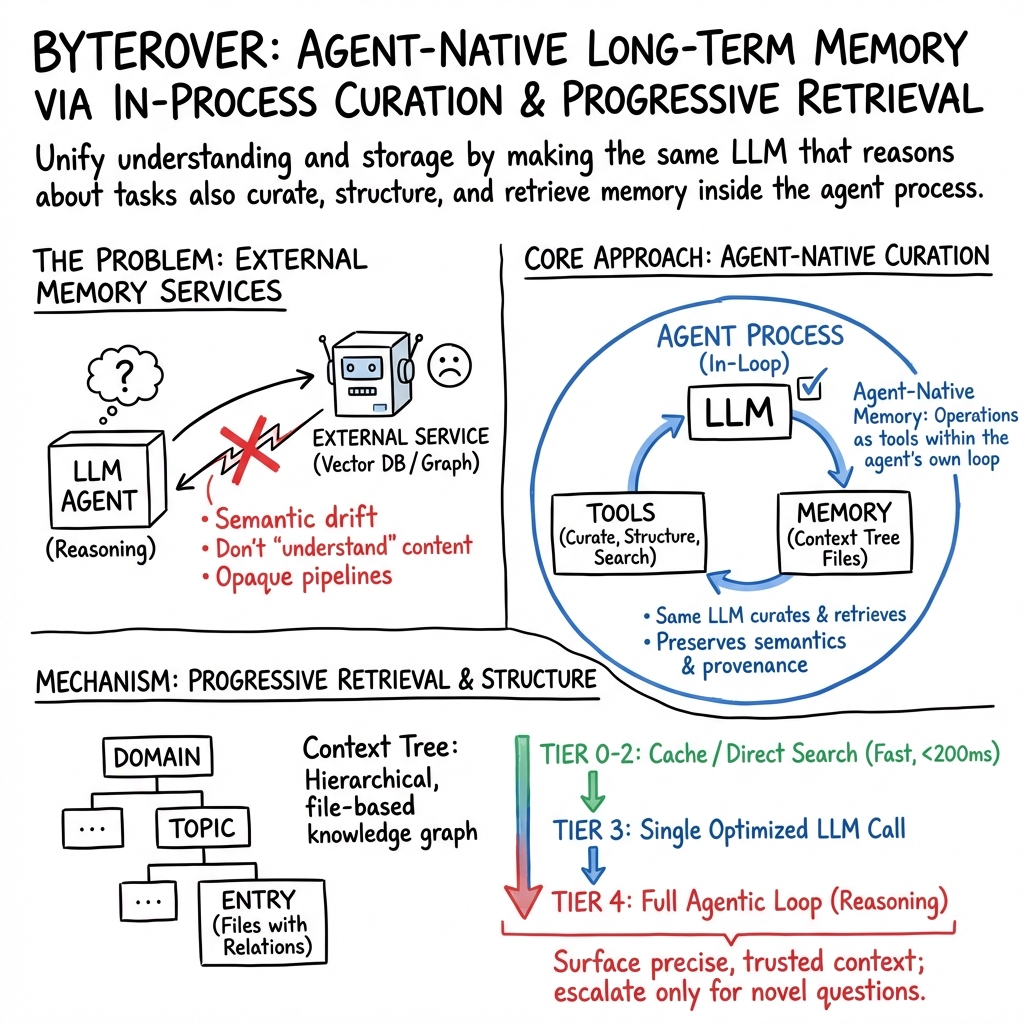

- The paper introduces an agent-native memory architecture that eliminates semantic drift by co-locating memory curation with LLM reasoning.

- It leverages a hierarchical Context Tree and a 5-tier progressive retrieval pipeline to achieve efficient, scalable, and fine-grained memory operations.

- Empirical results on LoCoMo and LongMemEval benchmarks demonstrate notable gains in multi-hop and temporal reasoning, validating its practical and theoretical benefits.

ByteRover: Agent-Native Memory Through LLM-Curated Hierarchical Context

Motivation and Limitations of External Memory in MAG

The ByteRover architecture directly addresses the core limitations of prevalent Memory-Augmented Generation (MAG) systems, namely the architectural bifurcation between reasoning (LLM agent) and knowledge storage (external memory service). Contemporary MAG pipelines universally adopt an external-service paradigm in which memory is treated as a black-box subsystem, isolated from the agent’s semantic intent. This segregation introduces semantic drift due to mismatches in chunking, embedding, and organization, precludes fine-grained provenance and rationale tracking across multiple agents, and results in recovery fragility as state becomes opaque following agent or system failure.

ByteRover proposes an agent-native memory architecture that co-locates curation, structuring, and retrieval of knowledge with the core LLM agent loop. This inversion enables the agent to maintain complete epistemic control over memory, eliminating semantic drift and ensuring that the stored knowledge graph mirrors the agent’s operational intent.

ByteRover Architecture and Context Tree

Hierarchical Context Tree

The central data structure, the Context Tree, is a hierarchical file-based knowledge graph spanning Domain > Topic > Subtopic > Entry. Each entry is a markdown file encapsulating:

- Explicit relation annotations (@references as edges),

- Provenance, rationale, and task-level metadata,

- Curated narrative, rules, code/data snippets,

- Lifecycle metadata (importance, maturity tier, recency decay).

Cross-references and backlinks yield a full bidirectional relation index, supporting O(1) access for navigational queries. Symbolic representation (domain/topic/subtopic trees) is surfaced to the agent as injected context, facilitating structure-aware retrieval.
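As a rough illustration of how such a bidirectional index could be derived from the entries, the sketch below scans each markdown body for `@` references and records both directions in hash maps, which is what makes link lookups constant-time on average. The reference syntax (`@domain/topic/subtopic/entry`), the function name `build_relation_index`, and the sample entries are assumptions made for this example, not details taken from the paper.

```python
import re
from collections import defaultdict

# Assumed reference syntax: "@domain/topic/subtopic/entry" inside the markdown body.
REF_PATTERN = re.compile(r"@([\w\-]+(?:/[\w\-]+)+)")

def build_relation_index(entries):
    """Build forward-link and backlink maps from a {path: markdown_text} dict."""
    forward = defaultdict(set)   # entry -> entries it references
    backward = defaultdict(set)  # entry -> entries that reference it
    for path, text in entries.items():
        for target in REF_PATTERN.findall(text):
            forward[path].add(target)
            backward[target].add(path)
    return forward, backward

# Hypothetical two-entry tree used only for illustration.
entries = {
    "auth/tokens/refresh/rotation": "Rotate on every use. See @auth/tokens/storage/cookies.",
    "auth/tokens/storage/cookies": "HttpOnly cookies only.",
}
forward, backward = build_relation_index(entries)
```

Looking up `backward["auth/tokens/storage/cookies"]` then returns every entry that cites the cookie-storage entry without scanning the tree.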

Adaptive Knowledge Lifecycle (AKL)

Knowledge entries maintain an adaptive lifecycle using compounded scores:

- Importance: Updated by access and modification, decaying daily.

- Maturity Tiers: Governed by hysteresis, entries progress from draft to validated to core.

- Recency: Time-decayed evidence modulates the retrieval influence.

The overall retrieval score is a linear combination of BM25 search, normalized importance, and recency.
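The lifecycle scoring can be sketched as follows. The summary does not give the weights, the decay constant, or the tier thresholds, so every numeric value below is a placeholder, and the choice of exponential decay for recency and of importance as the signal driving tier changes are assumptions made for illustration.

```python
def recency(days_since_update, half_life_days=30.0):
    """Exponential decay in (0, 1]; the half-life is an illustrative assumption."""
    return 0.5 ** (days_since_update / half_life_days)

def retrieval_score(bm25_norm, importance_norm, days_since_update,
                    w_bm25=0.6, w_importance=0.2, w_recency=0.2):
    """Linear combination of BM25, importance, and recency; weights are placeholders."""
    return (w_bm25 * bm25_norm
            + w_importance * importance_norm
            + w_recency * recency(days_since_update))

TIERS = ["draft", "validated", "core"]
# Hypothetical thresholds. Promotion needs a higher score than the one at which
# demotion happens, so an entry hovering near a boundary does not flip-flop.
PROMOTE = {"draft": 0.5, "validated": 0.8}
DEMOTE = {"validated": 0.3, "core": 0.6}

def next_tier(tier, importance_norm):
    """Move at most one tier up or down, with a hysteresis gap between thresholds."""
    if tier in PROMOTE and importance_norm >= PROMOTE[tier]:
        return TIERS[TIERS.index(tier) + 1]
    if tier in DEMOTE and importance_norm < DEMOTE[tier]:
        return TIERS[TIERS.index(tier) - 1]
    return tier
```

With these placeholder thresholds, a validated entry whose importance sits anywhere between 0.3 and 0.8 keeps its tier, which is the behavior the hysteresis is meant to provide.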

Agent-Native Memory Operations

Memory modification is agent-native: all memory management tools (ADD, UPDATE, UPSERT, MERGE, DELETE) are invoked as explicit operations from within the LLM’s agentic loop, rather than as API calls to external services. Each operation is atomic, returns structured feedback, and is guarded by temp-then-rename semantics for crash safety.
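Temp-then-rename is a standard crash-safety pattern: the new content is written to a temporary file in the same directory and then renamed over the target, so a reader sees either the old entry or the new one, never a partial write. A minimal sketch, assuming entries are plain files on a local filesystem (the helper name `atomic_write` is hypothetical):

```python
import os
import tempfile

def atomic_write(path, text):
    """Replace the file at path with text, never exposing a half-written file."""
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file must live on the same filesystem as the target for the rename to be atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as f:
            f.write(text)
            f.flush()
            os.fsync(f.fileno())  # push the data to disk before the rename
        os.replace(tmp_path, path)  # atomic rename over the target
    except BaseException:
        # Clean up the temp file if anything failed before the rename.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
```

If the process dies before `os.replace`, the original entry is untouched and only a stray `.tmp` file remains to be cleaned up.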

The curation pipeline performs LLM-driven compaction through aggressive multistage summarization followed by deterministic truncation, which guarantees that curation terminates regardless of input size.
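The termination guarantee can be illustrated with a bounded loop: summarization is attempted a fixed number of times, and a hard truncation acts as the fallback if the summarizer fails to shrink the text enough. The function name, the character-based budget, and the `summarize` callable (a stand-in for an LLM call) are assumptions for this sketch.

```python
def compact(text, summarize, budget_chars, max_rounds=3):
    """Summarize for at most max_rounds, then truncate so the budget always holds."""
    for _ in range(max_rounds):
        if len(text) <= budget_chars:
            return text
        text = summarize(text)  # stand-in for an LLM summarization call
    # Deterministic fallback: the result never exceeds the budget,
    # even if the summarizer did not shrink the text at all.
    return text[:budget_chars]
```

Because the loop bound and the final slice do not depend on what the summarizer returns, the function finishes after at most `max_rounds` summarizer calls for any input.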

Progressive Retrieval Pipeline

ByteRover implements a 5-tier progressive retrieval pipeline to minimize LLM calls and maximize efficiency:

- Tier 0 (Exact cache hit): Query fingerprint match; ms-scale latency.

- Tier 1 (Fuzzy cache match): Jaccard similarity; covers paraphrased queries.

- Tier 2 (Direct search): High-confidence BM25/prefix/fuzzy match from MiniSearch full-text index.

- Tier 3 (LLM + Prefetch): Optimized LLM call with top-ranked context entries.

- Tier 4 (Full agentic tool loop): Multi-turn reasoning invoking arbitrary code and file tools.

Only ambiguous, novel, or out-of-distribution (OOD) queries escalate beyond Tier 2, so the majority of queries are resolved locally without incurring LLM overhead.
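The local tiers can be sketched as a simple escalation chain. This is an illustrative reading of Tiers 0 to 2 only: the whitespace-based fingerprint, the token-set Jaccard measure, both thresholds, and the `search` callable (a stand-in for the full-text index that returns a hit and a confidence) are assumptions, not the system's actual implementation.

```python
def fingerprint(query):
    """Normalize case and whitespace so trivially different queries share a key."""
    return " ".join(query.lower().split())

def jaccard(a, b):
    """Token-set Jaccard similarity between two strings."""
    sa, sb = set(a.split()), set(b.split())
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)

def retrieve(query, cache, search, fuzzy_threshold=0.8, search_threshold=0.7):
    """Return (tier, result); tier 3 with None means the caller should escalate to an LLM."""
    key = fingerprint(query)
    # Tier 0: exact fingerprint hit.
    if key in cache:
        return 0, cache[key]
    # Tier 1: closest cached query by Jaccard similarity.
    best = max(cache, key=lambda k: jaccard(k, key), default=None)
    if best is not None and jaccard(best, key) >= fuzzy_threshold:
        return 1, cache[best]
    # Tier 2: high-confidence hit from the full-text index.
    hit, confidence = search(query)
    if hit is not None and confidence >= search_threshold:
        cache[key] = hit
        return 2, hit
    # Nothing resolved locally: hand off to the LLM-backed tiers.
    return 3, None
```

Each tier is tried only when the cheaper one before it fails, so an LLM call is reached only after every local option has been exhausted.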

Rigorous OOD detection prevents the system from hallucinating answers by explicitly rejecting queries with no semantically adequate match.
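One simple way to realize explicit rejection is a score floor: if no candidate clears a minimum relevance score, the system returns a structured "no answer" instead of passing weak context to the LLM. The threshold value, the `Answer` type, and the function name below are hypothetical, and the summary does not say how the actual OOD check is computed.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Answer:
    text: Optional[str]
    in_domain: bool

def answer_or_reject(scored_hits, min_score=0.3):
    """scored_hits is a list of (entry_text, normalized_score) pairs.

    Returns the best hit, or an explicit rejection if nothing clears min_score.
    """
    if not scored_hits:
        return Answer(None, False)
    text, score = max(scored_hits, key=lambda h: h[1])
    if score < min_score:
        return Answer(None, False)
    return Answer(text, True)
```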

Empirical Results and Comparative Analysis

LoCoMo Benchmark

ByteRover achieves 96.1% overall accuracy on LoCoMo, outperforming all evaluated baselines including HonCho and Hindsight. Results on multi-hop retrieval (+9.3pp over the strongest baseline) and temporal queries (+9.6pp) are particularly strong, illustrating the advantages of explicit relation graphs and timestamped entries for multi-hop synthesis and temporal reasoning.

The sole underperformance arises on open-domain queries, where approaches leveraging non-local parametric knowledge (e.g., Hindsight) have an inherent advantage.

LongMemEval Benchmark

On LongMemEval-S, ByteRover attains 92.8% overall accuracy, narrowly exceeding all comparable systems operating under similar backbone constraints. Notably, it sets a new best on categories emphasizing precise update tracking and temporal reasoning, owing to AKL-driven recency scoring and structured provenance in the Context Tree. The weakest performance is in cross-session multi-hop questions, an artifact of the current tiered retrieval strategy and a known challenge for all symbolic memory systems.

Operationally, median (p50) query latency remains below 2s even at corpus scale (23k+ entries), with minimal tail degradation, validating the practical scalability of the design.

Ablation Study: Component Contributions

Eliminating the 5-tier retrieval pipeline results in catastrophic degradation (−29.4pp), affirming the architectural necessity of progressive retrieval. Ablating OOD detection or explicit relation graphs incurs minor declines (−0.4pp), concentrated in the temporal-reasoning regime, indicating substantial robustness conferred by curation structure and cache efficiency.

Theoretical and Practical Implications

ByteRover demonstrates that embedding memory operations as first-class agentic tools can resolve longstanding challenges in MAG frameworks, achieving high accuracy, debuggability, provenance tracking, and crash safety, all without dependence on external vector/graph databases or embedding services. This agent-native paradigm opens new opportunities for:

- Stateful, reasoning-compatible memory workflows,

- Fine-grained control over memory evolution and provenance,

- Seamless local deployment and offline operation for privacy-sensitive or resource-constrained environments.

However, the approach incurs increased LLM-induced curation latency, limiting throughput, and may require further optimization or hybridization for data ingestion at production scale. File-system-based storage imposes upper bounds on corpus size and concurrent write efficiency, suggesting future work in sharding, replica management, and distributed indexing.

The backbone LLM's reasoning quality determines curation fidelity, mirroring limitations faced by all agentic memory systems.

Conclusion

ByteRover presents a comprehensive, empirically validated architecture for agent-native long-horizon memory, centering the LLM as both knowledge curator and consumer through a structured, rationale-aware Context Tree, an adaptive knowledge lifecycle, and a highly efficient retrieval stack. Its demonstrated gains on long-term conversational and temporal reasoning tasks, operational simplicity, and resilience to semantic drift position it as a compelling framework for evolving LLM-driven agent ecosystems. Future research should explore scaling strategies, write-path throughput optimization, and dynamic co-learning of curation heuristics jointly with task objectives.