- The paper demonstrates that replacing conventional pixel-wise losses with regularized Wasserstein Distortion (WD-R) significantly improves human-perceived visual quality and yields state-of-the-art scores on perceptual metrics.

- The methodology employs gradient-driven optimization on 3D Gaussian primitives, validated by extensive human preference studies and rate-distortion analyses.

- WD-R effectively reduces artifacts and model size while delivering superior texture fidelity across varied 3DGS frameworks and compression regimes.

Drop-In Perceptual Optimization for 3D Gaussian Splatting

Introduction

"Drop-In Perceptual Optimization for 3D Gaussian Splatting" (2603.23297) presents a systematic study of perceptual loss functions for 3D Gaussian Splatting (3DGS) in novel view synthesis, targeting improved perceptual quality without modifications to the underlying splatting algorithm. The authors propose replacing conventional pixel-wise losses with an advanced perceptual metric, specifically a regularized Wasserstein Distortion (WD-R), and validate this choice with what they describe as the first large-scale human preference study in this domain. WD-R consistently delivers superior human-perceived visual quality, state-of-the-art LPIPS/DISTS/FID scores, and efficient use of representational resources. The methodology is evaluated across multiple datasets and diverse 3DGS frameworks, with extensive ablation analyses and both quantitative and qualitative assessments.

3D Gaussian Splatting and Loss Function Design

3DGS leverages collections of 3D Gaussian primitives, differentiably rendered into 2D novel views. Parameter optimization is gradient-driven, using losses imposed on the rendered images. Conventional approaches (e.g., L1+SSIM) are computationally efficient but insufficiently correlated with human perception, often resulting in texture blurring and inefficient representational allocations. The paper decouples perceptual modeling from algorithm-specific heuristics, positioning the loss function as the central optimization driver.

Three loss categories are assessed:

- Original Loss (L1 + SSIM): Canonical, but suboptimal for perceptual fidelity.

- Composite Loss: Weighted combination of L1, L2, MS-SSIM, and LPIPS, tuned via ablation for improved trade-offs.

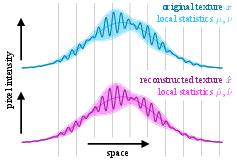

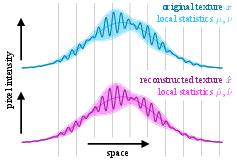

- Wasserstein Distortion (WD): Operates on local statistics in deep feature space; captures texture realism by comparing the RMSE of local mean and standard deviation over VGG features.

WD is further regularized (WD-R) with a small pixel-level fidelity term to suppress artifacts, primarily web-like structures that appear under low splat budgets, without loss of texture realism.

Figure 1: WD assigns low distortion to textures with large pointwise differences but similar local statistics, aligning with human perception.
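The idea of comparing local statistics rather than individual pixels can be illustrated with a small sketch. This is not the authors' implementation: it assumes raw pixel values as a stand-in for VGG features, non-overlapping box pooling as a stand-in for the paper's pooling, and a plain L1 term with a hypothetical weight `lam` as a stand-in for the pixel-level regularizer. The function names `local_stats`, `wd_sketch`, and `wd_r_sketch` are illustrative only.

```python
import numpy as np

def local_stats(feat, k):
    """Mean and std over non-overlapping k x k blocks of an (H, W) map."""
    h, w = feat.shape
    # Crop to a multiple of k, then expose each block on axes 1 and 3.
    blocks = feat[: h - h % k, : w - w % k].reshape(h // k, k, w // k, k)
    return blocks.mean(axis=(1, 3)), blocks.std(axis=(1, 3))

def wd_sketch(a, b, k=4):
    """RMSE over blocks between the local (mean, std) pairs of a and b."""
    mu_a, sd_a = local_stats(a, k)
    mu_b, sd_b = local_stats(b, k)
    return float(np.sqrt(np.mean((mu_a - mu_b) ** 2 + (sd_a - sd_b) ** 2)))

def pixel_rmse(a, b):
    """Conventional pointwise RMSE, for comparison."""
    return float(np.sqrt(np.mean((a - b) ** 2)))

def wd_r_sketch(a, b, k=4, lam=0.1):
    """WD plus a small pixel-level term (lam is a hypothetical weight)."""
    return wd_sketch(a, b, k) + lam * float(np.mean(np.abs(a - b)))

# A checkerboard and its inverse: every pixel differs, yet each 4 x 4 block
# has the same mean and standard deviation in both images.
yy, xx = np.indices((16, 16))
tex_a = ((yy + xx) % 2).astype(float)
tex_b = 1.0 - tex_a

print("pixel RMSE:", pixel_rmse(tex_a, tex_b))
print("WD sketch :", wd_sketch(tex_a, tex_b))
print("WD-R sketch:", wd_r_sketch(tex_a, tex_b))
```

In this toy case the pointwise error is maximal while the statistics-based distance vanishes, which is the behavior the figure caption describes. The added pixel term in `wd_r_sketch` reintroduces a small penalty for pointwise mismatch.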

Experimental Setup: Subjective and Objective Evaluation

The study employs comprehensive evaluation protocols across 21 scenes from four datasets, multiple baselines (Pixel-GS, Perceptual-GS), and alternative 3DGS frameworks (Mip-Splatting, Scaffold-GS, Comp-GS compression). Models are trained under fixed resource budgets (splat count or model size), and perceptual performance is quantified using LPIPS, DISTS, FID, and CMMD. Critically, a large-scale human preference study is conducted via blind pairwise comparisons, collecting 39,320 votes from 428 participants and aggregating them into Bayesian Elo ratings.

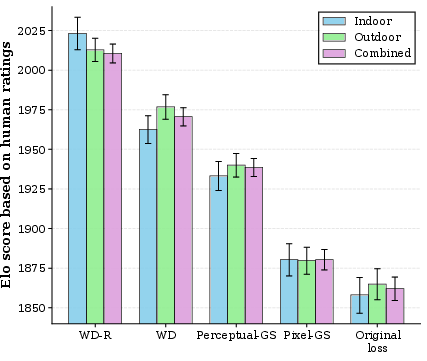

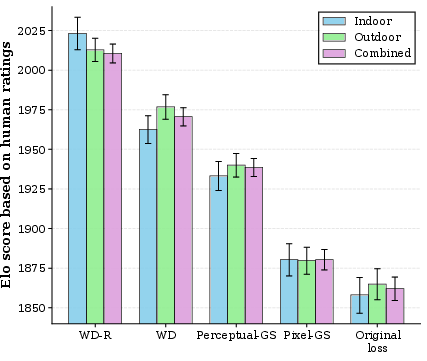

The subjective study shows that human raters prefer WD-R 2.3× over the original loss and 1.5× over Perceptual-GS, a result corroborated by strong Elo score margins.

Figure 2: Bayesian Elo scores demonstrate WD-R dominance across indoor/outdoor benchmarks and frameworks.
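To make the link between pairwise votes and Elo-style ratings concrete, the following sketch fits a plain maximum-likelihood Bradley-Terry model and converts the strengths to the Elo scale. It is a simplification under stated assumptions: the paper's Bayesian Elo procedure (including any prior) is not reproduced here, the vote counts are invented for illustration, and `fit_bradley_terry` and `to_elo` are hypothetical helper names.

```python
import math

def fit_bradley_terry(wins, iters=200):
    """Fit Bradley-Terry strengths with the standard MM update.

    wins[i][j] is the number of votes preferring method i over method j.
    Assumes every method wins at least once and is compared at least once.
    """
    n = len(wins)
    p = [1.0] * n
    for _ in range(iters):
        new_p = []
        for i in range(n):
            total_wins = sum(wins[i][j] for j in range(n) if j != i)
            denom = sum((wins[i][j] + wins[j][i]) / (p[i] + p[j])
                        for j in range(n) if j != i)
            new_p.append(total_wins / denom)
        # Strengths are only defined up to scale, so renormalize each round.
        scale = n / sum(new_p)
        p = [v * scale for v in new_p]
    return p

def to_elo(strengths):
    """Map strengths to the Elo scale, where odds of 10:1 equal 400 points."""
    return [400.0 * math.log10(v) for v in strengths]

# Invented votes: method 0 is preferred 23 times, method 1 is preferred 10 times.
votes = [[0, 23],
         [10, 0]]
elo = to_elo(fit_bradley_terry(votes))
print("Elo gap:", elo[0] - elo[1])
```

Under this model, a preference ratio of r between two methods corresponds to an Elo gap of 400·log10(r), assuming the reported ratios can be read as win odds.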

Quantitative and Qualitative Results

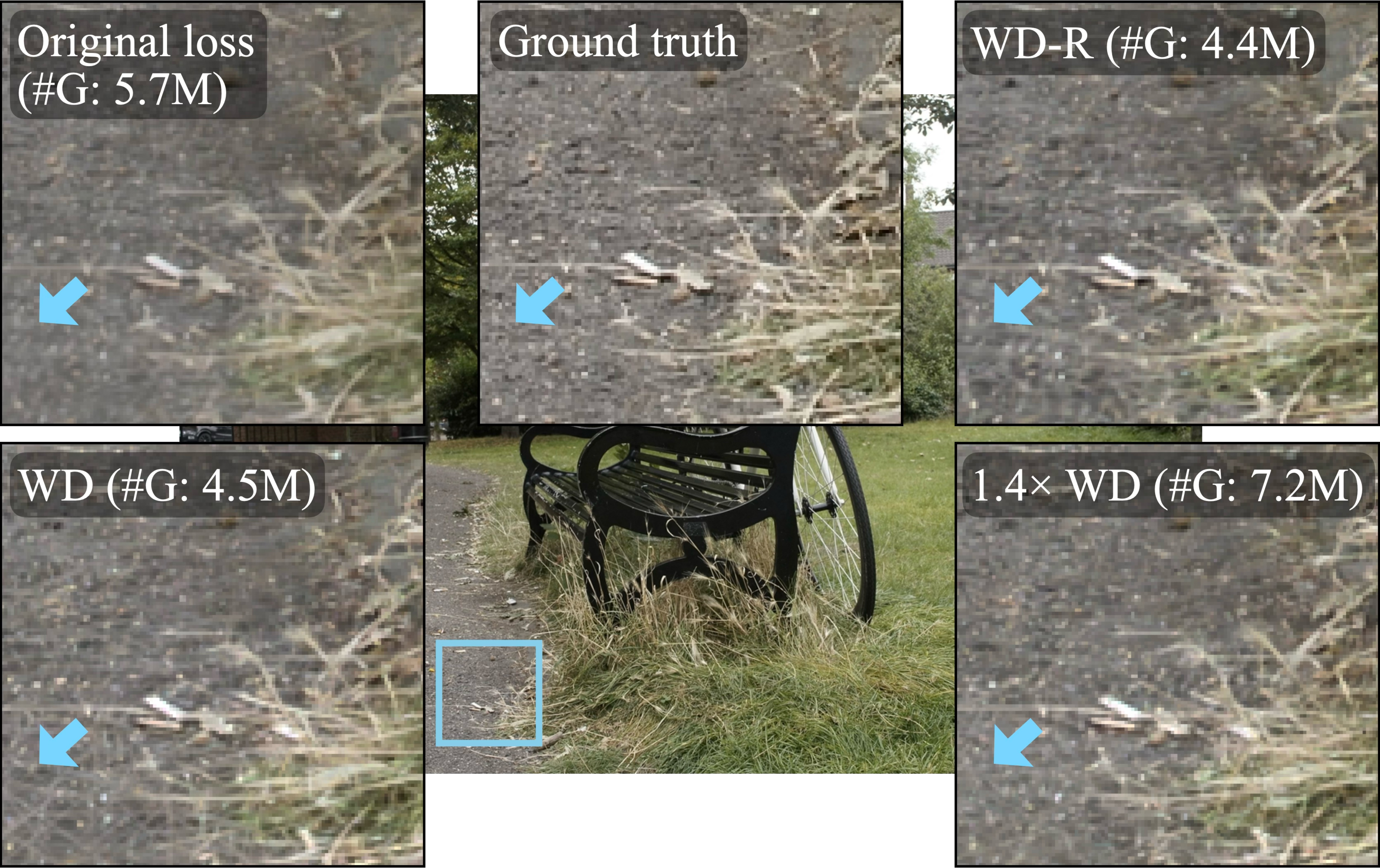

WD-based losses consistently lead on perceptual metrics and produce more compact representations, reducing Gaussian counts while improving texture fidelity (e.g., from 6.92M to 4.89M Gaussians on BungeeNeRF). WD-R achieves the lowest LPIPS/DISTS/FID and the highest subjective preference rates, outperforming prior state-of-the-art approaches even under controlled resource constraints.

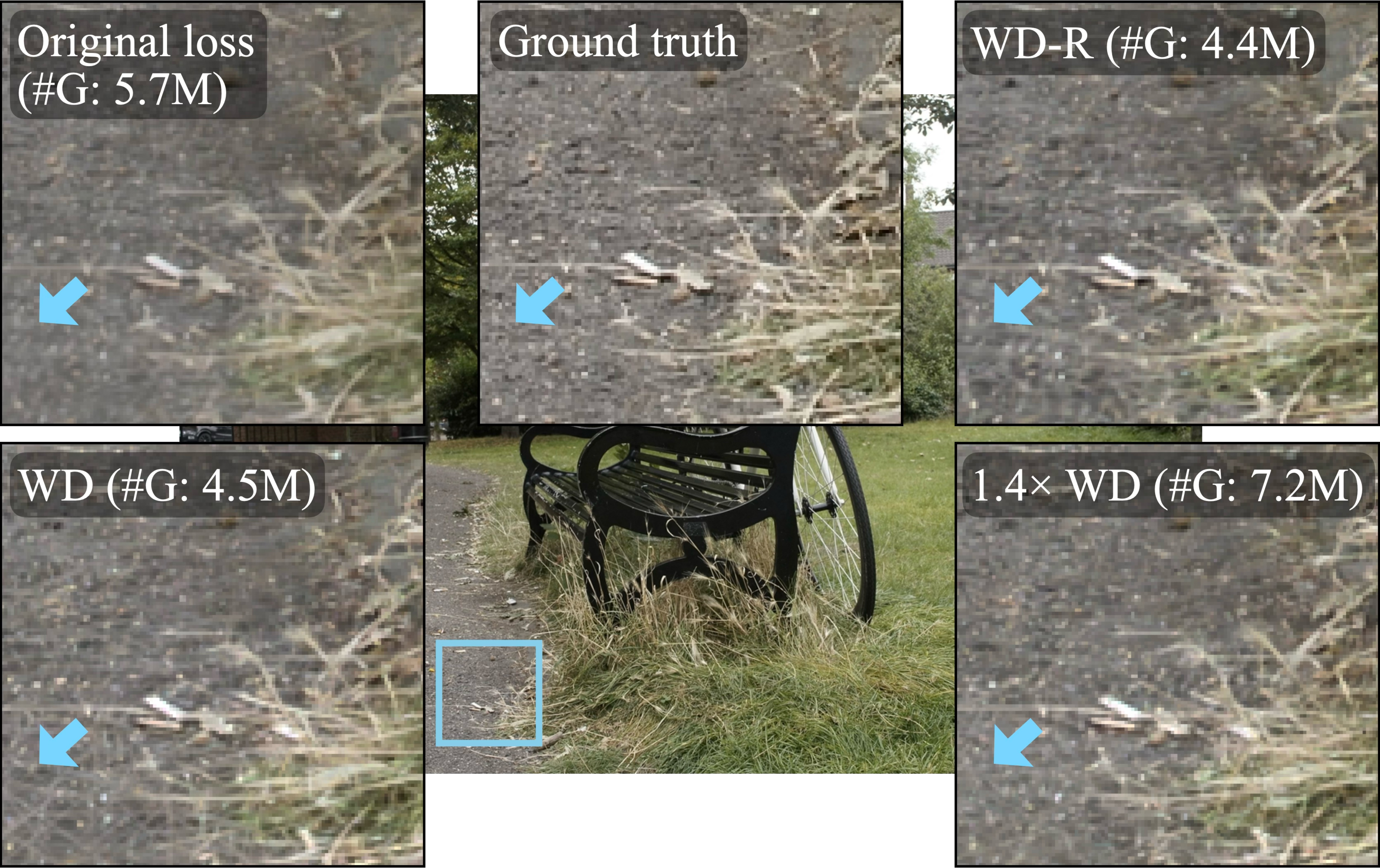

Qualitative comparisons show WD-based objectives recover finer textures and structural details, notably in challenging cityscape scenes (Barcelona), outperforming edge-based densification and other heuristics.

Figure 3: Visual comparison highlights the superiority of WD and WD-R in reconstructing texture details and local visual structure.

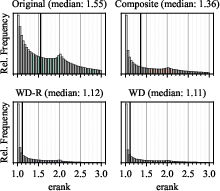

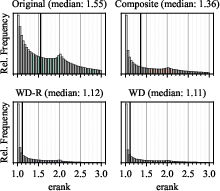

Anisotropy and Artifacts

The impact of WD-based losses extends to geometric adaptation: analysis of effective rank (erank) demonstrates that WD induces anisotropic Gaussian shapes, efficiently capturing high-frequency structure and reducing rendering blur. However, excessive anisotropy can cause web-like artifacts in high-detail regions under low splat constraints. Regularization (WD-R) effectively suppresses such artifacts without increasing splat count.

Figure 4: Illustration of web-like artifacts caused by WD under splat budget constraints and their suppression via WD-R.
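The notion of effective rank for a single Gaussian can be sketched as follows. This assumes the common entropy-based definition (the exponential of the Shannon entropy of the normalized covariance eigenvalues, which for a 3D Gaussian are the squared axis scales); whether the paper uses exactly this form is an assumption, and `effective_rank` is an illustrative name.

```python
import math

def effective_rank(scales):
    """Entropy-based effective rank of a Gaussian from its axis scales.

    scales: standard deviations along the three principal axes.
    Returns a value in [1, 3]: 3 for a sphere, near 2 for a flat disk,
    near 1 for a needle.
    """
    variances = [s * s for s in scales]
    total = sum(variances)
    q = [v / total for v in variances]
    # Terms with q == 0 contribute nothing to the entropy.
    entropy = -sum(p * math.log(p) for p in q if p > 0.0)
    return math.exp(entropy)

for name, s in [("sphere", (1.0, 1.0, 1.0)),
                ("disk", (1.0, 1.0, 1e-3)),
                ("needle", (1.0, 1e-3, 1e-3))]:
    print(name, round(effective_rank(s), 4))
```

Lower values indicate stronger anisotropy, so a population of Gaussians shifting toward erank values near 1 would be consistent with the needle-like shapes associated with web-like artifacts.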

Generalization Across Frameworks

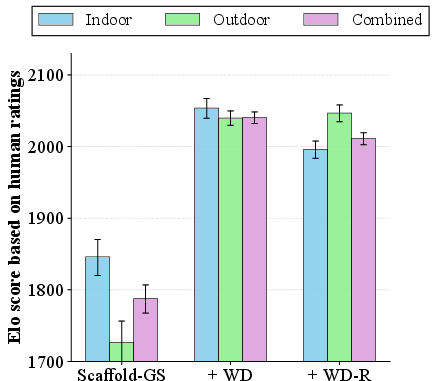

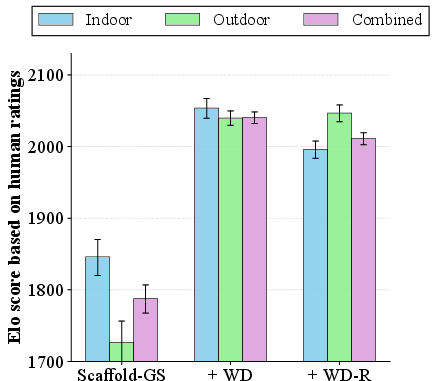

WD-based optimization generalizes robustly across alternative frameworks:

- Mip-Splatting: Multi-scale filtering benefits from drop-in WD/WD-R integration, yielding improved LPIPS and human preference statistics under identical splat counts.

- Scaffold-GS: Structured anchor-based Gaussian representations likewise benefit, achieving increased perceptual scores and preference rates without inflating model size.

Figure 5: WD-based losses improve Bayesian Elo scores and model compactness across Mip-Splatting and Scaffold-GS.

Figure 6: Visual comparison confirms superior texture reproduction and structure with WD-based optimization.

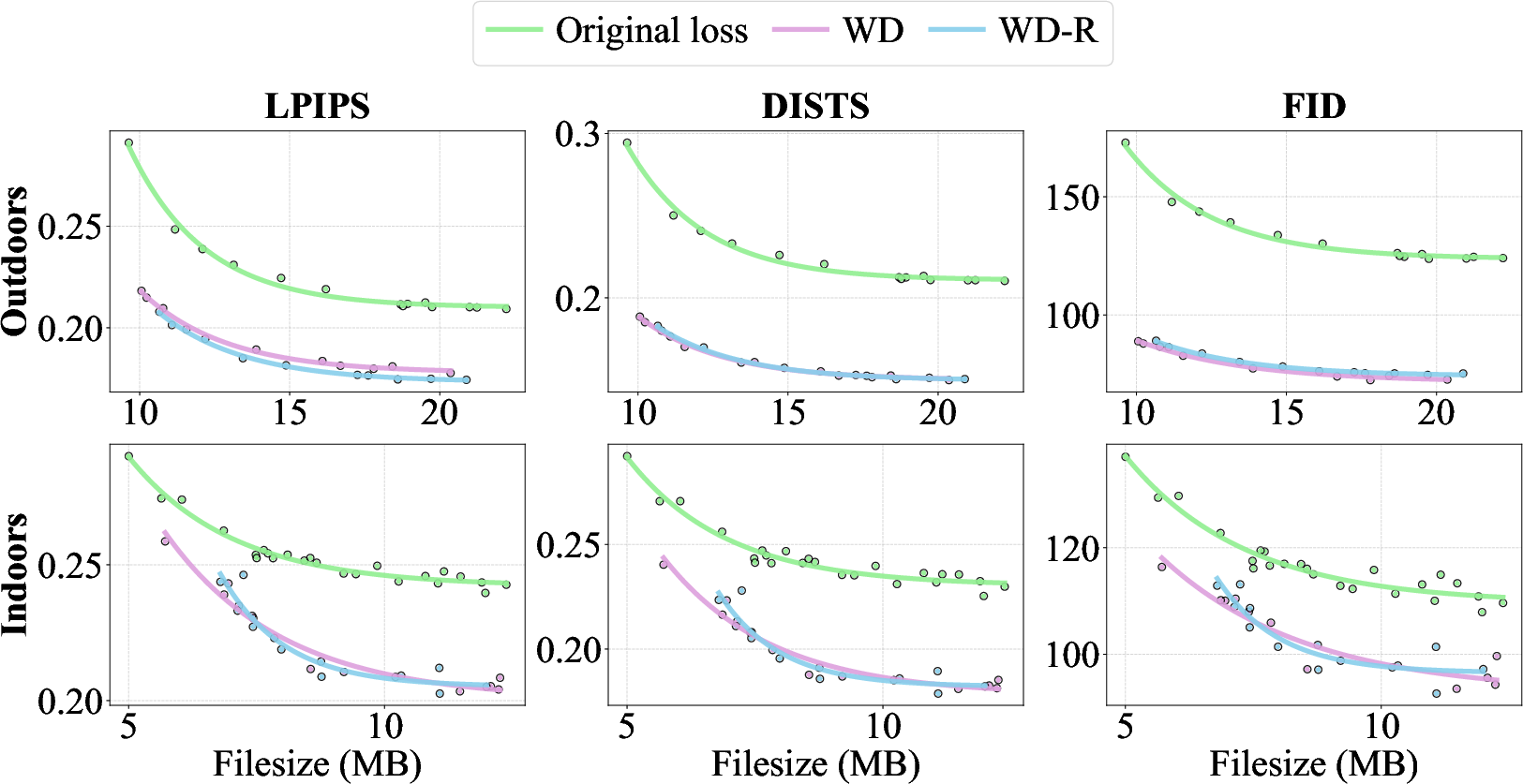

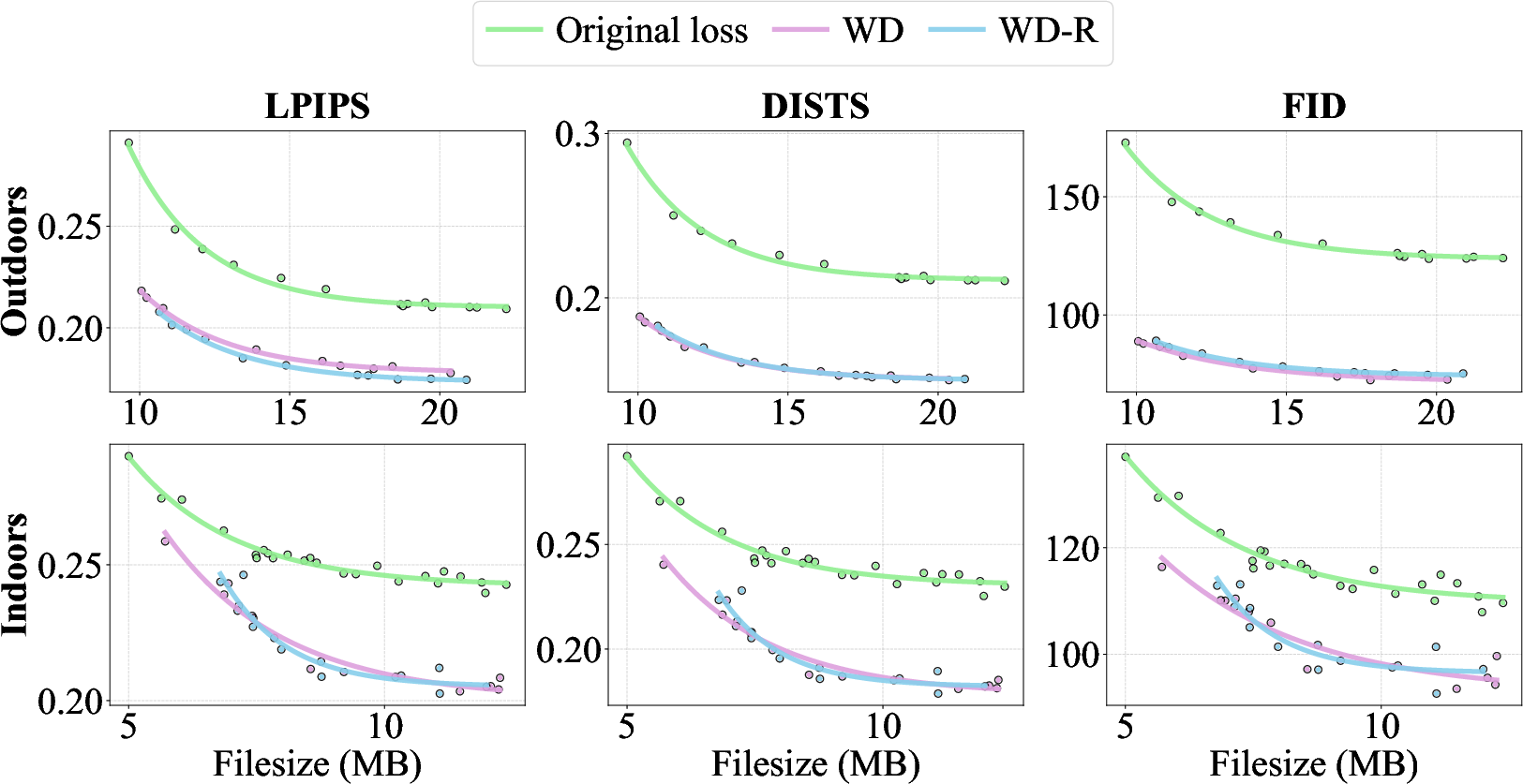

Compression and Rate-Distortion Analysis

In variable-rate compression (Comp-GS), WD and WD-R achieve superior rate-distortion efficiency, yielding roughly 50% bitrate savings at comparable perceptual quality. The findings support WD-based perceptual optimization as a plug-and-play enhancement for efficient, realistic scene compression.

Figure 7: Rate–distortion curves show substantial bitrate reductions with WD-based perceptual losses.
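A bitrate saving "at comparable quality" is typically read off two rate-distortion curves by interpolating each curve at the same distortion level. The sketch below shows one simple way to do this, interpolating linearly in log-rate. The curve values are toy numbers chosen for illustration, not measurements from the paper, and `rate_at_distortion` and `bitrate_saving` are hypothetical helpers; the paper's exact procedure may differ.

```python
import math

def rate_at_distortion(curve, target):
    """Interpolate the rate needed to reach a target distortion.

    curve: list of (rate, distortion) points sorted by increasing rate,
    with strictly decreasing distortion (lower is better, e.g. LPIPS).
    Interpolation is linear in log-rate between neighboring points.
    """
    for (r0, d0), (r1, d1) in zip(curve, curve[1:]):
        if d1 <= target <= d0:
            t = (d0 - target) / (d0 - d1)
            return math.exp(math.log(r0) + t * (math.log(r1) - math.log(r0)))
    raise ValueError("target distortion outside the measured range")

def bitrate_saving(baseline, candidate, target):
    """Fraction of bitrate saved by the candidate at the target distortion."""
    base = rate_at_distortion(baseline, target)
    cand = rate_at_distortion(candidate, target)
    return 1.0 - cand / base

# Toy curves as (rate in MB, distortion); not data from the paper.
baseline_curve = [(10.0, 0.30), (20.0, 0.20), (40.0, 0.10)]
candidate_curve = [(5.0, 0.30), (10.0, 0.20), (20.0, 0.10)]

saving = bitrate_saving(baseline_curve, candidate_curve, target=0.25)
print(f"saving at distortion 0.25: {saving:.0%}")
```

Averaging such savings over a range of distortion levels gives a single summary number in the spirit of a Bjøntegaard-style comparison.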

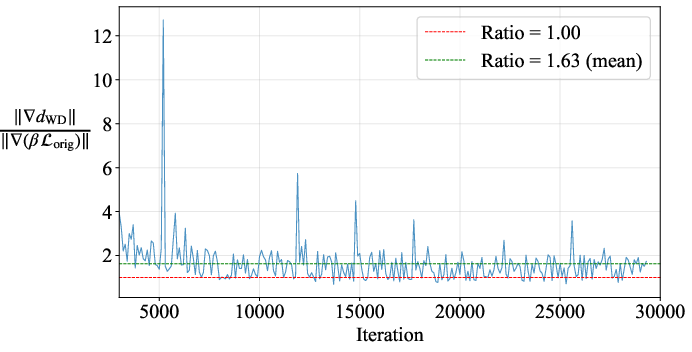

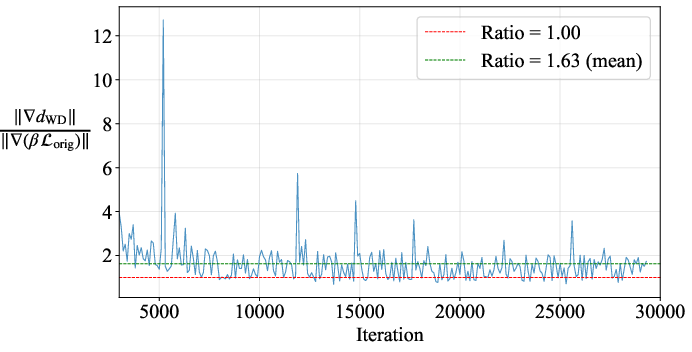

Implementation, Ablations, and Practical Considerations

The computational overhead of WD is significant (4.5× the training time of L1+SSIM) due to deep feature extraction and statistical computation, although convergence to fewer splats may offset rendering cost. Ablations on pooling size (σ), saliency-guided adaptive pooling strategies, and loss component weights are provided; a fixed pooling size (σ=4) balances metric performance and representation efficiency. Warm-up regimes stabilize geometry initialization, especially for large, variable datasets.

Figure 8: Gradient ratio analysis demonstrates the regularization effect of the original loss within the WD-R objective.

Figure 9: Comparison of constant versus saliency-guided adaptive pooling in WD, confirming similar aggregate perceptual metrics.

Implications and Future Directions

The theoretical implications are significant: disentangling the perceptual loss from geometric and model design lets future work treat algorithmic improvements and perceptual fidelity as orthogonal optimization axes. Practically, WD-based optimization is immediately deployable across a wide range of 3DGS pipelines, enhancing visual realism for human observers at the cost of longer training but without inflating model size.

Key open questions remain regarding principled artifact suppression, instability in low-data regimes, and adaptive pooling strategies. Adversarial losses may further increase perceptual realism, but at high computational cost. Integration of splat count/model size directly into perceptual optimization (fully end-to-end rate-distortion frameworks) is a promising trajectory.

Conclusion

The paper establishes that advanced perceptual loss functions, particularly regularized Wasserstein Distortion, substantially improve 3DGS rendering quality in both objective metrics and human-perceived realism, without requiring architectural changes. WD-R outperforms prior edge- and gradient-based heuristics, allocates representational capacity efficiently, and generalizes across alternative frameworks and compression regimes. The findings argue for a shift toward principled, loss-centric optimization for perceptually faithful 3DGS, with broad implications for real-time scene rendering, storage, and transmission.