Does This Gradient Spark Joy?

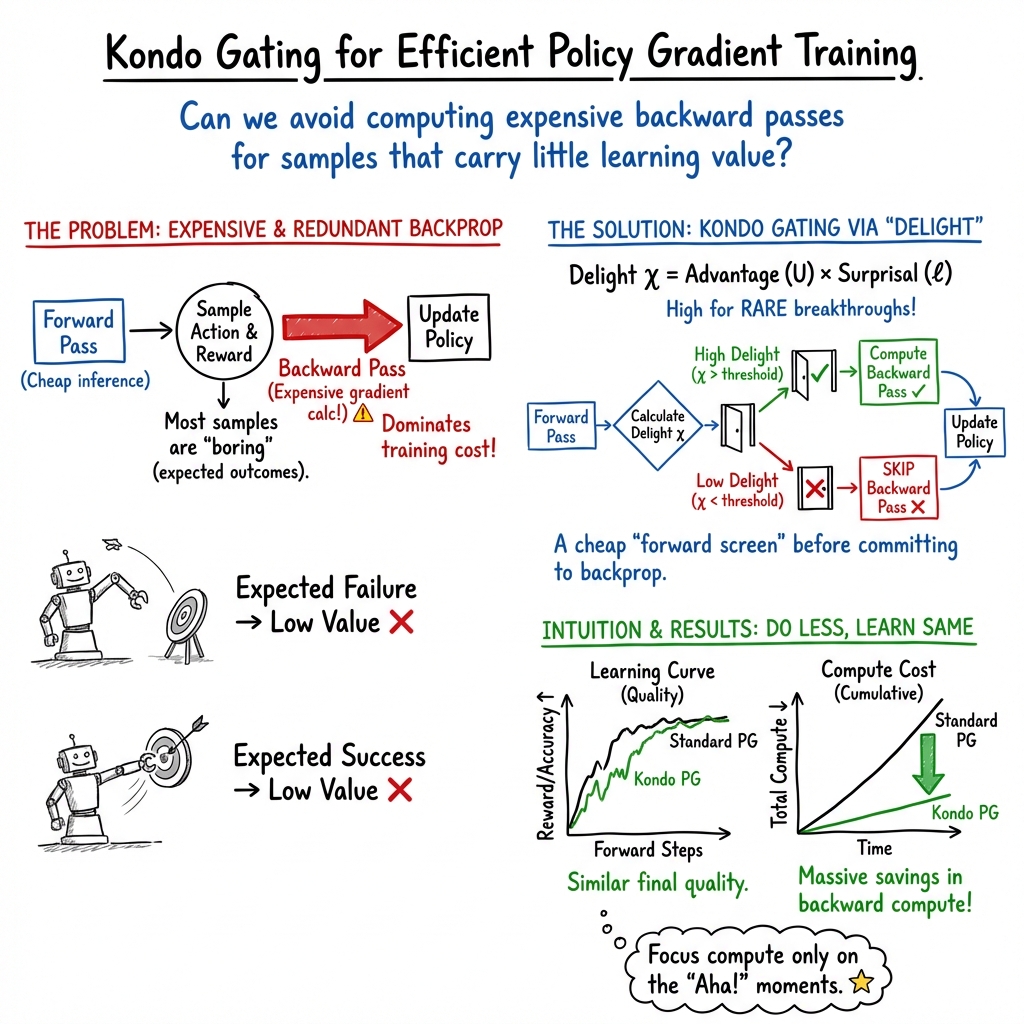

Abstract: Policy gradient computes a backward pass for every sample, even though the backward pass is expensive and most samples carry little learning value. The Delightful Policy Gradient (DG) provides a forward-pass signal of learning value: *delight*, the product of advantage and surprisal (negative log-probability). We introduce the *Kondo gate*, which compares delight against a compute price and pays for a backward pass only when the sample is worth it, thereby tracing a quality-cost Pareto frontier. In bandits, zero-price gating preserves useful gradient signal while removing perpendicular noise, and delight is a more reliable screening signal than additive combinations of value and surprise. On MNIST and transformer token reversal, the Kondo gate skips most backward passes while retaining nearly all of DG's learning quality, with gains that grow as problems get harder and backward passes become more expensive. Because the gate tolerates approximate delight, a cheap forward pass can screen samples before expensive backpropagation, suggesting a speculative-decoding-for-training paradigm.
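
The gating idea described in the abstract can be illustrated with a minimal sketch. It computes delight as advantage times surprisal and keeps only the samples whose delight exceeds a price. The hard-threshold rule, the function names `delight` and `kondo_gate`, and the toy numbers are assumptions made for illustration; the abstract does not specify the exact form of the gate.

```python
import numpy as np

def delight(advantage, log_prob):
    """Delight = advantage * surprisal, where surprisal = -log pi(a|s)."""
    surprisal = -np.asarray(log_prob, dtype=float)
    return np.asarray(advantage, dtype=float) * surprisal

def kondo_gate(advantage, log_prob, price=0.0):
    """Boolean mask of samples worth a backward pass.

    Assumption: a hard threshold (delight > price). The paper's gate
    may be defined differently, e.g. as a soft or stochastic rule.
    """
    return delight(advantage, log_prob) > price

# Toy batch (made-up values): advantages and action probabilities.
advantage = np.array([1.0, -0.5, 0.2, 2.0])
log_prob = np.log(np.array([0.5, 0.1, 0.9, 0.05]))

mask = kondo_gate(advantage, log_prob, price=0.5)
kept = np.flatnonzero(mask)  # indices that would receive a backward pass
print(mask, kept)
```

Under this thresholding assumption, a price of zero keeps exactly the samples with positive advantage, because surprisal is never negative. Raising the price additionally drops samples that are positive but unsurprising, such as the third sample in the toy batch.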
