The $\mathbf{Y}$-Combinator for LLMs: Solving Long-Context Rot with $λ$-Calculus

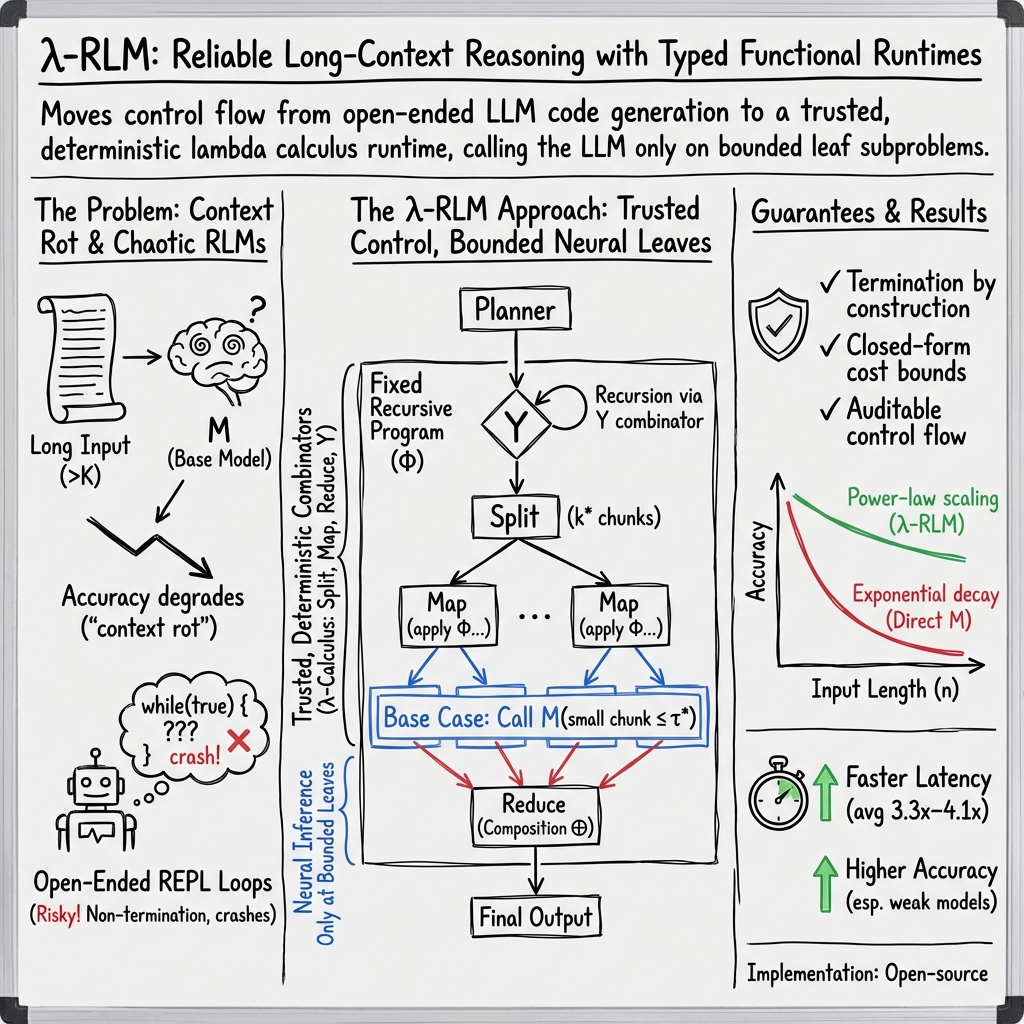

Abstract: LLMs are increasingly used as general-purpose reasoners, but long inputs remain bottlenecked by a fixed context window. Recursive LLMs (RLMs) address this by externalising the prompt and recursively solving subproblems. Yet existing RLMs depend on an open-ended read-eval-print loop (REPL) in which the model generates arbitrary control code, making execution difficult to verify, predict, and analyse. We introduce $λ$-RLM, a framework for long-context reasoning that replaces free-form recursive code generation with a typed functional runtime grounded in $λ$-calculus. It executes a compact library of pre-verified combinators and uses neural inference only on bounded leaf subproblems, turning recursive reasoning into a structured functional program with explicit control flow. We show that $λ$-RLM admits formal guarantees absent from standard RLMs, including termination, closed-form cost bounds, controlled accuracy scaling with recursion depth, and an optimal partition rule under a simple cost model. Empirically, across four long-context reasoning tasks and nine base models, $λ$-RLM outperforms standard RLM in 29 of 36 model-task comparisons, improves average accuracy by up to +21.9 points across model tiers, and reduces latency by up to 4.1×. These results show that typed symbolic control yields a more reliable and efficient foundation for long-context reasoning than open-ended recursive code generation. The complete implementation of $λ$-RLM is open-sourced for the community at: https://github.com/lambda-calculus-LLM/lambda-RLM.

Explain it Like I'm 14

Overview

This paper tackles a big challenge for LLMs: handling very long inputs like books, large codebases, or collections of documents. Normal LLMs can only read up to a fixed “context window” (a limit on how many tokens they can process at once). When the input is longer than that, accuracy often drops because the model “forgets” earlier parts. The authors introduce a new way—called lambda-RLM (λ‑RLM)—to break long problems into smaller pieces, solve the small parts, and combine the answers, all using a carefully controlled, reliable system based on ideas from math and computer science (lambda calculus and the Y‑combinator).

Key Questions

The paper asks:

- Can we make LLMs reason over long inputs without letting them write and run unpredictable code?

- Can we guarantee that the process stops, stays efficient, and remains accurate as inputs get longer?

- Does a structured, math-backed “controller” beat the usual approach where the LLM generates its own recursive code?

How They Did It

The Problem: Fixed Context Windows and “Context Rot”

Think of an LLM like a reader who can only see a few pages at a time. If the book is longer than the reader’s window, they miss or forget earlier pages. This causes “context rot,” where accuracy drops as inputs get longer.

The Usual Fix (and Its Issues): Recursive LLMs (RLMs)

RLMs keep the long prompt outside the model (like storing the book on a shelf) and let the model write code to peek at parts, split it up, and recursively call itself on chunks. This helps with long inputs, but it has problems:

- The model writes arbitrary code each step, which can crash or not finish.

- It’s hard to predict cost and time.

- It’s difficult to verify and audit what’s happening.

Their Solution: λ‑RLM with Typed, Trusted Combinators

Instead of letting the model write any code, λ‑RLM provides a small set of pre-checked building blocks (combinators) that control the process. The LLM is only used for the actual “thinking” on small, safe chunks that fit within its context window.

You can think of this like an assembly line:

- A planner decides how to slice the big job into manageable tasks.

- Trusted tools perform the slicing, filtering, and combining.

- The LLM only works at the final stations where each task is small enough to handle well.

The combinators (tools) include:

- Split: break a long input into smaller chunks.

- Map: apply the same process to each chunk.

- Filter: keep only the useful parts.

- Reduce/Concat/Cross: combine multiple results into one final answer.

- M: the only “neural” step—call the LLM on a small chunk.

A “planner” picks how many pieces to split into, the stopping size for chunks, and how to combine results. This makes the whole process predictable: you know upfront how deep the recursion goes and how many LLM calls will happen.
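The control flow described above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the names `split`, `solve`, and `leaf_model` are hypothetical, token counts are approximated by whitespace-separated words, and the neural step M is replaced by a stub so the example runs offline. The list comprehension plays the role of Map and `combine` plays the role of Reduce.

```python
from typing import Callable, List

def leaf_model(chunk: str) -> int:
    """Hypothetical stand-in for the neural call M (here: count words)."""
    return len(chunk.split())

def split(text: str, k: int) -> List[str]:
    """Split: cut the text into at most k contiguous, roughly equal slices."""
    words = text.split()
    size = -(-len(words) // k)  # ceiling division
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def solve(text: str, k: int, tau: int,
          leaf: Callable[[str], int],
          combine: Callable[[List[int]], int]) -> int:
    """Fixed recursive controller: only chunks of at most tau words reach the leaf."""
    if len(text.split()) <= tau:
        return leaf(text)                       # bounded leaf call
    parts = split(text, k)                      # Split
    results = [solve(p, k, tau, leaf, combine)  # Map
               for p in parts]
    return combine(results)                     # Reduce

# Usage: a 1000-word "document", binary splits, leaves of at most 100 words.
doc = " ".join(f"w{i}" for i in range(1000))
total = solve(doc, k=2, tau=100, leaf=leaf_model, combine=sum)
```

Because each split strictly shrinks the chunk (for `k >= 2` and `tau >= 1`), the recursion depth is fixed by the input length, `k`, and `tau` before any leaf call is made.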

A Simple Idea Behind the Scenes: Lambda Calculus and the Y‑Combinator

Lambda calculus is a minimalist way of describing computation using only functions. The Y‑combinator is a clever trick that lets a function refer to itself (do recursion) without needing a name, like tying a knot so the process loops in a controlled way. λ‑RLM uses this to build recursive behavior safely without the LLM inventing it on the fly.
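As a generic illustration of the idea (not taken from the paper's code), the sketch below builds recursion in Python without any function calling itself by name. Python evaluates arguments eagerly, so it uses the Z-combinator, the strict-evaluation variant of the Y-combinator; the names `Z`, `length_step`, and `length` are chosen here for illustration.

```python
# Z-combinator: like Y, but the self-application x(x) is wrapped in
# `lambda v: ...` so that eager evaluation does not loop forever.
Z = lambda f: (lambda x: f(lambda v: x(x)(v)))(lambda x: f(lambda v: x(x)(v)))

# A non-recursive "step": it receives the recursive function as `self`
# instead of referring to itself by name.
length_step = lambda self: lambda xs: 0 if not xs else 1 + self(xs[1:])

# Tying the knot: Z turns the step into a recursive function.
length = Z(length_step)
n_items = length([3, 1, 4, 1, 5])
print(n_items)  # prints 5
```

The step function contains no recursion of its own; all self-reference comes from the combinator, which is what lets a runtime supply recursion as a fixed, trusted component.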

Main Findings

- Formal guarantees:

  - It always stops (termination), as long as the splitter reduces chunk sizes.

  - You can calculate in advance how many times the LLM will be called and the total cost.

  - Accuracy scales in a controlled way with depth, avoiding the rapid decay you get with very long direct inputs.

  - Under a simple cost model, splitting into two chunks at each step is the cost-optimal strategy.

- Empirical results across 4 long-context tasks and 9 different LLMs (with context windows up to 128K tokens):

  - λ‑RLM beats standard RLM in 29 out of 36 comparisons.

  - Average accuracy improves by up to +21.9 points on weaker models and +18.6 points on medium models.

  - Latency (time to get an answer) drops by up to 4.1×.

  - On the toughest benchmark (pairwise reasoning), it gains +28.6 points with a 6.2× speedup.

- The full implementation is open-source: github.com/lambda-calculus-LLM/lambda-RLM
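To make the "calculate in advance" guarantee concrete, here is a small sketch that computes recursion depth and an upper bound on leaf calls. It assumes balanced k-way splits and a stopping threshold `tau` in tokens; the function name `plan_stats` and the example numbers are illustrative and are not taken from the paper.

```python
from typing import Tuple

def plan_stats(n: int, k: int, tau: int) -> Tuple[int, int]:
    """Return (depth, max_leaf_calls) for balanced k-way splitting of n tokens
    until every chunk has at most tau tokens."""
    if k < 2 or tau < 1:
        raise ValueError("need k >= 2 and tau >= 1")
    depth, size = 0, n
    while size > tau:
        size = -(-size // k)  # ceiling division: largest chunk after one more split
        depth += 1
    return depth, k ** depth

# Example: a 1M-token input, binary splits, leaves of at most 8K tokens.
depth, calls = plan_stats(n=1_000_000, k=2, tau=8_000)
print(depth, calls)  # prints 7 128
```

Both numbers depend only on `n`, `k`, and `tau`, so they are known before any LLM call is made.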

Why It’s Important

This approach separates “what the model thinks” from “how the process runs.” The LLM focuses on understanding and answering small parts, while a reliable, math-backed controller manages splitting, recursion, and combining. This means:

- More predictable costs and performance.

- Fewer runtime failures.

- Better accuracy on long tasks.

- A stronger foundation for building trustworthy AI systems that deal with large, messy inputs (like long documents, big codebases, or large evidence sets).

Takeaway

λ‑RLM shows that giving LLMs a disciplined, pre-verified toolkit to handle long inputs is better than letting them write arbitrary recursive code. By using a small set of trusted combinators and a planner grounded in lambda calculus (with the Y‑combinator for clean recursion), the system:

- Guarantees stopping and predictable compute,

- Improves accuracy and speed,

- And scales to long inputs more reliably.

In short, smart, structured control beats open-ended code generation for long-context reasoning.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of the main uncertainties, omissions, and open problems that the paper leaves unresolved, phrased to guide concrete follow-up work:

- Typing and formalization gap: The method is described as a “typed functional runtime,” but all core definitions are given in untyped λ-calculus; no type system, typing rules, or type-safety (preservation/progress) proofs are provided for the combinator library or for REPL execution.

- Composition semantics and guarantees: The task-specific composition operator `⊕` is treated as a black box with an assumed correctness probability `A_⊕`. The paper does not formalize `⊕`’s semantics per task, how to compute/estimate `A_⊕`, or verify that `⊕` preserves correctness under realistic, noisy leaf outputs.

- Accuracy model realism: The leaf accuracy model `A(n) = A₀·ρ^{n/K}` and independence assumptions among leaf calls are unvalidated. The paper does not test whether leaf errors are correlated across chunks, how cross-chunk dependencies affect error propagation, or how to estimate/learn `A(·)` and `A_⊕` from data.

- Planner optimality under realistic costs: The optimal split result (`k* = 2`) assumes linear per-token pricing and constant per-level composition cost. It ignores batching, parallelism, caching, API-tier non-linearities, and variance in output lengths. There is no sensitivity analysis or alternative optimization under realistic cost models and latency constraints.

- Accuracy–cost trade-off optimization: The planner uses an accuracy target `α` and simple bounds but does not optimize `k*`, `τ*`, and recursion depth jointly with empirically calibrated accuracy/cost models. Procedures to learn or adapt these parameters online are not provided.

- Non-decomposable and cross-dependency tasks: The framework assumes size-decreasing splits and local leaf solvability. It does not address tasks requiring global constraints, long-range cross-references, or semantic coherence across chunks (e.g., codebases with cross-file dependencies), nor how to integrate overlap, stitching, or cross-chunk consistency checks.

- Content-aware splitting: `Split(P, k*)` produces contiguous slices without semantic awareness. The impact of boundary misalignment (e.g., splitting mid-function, section, or sentence) is not studied, and no methods are proposed to learn or detect better split points (e.g., AST-aware, section-aware, or learned segmenters).

- Pruning strategy specification: `PruneIfNeeded` is referenced but unspecified: no criteria, correctness guarantees, or safety checks for recall-preserving pruning are given, and there is no analysis of how pruning affects accuracy or latency.

- Use of the LLM beyond leaves: The method claims to invoke the LLM only at leaf subproblems but uses an LLM call for task detection (menu selection). The paper does not quantify or mitigate the impact of this additional non-leaf LLM dependency.

- Combinator library sufficiency and extension: The minimal library might be insufficient for many domains. The paper provides no principled procedure for adding new combinators, for verifying them, or for maintaining termination/cost guarantees when extending the library.

- Verification of “pre-verified” combinators: There is no description of the formal verification methodology (e.g., specifications, property tests, proof artifacts) used to certify that combinators are total, deterministic, and safe in the REPL environment.

- Robustness and safety: The approach does not address prompt-injection resilience, adversarial inputs, or malicious content in the external environment. There are no guardrails for LLM outputs at leaves (e.g., schema validation, type-checking, or robust parsing) or strategies for error recovery and retries.

- Determinism vs. stochastic LLM outputs: The runtime is deterministic except for leaf LLM calls, which are stochastic. The paper does not discuss how to reconcile determinism with non-deterministic leaves (e.g., via majority vote, self-consistency, or confidence filtering) or how that affects theoretical guarantees.

- Parallelism and scheduling: The cost and latency analyses do not model parallel execution of `Map` calls or potential bottlenecks (I/O, memory). There is no scheduling policy or empirical exploration of how concurrency affects throughput, cost, and variance.

- Symbolic operator resource costs: Some symbolic operations (e.g., `Cross` producing O(n²) pairs) may be memory- or time-intensive even if neural costs are zero. The paper does not analyze or bound the resource usage of symbolic steps on very large inputs.

- Leaf formatting and window compliance: `LeafPrompt(P, π)` is a black box. There is no specification of how to ensure that system/user instructions plus leaf content fit within `K`, nor a method to account for templating overhead and tokenizer boundary effects.

- Failure handling and reliability: The runtime does not specify what happens on API failures, timeouts, or malformed LLM outputs. There are no retry policies, fallback strategies, or guarantees that execution remains bounded and auditable under partial failures.

- Empirical evaluation completeness and fairness: Experimental details are incomplete (the results section is truncated; hardware, hyperparameters, concurrency, and caching policies are not fully described). There are no ablations isolating the effects of individual components (e.g., planner, `k*`, `τ*`, library size), nor checks that baselines have optimized scaffolds and equal infrastructure.

- Benchmark coverage and generalization: The benchmarks listed are limited and partially described; widely used long-context suites (e.g., LongBench, LV-Eval, Needle-in-a-Haystack variants beyond S-NIAH) are not reported. Generalization to unseen task types, codebases with complex cross-references, or multilingual settings is not evaluated.

- Expressivity vs. guarantees: While the library constrains control flow for guarantees, the paper does not characterize the expressivity frontier—what classes of algorithms/tasks can be represented with the current combinators and fixed-point scheme, and how expressivity scales with added operators without losing termination/cost bounds.

- Learning to plan: Task-to-plan (`π`) mapping is hand-coded/looked up. There is no mechanism to learn or adapt plans, compositions, or thresholds from data, nor an analysis of when learned meta-controllers outperform fixed plans.

- Integration with retrieval and tools: The framework assumes prompt-as-environment but does not explore integration with retrieval-augmented generation, tool calls, or external knowledge bases, which could change decomposability and cost/accuracy trade-offs.

- Formal correctness beyond termination and cost: Theoretical results cover termination and cost bounds, but there is no formal notion of partial/total correctness with respect to task specifications or conditions ensuring end-to-end semantic correctness.

- Presentation and reproducibility issues: Several equations and tables appear malformed or incomplete (e.g., the cost function definition, typos/encoding issues, inconsistent use of `$$` for the method name, missing appendix content referenced for proofs). These hinder reproducibility and clarity and should be rectified with a complete, consistent artifact (code, data, prompts, and proof appendices).
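The leaf accuracy model A(n) = A₀·ρ^{n/K} mentioned in the list above can be evaluated directly to see why short leaf prompts matter. The sketch below is only an illustration: the parameter values for `A0`, `rho`, and `K` are made up for the example and are not reported in the paper, and the function name `accuracy` is hypothetical.

```python
def accuracy(n: int, K: int, A0: float, rho: float) -> float:
    """Exponential accuracy-decay model: A(n) = A0 * rho ** (n / K)."""
    return A0 * rho ** (n / K)

# Illustrative (assumed) parameters: 32K-token window, 0.95 peak accuracy,
# accuracy halving for every additional window-length of input.
K, A0, rho = 32_000, 0.95, 0.5

direct = accuracy(128_000, K, A0, rho)  # one call on the full 128K-token prompt
leaf = accuracy(8_000, K, A0, rho)      # one call on an 8K-token leaf chunk
print(f"direct={direct:.3f}  leaf={leaf:.3f}")
```

Under this model each leaf call is far more reliable than a single call on the full prompt. How leaf accuracies combine into end-to-end accuracy depends on the composition operator and on the independence assumptions that the list above flags as unvalidated.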

Practical Applications

Overview

Based on the paper’s findings—typed, auditable control via a lambda-calculus runtime; pre-verified combinators (Split, Map, Filter, Reduce, Concat, Cross); bounded neural calls at leaf subproblems; formal guarantees on termination, cost, and accuracy; and empirical gains over open-ended RLMs—below are concrete, real-world applications. Each item identifies sectors, actionable workflows or product ideas, and the assumptions/dependencies that affect feasibility.

Immediate Applications

These can be deployed now using the open-sourced implementation and current LLMs.

- Healthcare — Long EHR summarization and medication reconciliation

- What: Summarize multi-year patient records; reconcile problem lists and medications; flag contradictions using pairwise checks (Cross + Filter).

- Workflow: Split records by encounter/time; Map(M) to summarize segments; Reduce(M or Merge) to synthesize; Cross to detect conflicts between meds/allergies or notes.

- Tools/products: “Clinical Long-Record Summarizer” microservice; EHR-integrated assistant with audit logs and predictable LLM call budgets.

- Dependencies/assumptions: HIPAA/PHI safeguards; domain-tuned leaf model for clinical text; decomposability of tasks; reliable composition prompts; clinician-in-the-loop review.

- Finance — 10‑K/10‑Q analysis and cross-year risk comparison

- What: Extract KPIs, risks, and accounting changes from long filings; compare across years/entities (pairwise).

- Workflow: Split by sections; Map(M) to extract structured fields; Reduce(Merge) to aggregate; Cross for year-over-year comparisons; FilterBest for targeted queries.

- Tools/products: “CFO Filing Analyzer”; analyst copilot with formal cost bounds and execution trace.

- Dependencies/assumptions: Accurate extraction prompts; availability of filings; domain-specific evaluation; adherence to compliance policies.

- Legal & E‑discovery — Evidence triage and contradiction/citation mapping

- What: Triage large corpora, extract evidence, map contradictions and references across documents (O(n²) symbolic pairing with few neural calls).

- Workflow: Split documents; Map(M) to extract claims/citations; Parse + Cross to generate candidate pairs; Filter to prune; minimal leaf classification calls on pairs.

- Tools/products: “E‑Discovery Cross‑Ref Engine”; court-compliant audit trails showing deterministic control flow.

- Dependencies/assumptions: High-precision extraction at leaves; reproducible parsing pipelines; chain-of-custody and audit requirements.

- Software Engineering — Monorepo code Q&A and impact analysis

- What: Answer repo-wide questions, identify impacted modules, and summarize PRs across multi-file codebases.

- Workflow: Split by directories/files; Map(M) to summarize components; Reduce(Merge/Concat) for repo-level synthesis; optional Cross for dependency reasoning.

- Tools/products: IDE plugin; CI pipeline step “Long-Repo Analyzer” with fixed recursion depth and predictable compute.

- Dependencies/assumptions: Code-aware leaf prompts; repository indexing; language-specific heuristics for chunking; secure sandboxed REPL.

- Customer Support — Long knowledge-base search and synthesis

- What: Answer tickets using large KBs without truncation; ensure consistent, auditable retrieval with controlled compute.

- Workflow: Split KB; Map(Peek) + Filter to shortlist; Map(M) to generate answers; Reduce(Best/M) to synthesize final responses.

- Tools/products: “KB Answerer” for support platforms (e.g., Zendesk/ServiceNow) with latency improvements and cost predictability.

- Dependencies/assumptions: High-quality metadata for filtering; reliable Best/Filter operators; tuned prompts for answer synthesis.

- Education — Automated grading and feedback on long essays and reports

- What: Grade and summarize feedback for long student submissions with section-level analysis and overall synthesis.

- Workflow: Split by rubric/sections; Map(M) to evaluate subsections; Reduce(Merge) to compile rubric scores and feedback; final M synthesis for per-student summary.

- Tools/products: LMS-integrated “Long-Submission Grader” showing per-section evidence and bounded model calls.

- Dependencies/assumptions: Clear rubrics; calibrated grading prompts; fairness and bias checks.

- Public Policy/Government — Summarization of public comments and rulemaking documents

- What: Aggregate themes, deduplicate points, and produce structured summaries from large comment sets and regulatory texts.

- Workflow: Split by document batches; Map(M) for local summaries; Reduce(Merge) for themes; Cross for duplicate/theme matching.

- Tools/products: “Public Comment Synthesizer” with audit logs and termination guarantees for procurement/legal review.

- Dependencies/assumptions: Transparent provenance; bias mitigation; domain evaluation criteria.

- Data Integration — Entity resolution and product matching

- What: Match similar products/entities across catalogs using symbolic O(n²) candidate generation with minimal neural calls.

- Workflow: Cross for candidate pairs; Filter to prune; leaf M classifier on short pair representations; Reduce(Merge) to finalize links.

- Tools/products: “Catalog Matcher” for retail/marketplaces.

- Dependencies/assumptions: Good blocking strategies to limit pairs; accurate pairwise leaf classification.

- IT/Ops — Log and incident report summarization at scale

- What: Summarize long, multi-file incident logs; produce root-cause narratives.

- Workflow: Split by time/source; Map(M) summaries; Reduce(Merge/Concat) across services; optional M synthesis for executive reports.

- Tools/products: “Incident Summarizer” for observability stacks (e.g., Datadog, Splunk).

- Dependencies/assumptions: Robust log parsing; domain prompts; privacy controls.

- Platform/MLOps — LLM execution firewall and observability

- What: Middleware that replaces open-ended REPL with typed combinators to reduce error/attack surfaces and provide predictable budgets.

- Workflow: RegisterLibrary + BuildExecutor pipeline; expose dashboards with depth, call counts, and cost bounds; enforce k*=2 default.

- Tools/products: “Typed Runtime Gateway” for enterprise LLM platforms.

- Dependencies/assumptions: Integration with existing LLM services; policy rules for max depth/cost; operator sandboxing.
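Several workflows above follow the same pattern: generate candidate pairs symbolically (Cross), prune them cheaply (Filter), and spend neural calls only on the survivors. The sketch below illustrates that pattern for product matching. The blocking rule, the catalogs, and the `leaf_match` stub (a token-overlap heuristic standing in for the leaf call M) are all assumptions made for this example.

```python
from itertools import product
from typing import List, Tuple

Pair = Tuple[str, str]

def cross(xs: List[str], ys: List[str]) -> List[Pair]:
    """Cross: all ordered pairs (Cartesian product); purely symbolic."""
    return list(product(xs, ys))

def same_block(pair: Pair) -> bool:
    """Hypothetical blocking rule: keep pairs that share their first token."""
    a, b = pair
    return a.split()[0].lower() == b.split()[0].lower()

def leaf_match(pair: Pair) -> bool:
    """Stub for the neural leaf call M: Jaccard token overlap of at least 0.5."""
    a, b = (set(s.lower().split()) for s in pair)
    return len(a & b) / len(a | b) >= 0.5

catalog_a = ["Acme Widget 200", "Bolt Max Drill"]
catalog_b = ["acme widget 200 pro", "Bolt Mini Saw", "Zen Lamp"]

all_pairs = cross(catalog_a, catalog_b)                # 6 pairs, no model calls
candidates = [p for p in all_pairs if same_block(p)]   # Filter: 2 pairs survive
matches = [p for p in candidates if leaf_match(p)]     # leaf calls on 2 pairs only
print(matches)  # prints [('Acme Widget 200', 'acme widget 200 pro')]
```

The quadratic step costs no model calls; only the pairs that survive the filter reach the leaf, which is why a good blocking rule is listed as a dependency above.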

Long-Term Applications

These require further research, scaling, domain extensions, or ecosystem maturation.

- Cross‑modal long-context reasoning (text + code + tables + images/video)

- What: Extend Split/Map/Reduce to multi-modal chunks; bounded leaf models per modality; unified composition operators.

- Potential products: Multi-modal report analyzers (e.g., clinical notes + imaging captions), video compliance audits.

- Dependencies/assumptions: Modal-specific leaf oracles; typed operators for non-text data; eval standards across modalities.

- Jointly trained leaf oracles optimized for the runtime

- What: Train/fine-tune LMs to perform better on short, leaf prompts and composition-aware outputs; RL to align with decomposition plans.

- Potential products: “Leaf-Optimized LMs” offered with the runtime as a package.

- Dependencies/assumptions: Training data for leaf tasks; stability of operator interfaces; measurable alignment gains.

- Retrieval-augmented lambda-RLM (RAG + typed control)

- What: Use symbolic Filter and preview-based pruning guided by vector databases before any leaf calls; tight accuracy/cost control.

- Potential products: Scalable enterprise RAG with formalized orchestration and termination guarantees.

- Dependencies/assumptions: High-quality embeddings; retrieval evaluation; typed operators for retrieval hooks.

- Audited and certified LLM systems for regulated sectors

- What: Standardize proofs of termination, cost bounds, and composition reliability for certification (e.g., in healthcare/finance).

- Potential products: “Proof-carrying LLM Pipelines” with compliance attestations.

- Dependencies/assumptions: Regulator-accepted standards; additional formal methods for new combinators; logging/provenance frameworks.

- Dynamic planners with SLA/cost-aware optimization

- What: Online selection of (k*, τ*, plans) under budget, latency, and token pricing variability; multi-objective optimization.

- Potential products: “SLA-Aware Planner” for enterprise LLM gateways.

- Dependencies/assumptions: Accurate cost models; stable performance predictors; feedback from real workloads.

- Knowledge graph induction from large corpora

- What: Use Map/Parse/Cross/Reduce to extract entities/relations and reconcile across documents at scale.

- Potential products: Auto-generated, auditable knowledge bases for enterprises and research.

- Dependencies/assumptions: Reliable IE at leaf level; noise-tolerant merge operators; truth maintenance/conflict handling.

- Real-time assistants with unbounded session memory

- What: Maintain unlimited conversation history as an external prompt; incremental Split/Filter/Map with bounded leaf calls.

- Potential products: Long-horizon personal or enterprise assistants with controllable memory costs.

- Dependencies/assumptions: Efficient incremental planning; session security; memory consistency policies.

- Embodied/robotic agents with typed orchestration

- What: Extend typed combinators to state/action loops; guarantee termination and bounded planning steps for long-horizon tasks.

- Potential products: Reliable planning layers that delegate only leaf perception/actuation to learned modules.

- Dependencies/assumptions: Typed interfaces for action/state; real-time constraints; safety certification.

- Hardware/software co-design for symbolic + neural execution

- What: Accelerate symbolic combinators on CPUs/FPGAs while batching leaf LLM calls on GPUs; exploit predictable call patterns.

- Potential products: Edge appliances for long-context processing with small on-device LMs.

- Dependencies/assumptions: Systems engineering; scheduler design; stable batching interfaces.

- Domain-specific combinator libraries (legal, biomedical, code, math)

- What: Add pre-verified operators for citations, evidence chains, dependency graphs, theorem pipelines.

- Potential products: Packaged “LegalOps” or “BioOps” libraries with stronger guarantees and task coverage.

- Dependencies/assumptions: Formalization of new operators; proofs of termination/cost; domain testing.

- Energy and infrastructure analysis

- What: Analyze long grid reports, incident logs, and regulatory filings; produce cross-period comparisons and risk syntheses.

- Potential products: “GridOps Report Analyzer.”

- Dependencies/assumptions: Domain-tuned leaf prompts; access to proprietary datasets; validation against SME benchmarks.

Notes on Feasibility and Dependencies

- Task decomposability: Success depends on whether tasks can be partitioned so leaf prompts fit within model window K while preserving enough context to answer sub-queries.

- Leaf model quality: End-to-end accuracy is bounded by leaf accuracy and composition reliability; domain-tuned or specialized leaf models often required.

- Composition operators: Deterministic operators must be chosen/constructed to preserve correctness for the task; for synthesis steps, a final leaf M may be needed.

- Planning parameters: Default k*=2 is cost-minimizing under simple assumptions; τ* must be ≤ K; planning may require calibration for domain performance.

- Security and sandboxing: The REPL must be sandboxed; the restricted combinator library reduces but does not eliminate prompt injection risks in prompts to M.

- Auditability and cost: Predictable call counts and depth enable cost forecasting and SLAs; ensure logging of operator traces for compliance.

- Integration: Adapters to existing LLM stacks (vLLM, OpenAI-compatible APIs) and data systems (vector DBs, EHRs, code hosts) are necessary for productionization.
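The constraint that τ* must not exceed K can be checked before execution. The following sketch assumes that the prompt template and a reserved output budget both count against the window; whether output tokens share the window depends on the model and serving stack, so treat that as an assumption. The name `max_leaf_tokens` and the numbers are illustrative.

```python
def max_leaf_tokens(K: int, template_tokens: int, reserved_output: int) -> int:
    """Largest chunk size (in tokens) that still fits in a window of K tokens
    after subtracting prompt-template overhead and a reserved output budget."""
    budget = K - template_tokens - reserved_output
    if budget <= 0:
        raise ValueError("template and output budget leave no room for content")
    return budget

# Example: 32K window, 600 tokens of instructions, 1,024 tokens reserved for output.
tau = max_leaf_tokens(K=32_000, template_tokens=600, reserved_output=1_024)
print(tau)  # prints 30376
```

Choosing the stopping threshold at or below this value keeps every leaf prompt inside the window, at the cost of slightly more leaf calls.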

Glossary

- Accuracy decay: A function describing how a model’s accuracy decreases as prompt length increases, often modeled exponentially with respect to the context window. "The accuracy of M on a prompt of length n:"

- Beta-reduction: The core evaluation rule in lambda calculus that applies a function to an argument by substituting the argument for the bound variable in the function body. "In the untyped lambda calculus, the central computational rule is β-reduction, which formalises what it means to apply a function to an argument."

- Bounded oracle: A component (here, the base LLM) that is only invoked on inputs within a guaranteed size limit and used to solve leaf subproblems. "The base LLM is used only as a bounded oracle on small leaf subproblems."

- Cartesian product: The set of all ordered pairs from two lists, used here to form all pairwise combinations. "Cartesian product of two lists"

- Composition operator: A deterministic function that combines partial results from subproblems into a single output. "A composition operator is a deterministic function that combines partial results."

- Context rot: The phenomenon where model performance degrades as inputs approach or exceed the effective context capacity. "the onset of "context rot", i.e., the exponential decay in accuracy"

- Context window: The maximum number of tokens a model (e.g., a Transformer) can reliably process at once. "a Transformer consumes a fixed-length context window"

- Cost function: A model of the compute or monetary cost to invoke the base model as a function of input and output token lengths. "The cost of invoking M on n tokens:"

- Finite State Machines (FSMs): Computational models with a finite set of states, noted here as inadequate for unbounded recursion depths. "While Finite State Machines (FSMs) are insufficient for the arbitrary recursion depths required in complex document decomposition,"

- Fixed-point combinator: A higher-order function that returns a fixed point of another function, enabling recursion without named self-reference. "A fixed-point combinator is a higher-order term fix satisfying fix f = f (fix f) for all f."

- Inference-time scaling: A strategy to improve capability by allocating more computation at inference (e.g., recursive decomposition) rather than increasing model size or retraining. "reframes long-context reasoning as inference-time scaling: rather than increasing model parameters or training new architectures, we can scale computation at inference by decomposing problems into smaller subproblems and composing their solutions"

- Lambda calculus: A minimal formal system for computation using function abstraction and application. "The lambda calculus is a minimal formal language for describing computation using only functions and functional operations."

- Neural oracle: A role in the runtime where the LLM is called as a black-box solver for leaf subprompts. "Neural oracle: invoke the base model on a sub-prompt"

- Operational semantics: A formal description of how programs execute step-by-step, used to reason about properties like termination and cost. "We formalise an operational semantics and prove termination and cost bounds under standard size-decreasing decomposition assumptions;"

- Planning Domain Definition Languages (PDDL): Formal languages for specifying planning problems, oriented toward state-space search rather than data transformations. "and Planning Domain Definition Languages (PDDL) are optimised for state-space search rather than data transformation,"

- Power-law decay: A rate of decline (e.g., in accuracy) that follows a polynomial relationship with input size, slower than exponential decay. "reveals power-law decay, strictly slower than ."

- Read–Eval–Print Loop (REPL): An interactive programming environment that reads code, evaluates it, and prints the result in a loop. "Recursive LLMs (RLMs) depend on an open-ended read–eval–print loop (REPL) in which the model generates arbitrary control code"

- Typed functional runtime: A runtime system enforcing type constraints that executes programs composed of functional combinators, providing predictable control flow. "a typed functional runtime grounded in λ-calculus."

- vLLM: A high-throughput serving system for LLMs used to run experiments in the paper. "All models are open-weight and served via vLLM."

- Y-combinator: A specific fixed-point combinator in untyped lambda calculus that enables anonymous recursion. "The Y-combinator enables recursion in the untyped lambda calculus: "
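For readers who want to see the glossary's two central rules written out, the standard textbook forms are given below. These are the usual definitions from untyped lambda calculus, stated here as background rather than quoted from the paper; $t$, $s$, and $g$ are arbitrary terms.

$$
\begin{aligned}
(\lambda x.\, t)\; s \;&\to_\beta\; t[x := s] \\
Y \;&=\; \lambda f.\,(\lambda x.\, f\,(x\,x))\,(\lambda x.\, f\,(x\,x)) \\
Y\,g \;&\to_\beta\; (\lambda x.\, g\,(x\,x))\,(\lambda x.\, g\,(x\,x)) \\
&\to_\beta\; g\,\big((\lambda x.\, g\,(x\,x))\,(\lambda x.\, g\,(x\,x))\big) \;=_\beta\; g\,(Y\,g)
\end{aligned}
$$

The last line is the fixed-point property: applying $Y$ to $g$ yields a term that behaves like $g$ applied to itself again, which is how recursion arises without any named self-reference.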
