How Out-of-Equilibrium Phase Transitions can Seed Pattern Formation in Trained Diffusion Models

Abstract: In this work, we propose a theoretical framework that interprets the generation process in trained diffusion models as an instance of out-of-equilibrium phase transitions. We argue that, rather than evolving smoothly from noise to data, reverse diffusion passes through a critical regime in which small spatial fluctuations are amplified and seed the emergence of large-scale structure. Our central insight is that architectural constraints, such as locality, sparsity, and translation equivariance, transform memorization-driven instabilities into collective spatial modes, enabling the formation of coherent patterns beyond the training data. Using analytically tractable patch score models, we show how classical symmetry-breaking bifurcations generalize into spatially extended critical phenomena described by softening Fourier modes and growing correlation lengths. We further connect these dynamics to effective field theories of the Ginzburg-Landau type and to mechanisms of pattern formation in non-equilibrium physics. Empirical results on trained convolutional diffusion models corroborate the theory, revealing signatures of criticality including mode softening and rapid growth of spatial correlations. Finally, we demonstrate that this critical regime has practical relevance: targeted perturbations, such as classifier-free guidance pulses applied at the estimated critical time, significantly improve generation control. Together, these findings position non-equilibrium critical phenomena as a unifying principle for understanding, and potentially improving, the behavior of modern diffusion models.

Explain it Like I'm 14

Simple explanation of the paper: How sudden “phase changes” help diffusion models make patterns

Overview: What is this paper about?

This paper tries to answer a big question about diffusion models (the AI systems that turn noise into pictures): how do they suddenly go from random fuzz to clear, structured images? The authors argue that, during generation, these models pass through a special “tipping point” where tiny bumps in the noise get amplified into large, meaningful patterns—much like water suddenly freezing into ice. They show this using ideas from physics (phase transitions and pattern formation), simple toy models, and real trained networks. They also show a practical trick: if you nudge the model at just the right moment, you can control what it makes more effectively.

The main questions in plain terms

To guide their study, the authors focus on a few simple questions:

- When a diffusion model creates an image, does it build structure slowly, or is there a brief, special moment when big patterns appear?

- How do the design choices in neural networks (like looking at small neighborhoods and using the same filters everywhere) change what kind of patterns can appear?

- Can we spot warning signs of this special moment (the “critical window”) while the model is sampling?

- If we can find that window, can we push the model then to steer the final result more strongly?

How they approached it (with everyday analogies)

The paper uses both theory and experiments, and translates complicated math into simple, physical ideas:

- Diffusion models as “unfreezing movies”: A diffusion model starts with pure noise and repeatedly removes noise to reveal an image. The authors say this cleaning process doesn’t just get steadily clearer; instead it hits a point where small wiggles in the noise suddenly grow into large shapes—like frost patterns racing across a window.

- Phase transitions and “criticality”: A phase transition is a sudden, big change, like water turning to ice. Right at the freezing point, tiny disturbances can spread far and fast. In the model, at this critical moment, gentle “wave-like” patterns become very easy to excite and grow. The authors call these easy-to-excite patterns “soft modes.”

- Why network design matters (locality and shared filters): Convolutional networks look at small patches and reuse the same filters across the image. That limits how the model can “decide” what to generate. Instead of choosing a single memorized training example, the model tends to form broad, repeating patterns across space—like waves on a pond—because those are exactly the kinds of changes the network can easily represent.

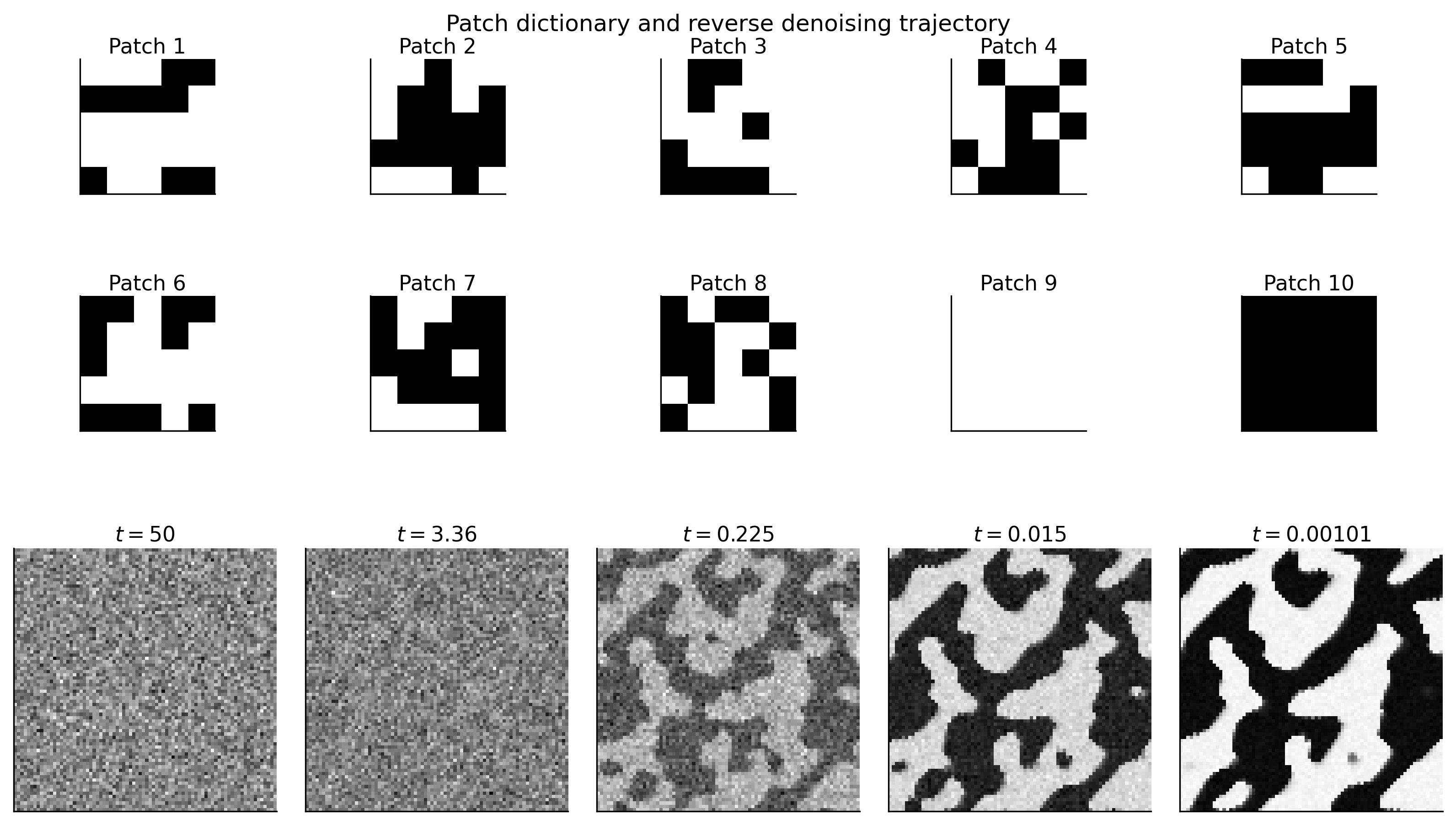

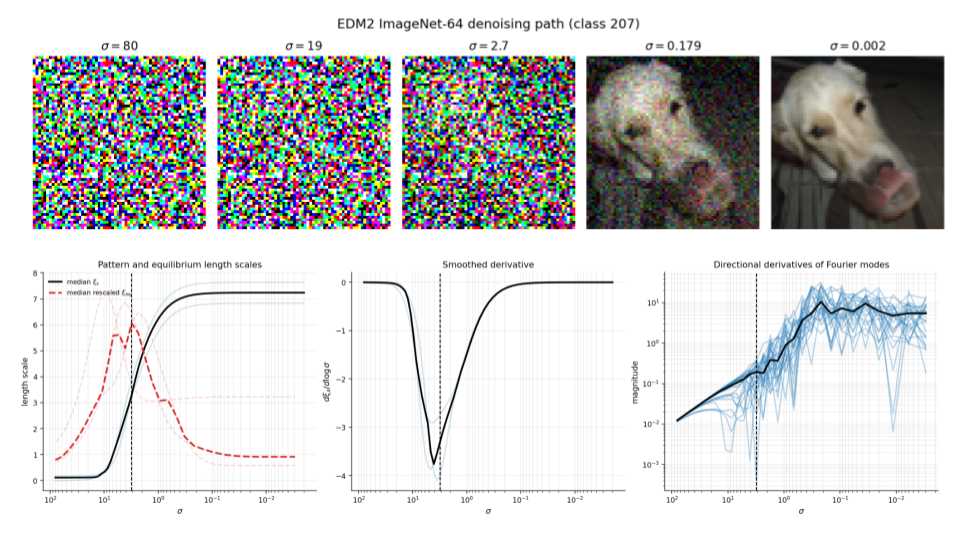

- A simple “patch model” you can solve by hand: To understand the effect of looking at small neighborhoods, the authors study a toy model that only uses information from nearby pixels (“patches”). This model is simple enough to analyze mathematically, and it shows the same kind of sudden pattern growth that the authors see in real networks.

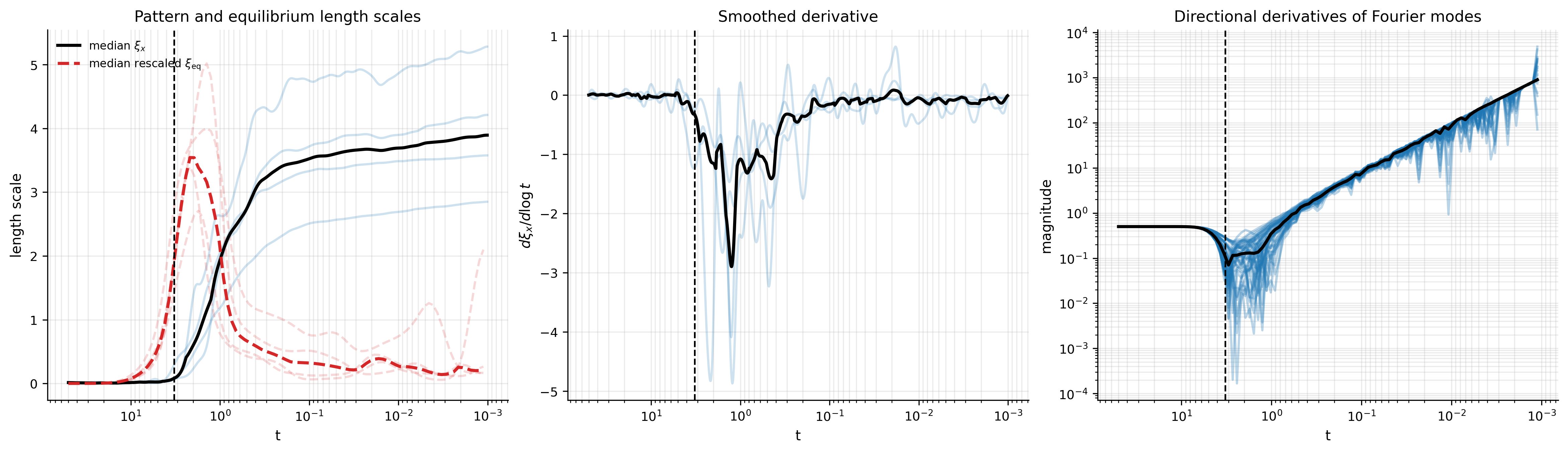

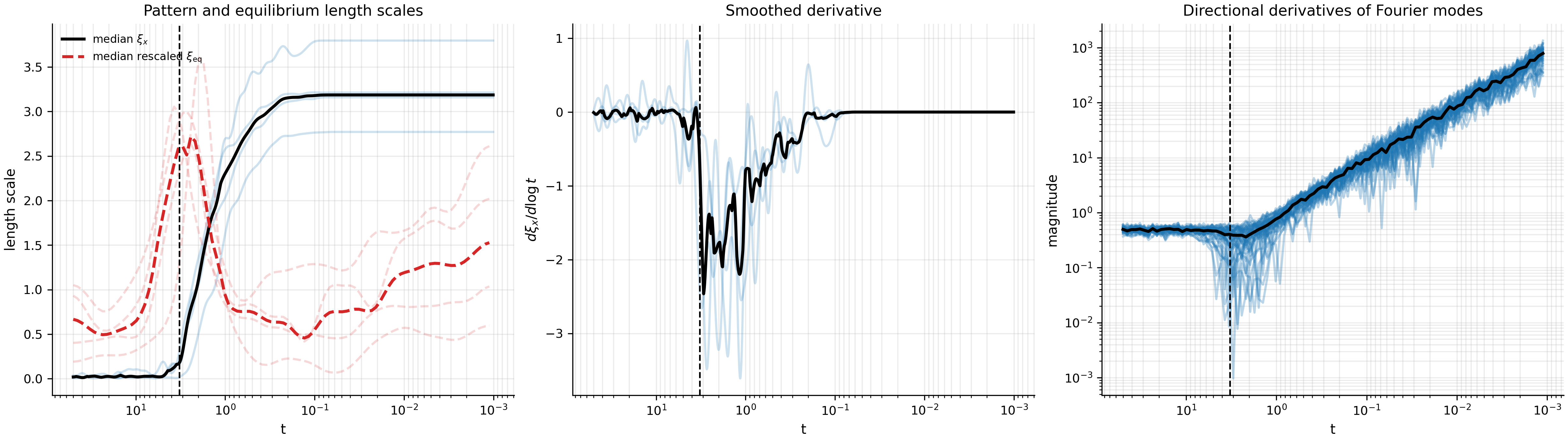

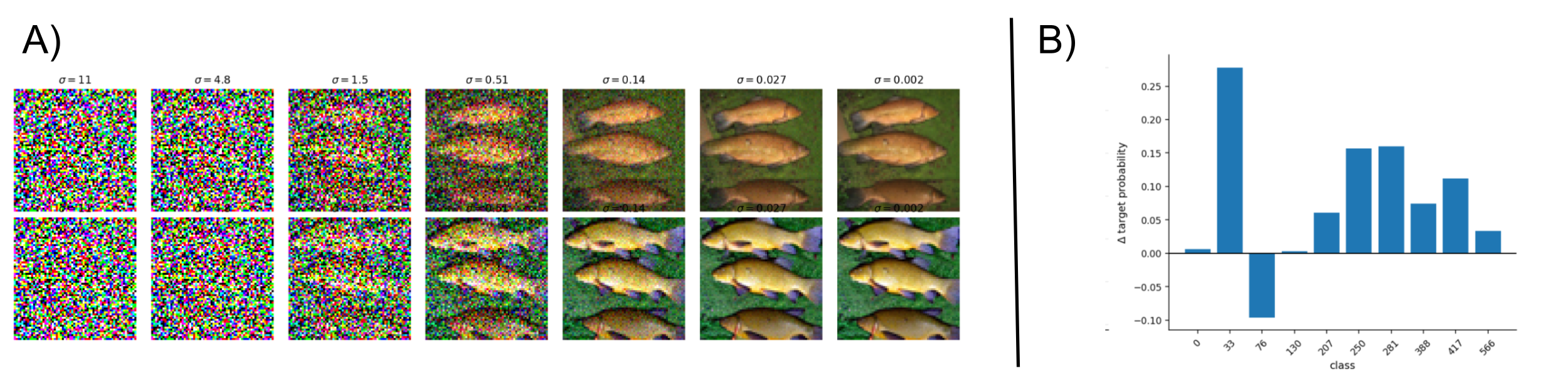

- Measuring the moment of change: They watch how far similarities spread across the image—this is called the correlation length (think: how big are the regions that “agree” in pattern). They also “poke” the image with gentle wave patterns (like pressing on a drumhead at different frequencies) and see how strongly the model pushes back. If the push-back becomes weak for big, smooth waves, those waves are “softening”—a sign the system is near its critical moment.
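The “poking” measurement in the last item can be sketched on a toy system. The example below is a minimal illustration, not the authors' code: `toy_drift` is a hypothetical linear, local, translation-equivariant drift on a periodic 1D lattice (the parameters `r` and `c` are assumptions made for this sketch), and `mode_response` estimates how strongly the drift pushes back along Fourier mode `k` using a central finite difference.

```python
import math

def toy_drift(x, r, c):
    """Hypothetical drift: local restoring force (-r * x) plus nearest-neighbor coupling."""
    n = len(x)
    # x[i - 1] wraps around at i = 0, giving periodic boundaries.
    return [-r * x[i] + c * (x[(i + 1) % n] - 2 * x[i] + x[i - 1]) for i in range(n)]

def mode_response(drift, x, k, eps=1e-4):
    """Directional derivative of `drift` along Fourier mode k, projected onto that mode."""
    n = len(x)
    v = [math.cos(2 * math.pi * k * i / n) for i in range(n)]
    plus = drift([xi + eps * vi for xi, vi in zip(x, v)])
    minus = drift([xi - eps * vi for xi, vi in zip(x, v)])
    jv = [(p - m) / (2 * eps) for p, m in zip(plus, minus)]  # approximates J @ v
    return sum(a * b for a, b in zip(v, jv)) / sum(a * a for a in v)

n = 32
x0 = [0.0] * n
for r in (1.0, 0.1, 0.01):
    responses = [mode_response(lambda x: toy_drift(x, r, 1.0), x0, k) for k in range(4)]
    print(r, [round(v, 3) for v in responses])
```

More negative values mean a stronger push-back. As `r` shrinks toward zero, the `k = 0` response approaches zero first, which is the toy analogue of large, smooth waves softening near the critical moment.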

What they found and why it matters

The paper reports several main results:

- There is a “critical window” during sampling:

- In both the simple patch model and real trained diffusion models, there’s a short period where long-range structure grows very quickly.

- Signs of this include “softening” of low-frequency (large, smooth) waves and a fast increase in correlation length. In plain terms, big shapes appear suddenly.

- Network design turns memorization into creativity:

- If a model could remember every training picture perfectly, it might just “snap” to a stored example. But because convolutional networks are local and translation-friendly (same rules everywhere), they instead create large-scale, wave-like patterns. This turns rigid memorization into flexible pattern formation, which helps the model produce new images, not just copies.

- Real models show the same physics-like behavior:

- The authors see the same “soft mode” signals and fast growth of spatial correlations both in trained convolutional diffusion models and in EDM2, a strong modern diffusion model. This suggests the toy theory captures something real about how today’s diffusion models work.

- A practical trick: nudge at the critical moment:

- The authors tried a “pulse” of guidance (a strong but brief push toward a target class) only during the estimated critical window. Compared to pushing at a random time, the critical-time pulse made the final images match the target class better. In other words, the model is extra sensitive right at that moment—so small nudges go further.
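The guidance “pulse” described above can be illustrated with a small sketch. This is only one plausible way to write it, under stated assumptions: the window shape (a box in log-noise units), the names `pulse_weight` and `guided_prediction`, and the values of `half_width`, `base`, and `peak` are all hypothetical and are not taken from the paper. The combination rule itself is the usual classifier-free guidance extrapolation.

```python
import math

def pulse_weight(sigma, sigma_c, half_width=0.5, base=1.0, peak=5.0):
    """Return the guidance weight at noise level `sigma`.

    The weight is `peak` inside a window of +/- `half_width` (in log-noise units)
    around the estimated critical noise level `sigma_c`, and `base` elsewhere.
    """
    inside = abs(math.log(sigma) - math.log(sigma_c)) <= half_width
    return peak if inside else base

def guided_prediction(uncond, cond, weight):
    """Classifier-free guidance: extrapolate from the unconditional prediction
    toward the conditional one; weight = 1 recovers the conditional prediction."""
    return [u + weight * (c - u) for u, c in zip(uncond, cond)]

# Toy two-component predictions standing in for network outputs.
for sigma in (10.0, 1.2, 1.0, 0.1):
    w = pulse_weight(sigma, sigma_c=1.0)
    print(sigma, w, guided_prediction([0.0, 0.5], [1.0, 0.5], w))
```

Only the noise levels near `sigma_c` receive the strong push; everywhere else the sampler behaves as it would with the base weight.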

Why this is important

- Better understanding: It gives a clear, physics-inspired picture of how images emerge: a brief, special window where small fluctuations grow into big patterns.

- Better control: If you know when the model is most sensitive, you can time your guidance or edits for maximum effect, improving class alignment or steering the style/content more reliably.

- Unifying ideas from physics and AI: Concepts like soft modes, correlation length, and phase transitions help explain and predict the behavior of complex AI systems, not just magnets or water.

- Guidance for design and diagnostics: Watching for softening modes and correlation growth could become practical tools to tune models, pick schedules, or design new architectures that produce cleaner, more controllable generations.

In short

This paper says diffusion models don’t just slowly clean up noise—they pass through a brief “tipping point” where large patterns burst into view. The model’s architecture makes it easier to grow big, wave-like structures instead of just copying training images. The authors show this with theory, toy models, and real experiments, and they use it to time guidance pulses that make generation more accurate. Understanding and using this critical window could help us build and steer diffusion models more effectively.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper’s theory and experiments.

- Lack of a rigorous thermodynamic limit for trained networks:

- How should image size, receptive fields, and parameter counts co-scale to define a meaningful limit where true phase transitions (not just crossovers) can be proved?

- What assumptions on training (loss, optimizer, regularization) are needed to ensure convergence of the learned Jacobian/operator family as system size grows?

- Formal derivation of a non-equilibrium field theory for reverse diffusion:

- The paper uses a Ginzburg–Landau operator as a local effective description at fixed time, but does not derive a stochastic, time-dependent field theory for the reverse SDE (including noise and non-normality).

- How do nonlinearities and time-dependent annealing enter a principled coarse-grained action, and what predictions (e.g., defect densities, domain growth laws) follow?

- Kibble–Zurek predictions not tested:

- Does the “freeze-out” correlation length scale with the annealing rate as predicted by Kibble–Zurek theory?

- Actionable experiment: vary the reverse sampling rate/schedule and test finite-time scaling of domain sizes and mode softening across image resolutions.

- Finite-size scaling and universality classes:

- No finite-size scaling analysis (e.g., across 32×32, 64×64, 128×128) is provided to check whether peak correlation length and spectral gaps obey Ising- or model-specific exponents.

- How do different data symmetries (e.g., non-Ising, anisotropic, multi-class) map to different universality classes in practice?

- Non-normal dynamics and spectral diagnostics:

- The reverse drift Jacobian is generally non-symmetric; using directional derivatives along Fourier modes may miss transient growth and pseudo-spectral effects.

- Need tools to measure pseudospectra, non-normal amplification, and the gap between eigenvalues and singular values along the trajectory.

- Robustness and identifiability of the “equilibrium correlation length” proxy:

- The proxy is inferred from normalized low-frequency dispersion; its sensitivity to solver step size, discretization, noise schedule (VP vs VE), and local linearization noise is unclear.

- Provide calibration studies and confidence intervals, or alternative estimators (e.g., cross-correlation of perturbation responses, Hessian–vector product power iterations).

- Computational feasibility of Jacobian-based measurements:

- Full Jacobian evaluation is impractical in large models. What scalable approximations (e.g., FFT-based convolutional diagonalization, Hutchinson trace tricks, randomized SVD) preserve diagnostic fidelity?

- Architectural generality beyond convolutional locality:

- Many modern diffusion models use attention, transformers, or cross-attention to text, which break strict translation equivariance and locality.

- How do soft-mode instabilities manifest without convolutional diagonalization? What are the appropriate modes (e.g., learned basis, graph/Laplacian modes) in non-grid or attention-heavy architectures?

- Conditional generation and symmetry breaking:

- Conditioning (class labels, text) explicitly breaks spatial and global symmetries. How does this alter the onset, location, or nature of the critical regime and the relevant soft modes?

- Measuring effective locality and sparsity of learned dynamics:

- The theory assumes architectural/local constraints steer instabilities into spatial modes, yet real networks can learn long-range couplings.

- Develop empirical measures of effective receptive field and Jacobian sparsity/locality throughout the reverse trajectory.

- Multiple critical windows and feature scales:

- EDM2 results suggest non-synchronized softening across modes. Are there multiple, feature-specific critical windows (global layout vs texture vs color)?

- Actionable path: decompose softening by frequency bands/features and learn multi-pulse guidance schedules tailored to each window.

- Control and guidance optimization:

- Guidance pulses were aggregated over classes with a single global critical time. Would class-specific or prompt-specific critical times perform better?

- Optimize pulse amplitude, duration, and timing via black-box or gradient-based optimal control, and quantify trade-offs with FID, diversity, and artifacts.

- Causality versus correlation in critical-window interventions:

- Do increased correlations cause later structure or merely correlate with it? Ablations with noise injections, delayed/advanced pulses, or “score freezing” around the peak could establish causal leverage.

- Dataset and modality breadth:

- Experiments are limited (binarized FashionMNIST, ImageNet EDM2). Test across diverse datasets (natural scenes, medical, satellite), resolutions, and modalities (audio, video, 3D, molecules).

- For non-grid data, define and test appropriate spatial/spectral modes (e.g., graph Laplacians).

- Patch model realism and dependence on dictionary design:

- The tractable patch model includes hand-crafted global patterns; it is unclear how the conclusions extend when patches are learned and priors are complex.

- Test learned patch dictionaries and richer, non-binary distributions; stress-test with conflicting local patterns and long-range constraints.

- Effect of model misspecification and training error:

- Real score networks approximate the true score; how do approximation errors perturb the location and sharpness of the critical window?

- Quantify how training noise, regularization, and finite data affect mode softening and correlation growth.

- Noise schedule dependence (VP vs VE and schedules within each):

- The paper notes VE-specific behaviors but does not systematize how schedules shift or broaden critical windows.

- Map critical-time estimates across schedules and solvers, and design schedule-aware control policies.

- Anisotropy and boundary conditions:

- Real images and architectures can be anisotropic; boundary padding and strides may bias mode structure.

- Measure direction-dependent softening and correlation lengths; test alternative padding/boundary schemes.

- Link between memorization-to-generalization transition and spatial criticality:

- The paper states that architectural constraints transform memorization-driven instabilities into spatial modes but does not quantify the transition boundary.

- Controlled studies varying data size, capacity, and regularization to chart when (and how) the instability shifts from low-rank (global) to spatially extended modes.

- Quantitative universality tests:

- No measurement of critical exponents (e.g., order parameter scaling, susceptibility) or their stability across datasets/architectures.

- Design experiments to extract exponents via finite-size/finite-time scaling and test consistency with Ising or other classes.

- Latent diffusion and feature-space measurements:

- Many models operate in latent space. How should spatial correlations and modes be defined/measured in the latent domain, and how do they map back to pixels?

- Evaluation metrics beyond class logits:

- Guidance effects are evaluated via DINOv2 class alignment; need broader metrics (FID/KID, CLIP alignment, diversity, human judgments) to ensure critical-window interventions don’t degrade realism or diversity.

- Reproducibility and statistical confidence:

- Reported medians and qualitative plots lack variance/confidence intervals; the number of runs is small.

- Provide statistical testing, sensitivity analyses, and open-source code to ensure robustness and reproducibility.

- Safety and robustness implications:

- High susceptibility near the critical window may increase vulnerability to adversarial or malicious perturbations during sampling.

- Assess robustness to adversarial guidance/noise and develop safeguards for deployment.
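Among the scalable approximations named in the list above, the Hutchinson trace trick is easy to sketch. The example below is an illustration under assumptions, not a method from the paper: `hutchinson_trace` needs only matrix-vector products, and `toy_jacobian_matvec` is a hypothetical stand-in for a Jacobian-vector product that, in a real model, would typically come from automatic differentiation.

```python
import random

def hutchinson_trace(matvec, n, num_probes=64, seed=0):
    """Estimate trace(J) as the average of z^T (J z) over random +/-1 probe vectors z."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_probes):
        z = [rng.choice((-1.0, 1.0)) for _ in range(n)]
        jz = matvec(z)  # only a matrix-vector product is required
        total += sum(a * b for a, b in zip(z, jz))
    return total / num_probes

def toy_jacobian_matvec(z, r=0.5, c=1.0):
    """Hypothetical local Jacobian on a periodic 1D lattice (same form for every site)."""
    n = len(z)
    return [-r * z[i] + c * (z[(i + 1) % n] - 2 * z[i] + z[i - 1]) for i in range(n)]

n = 64
estimate = hutchinson_trace(toy_jacobian_matvec, n, num_probes=256)
exact = n * (-0.5 - 2 * 1.0)  # every diagonal entry equals -r - 2c
print(estimate, exact)
```

The estimate is exact for diagonal operators and noisy otherwise, so the number of probes trades compute for variance. Whether such estimators preserve the diagnostic signals discussed here is exactly the open question raised in the list.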

Practical Applications

Immediate Applications

Below are actionable applications that can be deployed now, leveraging the paper’s findings on critical windows, soft-mode analysis, and guidance susceptibility in diffusion models.

- Critical-window guidance scheduling

- What: Apply strong classifier-free guidance (or other conditioning) only within a narrow window centered on the estimated “critical time/scale” where long-wavelength modes soften and spatial correlations peak.

- Sector(s): Software/creative industries (image/video generation), advertising, gaming.

- Tools/Workflows: “Critical-Guidance Scheduler” for Diffusers/ComfyUI/Stable Diffusion forks; one-time or periodic estimation of the critical noise scale (σ_c) via low-frequency Jacobian dispersion; pulse guidance around σ_c.

- Assumptions/Dependencies: Access to model internals or finite-difference directional derivatives; extra compute to estimate σ_c; calibration differs by model and noise schedule (VP/VE).

- Adaptive compute and step allocation around critical regimes

- What: Densify sampler steps and guidance strength near σ_c; coarsen elsewhere to reduce steps or inference time without degrading quality/control.

- Sector(s): Software, cloud inference providers.

- Tools/Workflows: Custom ODE/SDE schedulers that allocate steps using a correlation-length proxy; plug-in samplers for EDM/SDXL/Flux-like models.

- Assumptions/Dependencies: Reliable proxy for σ_c; modest validation per model; small risk of quality loss if σ_c shifts across prompts or domains.

- Better user-facing controls for “structure vs. detail”

- What: Map timing of conditioning pulses to a UI slider: early/critical pulses to steer global layout and semantics; late pulses to refine texture and style.

- Sector(s): Consumer generative apps, design tools, game engines.

- Tools/Workflows: Two-stage or multi-pulse conditioning schedules aligned to measured soft-mode dynamics.

- Assumptions/Dependencies: Critical regime reasonably stable across prompts; simple presets can be used when on-the-fly estimation is costly.

- Model auditing and interpretability via soft-mode diagnostics

- What: Monitor mode softening and a correlation-length proxy along reverse trajectories to distinguish memorization-like instabilities from collective spatial dynamics.

- Sector(s): Industry model governance, academic model analysis.

- Tools/Workflows: “Correlation-Length Monitor” (Fourier directional derivatives of reverse-drift Jacobian), dashboards tracking σ_c and mode softening trends across datasets.

- Assumptions/Dependencies: Convnets/local architectures yield clear Fourier modes; for global-attention models, use alternative bases or random projections; measurement noise manageable via smoothing/bootstrapping.

- Safety and trust interventions at susceptibility peaks

- What: Apply stronger safety classifiers/filters or content policy checks specifically in the critical window where perturbations have maximal leverage.

- Sector(s): Policy/Trust & Safety for generative platforms.

- Tools/Workflows: Time-localized safety guidance and p(image|policy) penalties; gating or throttling of unsafe prompts during the critical window.

- Assumptions/Dependencies: Critical susceptibility generalizes from class guidance to safety signals; requires careful calibration per model/domain.

- Watermarking and robust provenance via low-frequency pulses

- What: Inject imperceptible, structured low-frequency perturbations during the critical window to embed robust, global watermarks; design detectors keyed to these structured modes.

- Sector(s): Content provenance, platform safety.

- Tools/Workflows: “Critical-Window Watermarker” scheduler and watermark detector service.

- Assumptions/Dependencies: Watermark visibility/robustness must be validated; potential sensitivity to upscaling/editing pipelines.

- Inverse problems: data-consistency at critical windows

- What: In score-based reconstruction (MRI/CT, microscopy, seismic, remote sensing), concentrate data-consistency operators or physics-based constraints near σ_c to enforce global structure while preserving fine detail elsewhere.

- Sector(s): Healthcare imaging, geoscience, Earth observation.

- Tools/Workflows: Conditional diffusion pipelines with critical-window projections (e.g., conjugate-gradient or proximal steps) and adaptive guidance schedules.

- Assumptions/Dependencies: Need σ_c under conditional sampling; compliance with clinical quality/regulatory standards.

- Teaching and reproducible demos of non-equilibrium criticality

- What: Course labs using the analytic patch model and simple ConvNet experiments to visualize mode softening, correlation growth, and controllability pulses.

- Sector(s): Academia (ML, physics of AI, complex systems).

- Tools/Workflows: Notebook kits with the patch model and Fourier diagnostics; small-scale U-Net examples.

- Assumptions/Dependencies: None beyond standard ML tooling.
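The step-allocation idea from the list above (more sampler steps near σ_c, fewer elsewhere) can be sketched as follows. This is a minimal illustration under assumptions: the function name `critical_aware_sigmas`, the Gaussian bump in log-noise, and the parameters `boost`, `width`, and `grid` are all hypothetical choices, and the numeric noise range in the usage lines is illustrative only.

```python
import math

def critical_aware_sigmas(sigma_max, sigma_min, sigma_c, num_steps,
                          boost=4.0, width=0.5, grid=2000):
    """Return `num_steps` noise levels from sigma_max down to sigma_min,
    placed more densely (in log-noise) around the critical level sigma_c."""
    lo, hi = math.log(sigma_min), math.log(sigma_max)
    us = [lo + (hi - lo) * i / grid for i in range(grid + 1)]
    # Step density: uniform in log-noise plus a Gaussian bump centered on log(sigma_c).
    dens = [1.0 + boost * math.exp(-0.5 * ((u - math.log(sigma_c)) / width) ** 2)
            for u in us]
    # Cumulative density via the trapezoid rule.
    cdf = [0.0]
    for i in range(grid):
        cdf.append(cdf[-1] + 0.5 * (dens[i] + dens[i + 1]))
    total = cdf[-1]
    sigmas, j = [], 0
    for s in range(num_steps):
        target = total * s / (num_steps - 1)
        while j < grid - 1 and cdf[j + 1] < target:
            j += 1
        # Invert the cumulative density by linear interpolation inside cell j.
        frac = (target - cdf[j]) / (cdf[j + 1] - cdf[j])
        frac = min(1.0, max(0.0, frac))
        sigmas.append(math.exp(us[j] + frac * (us[j + 1] - us[j])))
    return sigmas[::-1]  # sampling runs from high noise to low noise

sigmas = critical_aware_sigmas(80.0, 0.002, sigma_c=1.0, num_steps=20)
near = [s for s in sigmas if abs(math.log(s)) <= 0.5]
print(len(near), [round(s, 3) for s in sigmas])
```

Setting `boost=0` recovers a schedule that is uniform in log-noise, which makes it easy to compare against a baseline when validating per model.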

Long-Term Applications

These leverage the paper’s framework but require further research, scaling, or standardization.

- Architecture co-design for controllable pattern formation

- What: Engineer locality/equivariance and receptive-field geometry to shape the soft-mode spectrum (and thus universality class) for desired compositionality and creativity.

- Sector(s): Software/AI, creative tools.

- Tools/Workflows: Kernel/attention designs that yield targeted dispersion relations; spectral regularizers during training.

- Assumptions/Dependencies: Formal scaling laws for “thermodynamic limits” of trained networks; stable training under new constraints.

- Critical-aware training objectives and regularizers

- What: Encourage spectra that improve controllability/generalization (e.g., correlation-length or dispersion-shape regularization; penalties on low-rank, memorization-like instabilities).

- Sector(s): AI research, enterprise model development.

- Tools/Workflows: Jacobian-spectrum shaping losses; curriculum schedules that avoid brittle pitchfork-like modes.

- Assumptions/Dependencies: Efficient and stable Jacobian or Hessian approximations at scale; avoiding excessive computational overhead.

- Standardized provenance via critical-window watermarks

- What: Industry standards for time-localized watermark embeddings and detectors exploiting high leverage at σ_c.

- Sector(s): Policy, standards bodies, platforms.

- Tools/Workflows: Open watermark specs; certification tests for robustness and false-positive rates.

- Assumptions/Dependencies: Broad adoption; resilience against post-processing and model-to-model transfers.

- Robustness and adversarial defense at susceptibility peaks

- What: Damp soft modes or inject stabilizing noise specifically at σ_c to mitigate adversarial or prompt-based manipulation.

- Sector(s): Safety/security of generative systems.

- Tools/Workflows: “Critical-window damping” modules; training-time robustness shaping.

- Assumptions/Dependencies: Clear mapping from critical modes to adversarial channels; minimal impact on user-desired control.

- Cross-domain extensions (audio, video, 3D, planning/robotics)

- What: Identify and exploit domain-specific critical windows to steer global rhythm/structure (audio), cinematography/layout (video), coarse geometry (3D), or high-level plan topology (diffusion planning).

- Sector(s): Media production, AR/VR, robotics/autonomy.

- Tools/Workflows: Domain-appropriate mode bases (e.g., temporal spectra, spherical harmonics); pulse guidance of semantic controllers.

- Assumptions/Dependencies: Existence of analogous soft modes in non-spatial domains; domain-specific validation.

- Real-time adaptive controllers for production sampling

- What: Online estimation of σ_c per prompt and dynamic re-weighting of guidance/steps during sampling for optimal cost–quality–control tradeoffs.

- Sector(s): Cloud inference, on-device generation.

- Tools/Workflows: Lightweight estimators (finite-difference directional derivatives or learned σ_c predictors) and adaptive schedulers.

- Assumptions/Dependencies: Tight latency budgets; approximate estimators that are accurate enough without large overhead.

- New evaluation benchmarks and health checks

- What: Benchmarks that compare models by mode-softening curves, peak correlation length, and controllability gains from critical pulses.

- Sector(s): Academia and industry model evaluation.

- Tools/Workflows: Public benchmark suites; leaderboards reporting “critical controllability metrics.”

- Assumptions/Dependencies: Community consensus on metrics and their link to downstream quality and safety.

- Regulatory auditing in high-stakes imaging

- What: Use critical-regime diagnostics to demonstrate limited memorization and robust generalization in medical or scientific imaging models.

- Sector(s): Healthcare regulators, clinical providers.

- Tools/Workflows: Auditable reports of spectral diagnostics and reconstruction behavior around σ_c.

- Assumptions/Dependencies: Regulatory acceptance; clinical validation that diagnostics correlate with safety/effectiveness.
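Several items above depend on a correlation-length measurement. The sketch below shows one simple way to compute such a number, under assumptions: it works on a 1D periodic signal rather than a radially averaged 2D autocorrelation, and it defines the correlation length as the first lag at which the normalized autocorrelation drops below 1/e. Both simplifications, and the function names, are choices made for this illustration.

```python
import math
import random

def autocorrelation(x, d):
    """Normalized autocorrelation of a periodic 1D signal at lag d."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n
    if var == 0:
        raise ValueError("constant signal has no defined autocorrelation")
    cov = sum((x[i] - mean) * (x[(i + d) % n] - mean) for i in range(n)) / n
    return cov / var

def correlation_length(x, threshold=1 / math.e):
    """First lag at which the autocorrelation falls below `threshold`."""
    n = len(x)
    for d in range(1, n // 2 + 1):
        if autocorrelation(x, d) < threshold:
            return d
    return n // 2  # no crossing found within half the system size

rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(256)]        # no spatial structure
wave = [math.cos(2 * math.pi * i / 64) for i in range(64)]  # one large smooth wave
print(correlation_length(noise), correlation_length(wave))
```

Unstructured noise decorrelates within a single lag, while the smooth wave stays correlated over many sites; tracking this number along a sampling trajectory is the kind of curve a benchmark or audit report could record.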

Glossary

- Annealed process: A process where a control parameter (like effective temperature) is gradually changed during dynamics; here, diffusion time plays that role. "From a physics perspective, this is an annealed process since has both the role of dynamic time and effective temperature of the potential."

- Architectural constraints: Structural restrictions imposed by a network’s design (e.g., locality, sparsity, equivariance) that shape the representable dynamics. "architectural constraints, such as locality, sparsity, and translation equivariance, transform memorization-driven instabilities into collective spatial modes"

- Autocorrelation function: A measure of how a field correlates with itself as a function of spatial displacement, used to estimate correlation length. "The spatial correlation length is estimated from the radially averaged autocorrelation function of ."

- Center manifold: The low-dimensional set of marginally stable directions near a fixed point that captures the system’s reduced dynamics at bifurcation. "This instability is purely mean-field. A single eigenvalue crosses zero, the center manifold is finite-dimensional, and the order parameter scales with exponent $1/2$."

- Classifier-free guidance: A sampling technique that steers diffusion models using conditional and unconditional networks without an external classifier. "classifier-free guidance pulses applied at the estimated critical time"

- Coarse-grained field: A smoothed, continuum field approximating long-wavelength behavior of a discrete system. "a coarse-grained field "

- Convolution operator: A linear operator implementing convolution, diagonalized by Fourier modes in translation-invariant settings. "the Jacobian becomes a convolution operator,"

- Correlation function: The expected product (or covariance) of field values at two points as a function of their separation. "In nearly equilibrated stochastic systems, susceptibility to the noise is captured by the correlation function"

- Correlation length: The characteristic distance over which spatial correlations decay significantly. "Left: spatial correlation length along several reverse trajectories (transparent blue) and their median (black)."

- Critical regime: A parameter region near a transition where fluctuations grow and system behavior changes rapidly. "reverse diffusion passes through a critical regime"

- Critical time: The particular reverse-diffusion time scale at which critical behavior (e.g., mode softening, rapid correlation growth) peaks. "highlighting the rapid growth of spatial coherence near the estimated critical time."

- Directional derivatives: Derivatives of a vector field taken along specified directions (e.g., Fourier modes) to probe local linear response. "Directional derivatives of the drift are computed along Fourier modes"

- Dispersion: The dependence of mode eigenvalues on spatial frequency; here, low-frequency dispersion is used to infer correlation length. "the normalized low-frequency dispersion of the reverse-drift Jacobian"

- Dynamical susceptibility: A measure of how strongly a system’s dynamics respond to perturbations near a transition. "the correlation length and dynamical susceptibility peak at a specific noise level"

- Effective field theory: A coarse-grained continuum description capturing long-wavelength, low-frequency behavior via a simplified functional. "effective field theories of the Ginzburg–Landau type"

- Eigenvalue: A scalar indicating the stretching or contraction of an eigenvector under a linear operator. "a single eigenvalue along the direction of "

- Equilibrium correlation length: An inferred correlation length assuming local equilibrium around an instantaneous state of the dynamics. "the equilibrium correlation length estimate "

- Fourier modes: Basis functions corresponding to spatial sinusoids used to analyze translation-invariant systems. "Such operators are diagonalized by spatial Fourier modes."

- Fourier shell: The set of modes with the same wavevector magnitude, grouped for spectral analysis. "the -th Fourier shell"

- Ginzburg–Landau theory: A phenomenological field theory (often $\phi^4$) describing symmetry-breaking and critical behavior at long wavelengths. "Ginzburg--Landau field theory"

- Ising universality class: The category of systems sharing Ising-model critical exponents and scaling near a Z2-symmetric transition. "belongs to the Ising universality class"

- Jacobian: The matrix of first derivatives of a vector field that linearizes dynamics around a state. "the reverse-drift Jacobian"

- Kibble–Zurek mechanism: A theory describing how finite-rate traversals of phase transitions produce domains via frozen fluctuations. "Kibble–Zurek mechanism, which was developed to study domain structure formation through cosmological phase transitions in the early universe"

- Long-wavelength limit: The regime where spatial variations are slow compared to microscopic scales, enabling continuum approximations. "it is convenient to analyze the long-wavelength limit"

- Mean-field: An approximation treating interactions through averaged effects, neglecting spatial fluctuations. "This instability is purely mean-field."

- Mode softening: The reduction of restoring forces for certain modes, reflected by eigenvalues approaching zero. "showing the softening of spatial modes"

- Order parameter: A quantity that is zero in the symmetric phase and nonzero after symmetry breaking, characterizing the phase. "the order parameter scales with exponent $1/2$."

- Out-of-equilibrium criticality: Critical-like behavior in systems not at thermodynamic equilibrium, often during driven dynamics. "reverse diffusion exhibits some of the characteristic signatures of out-of-equilibrium criticality"

- Phase transition: A qualitative change in macroscopic behavior as control parameters vary, often via symmetry breaking. "spontaneous symmetry breaking phase transitions"

- Pitchfork bifurcation: A symmetry-breaking bifurcation where a symmetric state loses stability and two symmetric branches emerge. "a supercritical pitchfork bifurcation occurs"

- Receptive field: The local neighborhood of inputs that influence a unit’s output in a network. "the number of sites in the receptive field."

- Reverse-time SDE: A stochastic differential equation integrated backward in the diffusion process to generate samples. "Once the score is learned, samples are generated by the reverse-time SDE"

- Soft mode: A mode whose eigenvalue approaches zero near a transition, leading to large fluctuations. "Modes whose eigenvalues approach zero are called soft modes."

- Spontaneous symmetry breaking: The emergence of an ordered state that selects a particular symmetry-broken configuration without explicit bias. "spontaneous symmetry breaking phase transitions"

- Thermodynamic limit: The limit of infinite system size where fluctuations average out and transitions become sharp. "genuine phase transitions in the thermodynamic limit."

- Translation equivariance: A property where translating the input results in an equivalent translation of the output, typical of convolutional models. "when the architecture is translation equivariant, as in convolutional score networks."

- Universality class: A grouping of systems that share the same critical exponents and scaling functions near a transition. "can these be related to underlying universality classes?"

- Unstable manifold: The set of perturbations that grow away from an unstable fixed point. "the unstable manifold is one-dimensional."

- Variance exploding model: A diffusion parameterization in which the noise variance grows over the forward process while the signal is not rescaled, so the reverse process starts from high-variance noise. "EDM2 uses a variance exploding model"

- Variance-preserving (VP) formulation: A forward diffusion setup that preserves overall variance while gradually corrupting data. "In the variance-preserving (VP) formulation"

- Z2 symmetry: A two-element symmetry (e.g., sign flip) common in Ising-like systems and binary patterns. "the minimal $\mathbb{Z}_2$-symmetric dataset"
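
Several entries above (pitchfork bifurcation, order parameter, soft mode, correlation length, Ginzburg–Landau theory) are tied together by two textbook formulas. The symbols $m$, $\mu$, $\phi$, $r$, $c$, and $u$ below are generic notation chosen for this illustration and are not taken from the paper.

The normal form of a supercritical pitchfork bifurcation and its stable fixed points are

$$
\dot m = \mu m - m^3
\quad\Longrightarrow\quad
m^* = 0 \ \ (\mu \le 0), \qquad m^* = \pm\sqrt{\mu} \ \ (\mu > 0),
$$

so the order parameter grows as $\mu^{1/2}$, which is the exponent $1/2$ quoted in the glossary. A standard spatially extended counterpart is the Ginzburg–Landau functional

$$
F[\phi] = \int d^dx \left[ \frac{r}{2}\,\phi^2 + \frac{c}{2}\,|\nabla\phi|^2 + \frac{u}{4}\,\phi^4 \right],
\qquad
\lambda(k) = r + c\,k^2,
\qquad
\xi = \sqrt{c/r},
$$

where $\lambda(k)$ is the restoring coefficient of a small fluctuation with wavenumber $k$ around $\phi = 0$ (for $r > 0$). As $r \to 0^+$ the low-$k$ modes soften first and the correlation length $\xi$ grows without bound, which is the generic picture behind the "mode softening" and "correlation length" entries.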
