- The paper demonstrates that locality in diffusion models is learned from second-order statistics, specifically pixel correlations and principal components.

- The authors validate their theory with experiments across several architectures and datasets, and show that an analytical denoiser built on it matches trained diffusion models more closely than prior analytical baselines.

- The study reveals that manipulating dataset statistics can control sensitivity fields, providing new insights for designing efficient generative models.

Locality in Image Diffusion Models Emerges from Data Statistics

Introduction

This paper rigorously investigates the origins of locality in image diffusion models, challenging the prevailing hypothesis that locality is primarily a consequence of neural network architectural inductive biases, such as those found in convolutional networks. The authors demonstrate, both theoretically and empirically, that locality in denoising diffusion models is a learned property that emerges from the second-order statistics of the training dataset, specifically the pixel correlations and principal components. This insight is leveraged to construct an analytical denoiser that more accurately matches the behavior of deep diffusion models than previous expert-crafted alternatives.

Theoretical Foundations

The denoising diffusion model is trained to reverse a Gaussian noise process, with the score-matching objective admitting a closed-form optimal denoiser. However, this optimal denoiser, which is a conditional expectation over the training set, fails to generalize and only reproduces images from the training set, exhibiting perfect memorization. Prior works have attempted to bridge the gap between the optimal denoiser and the empirical behavior of deep neural diffusion models by introducing architectural constraints such as locality and shift equivariance.
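
To make the memorization behavior concrete, the closed-form optimal denoiser can be sketched as a softmax-weighted average of the training images. This is a minimal illustration, not the authors' code: it assumes the simple noising convention x_t = x_0 + σ·ε (the source does not state which parameterization is used), and the names `optimal_denoiser`, `train`, and `sigma` are hypothetical.

```python
import numpy as np

def optimal_denoiser(x_t, train, sigma):
    """Posterior mean E[x_0 | x_t] when the prior is the empirical training set.

    Assumption: x_t = x_0 + sigma * eps with eps ~ N(0, I).
    x_t has shape (d,), train has shape (n, d) (flattened images).
    """
    sq_dist = np.sum((train - x_t) ** 2, axis=1)   # squared distance to each training image
    logits = -sq_dist / (2.0 * sigma ** 2)         # Gaussian log-likelihoods up to a constant
    logits -= logits.max()                         # stabilize the softmax
    weights = np.exp(logits)
    weights /= weights.sum()
    return weights @ train                         # weighted average of training images
```

At low noise levels the weights collapse onto the single nearest training image, which is why this denoiser can only reproduce the training set.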

The paper provides a formal analysis showing that the sensitivity field of a trained denoiser, defined as its input-output Jacobian, is closely related to the projection operator onto the high signal-to-noise ratio (SNR) principal components of the data covariance matrix. For natural images, these principal components correspond to low-frequency features, and the resulting sensitivity field resembles a low-pass filter. However, for specialized datasets, such as centered human faces, the principal components and sensitivity fields are highly nonlocal and dataset-specific.
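
As an illustration of what "sensitivity field" means operationally, the sketch below estimates the input-output Jacobian of an arbitrary denoiser by central finite differences. It is only a sketch under assumptions: `sensitivity_field` and `denoiser` are hypothetical names, the input is a flattened image, and for a real network one would more likely use automatic differentiation than this O(d) loop.

```python
import numpy as np

def sensitivity_field(denoiser, x, eps=1e-4):
    """Finite-difference Jacobian J[i, j] = d denoiser(x)[i] / d x[j].

    denoiser maps a flattened image of shape (d,) to shape (d,).
    Row i of the result, reshaped to the image grid, shows which
    input pixels influence output pixel i.
    """
    d = x.size
    jac = np.zeros((d, d))
    for j in range(d):
        step = np.zeros(d)
        step[j] = eps
        jac[:, j] = (denoiser(x + step) - denoiser(x - step)) / (2.0 * eps)
    return jac
```

For a linear denoiser `lambda v: W @ v` the result is `W` itself, which is why the sensitivity field of a Wiener-like denoiser can be read directly off its matrix.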

Empirical Validation

The authors validate their theoretical claims through extensive experiments across multiple architectures (U-Net and DiT) and datasets (CIFAR10, CelebA-HQ, AFHQv2, MNIST, FashionMNIST). They show that:

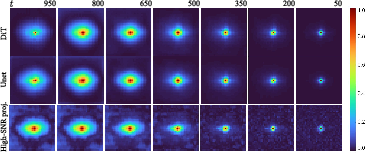

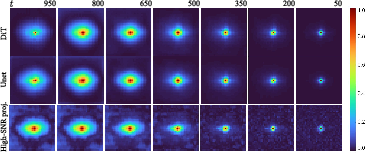

- Sensitivity fields learned by different architectures are similar and align with the Wiener filter, the optimal linear denoiser under Gaussian assumptions.

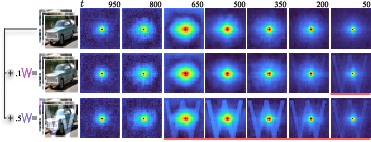

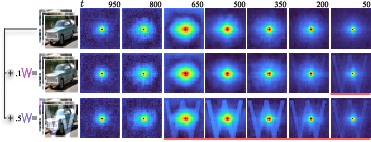

- Manipulating the pixel correlations in the training data can induce arbitrary patterns in the sensitivity field of the trained model, including highly nonlocal structures.

Figure 1: Manipulating pixel correlations in CIFAR10 induces arbitrary patterns in the sensitivity field, demonstrating that locality arises from data statistics rather than architectural bias.

- On datasets with strong translation equivariance (e.g., CIFAR10), the sensitivity fields are local and isotropic. On datasets with structured correlations (e.g., CelebA-HQ), the sensitivity fields are nonlocal and location-dependent.

Figure 2: Comparison of sensitivity fields for U-Net and DiT architectures on CIFAR10, showing strong alignment with the Wiener filter.

Figure 3: Qualitative comparison of the analytical model with Wiener filter and patch-based baselines, highlighting the superior retention of dataset-specific features.

Analytical Model and Benchmarking

Building on these insights, the authors propose an analytical denoiser that replaces empirically measured locality masks with projection operators onto high-SNR principal components, binarized with a threshold. This model is nonlinear and does not assume Gaussianity of the dataset, relying only on second-order statistics for locality estimation. The model is benchmarked against the vanilla optimal denoiser, Wiener filter, and recent patch-based analytical models.
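
The summary does not spell out the exact form of the analytical denoiser, so the following is one plausible reading rather than the authors' implementation. It assumes that, as in patch-based analytical models, each output pixel is a softmax-weighted average over training images, with the distance restricted to the input pixels selected by that pixel's binary mask. The function name, the `masks` layout, and the noising convention x_t = x_0 + σ·ε are all assumptions.

```python
import numpy as np

def masked_denoiser(x_t, train, masks, sigma):
    """Per-pixel posterior mean where output pixel i only 'sees' inputs in masks[i].

    x_t: (d,) noisy image, train: (n, d) training images,
    masks: (d, d) boolean, masks[i, j] = True if input j informs output i,
    sigma: noise standard deviation (assumes x_t = x_0 + sigma * eps).
    """
    sq_diff = (train - x_t) ** 2                     # (n, d)
    # sq_dist[k, i] = sum_j masks[i, j] * sq_diff[k, j]
    sq_dist = sq_diff @ masks.T.astype(float)        # (n, d)
    logits = -sq_dist / (2.0 * sigma ** 2)
    logits -= logits.max(axis=0, keepdims=True)      # stabilize each pixel's softmax
    weights = np.exp(logits)
    weights /= weights.sum(axis=0, keepdims=True)    # softmax over training images
    return np.sum(weights * train, axis=0)           # (d,)
```

With an all-True mask this reduces to the memorizing optimal denoiser; restricting the mask lets different pixels draw on different training images, which is what makes the model nonlinear yet able to produce novel combinations.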

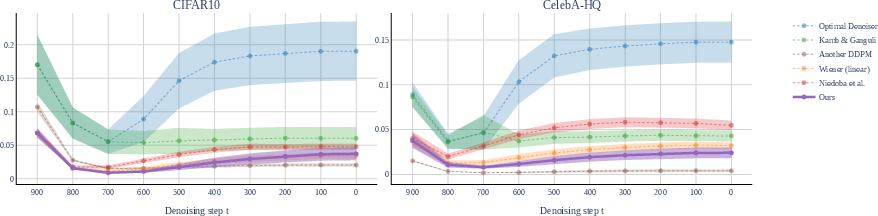

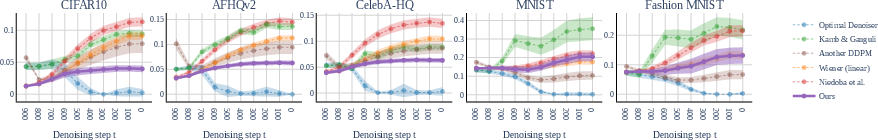

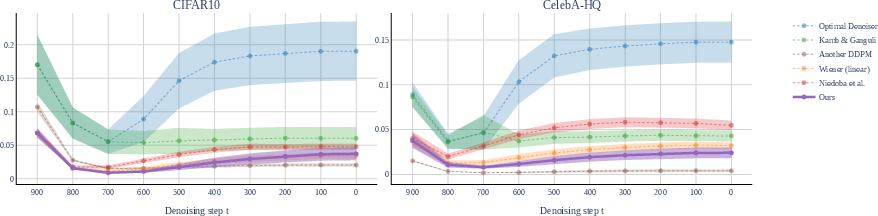

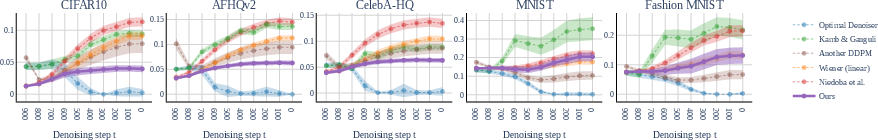

Quantitative results show that the proposed analytical model consistently outperforms all baselines in both $r^2$ and MSE, measured against the outputs of a trained DDPM model, across all datasets. The Wiener filter is the second-best performer, further supporting the claim that locality is a function of data statistics.
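
For reference, the two agreement metrics can be computed as below. This is a sketch that assumes the standard coefficient of determination taken over all pixels; the summary does not say how the scores are aggregated over images or timesteps, and the function names are hypothetical.

```python
import numpy as np

def mse(pred, target):
    """Mean squared error between an analytical prediction and the network output."""
    return float(np.mean((pred - target) ** 2))

def r2_score(pred, target):
    """Coefficient of determination: 1 - residual variance / total variance."""
    ss_res = np.sum((target - pred) ** 2)
    ss_tot = np.sum((target - target.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)
```

Here `target` would be the trained DDPM's denoised output and `pred` the output of the analytical model or a baseline on the same noisy input.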

Figure 4: Mean Squared Error (MSE) between baseline predictions and a trained DDPM model across CIFAR10 and CelebA-HQ, demonstrating the superior accuracy of the proposed analytical model.

Figure 5: L2 distance between generated samples and the closest training image, quantifying the ability of analytical models to produce novel images.
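
The novelty measure in Figure 5 can be sketched as a nearest-neighbor search over the training set. The function name is hypothetical, images are assumed to be flattened, and the brute-force broadcast below is only practical for small arrays.

```python
import numpy as np

def nearest_train_distance(samples, train):
    """L2 distance from each sample to its closest training image.

    samples: (s, d), train: (n, d); returns an array of shape (s,).
    """
    diffs = samples[:, None, :] - train[None, :, :]   # (s, n, d)
    dists = np.linalg.norm(diffs, axis=-1)            # (s, n)
    return dists.min(axis=1)
```

A distance near zero indicates a memorized training image, while larger values indicate samples that differ from every training example.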

Implementation Details

The analytical model requires precomputing the covariance matrix of the dataset and performing SVD to obtain principal components and singular values. The Wiener matrix is constructed as $W_t = U \,\mathrm{diag}\!\left(\frac{\lambda_i^2}{\lambda_i^2 + \sigma_t^2}\right) U^T$, where $U$ and $\lambda_i$ are the eigenvectors and eigenvalues of the covariance matrix, and $\sigma_t^2$ is the noise variance at timestep $t$. The projection operator is binarized with a threshold $\tau$ to obtain locality masks for each pixel.
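
The precomputation described above might look like the following sketch. Several choices here are assumptions, not details from the source: $\lambda_i^2$ is read as the data variance along principal component $i$, the covariance is normalized by the dataset size, and the binarization compares the absolute value of each matrix entry to $\tau$. The function names are hypothetical.

```python
import numpy as np

def wiener_matrix(data, sigma_t):
    """Build W_t = U diag(lam^2 / (lam^2 + sigma_t^2)) U^T from flattened images.

    data: (n, d). The right singular vectors of the centered data are the
    eigenvectors of the covariance matrix; s**2 / n are its eigenvalues.
    """
    centered = data - data.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    lam2 = s ** 2 / data.shape[0]             # variance along each principal component
    gains = lam2 / (lam2 + sigma_t ** 2)      # per-component SNR-dependent shrinkage
    return (vt.T * gains) @ vt                # U diag(gains) U^T

def locality_masks(w, tau):
    """Binarize the operator: masks[i, j] is True where |W[i, j]| exceeds tau (assumed rule)."""
    return np.abs(w) > tau
```

As the noise level grows, the gains of low-variance components shrink toward zero, so `W_t` approaches a projection onto the few highest-SNR components and the masks widen or narrow accordingly. Under the convention x_t = x_0 + σ_t·ε, the corresponding linear Wiener estimate would be `mean + W_t @ (x_t - mean)`.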

The computational complexity of the analytical model is $O(n p_t m)$, where $n$ is the dataset size, $p_t$ is the patch size at denoising step $t$, and $m$ is the image resolution. This is more efficient than patch-based models with translation equivariance, which scale as $O(n p_t m^2)$.

Implications and Future Directions

The findings have significant implications for the design and analysis of generative models:

- Locality in diffusion models is not an architectural artifact but a learned property dictated by dataset statistics.

- Analytical models that incorporate dataset-dependent locality outperform those relying on architectural heuristics.

- Manipulating dataset statistics provides a mechanism to control the sensitivity and generalization properties of diffusion models.

The authors acknowledge limitations, including the focus on second-order statistics and on simpler architectures. Future research should explore the role of higher-order statistics, nonlinear regimes, and conditional generation to further elucidate the mechanisms underlying generalization in diffusion models.

Conclusion

This paper provides a comprehensive theoretical and empirical analysis demonstrating that locality in image diffusion models emerges from the statistical properties of the training data, not from neural network architectural biases. The proposed analytical model, grounded in dataset statistics, achieves superior alignment with trained diffusion models and offers enhanced interpretability and efficiency. These insights pave the way for principled design of generative models and open avenues for further research into the statistical foundations of generalization in deep learning.