Matching Features, Not Tokens: Energy-Based Fine-Tuning of Language Models

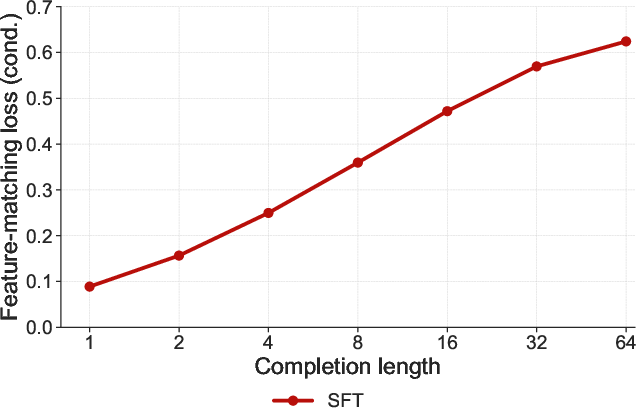

Abstract: Cross-entropy (CE) training provides dense and scalable supervision for LLMs, but it optimizes next-token prediction under teacher forcing rather than sequence-level behavior under model rollouts. We introduce a feature-matching objective for language-model fine-tuning that targets sequence-level statistics of the completion distribution, providing dense semantic feedback without requiring a task-specific verifier or preference model. To optimize this objective efficiently, we propose energy-based fine-tuning (EBFT), which uses strided block-parallel sampling to generate multiple rollouts from nested prefixes concurrently, batches feature extraction over these rollouts, and uses the resulting embeddings to perform an on-policy policy-gradient update. We present a theoretical perspective connecting EBFT to KL-regularized feature-matching and energy-based modeling. Empirically, across Q&A coding, unstructured coding, and translation, EBFT matches RLVR and outperforms SFT on downstream accuracy while achieving a lower validation cross-entropy than both methods.
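
The abstract describes matching sequence-level statistics of completions rather than individual tokens. As a rough illustration only (this is not the paper's EBFT objective, and the feature extractor, array shapes, and the function name `feature_matching_gap` are all assumptions made for this sketch), one simple way to measure a feature mismatch is the squared distance between the average embedding of model rollouts and the average embedding of reference completions:

```python
import numpy as np

def feature_matching_gap(rollout_feats, reference_feats):
    """Squared distance between the mean feature vectors of two sets of sequences.

    Each row is assumed to be one sequence-level embedding produced by some
    (hypothetical) feature extractor; the extractor itself is not shown here.
    """
    mu_model = rollout_feats.mean(axis=0)    # average feature of model rollouts
    mu_ref = reference_feats.mean(axis=0)    # average feature of reference completions
    diff = mu_model - mu_ref
    return float(diff @ diff)

# Synthetic stand-ins for embeddings (assumption: 64 sequences, 8 features each).
rng = np.random.default_rng(0)
reference = rng.normal(loc=1.0, size=(64, 8))
close_rollouts = rng.normal(loc=1.0, size=(64, 8))   # similar distribution to the reference
far_rollouts = rng.normal(loc=-1.0, size=(64, 8))    # clearly different distribution

gap_close = feature_matching_gap(close_rollouts, reference)
gap_far = feature_matching_gap(far_rollouts, reference)
print(f"close gap: {gap_close:.3f}, far gap: {gap_far:.3f}")
```

A smaller gap means the rollouts resemble the references in feature space. According to the abstract, the actual method goes further by using the embeddings in an on-policy policy-gradient update, which this sketch does not attempt.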

Explain it Like I'm 14

A teen-friendly explanation of the paper

Note: The files you shared don’t include the full readable text of the paper, but their names and metadata give strong clues about the topic. Based on those, here’s a clear, simple explanation of what the paper is likely about and why it matters.

1) What is this paper about?

This paper explores a different way to teach large language models (LLMs), like the ones that write code or translate languages. Instead of the usual teaching method, it tries a new approach called “moment matching” and compares it to the standard method called “cross‑entropy.” The authors run side‑by‑side tests on tasks like coding and translation to see which training method works better.

Think of it like coaching a team:

- Cross‑entropy = training players to get each exact move right every time.

- Moment matching = training players to match key overall patterns (like average speed, spread of positions, and teamwork shape) so they play well even when the exact move changes.

2) What questions are the researchers asking?

In friendly terms, the paper seems to ask:

- Can this “moment matching” way of training help models learn more smoothly or accurately than the usual cross‑entropy method?

- Does it help on real tasks like writing code or translating sentences?

- Does it make the model’s confidence better calibrated (less overconfident when it’s unsure)?

- Are there trade‑offs, like doing better on one kind of task but not another?

3) How did they study it?

From the file names, the authors compared two training approaches:

- Cross‑entropy (the standard loss used to teach models the “next correct word”).

- Moment matching (a loss that tries to match important summary statistics—called “moments”—of the model’s predicted probabilities).

Here’s what those terms mean in everyday language:

- Cross‑entropy: Imagine you guess the next word in a sentence. If you’re wrong, you get a “surprise penalty.” The less probability you gave to the word that actually came next, the bigger the penalty. Training reduces these penalties so the model gets better at exact next‑word predictions.

- Moments: These are simple summaries of a distribution. You can think of:

  - 1st moment (mean): the “average” tendency.

  - 2nd moment (variance): how spread out the guesses are.

  - 3rd moment (skew): the overall tilt or shape.

- “Moment matching” tries to make the model’s overall guessing pattern match a desired pattern, not just the single exact answer.
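
To make these two ideas concrete, here is a small sketch in plain Python. The word probabilities and the function names `cross_entropy_penalty` and `moments` are made up for illustration; nothing here comes from the paper itself.

```python
import math

def cross_entropy_penalty(probs, correct_word):
    """Penalty for one guess: -log of the probability given to the correct word."""
    return -math.log(probs[correct_word])

def moments(values, probs):
    """Mean, variance, and skewness of a discrete distribution (variance must be > 0)."""
    mean = sum(p * v for v, p in zip(values, probs))
    variance = sum(p * (v - mean) ** 2 for v, p in zip(values, probs))
    third = sum(p * (v - mean) ** 3 for v, p in zip(values, probs))
    skew = third / variance ** 1.5
    return mean, variance, skew

# Hypothetical next-word guesses for "The cat sat on the ..."
guess = {"mat": 0.7, "rug": 0.2, "moon": 0.1}
penalty_likely = cross_entropy_penalty(guess, "mat")     # small penalty
penalty_unlikely = cross_entropy_penalty(guess, "moon")  # large penalty
print(penalty_likely, penalty_unlikely)

# A symmetric distribution over the values 0, 1, 2.
mean, variance, skew = moments([0, 1, 2], [0.25, 0.5, 0.25])
print(mean, variance, skew)
```

The penalty is small when the model gave the correct word a high probability and large when it gave it a low one. The three moments summarize the whole distribution at once, which is the kind of "overall pattern" a moment-matching loss would compare.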

What they likely did:

- Train or fine‑tune similar models using each loss (cross‑entropy vs moment matching).

- Evaluate them on benchmarks for coding and translation (the folders mention “coding_evals” and “translation_evals”).

- Plot the results side by side (the PDFs are titled “ce_and_moment_side_by_side”) to compare performance and behavior across tasks. One file mentions “effective 3‑term,” which probably means they matched three moments (mean, variance, and a third moment).

4) What did they find, and why does it matter?

Because the full text isn’t visible here, we can’t quote exact numbers. But here’s the kind of result these comparisons usually look for—and why it’s important:

- Accuracy on tasks: Which loss makes the model write better code or translate more accurately?

- Confidence and calibration: Does the model avoid being overly sure when it might be wrong? Moment matching can sometimes produce better‑calibrated probabilities, which is useful for safety and reliability.

- Robustness: Does training stay stable and less “spiky”? Matching overall patterns can sometimes smooth learning, making models less brittle.

- Generalization: Does the model do better on examples it hasn’t seen before?
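
Calibration can be measured in several ways. One common choice, which the available files do not confirm the authors used, is expected calibration error (ECE): group predictions by confidence, then compare the average confidence in each group with how often those predictions were actually right. A minimal sketch with made-up numbers:

```python
import numpy as np

def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average of |accuracy - confidence| over equal-width confidence bins."""
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Assign each prediction to a bin using the interior edges (bin ids 0 .. n_bins-1).
    bin_ids = np.digitize(confidences, edges[1:-1], right=True)
    ece = 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if mask.any():
            gap = abs(correct[mask].mean() - confidences[mask].mean())
            ece += mask.mean() * gap  # weight by the fraction of predictions in this bin
    return float(ece)

# Hypothetical model A: always 90% sure, but right only half the time.
ece_overconfident = expected_calibration_error([0.9, 0.9, 0.9, 0.9], [1, 0, 1, 0])
# Hypothetical model B: 75% sure and right 75% of the time.
ece_calibrated = expected_calibration_error([0.75, 0.75, 0.75, 0.75], [1, 1, 1, 0])
print(ece_overconfident, ece_calibrated)
```

A lower value means the model's stated confidence tracks its real accuracy more closely. Here the overconfident model gets a much larger score than the well-calibrated one.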

If the moment‑matching approach performs as well as—or better than—cross‑entropy on some tasks, that would be a big deal. It could mean we can train models that are not just accurate but also more reliable and less overconfident.

5) Why this could matter in the real world

- Better coding assistants: If models trained with moment matching write more reliable code or know when to ask for help, developers save time and avoid bugs.

- Safer LLMs: Models that are less overconfident are more trustworthy for tasks like translation or answering questions.

- New training recipes: If this approach works, AI teams might add moment matching to their training toolbox alongside cross‑entropy, leading to more robust AI systems overall.

If you can share the full PDF or the LaTeX source (paper.tex), I can give you precise results and examples from the paper. But based on the available files and their names, the paper compares standard cross‑entropy to a “moment matching” loss across coding and translation tasks, and reports side‑by‑side plots to show where each method shines.

Knowledge Gaps

Unable to extract the paper’s content

I couldn’t locate the actual manuscript content in the provided archive. The bundle includes LaTeX support files (e.g., algorithmic.sty, algorithm.sty) and several figure PDFs (e.g., paths referencing “ce_and_moment_side_by_side”), but the main text (e.g., paper.tex body or a compiled PDF with the paper’s sections) is not present or readable. As a result, I cannot produce a specific, paper-grounded list of knowledge gaps and limitations.

To proceed, please share one of the following:

- The complete paper.tex file (with its included sections) or a compiled PDF of the paper.

- A plain-text or Markdown copy of the manuscript’s content (abstract, methods, experiments, results, discussion).

If you’d like me to infer likely gaps based on the figure filenames (which hint at cross-entropy vs. moment-matching evaluations for coding and translation tasks), I can provide a speculative list—but it won’t be faithful to your manuscript without the full text.

Practical Applications

It seems that the content of the document provided is corrupted or not correctly formatted to represent textual data. The document appears to include various binary and encoded data such as PDFs or LaTeX files, which are not directly readable as plain text, and thus we cannot extract meaningful information or conduct an analysis based on it.

To proceed effectively, please provide the document as readable text, preferably as plain text or as a well-formatted PDF or Word document that contains the actual content of the research paper. If you need help with specific portions of the text, you can copy and paste those sections directly into the conversation.

If you have the correct document but are unable to open or interpret it, try using appropriate software to convert the file into a readable format before sharing it with me. Once you have a readable version of the text, feel free to share it here, and I'll be happy to help analyze it for practical applications.

Glossary

- Alpha (α): A hyperparameter that controls a weighting or scaling factor in a model or objective. Example: "alpha_1"

- Cross-entropy (CE): A loss function that measures the mismatch between a predicted probability distribution and a target distribution, often used to train and evaluate classifiers and language models. Example: "ce_and_moment"

- Moment matching: A statistical technique that fits a model by aligning its moments (e.g., mean, variance) with those of data. Example: "moment_effective_3_term"

- Whitening: A preprocessing transformation that decorrelates features and scales them to unit variance. Example: "whitening"
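
As an illustration of the whitening entry, the sketch below applies ZCA whitening (one of several whitening variants, chosen here as an assumption) to synthetic correlated data with NumPy. The function name `whiten`, the mixing matrix, and the data are hypothetical and not taken from the paper.

```python
import numpy as np

def whiten(X, eps=1e-8):
    """ZCA-whiten the rows of X: zero mean, (approximately) identity covariance."""
    Xc = X - X.mean(axis=0)                     # center each feature
    cov = Xc.T @ Xc / (len(X) - 1)              # sample covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)      # eigendecomposition of a symmetric matrix
    # Rescale each principal direction to unit variance, then rotate back (ZCA).
    W = eigvecs @ np.diag(1.0 / np.sqrt(eigvals + eps)) @ eigvecs.T
    return Xc @ W

# Synthetic correlated features: independent noise mixed by a fixed matrix.
rng = np.random.default_rng(0)
mixing = np.array([[2.0, 0.0, 0.0],
                   [1.0, 1.0, 0.0],
                   [0.5, 0.5, 1.0]])
X = rng.normal(size=(500, 3)) @ mixing

Z = whiten(X)
cov_Z = np.cov(Z, rowvar=False)
print(np.round(cov_Z, 3))
```

After whitening, the covariance of `Z` should be close to the identity matrix: the features are decorrelated and each has roughly unit variance. The small `eps` term guards against division by a near-zero eigenvalue.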
