Reinforced Generation of Combinatorial Structures: Ramsey Numbers

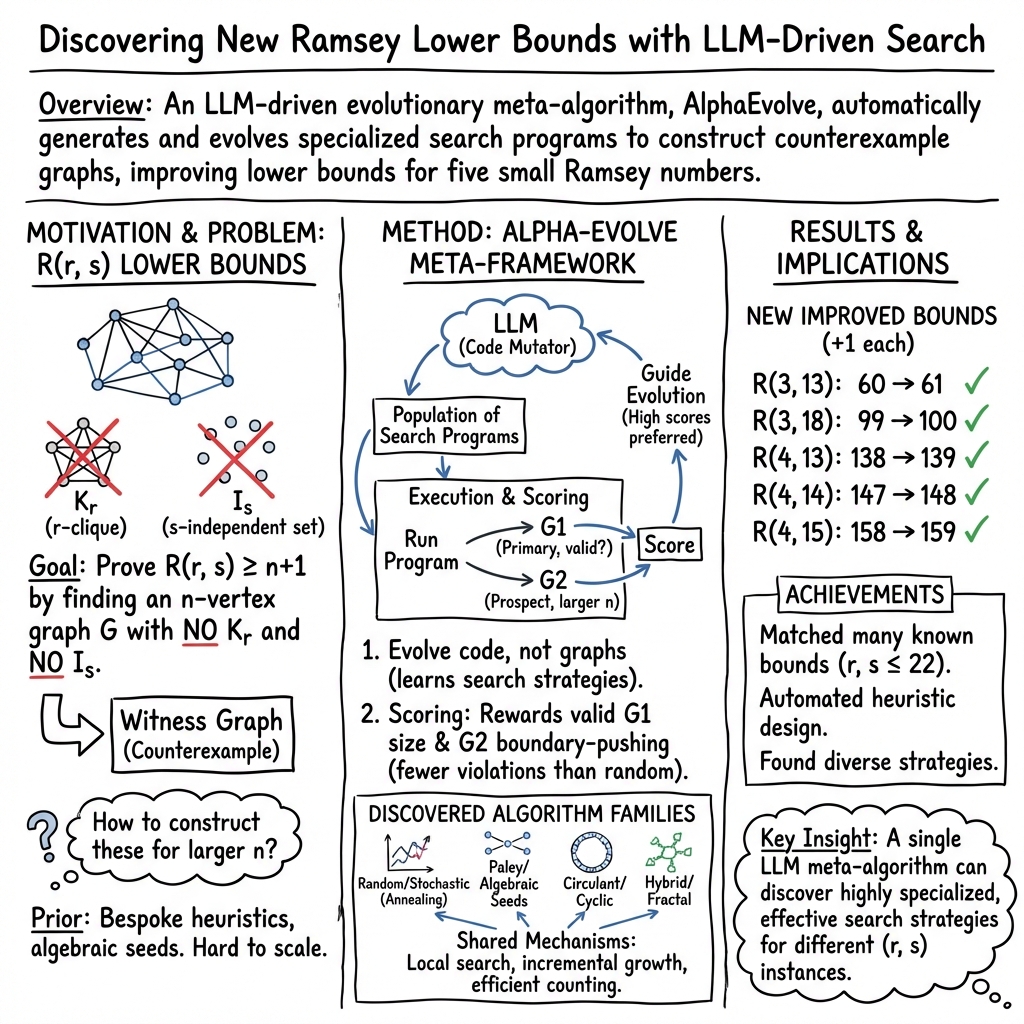

Abstract: We present improved lower bounds for five classical Ramsey numbers: $\mathbf{R}(3, 13)$ is increased from $60$ to $61$, $\mathbf{R}(3, 18)$ from $99$ to $100$, $\mathbf{R}(4, 13)$ from $138$ to $139$, $\mathbf{R}(4, 14)$ from $147$ to $148$, and $\mathbf{R}(4, 15)$ from $158$ to $159$. These results were achieved using AlphaEvolve, an LLM-based code mutation agent. Beyond these new results, we successfully recovered lower bounds for all Ramsey numbers known to be exact, and matched the best known lower bounds across many other cases. These include bounds for which previous work does not detail the algorithms used. Virtually all known Ramsey lower bounds are derived computationally, with bespoke search algorithms each delivering a handful of results. AlphaEvolve is a single meta-algorithm yielding search algorithms for all of our results.

Explain it Like I'm 14

What is this paper about?

This paper studies “Ramsey numbers,” a famous idea in math about patterns that must appear when things get big enough. Think of a party: if there are enough people, you are guaranteed to find either a group of $r$ people who all know each other or a group of $s$ people who are all strangers. The smallest number of people that always forces one of these groups to exist is called the Ramsey number, written $R(r, s)$.

Finding exact Ramsey numbers is very hard. Instead, researchers often try to improve bounds—numbers that the true answer must be at least as big as (lower bounds) or at most as big as (upper bounds). This paper improves several lower bounds using an AI system called AlphaEvolve that writes and evolves computer code to search for special graphs (networks) that avoid certain patterns.

What questions were the authors trying to answer?

- Can an AI-driven system automatically discover better lower bounds for Ramsey numbers by finding large graphs that avoid “forbidden” patterns (no $r$-cliques and no $s$-independent sets)?

- Can a single, general “meta-algorithm” (one big strategy that creates many specific strategies) match or beat many different known results across a wide range of values?

- How do the AI’s discovered search strategies compare to past, human-designed methods?

(Quick glossary:

- A “clique” is a group of nodes in a graph where every pair is connected—like a tight friend group.

- An “independent set” is a group of nodes where no one is connected—like a group of strangers.

- A “lower bound” for $R(r, s)$ means there exists a graph with $n$ nodes that avoids both patterns, so $R(r, s) \geq n + 1$.)
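As a rough illustration of the glossary above, the following sketch checks by brute force whether a graph avoids both patterns. The function name `is_ramsey_witness` and the edge-list representation are illustrative choices, not taken from the paper, and the exhaustive check is only practical for small graphs.

```python
from itertools import combinations

def is_ramsey_witness(n, edges, r, s):
    """Return True if the graph on vertices 0..n-1 has no r-clique
    and no s-independent set (hypothetical helper, brute force)."""
    adj = [[False] * n for _ in range(n)]
    for u, v in edges:
        adj[u][v] = adj[v][u] = True

    def all_pairs(vertices, want_edge):
        # True when every pair in `vertices` is adjacent (want_edge=True)
        # or every pair is non-adjacent (want_edge=False).
        return all(adj[u][v] == want_edge for u, v in combinations(vertices, 2))

    has_clique = any(all_pairs(c, True) for c in combinations(range(n), r))
    has_indep = any(all_pairs(c, False) for c in combinations(range(n), s))
    return not has_clique and not has_indep

# The 5-cycle has no triangle and no 3 mutually non-adjacent vertices,
# so it witnesses R(3, 3) >= 6.
c5 = [(i, (i + 1) % 5) for i in range(5)]
print(is_ramsey_witness(5, c5, 3, 3))
```

A graph on $n$ vertices that passes this check certifies $R(r, s) \geq n + 1$; a failed check says nothing about the bound, only that this particular graph is not a witness.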

How did they do it? (Methods in simple terms)

The authors used AlphaEvolve, an AI system that:

- “Evolves” code: It keeps a population of different small programs that try to build large graphs without the forbidden patterns.

- Mutates and improves: It uses an LLM to rewrite parts of these programs, like evolving playbooks to perform better in the next round.

- Tests two outputs from each program:

- A main graph that should be fully valid (no forbidden patterns).

- A larger “prospect” graph that may still have some forbidden patterns, but shows promise.
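The mutate-and-select loop described above can be sketched in a few lines. This is a toy under strong assumptions, not the paper's system: each "program" is reduced to a set of jumps defining a circulant graph, the LLM rewrite is replaced by a random toggle, and all names (`count_violations`, `mutate`, `evolve`) are hypothetical.

```python
import random
from functools import lru_cache
from itertools import combinations

def count_violations(n, jumps, r, s):
    """Count r-cliques plus s-independent sets in the circulant graph
    on Z_n whose connection set is the jumps and their negatives."""
    conn = {j % n for j in jumps} | {(-j) % n for j in jumps}

    def adjacent(u, v):
        return (v - u) % n in conn

    cliques = sum(all(adjacent(u, v) for u, v in combinations(c, 2))
                  for c in combinations(range(n), r))
    indep = sum(not any(adjacent(u, v) for u, v in combinations(c, 2))
                for c in combinations(range(n), s))
    return cliques + indep

def mutate(jumps, n, rng):
    """Stand-in for the LLM rewrite (assumption): toggle one random jump."""
    j = rng.randrange(1, n // 2 + 1)
    return jumps ^ {j}  # symmetric difference keeps the frozenset type

def evolve(n, r, s, generations=30, pop_size=8, seed=0):
    rng = random.Random(seed)

    @lru_cache(maxsize=None)
    def score(jumps):
        return -count_violations(n, jumps, r, s)  # fewer violations is better

    population = [frozenset()]  # start from the empty graph
    for _ in range(generations):
        children = [mutate(rng.choice(population), n, rng) for _ in range(pop_size)]
        pool = set(population) | set(children)
        # Elitist selection: keep the best-scoring candidates.
        population = sorted(pool, key=score, reverse=True)[:pop_size]
    best = population[0]
    return best, score(best)

best, best_score = evolve(8, 3, 4)
print(sorted(best), best_score)
```

Because selection is elitist, the best score never decreases between generations; a score of 0 would mean the candidate is a fully valid graph for the chosen target.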

To decide which programs are good, AlphaEvolve gives them a score:

- Bigger valid graphs get higher scores (extra points if they beat the current best-known results).

- The “prospect” graph gets a bonus if it has fewer forbidden patterns than you’d expect by chance, encouraging the search to stretch toward larger sizes.
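To make the scoring idea concrete, here is a minimal sketch. The chance baseline is the standard expected count of $r$-cliques plus $s$-independent sets in a random graph $G(n, p)$. The tier weights (4, 2, 1) and the bonus coefficient 1/2 are the values mentioned in this summary, but the way `toy_score` combines them is an assumption, not the paper's formula.

```python
from math import comb

def expected_violations(n, r, s, p=0.5):
    """Expected number of r-cliques plus s-independent sets in G(n, p)."""
    cliques = comb(n, r) * p ** comb(r, 2)
    indep = comb(n, s) * (1 - p) ** comb(s, 2)
    return cliques + indep

def toy_score(valid_n, best_known_n, prospect_n, prospect_violations, r, s):
    """Hypothetical score: tier-weighted size of the valid graph plus a
    bonus when the prospect graph beats the random baseline."""
    if valid_n > best_known_n:
        weight = 4.0   # beyond the best-known size
    elif valid_n == best_known_n:
        weight = 2.0   # matches the best-known size
    else:
        weight = 1.0   # below the best-known size
    baseline = expected_violations(prospect_n, r, s)
    bonus = 0.5 * max(0.0, 1.0 - prospect_violations / baseline)
    return weight * valid_n + bonus

print(expected_violations(5, 3, 3))     # 10/8 + 10/8 = 2.5
print(toy_score(5, 5, 6, 0, 3, 3))      # 2 * 5 + 0.5 = 10.5
```

The bonus is clipped at zero so that a prospect graph with more violations than chance neither helps nor hurts; other shapings are equally plausible.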

The AI experiments started from different kinds of “seed” graphs (starting points), such as:

- Random graphs (like flipping a coin for each edge).

- Special algebraic constructions (like Paley or circulant graphs) known to have helpful structure.
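The two algebraic seed families named above have short textbook definitions, sketched below. The function names are illustrative, and the brute-force `avoids` check is included only to make the example self-contained; the paper's own seeding code is not shown in this summary.

```python
from itertools import combinations

def paley_edges(q):
    """Paley graph on Z_q for a prime q with q % 4 == 1:
    u ~ v when v - u is a nonzero quadratic residue mod q."""
    residues = {(x * x) % q for x in range(1, q)}
    return [(u, v) for u, v in combinations(range(q), 2) if (v - u) % q in residues]

def circulant_edges(n, jumps):
    """Circulant graph on Z_n: u ~ v when v - u is plus or minus a jump."""
    conn = {j % n for j in jumps} | {(-j) % n for j in jumps}
    return [(u, v) for u, v in combinations(range(n), 2) if (v - u) % n in conn]

def avoids(n, edges, r, s):
    """True if the graph has no r-clique and no s-independent set."""
    edge_set = set(edges)

    def uniform(c, want_edge):
        return all(((u, v) in edge_set) == want_edge for u, v in combinations(c, 2))

    return (not any(uniform(c, True) for c in combinations(range(n), r))
            and not any(uniform(c, False) for c in combinations(range(n), s)))

# Classical witnesses: the Paley graph on 17 vertices gives R(4, 4) >= 18,
# and the circulant graph on 13 vertices with jumps {1, 5} gives R(3, 5) >= 14.
print(avoids(17, paley_edges(17), 4, 4))
print(avoids(13, circulant_edges(13, [1, 5]), 3, 5))
```

Seeds like these carry a lot of symmetry, which is one plausible reason they make useful starting points: a search can perturb a few edges rather than build structure from scratch.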

Over time, the AI learned which starting points and tweaks worked for each target and produced tailored search strategies.

What did they find and why does it matter?

The AI improved five classical lower bounds (each by 1), meaning it found larger graphs that avoid the patterns than anyone had found before:

- $R(3, 13)$: 60 → 61

- $R(3, 18)$: 99 → 100

- $R(4, 13)$: 138 → 139

- $R(4, 14)$: 147 → 148

- $R(4, 15)$: 158 → 159

These improvements matter because even a +1 is hard-won in Ramsey theory—each step is a proof that a bigger “pattern-free” graph exists. Beyond the new records, the AI:

- Matched the best-known lower bounds for many other $R(r, s)$ cells.

- Recovered all cases where the exact value is known.

- Did so with one general approach that creates many specialized search algorithms, rather than hand-crafting a new algorithm for each case.

The team also made the new constructions (the actual graphs) public so others can check and build on them.

What’s the bigger picture?

- For lower bounds, building a single example graph on $n$ nodes is enough to prove $R(r, s) \geq n + 1$. This is perfect for AI-driven searches.

- For upper bounds, you must prove no larger graph exists. This is a different type of challenge that usually needs formal proofs. The paper notes recent proof progress (such as formal verification of an exact Ramsey value), but their AI method targets the “build examples” side.

In simple terms: this work shows that AI can act like a creative lab assistant for difficult math searches, discovering or rediscovering complex constructions across many problems with one unified system. It suggests a future where AI helps push the boundaries in other hard areas of combinatorics too—finding large, well-structured objects that humans haven’t yet imagined.

Knowledge Gaps

Unresolved gaps, limitations, and open questions

The paper establishes new and matched lower bounds for several small Ramsey numbers using AlphaEvolve but leaves multiple aspects unspecified or unexplored. The following concrete gaps can guide future work:

- Reproducibility details are missing:

- Exact LLM(s) used (model/version), prompting templates, mutation strategies, selection policy, population size, compute budget, hardware, and all hyperparameters (e.g., scoring weights 4×/2×/1×, bonus coefficient 1/2).

- Random seeds, number of runs per cell, runtime distributions, and success rates to quantify variability.

- Verification pipeline is not fully specified:

- Independent, external validation of the discovered graphs (beyond in-process counting) is not described; provision of independent checkers or SAT/MaxSAT encodings with certified proofs (e.g., DRAT) is absent.

- Full adjacency matrices/certificates are released only for the five improved cells; certificates for all matched bounds and isomorphism checks against known constructions are not provided.

- Risk of reward hacking by LLM-generated code is not addressed:

- No description of sandboxing or the use of trusted evaluation modules to prevent mutated programs from exploiting the scoring function (e.g., miscounting cliques/independent sets).

- Scoring function design lacks justification and ablations:

- The choice of weights for beyond-SoTA vs. at-SoTA vs. below-SoTA graphs and the prospect-graph bonus is ad hoc; ablation studies on these coefficients and alternative reward formulations are missing.

- The “expected violations” baseline assumes ; tuning or using analytic bounds tailored to regimes (e.g., Turán-type or known extremal densities) is not explored.

- Transferability of evolved algorithms is limited but unexamined:

- The paper observes poor cross-cell transfer yet does not quantify it or attempt meta-learning, curriculum learning, or representation sharing across tasks.

- Systematic experiments to determine which features (initialization family, move sets, tabu mechanisms) generalize across cells are missing.

- Initialization selection is only informally categorized:

- Criteria for choosing algebraic/circulant/Paley seeds are not formalized or automated; no search over seed families, parameter sweeps (e.g., modulus, residues), or seed-composition strategies is reported.

- Quantitative comparisons across initialization families for the same cell are absent.

- Scaling limits are untested:

- The approach is demonstrated only on parameter ranges where exact counting is feasible; scaling to larger instances (where clique/IS counting dominates) is not evaluated.

- Integration with faster exact/approximate clique and independent-set solvers (e.g., specialized branch-and-bound, SAT reductions, MaxSAT, or GPU-accelerated search) is not explored.

- Benchmarks against established baselines are incomplete:

- No head-to-head comparisons (time-to-solution, solution quality distribution) with canonical methods (simulated annealing, tabu search, circulant search, SAT/MaxSAT) on shared cells and budgets.

- Absence of standardized benchmarks and reporting of negative results or failure modes.

- Theoretical understanding is lacking:

- No guarantees on convergence, expected improvement, or sample complexity of AlphaEvolve; no analysis of why the “prospect” bonus correlates with eventual valid larger graphs.

- No formal characterization linking discovered heuristics to known extremal structures or to bounds from probabilistic methods.

- Sensitivity to LLM choice and contamination is unstudied:

- Effects of different LLMs, temperatures, and training data contamination (memorization of known graphs) are not measured; beyond R(3,3) and R(3,4), provenance checks for other cells are absent.

- Cross-LLM replication (e.g., Gemini vs. GPT vs. open models) is not reported.

- Limited transparency into the evolved programs:

- Only high-level summaries of algorithms are given; no release of the evolved code lineage, intermediate programs, or instrumentation that would enable interpretability analyses.

- Systematic extraction of common motifs (move operators, acceptance criteria, structure-preserving transformations) is not provided.

- Objective shaping and exploration policy need study:

- The impact of two-graph evaluation (G1 valid, G2 “prospect”) versus alternatives (e.g., multi-armed bandits over moves, intrinsic motivation, novelty search) is not analyzed.

- The “Select()” policy and population management (diversity preservation, elitism) are unspecified and not ablated.

- Coverage of the Ramsey grid is partial and uneven:

- Many cells remain unattempted or unreported; criteria for cell selection and compute allocation policies are unclear.

- No discussion of where the method plateaus (e.g., why only +1 improvements) and what additional ingredients might push further in the improved cells.

- Connections to upper bounds remain undeveloped:

- While upper bounds are noted as needing different techniques, concrete pathways to adapt AlphaEvolve to produce unsatisfiability certificates (e.g., evolving SAT encodings/solvers with proof logging) are not attempted.

- Structural exploitation is ad hoc:

- Constraint-driven searches within graph families (e.g., circulant, Cayley) are used, but a unified framework to impose, relax, or switch structural constraints adaptively is missing.

- Automated discovery of new algebraic/number-theoretic templates (beyond Paley/circulant) is not explored.

- Independent set counting methodology is unspecified:

- Exact vs. approximate counting strategies, pruning, or use of symmetry reductions are not documented, limiting reproducibility and future scaling.

- Lack of robustness analysis:

- No study of performance under different random seeds, failure probabilities, or confidence intervals on achieved bounds.

- No stress-tests for code robustness across environments or for deterministic replay.

- Novelty claims are not rigorously substantiated:

- Claims that some search strategies are novel lack detailed side-by-side algorithmic descriptions and bibliographic coverage; providing code and formal descriptions would strengthen this.

- Data and artifact release is incomplete:

- Besides five constructions, there is no full release of code, prompts, logs, evaluation harnesses, or the full set of discovered graphs for matched bounds, hindering community validation and extension.
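One of the verification gaps listed above concerns independent checkers and SAT encodings. As a minimal sketch of what such an encoding could look like (an assumption, not something the paper provides), the code below uses one Boolean variable per vertex pair and one clause per $r$-subset and per $s$-subset, and emits the standard DIMACS CNF text format. The names `ramsey_cnf`, `to_dimacs`, and `satisfies` are hypothetical.

```python
from itertools import combinations

def ramsey_cnf(n, r, s):
    """CNF whose models are exactly the graphs on n vertices with
    no r-clique and no s-independent set."""
    # Variable i+1 is true when the i-th vertex pair is an edge.
    var = {pair: i + 1 for i, pair in enumerate(combinations(range(n), 2))}
    clauses = []
    for c in combinations(range(n), r):
        # Not an r-clique: at least one pair inside c is a non-edge.
        clauses.append([-var[p] for p in combinations(c, 2)])
    for c in combinations(range(n), s):
        # Not an s-independent set: at least one pair inside c is an edge.
        clauses.append([var[p] for p in combinations(c, 2)])
    return var, clauses

def to_dimacs(num_vars, clauses):
    """Serialize clauses in DIMACS CNF format."""
    lines = [f"p cnf {num_vars} {len(clauses)}"]
    lines += [" ".join(map(str, cl)) + " 0" for cl in clauses]
    return "\n".join(lines)

def satisfies(edges, var, clauses):
    """Check a concrete graph against the clauses without a solver."""
    true_vars = {var[tuple(sorted(e))] for e in edges}
    return all(any((lit > 0) == (abs(lit) in true_vars) for lit in cl)
               for cl in clauses)

var, clauses = ramsey_cnf(5, 3, 3)
c5 = [(i, (i + 1) % 5) for i in range(5)]
print(to_dimacs(len(var), clauses).splitlines()[0])
print(satisfies(c5, var, clauses))
```

A satisfying assignment is a witness graph and therefore a lower bound; an unsatisfiability proof for the same formula at $n$ vertices would give the upper bound $R(r, s) \leq n$, which is where proof-logging solvers would come in.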

Practical Applications

Overview

The paper introduces AlphaEvolve, an LLM-driven program-evolution framework that discovers search algorithms for constructing extremal combinatorial objects. Using a single meta-search process with surrogate scoring (including a “prospect graph” violation-reduction bonus), it improves several classical Ramsey lower bounds and matches many others. The practical value extends beyond Ramsey theory: AlphaEvolve operationalizes a general workflow for automatically discovering effective heuristics for hard combinatorial search, with reusable components such as program mutation, population selection, domain-aware initialization (e.g., algebraic/circulant graphs), and fast approximate evaluation.

Below are actionable applications derived from these findings and methods, grouped by near-term deployability and longer-term potential. Each entry lists suggested sectors and notes important dependencies or assumptions.

Immediate Applications

- Auto-heuristic discovery for small/medium combinatorial optimization

- Sector(s): Software, logistics/operations research, telecom

- What: Use the AlphaEvolve-style meta-search (LLM-guided code mutation + surrogate rewards) to generate problem-specific heuristics for routing, scheduling, bin packing, clustering, and topology design on moderate instance sizes.

- Tools/workflows: Integrate with OR-Tools or local solvers; maintain a population of candidate Python heuristics; score candidates via proxy metrics (e.g., violation counts, partial objective improvements).

- Assumptions/dependencies: Reliable evaluators for candidate quality; access to competent LLMs; compute budget for many runs; domain-specific seeds often improve outcomes.

- Program-synthesis layer for search-based engineering tasks

- Sector(s): EDA/chip design, software performance tuning

- What: Evolve scripts that compose multiple heuristics (tabu search, annealing, greedy growth) for placement, routing, or auto-tuning compilation passes.

- Tools/workflows: “Meta-heuristic studio” in CI/CD that proposes and tests mutated optimization pipelines on a benchmark suite.

- Assumptions/dependencies: Stable benchmarking harness; guardrails to prevent regressions; human-in-the-loop for safety/acceptance.

- Constraint-guided search using “prospect” scoring

- Sector(s): Software testing, security, networking

- What: Apply the paper’s violation-aware scoring (rewarding candidates that reduce expected violation counts vs. random baselines) to drive near-feasible solution discovery when feasibility is rare or hard to certify.

- Tools/workflows: Oracles to count or approximate constraint violations; stochastic sampling for expectation baselines.

- Assumptions/dependencies: Reasonable proxy metrics that correlate with true feasibility; Goodharting risks mitigated via periodic exact checks.

- Automated discovery of extremal/combinatorial constructions in academia

- Sector(s): Academia (mathematics/theoretical CS)

- What: Replicate and extend lower bounds for other small-parameter extremal problems (e.g., girth-vs-degree graphs, triangle-free constructions with high chromatic number).

- Tools/workflows: Port the AlphaEvolve harness; include algebraic/circulant initializations; develop fast substructure counters.

- Assumptions/dependencies: Efficient exact/approximate counting tools; curated seeds (Paley/cubic residue/circulant); compute quotas.

- Benchmark suites and reproducibility artifacts

- Sector(s): Academia, software

- What: Use the released Ramsey constructions and harness to build public benchmarks for search algorithm evaluations.

- Tools/workflows: Repository of graphs (sparse6 formats), scripts for verification, logging seeds and mutations.

- Assumptions/dependencies: Clear licensing for AI-generated code/data; reproducibility protocols (fixed RNG seeds, evaluator versions).

- Curriculum modules for combinatorics and algorithms

- Sector(s): Education

- What: Classroom labs where students evolve heuristics to reach known Ramsey bounds; compare seeds (random vs. Paley/circulant) and scoring strategies.

- Tools/workflows: Prepackaged notebooks with counters and mutation prompts; leaderboards for bounds attained.

- Assumptions/dependencies: Modest compute; faculty oversight to interpret outcomes and avoid LLM memorization pitfalls.

- Code taxonomy and documentation via AI summarization

- Sector(s): Software engineering, academia

- What: Adopt the paper’s workflow of using an LLM to cluster and summarize evolved code into families (e.g., random, algebraic, circulant initializations).

- Tools/workflows: Static analysis + AI summaries stored alongside code; auto-generated READMEs describing heuristics used.

- Assumptions/dependencies: Human verification of AI summaries; maintain provenance to avoid incorrect documentation.

- Stress-testing algorithms with adversarial/extremal instances

- Sector(s): Networking, databases, distributed systems

- What: Evolve graphs or inputs that are hard cases for existing algorithms (e.g., coloring, cut, cluster detection), improving robustness testing.

- Tools/workflows: Plug evolved instance generators into fuzzing frameworks; compare performance deltas across versions.

- Assumptions/dependencies: Fast evaluators for “hardness” proxies (e.g., violation density, solver time); sandboxing for resource use.

- Rapid prototyping of hybrid heuristic pipelines

- Sector(s): Software/AI engineering

- What: Automatically chain diverse heuristics (e.g., greedy → local search → spectral filter) as AlphaEvolve often does; deploy the best pipeline as a microservice.

- Tools/workflows: Component library of heuristics with standard interfaces; mutation operators that permute/compose them.

- Assumptions/dependencies: Monitoring to catch regressions; cost controls for search runs.
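The "prospect" scoring entry above mentions stochastic sampling for expectation baselines. A minimal sketch of that idea, kept in the Ramsey setting for concreteness, estimates the chance-level violation count by sampling random graphs. The sampler, the violation counter, and the default sample size are all illustrative assumptions; other domains would substitute their own constraint oracle.

```python
import random
from itertools import combinations

def count_violations(n, adj, r, s):
    """Number of r-cliques plus s-independent sets in an adjacency matrix."""
    cliques = sum(all(adj[u][v] for u, v in combinations(c, 2))
                  for c in combinations(range(n), r))
    indep = sum(not any(adj[u][v] for u, v in combinations(c, 2))
                for c in combinations(range(n), s))
    return cliques + indep

def sampled_baseline(n, r, s, samples=2000, p=0.5, seed=0):
    """Monte Carlo estimate of the expected violation count in G(n, p)."""
    rng = random.Random(seed)
    total = 0
    for _ in range(samples):
        adj = [[False] * n for _ in range(n)]
        for u, v in combinations(range(n), 2):
            adj[u][v] = adj[v][u] = rng.random() < p
        total += count_violations(n, adj, r, s)
    return total / samples

def beats_chance(violations, baseline):
    """A candidate earns credit only if it has fewer violations than chance."""
    return violations < baseline

baseline = sampled_baseline(5, 3, 3)
print(baseline, beats_chance(0, baseline))
```

For this small case the exact expectation is 2.5, so the estimate should land close to that value; sampling becomes the practical option when no closed-form baseline is available.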

Long-Term Applications

- General AI “algorithm engineer” for combinatorial optimization at scale

- Sector(s): Cross-industry (logistics, telecom, manufacturing, finance)

- What: A platform that continuously evolves domain-specialized heuristics for large instances, learning initialization families and reward shaping per problem class.

- Tools/products: Enterprise “Meta-Optimization Platform” that co-designs heuristics with solvers (MIP/CP/SAT/RL).

- Assumptions/dependencies: Stronger LLMs; significant compute; scalable evaluators and simulators; IP/governance for AI-generated methods.

- Scientific discovery copilots for extremal combinatorics and beyond

- Sector(s): Academia, R&D

- What: Systematically explore conjectures by evolving constructions, mining patterns across successes, and proposing candidate generalizations.

- Tools/workflows: Integration with formal methods to certify upper bounds, SAT/MaxSAT back-ends for exactness, and proof assistants.

- Assumptions/dependencies: Toolchain maturity for formal verification; workflow to translate search insights into proofs.

- Design-of-experiments and trial scheduling heuristics

- Sector(s): Healthcare, life sciences, manufacturing

- What: Evolve heuristics for combinatorial design (e.g., balanced assignments, cohort schedules) with feasibility and fairness constraints.

- Tools/products: “Auto-DOE” module linked to clinical trial or factory scheduling systems.

- Assumptions/dependencies: High-fidelity simulators/evaluators; regulatory constraints; robust feasibility oracles.

- Grid and market optimization under complex constraints

- Sector(s): Energy, utilities

- What: Generate domain-tuned heuristics for unit commitment, network reconfiguration, or market clearing that respect temporal and security constraints.

- Tools/products: Co-optimization plugins to EMS/SCADA; “prospect” scoring to reduce violation risk before full feasibility checks.

- Assumptions/dependencies: Real-time data interfaces; safety validation; operator-in-the-loop oversight.

- Network topology and resilience co-design

- Sector(s): Telecom, data centers

- What: Learn topologies that avoid undesirable substructures (e.g., to reduce congestion or failure cascades), inspired by subgraph-avoidance in Ramsey constructions.

- Tools/products: Topology generator integrated with traffic simulators; rollback/blue-green deployment for trials.

- Assumptions/dependencies: Accurate performance and reliability simulators; staged deployment and monitoring.

- Robotics task and motion planning heuristics

- Sector(s): Robotics, manufacturing

- What: Evolve composite planning heuristics (sampling, local optimization, heuristic pruning) tailored to facility layouts and tasks.

- Tools/products: Planner plugins that adapt to environment statistics; offline evolution, online execution.

- Assumptions/dependencies: Fast physics/collision evaluators as scoring oracles; safety constraints; sim-to-real gaps addressed.

- Finance optimization and market mechanism design

- Sector(s): Finance, ad tech

- What: Heuristic discovery for portfolio selection with combinatorial constraints or bidding strategies in combinatorial auctions.

- Tools/products: Sandbox “AlphaEvolve-Fin” with backtesting; risk-aware surrogate objectives.

- Assumptions/dependencies: Strong risk controls; regulatory compliance; explainability for high-stakes decisions.

- Integrated AI+formal pipelines for bounds and certification

- Sector(s): Academia, assurance tooling

- What: Combine evolved lower-bound constructions with automated refutation/upper-bound tools (SAT, MaxSAT, proof assistants) for tight results.

- Tools/workflows: Continuous loop: generate candidates → certify → refine reward shaping.

- Assumptions/dependencies: Scalable certification; proof artifact standards.

- Governance frameworks for AI-generated scientific artifacts

- Sector(s): Policy, publishing, funding agencies

- What: Standards for releasing AI-discovered algorithms (code, seeds, evaluations), credit assignment, and reproducibility checklists.

- Tools/workflows: Audit trails for LLM prompts and mutations; artifact repositories with verifiers.

- Assumptions/dependencies: Community consensus; infrastructure for artifact evaluation; ethical/IP policies.

- Educational platforms for algorithmic creativity

- Sector(s): Education/EdTech

- What: Platforms where learners co-create algorithms with LLMs, seeing how seeds and reward shaping alter outcomes; competitions on extremal tasks.

- Tools/products: Gamified interfaces; instructor dashboards; automated feedback on search strategies.

- Assumptions/dependencies: Guardrails against memorization; equitable access to compute.

Cross-cutting assumptions and risks

- Access to capable LLMs and sufficient compute budget for evolutionary search.

- Existence of fast, reliable evaluators (exact or approximate); surrogate metrics must correlate with true objectives to avoid Goodhart’s law.

- Domain-specific seeding often matters (e.g., Paley/circulant/algebraic constructions); portability across problem families is limited, as observed in the paper.

- Necessity of rigorous verification and reproducibility (logs, seeds, versions); consider formal methods for high-stakes settings.

- Legal/IP and licensing clarity for AI-generated code and constructions; governance for credit and accountability.

- Energy/cost considerations for iterative meta-search at scale.

Glossary

- AlphaEvolve: An LLM-driven code-mutation system that evolves programs for searching extremal graphs. "AlphaEvolve is a single meta-algorithm yielding search algorithms for all of our results."

- branch-and-bound: A systematic combinatorial search technique that prunes subproblems using bounds. "who employed a branch-and-bound search on circulant colorings."

- Cayley graph: A graph defined from a group and a generating set, with edges encoding group multiplication by generators. "diverges from the Cayley graph initialization of~\cite{exoo2015new}"

- circulant graph: A graph whose adjacency structure is invariant under cyclic shifts of vertex labels. "used a class of circulant graphs with varying periodicities."

- clique: A set of vertices in a graph all pairwise adjacent. "either has a clique of size r"

- clique counting: The algorithmic task of enumerating or estimating the number of cliques in a graph. "clique and independent set counting is often a computational bottleneck"

- cubic graph: A 3-regular graph in which every vertex has degree three. "initialized the search with a cubic graph"

- cubic residue graph: An algebraically defined graph built using cubic residues modulo a prime. "cubic residue and Paley graphs, respectively"

- cyclic graph: Here, a graph constructed to respect a cyclic symmetry or ordering of vertices. "opts for a cyclic graph initialization."

- extremal combinatorial objects: Structures that achieve best-possible (extremal) values for combinatorial parameters. "the discovery of extremal combinatorial objects"

- extremal combinatorics: The study of maximizing or minimizing combinatorial quantities under constraints. "Our work lies within the domain of extremal combinatorics"

- formal methods: Mechanized logical techniques (e.g., proof assistants, SAT/SMT) used to prove mathematical statements. "formal methods have been successfully employed to prove that "

- G(n, p): The binomial random graph model on n vertices with independent edge probability p. "Initialization: Random graphs (G(n, p)) or empty/greedy baseline."

- independent set: A set of vertices in a graph with no edges between them. "an independent set of size s"

- Kakeya problem: A geometric-combinatorial problem over finite fields concerning sets containing lines in every direction. "improving bounds for the finite field Kakeya problem"

- large language models (LLMs): Neural language models used here to mutate and improve search programs. "uses LLMs (Large-Language-Models) to iteratively evolve code-snippets"

- lower bound: A value that the true quantity is guaranteed to meet or exceed; here, a graph on n vertices with no r-clique and no s-independent set shows R(r,s) ≥ n+1. "focus on improving lower bounds on R(r,s)"

- meta-algorithm: A higher-level procedure that generates or orchestrates other algorithms. "AlphaEvolve is a single meta-algorithm yielding search algorithms"

- Paley graph: A strongly regular graph constructed from quadratic residues over finite fields. "cubic residue and Paley graphs, respectively"

- prospect graph: In the paper’s scoring framework, a larger candidate graph (G2) used to gauge progress via violation counts. "a larger \"prospect\" graph G2."

- quadratic residue graph: A graph defined via quadratic residues modulo a prime (e.g., Paley-type constructions). "Explicit seeding with Paley graphs, cubic, and quadratic residue graphs."

- Ramanujan graph: A highly expanding regular graph with eigenvalues meeting optimal bounds. "discovering extremal Ramanujan graphs"

- Ramsey number: The smallest n, written R(r,s), such that every graph on n vertices contains either a clique of size r or an independent set of size s. "Ramsey numbers have been extensively studied in the literature"

- simulated annealing: A stochastic optimization heuristic inspired by annealing in metallurgy. "the standard simulated annealing employed in~\cite{exoo2015new}"

- state-of-the-art (SoTA): The best known results or methods at the time of writing. "the previous state-of-the-art (SoTA) for the entries"

- sum-free set: A subset of an abelian group with no solutions to x+y=z within the set. "Bootstrapped from circulant graphs, sum-free sets, or cyclic constructions."

- synthetic objective function: A proxy scoring function used to guide search when the true objective is hard to optimize directly. "typically this process uses a synthetic objective function to guide AlphaEvolve"

- tabu search: A metaheuristic that uses memory structures to avoid cycling back to recently visited solutions. "integrates sophisticated tabu search mechanisms with sequential growth."

- upper bound: A value that the true quantity is guaranteed not to exceed; here, a number n such that every graph on n vertices contains an r-clique or an s-independent set, so R(r,s) ≤ n. "matching current best-known lower bounds (where the upper bound remains strictly higher)"

- witness graph: A constructed example that certifies a combinatorial bound (e.g., showing R(r,s) ≥ n+1). "generate a witness graph of a target size"
