- The paper presents BayesFusion-SDF, which integrates heteroscedastic Bayesian inference with a coarse TSDF reconstruction to achieve probabilistic 3D geometry fusion.

- It employs sparse linear algebra and randomized diagonal estimators to quantify uncertainty and enable effective next-best-view planning on a CPU platform.

- Experimental results show improved geometric accuracy, measured with metrics such as Chamfer distance, and point to the method's potential for uncertainty-aware 3D reconstruction applications.

BayesFusion-SDF: Probabilistic Signed Distance Fusion with View Planning on CPU

Introduction

The paper presents BayesFusion-SDF, a CPU-focused framework for probabilistic signed distance fusion aimed at dense 3D reconstruction. Traditional volumetric fusion methods based on the truncated signed distance function (TSDF) are deterministic and efficient, but they lack principled uncertainty modeling, which limits their use in uncertainty-aware tasks such as view planning and decision-making. Neural implicit methods achieve high-fidelity reconstructions but often require intensive GPU resources and complicate downstream decision-making. BayesFusion-SDF seeks to bridge this gap with a sparse Gaussian random field (GRF) formulation designed to run on a CPU, giving a reconstruction of 3D geometry that is both probabilistic and efficient.

Methodology

The proposed method begins with a coarse TSDF reconstruction, which defines an adaptive narrow-band domain. Within this band, depth observations are fused using a heteroscedastic Bayesian formulation, that is, one in which each observation carries its own noise variance. The framework uses sparse linear algebra and preconditioned conjugate gradients to solve the resulting optimization problem, and it estimates uncertainty with randomized diagonal estimators. Together these enable both surface extraction and next-best-view (NBV) planning under uncertainty.
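As a rough illustration of these steps, the sketch below fuses noisy observations on a toy 1D chain of voxels instead of a 3D narrow band. It is not the paper's implementation: the chain Laplacian prior, the smoothness weight `lam`, the anchoring weight `kappa`, the Jacobi preconditioner, the Rademacher-probe (Hutchinson-style) diagonal estimator, and all numeric values are assumptions chosen for readability.

```python
import math
import random

def apply_precision(x, lam, w):
    """Multiply by A = lam * L + diag(w); L is the 1D chain graph Laplacian."""
    n = len(x)
    out = []
    for i in range(n):
        acc = w[i] * x[i]
        if i > 0:
            acc += lam * (x[i] - x[i - 1])
        if i < n - 1:
            acc += lam * (x[i] - x[i + 1])
        out.append(acc)
    return out

def pcg(b, lam, w, tol=1e-10, max_iter=500):
    """Jacobi-preconditioned conjugate gradients for A x = b."""
    n = len(b)
    # Diagonal of A: data/anchor weight plus lam times the node degree.
    diag = [w[i] + lam * ((i > 0) + (i < n - 1)) for i in range(n)]
    x = [0.0] * n
    r = list(b)
    z = [ri / di for ri, di in zip(r, diag)]
    p = list(z)
    rz = sum(ri * zi for ri, zi in zip(r, z))
    stop = tol * math.sqrt(sum(bi * bi for bi in b))
    for _ in range(max_iter):
        if math.sqrt(sum(ri * ri for ri in r)) <= stop:
            break
        Ap = apply_precision(p, lam, w)
        alpha = rz / sum(pi * ai for pi, ai in zip(p, Ap))
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * ai for ri, ai in zip(r, Ap)]
        z = [ri / di for ri, di in zip(r, diag)]
        rz_new = sum(ri * zi for ri, zi in zip(r, z))
        p = [zi + (rz_new / rz) * pi for zi, pi in zip(z, p)]
        rz = rz_new
    return x

def hutchinson_diag_inverse(lam, w, num_probes=200, seed=0):
    """Estimate diag(A^-1) by averaging z * (A^-1 z) over Rademacher probes z."""
    rng = random.Random(seed)
    n = len(w)
    acc = [0.0] * n
    for _ in range(num_probes):
        z = [rng.choice((-1.0, 1.0)) for _ in range(n)]
        x = pcg(z, lam, w)
        acc = [a + zi * xi for a, zi, xi in zip(acc, z, x)]
    return [a / num_probes for a in acc]

# Hypothetical toy data: coarse TSDF values, depth-derived observations,
# and per-observation noise (None marks an unobserved voxel).
lam, kappa = 1.0, 0.1
tsdf0 = [0.4, 0.3, 0.2, 0.1, 0.0, -0.1, -0.2, -0.3]
depth_obs = [0.42, 0.31, 0.19, 0.12, 0.05, -0.15, None, None]
sigma = [0.05, 0.05, 0.05, 0.05, 0.5, 0.5, None, None]

# Heteroscedastic weights: each observation contributes precision 1 / sigma^2.
w = [kappa + (0.0 if s is None else 1.0 / s**2) for s in sigma]
b = [kappa * m + (0.0 if y is None else y / s**2)
     for m, y, s in zip(tsdf0, depth_obs, sigma)]

mu = pcg(b, lam, w)                     # posterior mean of the signed distance
var = hutchinson_diag_inverse(lam, w)   # approximate posterior variance
```

In this toy setup the estimated variance is small where precise observations exist and grows toward the unobserved end of the chain, which is the kind of signal a view planner can act on.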

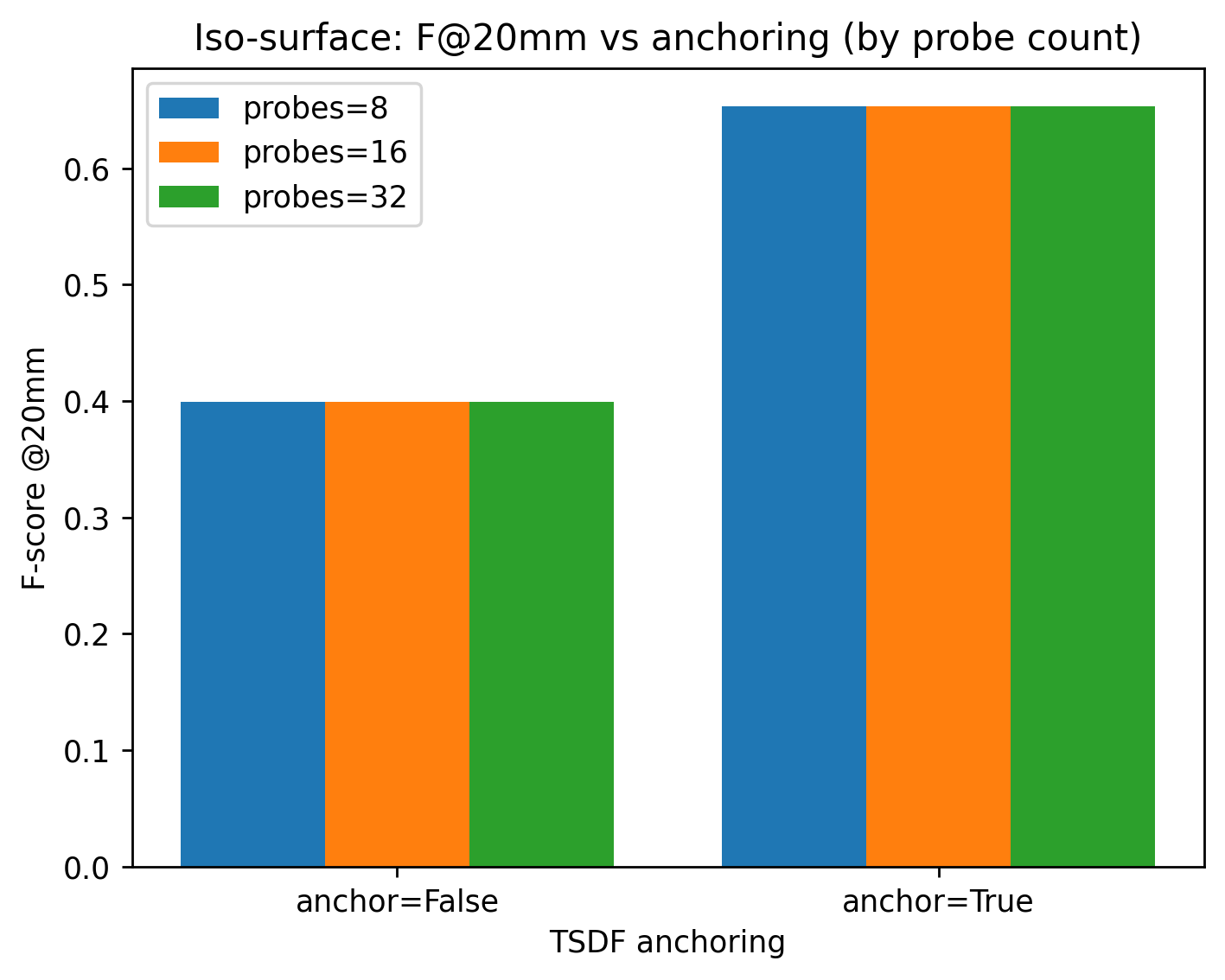

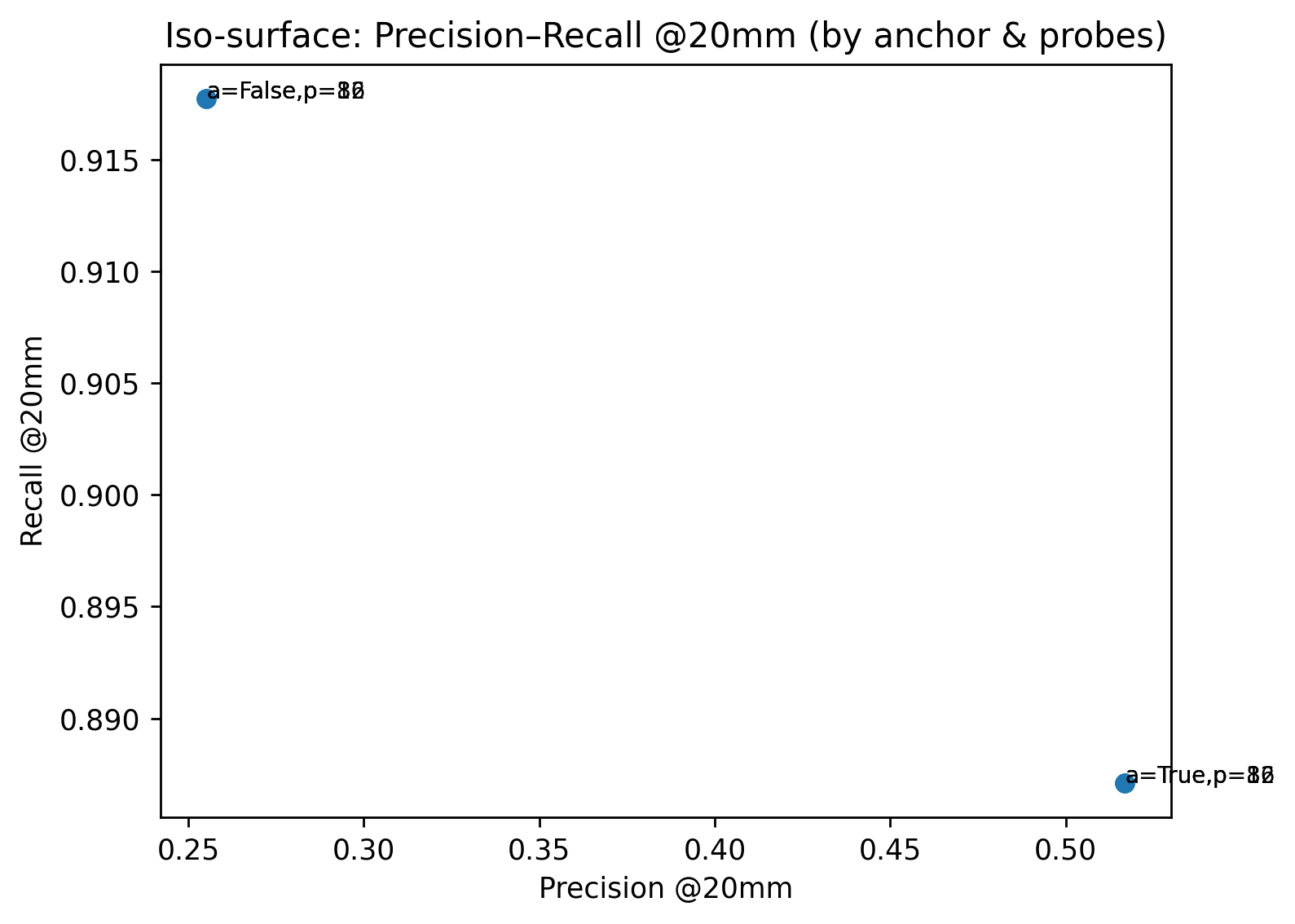

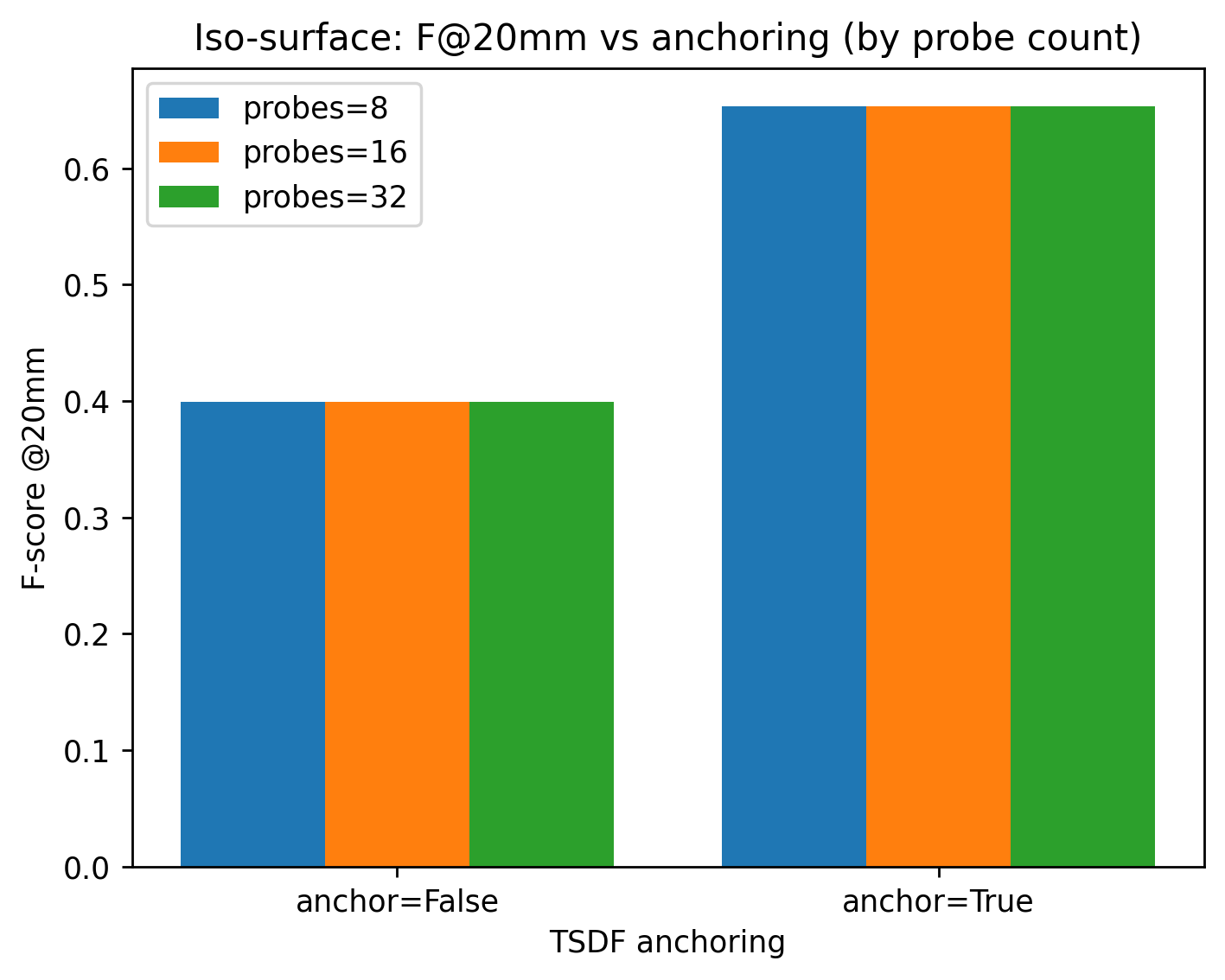

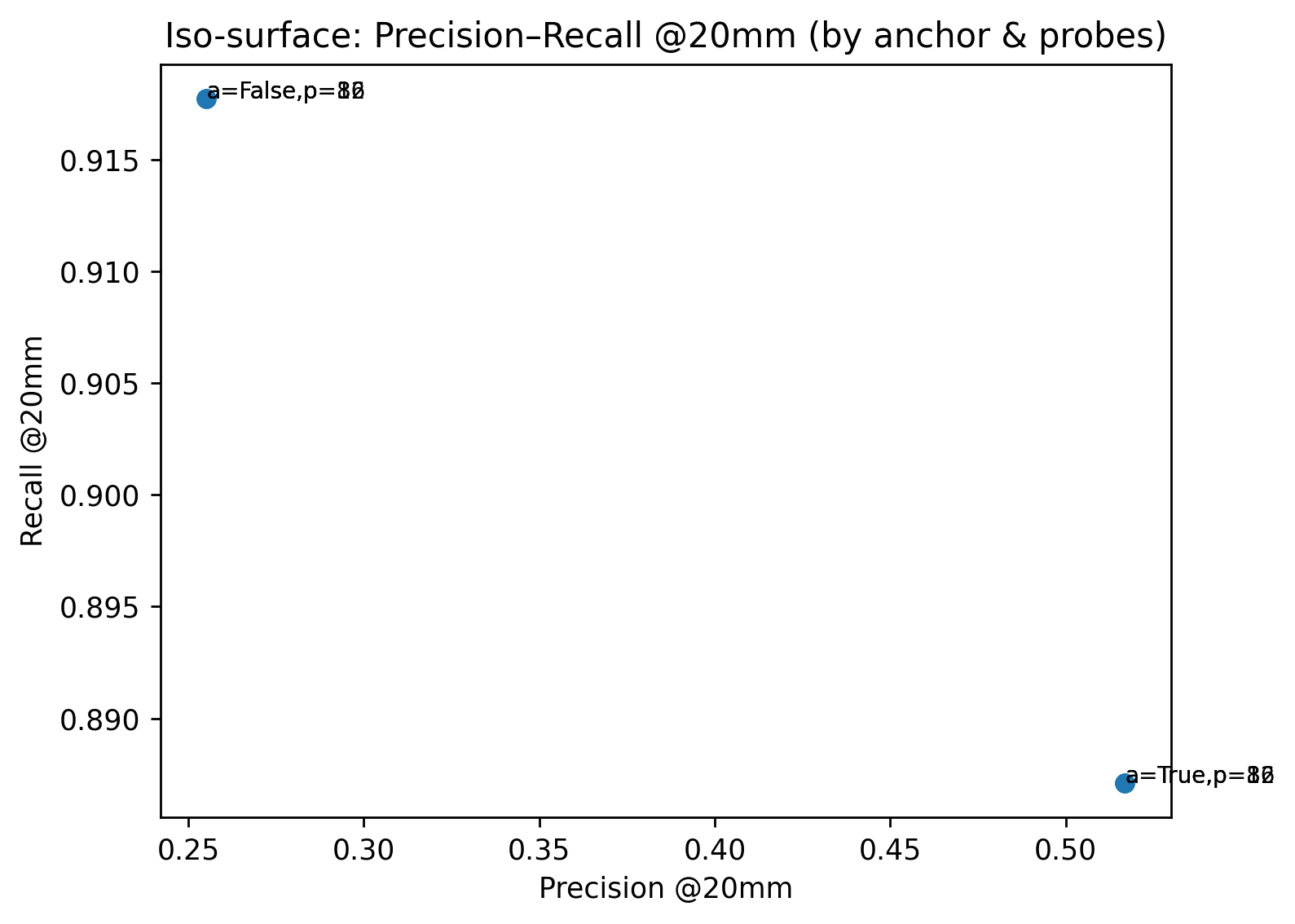

Figure 1: TSDF Anchoring Effect

The pipeline scales through a sparse hierarchical domain representation and uses a Gaussian Markov random field (GMRF) formulation, whose sparse precision matrix suits CPU-only deployment. Because uncertainty is modeled explicitly during reconstruction, the framework supports active NBV planning, which aims to maximize information gain as the reconstruction proceeds.
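To make the link between posterior variance and view selection concrete, here is a minimal sketch of one possible NBV rule. The utility used, the sum of per-voxel Gaussian information gains 0.5 * log(1 + variance / noise variance) with voxels treated as independent, is an assumption and may differ from the utility defined in the paper. The candidate view names, visibility sets, and variance values are hypothetical.

```python
import math

def view_utility(visible, variance, noise_var):
    """Sum of per-voxel information gains, treating voxels as independent."""
    return sum(0.5 * math.log(1.0 + variance[i] / noise_var) for i in visible)

def next_best_view(candidates, variance, noise_var):
    """Return the name of the candidate view with the highest utility."""
    return max(
        candidates,
        key=lambda name: view_utility(candidates[name], variance, noise_var),
    )

# Hypothetical per-voxel posterior variances near the surface.
variance = [0.002, 0.003, 0.2, 0.9, 1.5, 1.6]

# Hypothetical candidate views, each listing the voxel indices it would observe.
candidates = {
    "front": [0, 1, 2],
    "side": [2, 3],
    "back": [3, 4, 5],
}

best = next_best_view(candidates, variance, noise_var=0.01)
```

The rule favors views that cover voxels whose variance is large relative to the expected sensor noise, so well-observed regions contribute little to the score.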

Experimental Results

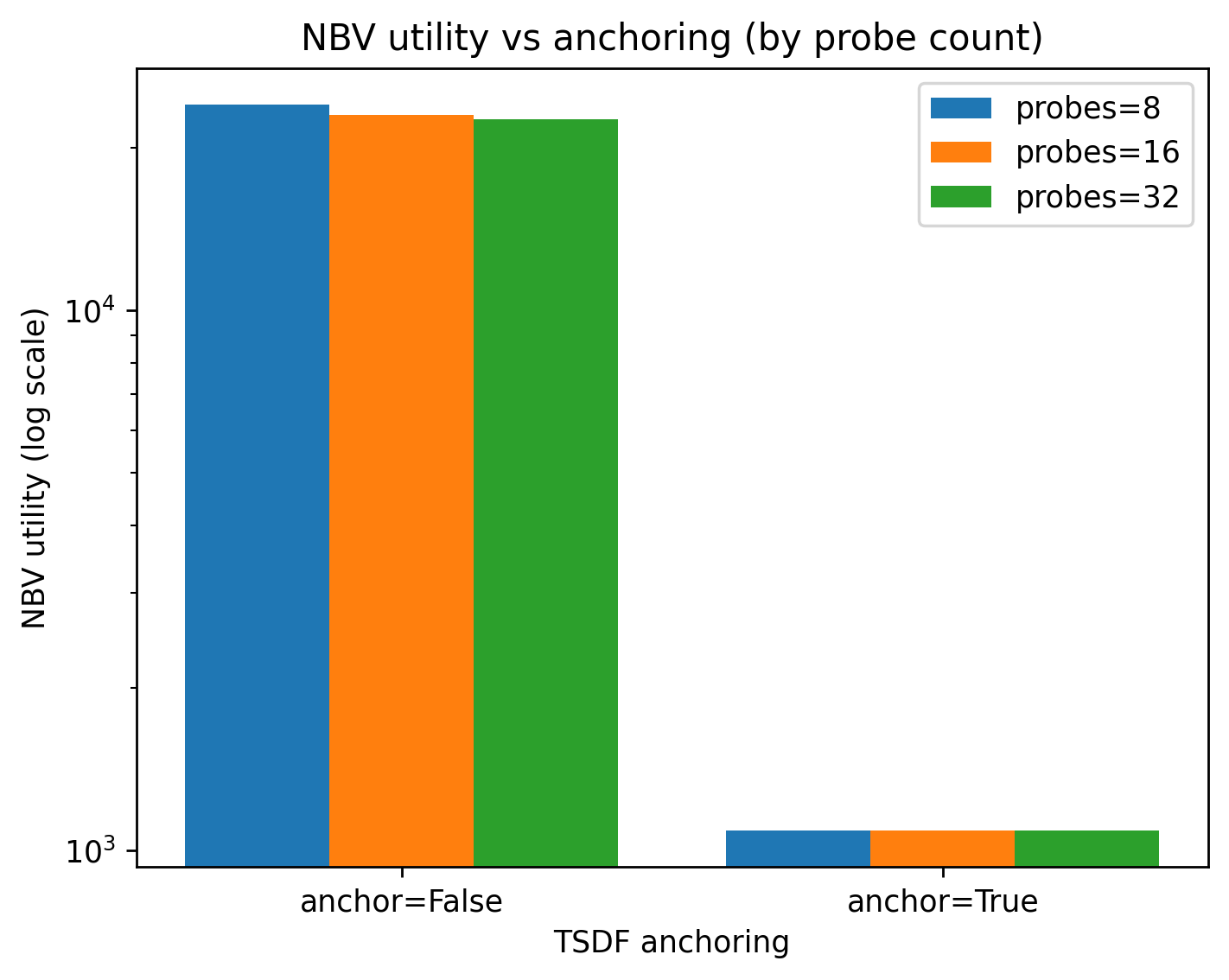

Experiments were conducted on controlled scenes and CO3D object sequences. The method achieved higher geometric accuracy than traditional TSDF baselines, as measured by Chamfer distance, mean accuracy, and mean completeness.
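For readers unfamiliar with these metrics, the following brute-force sketch shows one common way to compute them on small point sets. The conventions here are assumptions, since definitions vary between papers: accuracy is taken as the mean distance from each reconstructed point to its nearest ground-truth point, completeness as the mean distance in the opposite direction, and Chamfer distance as the average of the two. The point sets are hypothetical.

```python
import math

def mean_nearest_distance(src, dst):
    """Mean Euclidean distance from each point in src to its nearest point in dst."""
    return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)

def reconstruction_metrics(recon, gt):
    """Accuracy, completeness, and symmetric Chamfer distance (assumed conventions)."""
    accuracy = mean_nearest_distance(recon, gt)      # reconstruction -> ground truth
    completeness = mean_nearest_distance(gt, recon)  # ground truth -> reconstruction
    return {
        "accuracy": accuracy,
        "completeness": completeness,
        "chamfer": 0.5 * (accuracy + completeness),
    }

# Hypothetical example: the reconstruction is slightly offset and misses one point.
gt = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
recon = [(0.0, 0.0, 0.1), (1.0, 0.0, 0.1)]

metrics = reconstruction_metrics(recon, gt)
```

In this example the missing point affects completeness but not accuracy, which is why the two are usually reported together. For realistic point counts, a spatial index such as a k-d tree would replace the quadratic nearest-neighbor search.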

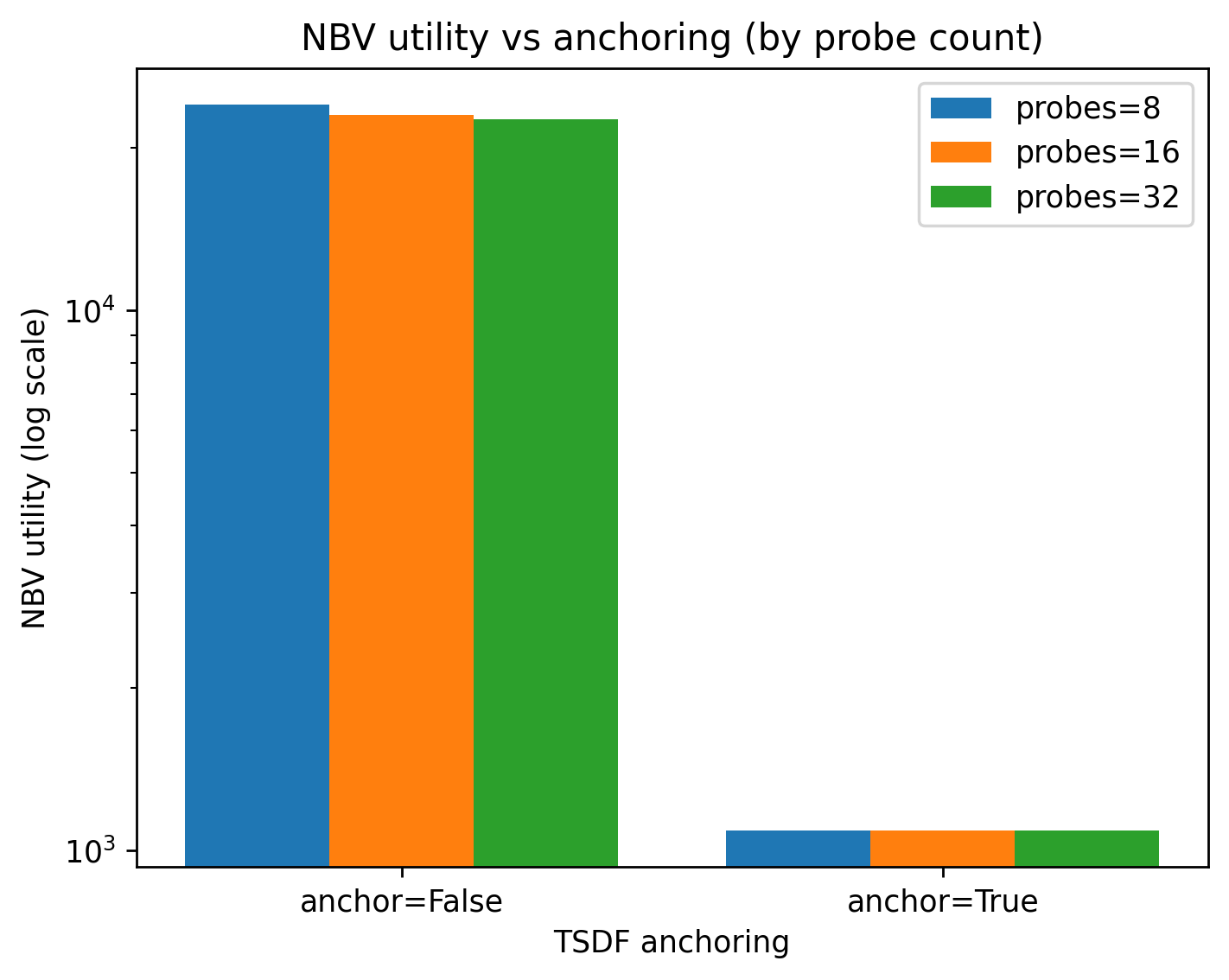

Figure 2: NBV Utility Trends

The results indicate that the method can deliver high geometric fidelity together with an effective uncertainty representation, making it suitable for active sensing applications where GPU-intensive neural methods are impractical.

Discussion and Limitations

BayesFusion-SDF advances the state of the art by incorporating probabilistic inference into volumetric fusion without the added complexity and resource demands of neural networks. The probabilistic formulation does, however, introduce additional memory and computation overhead, so further optimization is needed to scale to larger scenes and higher resolutions.

Improved solver methods, adaptive parameter selection, and integration with autonomous exploration systems remain necessary before the method can be applied to dynamic environments and real-world scenarios.

Conclusion

BayesFusion-SDF represents a significant step forward in CPU-based 3D reconstruction by systematically integrating probabilistic modeling and uncertainty estimation into the workflow. It maintains the computational simplicity of classical volumetric techniques while introducing principled Bayesian inference methods. The ability to estimate posterior variance near reconstructed surfaces provides actionable insights for applications that demand both precise geometry and uncertainty-awareness, such as robotics and autonomous navigation.

By avoiding the resource demands of neural methods, the framework offers a practical option for real-time deployment in resource-constrained environments.