- The paper introduces TraceMem, a novel memory architecture that consolidates episodic conversations into coherent narrative memory schemata.

- It segments dialogues into episodes with XML-based tagging and uses multi-stage clustering (PCA/UMAP, HDBSCAN, KNN) to consolidate them into structured memory.

- On the LoCoMo benchmark, it shows significant gains in multi-hop and temporal reasoning, and its overall accuracy exceeds some baselines (e.g., A-mem) by more than 30%.

TraceMem: Constructing Narrative Memory Schemata from User Conversational Traces

Introduction and Motivation

TraceMem introduces a cognitively inspired memory system that targets the challenge of sustaining long-term coherence in conversational AI, particularly for LLMs with limited context windows. Current memory architectures largely treat dialogues as fragmented, contextually isolated units, which hinders agentic reasoning and persistent persona formation. TraceMem addresses this deficiency by weaving fragmented conversational experiences into coherent, evolving narrative schemata, drawing on principles from human memory consolidation and personal semantics theory. The system orchestrates multi-stage memory construction and retrieval to support longitudinal, agentic interaction and stateful dialogue.

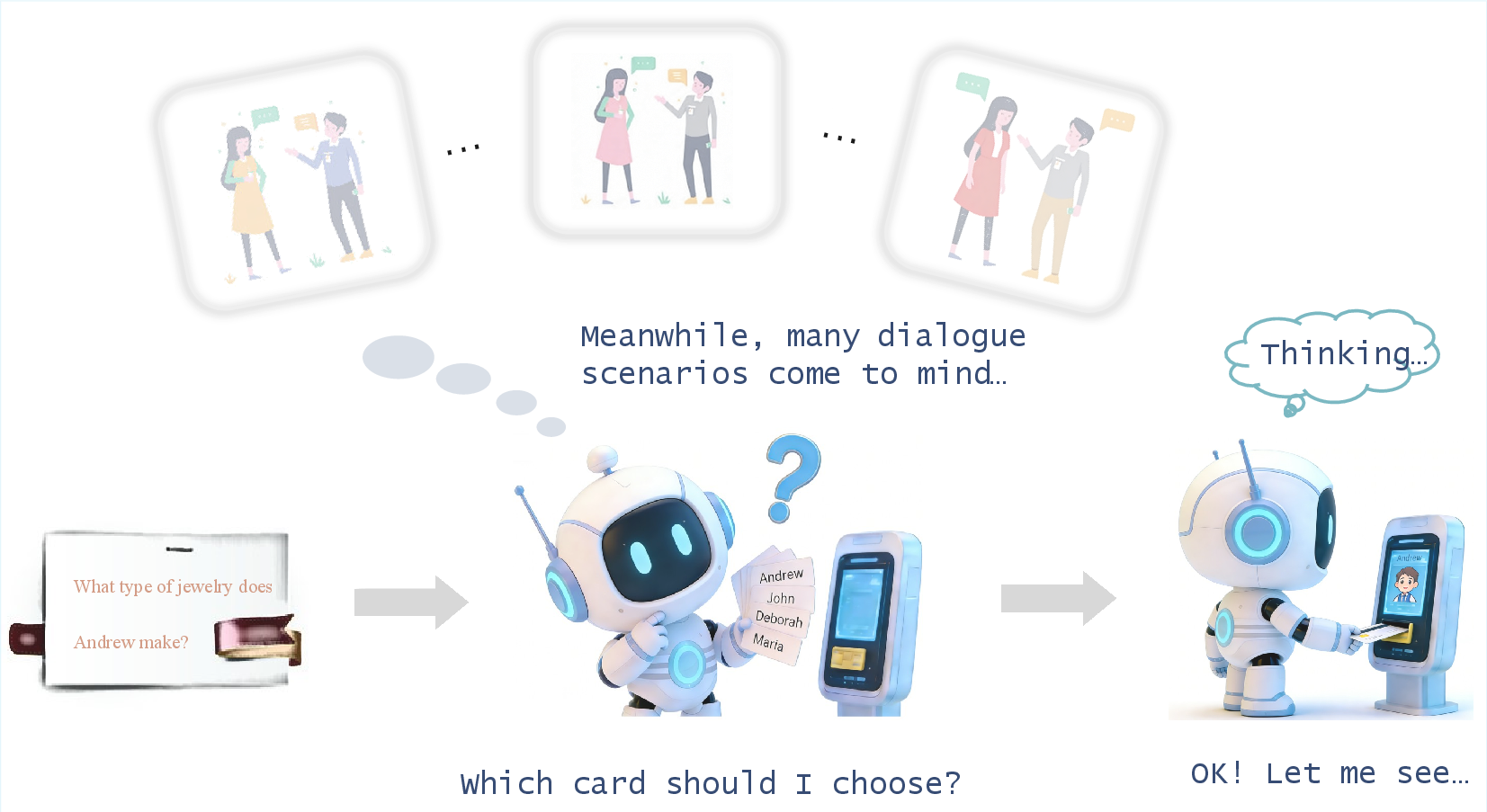

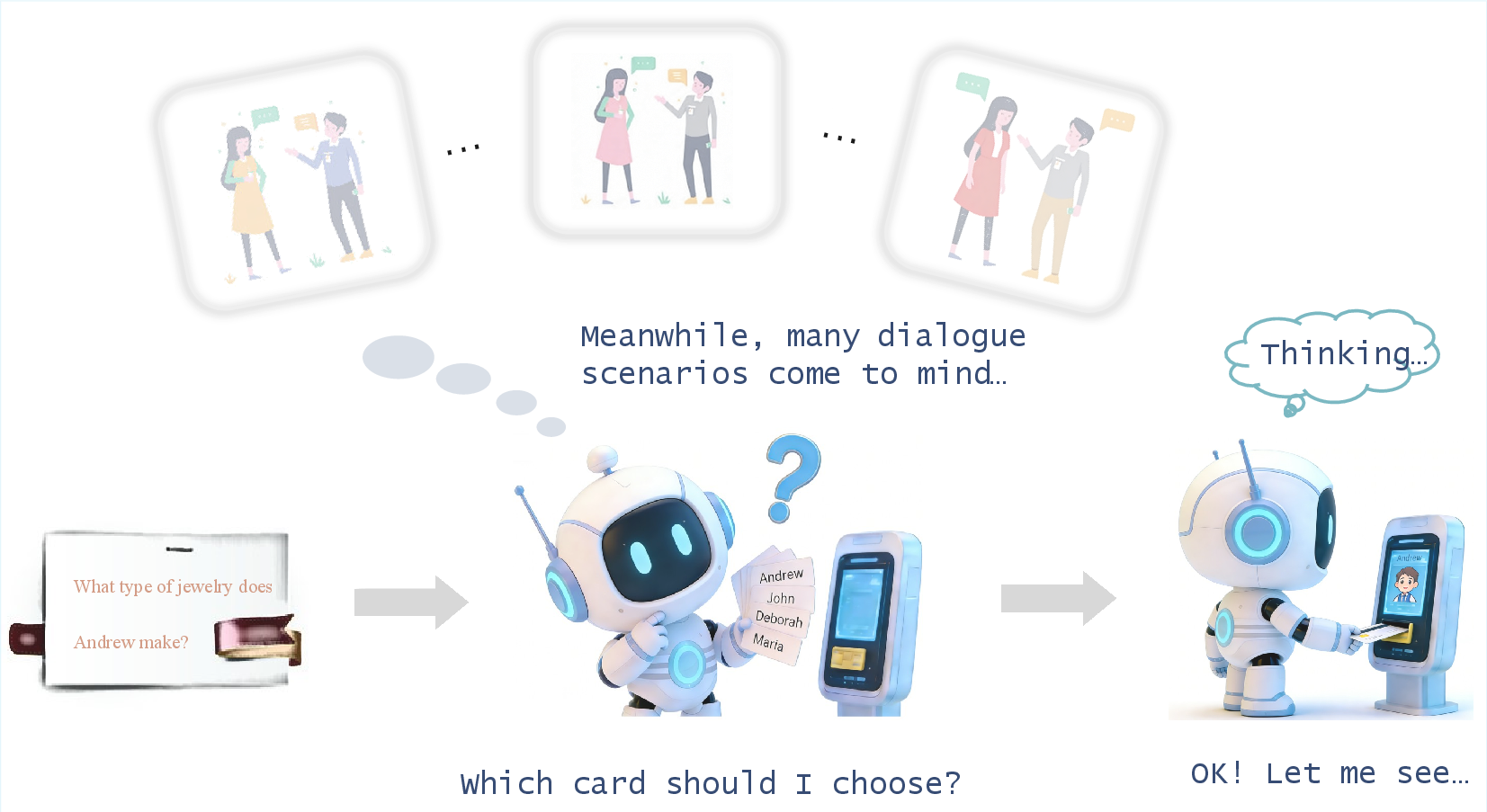

Figure 1: TraceMem’s “remembering” paradigm models human recall by evoking a coherent impression and tracing back to specific knowledge-acquisition episodes.

Architectural Overview

TraceMem operationalizes memory construction through three progressive stages:

- Short-term Memory Processing: Dialogues are partitioned into episodes via deductive topic segmentation (TC/TD tagging), and structured semantic representations are extracted using XML-prompted templates.

- Synaptic Memory Consolidation: Episodes are summarized, and personal experience traces are distilled by rule-based filtering, yielding factual autobiographical units aligned with their semantic contexts.

- Systems Memory Consolidation: A two-stage hierarchical clustering pipeline (PCA, UMAP, HDBSCAN, KNN) organizes experience traces into topic-level clusters and thread-level narrative units, then encapsulates them as persistent, structured memory cards with a semantic hierarchy.
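A memory card's three-level structure (theme, topics, threads, with each thread carrying a title, summary, and unique ID) maps naturally onto nested records. The following is a minimal sketch assuming Python dataclasses and JSON persistence; the class and field names (`MemoryCard`, `Topic`, `Thread`, `trace_ids`) are hypothetical and are not TraceMem's actual schema.

```python
from dataclasses import dataclass, field, asdict
import json
import uuid


@dataclass
class Thread:
    title: str
    summary: str
    trace_ids: list[str]  # IDs of the experience traces grouped into this thread
    # Hypothetical ID scheme: a short random hex string unless one is supplied.
    thread_id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])


@dataclass
class Topic:
    title: str
    summary: str
    threads: list[Thread] = field(default_factory=list)


@dataclass
class MemoryCard:
    theme: str
    topics: list[Topic] = field(default_factory=list)

    def thread_index(self) -> dict[str, Thread]:
        """Map every thread ID to its thread so retrieval can fetch threads by ID."""
        return {t.thread_id: t for topic in self.topics for t in topic.threads}

    def to_json(self) -> str:
        """Serialize the whole hierarchy (asdict recurses into nested dataclasses)."""
        return json.dumps(asdict(self), indent=2)
```

Keeping a flat `thread_index` alongside the nested hierarchy is one way to support fetching threads directly by ID at query time without walking the tree.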

Figure 2: TraceMem Pipeline: Short-term memory extraction, synaptic consolidation, and multi-stage clustering yield organized user memory cards.

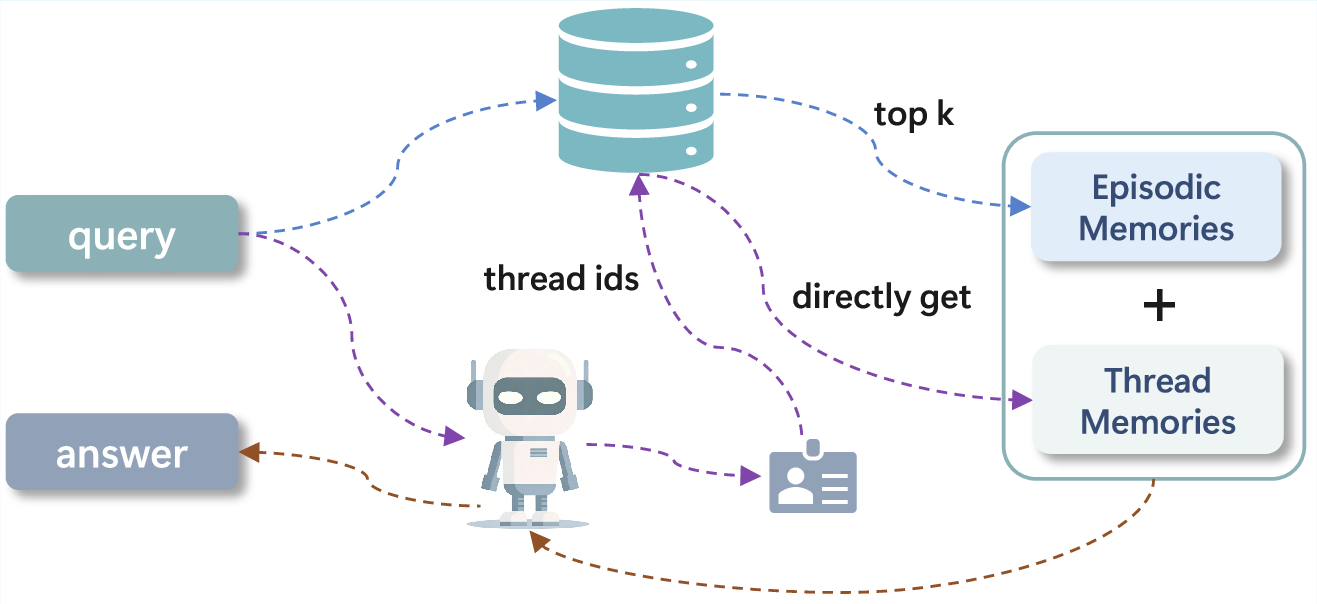

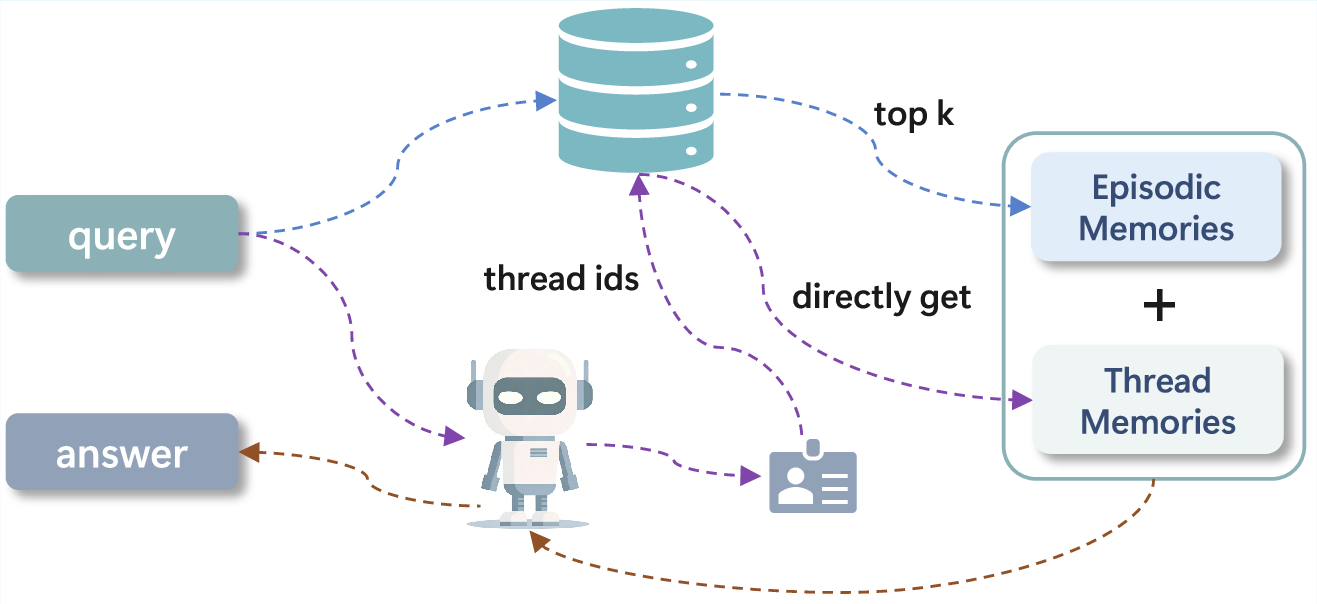

The architecture supports agentic search for memory utilization. Episodic memories and narrative threads are retrieved using semantic keys and card selection, providing multilevel contextual input to the reasoning engine.

Figure 3: Agentic search mechanism: The system retrieves top-k episodic fragments and relevant narrative threads for consistent, source-attributed response generation.

Memory Construction Details

Short-Term Processing

TraceMem uses deductive, XML-based intent tagging to recognize topic shifts, delimiting episodes as contiguous subsequences of utterances. A semantic representation is generated for each utterance, mapping raw text and image data into a structured factual space. This yields a sequence of episodes (E_i) and semantic sets (S_i) for downstream processing.
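The segmentation step can be illustrated by turning per-utterance tags into contiguous episodes. The sketch below assumes a hypothetical tag schema, `<u id="…" shift="1"/>`, in which `shift="1"` marks an utterance that opens a new topic. This is a stand-in for illustration and not the TC/TD format the paper uses. The function name `segment_episodes` is also hypothetical.

```python
import xml.etree.ElementTree as ET


def segment_episodes(utterances: list[str], tagged_xml: str) -> list[list[str]]:
    """Split utterances into contiguous episodes using topic-shift tags.

    Hypothetical schema: <tags><u id="0" shift="1"/>...</tags>, where
    shift="1" marks the first utterance of a new topic.
    """
    root = ET.fromstring(tagged_xml)
    shifts = {int(u.get("id")) for u in root.iter("u") if u.get("shift") == "1"}
    episodes: list[list[str]] = []
    for i, text in enumerate(utterances):
        # Open a new episode at the first utterance or wherever a shift is tagged.
        if i == 0 or i in shifts:
            episodes.append([])
        episodes[-1].append(text)
    return episodes
```

Because episodes are contiguous by construction, each one can then be passed to the per-episode summarization and trace-extraction steps.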

Synaptic Consolidation

Episodes are summarized, and user experience traces are distilled via prompt-based rule filters targeting biographical and contextual retrieval. Experience traces (ET_i) encapsulate personal semantics (facts, repeated events, and self-knowledge), which sit at the intersection of episodic and semantic memory.
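Rule-based filtering of this kind can be approximated by having the LLM emit typed candidate traces and then keeping only the personal-semantics categories named above. The following is a sketch under two assumptions: the XML reply format (`<trace type="…">`) is hypothetical, and so are the label strings in `KEEP_TYPES`. The three categories themselves come from the text.

```python
import xml.etree.ElementTree as ET

# Personal-semantics categories from the text; the label strings are hypothetical.
KEEP_TYPES = {"fact", "repeated_event", "self_knowledge"}


def extract_traces(llm_output: str, episode_id: int) -> list[dict]:
    """Parse candidate traces from an XML-formatted LLM reply and keep only
    non-empty traces whose type is a personal-semantics category."""
    root = ET.fromstring(llm_output)
    traces = []
    for node in root.iter("trace"):
        kind = node.get("type", "")
        text = (node.text or "").strip()
        if kind in KEEP_TYPES and text:
            # Keep the source episode so each trace stays linked to its origin.
            traces.append({"episode": episode_id, "type": kind, "text": text})
    return traces
```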

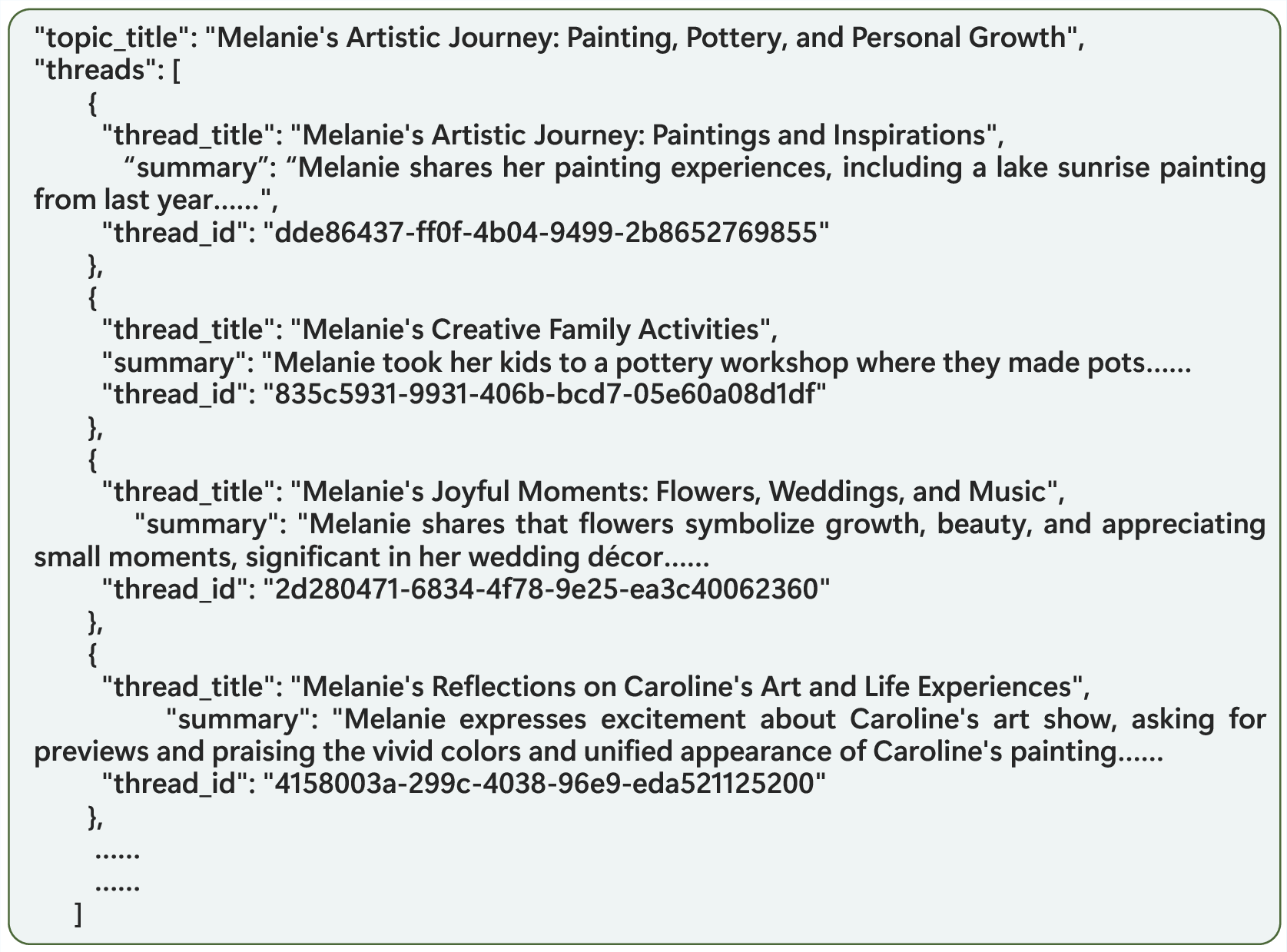

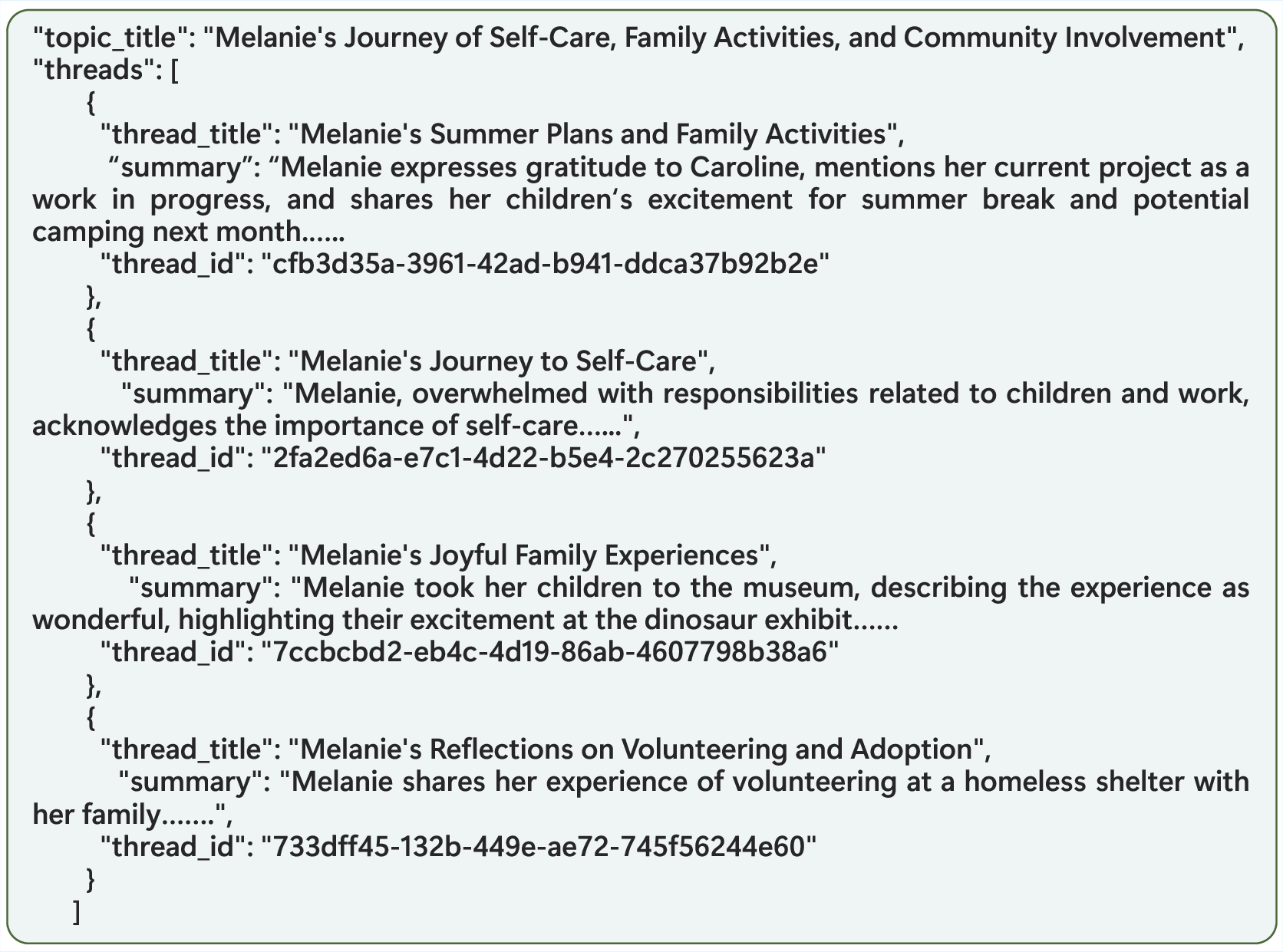

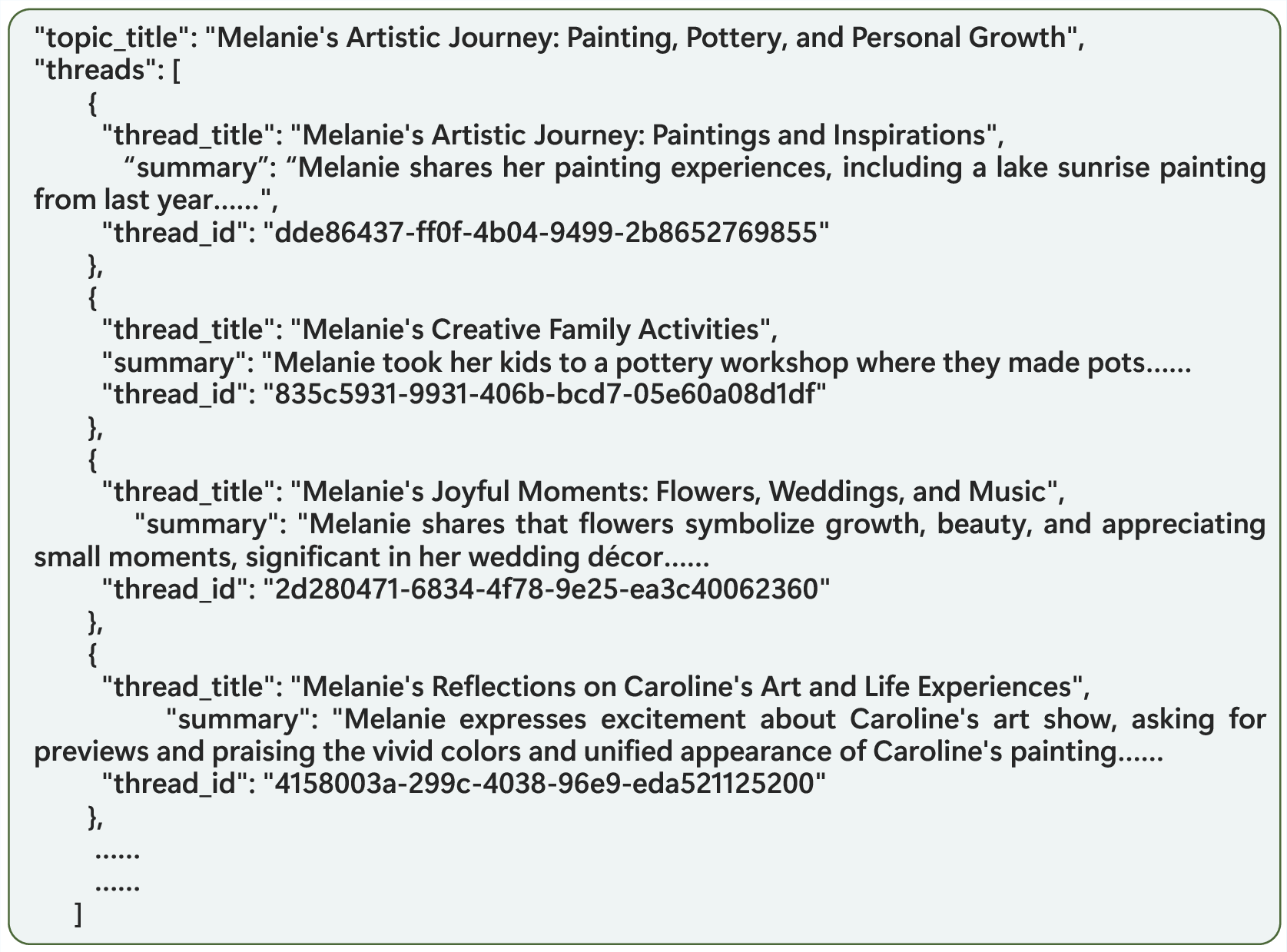

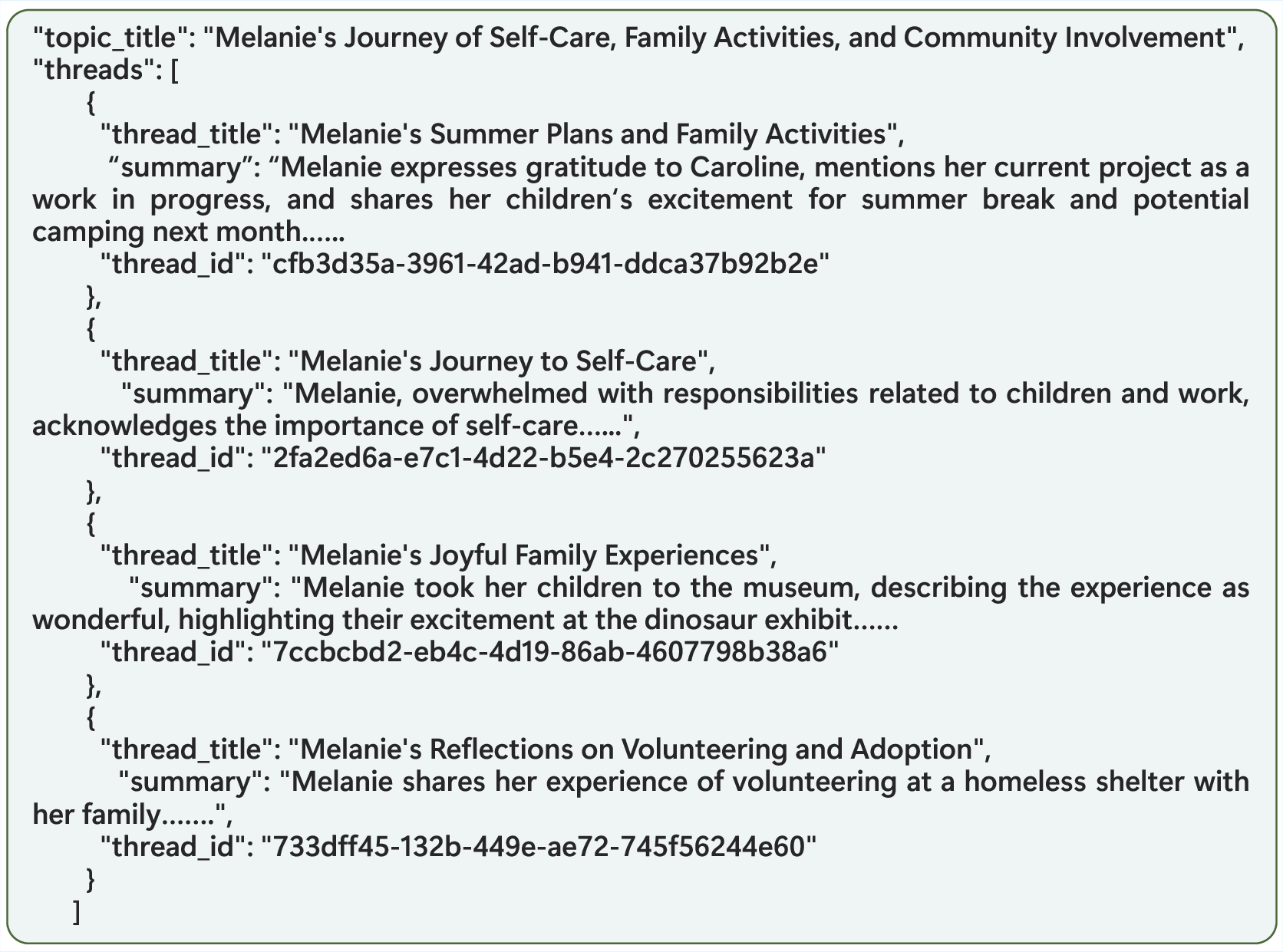

Systems Consolidation

High-dimensional trace embeddings are reduced with PCA and UMAP. HDBSCAN then identifies dense clusters (topics), and KNN assigns the points HDBSCAN labels as noise to existing clusters. Each topic is further subdivided into semantically and sequentially coherent threads. The final memory card has a three-level structure (theme, topics, and threads), with descriptive titles, summaries, and unique IDs.
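HDBSCAN implementations such as `sklearn.cluster.HDBSCAN` label low-density points `-1` (noise). The KNN step gives those points a cluster anyway. Below is a dependency-free sketch of that reassignment step alone, assuming a majority vote over the k nearest clustered points, Euclidean distance in the reduced space, and k=3. These settings are illustrative assumptions and not the paper's reported configuration.

```python
import math
from collections import Counter


def reassign_noise(points: list[list[float]], labels: list[int], k: int = 3) -> list[int]:
    """Give each noise point (label -1) the majority label of its k nearest
    clustered neighbours, using Euclidean distance."""
    clustered = [(p, lab) for p, lab in zip(points, labels) if lab != -1]
    if not clustered:
        return list(labels)  # no clustered points to vote with
    out = list(labels)
    for i, (p, lab) in enumerate(zip(points, labels)):
        if lab != -1:
            continue
        nearest = sorted(clustered, key=lambda pl: math.dist(p, pl[0]))[:k]
        votes = Counter(cl for _, cl in nearest)
        out[i] = votes.most_common(1)[0][0]
    return out
```

In a full pipeline, `points` would be the PCA/UMAP-reduced embeddings and `labels` the HDBSCAN output. Reassigning noise this way means no experience trace is dropped from the memory card.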

Figure 4: Example topic in Melanie’s Memory Card, capturing self-care, family routines, and community engagement.

Agentic Search and Utilization

When queried, TraceMem first retrieves the top-k episodic memories via vector search. It then selects memory cards based on semantic cues, identifies the relevant threads, and fetches them directly by their IDs, providing human-like source attribution for agentic reasoning. Combining these two levels of memory enables historically consistent responses and supports complex inference tasks.
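The two-level retrieval can be sketched as a vector search over episodes followed by direct ID lookup of the threads the agent selects. This sketch makes two substitutions. First, it replaces the vector database (ChromaDB in the experiments) with brute-force cosine similarity. Second, `select_threads` is a hypothetical callable standing in for the LLM's card and thread selection. All names are illustrative.

```python
import math
from typing import Callable

Vector = list[float]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def agentic_retrieve(
    query_vec: Vector,
    episodes: list[tuple[str, Vector]],
    thread_index: dict[str, str],
    select_threads: Callable[[dict[str, str]], list[str]],
    k: int = 2,
) -> dict[str, list[str]]:
    # Level 1: top-k episodic memories by cosine similarity to the query.
    ranked = sorted(episodes, key=lambda e: cosine(query_vec, e[1]), reverse=True)
    top_episodes = [text for text, _ in ranked[:k]]
    # Level 2: the agent picks thread IDs; fetch them directly by ID and keep
    # the ID in the context so the answer can cite its source.
    chosen = select_threads(thread_index)
    threads = [f"[{tid}] {thread_index[tid]}" for tid in chosen if tid in thread_index]
    return {"episodes": top_episodes, "threads": threads}
```

Unknown IDs returned by the selector are silently skipped, which is one simple way to guard against the agent naming a thread that does not exist.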

Experimental Evaluation

TraceMem was evaluated on the LoCoMo benchmark: 10 long dialogues with 1,540 annotated questions spanning single-hop, multi-hop, temporal, and open-domain reasoning. Experiments used GPT-4o-mini and GPT-4.1-mini as backbones with text-embedding-3-small embeddings; memories were indexed in ChromaDB and clustered with UMAP/HDBSCAN. Accuracy was scored by an LLM-as-a-judge using a standardized prompt.

TraceMem outperformed the compared baselines on both backbones:
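Per-category and overall scores of the kind reported below can be derived from the judge's binary verdicts. The following sketch assumes that "overall" is a micro-average, meaning correct answers divided by all questions. The aggregation method is not stated here, so treat this as an assumption. The function name is hypothetical.

```python
from collections import defaultdict


def accuracy_by_category(results: list[tuple[str, bool]]) -> dict[str, float]:
    """results: (question_category, judge_verdict) pairs.
    Returns per-category accuracy plus an 'overall' micro-average."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for category, is_correct in results:
        totals[category] += 1
        correct[category] += int(is_correct)
    scores = {cat: correct[cat] / totals[cat] for cat in totals}
    n = sum(totals.values())
    scores["overall"] = sum(correct.values()) / n if n else 0.0
    return scores
```

Under a micro-average, categories with more questions weigh more in the overall score. A macro-average over categories could rank systems differently.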

- On GPT-4o-mini: 0.9019 overall accuracy, outperforming Nemori by 16% and FullText by 17%.

- On GPT-4.1-mini: 0.9227 overall, >30% higher than A-mem and 16% above LightMem.

- In multi-hop and temporal reasoning, TraceMem consistently maintained accuracy near or above 0.90.

- Temporal task performance: 0.8660 (GPT-4o-mini), 0.9097 (GPT-4.1-mini), exceeding baselines by 10–12%.

- Multi-hop performance: 0.9220 (GPT-4o-mini), 0.8936 (GPT-4.1-mini), a >60% improvement over NaiveRAG.

Ablation studies revealed the following:

- Removing agentic search led to a substantial drop in multi-hop accuracy (0.8652 vs. 0.9220).

- Episodic-memory-only configurations plateaued at 0.8429 accuracy, declining progressively as task complexity increased.

- Adding more semantic representations modestly improved accuracy, with diminishing returns beyond 40 contexts.
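Percentage comparisons are easier to read when the absolute gap in accuracy points is kept separate from the relative change. The following worked example uses the agentic-search ablation figures listed above: 0.9220 with agentic search and 0.8652 without. The helper name is illustrative.

```python
def abs_and_rel_change(full: float, ablated: float) -> tuple[float, float]:
    """Absolute accuracy drop and the drop relative to the full system."""
    drop = full - ablated
    return drop, drop / full


drop, rel = abs_and_rel_change(0.9220, 0.8652)
print(f"absolute: {drop:.4f}, relative: {rel:.1%}")  # absolute: 0.0568, relative: 6.2%
```

A 5.68-point absolute drop therefore corresponds to roughly a 6.2% relative loss.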

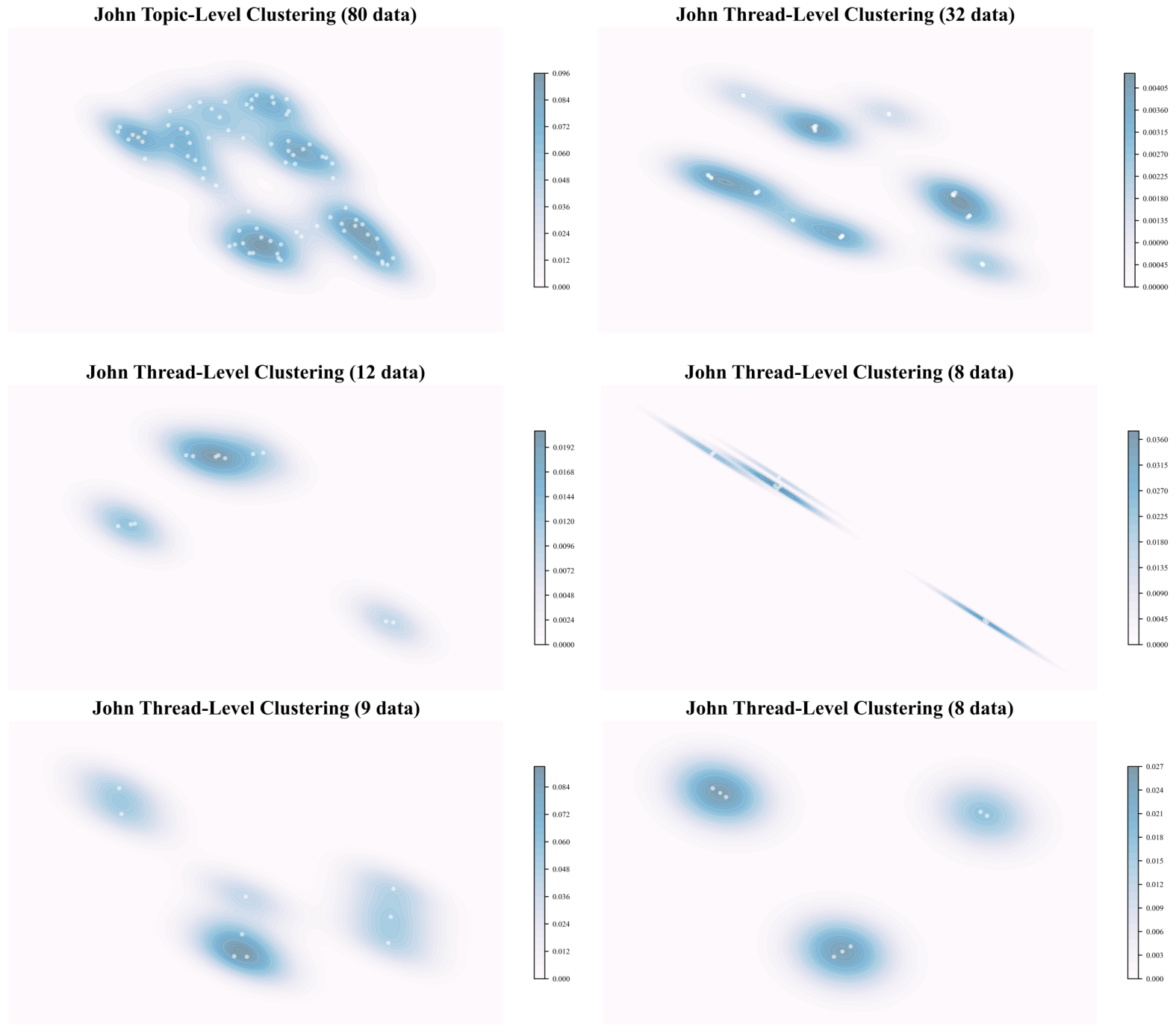

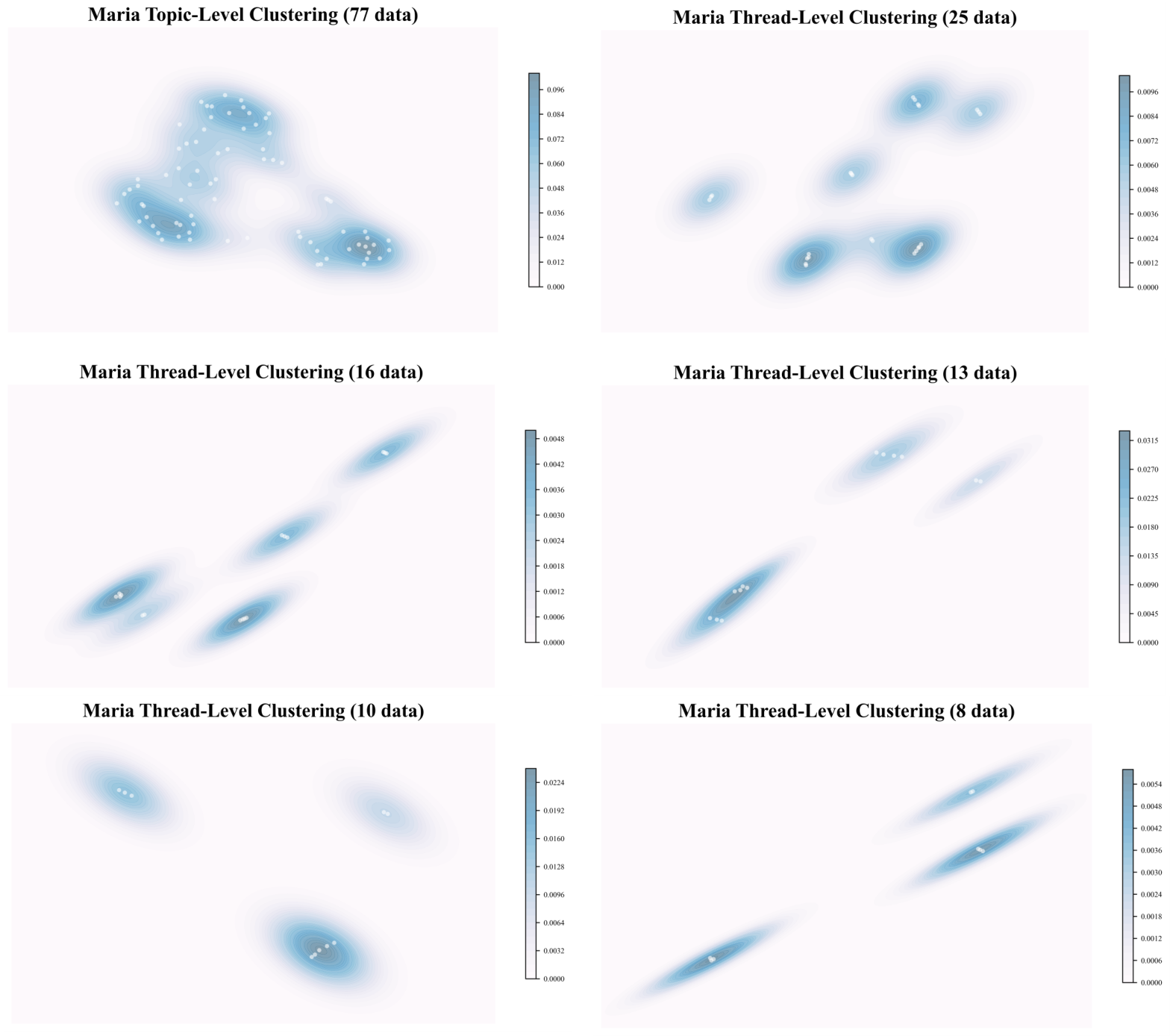

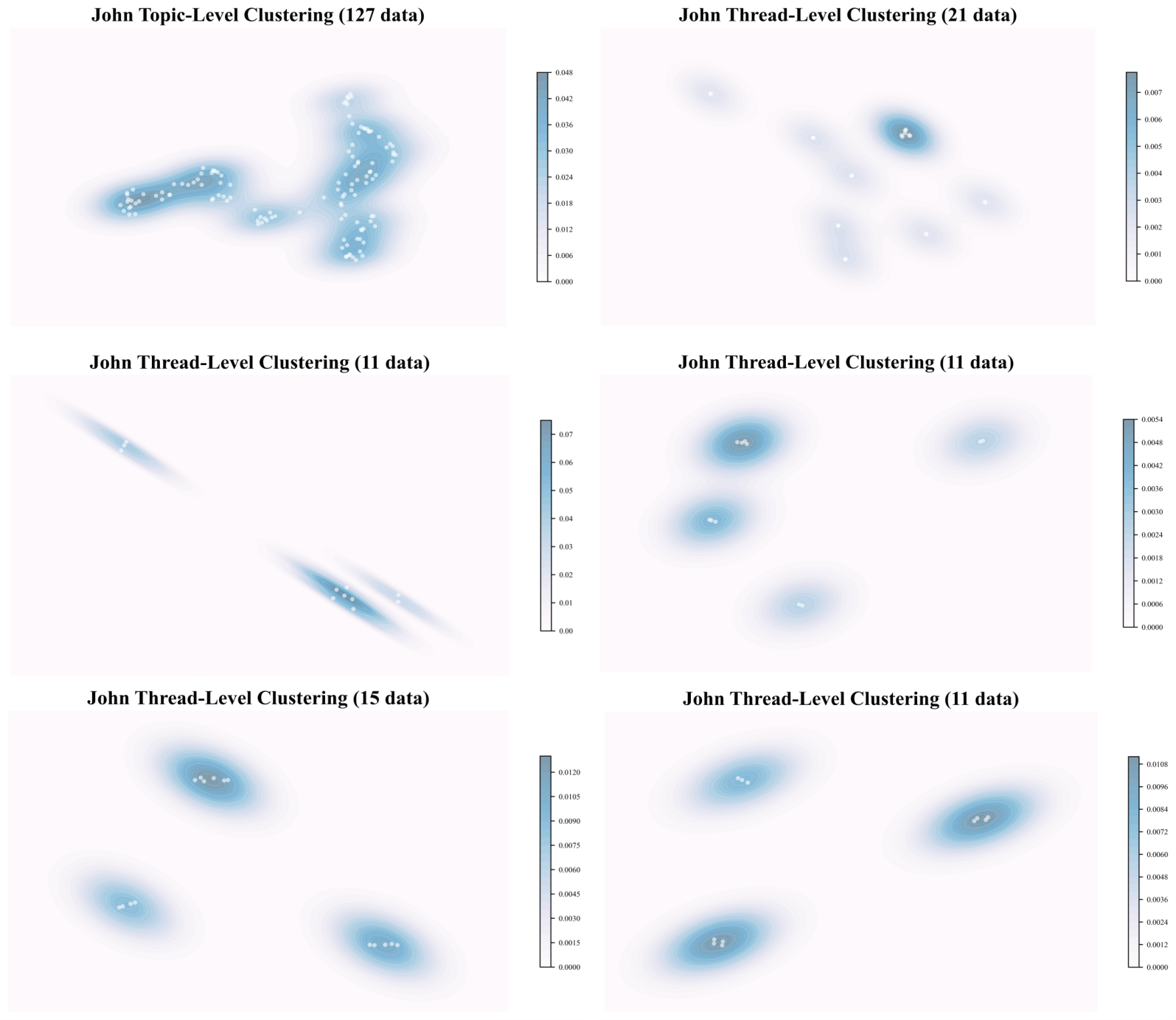

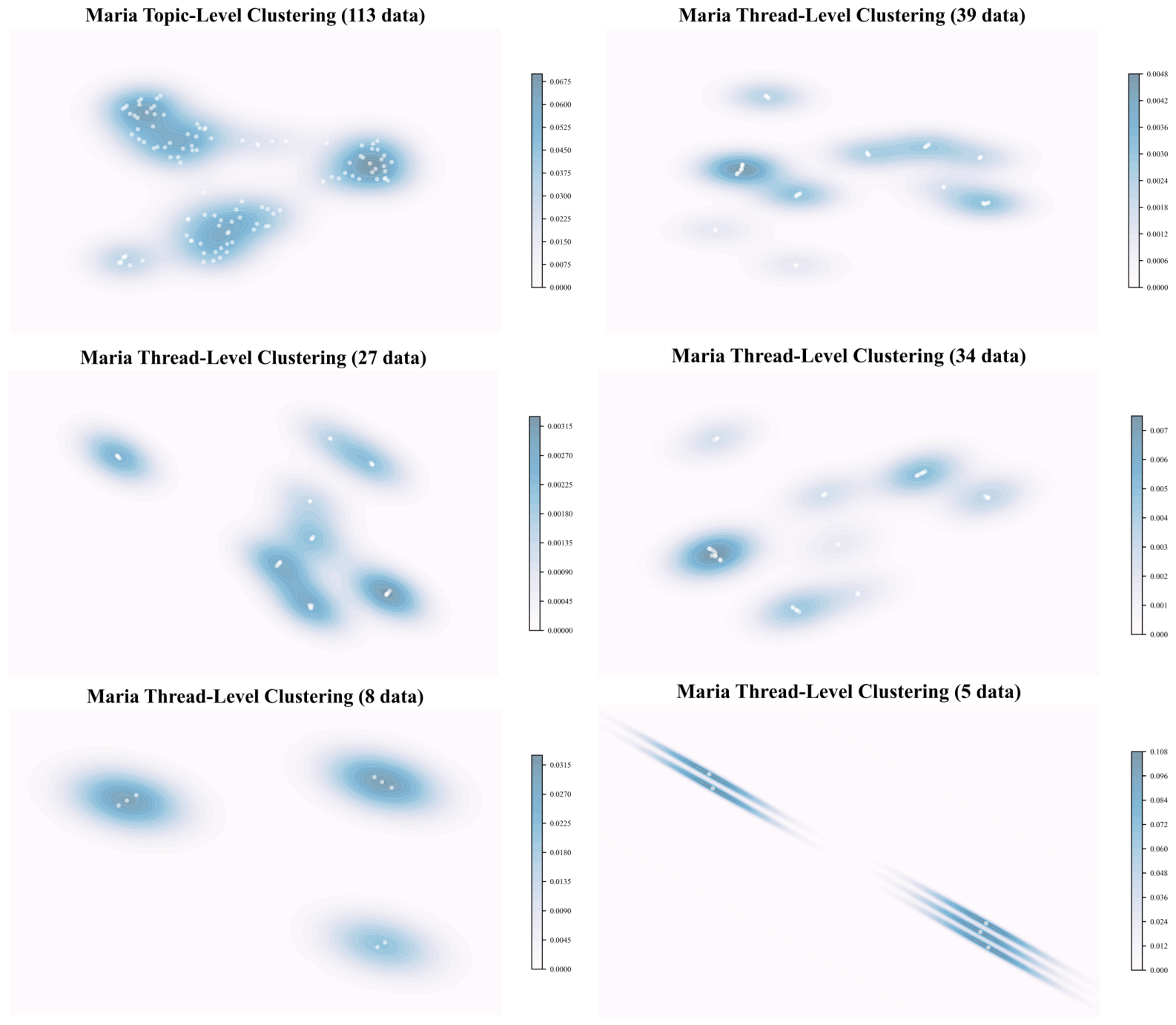

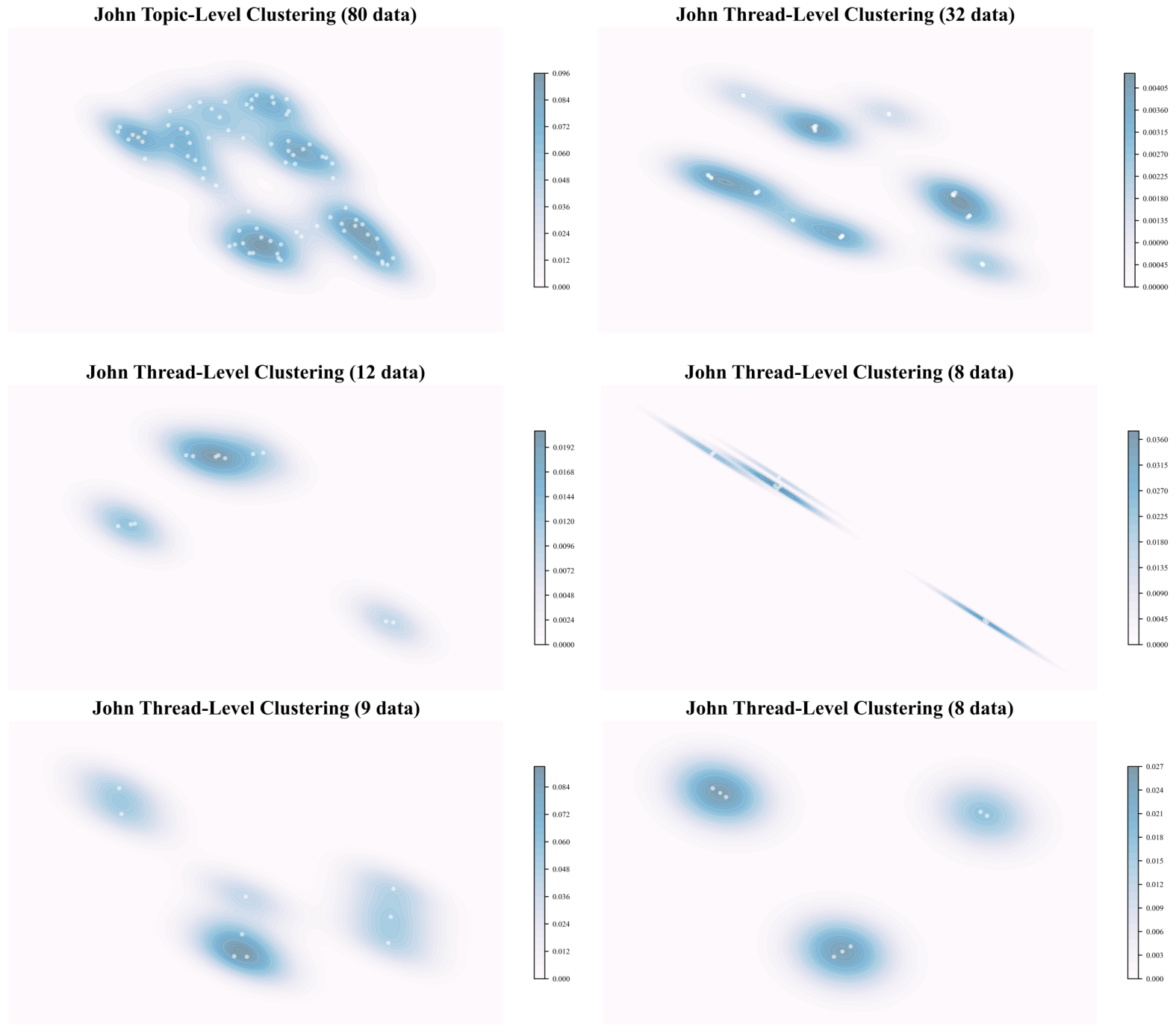

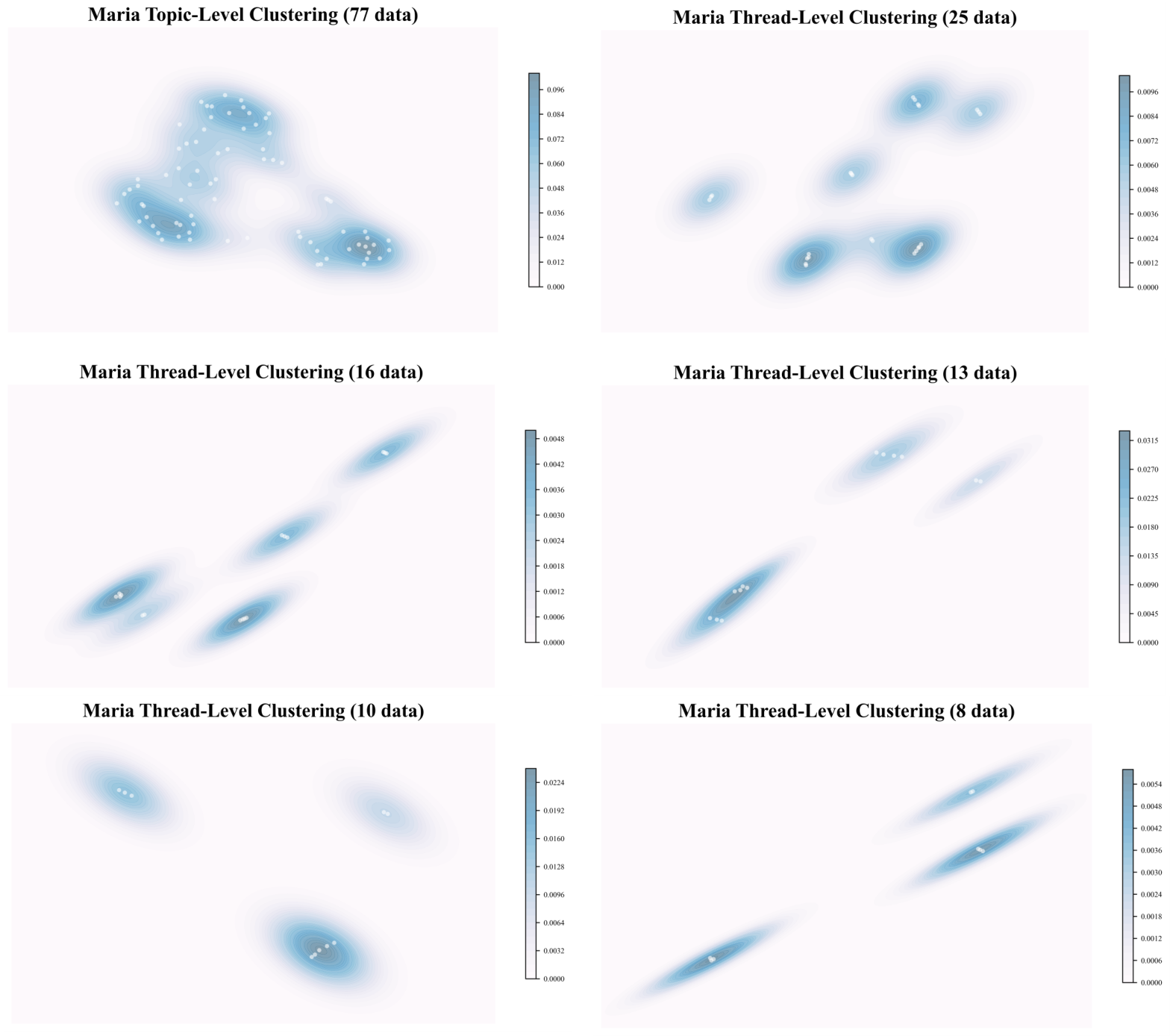

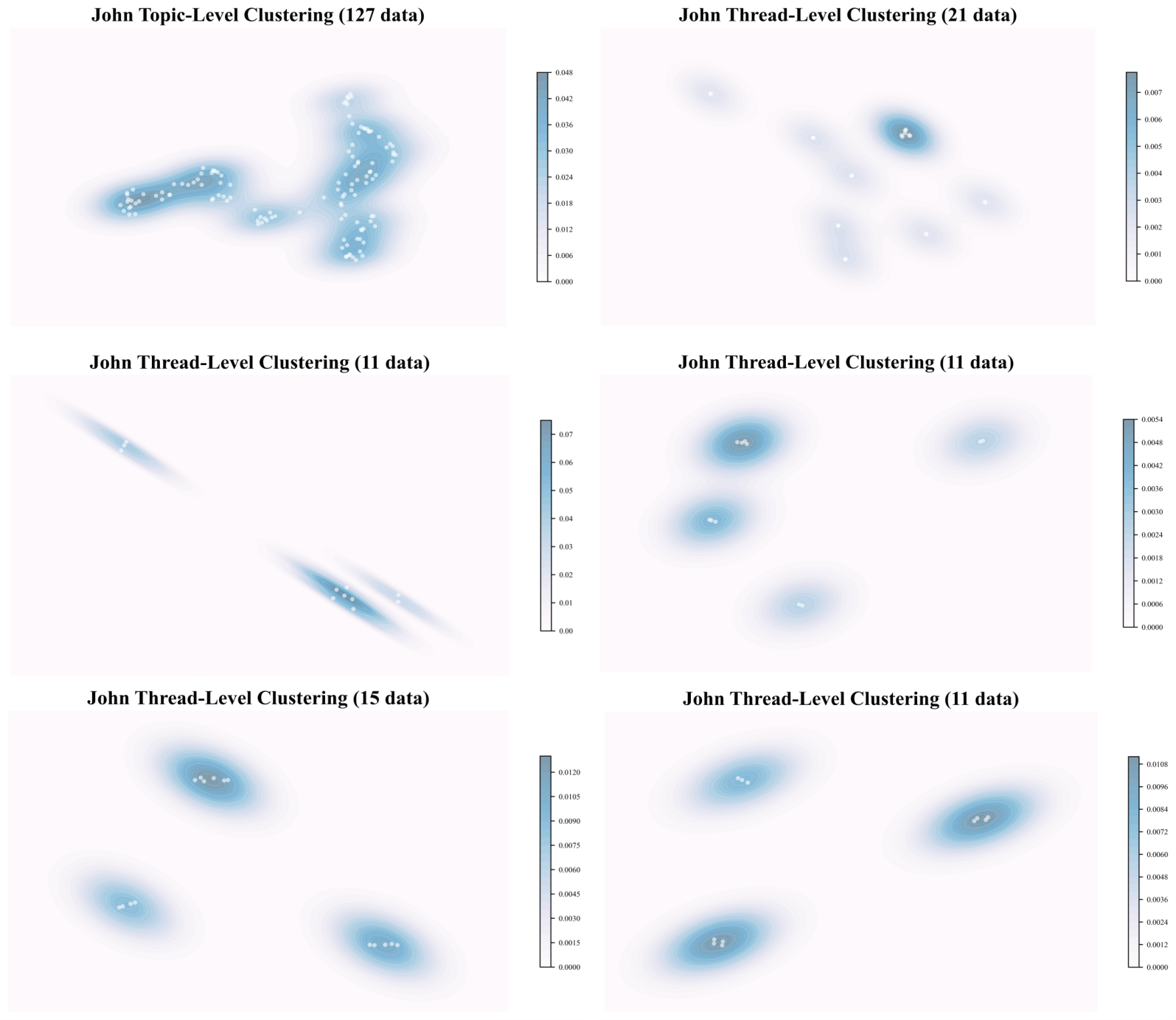

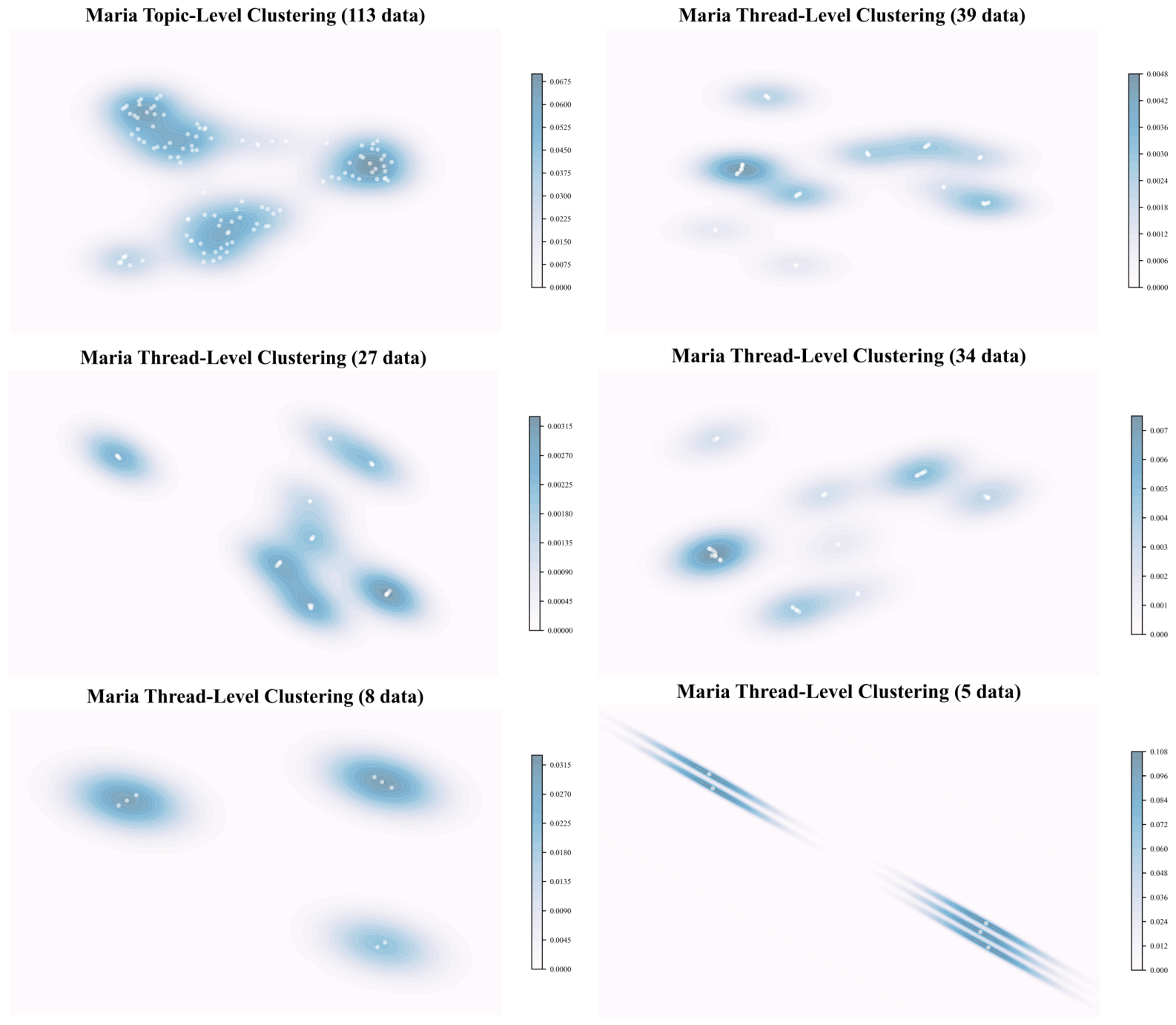

Clustering visualizations (Figures 5 and 6) show topics and threads as distinct density regions in each user's memory space, reflecting TraceMem's hierarchical organization.

Figure 5: Clustering results for User A (John) with GPT-4o-mini backbone; topic and thread segmentation manifest as density regions.

Figure 6: Clustering results for User A (John) with GPT-4.1-mini backbone; improved sensitivity to topic shifts, lower discard rate.

Theoretical and Practical Implications

TraceMem operationalizes ideas from cognitive science, dynamically consolidating episodic and semantic memories into durable narrative schemata. The architecture supports agentic search, persistent persona retention, and source attribution, advancing conversational statefulness and deep context integration. The observed gains in temporal and multi-hop inference underscore the importance of structured, dynamic memory consolidation for longitudinal reasoning in LLMs.

Memory rewriting and regulated forgetting are promising directions for future research. Adaptive memory invocation based on agentic goals or dialogue context could further improve the system's usefulness. Agent personality modeling offers additional avenues for memory prioritization and selective recall aligned with intrinsic attributes.

Conclusion

TraceMem represents a notable advance in cognitively inspired memory systems. Through deductive segmentation, synaptic and systems consolidation, and agentic search, it builds persistent, coherent narrative memory schemata. Empirical evaluation shows substantial improvements on complex reasoning tasks, reinforcing the role of dynamic memory structuring in achieving deep conversational intelligence in LLMs (2602.09712).