Sparse Reward Subsystem in Large Language Models

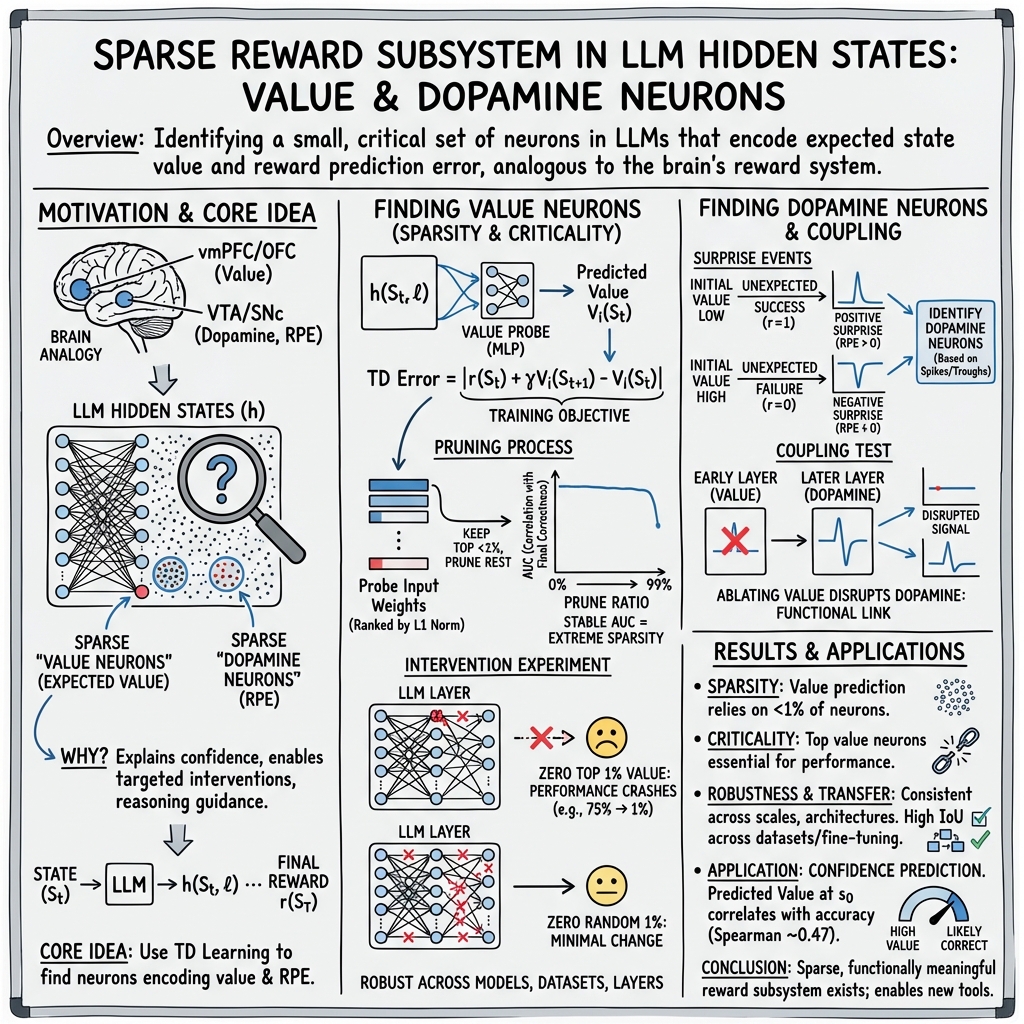

Abstract: In this paper, we identify a sparse reward subsystem within the hidden states of LLMs, drawing an analogy to the biological reward subsystem in the human brain. We demonstrate that this subsystem contains value neurons that represent the model's internal expectation of state value, and through intervention experiments, we establish the importance of these neurons for reasoning. Our experiments reveal that these value neurons are robust across diverse datasets, model scales, and architectures; furthermore, they exhibit significant transferability across different datasets and models fine-tuned from the same base model. By examining cases where value predictions and actual rewards diverge, we identify dopamine neurons within the reward subsystem which encode reward prediction errors (RPE). These neurons exhibit high activation when the reward is higher than expected and low activation when the reward is lower than expected.

Explain it Like I'm 14

What This Paper Is About

This paper looks inside LLMs to find a small “reward subsystem,” similar to how the human brain has special parts that track rewards. The authors show that:

- Some neurons act like “value neurons” that estimate how good the current situation is (for example, how likely the model’s answer will be correct).

- Other neurons act like “dopamine neurons” that react when the outcome is better or worse than expected (this difference is called a reward prediction error).

The Main Questions the Paper Tries to Answer

- Do LLMs contain a small set of neurons that predict success or failure while the model is working on an answer?

- Are these neurons crucial for reasoning, or just “nice to have”?

- Are these neurons found consistently across different tasks, model sizes, and architectures?

- Can we find neurons that respond specifically to surprises (when results don’t match expectations), like dopamine neurons in the brain?

- How are these “value” and “dopamine” neurons related to each other?

How the Researchers Studied It (In Everyday Terms)

Think of an LLM like a student solving a problem one step at a time. At each step, the model writes internal notes to itself (these are called hidden states). The researchers built a small tool, a “value probe,” to read those notes and guess how likely the final answer will be correct.

Here’s what they did, explained with simple analogies:

- Hidden states: These are the model’s private notes during thinking. Each layer in the model keeps its own version of these notes.

- Value probe: A tiny program (a small neural network) that looks at the notes and outputs a single number: how “good” the current state seems. Think of it like a confidence meter.

- Temporal Difference (TD) learning: Imagine you’re playing a game and you keep updating your guess of your final score based on what just happened. TD learning trains the value probe to update its predictions step-by-step, so it learns to track expected success as thinking unfolds.

- Reward: At the end, if the model’s answer is correct, the reward is 1; if it’s wrong, the reward is 0.

- AUC (Area Under the ROC Curve): A score that measures how well the probe can separate “correct final answers” from “incorrect final answers.” Higher is better.

- Pruning: They tested whether only a few neurons carry most of the “value” signal. They gradually removed many input dimensions to the probe (like ignoring most of the notes and only reading the few most important ones) and checked if the probe still worked well. If performance stays high even when most inputs are removed, the value signal is sparse—carried by a small set of special neurons.

- Intervention (ablation): They “turned off” the suspected value neurons during inference and watched the model’s accuracy drop. As a comparison, they turned off an equally sized random set of neurons to check whether the drop was specific to value neurons or would happen with any neurons.
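The probe-training idea in the list above can be sketched in a few lines of Python. This is only an illustration under loud assumptions: it swaps the two-layer MLP for a linear probe with a sigmoid output to stay short, it uses synthetic four-dimensional "hidden states" in which only dimension 0 carries signal, and it sets the discount factor `gamma` to 1.0, a value this summary does not specify. The names `td0_update` and `make_trajectory` are hypothetical.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def value(w, b, h):
    """Probe output: estimated chance that the final answer will be correct."""
    return sigmoid(sum(wi * hi for wi, hi in zip(w, h)) + b)

def td0_update(w, b, trajectory, reward, gamma=1.0, lr=0.5):
    """One semi-gradient TD(0) pass over the hidden states of one answer.

    Intermediate steps bootstrap from the next state's value; the last
    step is trained toward the final reward (1 = correct, 0 = wrong).
    gamma=1.0 is an assumption, not a value taken from the paper.
    """
    for t, h in enumerate(trajectory):
        v = value(w, b, h)
        if t + 1 < len(trajectory):
            target = gamma * value(w, b, trajectory[t + 1])  # held fixed
        else:
            target = reward
        delta = target - v               # TD error
        step = lr * delta * v * (1 - v)  # gradient step through the sigmoid
        w = [wi + step * hi for wi, hi in zip(w, h)]
        b = b + step
    return w, b

def make_trajectory(rng, correct, steps=3, dim=4):
    """Synthetic stand-in for hidden states: only dimension 0 is informative."""
    sign = 1.0 if correct else -1.0
    return [[sign + rng.gauss(0, 0.1)] + [rng.gauss(0, 0.1) for _ in range(dim - 1)]
            for _ in range(steps)]

rng = random.Random(0)
w, b = [0.0] * 4, 0.0
for i in range(400):
    correct = (i % 2 == 0)  # alternate correct and incorrect answers
    w, b = td0_update(w, b, make_trajectory(rng, correct), reward=float(correct))

v_good = value(w, b, [1.0, 0.0, 0.0, 0.0])   # a "correct-like" state
v_bad = value(w, b, [-1.0, 0.0, 0.0, 0.0])   # an "incorrect-like" state
print(round(v_good, 2), round(v_bad, 2))
```

After training, the probe should score the "correct-like" state above 0.5 and the "incorrect-like" state below it, which is the separation that AUC measures on real data.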

They repeated these tests across:

- Different datasets (math and science questions),

- Different model sizes (small, medium, large),

- Different layers,

- Different architectures (like Qwen, Llama, Gemma, Phi),

- Different fine-tuned versions of the same base model.

They also hunted for “dopamine neurons”:

- They looked at cases where the model expected a high reward but got a low one (negative surprise), or expected a low reward but got a high one (positive surprise).

- They tracked neurons whose activity spiked during positive surprises and dipped during negative surprises—matching the idea of dopamine neurons signaling reward prediction error.

- Finally, they showed that disrupting value neurons breaks the characteristic patterns of dopamine neurons, linking the two sets.
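The surprise cases above can be made concrete with a small sketch. It assumes the reward prediction error is simply the final reward minus the probe's expected value, and it ranks neurons by Pearson correlation between activation and that error. The correlation ranking, the `margin` cutoff, and all function names are illustrative assumptions; the paper's actual selection pipeline is not described in this summary.

```python
import math

def reward_prediction_error(expected_value, reward):
    """RPE: positive when the outcome beats expectation, negative when it falls short."""
    return reward - expected_value

def classify_surprise(expected_value, reward, margin=0.5):
    """Label an example by the sign and size of its RPE (margin is a hypothetical cutoff)."""
    err = reward_prediction_error(expected_value, reward)
    if err >= margin:
        return "positive"
    if err <= -margin:
        return "negative"
    return "none"

def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either series is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx > 0 and sy > 0 else 0.0

def rank_by_rpe_correlation(activations, rpes):
    """activations[j][i] is neuron j's activation on example i. Best match first."""
    scores = {j: pearson(acts, rpes) for j, acts in enumerate(activations)}
    return sorted(scores, key=scores.get, reverse=True)

# Toy data: expected values from a probe and the final 0/1 rewards.
expected = [0.1, 0.9, 0.9, 0.1]
rewards = [1, 0, 1, 0]
rpes = [reward_prediction_error(v, r) for v, r in zip(expected, rewards)]
labels = [classify_surprise(v, r) for v, r in zip(expected, rewards)]

activations = [
    [2.0, -2.0, 0.3, -0.2],  # neuron 0: rises and falls with the RPE
    [1.0, 1.0, -1.0, -1.0],  # neuron 1: unrelated to the RPE
]
ranking = rank_by_rpe_correlation(activations, rpes)
print(labels, ranking)
```

In this toy data, neuron 0 tracks the prediction error and ranks first, while neuron 1 is essentially uncorrelated with it.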

What They Found and Why It Matters

- A sparse reward subsystem exists:

  - Only a tiny fraction (often less than 1%) of neurons carry strong “value” information about whether the model is on track to a correct answer.

  - Even when they removed most inputs to the value probe, it still predicted well, indicating that the value signal is concentrated in a small set of neurons.

- Value neurons are critical for reasoning:

  - Turning off just the top 1% of value neurons in a single layer caused accuracy to collapse on a math dataset (from about 75% to around 20% on average).

  - Turning off a random 1% barely changed accuracy. This suggests the drop is specific to these neurons rather than a side effect of removing any 1%.

- Robust and transferable:

  - The same kind of value neurons show up across many tasks (math word problems, science questions), model sizes, layers, and different architectures.

  - The positions of these neurons overlap a lot across different datasets and even across models fine-tuned from the same base, meaning they’re a stable, general feature.

- Dopamine-like neurons exist:

  - The researchers found neurons that activate strongly when things go better than expected (positive surprise) and are suppressed when things go worse (negative surprise).

  - If the value neurons are disrupted first, these dopamine neurons stop behaving like “surprise detectors.” This suggests the reward subsystem has connected parts that depend on each other.

- Practical uses:

  - These neurons can help estimate the model’s confidence.

  - They could guide training methods that use internal signals (like reinforcement learning from the model’s own feedback), and help detect reasoning mistakes.
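The ablation comparison described above (top 1% of value neurons versus a random 1%) can be sketched with a toy model. Everything here is a stand-in: `readout` replaces the rest of the network, the importance `scores` are hypothetical, and the numbers have nothing to do with the accuracies reported in the paper.

```python
import random

def top_fraction(scores, fraction):
    """Indices of the highest-scoring neurons, e.g. fraction=0.01 for the top 1%."""
    k = max(1, int(len(scores) * fraction))
    return sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)[:k]

def zero_out(hidden, indices):
    """Return a copy of the hidden state with the chosen neurons set to zero."""
    drop = set(indices)
    return [0.0 if j in drop else x for j, x in enumerate(hidden)]

def readout(hidden, weights):
    """Toy stand-in for everything downstream of the ablated layer."""
    return sum(x * w for x, w in zip(hidden, weights))

n = 200
weights = [5.0, 5.0] + [0.01] * (n - 2)  # two neurons dominate the toy readout
hidden = [1.0] * n
scores = [abs(w) for w in weights]       # hypothetical importance scores

value_neurons = top_fraction(scores, 0.01)
random_neurons = random.Random(0).sample(range(n), len(value_neurons))

baseline = readout(hidden, weights)
after_value_ablation = readout(zero_out(hidden, value_neurons), weights)
after_random_ablation = readout(zero_out(hidden, random_neurons), weights)
print(baseline, after_value_ablation, after_random_ablation)
```

The random set acts as the control: zeroing the two high-scoring neurons removes most of the toy output, and no same-sized random set can remove more.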

Why This Is Important

Understanding how LLMs internally judge “how well things are going” can:

- Make models more reliable by detecting when they might be wrong before they finish.

- Help reduce “hallucinations” (confidently wrong answers) by monitoring the model’s internal confidence and surprise signals.

- Improve training methods that teach models using their own internal evaluations, potentially cutting costs and data needs.

- Provide a clearer map of how reasoning unfolds inside LLMs, similar to how neuroscience maps functions in the brain.

Simple Takeaway

Inside LLMs, there’s a small team of special neurons that:

- Estimate how likely the model is to succeed (value neurons),

- React to surprises when reality doesn’t match expectations (dopamine neurons).

These neurons are few but mighty: they’re essential for good reasoning, show up reliably across tasks and models, and are tightly connected. By finding and using them, we can build smarter, safer, and more self-aware AI systems.

Knowledge Gaps

Below is a consolidated list of concrete knowledge gaps, limitations, and open questions that remain unresolved in the paper. These points are intended to guide future research toward targeted experiments and analyses.

- Clarify the definition of “neuron” in this context: hidden-state dimensions in the residual stream are not anatomically distinct neurons; assess whether identified “value neurons” are basis-dependent features rather than canonical units.

- Test invariance of “value neuron” identification to coordinate rotations in the residual stream (e.g., random orthogonal transforms that preserve function); if the set changes, the subsystem may be an artifact of the chosen basis.

- Quantify sensitivity of findings to probe hyperparameters (width, depth, activation functions), regularization, and seeds; report variance across multiple random initializations of the value probe.

- Compare pruning criteria beyond L1 norms (e.g., L2 norm, input-gradient saliency, integrated gradients, mutual information, Shapley values) to verify the stability of neuron selection.

- Provide quantitative evidence that a two-layer MLP is “minimal complexity” for this signal (e.g., benchmark against a linear probe and a small SAE) and characterize performance gaps.

- Establish whether the AUC stability under pruning reflects true sparsity rather than redundancy; for example, apply knockoff or controlled feature-dropout tests to disentangle sparsity vs. distributed coding.

- Evaluate value prediction at multiple timesteps, not only at the initial state s0; characterize how the value signal evolves during reasoning and whether “value neurons” are temporally stable.

- Report sensitivity of probe performance and neuron identification to decoding strategies and sampling parameters (greedy vs. nucleus/beam, temperature, top-p/top-k).

- Extend tasks beyond math- and STEM-heavy benchmarks (e.g., long-form writing, code generation, summarization, translation, dialogue safety) to test domain generality of the reward subsystem.

- Assess cross-lingual robustness (non-English tasks), multimodal inputs, and instruction-following settings to validate universality claims.

- Examine whether the subsystem persists in closed-source frontier models (e.g., GPT-4/Claude/Gemini) and across training regimes (SFT, RLHF, DPO, RLVR, RLIF) rather than only a small set of open-source models.

- Specify and empirically test the discount factor γ used in TD learning; conduct sensitivity analysis to γ and alternative temporal targets (e.g., λ-returns).

- Validate that the probe does not learn spurious correlations (e.g., prompt format artifacts, “Answer:” tokens); include controls with prompt variations and adversarial reformatting.

- Move beyond binary correctness rewards: test continuous/graded rewards, step-level partial credit, or external preferences to evaluate generality of the subsystem.

- Provide quantitative metrics for “dopamine neurons”: compute correlations between activation and TD error (Pearson/Spearman), AUROC for positive vs. negative surprise classification, and statistical significance across large samples.

- Detail and justify the dopamine neuron selection pipeline (currently in Appendix E) with reproducible criteria, baselines, and cross-seed stability analyses.

- Replace case-study visualizations of dopamine neurons with population-level analyses across many neurons/tasks; report effect sizes and confidence intervals.

- Control for LayerNorm and distribution-shift confounds in ablation (e.g., rescale zeroed units, use masked residual rather than hard zeroing) to ensure observed effects are causal.

- Test ablations across multiple layers simultaneously and quantify interactions among layers; determine whether “value neurons” are localized or distributed across depth.

- Disaggregate where “value neurons” live (attention vs. MLP blocks, specific heads, positional channels); use mechanistic tools (e.g., logit lens, path patching, SAEs) to localize circuitry.

- Verify whether neuron positions are stable across training seeds and fine-tuning runs; report IoU across seeds and provide statistical tests against the random baseline.

- Analyze whether increasing IoU at extreme pruning ratios is a statistical artifact (e.g., convergence to a minimal set); include permutation tests and confidence bands for IoU curves.

- Characterize the directionality of contributions (positive vs. negative value coding); test whether some neurons encode “anti-value” and how signs interact in the probe.

- Investigate whether “value neurons” capture task difficulty or uncertainty rather than value per se; include controlled difficulty-stratified evaluations.

- Measure how ablation affects general calibration (ECE/Brier score) and perplexity; rule out global degradation as the cause of performance drops reported in Table 1.

- Replicate ablation results across models, datasets, and layers with full reporting (layers targeted, number of runs, variance); current table lacks layer identifiers and dispersion metrics.

- Test whether the subsystem can be exploited to improve training (e.g., adding value-heads, auxiliary losses, reward shaping); provide controlled experiments and sample-efficiency analyses.

- Evaluate interactions with hallucination and safety: do value/dopamine neurons predict harmful or incorrect outputs, and can they be used to suppress them?

- Present the full methodology for confidence prediction (Appendix G) and benchmark against strong baselines (entropy, margin, verifier models) with calibration metrics.

- Explore whether features are compositional (subspaces) rather than single dimensions; test subspace-based ablations and rotations.

- Examine robustness to architectural modifications (e.g., rotary embeddings variations, gated MLPs, residual scaling, attention dropout); confirm subsystem persistence under such changes.

- Investigate whether learned value signals are grounded in features accessible to the model (e.g., chain-of-thought structure, intermediate numeric correctness) via causal mediation tests.

- Provide code and detailed hyperparameters for reproducibility (Appendix B is referenced but full settings, seeds, and data splits should be made explicit).

- Clarify the reward computation for non-open-ended tasks (ARC/MMLU multiple choice): how is “final reward” defined when no chain-of-thought is required, and does it bias the probe?
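The basis-dependence concern raised in this list can be seen in two dimensions. The sketch below is a toy construction, not an experiment from the paper: a readout that uses a single "neuron" is rotated together with the hidden state, which leaves the output unchanged but spreads the weight across both coordinates.

```python
import math

def matvec(m, v):
    return [sum(a * x for a, x in zip(row, v)) for row in m]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def rotation(theta):
    """2x2 rotation matrix (an orthogonal transform)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

def nonzero_count(v, tol=1e-9):
    return sum(1 for x in v if abs(x) > tol)

w = [1.0, 0.0]   # readout that depends only on "neuron" 0
h = [0.7, -0.3]  # a toy hidden state

R = rotation(math.pi / 4)
w_rot, h_rot = matvec(R, w), matvec(R, h)

# Rotating both vectors by the same orthogonal matrix preserves the output.
same_output = abs(dot(w, h) - dot(w_rot, h_rot)) < 1e-9
print(same_output, nonzero_count(w), nonzero_count(w_rot))
```

The function computed is identical in both bases, yet the readout looks sparse in one and dense in the other. This is why a rotation test would help decide whether "value neurons" are canonical units or features of the chosen coordinates.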

Glossary

- ablation: Deliberate removal or suppression of specific components (e.g., neurons/activations) to test their causal effect on behavior. "Ablating even a small subset of these neurons severely impairs performance."

- activation trajectory: The time-varying pattern of a neuron's activation over the sequence of generated tokens/states during inference. "visualize their activation trajectories"

- Area Under the Receiver Operating Characteristic curve (AUC): A metric summarizing a classifier’s ability to distinguish classes across thresholds. "we utilize the Area Under the Receiver Operating Characteristic curve (AUC) as our metric"

- autoregressive: A generation process where each next token is predicted conditioned on all previous tokens. "Our study focuses on autoregressive LLMs."

- base model: The original pretrained model from which multiple fine-tuned variants are derived. "derived from the same base model"

- decoder-only LLMs: Transformer-based LLMs composed solely of decoder stacks that generate tokens sequentially. "modern decoder-only LLMs consist of multiple Transformer blocks"

- discount factor: A parameter in temporal-difference methods that down-weights future rewards relative to immediate ones. "Given a discount factor γ,"

- dopamine neurons: Neurons that encode reward prediction errors, increasing activation for better-than-expected outcomes and decreasing for worse-than-expected ones. "we identify dopamine neurons within the reward subsystem"

- Intersection over Union (IoU): A set similarity measure defined as the size of the intersection divided by the size of the union. "computing the Intersection over Union (IoU) of value neurons"

- L1 norm: The sum of absolute values of a vector; used here for magnitude-based pruning. "we calculate the L1 norm of the weights"

- multi-layer perceptron (MLP): A feed-forward neural network with one or more hidden layers and nonlinear activations. "we employ a two-layer multi-layer perceptron (MLP) with ReLU activation."

- negative surprise: A situation where outcomes are worse than expected, corresponding to negative prediction error and reduced activation. "in the negative surprise shown in Figure 1(b)"

- orbitofrontal cortex (OFC): A brain region implicated in representing subjective value during decision-making. "orbitofrontal cortex (OFC)"

- positive surprise: A situation where outcomes are better than expected, corresponding to positive prediction error and increased activation. "In the case of the positive surprise shown in Figure 1(a)"

- pruning ratio: The fraction of input dimensions or neurons removed to test sparsity and importance. "Now we introduce a pruning ratio p."

- Reinforcement Learning from Internal Feedback (RLIF): A framework where models learn from intrinsic signals instead of external rewards or labeled data. "Reinforcement Learning from Internal Feedback (RLIF)"

- ReLU activation: A nonlinear activation function defined as max(0, x), used to introduce nonlinearity in networks. "with ReLU activation."

- Reward Prediction Error (RPE): The discrepancy between obtained reward and expected value. "encode the Reward Prediction Error (RPE)"

- reward subsystem: A hypothesized sparse set of neurons that encode value and prediction-error signals within LLMs. "We identify a reward subsystem in LLM hidden states analogous to that of the human brain."

- Sparse Autoencoders (SAEs): Autoencoders trained with sparsity constraints to yield interpretable latent features. "Sparse Autoencoders (SAEs)"

- substantia nigra pars compacta (SNc): A midbrain region dense with dopamine neurons involved in signaling prediction errors. "substantia nigra pars compacta (SNc)"

- Temporal Difference (TD) learning: An RL method that updates value estimates using bootstrapped predictions from subsequent states. "The probe is optimized using Temporal Difference (TD) learning."

- TD error: The instantaneous temporal-difference error between successive value estimates (and terminal reward). "the advantages of utilizing the TD error training objective"

- Transformer blocks: Modular layers in Transformer architectures combining self-attention and feed-forward networks. "multiple Transformer blocks"

- value head: An auxiliary scalar-output head used to predict value or correctness from internal representations. "a value head to predict whether models can answer questions correctly"

- value neurons: A sparse subset of hidden units encoding the model’s internal estimate of the current state’s value. "value neurons are robust across diverse datasets"

- value probe: A lightweight predictor trained on hidden states to estimate value or reward signals. "we introduce a value probe V."

- ventral tegmental area (VTA): A midbrain region containing dopamine neurons that signal prediction errors. "ventral tegmental area (VTA)"

- ventromedial prefrontal cortex (vmPFC): A frontal cortex region associated with representing subjective value. "ventromedial prefrontal cortex (vmPFC)"

- zeroing out: Setting selected neuron activations to zero during inference to assess their causal role. "zero out the activations of the top 1% of value neurons"
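Three glossary terms (L1 norm, pruning ratio, and IoU) fit together in a short sketch. It assumes importance is measured per input dimension, by summing the absolute first-layer probe weights attached to that dimension. That grouping, the toy weight matrices, and the function names are illustrative assumptions.

```python
def input_importance(first_layer_weights):
    """L1 norm of the weights attached to each input dimension.

    first_layer_weights[i][j] connects input dimension j to hidden unit i.
    """
    n_inputs = len(first_layer_weights[0])
    return [sum(abs(row[j]) for row in first_layer_weights) for j in range(n_inputs)]

def keep_after_pruning(first_layer_weights, pruning_ratio):
    """Input dimensions that survive after removing the given fraction of inputs."""
    scores = input_importance(first_layer_weights)
    n_keep = max(1, round((1 - pruning_ratio) * len(scores)))
    ranked = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)
    return set(ranked[:n_keep])

def iou(a, b):
    """Intersection over Union of two index sets."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

# Toy first-layer weights for two probes (2 hidden units x 4 input dimensions).
probe_a = [[0.1, -2.0, 0.0, 1.0], [0.2, 1.0, 0.05, -0.5]]
probe_b = [[0.0, 1.5, 0.9, 0.1], [0.1, -1.0, 0.8, 0.0]]

neurons_a = keep_after_pruning(probe_a, pruning_ratio=0.5)
neurons_b = keep_after_pruning(probe_b, pruning_ratio=0.5)
overlap = iou(neurons_a, neurons_b)
print(neurons_a, neurons_b, overlap)
```

Comparing `overlap` against the IoU of equally sized random index sets gives a baseline for judging whether two probes really select the same neurons.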

Practical Applications

Immediate Applications

Below are concrete use cases that can be deployed with current capabilities, leveraging the paper’s discovery of sparse “value neurons” (predicting internal state value) and TD-trained value probes.

- Confidence gating and abstention at inference (healthcare, finance, enterprise support, education)

  - What: Use a lightweight, two-layer MLP probe on hidden states at the prompt (or early tokens) to estimate the likelihood of a correct answer, then abstain, escalate to a human, or switch to a stronger model when confidence is low.

  - Tools/workflows: “Confidence head” or probe attached to chosen layers; threshold-based router; fallback policies; logging of probe AUC over time.

  - Assumptions/dependencies: Access to hidden states at inference; calibration data with binary rewards (correct/incorrect); threshold calibration; robustness beyond math/STEM needs validation.

- Dynamic compute allocation and routing (software, cloud, robotics planning)

  - What: Adapt generation depth (chain-of-thought length), sampling width (n-best), and temperature using the value probe’s early predictions; early-stop when value saturates or increase compute when value is low.

  - Tools/workflows: Token-budget scheduler keyed to value trajectory; early-exit hooks; autoscaling across model tiers.

  - Assumptions/dependencies: Hidden-state access; latency budget for probe; policy for adaptation; evaluation on non-math tasks pending.

- Best-of-N sampling and internal reranking (cross-sector)

  - What: Use the value probe to pick among multiple sampled reasoning paths without external labels, improving answer quality and efficiency (complements SWIFT-style strategies).

  - Tools/workflows: N-sample generation plus internal value scoring; optional tie-breakers (e.g., verifiers, tools).

  - Assumptions/dependencies: Probe trained on representative tasks; selection thresholds; coverage across tasks.

- Early hallucination/risk detection (healthcare, legal, news, enterprise knowledge management)

  - What: Flag low-value predictions and trajectories as high-risk; trigger conservative response modes (cite sources, add disclaimers) or require grounding with tools.

  - Tools/workflows: Risk score fused with other safety signals; policy engine; structured logging for post-hoc review.

  - Assumptions/dependencies: Calibration for domain; risk thresholds; hidden-state access; generalization beyond evaluated datasets.

- Tool-use and fallback orchestration (software, data/analytics)

  - What: When early value is low, automatically route to tools (calculator, code interpreter, retrieval) or stronger models; when value rises after tool use, continue generation.

  - Tools/workflows: Tool router keyed to value deltas; decision graph for escalation; feedback loop to adjust tool budgets.

  - Assumptions/dependencies: Tool integrations; latency overhead; probe sensitivity to tool-induced changes.

- MLOps diagnostics and training monitoring (AI research and engineering)

  - What: Track value-probe AUC and neuron sets over training to monitor reasoning progress, distribution shift, and overfitting; use IoU of value neurons across datasets/models to detect drift.

  - Tools/workflows: Training dashboards for AUC/IoU; regression tests across releases; alerts on neuron set shifts.

  - Assumptions/dependencies: Offline access to training/eval traces; consistent logging; agreed-upon metrics.

- Transferable “confidence plug-ins” for model families (model providers, platform vendors)

  - What: Ship pre-trained value probes for base models that transfer to fine-tuned variants (leveraging observed neuron overlap), reducing per-app calibration cost.

  - Tools/workflows: Probe bundles per layer; minimal fine-tuning on customer data; API for confidence scores.

  - Assumptions/dependencies: Licensing and access to activations; some domain adaptation may still be needed.

- Robustness and ablation testing (model development)

  - What: Identify and stress-test critical value neurons via targeted ablation for robustness analysis; compare to random ablation to pinpoint reasoning bottlenecks.

  - Tools/workflows: Automated ablation sweeps; failure case mining; reporting of performance deltas.

  - Assumptions/dependencies: Care to avoid permanent model damage; run in offline evaluation.

- End-user confidence UX (consumer assistants, education)

  - What: Display a calibrated confidence meter based on value neurons; prompt users to verify or request more evidence when confidence is low.

  - Tools/workflows: UI elements (meters, warnings); user-configurable thresholds; A/B tests for trust impact.

  - Assumptions/dependencies: Clear messaging to avoid overreliance; regulatory guidance for disclosures in sensitive domains.

- Data curation and active learning (data operations)

  - What: Use low initial value or high value–outcome mismatches to prioritize examples for labeling, augmentation, or curriculum scheduling.

  - Tools/workflows: Sampling policies keyed to value signals; data pipelines tagging “high learning value” items.

  - Assumptions/dependencies: Labeling budget; stable link between low value and future performance gains.
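Two of the immediate applications above, confidence gating and best-of-N reranking, reduce to very little code once a probe score is available. The sketch below assumes the score is a number between 0 and 1. The thresholds `answer_at` and `abstain_below` are hypothetical placeholders that would need calibration on held-out data, and the candidate texts are made up.

```python
def route(confidence, answer_at=0.8, abstain_below=0.3):
    """Threshold-based router keyed to a probe's confidence score.

    Both thresholds are illustrative; they are not values from the paper.
    """
    if confidence >= answer_at:
        return "answer"
    if confidence < abstain_below:
        return "abstain"
    return "escalate"  # e.g. hand off to a human or a stronger model

def best_of_n(candidates):
    """Pick the sampled answer with the highest internal value score.

    candidates is a list of (answer_text, probe_value) pairs.
    """
    return max(candidates, key=lambda c: c[1])

decisions = [route(c) for c in (0.92, 0.55, 0.10)]
chosen = best_of_n([("x = 4", 0.41), ("x = 6", 0.88), ("x = 5", 0.63)])
print(decisions, chosen)
```

Keeping the router separate from the scoring step means the same thresholds can be reused whether the score comes from a value probe or from another confidence estimator.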

Long-Term Applications

These use cases depend on additional research, scaling, or integration work—especially around “dopamine neurons” (reward prediction error) and neuron-level interventions.

- RPE-aware self-correction of chain-of-thought (software, education, coding assistants)

  - What: Detect negative-surprise spikes (dopamine neuron patterns) during reasoning and trigger backtracking, replanning, or tool calls; exploit positive-surprise spikes to checkpoint key insights.

  - Tools/products: “RPE-aware CoT supervisor” that edits or branches reasoning in real time.

  - Assumptions/dependencies: Quantitative, reliable RPE detection beyond case studies; fast online signal extraction; evaluation of interference risks.

- Intrinsic-reward reinforcement learning (model training, foundation models)

  - What: Use value and RPE signals as internal critics for RL (RLIF), reducing dependence on human labels and enabling self-improvement on unlabeled tasks.

  - Tools/products: Training loops using TD-trained value heads; curriculum guided by internal surprise signals.

  - Assumptions/dependencies: Stability and safety of self-rewarding; preventing reward hacking; generalization across domains.

- Neuron-level safety and calibration controls (safety-critical sectors: healthcare, aviation, finance)

  - What: Modulate or regularize value neuron activations to prevent overconfidence and suppress hallucinations; enforce abstention under low internal value.

  - Tools/products: Calibration layers, neuron clamps, or regularizers; safety dashboards with neuron-level telemetry.

  - Assumptions/dependencies: Reliable mapping of value neurons across updates; minimal collateral damage to capabilities; regulatory acceptance.

- Architecture and hardware co-design for fast confidence screening (semiconductors, edge AI)

  - What: Architect explicit “value pathways” and hardware kernels optimized to read a small neuron subset for millisecond pre-screening on edge devices.

  - Tools/products: Value-head microkernels; accelerator support for sparse activation reads.

  - Assumptions/dependencies: Standardization of neuron indices; consistent sparsity across versions; cost-benefit vs. full forward pass.

- Regulatory auditing and compliance frameworks (policy, governance)

  - What: Define standardized, auditable internal-confidence metrics (based on value neurons) to certify abstention behavior and reliability thresholds in sensitive applications.

  - Tools/products: Audit APIs exposing value trajectories with privacy-preserving summaries; compliance reports.

  - Assumptions/dependencies: Consensus on metrics and thresholds; privacy and IP constraints around model internals.

- Continual learning and model drift/tamper detection (security, MLOps)

  - What: Monitor IoU of value-neuron sets and probe performance across time to detect distribution shifts, regressions, or tampering; trigger retraining or rollback.

  - Tools/products: Neuron-set fingerprinting; automated alarms; integrity checks in CI/CD.

  - Assumptions/dependencies: Stable baselines; sensitivity tuning to avoid false positives; access to internal activations.

- Cross-model transfer and standard “value neuron maps” (ecosystem tooling)

  - What: Build shared maps of value neurons across model families to enable portable probes and faster deployment of confidence tooling.

  - Tools/products: Open registries of neuron indices per checkpoint; conversion utilities across architectures.

  - Assumptions/dependencies: Cooperation among model vendors; versioning standards; robustness of mappings post-fine-tune.

- Tool-use orchestration keyed to value/RPE trajectories (robotics, autonomous agents, enterprise workflow)

  - What: Trigger external tools/search/planners when negative RPE indicates reasoning failure; allocate more sensing/planning budget upon positive RPE spikes signaling progress.

  - Tools/products: RPE-driven orchestrators for agents; adaptive planning stacks.

  - Assumptions/dependencies: Online RPE reliability; latency impact; safety validation in embodied settings.

- Personalized tutoring and adaptive explanations (education)

  - What: Tailor depth, pace, and scaffolding of explanations based on internal value signals; prompt for verification when confidence drops mid-solution.

  - Tools/products: Tutors with dynamic explanation policies; formative feedback keyed to internal confidence.

  - Assumptions/dependencies: Validation in varied subjects/languages; pedagogy-aligned calibration.

- Computational neuroscience and cross-disciplinary research (academia)

  - What: Use LLM reward subsystems as testbeds for hypotheses about biological value and dopamine systems; inform new algorithms and interpretability methods.

  - Tools/products: Shared benchmarks connecting TD error, RPE-like signals, and learning dynamics.

  - Assumptions/dependencies: Careful mapping from artificial to biological constructs; reproducibility standards.
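The RPE-aware self-correction idea above could start from something as simple as watching for sharp drops in the step-by-step value estimate. The sketch below is a speculative illustration only: it uses differences between successive probe values as a stand-in for dopamine-neuron activity, and both `drop_threshold` and `gamma` are hypothetical settings.

```python
def step_errors(values, gamma=1.0):
    """TD-style differences between successive value estimates."""
    return [gamma * nxt - cur for cur, nxt in zip(values, values[1:])]

def first_backtrack_point(values, drop_threshold=0.3, gamma=1.0):
    """Index of the first step whose value falls by more than the threshold.

    Returns None when no step shows a large enough negative surprise.
    """
    for t, err in enumerate(step_errors(values, gamma)):
        if err < -drop_threshold:
            return t + 1  # the step at which the estimate dropped
    return None

# Toy value trajectory over five reasoning steps.
trajectory = [0.7, 0.75, 0.8, 0.35, 0.3]
backtrack_at = first_backtrack_point(trajectory)
steady = first_backtrack_point([0.5, 0.6, 0.7])
print(backtrack_at, steady)
```

A supervisor built on this would discard or revisit the reasoning from the flagged step onward. Whether such drops reliably mark real reasoning errors is exactly the open question noted in the assumptions above.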

Cross-cutting assumptions and dependencies

- Hidden-state access is essential for most applications; closed APIs may not permit this without vendor support.

- Current evidence is strongest on math/STEM and certain benchmarks; broader domain validation (e.g., open-ended dialogue, coding, multimodal tasks) is needed.

- Probes require training on representative data with final rewards; transfer helps but may not eliminate domain adaptation.

- Neuron positions shift with model updates; robust versioning and monitoring are needed to maintain tooling.

- Runtime interventions (e.g., neuron ablation/modulation) can degrade performance if misapplied; conservative deployment and offline evaluation are recommended.
