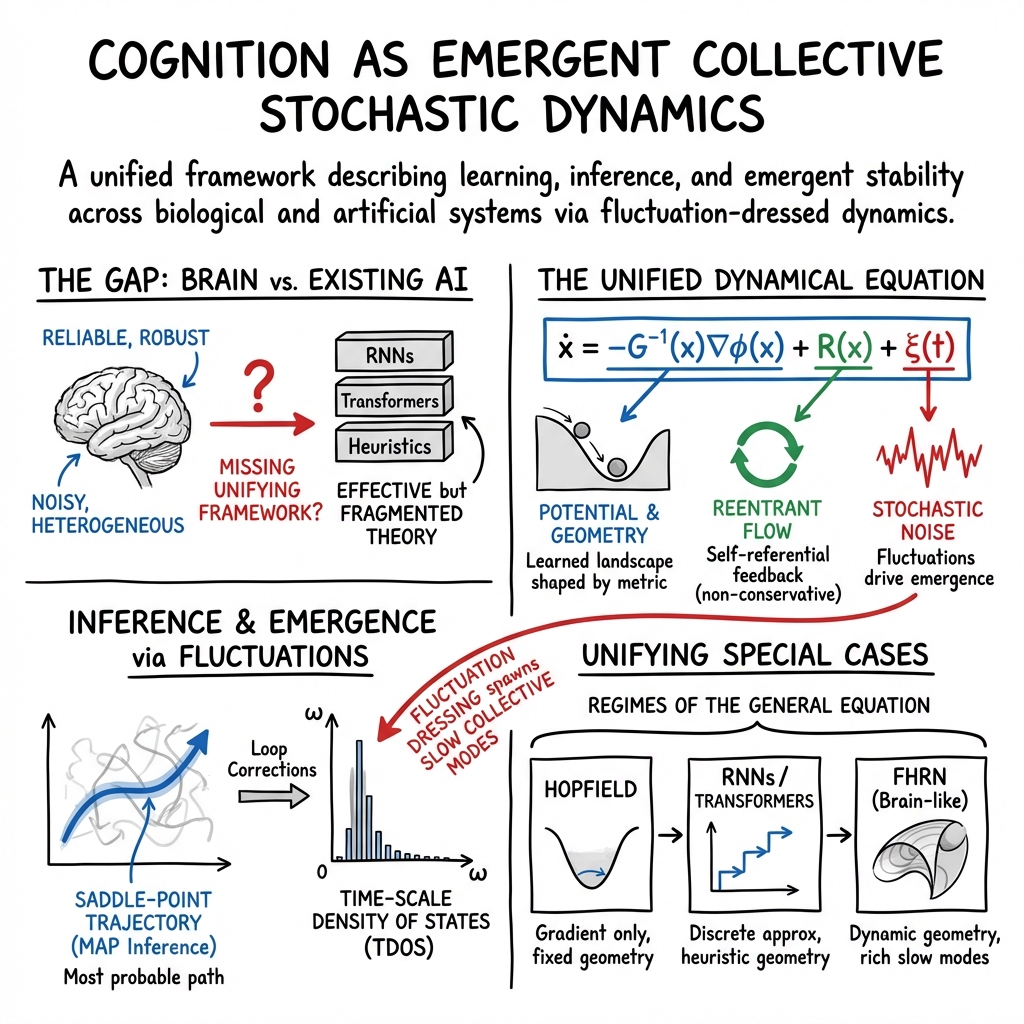

- The paper introduces a unified dynamical field framework that integrates energy-based models, transformers, and RNNs via a stochastic dynamic equation.

- The paper details how learning shapes effective potentials while inference emerges from saddle-point trajectories and collective mode evolution.

- The paper demonstrates that emergent cognitive properties arise from loop corrections and time-scale organization, highlighting implications for neuroscience and AI.

Unified Dynamical Field Theory: Learning, Inference, and Emergence

Introduction

The paper "A Unified Dynamical Field Theory of Learning, Inference, and Emergence" (2601.10221) introduces a comprehensive framework that bridges different architectures in biological and artificial systems, from energy-based models to transformers. The proposed unified dynamical field theory presents a stochastic dynamic equation rooted in the Martin–Siggia–Rose–Janssen–de Dominicis (MSRJD) formulation, establishing cognition as a collective phenomenon governed by dynamical time scales, rather than by individual units or static patterns.

Unified Dynamical Framework

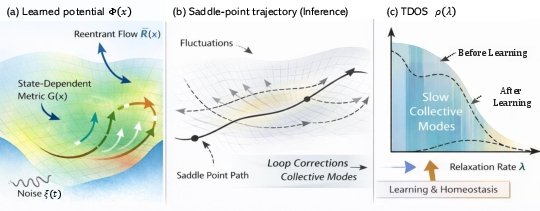

The theory posits a single dynamical equation in which neural states evolve on a state-space geometry shaped by effective potentials, reentrant flows, and stochastic fluctuations. Learning shapes the effective potential, inference follows stable trajectories within the resulting landscape, and emergent cognitive properties arise from loop-induced collective modes and their time scales.

Figure 1: Conceptual overview of the unified dynamical field theory illustrating neural state evolution and the influence of state-space geometry.
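To make the three ingredients named above concrete (potential gradient, non-gradient flow, noise), here is a minimal numerical sketch. It assumes a flat state-space metric and a generic Langevin form, dx = (-grad V(x) + flow(x)) dt + noise; the paper's actual equation may differ. The double-well potential, the rotational `flow`, and all parameter values are hypothetical choices made for illustration.

```python
import numpy as np

def simulate(x0, grad_V, flow, noise=0.1, dt=0.01, steps=2000, seed=0):
    """Euler-Maruyama integration of dx = (-grad_V(x) + flow(x)) dt + sqrt(2*noise*dt) * xi."""
    rng = np.random.default_rng(seed)
    x = np.array(x0, dtype=float)
    path = np.empty((steps + 1, x.size))
    path[0] = x
    for t in range(steps):
        drift = -grad_V(x) + flow(x)
        kick = np.sqrt(2.0 * noise * dt) * rng.standard_normal(x.size)
        x = x + drift * dt + kick
        path[t + 1] = x
    return path

# Hypothetical potential: double well along axis 0, quadratic along axis 1.
def grad_V(x):
    return np.array([x[0] ** 3 - x[0], x[1]])

# Hypothetical non-gradient (rotational) term standing in for a reentrant flow.
def flow(x):
    return 0.5 * np.array([-x[1], x[0]])

# With the noise switched off, the state relaxes to a stable fixed point.
path = simulate([1.5, 0.0], grad_V, flow, noise=0.0)
final_state = path[-1]
```

Because the flow term is not a gradient, the fixed point sits away from the minima of the potential alone: for these choices it lies at |x0| = sqrt(0.75) rather than at |x0| = 1. Setting `noise` above zero adds the stochastic fluctuations around that trajectory.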

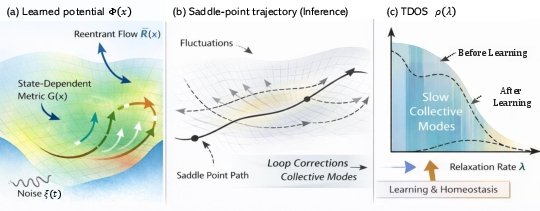

Dynamics of Learning and Inference

In the unified theory, inference corresponds to saddle-point trajectories of the MSRJD action. These trajectories capture the most probable evolution of the system, which underpins Bayesian inference over system trajectories. Inference therefore emerges dynamically, as relaxation on the learned potential landscape, rather than through an explicit algorithmic computation. Loop corrections to the saddle point contribute emergent collective modes: dynamic structures that underlie temporal integration and stability.
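For readers unfamiliar with the formalism, the saddle-point statement can be illustrated with the textbook MSRJD construction for a one-dimensional Langevin equation. This is a sketch under stated assumptions, not the paper's own action: the drift f, noise strength D, and response field \tilde{x} are generic symbols introduced here, the path weight is taken as e^{-S} with \tilde{x} integrated along the imaginary axis, and the discretization-dependent Jacobian term is omitted.

```latex
\begin{align*}
\dot{x}(t) &= f(x) + \eta(t), &
\langle \eta(t)\,\eta(t') \rangle &= 2D\,\delta(t - t') \\
S[x, \tilde{x}] &= \int dt \,\Big[ \tilde{x}\,\big(\dot{x} - f(x)\big) - D\,\tilde{x}^{2} \Big] \\
\frac{\delta S}{\delta \tilde{x}} &= \dot{x} - f(x) - 2D\,\tilde{x} = 0, &
\frac{\delta S}{\delta x} &= -\dot{\tilde{x}} - f'(x)\,\tilde{x} = 0 \\
\tilde{x} = 0 \;&\Rightarrow\; \dot{x} = f(x)
\end{align*}
```

The solution with \tilde{x} = 0 recovers the noise-free relaxation dynamics, which is the sense in which the saddle point gives the most probable trajectory. Fluctuations around it enter through higher-order (loop) terms.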

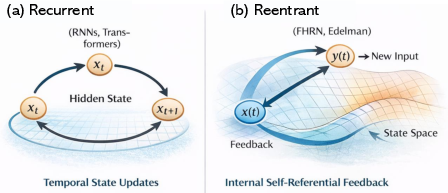

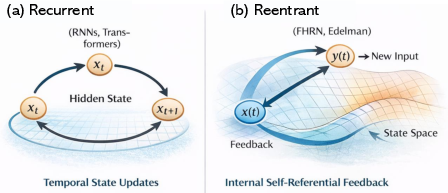

Figure 2: Recurrent vs. reentrant neural architectures, highlighting differences in state updating and feedback mechanisms.

Emergent Collective Modes

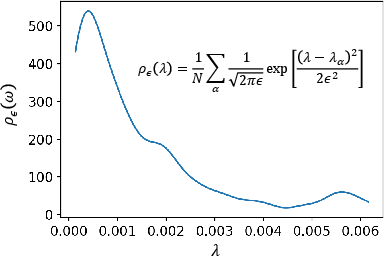

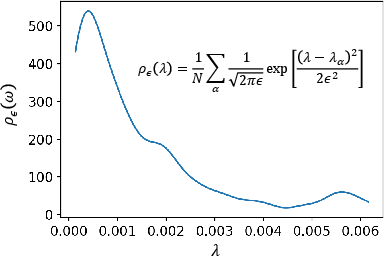

Fluctuations around inference trajectories give rise, through loop corrections, to emergent phenomena such as critical slowing down and collective modes. The Time-scale Density of States (TDOS) quantifies how dynamical time scales are organized, an organization that is crucial for inference dynamics. The theory locates cognition in these collective dynamics rather than in static attributes.
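The summary does not give the paper's formal definition of the TDOS. As a rough illustration only, the sketch below assumes one plausible reading: linearize the dynamics around a stable state, take the relaxation time of each decaying mode as tau_i = -1 / Re(lambda_i), and histogram those times on a log scale. The function names and the toy Jacobian are hypothetical.

```python
import numpy as np

def relaxation_times(jacobian):
    """Relaxation times tau_i = -1 / Re(lambda_i) for the decaying modes of a Jacobian."""
    eigvals = np.linalg.eigvals(jacobian)
    decay_rates = -eigvals.real
    stable = decay_rates > 0          # keep only modes that actually decay
    return 1.0 / decay_rates[stable]

def time_scale_histogram(jacobian, bins=10):
    """Normalized histogram of log10(tau): an assumed, crude stand-in for a TDOS."""
    taus = relaxation_times(jacobian)
    density, edges = np.histogram(np.log10(taus), bins=bins, density=True)
    return density, edges

# Toy Jacobian with decay rates spanning three decades.
rates = np.array([10.0, 1.0, 0.1, 0.01])
J = -np.diag(rates)
taus = np.sort(relaxation_times(J))
density, edges = time_scale_histogram(J, bins=3)
```

In this picture, a mode whose eigenvalue approaches zero has a diverging relaxation time, which is the linear-stability signature of critical slowing down.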

Learning as Structural Adaptation

Learning is conceptualized as the slow adaptation of the structural components of the dynamics, driven by the statistics of inference trajectories. This adaptation reorganizes the TDOS, reinforcing slow, task-relevant collective modes and suppressing fast, unstable ones. The perspective shifts the focus from neuron-centric views to descriptions of cognition based on time scales.
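The separation between fast inference and slow structural adaptation can be sketched as a two-time-scale loop. This is an illustration under assumptions, not the paper's learning rule: the rate dynamics, the Hebbian-with-decay update, and the names `adapt`, `eps`, and `fast_steps` are all hypothetical.

```python
import numpy as np

def adapt(W0, inputs, dt=0.05, fast_steps=200, eps=0.01):
    """Fast state relaxation inside, slow weight adaptation outside (assumed Hebbian rule)."""
    W = np.array(W0, dtype=float)
    n = W.shape[0]
    for u in inputs:
        x = np.zeros(n)
        corr = np.zeros((n, n))
        # Fast time scale: the state relaxes under fixed weights.
        for _ in range(fast_steps):
            r = np.tanh(x)
            x = x + dt * (-x + W @ r + u)
            corr += np.outer(r, r) / fast_steps   # trajectory statistics
        # Slow time scale: weights drift toward the trajectory-averaged correlations.
        W = W + eps * (corr - W)
    return W

rng = np.random.default_rng(0)
W0 = 0.1 * rng.standard_normal((4, 4))
inputs = rng.standard_normal((50, 4))
W = adapt(W0, inputs, eps=0.01)
```

The weights only see averages over each fast trajectory, so they change by a fraction `eps` per episode. Since the averaged correlation matrix is symmetric, this particular rule also shrinks the antisymmetric part of `W` by a factor (1 - eps) each episode, pulling the dynamics toward a gradient-like form.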

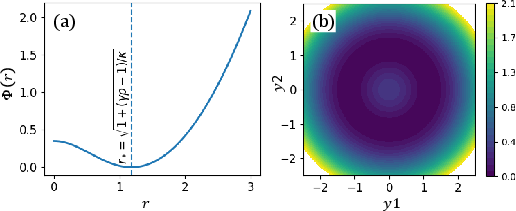

Special Limits and Model Unifications

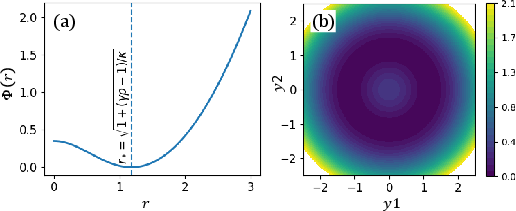

Hopfield networks, RNNs, transformers, and Fast-weights Homeostatic Reentry Networks (FHRNs) arise as special limits of the unified framework, each imposing different constraints on the potential, geometry, and flows. Hopfield networks correspond to gradient descent dynamics, transformers to reentrant dynamics in discrete time, and FHRNs to homeostatic metric generation.

Figure 3: Effective homeostatic potential of the Fast-weights Homeostatic Reentry Network, demonstrating the emergence of a stable shell for collective neural activity.
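The Hopfield limit mentioned above is the easiest one to check numerically. The sketch below uses the classic discrete Hopfield model with Hebbian weights, where each asynchronous update cannot raise the energy E(s) = -0.5 s^T W s. This is a discrete analogue of descent on a potential; it is the standard textbook model, not code from the paper, and the stored pattern is an arbitrary example.

```python
import numpy as np

def hopfield_weights(patterns):
    """Hebbian outer-product weights with zero diagonal (symmetric by construction)."""
    P = np.asarray(patterns, dtype=float)
    W = P.T @ P / P.shape[1]
    np.fill_diagonal(W, 0.0)
    return W

def energy(W, s):
    return -0.5 * s @ W @ s

def recall(W, s0, sweeps=5):
    """Asynchronous sign updates, recording the energy after every unit update."""
    s = np.array(s0, dtype=float)
    energies = [energy(W, s)]
    for _ in range(sweeps):
        for i in range(s.size):
            h = W[i] @ s              # local field on unit i
            if h != 0:
                s[i] = np.sign(h)
            energies.append(energy(W, s))
    return s, energies

pattern = np.array([1, -1, 1, -1, 1, 1, -1, -1])
W = hopfield_weights([pattern])
noisy = pattern.copy()
noisy[:2] *= -1                       # corrupt two units
recovered, energies = recall(W, noisy)
```

Starting from the corrupted state, the updates restore the stored pattern while the recorded energy never increases, which is the sense in which the network relaxes on a fixed potential landscape.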

Discussion and Implications

The unified framework provides a single conceptual foundation for understanding the dynamics of learning architectures, with implications that extend to AI. It emphasizes dynamic stability, geometry regularization, and reentrant feedback, rather than complex architectural features, as the foundational principles for intelligent behavior and adaptive neutrality.

Figure 4: TDOS of the FHRN illustrating the distribution and accumulation of slow collective modes integral to inference dynamics.

Conclusion

This unified field theory reconceptualizes intelligence as governed by the coordination of dynamical time scales. It brings diverse learning systems under a single theoretical framework, emphasizing the geometric and collective nature of cognitive dynamics. The shift from static architecture to dynamic time-scale organization has implications for both neuroscience and artificial intelligence.