The Belief-Desire-Intention Ontology for modelling mental reality and agency

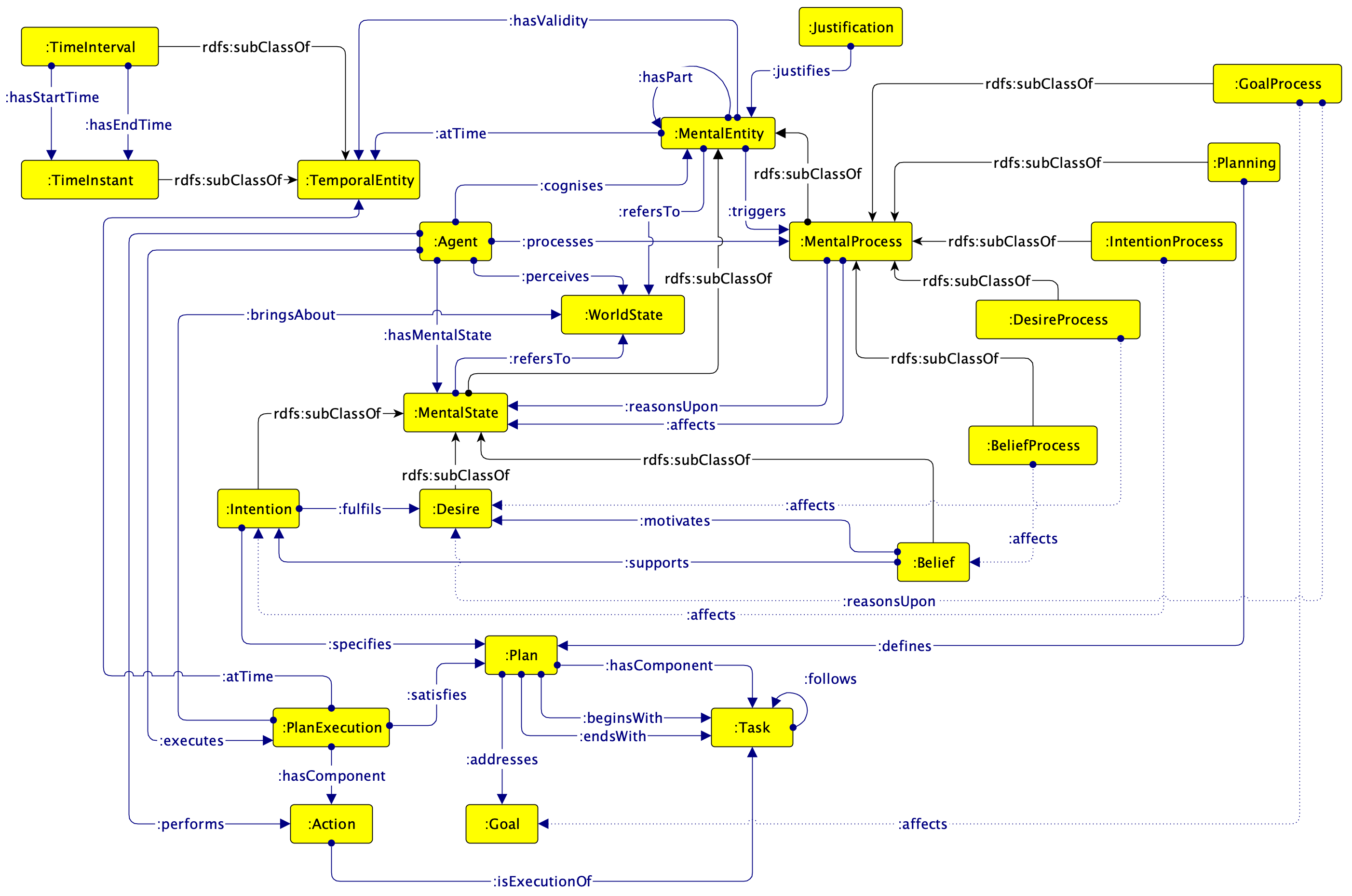

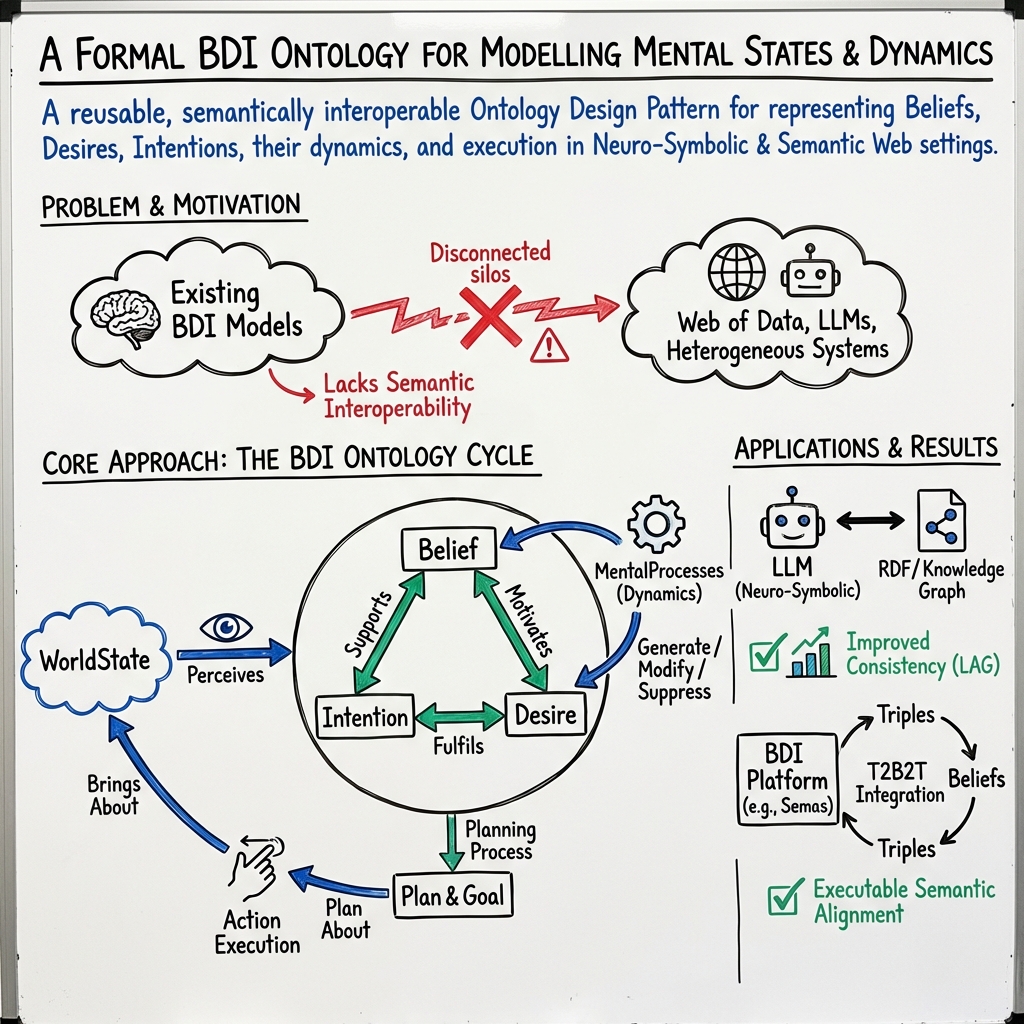

Abstract: The Belief-Desire-Intention (BDI) model is a cornerstone for representing rational agency in artificial intelligence and cognitive sciences. Yet, its integration into structured, semantically interoperable knowledge representations remains limited. This paper presents a formal BDI Ontology, conceived as a modular Ontology Design Pattern (ODP) that captures the cognitive architecture of agents through beliefs, desires, intentions, and their dynamic interrelations. The ontology ensures semantic precision and reusability by aligning with foundational ontologies and best practices in modular design. Two complementary lines of experimentation demonstrate its applicability: (i) coupling the ontology with LLMs via Logic Augmented Generation (LAG) to assess the contribution of ontological grounding to inferential coherence and consistency; and (ii) integrating the ontology within the Semas reasoning platform, which implements the Triples-to-Beliefs-to-Triples (T2B2T) paradigm, enabling a bidirectional flow between RDF triples and agent mental states. Together, these experiments illustrate how the BDI Ontology acts as both a conceptual and operational bridge between declarative and procedural intelligence, paving the way for cognitively grounded, explainable, and semantically interoperable multi-agent and neuro-symbolic systems operating within the Web of Data.


Explain it Like I'm 14

What this paper is about

This paper introduces the BDI Ontology, a shared “map” for describing how intelligent agents (like robots or AI programs) think and act. BDI stands for Beliefs, Desires, and Intentions:

- Beliefs = what an agent thinks is true about the world

- Desires = what the agent wants

- Intentions = what the agent commits to doing

The ontology is like a carefully designed dictionary plus blueprint that computers can understand. It makes these mental ideas precise, reusable, and compatible with the Semantic Web. The authors also show how this ontology can work with LLMs and a reasoning platform called Semas.
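To make the three terms concrete, here is a minimal sketch of a toy agent in Python. The class, field, and method names (`Agent`, `deliberate`, `achievable_if`) are illustrative assumptions and are not taken from the ontology or the paper; the deliberation rule is deliberately simplistic.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Toy BDI agent; structure and names are hypothetical, not the ontology's."""
    beliefs: set[str] = field(default_factory=set)        # what the agent holds true
    desires: set[str] = field(default_factory=set)        # what the agent wants
    intentions: list[str] = field(default_factory=list)   # what it has committed to

    def deliberate(self, achievable_if: dict[str, str]) -> None:
        """Commit to each desire whose enabling belief is currently held."""
        for desire in sorted(self.desires):
            needed = achievable_if.get(desire)
            if needed in self.beliefs and desire not in self.intentions:
                self.intentions.append(desire)


robot = Agent(beliefs={"battery_low"}, desires={"recharge", "explore"})
# Assumed mapping from each desire to the belief that makes it worth pursuing.
robot.deliberate({"recharge": "battery_low", "explore": "battery_full"})
print(robot.intentions)
```

Only `recharge` becomes an intention here, because the belief that would enable `explore` is not held.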

The big questions they asked

The authors organized their goals into clear “competency questions,” which are practical checks the ontology should be able to answer. In simple terms, they asked:

- How do we describe agents, their thoughts (beliefs, desires, intentions), and the world they live in?

- How do these thoughts change over time—what causes a belief or desire to appear, change, or disappear?

- How do goals and plans connect to intentions so agents can actually do things?

- How can we keep track of when things happened and how an agent’s mind changed over time?

How they built it

Think of the ontology as building with LEGO bricks. Instead of starting from scratch, the authors reuse dependable “design patterns” (standard, proven pieces) and connect them to a strong foundation so everything fits together.

- What is an ontology? It’s a structured set of concepts and relationships—like a shared vocabulary—that computers use to agree on “what’s what.”

- What is an Ontology Design Pattern (ODP)? Like a reusable LEGO piece that solves a common modeling problem (for example, how to represent events or plans).

Here’s their approach, explained with everyday ideas:

- They used an agile method called eXtreme Design. They wrote simple questions the ontology must answer (like “Which beliefs led to this desire?”) and built the model to answer them.

- They aligned their terms with a foundational ontology called DOLCE (think of this as a grammar book for concepts) to keep meanings consistent.

- They reused patterns for:

  - Events and situations (EventCore, Situation): to model mental processes (like “forming an intention”) and snapshots of the world.

  - Provenance: to record why a belief, desire, or intention exists (its justification).

  - BasicPlan and Sequence: to describe plans and the order of tasks.

  - TimeIndexedSituation: to mark when a mental state starts and ends.

They modeled:

- Mental states (Belief, Desire, Intention) as “things” an agent holds.

- Mental processes (like belief formation) as “activities” that generate, modify, or suppress those states.

- Causal links: beliefs can motivate desires; beliefs can support intentions; intentions aim to fulfill desires.

- Goals and plans: goals are descriptions of desired outcomes; plans are structured steps to reach a goal; plan execution is what actually happens in the real world.

- Justifications: reasons or evidence attached to mental states to improve explainability.

- Time: when states appear, change, and end, so we can trace how an agent’s mind evolves.
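The modeling choices above (states held by an agent, causal links, justifications, and time) can be sketched as a small data structure. This is an assumption-laden illustration: the field names `caused_by` and `justification`, the integer time ticks, and the half-open interval convention are all hypothetical and do not reproduce the ontology's actual classes or properties.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MentalState:
    kind: str                                  # "Belief", "Desire" or "Intention"
    content: str                               # the world state it refers to
    start: int                                 # tick at which the state begins
    end: Optional[int] = None                  # None means it still holds
    justification: str = ""                    # reason or evidence for the state
    caused_by: Optional["MentalState"] = None  # hypothetical causal link


def active_at(states: list[MentalState], t: int) -> list[MentalState]:
    """Return the states holding at tick t, treating [start, end) as half-open."""
    return [s for s in states if s.start <= t and (s.end is None or t < s.end)]


belief = MentalState("Belief", "it is raining", start=0, end=5,
                     justification="sensor reading")
desire = MentalState("Desire", "stay dry", start=1, caused_by=belief)
intention = MentalState("Intention", "take umbrella", start=2, end=4,
                        caused_by=desire)
states = [belief, desire, intention]

print([s.kind for s in active_at(states, 3)])
print([s.kind for s in active_at(states, 6)])
```

Querying different ticks shows how an agent's mind can be inspected at a given moment, which is the kind of temporal question the time-indexing is meant to support.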

What they tried it on

The authors tested the BDI Ontology in two complementary ways to show it’s useful both for understanding and for doing:

- With LLMs using Logic Augmented Generation (LAG)

  - Idea: Give an LLM a “logic-aware guide” (the ontology) so its answers are more consistent and make sense with clear rules.

  - Analogy: It’s like giving a storyteller a rulebook and a character map so the story doesn’t contradict itself.

  - They used the MS-LaTTE dataset to see if ontological grounding helps an LLM:

    - Spot contradictions

    - Build coherent belief–desire–intention explanations

    - Answer the ontology’s competency questions

- Inside a reasoning platform called Semas using the T2B2T paradigm

  - T2B2T stands for Triples-to-Beliefs-to-Triples.

  - RDF triples are simple data statements like “Alice knows Bob” (subject–predicate–object). Think of them as tiny facts.

  - This system turns external facts (triples) into an agent’s beliefs, updates intentions and plans, then writes the results back as new triples.

  - Analogy: It’s like an agent reading facts from the web, thinking about them, deciding what to do, and then writing back its conclusions, so it can talk to other systems smoothly.
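The triples-in, beliefs, triples-out flow described above can be sketched in plain Python. This is not the Semas API, whose mapping rules are not given in this summary; the function names, the single `knows` rule, and the `intendsToGreet` predicate are hypothetical and chosen only to show the shape of the round trip.

```python
Triple = tuple[str, str, str]  # (subject, predicate, object)


def triples_to_beliefs(triples: list[Triple]) -> set[Triple]:
    """Step 1: adopt incoming facts as beliefs (here, simply a copy)."""
    return set(triples)


def reason(beliefs: set[Triple]) -> set[Triple]:
    """Step 2: one made-up rule: if X knows Y, then X intends to greet Y."""
    return {(s, "intendsToGreet", o) for (s, p, o) in beliefs if p == "knows"}


def beliefs_to_triples(derived: set[Triple]) -> list[Triple]:
    """Step 3: write the conclusions back out as triples, in a stable order."""
    return sorted(derived)


incoming = [("Alice", "knows", "Bob"), ("Alice", "livesIn", "Paris")]
outgoing = beliefs_to_triples(reason(triples_to_beliefs(incoming)))
print(outgoing)
```

A real system would use an RDF library and far richer rules; the point here is only that the output has the same shape as the input, so other systems can consume it.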

Together, these tests show the ontology works both as a clear conceptual model and as a practical tool that connects symbolic knowledge (facts and rules) with procedures (plans and actions).

Main findings

Here are the main takeaways in a short list for clarity:

- The authors built a formal, modular BDI Ontology that cleanly represents beliefs, desires, intentions, goals, plans, and the processes that create and change them.

- The ontology is aligned with well-known foundations and reuses standard patterns, making it easy to share, extend, and plug into other systems.

- It supports explainability: each mental state can point to justifications and a chain from belief → desire → intention → plan → action → outcome.

- When combined with LLMs using LAG, the ontology offers structure that can help improve logical coherence and consistency in generated answers.

- When integrated with the Semas platform via T2B2T, agents can smoothly move between web data and internal mental states, making them interoperable in multi-agent and Web of Data environments.
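The explainability chain mentioned in the findings (belief → desire → intention → plan → action → outcome) amounts to walking "derived from" links backwards. The sketch below assumes a simple dictionary of such links; the data and the `explain` helper are hypothetical and are not part of the ontology.

```python
# Each entry maps an item to what it was derived from (made-up example data).
derived_from = {
    "outcome: battery charged": "action: dock at station",
    "action: dock at station": "plan: go to charger",
    "plan: go to charger": "intention: recharge",
    "intention: recharge": "desire: keep operating",
    "desire: keep operating": "belief: battery is low",
}


def explain(item: str, links: dict[str, str]) -> list[str]:
    """Follow the links back to the root and return the chain root-first."""
    chain = [item]
    while chain[-1] in links:
        previous = links[chain[-1]]
        if previous in chain:  # guard against accidental cycles in the data
            break
        chain.append(previous)
    return list(reversed(chain))


for step in explain("outcome: battery charged", derived_from):
    print(step)
```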

Why it matters

This work helps build AI agents that think more like careful planners and can explain themselves. That is because the ontology is:

- Clear: it defines mental states, processes, and time explicitly.

- Reusable: it follows best-practice patterns, so others can adopt or extend it.

- Interoperable: it fits into the Semantic Web and works with different tools, from LLMs to logic-based systems.

In practical terms, this means future AI systems—like digital assistants, robots, or coordination tools—can:

- Reason more consistently about what they know, want, and plan to do.

- Share and understand knowledge across different platforms.

- Explain their decisions with traceable justifications and timelines.

Overall, the BDI Ontology acts as a bridge between “declarative intelligence” (facts and meanings) and “procedural intelligence” (plans and actions). This makes it a promising foundation for explainable, cooperative, and human-aligned AI operating on the Web of Data.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper.

- Formal semantics for propositional content: The ontology uses `refersTo -> WorldState` to anchor mental content but does not specify how propositional content (e.g., beliefs as propositions, truth conditions, intensionality) is represented, compared, or matched across agents and time.

- Modal/rational constraints missing: Core BDI rationality properties (e.g., intention–belief consistency, means–end coherence, non-contradiction of intentions) are not axiomatized; there are no modal semantics or rules to enforce or check these constraints.

- Temporal logic under-specified: While `TimeIndexedSituation` is referenced, the ontology does not define temporal relations (e.g., Allen relations), persistence conditions, or lifecycle constraints for states/processes, nor rules for when states begin/end or how updates propagate over time.

- Belief revision not defined: The ontology introduces `modifies` and `suppresses` but provides no formal belief/desire/intention revision operators, policies, or constraints (e.g., AGM-style postulates, priority/justification-based revision).

- Plan–goal satisfaction conditions unclear: There is no explicit linkage between a goal’s description and the world state(s) that constitute its satisfaction (e.g., mappings between `dul:Goal` and `bringsAbout` outcomes, precondition/effect semantics, equivalence criteria).

- Action preconditions/effects absent: Plans and actions lack formal preconditions, effects, and context applicability; there is no representation of causal rules needed for planning/execution reasoning or post-hoc verification.

- Handling of uncertainty and probabilities omitted: The model does not support graded beliefs, utilities/preferences, or decision-theoretic constructs (despite decision-tree discussion), leaving desire prioritization and intention selection underspecified.

- Nested/iterated mental attitudes: The current design limits `refersTo` to `WorldState`, with no support for beliefs about beliefs (or desires/intentions), preventing meta-reasoning common in BDI/MAS settings.

- Multi-agent interaction primitives missing: There is no modeling of communication acts (e.g., FIPA ACL), social commitments, joint intentions, shared goals, or mechanisms for resolving conflicting goals/intentions across agents.

- Provenance and justification granularity: `Justification` lacks typed structures, strength/confidence, provenance linkage (e.g., to specific observations/inferences), or counter-argument modeling; integration with PROV-O is only mentioned, not specified.

- Mereology and acyclicity not constrained: `hasPart` is used widely (e.g., for mental states, plans), but part-whole axioms (transitivity, asymmetry, acyclicity) are not declared; similarly, `follows`/`precedes` are called transitive in text but not formally characterized (and acyclicity is not enforced).

- Category mixing in ontology commitments: `MentalProcess ⊑ MentalEntity` while also `⊑ d0:Activity` may blur endurant/perdurant distinctions in DOLCE; the ontological correctness of dual typing is not justified or constrained.

- OWL profile and reasoning guarantees unspecified: The ontology’s OWL profile (EL/QL/RL/DL) and the expected reasoning capabilities/complexity are not stated, leaving interoperability and performance uncertain.

- Alignment coverage with DUL/d0: The choice and implications of using `d0:Eventuality`, `dul:Plan`, `dul:Goal`, etc., are not formally validated (e.g., no competency-mapped alignment, domain/range harmonization, or consistency checks demonstrated).

- CQ validation missing: The competency questions are listed but there is no evidence of SPARQL queries, test datasets, or formal tests showing that the ontology actually answers them.

- Goal vs. desire separation consequences: Modeling `Goal` as a `dul:Description` (not a mental state) is conceptually motivated, but the impact on agent-internal decision processes (e.g., desire-to-goal mapping, goal adoption/abandonment) is not operationalized.

- Plan execution monitoring and failure handling: There is no representation of execution monitoring, plan failure, exception handling, or replanning triggers, despite their centrality to BDI agency.

- Integration with existing BDI languages: Concrete mappings to AgentSpeak/Jason/Jadex constructs (belief bases, plans, events) are not provided, hindering adoption and interoperability with established platforms.

- Scalability and performance: No benchmarks (ontology size, reasoning times, TBox/ABox complexity) are presented; it is unclear how the model performs in realistic MAS or neuro-symbolic settings.

- LAG–LLM coupling specifics absent: The Logic Augmented Generation experiment lacks details on prompt engineering, grounding methods, error modes, evaluation metrics, baselines, ablations, and the reproducibility of MS-LaTTE-based results.

- T2B2T semantics and conflict resolution: The bidirectional mapping between RDF triples and mental states is not specified (e.g., mapping rules, update semantics, conflict resolution, concurrency control in multi-agent contexts).

- Security and privacy concerns: Exposing mental states and justifications on the Web of Data raises privacy/security issues not addressed (e.g., access control, redaction policies, selective disclosure).

- Domain extensibility patterns: While positioned as an ODP, concrete guidance/examples on specializing the pattern for domain-specific agency (e.g., robotics, policy agents) are not provided.

- Normative/deontic aspects missing: Obligations, permissions, norms, and their interaction with intentions/plans are not modeled, limiting applicability to socio-technical environments.

- Evaluation on real-world cases: Beyond the stated experiments, there are no applied case studies showing end-to-end use (from KG perception to plan execution and explanation), limiting empirical validation.

- Tooling and artefacts: Namespaces, persistent URIs, online deployment, and a reference OWL file are not documented in the text; tooling for validation, editing, and reasoning (and their versions) are not reported.
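One of the gaps listed above is that acyclicity of `hasPart` and of `follows`/`precedes` is not enforced. As an illustration of what an external check could look like, the sketch below runs a depth-first search over a list of directed edges. It is a generic graph routine under assumed inputs (pairs of identifiers), not something provided by the ontology or by any OWL reasoner.

```python
def has_cycle(edges: list[tuple[str, str]]) -> bool:
    """Return True if the directed graph given by (source, target) pairs has a cycle."""
    graph: dict[str, list[str]] = {}
    for source, target in edges:
        graph.setdefault(source, []).append(target)
        graph.setdefault(target, [])

    UNSEEN, IN_PROGRESS, DONE = 0, 1, 2
    state = dict.fromkeys(graph, UNSEEN)

    def visit(node: str) -> bool:
        state[node] = IN_PROGRESS
        for nxt in graph[node]:
            if state[nxt] == IN_PROGRESS:      # back edge: a cycle exists
                return True
            if state[nxt] == UNSEEN and visit(nxt):
                return True
        state[node] = DONE
        return False

    return any(state[n] == UNSEEN and visit(n) for n in graph)


# Hypothetical task orderings: (a, b) means "a precedes b".
ordered = [("task1", "task2"), ("task2", "task3")]
broken = ordered + [("task3", "task1")]
print(has_cycle(ordered), has_cycle(broken))
```

The recursive search is adequate for small plans; very deep chains would need an iterative version to stay within Python's recursion limit.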

Glossary

- Agentive causality: The idea that causal relations within an agent’s mind link mental entities via internal processes (e.g., beliefs causing desires, desires leading to intentions). Example: "Such transitions are grounded in an ontological understanding of agentive causality, where one mental entity serves as a contributing factor in the generation of another, mediated through a mental process."

- AgentSpeak: A BDI agent-oriented programming language for specifying agents’ beliefs, desires, and intentions. Example: "Moreira et al.~\cite{moreira2006agent} propose an extension of the BDI agent-oriented programming language AgentSpeak, grounded in Description Logic (DL)."

- BasicPlan ODP: A reusable Ontology Design Pattern for representing plans, goals, and their relationships. Example: "BasicPlan ODP"

- BDI Ontology: A formal ontology capturing beliefs, desires, intentions, and their relations to support interoperable, explainable agent reasoning. Example: "This paper presents a formal BDI Ontology, conceived as a modular Ontology Design Pattern (ODP) that captures the cognitive architecture of agents through beliefs, desires, intentions, and their dynamic interrelations."

- Computational Ontology of Mind (COM): An ontology derived from DOLCE to formally characterize mental categories. Example: "leading to the definition of the Computational Ontology of Mind (COM)."

- Competency questions (CQs): Requirements expressed as questions that an ontology should be able to answer. Example: "These requirements are formalised as {\em competency questions} (CQs)~\cite{Gruninger1995}, a widely adopted approach to represent requirements in various ontology engineering methodologies, including {\em eXtreme Design} (XD)~\cite{Blomqvist2010}, which we adopted in this work."

- Decision Trees (DT): A decision-theoretic model using nodes and probability/payoff functions to evaluate action sequences. Example: "Such belief states can be modelled using Decision Trees (DT)."

- Declarative and procedural intelligence: The distinction between knowledge representations (declarative) and the processes that act on them (procedural). Example: "a conceptual and operational bridge between declarative and procedural intelligence"

- Description Logic (DL): A family of logic-based knowledge representation formalisms supporting reasoning over concepts and roles. Example: "grounded in Description Logic (DL)."

- Diachronic reasoning: Reasoning about how entities or states evolve over time. Example: "This allows for diachronic reasoning such as querying which mental states were active at a given moment, or how an agent’s intention evolved in response to new beliefs."

- dMARS (Distributed Multi-Agent Reasoning System): A successor system to PRS that extends BDI architecture for agent reasoning. Example: "dMARS"

- DOLCE (Descriptive Ontology for Linguistic and Cognitive Engineering): A foundational ontology used to ground conceptual modeling of cognitive and linguistic entities. Example: "DOLCE (Descriptive Ontology for Linguistic and Cognitive Engineering)~\cite{masolo2002wonderweb}"

- DOLCE Ultra Lite: A lightweight variant of DOLCE used for aligning concepts like plans and executions. Example: "thereby aligning it with the DOLCE Ultra Lite foundational ontology"

- DOLCE-0: A supplementary ontology generalizing DOLCE+DnS Ultralite, providing abstractions like Eventuality. Example: "DOLCE-0 is a supplementary ontology used as a generalisation of DOLCE+DnS Ultralite."

- Endurants: Entities that persist wholly through time (e.g., stable mental states as modeled situations). Example: "These states are considered cognitive attributes of agents and are typically reified as entities that persist over time, i.e. endurants."

- eXtreme Design (XD): An agile methodology emphasizing reuse of Ontology Design Patterns via iterations guided by competency questions. Example: "The eXtreme Design (XD) methodology is an agile, iterative approach to ontology engineering that emphasises the reuse and composition of Ontology Design Patterns"

- EventCore pattern: An ODP for modeling events that occur in time with participants. Example: "the EventCore pattern"

- FIPA (Foundation for Intelligent Physical Agents): An international consortium specifying standards for agent interoperability. Example: "the Foundation for Intelligent Physical Agents (FIPA)"

- Foundational ontologies: High-level ontologies providing general categories (e.g., events, objects) for aligning domain models. Example: "by aligning with foundational ontologies and best practices in modular design."

- Graffoo: A graphical notation for representing ontologies. Example: "Figure~\ref{fig:schema_bdi} shows the Graffoo~\cite{falco2014modelling} diagram of the BDI ontology."

- JACK: A BDI-based Java framework for building multi-agent systems. Example: "JACK"

- JADE: A Java-based framework compliant with FIPA standards for developing multi-agent systems. Example: "JADE"

- JADEX: A BDI agent framework supporting goals and plans within Java. Example: "JADEX \cite{10.1007/3-7643-7348-2_7}"

- JaCaMo: A multi-agent programming platform integrating Jason, CArtAgO, and Moise. Example: "JaCaMo \cite{BOISSIER2013747}"

- JASDL: An extension to the Jason agent platform integrating OWL-based ontological reasoning. Example: "introduce JASDL, an extension of the Jason agent platform that integrates ontological reasoning through the use of the OWL API."

- Knowledge graphs: Graph-structured representations of entities and relations enabling semantic integration and reasoning. Example: "maintaining semantic alignment with shared knowledge graphs."

- LLMs: Large-scale neural models trained on text used for generation and reasoning tasks. Example: "coupling the ontology with LLMs"

- Logic Augmented Generation (LAG): A technique integrating formal logic with LLM generation to improve coherence and consistency. Example: "via Logic Augmented Generation (LAG) to assess the contribution of ontological grounding to inferential coherence and consistency"

- Meronymic relations: Part–whole relationships used to model compositional structures. Example: "meronymic relations, i.e. part–whole structures, among mental entities."

- MS-LaTTE dataset: A dataset used to evaluate logical consistency and reasoning capabilities. Example: "Through the use of the MS-LaTTE dataset~\cite{jauhar2022ms}"

- Multi-Agent Systems (MAS): Systems composed of multiple interacting agents, possibly heterogeneous and distributed. Example: "across Multi-Agent Systems (MAS)"

- Neuro-symbolic systems: AI systems combining statistical (neural) and symbolic reasoning methods. Example: "neuro-symbolic systems operating within the Web of Data."

- Nuin: An open-source platform combining BDI agents with Semantic Web techniques. Example: "the Nuin agent platform"

- Ontological grounding: The practice of aligning models with formal ontologies to ensure semantic precision. Example: "assess whether ontological grounding enhances the model’s capability to detect logical inconsistencies"

- Ontology Design Pattern (ODP): A reusable modeling solution capturing best-practice structures for recurring ontology problems. Example: "Ontology Design Pattern (ODP)"

- Ontology Engineering: The systematic process of designing, implementing, and maintaining ontologies. Example: "Ontology Engineering"

- OWL (Web Ontology Language): A Semantic Web language for defining and instantiating ontologies. Example: "ontology languages such as OWL (Ontology Web Language)."

- OWL API: A Java API for manipulating OWL ontologies programmatically. Example: "through the use of the OWL API."

- Perdurants: Temporally extended entities like events and processes. Example: "perdurants, enabling explicit reasoning about agent dynamics and transitions between states."

- pluggable component: A modular part of a system that can be swapped or integrated without changing the core architecture. Example: "making the underlying reasoning technology a pluggable component."

- Procedural Reasoning System (PRS): An early implemented BDI-based agent reasoning system. Example: "the Procedural Reasoning System (PRS) \cite{georgeff, ingrand}, one of the earliest implemented agent-oriented systems based on the BDI architecture."

- Prolog-style reasoning: Logic programming-based inference, characteristic of Prolog systems. Example: "Semas, a Prolog-style reasoning platform"

- Provenance ODP: An ODP for modeling sources, derivations, and justifications of information. Example: "The Provenance ODP"

- RDF triples: Subject–predicate–object statements forming the basic data structure of the Semantic Web. Example: "enabling a bidirectional flow between RDF triples and agent mental states."

- Semas: A reasoning platform integrating BDI concepts with Semantic Web data via T2B2T. Example: "Semas, a Prolog-style reasoning platform that implements the novel Triples-to-Beliefs-to-Triples (T2B2T) paradigm."

- Semantic Web standards: W3C-endorsed formats and protocols (e.g., RDF, OWL) enabling interoperable semantic data. Example: "ensuring compliance with Semantic Web standards"

- Sequence pattern: An ODP for modeling ordered sequences of tasks or actions. Example: "Sequence pattern"

- Situation pattern: An ODP for encapsulating configurations of entities holding at a given time. Example: "Situation pattern"

- Subsumption relation: A taxonomic relationship where one concept is a specialization of another. Example: "but on the subsumption relation between concepts"

- Telicity: The property of events having an inherent endpoint or goal (telos). Example: "Ontologically, this reflects {\em telicity}, a notion rooted in Aristotelian philosophy"

- Telos: The goal or endpoint that characterizes a process or plan. Example: "a specific telos"

- TimeIndexedSituation ODP: An ODP to model situations indexed by temporal intervals or instants. Example: "The TimeIndexedSituation ODP (https://odpa.github.io/patterns-repository/TimeIndexedSituation/TimeIndexedSituation) has been selected to model the requirements associated with temporal reasoning."

- Triples-to-Beliefs-to-Triples (T2B2T): A paradigm enabling bidirectional mapping between RDF data and agents’ mental states. Example: "which implements the Triples-to-Beliefs-to-Triples (T2B2T) paradigm"

- Unification: A logic programming operation matching terms via variable substitution. Example: "as it is not based solely on unification but on the subsumption relation between concepts"

- Web of Data: The network of interlinked datasets on the web using Semantic Web standards. Example: "operating within the Web of Data."
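The glossary entries for unification and subsumption describe two different matching mechanisms, and a small sketch may help tell them apart. The code below is a textbook-style illustration under stated assumptions: terms are tuples of strings, names starting with an uppercase letter are variables (the Prolog convention), there is no occurs check, and the concept hierarchy is invented. None of it is taken from Semas or from the paper.

```python
def is_var(term) -> bool:
    """Strings starting with an uppercase letter are treated as variables."""
    return isinstance(term, str) and term[:1].isupper()


def walk(term, subst: dict):
    """Resolve a variable through the substitution until it is unbound or a value."""
    while is_var(term) and term in subst:
        term = subst[term]
    return term


def unify(a, b, subst=None):
    """Return a substitution making a and b equal, or None (no occurs check)."""
    subst = {} if subst is None else dict(subst)
    a, b = walk(a, subst), walk(b, subst)
    if a == b:
        return subst
    if is_var(a):
        subst[a] = b
        return subst
    if is_var(b):
        subst[b] = a
        return subst
    if isinstance(a, tuple) and isinstance(b, tuple) and len(a) == len(b):
        for x, y in zip(a, b):
            subst = unify(x, y, subst)
            if subst is None:
                return None
        return subst
    return None


def is_subsumed_by(concept: str, ancestor: str, parent_of: dict[str, str]) -> bool:
    """Subsumption: climb the (hypothetical) concept hierarchy looking for ancestor."""
    while concept != ancestor and concept in parent_of:
        concept = parent_of[concept]
    return concept == ancestor


print(unify(("knows", "alice", "X"), ("knows", "Y", "bob")))
print(is_subsumed_by("robot", "thing", {"robot": "agent", "agent": "thing"}))
```

Unification succeeds only when the terms can be made syntactically identical, whereas the subsumption check succeeds whenever one concept is a specialization of the other, even though the names differ.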
