- The paper introduces a neuro-symbolic framework that calibrates perceptual uncertainty to improve symbolic planning for robotic manipulation.

- It employs a hybrid Transformer-GNN architecture with adaptive thresholding to reliably infer spatial relations from continuous visual inputs.

- Empirical evaluations demonstrate enhanced task success (up to 90.7%), increased computational efficiency, and superior plan quality versus POMDP methods.

Neuro-Symbolic Reasoning Under Perceptual Uncertainty: Bridging Continuous Perception and Discrete Symbolic Planning

Introduction

The paper "A Neuro-Symbolic Framework for Reasoning under Perceptual Uncertainty: Bridging Continuous Perception and Discrete Symbolic Planning" (2511.14533) addresses a core issue in AI and robotics: establishing a reliable interface between high-dimensional, uncertain perceptual input and discrete, symbolic planning required for task-level decision-making. The work critiques prior approaches—deterministic neuro-symbolic methods and standard POMDP-based planners—for either neglecting uncertainty in symbolic representations or handling it at an inappropriate abstraction level, causing inefficiencies and limiting interpretability. The proposed framework explicitly calibrates and propagates perceptual uncertainty at the symbolic level, underpinned by formal probabilistic modeling, and empirically validates its effectiveness for robotic manipulation and beyond.

Neuro-Symbolic Pipeline Overview

The core system comprises a neural-symbolic translator (a hybrid Transformer-GNN architecture) that extracts object-centric features from visual sensory input and infers probabilistic symbolic states, combined with a symbolic planner augmented for uncertainty handling and active information gathering.

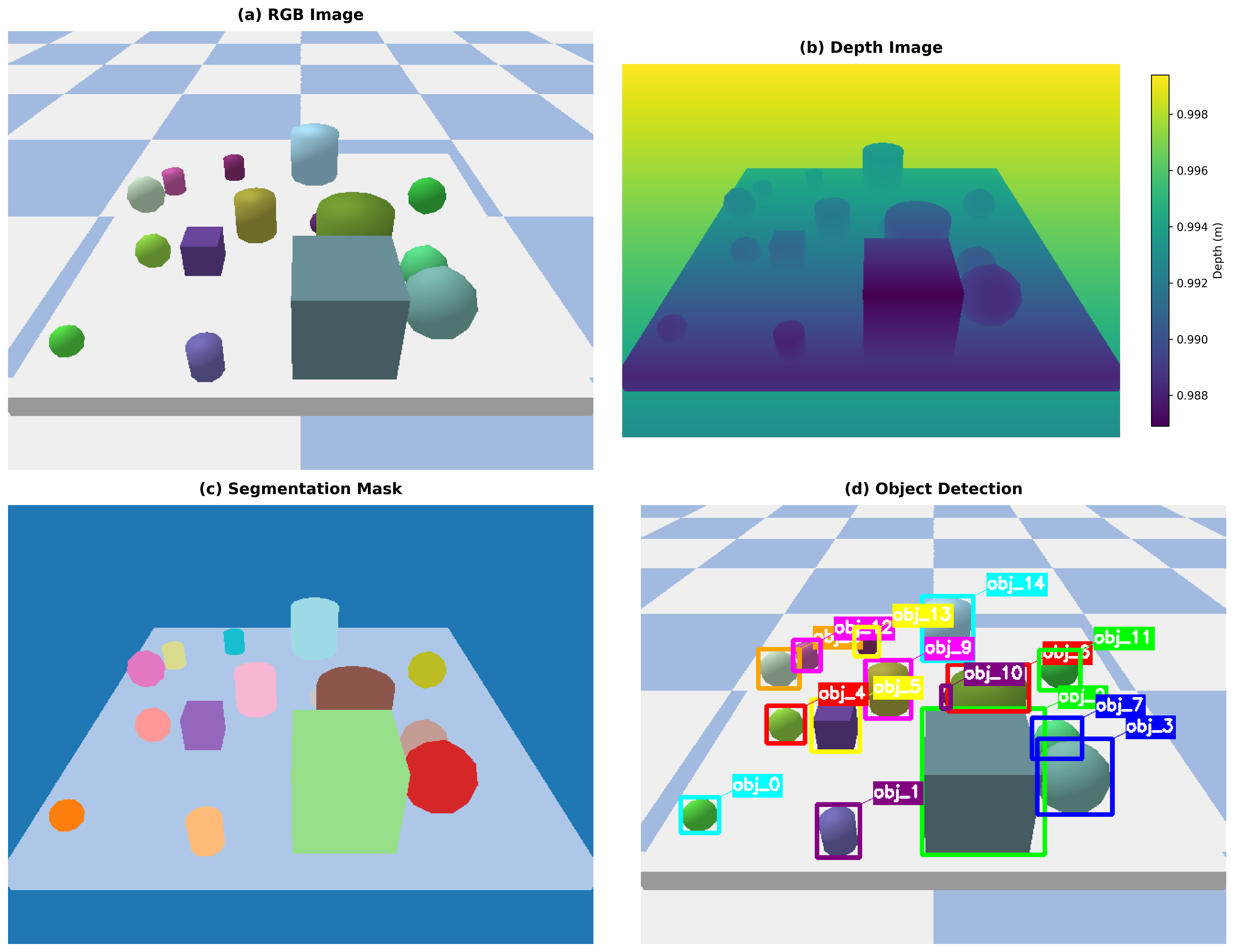

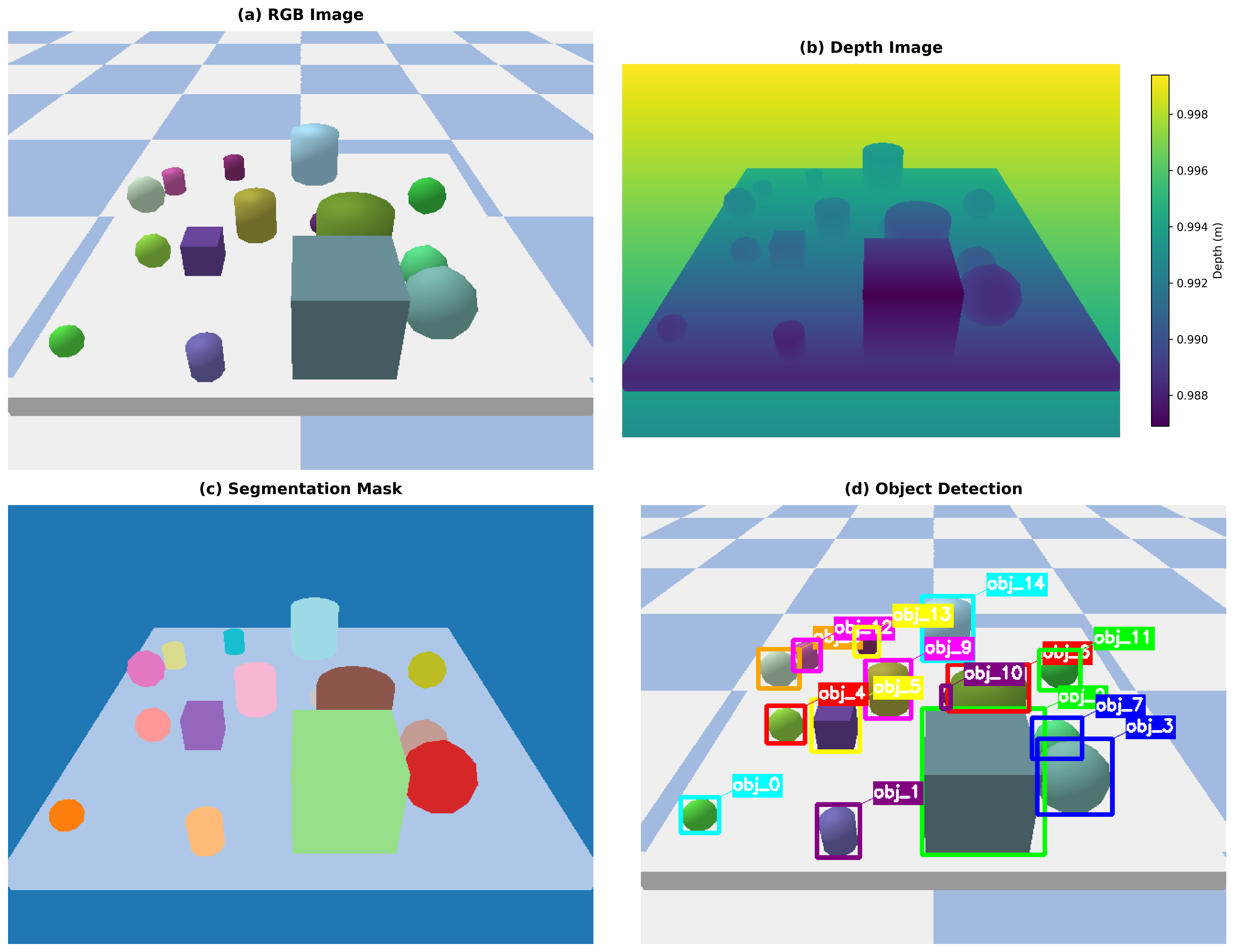

Figure 1: Complete neuro-symbolic task planning pipeline, from raw perception (RGB, depth, segmentation) to symbolic state extraction and uncertainty-aware plan generation.

Each component functions as follows:

- Perception and Translation: Visual observations (RGB, depth, segmentation) are processed to produce object detections and segmentation masks, with geometric and attention mechanisms supporting robust identification even under occlusion.

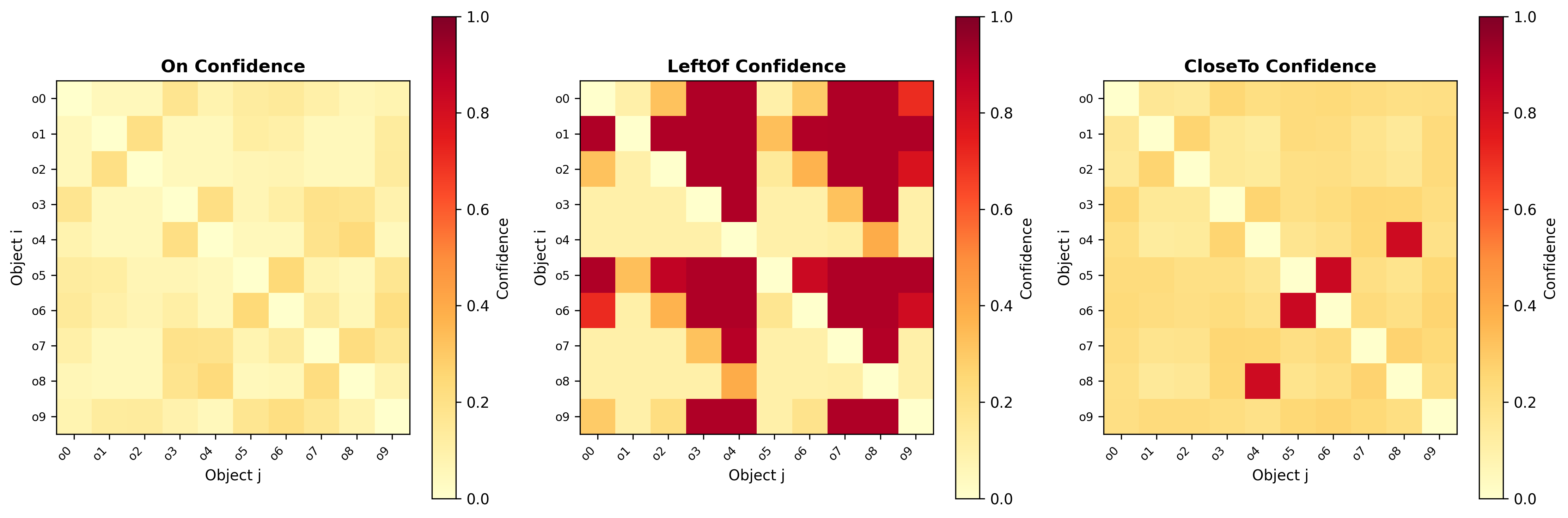

- Probabilistic Symbolic State Construction: Relations such as On, LeftOf, CloseTo, and Clear are inferred for all object pairs; each relation assignment is endowed with a calibrated confidence value, forming a probabilistic symbolic state.

- Uncertainty-Aware Planning Loop: The planner uses only symbolic relations whose confidence exceeds a tuned threshold when generating an action plan (pick/place/information-gathering). When critical symbolic uncertainty remains, the system triggers targeted sensory actions (e.g., look_closer).
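
The thresholding and information-gathering behavior described above can be illustrated with a minimal sketch. The state layout (relation mapped to a truth value and a confidence), the `Relation` class, the `next_step` function, and the "sense the least confident relation first" policy are all hypothetical choices made for illustration; only the idea of a confidence threshold and the `look_closer` action come from the summary.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Relation:
    """A grounded symbolic relation such as On(a, b) or Clear(a)."""
    name: str
    args: Tuple[str, ...]


# Hypothetical state layout: relation -> (predicted truth value, calibrated confidence).
ProbState = Dict[Relation, Tuple[bool, float]]


def trusted_facts(state: ProbState, threshold: float) -> Dict[Relation, bool]:
    """Keep only relations whose confidence exceeds the threshold."""
    return {rel: value for rel, (value, conf) in state.items() if conf > threshold}


def next_step(state: ProbState, critical: List[Relation], threshold: float):
    """Return a sensing action if a critical relation is unresolved, else the facts to plan with."""
    facts = trusted_facts(state, threshold)
    unresolved = [rel for rel in critical if rel not in facts]
    if unresolved:
        # Sense the least confident critical relation first (an illustrative policy).
        target = min(unresolved, key=lambda rel: state.get(rel, (False, 0.0))[1])
        return ("look_closer", target)
    return ("plan", facts)


on_ab = Relation("On", ("a", "b"))
clear_a = Relation("Clear", ("a",))
state = {on_ab: (True, 0.95), clear_a: (True, 0.55)}

first = next_step(state, critical=[clear_a], threshold=0.8)
state[clear_a] = (True, 0.90)  # pretend look_closer sharpened the estimate
second = next_step(state, critical=[clear_a], threshold=0.8)
```

In this toy run the first call requests `look_closer` because `Clear(a)` sits below the threshold; once its confidence rises, the second call hands both facts to the planner.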

Neuro-Symbolic Translator: Architecture and Learning

The translator uses a ResNet-18 backbone for feature extraction, augmented by multi-head self-attention for set-based object representation and a GNN for message passing over object graphs. This hybrid design combines the global context provided by Transformers with the structured, geometric relational reasoning of GNNs.
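
To make the two reasoning stages concrete, here is a toy sketch in plain Python. It is not the paper's architecture: the ResNet-18 backbone is omitted (per-object feature vectors are assumed to be given), attention uses a single head with identity projections instead of learned multi-head weights, and the GNN is reduced to one round of mean-aggregation message passing. The function names are hypothetical.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(feats):
    """Single-head scaled dot-product attention with identity projections."""
    d = len(feats[0])
    out = []
    for q in feats:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in feats]
        weights = softmax(scores)
        # Each output row is a weighted average of all object features.
        out.append([sum(w * v[j] for w, v in zip(weights, feats)) for j in range(d)])
    return out


def message_pass(feats, edges):
    """One message-passing round: each node adds the mean of its senders' features."""
    out = []
    for i, h in enumerate(feats):
        incoming = [feats[s] for s, r in edges if r == i]
        if not incoming:
            out.append(list(h))
            continue
        mean = [sum(col) / len(incoming) for col in zip(*incoming)]
        out.append([a + b for a, b in zip(h, mean)])
    return out


# Two objects with 2-d features and a fully connected object graph.
feats = [[1.0, 0.0], [0.0, 1.0]]
edges = [(0, 1), (1, 0)]  # (sender, receiver)

attended = self_attention(feats)          # global context across the object set
updated = message_pass(attended, edges)   # structured exchange along graph edges
```

Attention mixes information across the whole object set regardless of structure, while message passing only moves information along explicit edges; that contrast is the point of the hybrid design.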

Key technical elements:

Handling Uncertainty: Theory and Practice

Uncertainty is quantified and managed via:

Empirical Evaluation

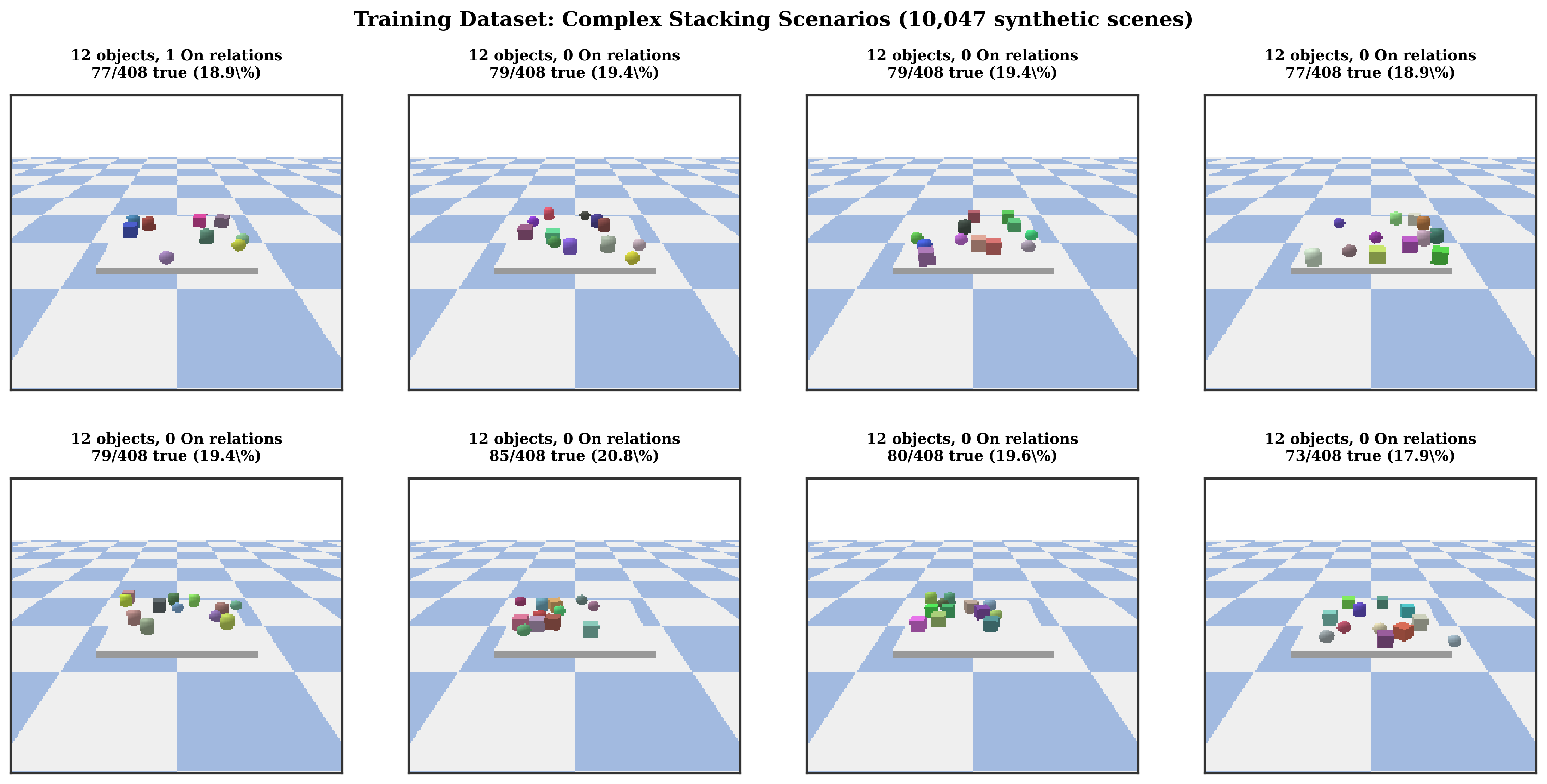

Experiments target tabletop manipulation with UR5 and Franka robots in PyBullet, testing on three benchmarks—Simple Stack, Deep Stack, and Clear+Stack—plus transfer to YCB-Video scenes. The framework is rigorously compared against POMDP solvers (DESPOT, POMCP), neural and neuro-symbolic baselines (PrediNet, LTN, NS-CL, VQA), as well as pure end-to-end RL agents.

Key findings:

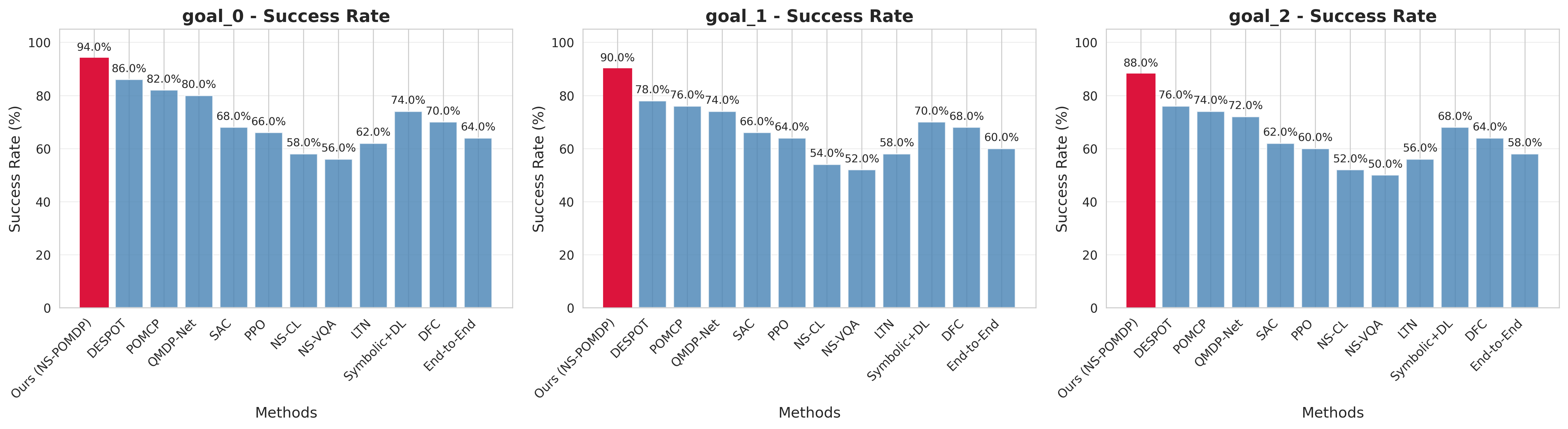

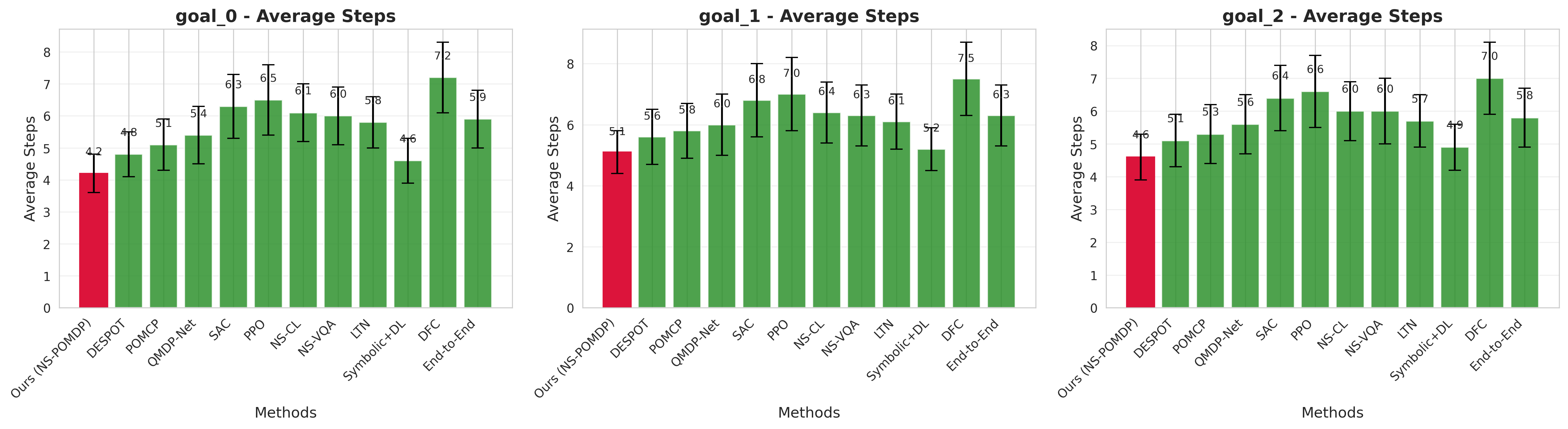

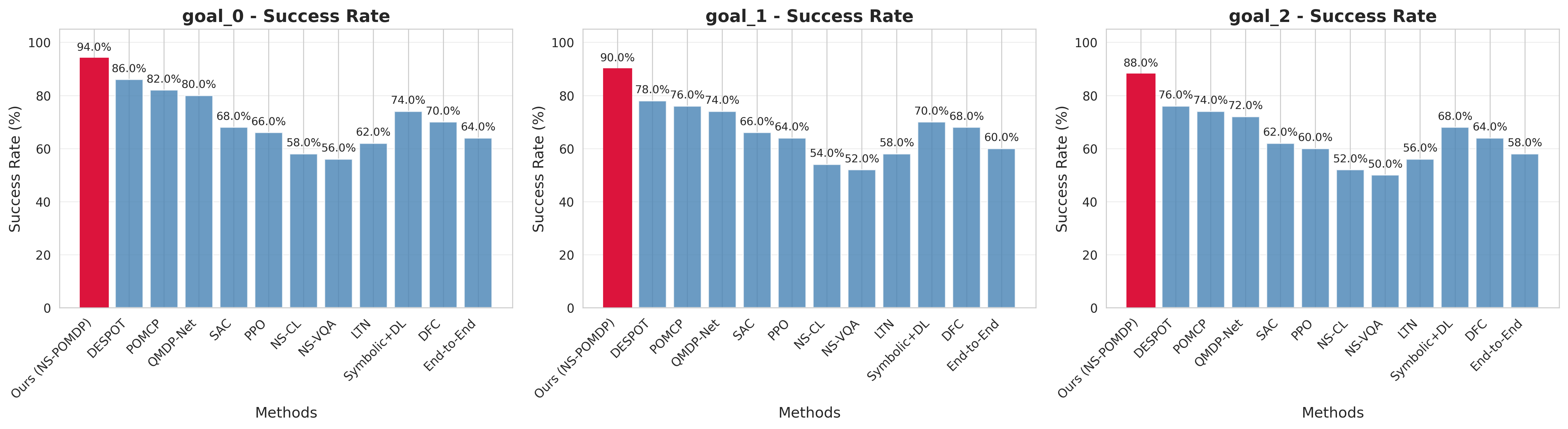

- Task Success: Achieves 94%/90%/88% (average 90.7%) success on the main benchmarks, exceeding the best POMDP baseline by 10–14 percentage points.

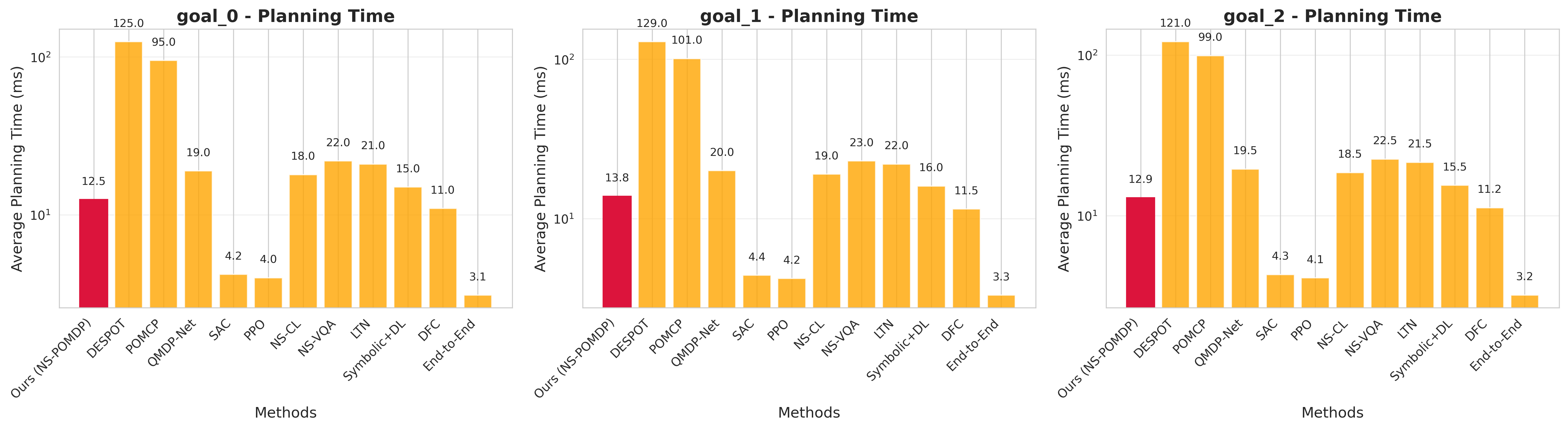

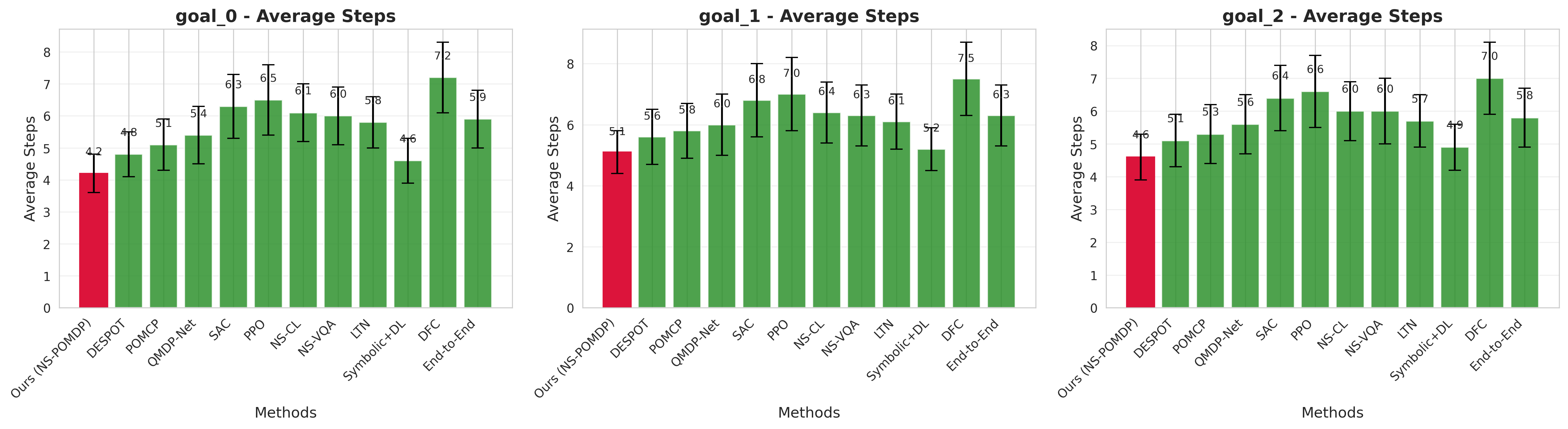

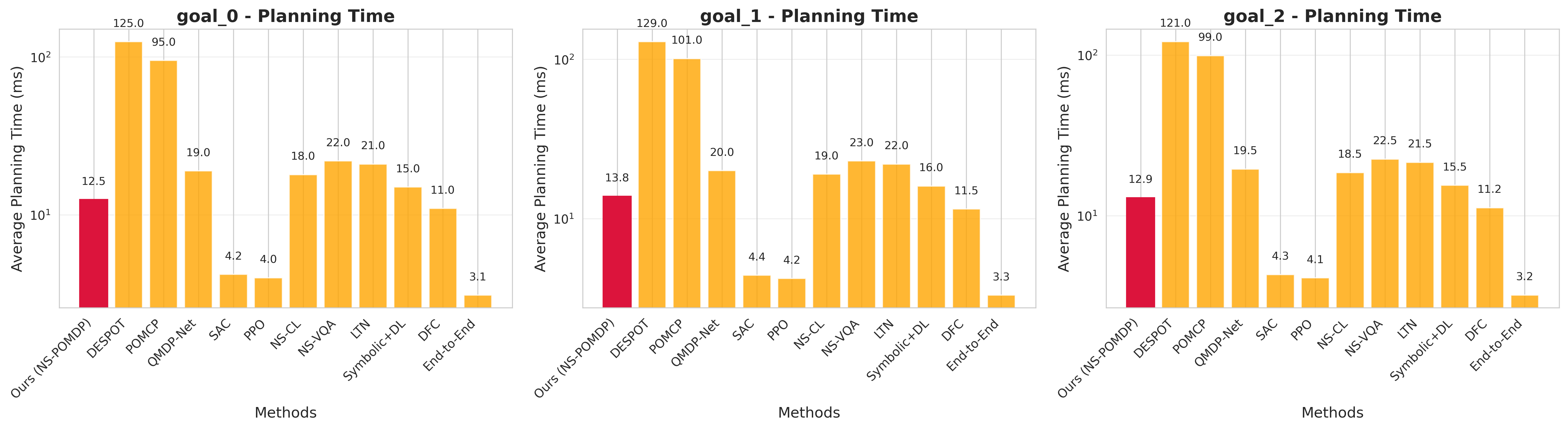

- Sample and Computational Efficiency: Planning times stay within 10–15 ms per episode, more than an order of magnitude faster than POMDP solvers operating on raw observations.

- Plan Quality: Consistently shorter plans and fewer unnecessary information-gathering actions due to calibrated beliefs.

- F1 Scores: Relation prediction with overall F1 = 0.68 (Clear: 0.75, LeftOf: 0.68, On: 0.52), robust across small and large object counts.

Figure 4: Success rate comparison across all three benchmarks—neuro-symbolic framework statistically dominates all baselines.

Figure 5: The neuro-symbolic planner achieves higher success and shorter plans, due to uncertainty-driven information gathering.

Figure 6: Planning time per episode—framework provides an order-of-magnitude efficiency gain over POMDP-based methods.

The ablation study underscores the importance of each module: without information gathering, success drops by up to 15%; without adaptive thresholding, F1 plummets by 143%; dropping the GNN or using fixed thresholds individually yields substantial accuracy degradation.
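
The summary does not say how the adaptive thresholds are computed. One common approach, shown here purely as an assumption, is to pick a separate decision threshold per relation type by maximizing F1 on held-out validation data. The function names, candidate grid, and toy scores below are all hypothetical.

```python
def f1_score(preds, labels):
    """F1 for binary predictions; returns 0.0 when there are no true positives."""
    tp = sum(1 for p, y in zip(preds, labels) if p and y)
    fp = sum(1 for p, y in zip(preds, labels) if p and not y)
    fn = sum(1 for p, y in zip(preds, labels) if not p and y)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def best_threshold(scores, labels, candidates):
    """Return the candidate threshold with the highest F1 (first one wins on ties)."""
    return max(candidates, key=lambda t: f1_score([s >= t for s in scores], labels))


def adaptive_thresholds(val_data, candidates):
    """Choose one threshold per relation type from validation scores and labels."""
    return {
        rel: best_threshold(scores, labels, candidates)
        for rel, (scores, labels) in val_data.items()
    }


# Toy validation data: the On scores are systematically lower than the Clear scores.
val_data = {
    "On": ([0.2, 0.3, 0.35, 0.6], [False, True, True, True]),
    "Clear": ([0.4, 0.6, 0.8, 0.9], [False, False, True, True]),
}
candidates = [0.3, 0.5, 0.7]

thresholds = adaptive_thresholds(val_data, candidates)
fixed_f1_on = f1_score([s >= 0.5 for s in val_data["On"][0]], val_data["On"][1])
```

In this toy data a single fixed threshold of 0.5 misses two of the three true On relations, while the per-relation choice recovers them, which is the kind of gap a fixed-versus-adaptive ablation measures.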

Theoretical Contributions and Guarantees

The most salient theoretical advances include:

- Calibration-Convergence Link: Quantitative connection between uncertainty calibration (ECE) and planning convergence; guarantees hold only when predicted confidences are calibrated.

- Dependency-Aware Uncertainty (MRF): Uncertainty propagation and planning are made tighter via MRF-based modeling of logical dependencies, outperforming naïve independence assumptions.

- Analytical Threshold Selection: Optimal planning threshold is analytically determined based on task-specific empirical tradeoffs, yielding provably efficient and robust threshold settings.
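
For reference, the calibration metric named above, expected calibration error (ECE), is conventionally computed by binning predictions by confidence and averaging the gap between accuracy and mean confidence in each bin. The sketch below follows that standard definition with equal-width bins; the bin count and function name are choices made here, not details taken from the paper.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE with equal-width bins: weighted mean of |accuracy - confidence| per bin."""
    n = len(confidences)
    if n == 0:
        return 0.0
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - avg_conf)
    return ece


# Ten predictions at 0.9 confidence: calibrated if nine are right, overconfident if five are.
calibrated = expected_calibration_error([0.9] * 10, [True] * 9 + [False])
overconfident = expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5)
```

A low ECE means a relation reported at 0.9 confidence is right about 90% of the time, which is the property the planner's threshold relies on.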

The theoretical results are validated empirically: the uncertainty reduction per information-gathering step matches theoretical predictions (α = 0.287 ± 0.043, R² = 0.912), and convergence in action steps differs from the predicted bounds by under 17%.
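
A small worked example shows why modeling dependencies with an MRF can differ from assuming independence. The summary does not give the paper's MRF structure, so the setup here is an illustrative assumption: two relations, `On(a,b)` and `Clear(b)`, each predicted true with probability 0.6, plus a hard blocks-world constraint that they cannot both hold. The joint is computed by brute-force enumeration, which is only practical for a handful of variables.

```python
from itertools import product


def mrf_joint(unary, pairwise):
    """Normalized joint over binary variables from unary beliefs and pairwise potentials."""
    names = list(unary)
    weights = {}
    for values in product([False, True], repeat=len(names)):
        assign = dict(zip(names, values))
        w = 1.0
        for name, p_true in unary.items():
            w *= p_true if assign[name] else 1.0 - p_true
        for (a, b), psi in pairwise.items():
            w *= psi(assign[a], assign[b])
        weights[values] = w
    z = sum(weights.values())  # partition function
    return names, {values: w / z for values, w in weights.items()}


def marginal(names, joint, name):
    """Probability that the named variable is true under the joint."""
    i = names.index(name)
    return sum(p for values, p in joint.items() if values[i])


unary = {"On(a,b)": 0.6, "Clear(b)": 0.6}
# Hypothetical hard constraint: if a is on b, then b is not clear.
exclusive = {("On(a,b)", "Clear(b)"): lambda on, clear: 0.0 if (on and clear) else 1.0}

names, joint = mrf_joint(unary, exclusive)
p_on = marginal(names, joint, "On(a,b)")
p_both_mrf = joint[(True, True)]
p_both_independent = unary["On(a,b)"] * unary["Clear(b)"]
```

Under independence the contradictory state "a is on b and b is clear" receives probability 0.36; with the constraint it receives zero, and the marginal for each relation shifts from 0.6 to 0.375.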

Implications and Future Directions

Practical Implications

- Interpretability and Modularity: The symbolic abstraction layer provides interpretable task plans, facilitates diagnostic inspection, and enables integration of domain knowledge.

- Robustness and Efficiency: Explicit, data-driven quantification of perceptual uncertainty enables more robust and efficient planning under partial observability and ambiguous input, with strong numerical results indicating practical viability for complex manipulation.

- Information Gathering as Endogenous Planning: The planner's ability to trigger information-gathering actions conditionally, rather than as a fixed policy, is critical for balancing reliability and efficiency.

Theoretical and Generalization Implications

- The modeling approach is domain-agnostic—applicable to any task where continuous input must be converted into symbolic knowledge under uncertainty (e.g., scene understanding, autonomous navigation).

- The explicit calibration-convergence theorem formalizes a foundation for analyzing integration of neural and symbolic components in hybrid AI systems, extending to potentially more complex tasks such as multi-agent environments or real-world robotic deployments.

Future Research Directions

- Sim-to-Real Transfer: Addressing the reality gap in calibration and geometric modeling with techniques such as domain adaptation and calibration transfer.

- Multi-Modal and Multi-Task Extensions: Extending the translator and planning framework to handle richer sensory input, temporal relations, and dynamic environments.

- Improved Geometric Reasoning: Further advancing relation inference (especially On relations) via multi-view perception, 3D structure learning, or more sophisticated GNN architectures.

- Active Learning for Perception: Integrating active learning objectives to optimize dataset generation in uncertain perceptual regimes.

Conclusion

This work rigorously advances the integration of deep perceptual modeling and symbolic planning under uncertainty, delivering both principled theoretical foundations and strong practical gains in robotic manipulation tasks. The framework quantifies and exploits perceptual uncertainty at the symbolic level, efficiently bridging the gap between stochastic continuous observation and discrete, logic-driven task execution. Both the architecture and analysis generalize to broader AI domains, laying a foundation for scalable, robust neuro-symbolic systems.