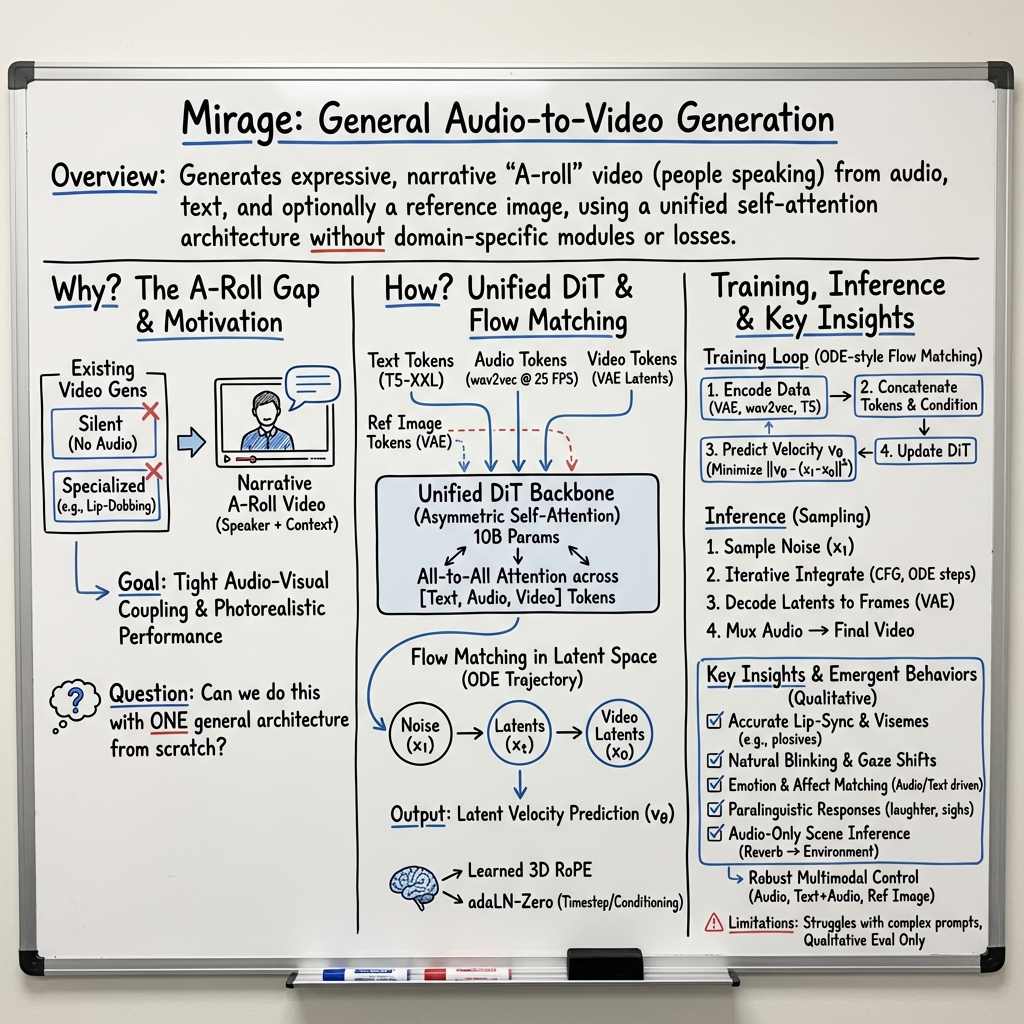

- The paper presents Mirage, a model that generates realistic A-roll videos using a 10-billion-parameter Diffusion Transformer with unified self-attention.

- It utilizes a homogeneous architecture with learned rotary position embeddings and a warm-up training regime to seamlessly integrate audio, text, and video inputs.

- Experimental results show high synchrony in lip movement and facial expressions, robust handling of varied inputs, and effective audiovisual alignment.

Overview

The paper "Seeing Voices: Generating A-Roll Video from Audio with Mirage" (2506.08279) introduces Mirage, a foundation model that generates A-roll video from audio input alone. A-roll is the main footage in which the primary subject speaks on camera, common in films and videos where narrative delivery is critical. Combined with existing speech synthesis methods, Mirage produces realistic multimodal video. Because it implements a unified, self-attention-based architecture that avoids domain-specific assumptions, Mirage achieves superior subjective quality while remaining a versatile approach for a range of audio-to-video generation tasks.

Technical Contributions

The key technical advance in Mirage is its Diffusion Transformer (DiT) architecture, which uses asymmetric self-attention to handle information from the audio and video modalities jointly. This setup makes it easy to incorporate additional signals such as text and images, which extends the model's flexibility. By combining self-attention layers with a straightforward warm-up and stitching training methodology, the system produces realistic outputs without audio-specific architectures or components specialized for speech or image capture. Mirage’s approach also supports both training from scratch and fine-tuning from an existing silent video model, so audio conditioning can be added to a pretrained model without sacrificing output quality.

Methodology

Architecture:

Mirage employs a 10-billion-parameter DiT with 48 Transformer blocks that perform joint self-attention over audio, text, and video tokens. Because tokens are concatenated rather than routed through cross-attention or separate processing layers, Mirage keeps a homogeneous structure in which modalities can be added or removed easily. This flexibility relies on learned Rotary Position Embeddings applied to three dimensions and on a scaling and bias calculation strategy for attention across [text, audio, video] tokens.
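The token-concatenation idea can be illustrated with a minimal single-head sketch in plain Python. Everything here is an assumption for illustration: the token counts, the embedding size, the helper names, and the single shared set of projection weights (a simplification that leaves out position embeddings and any modality-specific layers). It is not the paper's implementation.

```python
import math
import random

def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(matrix, vec):
    """Multiply a (d_out x d_in) matrix by a length-d_in vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def joint_self_attention(tokens, w_q, w_k, w_v):
    """Single-head self-attention over one concatenated token sequence."""
    d = len(w_q)
    q = [matvec(w_q, t) for t in tokens]
    k = [matvec(w_k, t) for t in tokens]
    v = [matvec(w_v, t) for t in tokens]
    outputs, weights = [], []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        attn = softmax(scores)
        weights.append(attn)
        outputs.append([sum(a * vj[c] for a, vj in zip(attn, v)) for c in range(d)])
    return outputs, weights

def random_matrix(rng, rows, cols):
    """Random Gaussian matrix, used both for stand-in tokens and weights."""
    return [[rng.gauss(0.0, 1.0) / math.sqrt(cols) for _ in range(cols)]
            for _ in range(rows)]

rng = random.Random(0)
dim = 8  # hypothetical embedding size

# Hypothetical token counts per modality (rows are tokens).
text = random_matrix(rng, 3, dim)
audio = random_matrix(rng, 5, dim)
video = random_matrix(rng, 6, dim)
w_q, w_k, w_v = (random_matrix(rng, dim, dim) for _ in range(3))

# Concatenate along the sequence axis: every token attends to every modality.
sequence = text + audio + video
out, weights = joint_self_attention(sequence, w_q, w_k, w_v)

# Removing a modality is just leaving its tokens out; the layer is unchanged.
out_av, weights_av = joint_self_attention(audio + video, w_q, w_k, w_v)
```

Because the attention layer only ever sees one flat sequence, no code path changes when text is dropped: the same weights process the shorter audio-plus-video sequence.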

Training Regime:

Training uses latent Flow Matching, flowing from unit Gaussian noise to the latent data distribution across spatiotemporal dimensions. A linear-quadratic schedule is used to sample steps during training, and optimization uses AdamW. For reference image conditioning, Mirage simply concatenates the reference image tokens along the sequence dimension. This keeps the training setup simple and uniform across modalities while retaining expressive output quality.
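As a rough illustration of the flow matching objective, the sketch below builds one training pair using a straight-line path between noise and data. The convention (t = 0 is noise, t = 1 is data), the function names, the latent size, and the uniform sampling of t are all assumptions; the summary does not spell out the paper's exact formulation, and the linear-quadratic schedule is not modeled here.

```python
import random

def flow_matching_pair(data_latent, noise, t):
    """Return the interpolated latent x_t and the velocity target.

    Assumed convention: t = 0 is pure noise, t = 1 is the data latent.
    """
    x_t = [(1.0 - t) * n + t * x for n, x in zip(noise, data_latent)]
    target_velocity = [x - n for n, x in zip(noise, data_latent)]
    return x_t, target_velocity

def mse(predicted, target):
    """Mean squared error between predicted and target velocities."""
    return sum((p - q) ** 2 for p, q in zip(predicted, target)) / len(target)

rng = random.Random(0)
size = 16  # hypothetical flattened latent size
data_latent = [rng.uniform(-1.0, 1.0) for _ in range(size)]  # stand-in for an encoded clip
noise = [rng.gauss(0.0, 1.0) for _ in range(size)]           # unit Gaussian noise

t = rng.random()  # uniform sampling of t, used here only for simplicity
x_t, target_velocity = flow_matching_pair(data_latent, noise, t)

# A trained network would predict the velocity from (x_t, t, conditioning).
# A zero predictor stands in here so the loss is computable without a model.
loss = mse([0.0] * size, target_velocity)

# Sanity check: Euler-integrating the exact velocity from noise reaches the data.
steps = 10
x = list(noise)
for _ in range(steps):
    x = [xi + vi / steps for xi, vi in zip(x, target_velocity)]
```

With a straight-line path the target velocity is constant in t, which is why the final loop recovers the data latent after any number of Euler steps.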

Data Processing:

The curated dataset for Mirage's training comprises 720p videos with corresponding audio clips, meticulously processed with scene segmentation and filtering designed to prioritize high-quality narrative-relevant content. By leveraging complex data processing flows, the authors developed a resource-efficient system that maintained dataset quality, preserving expressivity and validity across various input and scene types.

Results

The model evaluation emphasizes several qualitative metrics: adherence to prompt details, synchronization of facial details with audio, fluidity in subject body motion, and nuanced emotional expressivity. Significant focus is given to evaluating plosive phoneme articulation, naturalistic eye behaviors, and gesture-aligned semantics within generated clips. Mirage demonstrates pronounced strengths in maintaining high precision in facial and body expressiveness synchronized with audio, thereby providing engaging and convincing performances.

Mirage achieves high consistency in scenarios of audio-only conditioning, successfully inferring speaker attributes or environmental contexts from acoustic properties alone. Even under conditions of mismatched text and audio inputs, the model tends to visually align the video with vocal characteristics, displaying robustness and adaptability, although greater semantic alignment yields better visuals.

Conclusion

Mirage represents a step forward in audio-to-video generation, maintaining generality while producing high-quality results. The model's ability to generate convincing, highly expressive, multimodal A-roll videos from disparate signals positions it as a competitive tool for narrative video generation. Its versatile architecture and training adaptations point toward practical and flexible video storytelling applications. Ultimately, this work opens avenues for broader exploration of audiovisual synthesis, with potential uses in creative film production, more expressive virtual avatars, and beyond.