Retrieval Augmented Generation Evaluation in the Era of Large Language Models: A Comprehensive Survey

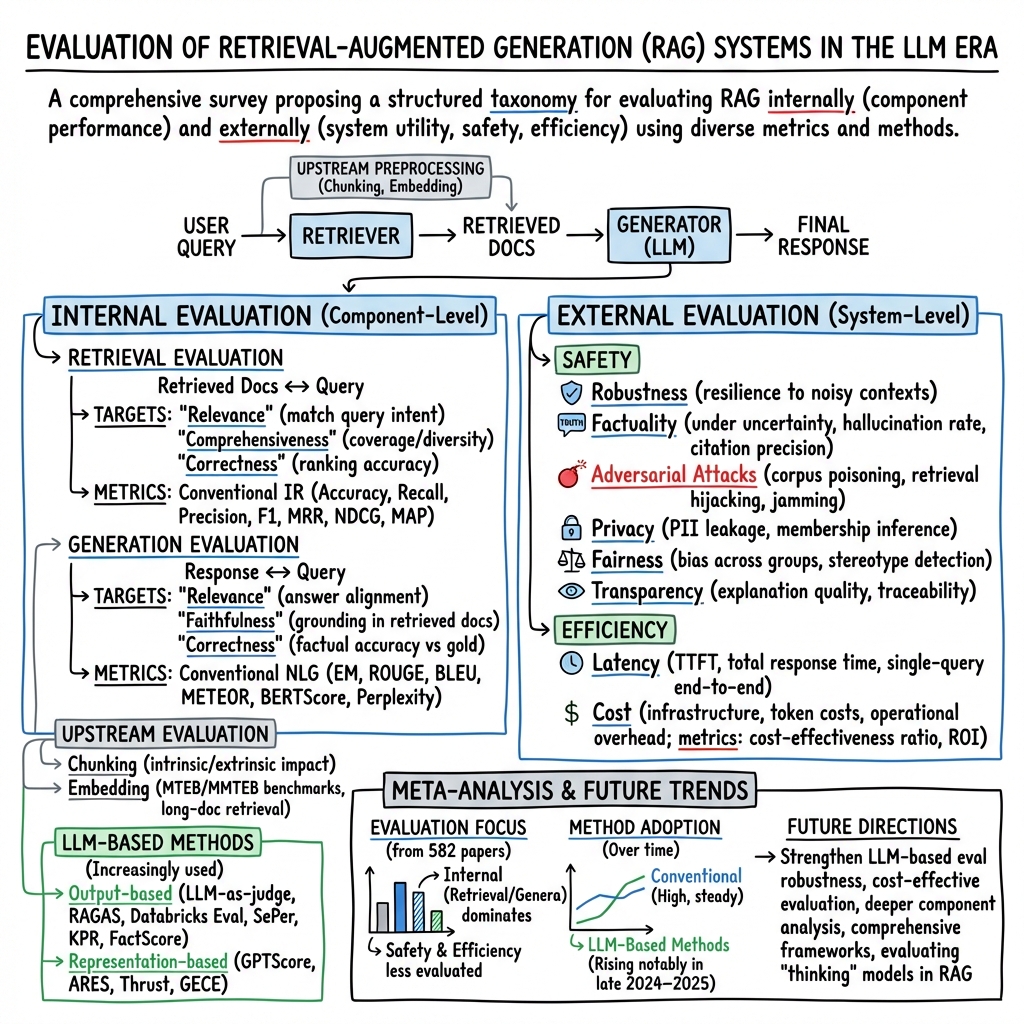

Abstract: Recent advancements in Retrieval-Augmented Generation (RAG) have revolutionized natural language processing by integrating large language models (LLMs) with external information retrieval, enabling accurate, up-to-date, and verifiable text generation across diverse applications. However, evaluating RAG systems presents unique challenges due to their hybrid architecture that combines retrieval and generation components, as well as their dependence on dynamic knowledge sources in the LLM era. In response, this paper provides a comprehensive survey of RAG evaluation methods and frameworks, systematically reviewing traditional and emerging evaluation approaches for system performance, factual accuracy, safety, and computational efficiency in the LLM era. We also compile and categorize RAG-specific datasets and evaluation frameworks, and we conduct a meta-analysis of evaluation practices in high-impact RAG research. To the best of our knowledge, this work represents the most comprehensive survey of RAG evaluation, bridging traditional and LLM-driven methods, and serves as a critical resource for advancing RAG development.

Explain it Like I'm 14

Overview: What this paper is about

This paper is a big “map” of how to test and grade RAG systems. RAG stands for Retrieval-Augmented Generation. Think of a RAG system as a team with two roles:

- A finder who searches for useful information (retrieval)

- A writer who uses that information to answer your question (generation)

Because modern AI models (large language models, or LLMs) can now look up outside information, they can give more accurate and up-to-date answers. But that also makes them harder to test. This paper lays out clear ways to evaluate the finder, the writer, and the whole system in real-world use.

The main questions the paper asks

Here are the simple questions the authors focus on:

- How do we fairly judge each part of a RAG system (the finder and the writer)?

- How do we judge the whole system in real life? Is it safe? Fast? Reliable?

- Which old-school scores still work, and which new AI-era methods are better?

- What tools, datasets, and frameworks already exist to help with testing?

- What are common mistakes and gaps in how RAGs are evaluated today?

How the authors studied it

This is a survey paper. That means the authors read a lot of existing research and organized it into a simple structure. They divide evaluation into two big buckets:

- Internal evaluation (inside the system): Do the finder and writer do their jobs well? Do they work well together?

- External evaluation (in the real world): Is the system safe, fair, private, and fast enough for users?

They also:

- Explain classic evaluation scores (from search and writing) in simple terms.

- Describe new “LLM-as-a-judge” methods where an AI helps grade another AI’s answers.

- Collect datasets, benchmarks, and tools that people can use to test RAG systems.

- Analyze how top RAG papers actually do evaluation in practice.

To make technical ideas friendly, think of:

- Retrieval like using the school library: Can you find the right books and pages?

- Generation like writing a report: Is your answer correct, relevant, and based on the sources?

- Evaluation like grading: Do we check the finder, the writer, and how they behave under pressure?

What methods are used to evaluate RAG?

The paper looks at evaluation on two levels.

Internal evaluation (grading the finder and the writer)

- For the finder (retrieval):

  - Relevance: Did it find documents about the question?

  - Coverage/Comprehensiveness: Did it find enough different pieces to cover the topic?

  - Correctness: Did it rank the truly useful documents higher than the useless ones?

- For the writer (generation):

  - Relevance: Does the answer match the question?

  - Faithfulness: Is the answer actually supported by the retrieved documents (no making things up)?

  - Correctness: Does it match the correct answer, when one is known?
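When a correct answer is known, two common ways to grade the writer are exact match (EM) and token-level F1. The sketch below is a minimal version that assumes SQuAD-style cleanup (lowercase, drop punctuation, drop the words "a", "an", "the"). The function names are made up for illustration; they are not taken from the paper or from any particular library.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> list[str]:
    """Lowercase, drop punctuation and English articles, then split into words."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return text.split()


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the cleaned-up answers are identical word for word, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of word-overlap precision and recall (order is ignored)."""
    pred, ref = normalize(prediction), normalize(reference)
    common = Counter(pred) & Counter(ref)  # words shared by both, with counts
    overlap = sum(common.values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    print(exact_match("The Eiffel Tower", "eiffel tower"))  # 1.0
    print(round(token_f1("Paris, in France", "Paris"), 2))  # 0.5
```

EM is strict: any extra word gives 0. F1 gives partial credit, which is why many papers report both.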

To measure these, researchers use:

- Classic search scores: precision, recall, MRR (mean reciprocal rank), and NDCG (normalized discounted cumulative gain). Together they judge how many of the results are correct and how well they are ordered.

- Classic writing scores: EM (exact match), ROUGE, BLEU, METEOR, and BERTScore (ways to compare your answer to a known correct answer or reference text).

- Upstream checks: Do “chunks” (how documents are split into pieces) and “embeddings” (mathy text fingerprints) help retrieval? Benchmarks like MTEB (the Massive Text Embedding Benchmark) test embedding quality.
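Here is a minimal sketch of the classic search scores, assuming "binary" relevance: each document is simply useful or not, as marked by a person. Real benchmarks may use graded relevance levels instead. The document IDs and function names are hypothetical.

```python
import math


def precision_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are relevant."""
    return sum(doc in relevant for doc in ranked[:k]) / k


def recall_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant documents that show up in the top k."""
    if not relevant:
        return 0.0
    return sum(doc in relevant for doc in ranked[:k]) / len(relevant)


def reciprocal_rank(ranked: list[str], relevant: set[str]) -> float:
    """1 / (position of the first relevant document); 0.0 if none was found."""
    for position, doc in enumerate(ranked, start=1):
        if doc in relevant:
            return 1.0 / position
    return 0.0


def mean_reciprocal_rank(runs: list[tuple[list[str], set[str]]]) -> float:
    """Average reciprocal rank over several (ranking, relevant-set) queries."""
    if not runs:
        return 0.0
    return sum(reciprocal_rank(r, rel) for r, rel in runs) / len(runs)


def ndcg_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Binary-relevance NDCG@k: this ranking's DCG divided by the best possible DCG."""
    dcg = sum(
        1.0 / math.log2(position + 1)  # hits lower in the list count for less
        for position, doc in enumerate(ranked[:k], start=1)
        if doc in relevant
    )
    ideal_hits = min(len(relevant), k)  # best case: all relevant docs at the top
    idcg = sum(1.0 / math.log2(position + 1) for position in range(1, ideal_hits + 1))
    return dcg / idcg if idcg > 0 else 0.0


if __name__ == "__main__":
    ranked = ["d3", "d1", "d7", "d2"]  # what the finder returned, best first
    relevant = {"d1", "d2"}            # what a person marked as useful
    print(round(precision_at_k(ranked, relevant, 3), 3))  # 0.333
    print(recall_at_k(ranked, relevant, 3))                 # 0.5
    print(reciprocal_rank(ranked, relevant))                # 0.5
    print(round(ndcg_at_k(ranked, relevant, 4), 3))         # 0.651
```

Precision and recall only count hits. MRR and NDCG also reward putting the useful documents near the top.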

New LLM-era methods include:

- LLM-as-a-judge: Ask an AI to check if an answer is supported by the sources, complete, or hallucinated (making stuff up). Examples: RAGAS, Databricks Eval.

- Fact-by-fact checking: Break answers into small facts and verify each one against the sources (e.g., FActScore).

- Representation-based checks: Use hidden vectors inside models (their “thought fingerprints”) to measure things like how much the model relied on external context.

- Specialized metrics: For example, measuring risk when the system should refuse to answer, or how much external knowledge actually influenced the response.
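Fact-by-fact checking can be sketched in a few lines. Real tools use an LLM (or a separate checking model) both to split the answer into facts and to decide whether a source supports each one. In this minimal sketch, two crude stand-ins play those roles: sentence splitting, and a word-overlap test with an arbitrary 0.6 threshold. The function names and the threshold are hypothetical. The score is the fraction of facts that are supported.

```python
import re


def split_into_facts(answer: str) -> list[str]:
    """Naive stand-in for an LLM fact extractor: one 'fact' per sentence."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]


def content_words(text: str) -> set[str]:
    """Lowercased words longer than 3 characters (a crude stopword filter)."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 3}


def is_supported(fact: str, sources: list[str], threshold: float = 0.6) -> bool:
    """Hypothetical lexical judge: a fact counts as supported if at least
    `threshold` of its content words appear in a single source."""
    words = content_words(fact)
    if not words:
        return False
    return any(len(words & content_words(src)) / len(words) >= threshold for src in sources)


def supported_fraction(answer: str, sources: list[str]) -> float:
    """Share of the answer's facts that the sources back up (higher is better)."""
    facts = split_into_facts(answer)
    if not facts:
        return 0.0
    return sum(is_supported(f, sources) for f in facts) / len(facts)


if __name__ == "__main__":
    sources = ["Marie Curie won the Nobel Prize in Physics in 1903 and in Chemistry in 1911."]
    answer = "Marie Curie won the Nobel Prize in Physics in 1903. She later moved to Brazil."
    print(supported_fraction(answer, sources))  # 0.5: the second sentence is unsupported
```

Word overlap is easy to fool (for example, "did not win" overlaps heavily with "won"). That weakness is exactly why the LLM-era methods swap this step for a model-based judge.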

External evaluation (grading real-world use)

- Safety: Is the system robust to bad or tricky documents? Does it avoid hallucinations? Can it be attacked (e.g., poisoned sources, privacy leaks)? Is it fair and unbiased? Does it cite sources correctly?

- Efficiency: Is it fast enough? Two key times matter: time to first token (how fast the response starts) and total response time.
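The two timing numbers can be measured by wrapping any stream of output tokens with a clock. In this minimal sketch, `fake_rag_stream` is a hypothetical stand-in for a real RAG pipeline: it "retrieves" by sleeping, then yields tokens one at a time. Because the clock starts before the first token is requested, the retrieval delay is counted in the time to first token.

```python
import time
from typing import Iterable, Iterator


def measure_latency(stream: Iterable[str]) -> tuple[float, float, str]:
    """Return (time_to_first_token, total_time, full_text); times are in seconds."""
    start = time.perf_counter()
    first_token_time = None
    pieces = []
    for token in stream:
        if first_token_time is None:
            first_token_time = time.perf_counter() - start
        pieces.append(token)
    total = time.perf_counter() - start
    if first_token_time is None:  # empty stream: no token ever arrived
        first_token_time = total
    return first_token_time, total, "".join(pieces)


def fake_rag_stream(retrieval_delay: float = 0.05, per_token_delay: float = 0.01) -> Iterator[str]:
    """Hypothetical pipeline: pause for 'retrieval', then emit tokens one by one."""
    time.sleep(retrieval_delay)  # pretend to search the document store
    for token in ["Paris ", "is ", "the ", "capital."]:
        time.sleep(per_token_delay)  # pretend to generate the next token
        yield token


if __name__ == "__main__":
    ttft, total, text = measure_latency(fake_rag_stream())
    print(f"TTFT={ttft:.3f}s total={total:.3f}s text={text!r}")
```

Slow retrieval mostly hurts the time to first token. A long answer mostly hurts the total response time.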

The paper lists attack types (like poisoning the document pool or hijacking rankings), and how to measure whether the system falls for them (attack success rate, hallucination rate, citation accuracy, privacy leakage rate, and more). It also highlights safety benchmarks and red-teaming approaches that stress-test RAG systems.
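Many of these safety scores are simple rates over a batch of test queries. The sketch below assumes two common forms. Attack success rate is the share of attacked queries where the system did what the attacker wanted. Citation accuracy is the share of citations that really support their claims. Exact definitions differ between benchmarks. The `Trial` record and its fields are hypothetical, and filling them in (often with a human or an LLM judge) is the hard part.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """One test query against the RAG system (fields are illustrative)."""
    attacked: bool           # was a poisoned or adversarial document injected?
    followed_attack: bool    # did the answer do what the attacker wanted?
    citations_total: int     # number of citations in the answer
    citations_correct: int   # citations that actually support their claim


def attack_success_rate(trials: list[Trial]) -> float:
    """Share of attacked queries where the attack worked (lower is safer)."""
    attacked = [t for t in trials if t.attacked]
    if not attacked:
        return 0.0
    return sum(t.followed_attack for t in attacked) / len(attacked)


def citation_accuracy(trials: list[Trial]) -> float:
    """Share of all citations that really back up the cited claim."""
    total = sum(t.citations_total for t in trials)
    if total == 0:
        return 0.0
    return sum(t.citations_correct for t in trials) / total


if __name__ == "__main__":
    trials = [
        Trial(True, True, 2, 1),
        Trial(True, False, 1, 1),
        Trial(False, False, 3, 3),
        Trial(True, False, 0, 0),
    ]
    print(round(attack_success_rate(trials), 3))  # 0.333 (1 of 3 attacks worked)
    print(round(citation_accuracy(trials), 3))    # 0.833 (5 of 6 citations correct)
```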

The main findings and why they matter

Here are the key takeaways, explained simply:

- There’s no single “best score” to judge a RAG. You need a toolbox. Different parts (finder vs writer) and different goals (accuracy vs safety vs speed) need different tests.

- Old metrics still help, but they’re not enough. New LLM-based methods (like AI judges and vector-based checks) give deeper insight, especially about faithfulness and hallucinations.

- Preprocessing matters a lot. How you split documents (chunking) and how you turn text into vectors (embeddings) can make or break retrieval quality.

- Safety is a big deal. RAG systems can be tricked by bad documents, leak private information, or show bias. Many defenses are still weak, so better testing is urgently needed.

- Community resources exist but are scattered. The survey pulls together datasets, frameworks, and benchmarks to help people evaluate RAGs more consistently.
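To make the point about chunking concrete, here is one simple strategy: fixed-size word windows where neighboring chunks overlap, so a fact near a boundary is not cut in half. This is only an illustrative sketch. The default sizes are arbitrary placeholders, not values recommended by the paper. Real systems often split by tokens, sentences, or document sections instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into windows of `chunk_size` words; neighbors share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far each new window moves forward
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):  # this window already reached the end
            break
    return chunks


if __name__ == "__main__":
    text = " ".join(f"w{i}" for i in range(10))
    print(chunk_words(text, chunk_size=4, overlap=1))
    # ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

One way to test a chunking choice is to re-chunk the same documents with different `chunk_size` and `overlap` values, then compare the retrieval scores each version produces.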

Why this matters: If we can test RAG systems better, we can build AI helpers that are more accurate, trustworthy, fair, and fast. That means better homework helpers, research assistants, customer support bots, and tools for doctors, scientists, and more.

What this could change going forward

- Researchers get a clear checklist for testing RAG systems from the inside out, which should speed up progress.

- Companies can use these metrics and tools to launch safer, more reliable AI products.

- The field can move toward shared standards for evaluating RAG, making comparisons fair and improving quality overall.

- Expect more use of “AI as the judge,” but also more careful validation to avoid new kinds of bias.

- Stronger safety tests and defenses will likely become standard, especially for privacy, misinformation, and robustness.

In short, this paper is a practical guide to grading the “finder + writer” AI team. With better grading, we’ll get better teams.