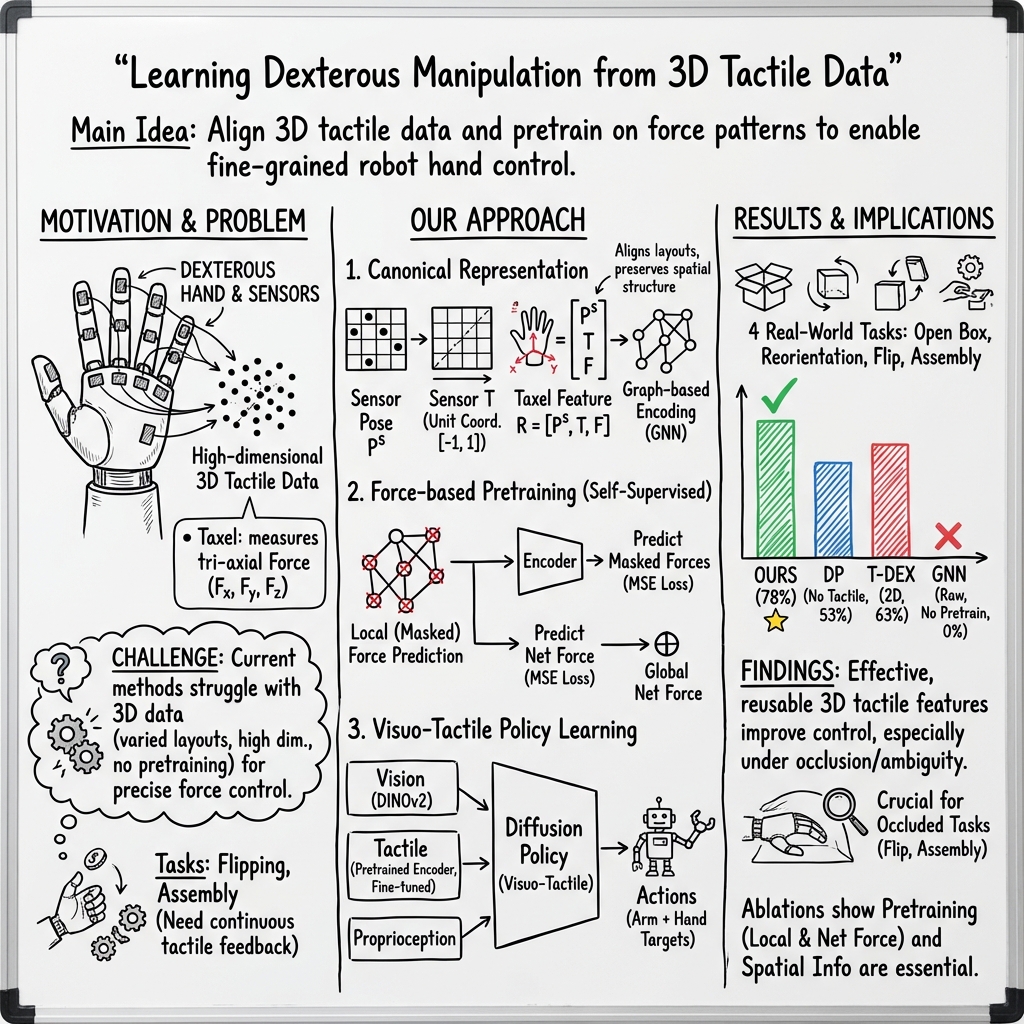

- The paper introduces a canonical representation for 3D tactile data and force-based pretraining that enhances manipulation success rates.

- The methodology normalizes sensor features and uses local and net force predictions to boost feature learning efficiency and policy adaptation.

- Experimental results report an average success rate of 78% across four complex real-world dexterous tasks, showing practical improvements in visuo-tactile policies.

Canonical Representation and Force-Based Pretraining of 3D Tactile for Dexterous Visuo-Tactile Policy Learning

Abstract

This paper addresses the difficulty of learning features from high-dimensional 3D tactile sensor data in dexterous visuo-tactile policy learning. It proposes a canonical representation and a force-based self-supervised pretraining task, aiming to make tactile feature learning both more efficient and more effective and, ultimately, to improve dexterous manipulation success rates.

Introduction

Dexterous hand manipulation is pivotal for integrating robots into everyday human-centric environments, and mastering fine-grained, contact-rich tasks requires advanced tactile sensing. While vision-based imitation learning has made significant progress, tactile sensing remains a critical component that poses unique challenges because of its high dimensionality and the absence of standardized datasets.

Consequently, the paper proposes a canonical representation for 3D tactile data that aligns varying sensor features and reduces the feature space, thereby facilitating more effective learning. Additionally, a force-based self-supervised task is introduced to capture both local and net force dynamics. These innovations are validated experimentally, revealing an average success rate of 78% across four complex real-world dexterous tasks: opening boxes, reorienting objects, flipping caps, and assembling parts.

Tactile Sensing in Dexterous Manipulation

Tactile sensors have long been used to refine dexterous manipulation. Vision-based tactile sensors such as GelSight and distributed tactile sensors such as uSkin are widely applied because they integrate readily into different robotic configurations. However, the high dimensionality that comes with broad sensor coverage remains an obstacle to efficient feature learning.

Tactile Representation

Existing tactile representations vary from 2D images that leverage visual backbones to graph-based methods using GNNs for encoding spatial signals. While graph-based methods maintain taxel spatial relationships, they require significant data to attain effective learning outcomes. This paper outlines improvements over these methods by presenting a canonical representation that unifies taxel coordinates within distributed sensors.

Tactile Pretraining

Previous approaches to tactile pretraining have primarily been inter-modal, focusing on cross-modal interactions for multi-modal learning. In contrast, the current work emphasizes intra-modal pretraining tailored to 3D tactile data, improving feature learning efficiency through local and net force prediction.

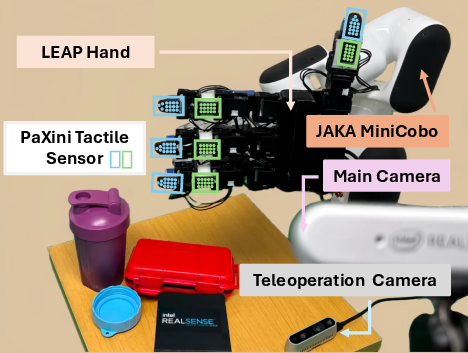

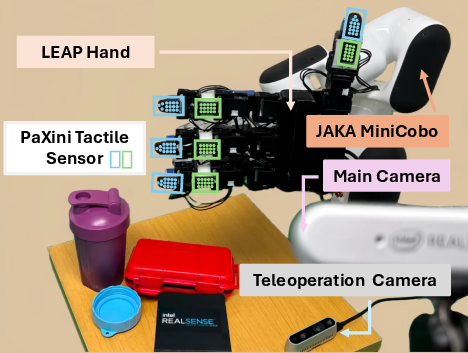

Robot System Setup

The experimental setup features a robotic system comprising a 6-DoF JAKA MiniCobo arm and a 16-DoF Leap Hand, equipped with distributed PaXini tactile sensors and a teleoperation camera system for data collection.

Figure 1: Real Robot System using distributed tactile sensors to execute dexterous tasks.

Method

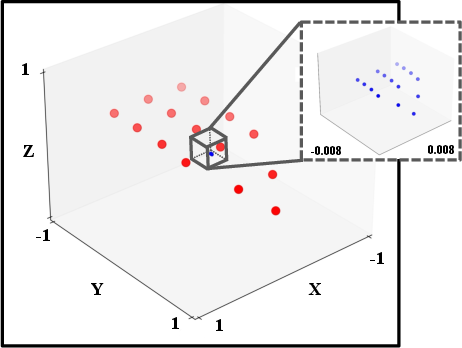

Canonical Tactile Representation

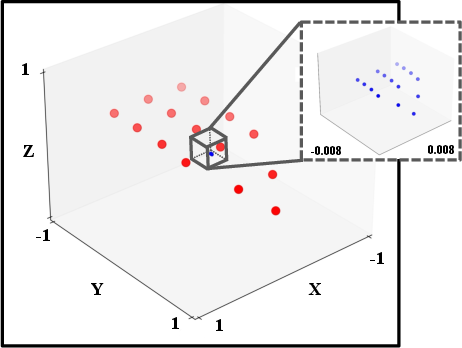

To preserve taxel spatial relationships in 3D tactile data, the paper presents a canonical representation that normalizes taxel coordinates within each sensor's own coordinate system. Expressing every sensor in the same normalized frame aligns their features and shrinks the overall feature space, while the rescaling amplifies the distances between neighboring taxels, making local features easier to capture.
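The normalization idea can be illustrated with a minimal sketch. This is not the paper's implementation: the function name `canonicalize_taxels`, the choice of a `[-1, 1]` target range, and the bounding-box centering are all assumptions made for illustration, since the summary only states that coordinates are normalized within each sensor's frame.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def canonicalize_taxels(taxels: List[Point]) -> List[Point]:
    """Map one sensor's taxel coordinates into a shared [-1, 1] range.

    Assumptions (hypothetical, for illustration only):
    - `taxels` are already expressed in the sensor's own coordinate frame;
    - the canonical frame is centered on the bounding-box midpoint;
    - a single scale (the largest half-extent) is used for all axes,
      so the sensor's aspect ratio is preserved.
    """
    mins = [min(p[i] for p in taxels) for i in range(3)]
    maxs = [max(p[i] for p in taxels) for i in range(3)]
    centers = [(lo + hi) / 2 for lo, hi in zip(mins, maxs)]
    half_extent = max((hi - lo) / 2 for lo, hi in zip(mins, maxs))
    # Guard against a degenerate sensor with a single taxel position.
    scale = half_extent if half_extent > 0 else 1.0
    return [
        tuple((p[i] - centers[i]) / scale for i in range(3))
        for p in taxels
    ]
```

Under these assumptions, two sensors with the same taxel layout but mounted at different positions map to identical canonical coordinates, and taxels a few millimeters apart end up separated by a much larger normalized distance.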

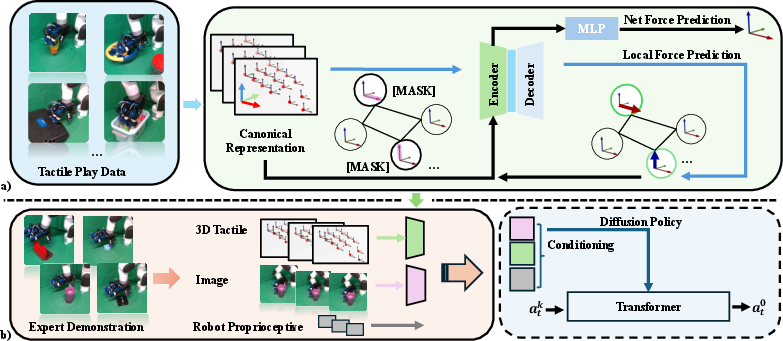

Figure 2: Comparison of Canonical Representation and Original Representation, illustrating feature space reduction and enhanced taxel discriminability.

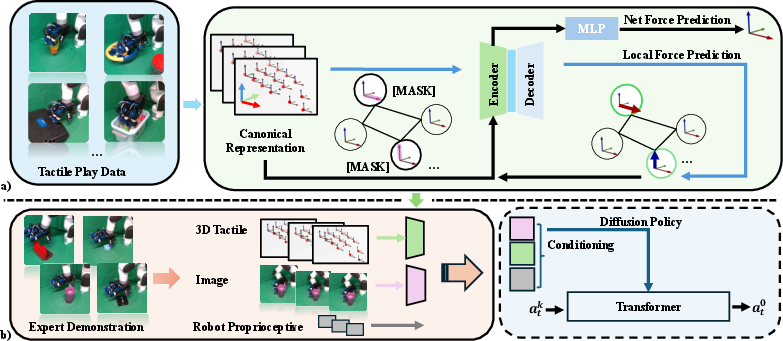

Force-Based Pretraining

Building on human manipulation paradigms, the paper proposes force-based pretraining tasks: local force prediction and net force prediction. Through these tasks, the encoder learns to discern local taxel interactions and overarching net force dynamics, which significantly enhance manipulation policy learning.
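As a rough sketch of how training targets for the two tasks might be constructed, the code below computes a net force by summing per-taxel force vectors and builds a local prediction target by hiding a random subset of taxels. The masking scheme, the function names, and the mean-squared-error loss are assumptions; this summary does not specify how the paper formulates either task.

```python
import random
from typing import List, Sequence, Tuple

Force = Tuple[float, float, float]


def net_force(forces: Sequence[Force]) -> Force:
    """Net force target: the vector sum of all per-taxel forces."""
    return tuple(sum(f[i] for f in forces) for i in range(3))


def mask_taxels(
    forces: Sequence[Force], mask_ratio: float, rng: random.Random
) -> Tuple[List[Force], List[int]]:
    """Local force task (assumed masked-prediction setup).

    Zeroes out a random subset of taxel readings and returns the masked
    input together with the indices the encoder would have to predict.
    """
    n_masked = max(1, round(mask_ratio * len(forces)))
    masked_idx = sorted(rng.sample(range(len(forces)), n_masked))
    hidden = set(masked_idx)
    masked_input = [
        (0.0, 0.0, 0.0) if i in hidden else f for i, f in enumerate(forces)
    ]
    return masked_input, masked_idx


def mse(pred: Sequence[Force], target: Sequence[Force]) -> float:
    """Mean squared error over all force components."""
    sq = [(a - b) ** 2 for p, t in zip(pred, target) for a, b in zip(p, t)]
    return sum(sq) / len(sq)
```

In a setup like this, an encoder would receive `masked_input` and be trained with two heads: one scored by `mse` on the hidden taxels, the other on the output of `net_force`.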

Figure 3: Pipeline for incorporating pretrained encoder within imitation learning framework for tactile manipulation.

Visuo-Tactile Policy Learning

The pretrained tactile encoder is integrated into an imitation learning framework that adapts diffusion policies to tactile manipulation tasks, with a DINOv2 backbone providing improved visual feature extraction.
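To make the role of the two encoders concrete, here is a minimal sketch of the generic diffusion-policy training recipe: visual and tactile features are combined into a conditioning vector, and a clean action is noised with the standard DDPM forward process so that a network can learn to predict the noise. Concatenation as the fusion method, the feature sizes, and all function names are assumptions, not details taken from the paper.

```python
import math
import random
from typing import List, Sequence, Tuple


def build_condition(
    visual_feat: Sequence[float], tactile_feat: Sequence[float]
) -> List[float]:
    """Fuse modalities by concatenation (an assumed, common choice)."""
    return list(visual_feat) + list(tactile_feat)


def noise_action(
    action: Sequence[float], alpha_bar: float, rng: random.Random
) -> Tuple[List[float], List[float]]:
    """Standard DDPM forward step on an action vector.

    a_k = sqrt(alpha_bar) * a_0 + sqrt(1 - alpha_bar) * eps,  eps ~ N(0, I)

    Returns the noisy action and the noise that was added; a denoising
    network would be trained to predict `eps` from the noisy action,
    the diffusion step, and the conditioning vector.
    """
    eps = [rng.gauss(0.0, 1.0) for _ in action]
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    noisy = [signal_scale * a + noise_scale * e for a, e in zip(action, eps)]
    return noisy, eps
```

Here `alpha_bar` is the cumulative noise-schedule coefficient for the sampled diffusion step: values near 1 leave the action almost unchanged, and values near 0 replace it almost entirely with noise.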

Experiments

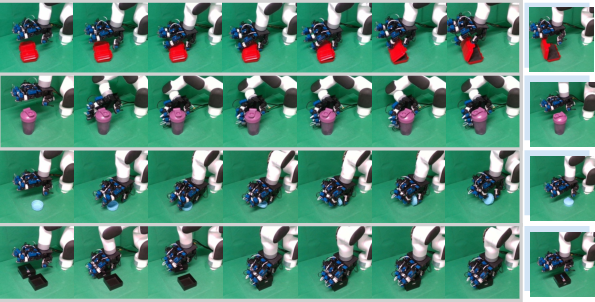

Dexterous Manipulation Tasks

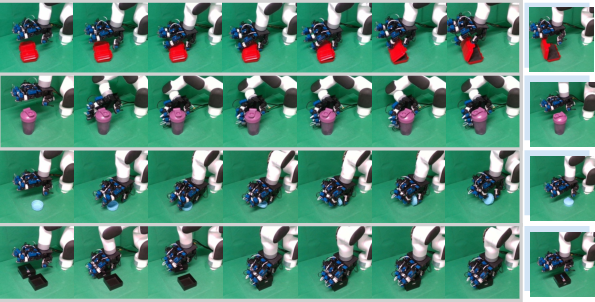

The system is evaluated on four real-world manipulation tasks: opening boxes, reorienting objects, flipping caps, and assembling parts. Success requires precise execution of complex, contact-rich operations, which tests the efficacy of both the representation and the pretraining strategy.

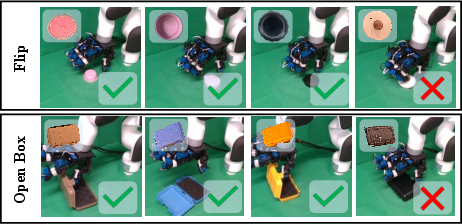

Figure 4: Visualization of Policy Rollout on manipulation tasks.

Results and Discussion

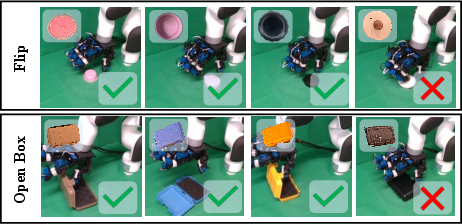

The experimental results show strong performance across the four tasks, with an average success rate of 78%, and indicate that both the canonical representation and force-based pretraining contribute to tactile feature learning. Ablation studies further support the role of each component.

Figure 5: Visualization of policy effectiveness on unseen objects, illustrating generalization capabilities.

Conclusion

This paper presents an approach to dexterous visuo-tactile policy learning and shows that canonical representation and force-based pretraining improve tactile feature learning. While the system shows promising results, future work should address the generalization limitations posed by diverse object morphologies and dynamics, potentially through dynamic adaptation of tactile features.