Designing Heterogeneous LLM Agents for Financial Sentiment Analysis

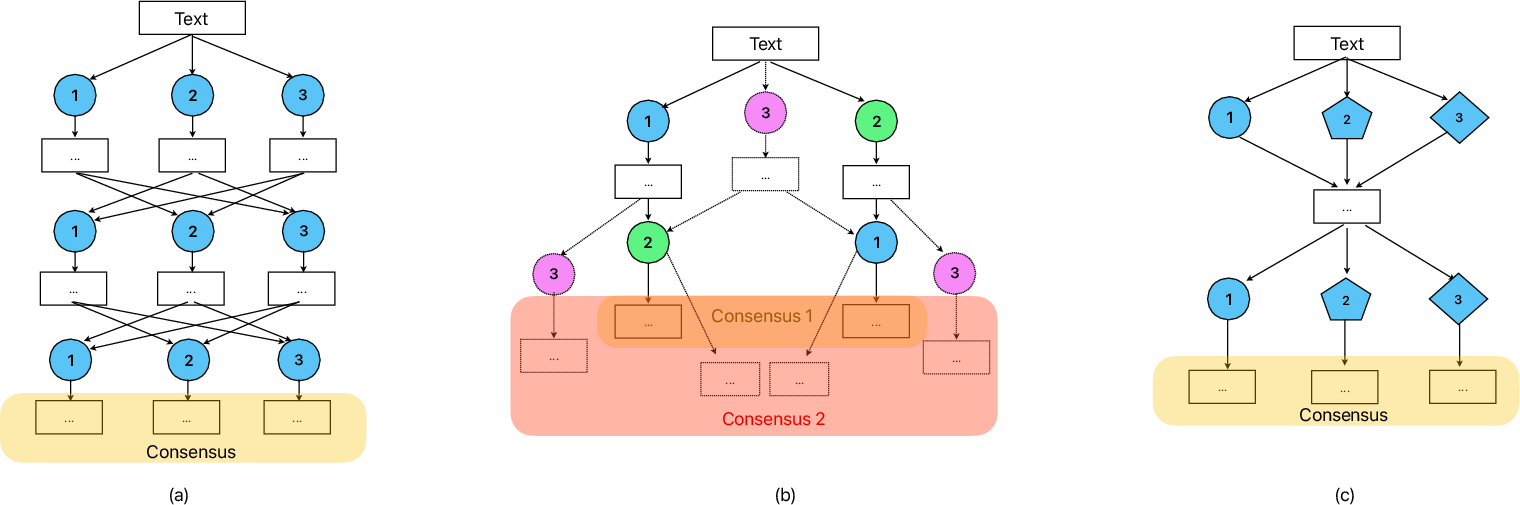

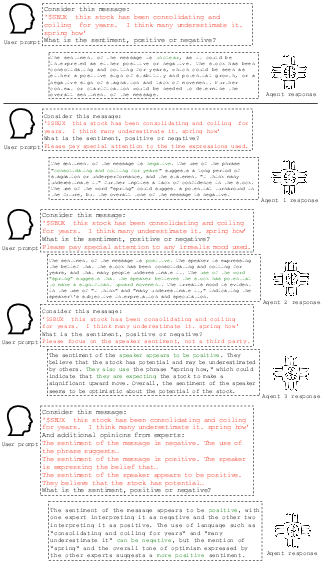

Abstract: LLMs have drastically changed the possible ways to design intelligent systems, shifting the focus from massive data acquisition and new model training to human alignment and strategic elicitation of the full potential of existing pre-trained models. This paradigm shift, however, is not fully realized in financial sentiment analysis (FSA), due to the discriminative nature of this task and a lack of prescriptive knowledge of how to leverage generative models in such a context. This study investigates the effectiveness of the new paradigm, i.e., using LLMs without fine-tuning for FSA. Rooted in Minsky's theory of mind and emotions, a design framework with heterogeneous LLM agents is proposed. The framework instantiates specialized agents using prior domain knowledge of the types of FSA errors and reasons on the aggregated agent discussions. Comprehensive evaluation on FSA datasets shows that the framework yields better accuracy, especially when the discussions are substantial. This study contributes to the design foundations and paves new avenues for LLM-based FSA. Implications for business and management are also discussed.
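The abstract describes the framework only at a high level: specialized agents each comment on the input, and a final step reasons over the aggregated discussion. A minimal sketch of that flow is given below. It is an illustration under stated assumptions, not the authors' implementation: the `Agent` class, the `aggregate` and `classify` helpers, the three-way label set, and the fallback to `neutral` are all hypothetical, and the LLM is represented by any callable that maps a prompt string to a reply string.

```python
from dataclasses import dataclass
from typing import Callable, List

# Assumption: the LLM is any function from prompt text to reply text.
LLM = Callable[[str], str]

# Assumption: a three-way label set; the source does not specify the labels.
LABELS = ("positive", "negative", "neutral")


@dataclass
class Agent:
    """A hypothetical specialized agent, defined only by its instruction."""
    name: str
    instruction: str

    def discuss(self, llm: LLM, text: str) -> str:
        # Each agent sees the same text but a different instruction.
        return llm(f"{self.instruction}\nText: {text}")


def aggregate(text: str, agents: List[Agent], llm: LLM) -> str:
    """Collect every agent's comment into one discussion transcript."""
    return "\n".join(f"[{a.name}] {a.discuss(llm, text)}" for a in agents)


def classify(text: str, agents: List[Agent], llm: LLM) -> str:
    """Ask the LLM for a final label given the aggregated discussion."""
    discussion = aggregate(text, agents, llm)
    final_prompt = (
        "Given the discussion below, answer with one word: "
        "positive, negative, or neutral.\n"
        f"Text: {text}\nDiscussion:\n{discussion}"
    )
    answer = llm(final_prompt).strip().lower()
    # Hypothetical fallback: treat any unparseable answer as neutral.
    return answer if answer in LABELS else "neutral"
```

To use the sketch, one would pass a list of agents whose instructions target different error types (for example, one instruction asking about irony and another about which entity the sentiment refers to; both are placeholders) together with a wrapper around whichever LLM client is available.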
