- The paper introduces a hybrid method combining domain-specific landmark detection with vessel enhancement to enable precise multimodal retinal image registration.

- It utilizes a two-step process, starting with feature-based registration using MLSEC-ST for vessel bifurcation detection, followed by intensity-based refinement with normalized cross-correlation.

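- Experimental results on 59 image pairs show improved registration quality: the full hybrid pipeline reaches the highest VE-NCC scores of the tested configurations in both healthy (0.6123±0.0815) and pathological (0.4758±0.1419) cases.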

Multimodal Registration of Retinal Images Using Domain-Specific Landmarks and Vessel Enhancement

Multimodal retinal image registration is pivotal in ophthalmology for integrating complementary visual information from modalities such as color fundus retinography (CFR) and fluorescein angiography (FA). This integration facilitates improved disease diagnosis and patient follow-up, especially for conditions like diabetic retinopathy and hypertension. The challenge arises from the distinct intensity distributions, anatomical visualizations, and modality-dependent artifacts, which complicate accurate spatial alignment. The two traditional approaches, feature-based registration (FBR) and intensity-based registration (IBR), each have shortcomings. FBR methods rely on generic descriptors, which yield a surplus of non-representative interest points and unreliable correspondences in multimodal scenarios. IBR methods struggle with conventional similarity metrics because intensity relations between modalities are inconsistent.

Hybrid Methodology: Domain-Specific Landmarks and Vessel Enhancement

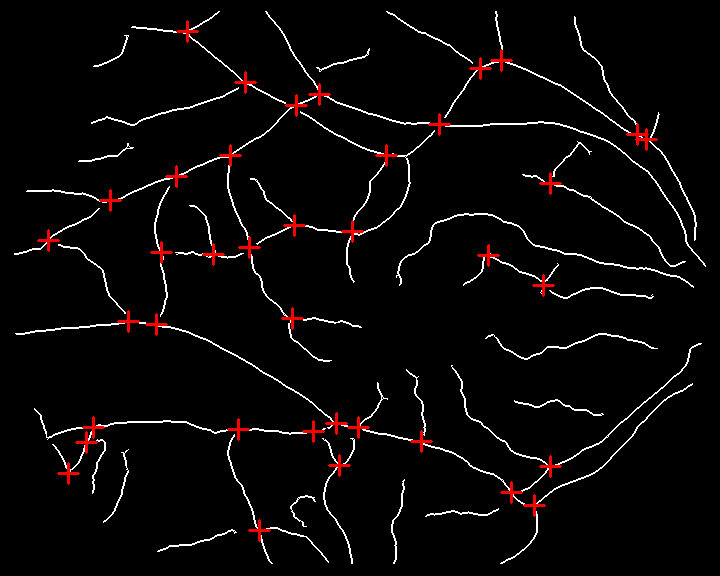

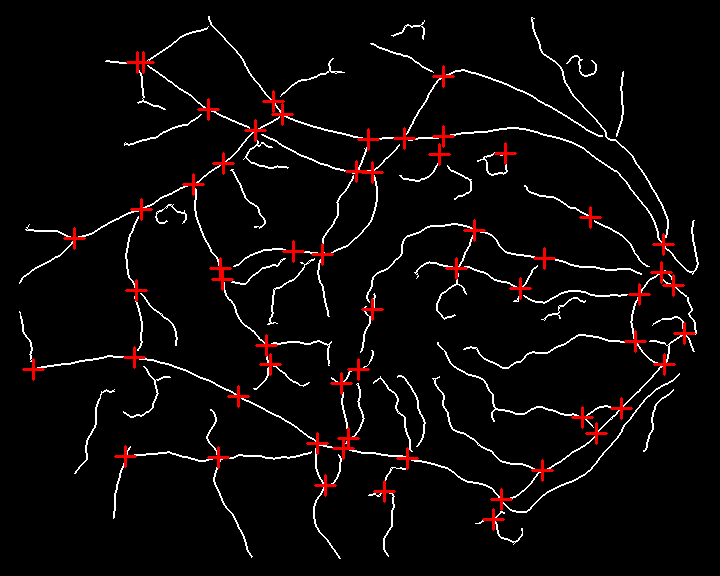

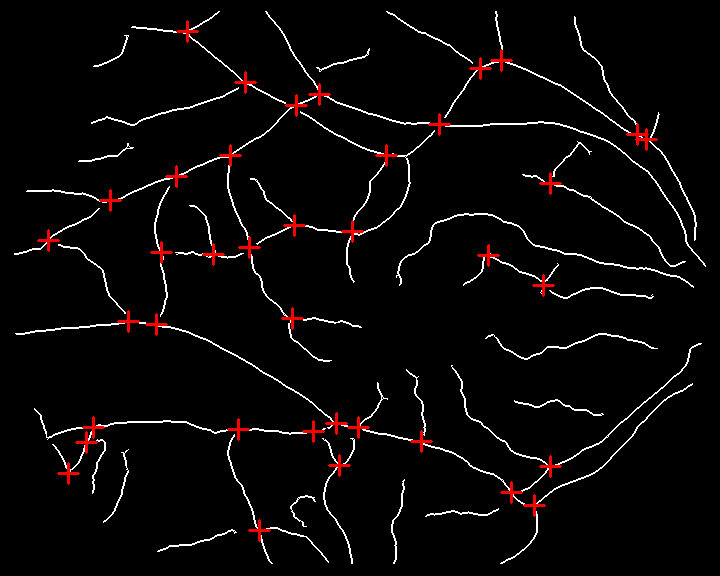

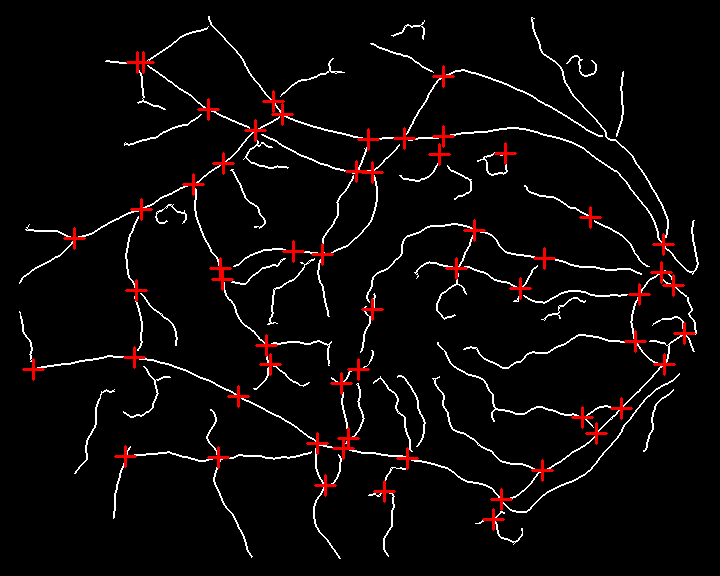

This work introduces a hybrid methodology that sequentially leverages domain-specific FBR followed by domain-adapted IBR refinement. The approach exploits the ubiquity of the retinal vascular tree across modalities, targeting vessel bifurcations and crossovers as robust, natural landmarks. Landmark detection is informed by curvature analysis using the MLSEC-ST operator, producing binary vessel trees and precise landmark localization for both CFR and FA. Transformation estimation between image pairs is achieved through geometric alignment of landmark pairs, using translation, rotation, and isotropic scaling—avoiding descriptor-based matching and reducing computational load.
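The transformation model described above (translation, rotation, and isotropic scaling) can be illustrated with a small least-squares fit. This is a minimal sketch, not the authors' implementation: it assumes the landmark correspondences are already known, whereas the search for matching landmark pairs is not covered here. The function names `fit_similarity` and `apply_similarity` are hypothetical. Points are treated as complex numbers so that the transform is `z' = a*z + b`, where `abs(a)` is the scale and `cmath.phase(a)` is the rotation angle.

```python
import cmath


def fit_similarity(src, dst):
    """Least-squares similarity transform mapping src landmarks onto dst.

    src, dst: equal-length sequences of (x, y) tuples, assumed to be
    already-matched landmark pairs (an assumption of this sketch).
    Returns complex (a, b) such that dst ~= a * src + b.
    """
    if len(src) != len(dst) or len(src) < 2:
        raise ValueError("need at least two matched landmark pairs")
    p = [complex(x, y) for x, y in src]
    q = [complex(x, y) for x, y in dst]
    n = len(p)
    p_mean = sum(p) / n
    q_mean = sum(q) / n
    # Closed-form minimizer of sum |a*p_i + b - q_i|^2 over complex a, b.
    num = sum((qi - q_mean) * (pi - p_mean).conjugate() for pi, qi in zip(p, q))
    den = sum(abs(pi - p_mean) ** 2 for pi in p)
    if den == 0:
        raise ValueError("source landmarks must not all coincide")
    a = num / den
    b = q_mean - a * p_mean
    return a, b


def apply_similarity(a, b, points):
    """Apply z' = a*z + b to a sequence of (x, y) points."""
    mapped = [a * complex(x, y) + b for x, y in points]
    return [(z.real, z.imag) for z in mapped]


if __name__ == "__main__":
    # Hypothetical landmarks: scale 2, rotation 90 degrees, shift (3, 4).
    src = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
    dst = [(3.0, 4.0), (3.0, 6.0), (1.0, 4.0)]
    a, b = fit_similarity(src, dst)
    print("scale:", abs(a), "rotation (rad):", cmath.phase(a), "shift:", b)
```

Because the model has only four degrees of freedom, two matched landmark pairs already determine it; additional pairs are averaged in the least-squares sense.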

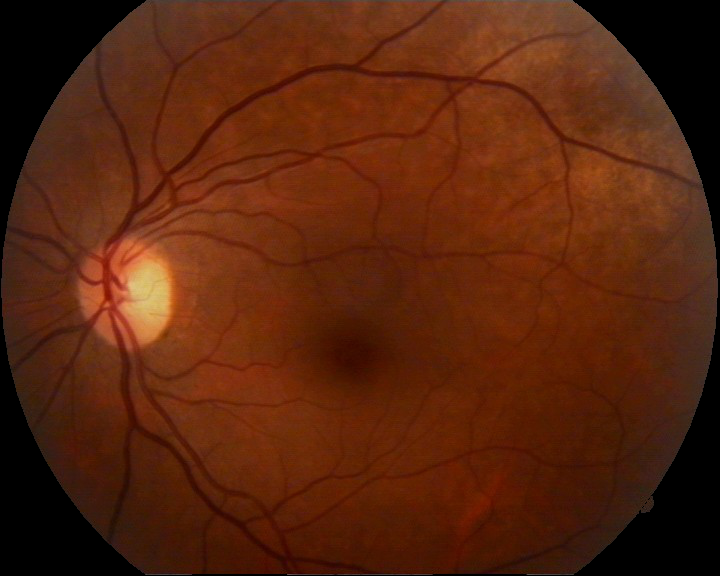

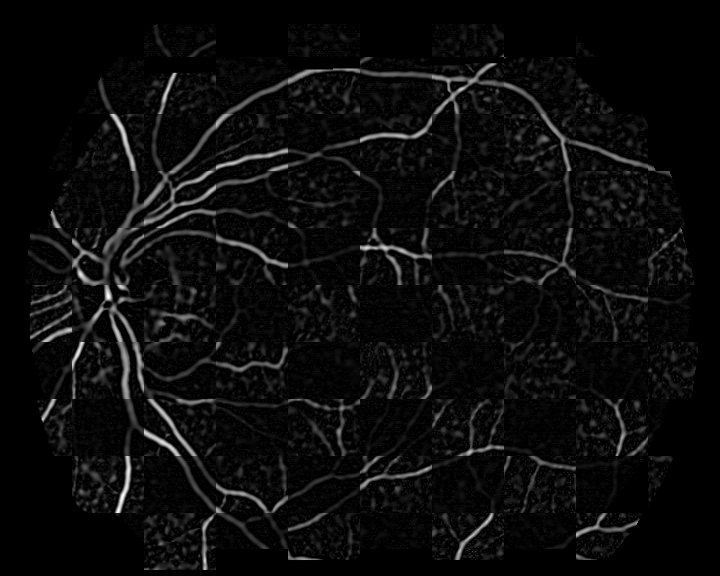

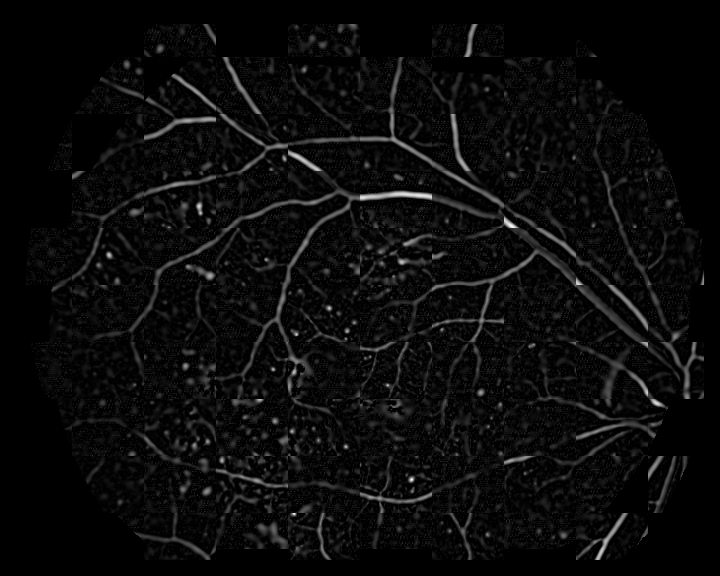

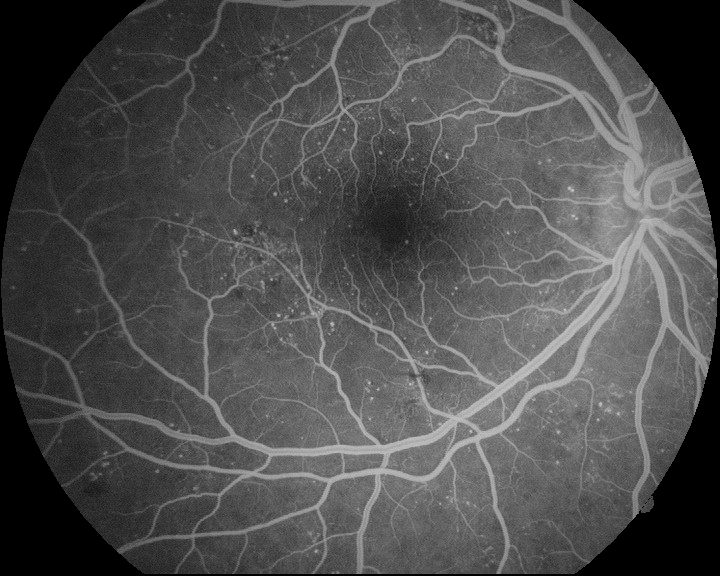

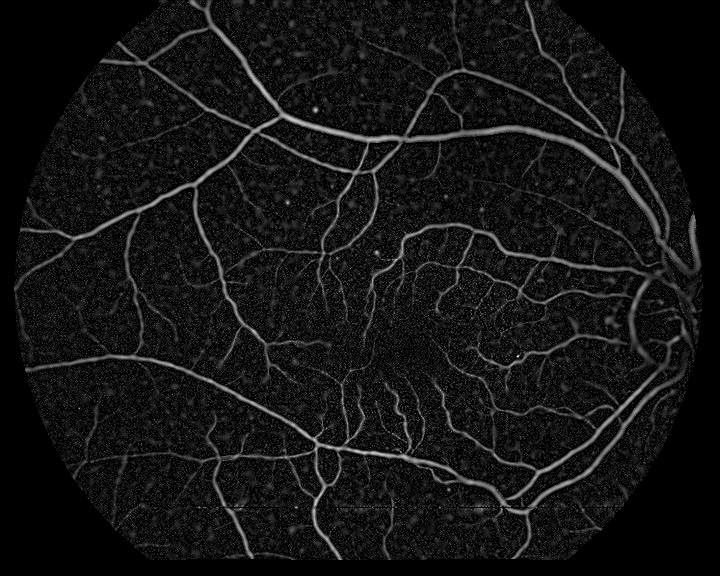

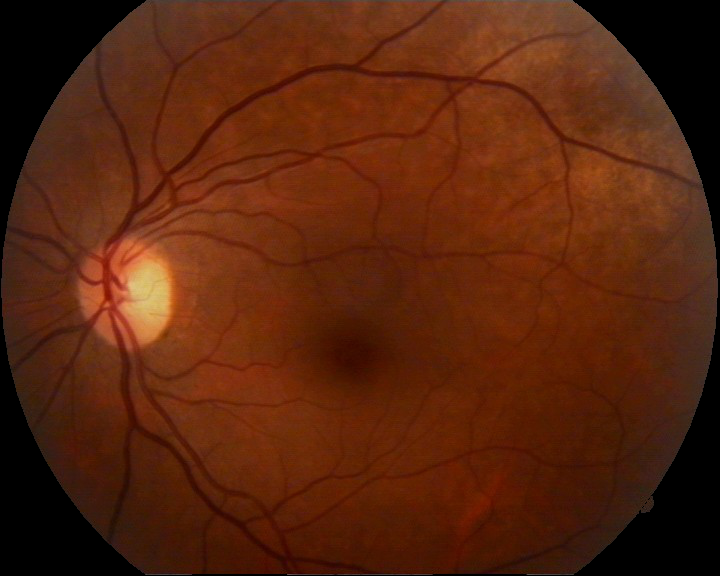

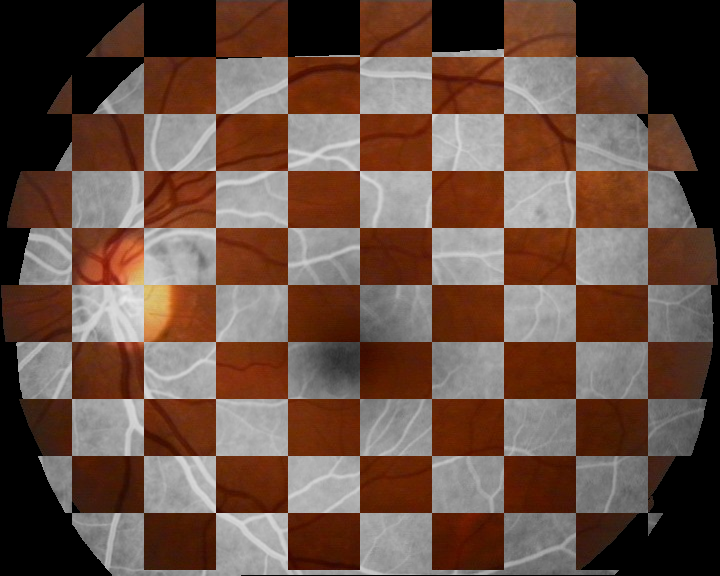

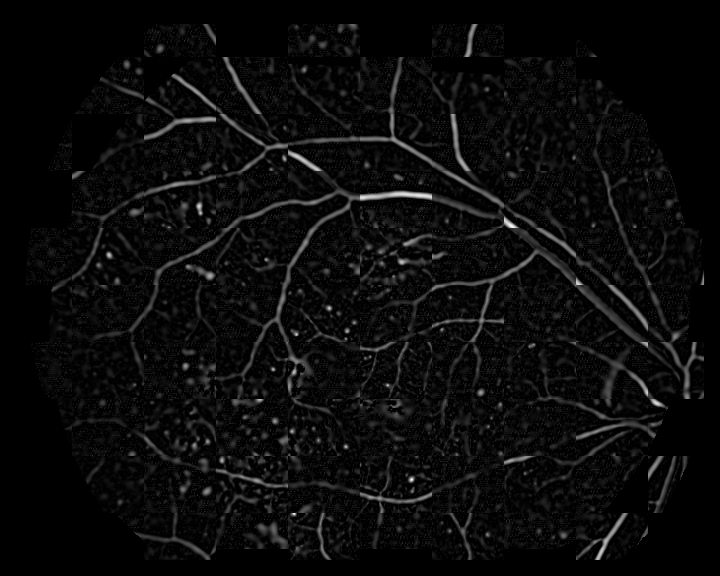

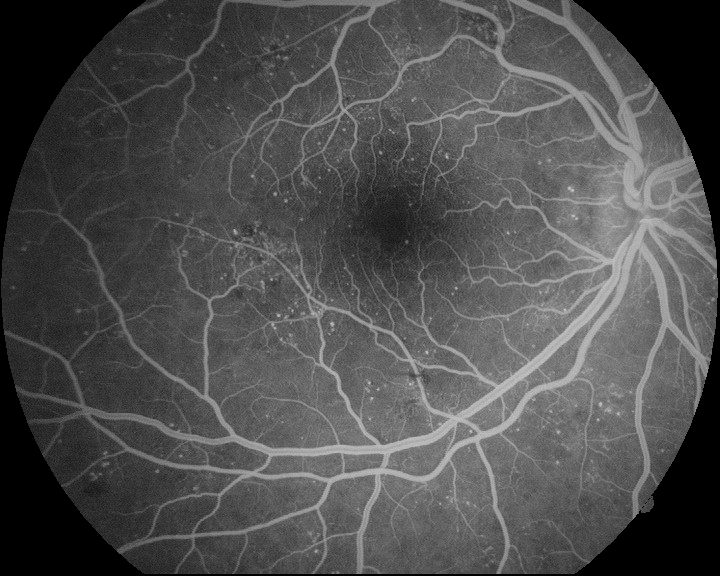

Figure 1: Example of multimodal image pair and the results of the landmark detection method—input CFR and FA, binary vessel trees, and detected landmarks.

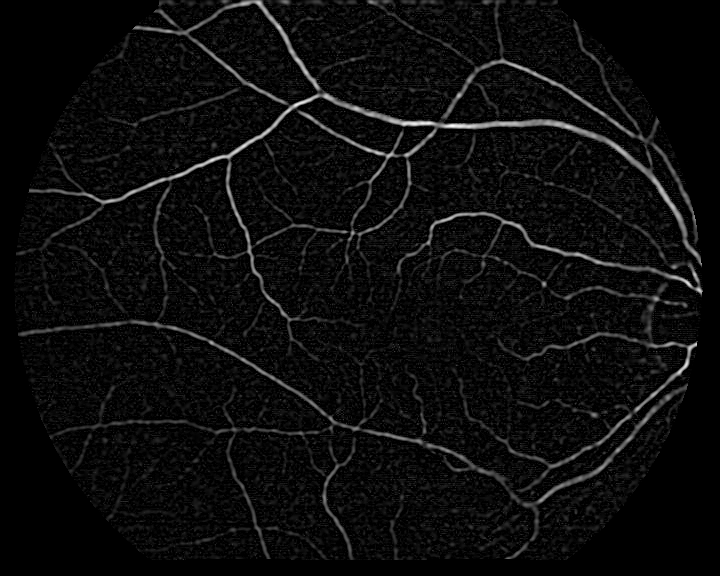

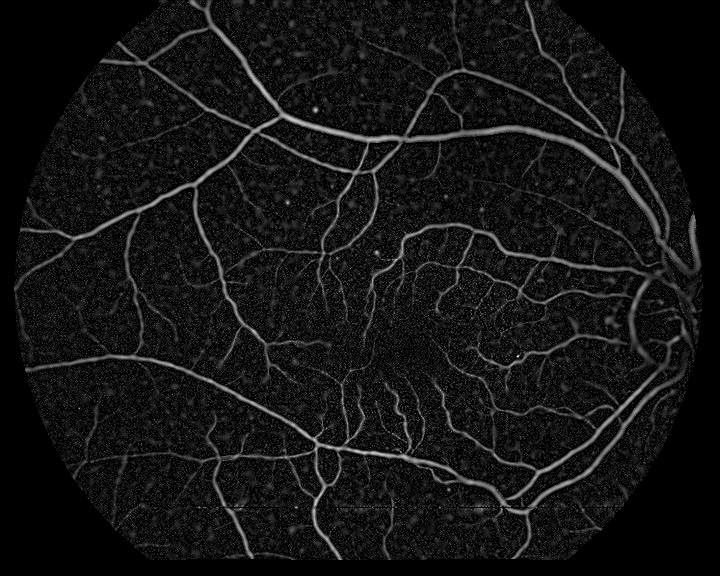

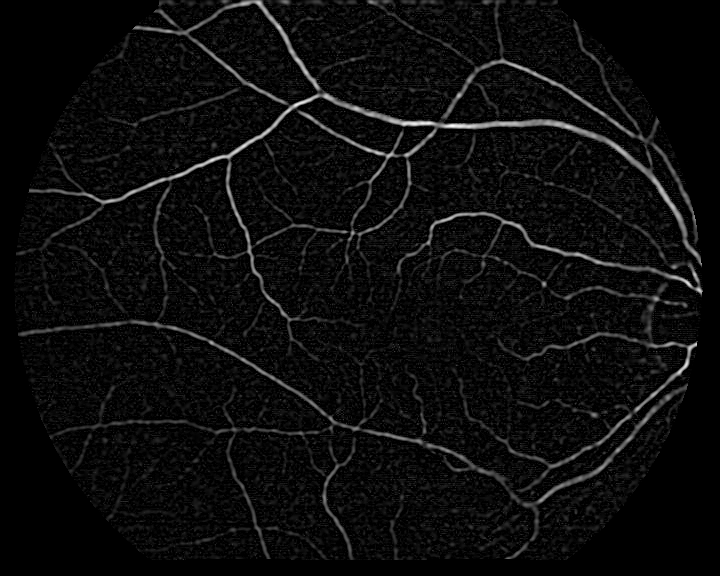

The second phase, IBR, starts from the FBR-estimated transform and adds a vessel enhancement preprocessing step that maps both modalities into a common feature space. The enhancement uses multiscale Laplacian filtering, with spatial derivatives normalized across scales and polarity rectified according to modality, to amplify vessel centerlines and thereby maximize the shared structure on which the similarity measure depends. Normalized cross-correlation (NCC) computed on the enhanced images, denoted VE-NCC, serves as the similarity metric and is suitable for higher-order transformations: affine transformation (AT) and free-form deformation (FFD).
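The vessel enhancement idea can be sketched as follows. This is an illustrative approximation under stated assumptions, not the paper's exact operator: it assumes NumPy, Gaussian smoothing followed by a discrete 5-point Laplacian, normalization by `sigma**2`, a maximum over scales, and hypothetical scale values. The polarity flag reflects that vessels appear dark in CFR and bright in FA, so the Laplacian sign is flipped for FA before negative responses are clipped.

```python
import numpy as np


def gaussian_kernel(sigma):
    """1-D Gaussian kernel truncated at about 3 sigma, normalized to sum 1."""
    radius = int(3 * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return k / k.sum()


def smooth(image, sigma):
    """Separable Gaussian smoothing with edge padding (output keeps shape)."""
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(image, r, mode="edge")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)


def laplacian(image):
    """Discrete 5-point Laplacian with edge padding."""
    p = np.pad(image, 1, mode="edge")
    return p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:] - 4.0 * image


def enhance_vessels(image, sigmas=(1.0, 2.0, 3.0), dark_vessels=True):
    """Multiscale, scale-normalized, polarity-rectified Laplacian response.

    dark_vessels=True  -> vessels darker than background (CFR-like)
    dark_vessels=False -> vessels brighter than background (FA-like)
    The sigma values are illustrative assumptions.
    """
    image = np.asarray(image, dtype=float)
    sign = 1.0 if dark_vessels else -1.0
    responses = [
        np.maximum(sign * sigma ** 2 * laplacian(smooth(image, sigma)), 0.0)
        for sigma in sigmas
    ]
    return np.max(responses, axis=0)
```

With this convention, a dark vessel in one modality and a bright vessel in the other both produce a positive ridge along the centerline, which is what lets a monomodal metric compare the two enhanced images.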

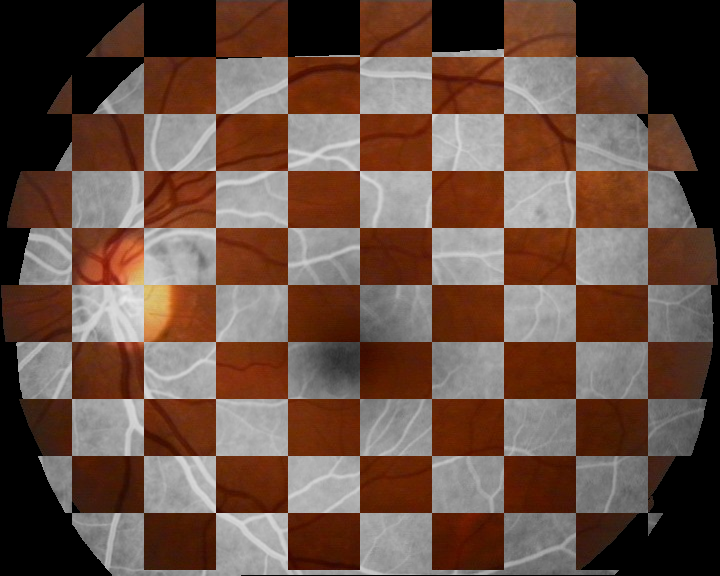

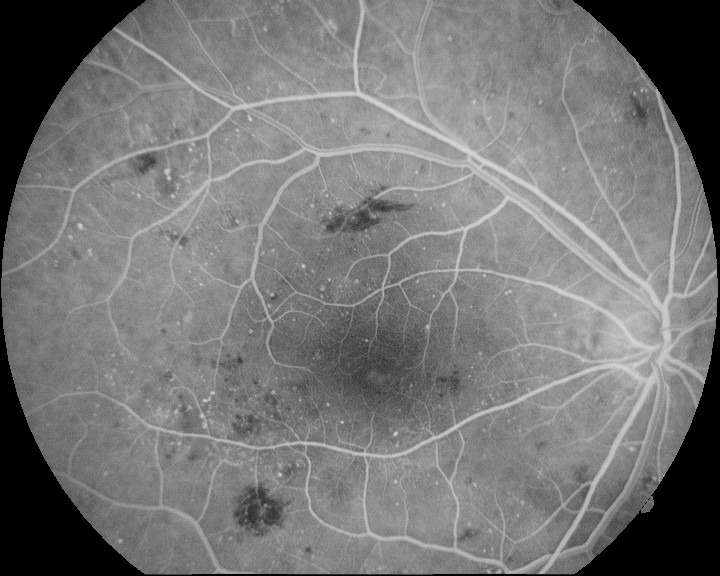

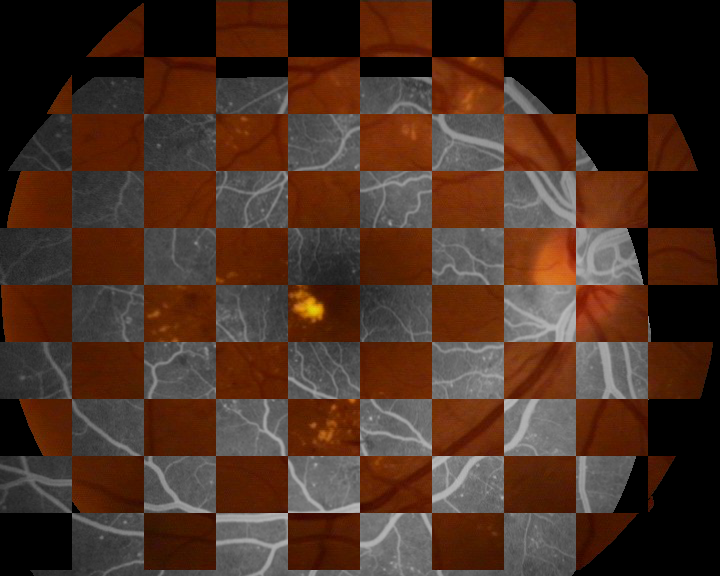

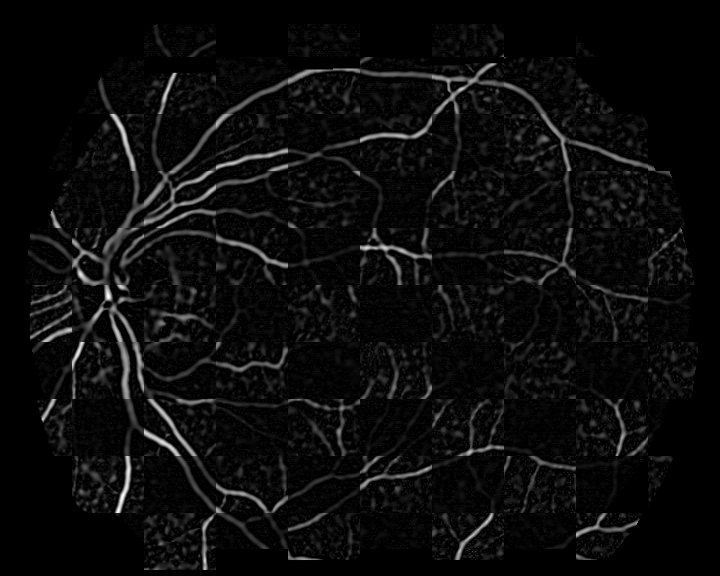

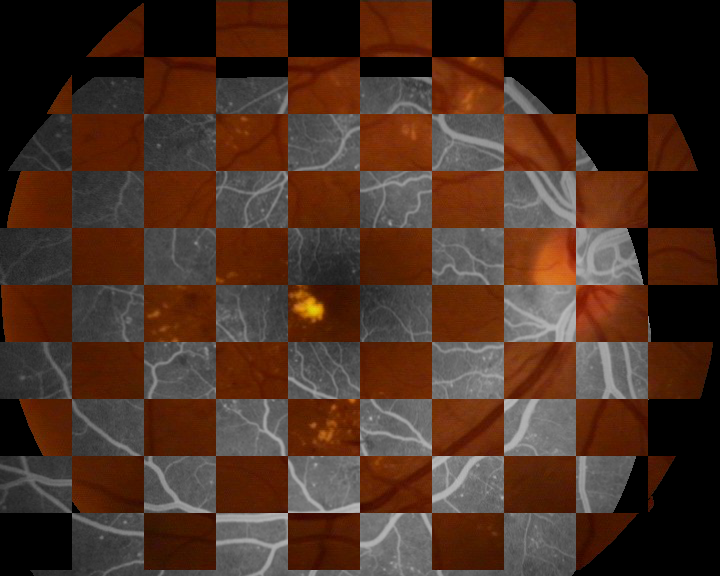

Figure 2: Examples of the vessel enhancement operation applied to a multimodal image pair, illustrating modality unification.

Experimental Evaluation

Experiments used the Isfahan MISP dataset, which contains 59 image pairs covering healthy and pathological cases. Several registration configurations were evaluated: feature-based only, intensity-based only (with affine and FFD models), and various compositions of the hybrid method. Performance was primarily assessed via the VE-NCC metric.
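Since VE-NCC is normalized cross-correlation evaluated on vessel-enhanced images, the metric itself reduces to standard NCC. The sketch below assumes NumPy and two already-enhanced, already-aligned images of equal shape; the function name `ncc` and the choice to return 0.0 for constant inputs are assumptions of this example.

```python
import numpy as np


def ncc(image_a, image_b):
    """Normalized cross-correlation between two equally shaped images.

    Returns a value in [-1, 1]; 1 means the images are identical up to a
    positive gain and an offset. Constant images return 0.0 by convention
    (an assumption of this sketch, since the ratio is undefined there).
    """
    a = np.asarray(image_a, dtype=float).ravel()
    b = np.asarray(image_b, dtype=float).ravel()
    if a.shape != b.shape:
        raise ValueError("images must have the same number of pixels")
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    if denom == 0.0:
        return 0.0
    return float((a * b).sum() / denom)
```

In a registration loop, the moving image would be resampled under the current transform before each call, and the optimizer would seek the transform parameters that maximize this value.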

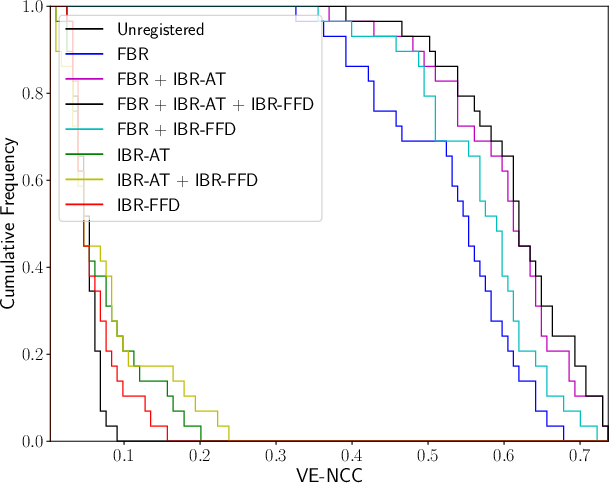

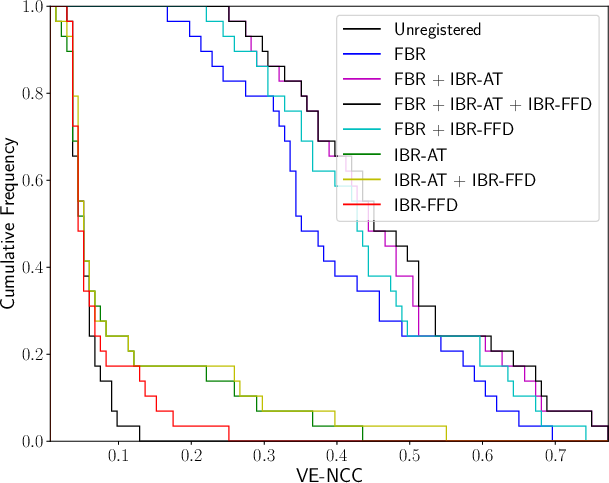

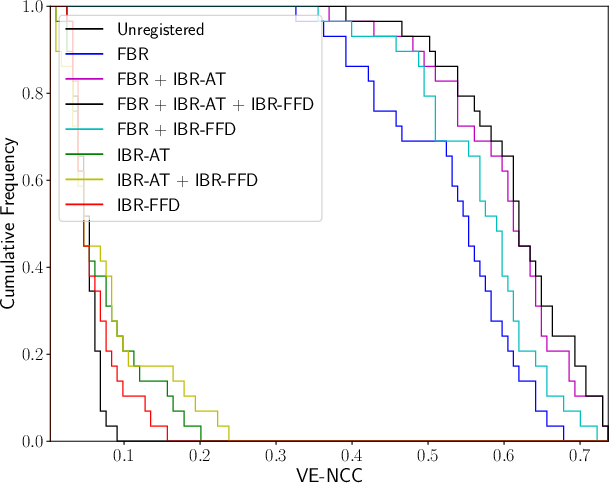

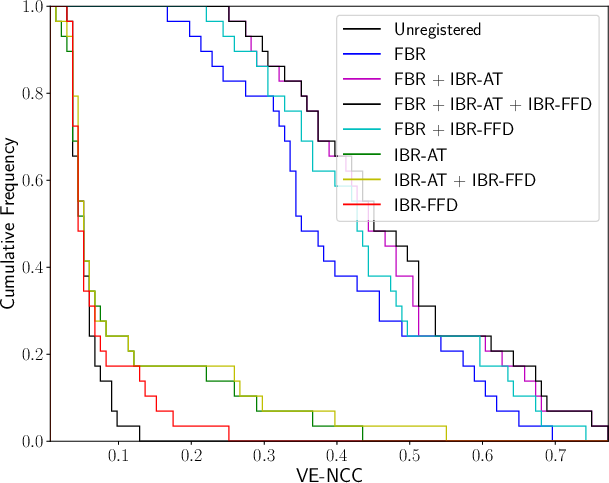

Quantitatively, the hybrid approach combining FBR, affine IBR (IBR-AT), and free-form IBR (IBR-FFD) achieved the highest VE-NCC scores: 0.6123±0.0815 for healthy cases and 0.4758±0.1419 for pathological cases. The VE-NCC cumulative distributions confirmed superior registration outcomes. This is especially notable because standalone IBR frequently failed without FBR initialization, which highlights the critical role of landmark-driven spatial priors.

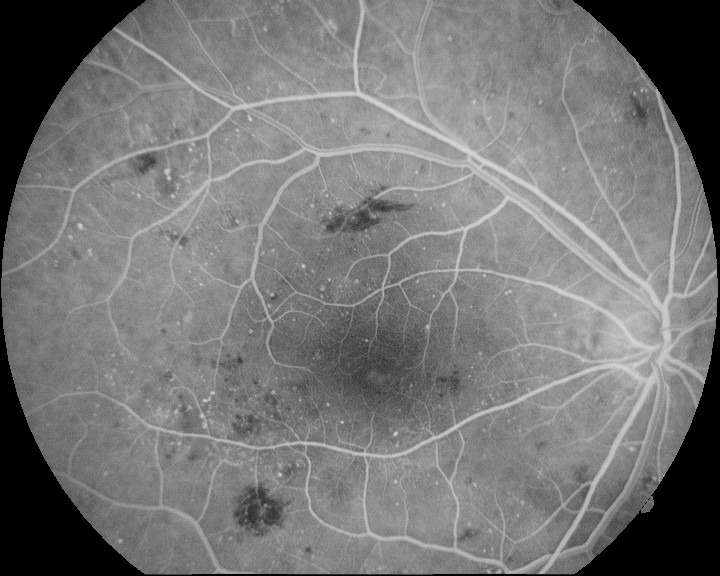

Figure 3: Cumulative distribution of VE-NCC for healthy and pathological cases reflecting registration quality.
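A cumulative distribution of per-pair scores can be computed as sketched below. The convention used here (fraction of image pairs with a score at or below each threshold) is an assumption; the figure may use the complementary convention. The function name `empirical_cdf` and the sample scores are hypothetical and are not values from the experiments.

```python
import bisect


def empirical_cdf(scores, thresholds):
    """Fraction of scores that are <= each threshold."""
    if not scores:
        raise ValueError("scores must not be empty")
    ordered = sorted(scores)
    n = len(ordered)
    return [bisect.bisect_right(ordered, t) / n for t in thresholds]


if __name__ == "__main__":
    # Hypothetical VE-NCC values for four image pairs.
    sample_scores = [0.2, 0.4, 0.4, 0.8]
    print(empirical_cdf(sample_scores, [0.1, 0.4, 1.0]))
```

Under this convention, a curve that stays low for longer indicates better registration, because fewer pairs fall below any given VE-NCC threshold.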

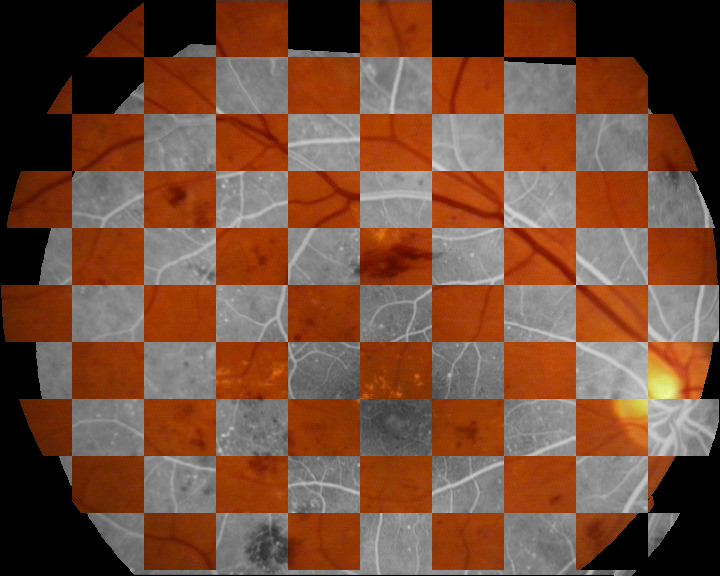

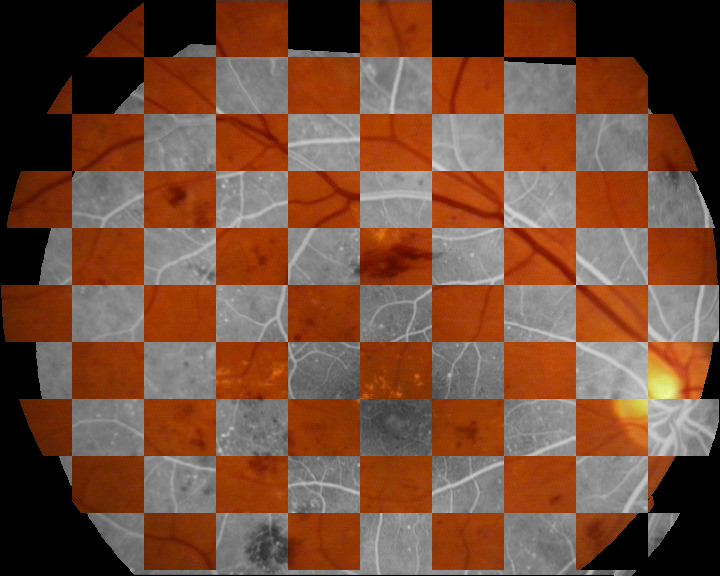

Qualitative analysis corroborated successful multimodal alignment across both healthy and pathological scenarios, indicated by coinciding vascular structures in registered images and enhanced composites.

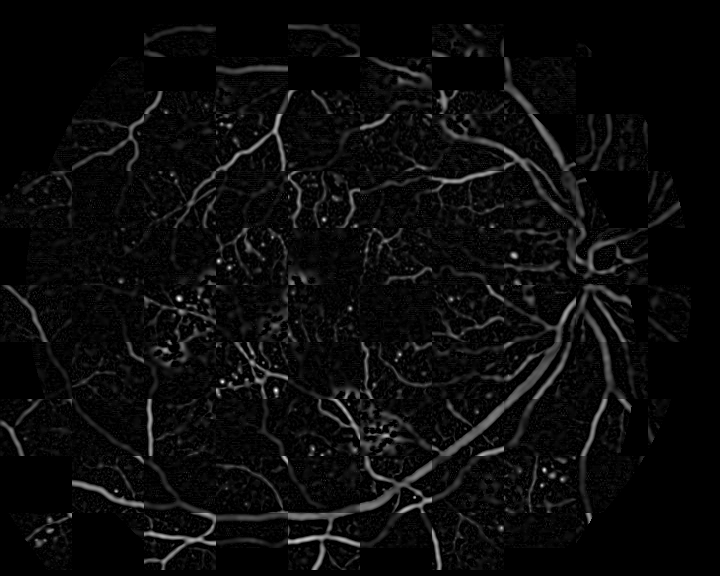

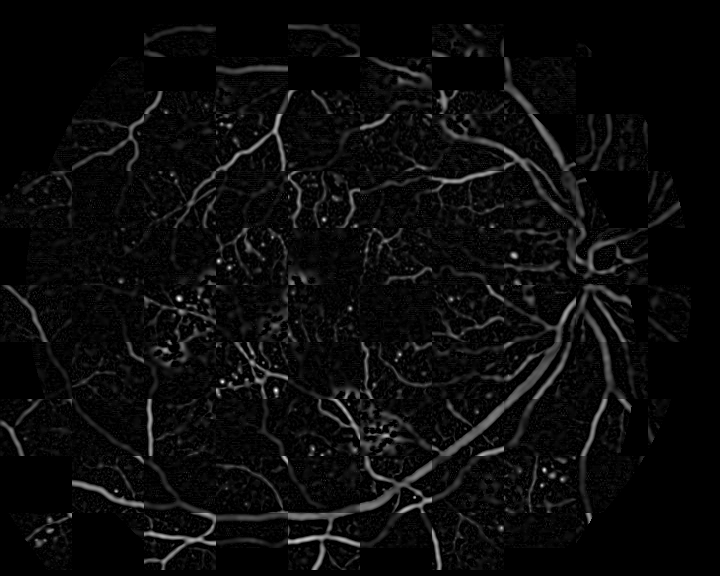

Figure 4: Examples of the multimodal registration with the hybrid approach—original and registered modality pairs, alongside vessel enhancement results.

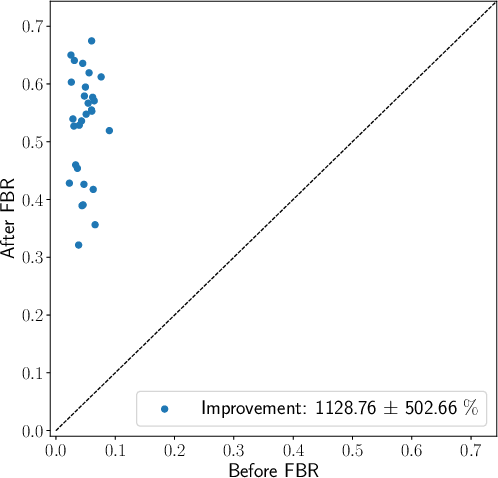

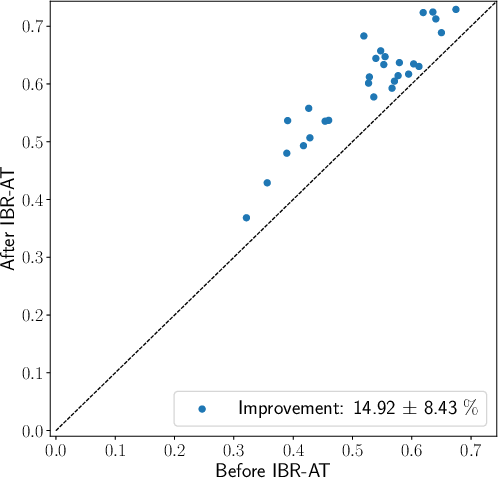

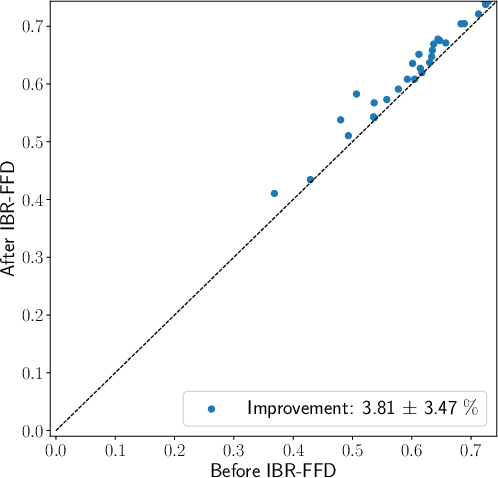

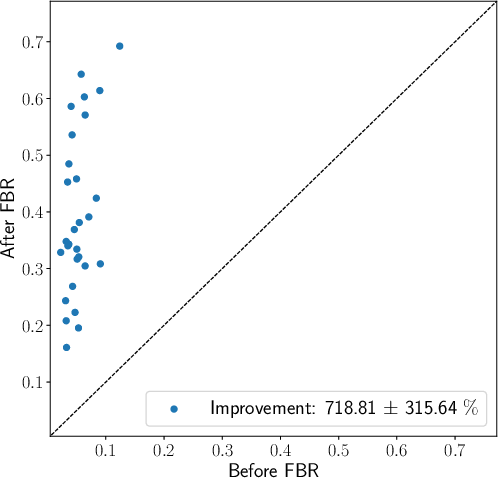

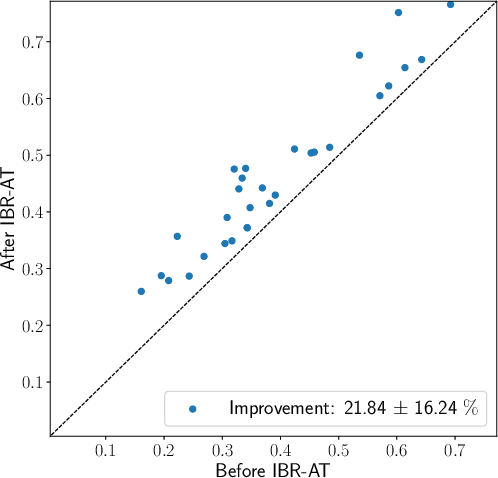

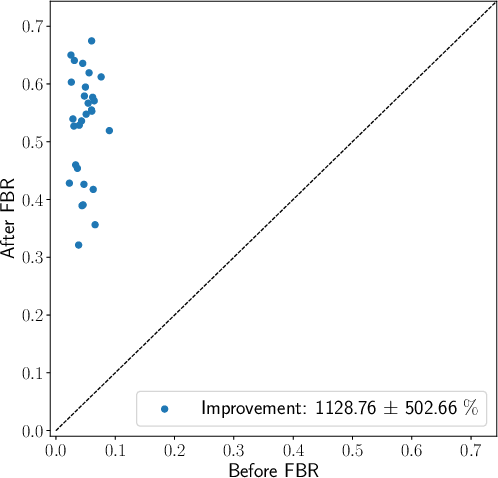

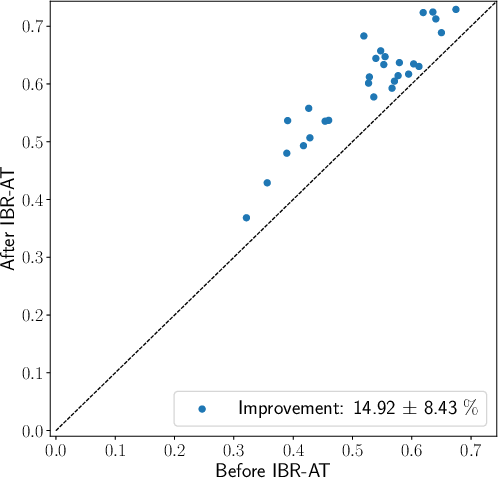

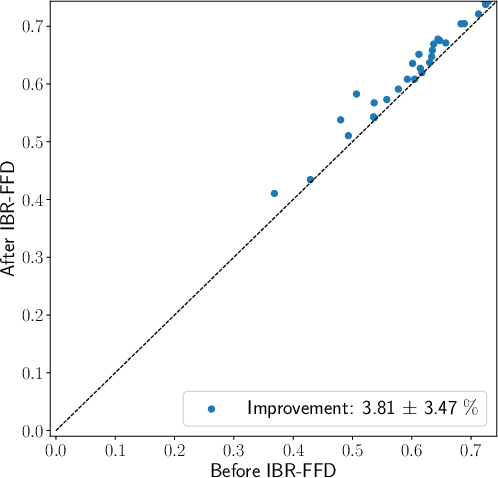

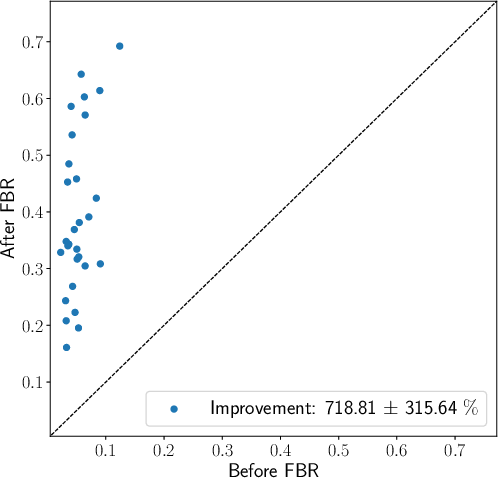

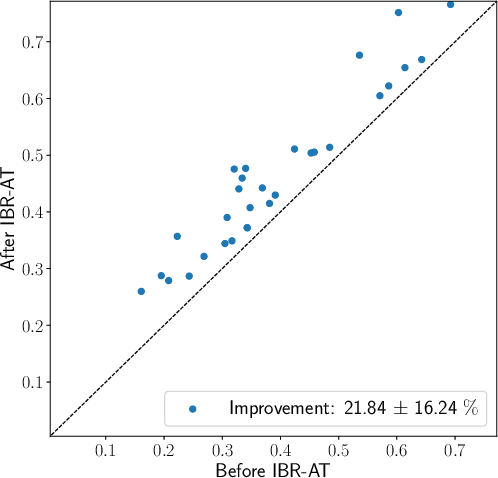

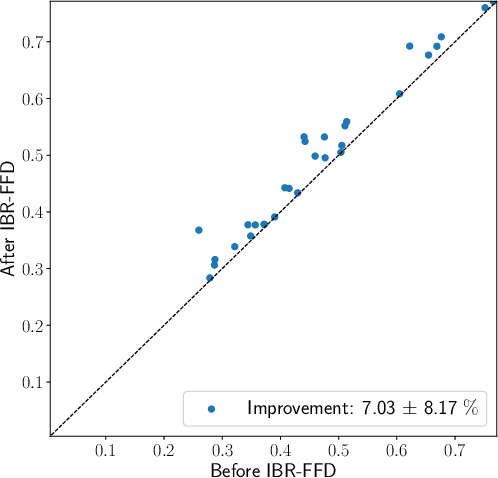

Stepwise analysis revealed that the largest registration improvement comes from the FBR initialization; the subsequent affine and FFD refinements yield further gains with diminishing marginal returns.

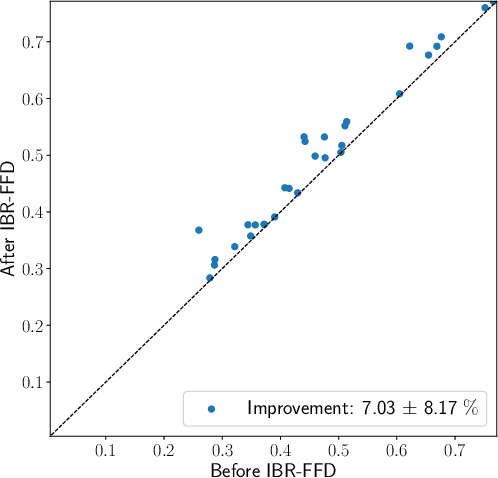

Figure 5: Scatter plots of VE-NCC values before and after each step of the hybrid method, and percentage improvement for each step in healthy and pathological cohorts.
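The per-step percentage improvement can be illustrated with simple arithmetic. The definition below (relative change with respect to the score before the step) is an assumption, since the exact formula is not stated here, and the scores are hypothetical values chosen only to show the diminishing-returns pattern.

```python
def percent_improvement(before, after):
    """Relative change from before to after, in percent of |before|."""
    if before == 0:
        raise ValueError("relative improvement is undefined when before == 0")
    return 100.0 * (after - before) / abs(before)


def stepwise_improvement(scores):
    """Percent improvement between consecutive pipeline stages.

    scores: metric values in stage order, for example
    [unregistered, after FBR, after IBR-AT, after IBR-FFD].
    """
    return [percent_improvement(b, a) for b, a in zip(scores, scores[1:])]


if __name__ == "__main__":
    # Hypothetical VE-NCC values for one image pair across the four stages.
    hypothetical = [0.10, 0.40, 0.50, 0.55]
    print(stepwise_improvement(hypothetical))  # roughly 300, 25 and 10 percent
```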

Pathological cases exhibited slightly lower means and higher variance in VE-NCC, indicating moderate sensitivity to disease-induced structural deviations, yet overall robustness to pathology was observed.

Implications and Future Directions

The presented hybrid registration methodology underscores the efficacy of domain-specific landmark selection in multimodal scenarios. Bypassing generic descriptors and working directly with geometric vessel information reduces computational cost and improves matching reliability. Vessel enhancement preprocessing produces a homogeneous feature space that reconciles modality disparities and allows monomodal similarity metrics to be repurposed for multimodal registration.

Practically, this approach empowers more accurate joint analysis of CFR and FA for diagnostic purposes and research studies. Theoretically, it demonstrates the advantages of domain knowledge in medical imaging registration. Future AI developments may incorporate adaptive landmark extraction, advanced vessel enhancement algorithms, or deep learning-driven registration pipelines to further mitigate pathological variability and enable real-time, large-scale registration across broader imaging modalities.

Conclusion

The hybrid methodology, which combines domain-specific landmark-driven FBR with IBR on vessel-enhanced images, achieves reliable and accurate multimodal retinal image registration. The VE-NCC results and the stepwise analysis show that it outperforms each constituent method applied on its own. Its robustness and accuracy make it a candidate for clinical integration and further research, supporting improved diagnostic workflows and multimodal analysis.