VGG-T³: Offline Feed-Forward 3D Reconstruction at Scale

This presentation explores VGG-T³, a breakthrough method that transforms expensive global attention-based 3D reconstruction into a scalable linear-time operation. By compressing scene-level memory into a compact neural network through test-time training, VGG-T³ achieves up to 33× speedup while maintaining competitive geometric accuracy. The talk examines the core linearization technique, empirical validation across standard benchmarks and thousand-image collections, distributed inference capabilities, and a novel unified framework for both reconstruction and visual localization, all without sacrificing the global feature aggregation that makes multi-view understanding possible.

Script

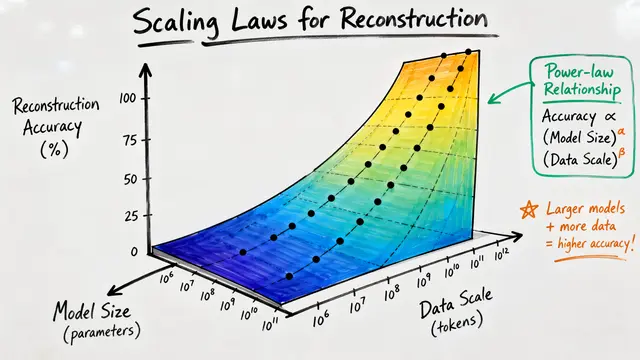

State-of-the-art 3D reconstruction from multiple images works beautifully—until you try to scale it. The best feed-forward models use global attention to aggregate information across all views, but that comes with quadratic computational cost. For a thousand images, that's not just slow—it's prohibitively expensive.

The core problem lies in how these models store and query scene information. Every image produces key-value pairs stored in a variable-length memory bank. When the model needs to reconstruct a point, it queries this entire bank using softmax attention—and that operation grows quadratically with the number of images. A hundred views? Manageable. A thousand? The memory and compute requirements explode.
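To make the scaling concrete, here is a rough back-of-the-envelope sketch. It only counts query-key pairs in one global attention layer; the figure of 1,000 tokens per image is an assumption chosen for illustration, not a number from the paper.

```python
def total_tokens(num_images, tokens_per_image):
    """Tokens in the global memory bank: grows linearly with the image count."""
    return num_images * tokens_per_image


def attention_pairs(num_images, tokens_per_image):
    """Query-key pairs scored by one global softmax attention layer.

    Every token attends to every token, so the count is the square
    of the bank size.
    """
    bank = total_tokens(num_images, tokens_per_image)
    return bank * bank


TOKENS_PER_IMAGE = 1_000  # hypothetical value, for illustration only

for views in (100, 1_000):
    print(views, total_tokens(views, TOKENS_PER_IMAGE),
          attention_pairs(views, TOKENS_PER_IMAGE))
```

Going from a hundred views to a thousand multiplies the token count by 10 but the number of attention pairs by 100, which is the gap between "manageable" and "explodes".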

VGG-T³ breaks this barrier with a deceptively simple idea.

Instead of maintaining a growing memory bank, VGG-T³ compresses the entire key-value mapping into a small neural network—specifically, a multilayer perceptron. During test time, the model optimizes this MLP to memorize how keys map to values for that specific scene. Once trained, querying the scene becomes a simple forward pass through the MLP. No more quadratic attention. The cost scales linearly with the number of images.
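A minimal sketch of that idea, in plain Python, might look like the following. It is not the authors' implementation: the layer sizes, the tanh activation, the squared-error loss, the plain SGD update, and the names `init_mlp`, `ttt_step`, `fit_scene`, and `query` are all assumptions made for illustration.

```python
import math
import random


def init_mlp(d_in, d_hidden, d_out, seed=0):
    """Small two-layer MLP (no biases) whose weights act as the scene memory."""
    rng = random.Random(seed)
    w1 = [[rng.gauss(0, 0.3) for _ in range(d_in)] for _ in range(d_hidden)]
    w2 = [[rng.gauss(0, 0.3) for _ in range(d_hidden)] for _ in range(d_out)]
    return w1, w2


def forward(mlp, key):
    w1, w2 = mlp
    hidden = [math.tanh(sum(w * x for w, x in zip(row, key))) for row in w1]
    out = [sum(w * h for w, h in zip(row, hidden)) for row in w2]
    return hidden, out


def ttt_step(mlp, key, value, lr):
    """One SGD step on 0.5 * ||mlp(key) - value||^2; returns the pre-update loss."""
    w1, w2 = mlp
    hidden, out = forward(mlp, key)
    err = [o - v for o, v in zip(out, value)]
    # Backpropagate through w2 and tanh before touching any weights.
    grad_hidden = [
        sum(err[i] * w2[i][j] for i in range(len(w2))) * (1 - hidden[j] ** 2)
        for j in range(len(hidden))
    ]
    for i, row in enumerate(w2):
        for j in range(len(row)):
            row[j] -= lr * err[i] * hidden[j]
    for j, row in enumerate(w1):
        for k in range(len(row)):
            row[k] -= lr * grad_hidden[j] * key[k]
    return 0.5 * sum(e * e for e in err)


def fit_scene(keys, values, d_hidden=16, epochs=200, lr=0.05):
    """Test-time training: memorize this scene's key -> value mapping."""
    mlp = init_mlp(len(keys[0]), d_hidden, len(values[0]))
    losses = []
    for _ in range(epochs):
        losses.append(sum(ttt_step(mlp, k, v, lr) for k, v in zip(keys, values)))
    return mlp, losses


def query(mlp, key):
    """Reading the memory is one forward pass, independent of the image count."""
    return forward(mlp, key)[1]
```

The point of the sketch is the shape of the computation: fitting touches each key-value pair a fixed number of times, so cost grows linearly with the number of tokens, and the memory that `query` reads stays the same size no matter how many images were compressed into it.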

The transformation is surgical. Every global attention layer in the original architecture gets replaced by a test-time trained MLP. The pre-trained local attention blocks remain unchanged, preserving per-image feature extraction. What changes is how the model aggregates information across views. The fixed-size MLP enables something the original model couldn't do: distributed inference across multiple GPUs, because only the small MLP weights need synchronization—not the entire token space.

The visual evidence is striking. On standard benchmarks, VGG-T³ produces pointmaps and depth estimates that closely match the quadratic-time models it's derived from, while dramatically outperforming previous linear-time approaches. The reconstruction quality remains globally consistent even as the image count scales up. On the DTU and ETH3D benchmarks, VGG-T³ reduces error by a factor of 2 to 2.5 compared to the previous best linear model. The key enabler is a technique called sequence mixing: applying short convolutions to the value space before compression. Because keys and values are both linear projections of the same tokens, the unmixed mapping between them is trivially linear; mixing in neighboring tokens breaks that relationship and allows the MLP to learn more expressive mappings.
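As a rough illustration of sequence mixing, the sketch below runs a short 1D convolution along the token axis of the values. The kernel length of 3, the zero padding, the centered (non-causal) window, and the single kernel shared across channels are all assumptions; the function name `mix_values` is hypothetical.

```python
def mix_values(values, kernel):
    """Short 1D convolution over the token axis, zero-padded at both ends.

    values: list of per-token value vectors (each a list of floats).
    kernel: odd-length list of weights, shared across all channels.
    The kernel is applied without flipping (cross-correlation), as is
    common in deep learning libraries.
    """
    half = len(kernel) // 2
    num_tokens = len(values)
    dim = len(values[0])
    mixed = []
    for t in range(num_tokens):
        out = [0.0] * dim
        for offset, weight in enumerate(kernel):
            src = t + offset - half
            if 0 <= src < num_tokens:
                for c in range(dim):
                    out[c] += weight * values[src][c]
        mixed.append(out)
    return mixed


values = [[0.0], [4.0], [8.0]]
print(mix_values(values, [0.25, 0.5, 0.25]))  # [[1.0], [4.0], [5.0]]
```

After mixing, each target value depends on its neighbors as well as its own token, so it is no longer a fixed linear function of the matching key.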

The real test comes at scale. On the 7-Scenes dataset with a thousand images, VGG-T³ completes reconstruction in under a minute, more than 11 times faster than the quadratic baseline. The speedup grows with scene size. For truly massive collections, the method supports distributed inference: shard the images across GPUs, synchronize only the compact MLP gradients during test-time training, and reconstruct in parallel. This wasn't possible with attention-based models.
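The synchronization pattern can be sketched in a few lines. To keep the example short, a single linear map stands in for the MLP, and `all_reduce_sum` is a plain Python stand-in for a real collective operation across GPUs; both substitutions, along with every function name here, are assumptions for illustration.

```python
def shard_gradient(weights, keys, values):
    """Summed squared-error gradient over one shard for value ~ W @ key."""
    grad = [[0.0] * len(weights[0]) for _ in weights]
    for key, value in zip(keys, values):
        pred = [sum(w * x for w, x in zip(row, key)) for row in weights]
        for i, (p, v) in enumerate(zip(pred, value)):
            for j, x in enumerate(key):
                grad[i][j] += (p - v) * x
    return grad


def all_reduce_sum(grads):
    """Element-wise sum of per-worker gradients (stand-in for a collective)."""
    rows, cols = len(grads[0]), len(grads[0][0])
    return [[sum(g[i][j] for g in grads) for j in range(cols)]
            for i in range(rows)]


def distributed_step(weights, shards, lr):
    """One training step where only weight-shaped gradients cross workers."""
    # In a real system each shard's gradient is computed on its own GPU.
    grads = [shard_gradient(weights, keys, values) for keys, values in shards]
    total = all_reduce_sum(grads)
    count = sum(len(keys) for keys, _ in shards)
    return [[w - lr * g / count for w, g in zip(w_row, g_row)]
            for w_row, g_row in zip(weights, total)]
```

What gets exchanged has the shape of the weights, not of the token bank, so the communication cost does not grow with the number of images. Splitting the data into shards also gives the same update as processing everything on one device, up to floating-point rounding.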

Here's where the approach gets particularly interesting. The test-time trained MLP doesn't just enable efficient reconstruction—it creates a compressed scene representation you can query with new images. Feed in a novel view, and the model predicts its camera pose relative to the reconstructed scene. On the Wayspots benchmark, VGG-T³ dramatically outperforms previous linear-time methods for visual localization. The authors even demonstrate localizing a tourist photo against a scene reconstructed from street-view imagery captured seven years earlier. Same framework, two tasks.

The method isn't without compromises. Camera pose estimation accuracy trails both the quadratic baselines and models designed for ordered sequences. The authors attribute this to structural mismatches between the pose prediction heads and the fixed-size MLP representation. There's also a residual performance gap in extremely complex scenes where full attention can better capture heterogeneous feature relationships. These limitations point to open questions about adaptive computation and specialized architectures for different geometric modalities within a unified linear framework.

VGG-T³ proves that global scene understanding doesn't require quadratic complexity: you can compress it into a compact neural representation through test-time training and unlock both massive speedups and new query capabilities.