Perceiver IO: One Architecture for Everything

This presentation explores Perceiver IO, a general-purpose neural architecture that breaks the mold of domain-specific models by handling structured inputs and outputs of any size. We will cover how it decouples computational cost from input/output length through query-based decoding and its success across language, vision, and multimodal tasks.

Script

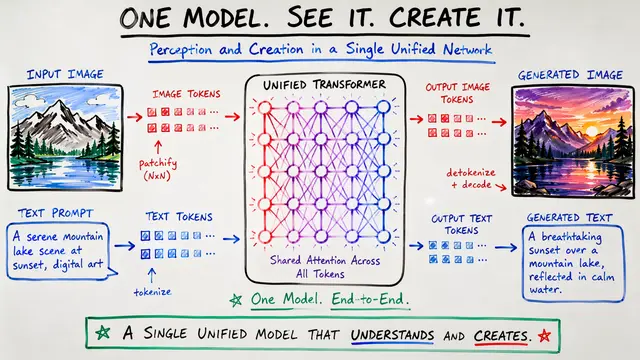

Imagine a single neural network capable of seeing images, reading text, and processing complex entity sets without ever needing a custom backbone for each task. The authors of Perceiver IO propose exactly this: a unified architecture designed to handle diverse structured inputs and outputs with simplicity and scale.

Existing models often require tailored engineering for different data types, and standard Transformers scale poorly because the cost of self-attention grows quadratically with sequence length. While the original Perceiver solved the input problem, it was still limited in the complexity of the outputs it could generate.
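To make the scaling problem concrete, here is a rough back-of-the-envelope sketch that counts pairwise attention scores. The function names and the choice of 256 latents are illustrative assumptions, not figures from the paper.

```python
def self_attention_scores(n: int) -> int:
    """Pairwise scores when every one of n elements attends to every other."""
    return n * n


def cross_attention_scores(n: int, num_latents: int) -> int:
    """Pairwise scores when a fixed set of latents attends to n inputs."""
    return n * num_latents


NUM_LATENTS = 256  # hypothetical latent count, chosen only for illustration

for n in (512, 2048, 224 * 224):
    full = self_attention_scores(n)
    latent = cross_attention_scores(n, NUM_LATENTS)
    print(f"n={n:>6}  self-attention={full:>13,}  latent cross-attention={latent:>11,}")
```

Doubling the input length quadruples the first count but only doubles the second, which is the gap a latent bottleneck is meant to exploit.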

To solve this, the researchers introduced a Read-Process-Write workflow where the heavy lifting happens in a small, fixed-size latent space. Because inputs and outputs touch the latents only through cross-attention, computational cost grows just linearly with the size of your input or output, and the depth of the latent processing stack no longer depends on either.
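The Read-Process-Write idea can be sketched in a few lines of NumPy. This is a deliberately stripped-down illustration, not the authors' implementation: it assumes single-head attention with no learned projections, layer norms, or MLPs, and it assumes inputs, latents, and queries all share one channel width. The names `attend` and `perceiver_io_forward` are hypothetical.

```python
import numpy as np


def attend(queries, keys_values):
    """Single-head scaled dot-product attention without learned projections."""
    d = queries.shape[-1]
    scores = queries @ keys_values.T / np.sqrt(d)
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ keys_values


def perceiver_io_forward(inputs, latents, output_queries, num_process_layers=4):
    # Read: the latents cross-attend to the inputs (cost ~ num_inputs * num_latents).
    z = latents + attend(latents, inputs)
    # Process: self-attention among latents only (cost ~ num_latents ** 2 per layer).
    for _ in range(num_process_layers):
        z = z + attend(z, z)
    # Write: each output query cross-attends to the latents
    # (cost ~ num_outputs * num_latents).
    return attend(output_queries, z)


rng = np.random.default_rng(0)
dim = 32
inputs = rng.normal(size=(1000, dim))        # e.g. 1000 input elements
latents = rng.normal(size=(64, dim))         # small, fixed-size latent array
output_queries = rng.normal(size=(10, dim))  # one query per desired output

outputs = perceiver_io_forward(inputs, latents, output_queries)
print(outputs.shape)  # (10, 32)
```

Notice that the loop over processing layers never touches `inputs` or `output_queries`, so in this sketch making the model deeper adds no cost that depends on input or output size.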

By looking at this architecture, we see how the model can be applied to nearly any domain. Whether it is predicting pixel-level motion in optical flow or managing symbolic entities in a game like StarCraft II, the core logic of using cross-attention to bridge different spaces remains the same.

The true innovation lies in how the model produces these structured outputs through the use of flexible output queries.

The authors construct output queries to specify exactly what information to extract from the latents, with one query per desired output element. For language, these might be position embeddings, while for tasks like optical flow, the queries can actually include features from the input images to capture fine details.
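Here is a small sketch of what building those two kinds of queries might look like. Everything in it is an assumption for illustration: the helper names are hypothetical, random arrays stand in for learned embeddings, and concatenating per-pixel features with a position encoding is just one plausible way for a query to carry input features.

```python
import numpy as np


def position_queries(num_positions, dim, rng):
    """Language-style queries: one embedding per output position.

    Random values stand in for embeddings that would be learned in practice.
    """
    return rng.normal(size=(num_positions, dim))


def flow_queries(image_features, position_encoding):
    """Optical-flow-style queries: per-pixel features joined with position info."""
    h, w, _ = image_features.shape
    feats = image_features.reshape(h * w, -1)
    pos = position_encoding.reshape(h * w, -1)
    return np.concatenate([feats, pos], axis=-1)


rng = np.random.default_rng(0)

text_q = position_queries(num_positions=128, dim=64, rng=rng)
print(text_q.shape)  # (128, 64): one query per output position

features = rng.normal(size=(8, 8, 48))   # hypothetical per-pixel input features
positions = rng.normal(size=(8, 8, 16))  # hypothetical per-pixel position encoding
flow_q = flow_queries(features, positions)
print(flow_q.shape)  # (64, 64): one query per pixel
```

In both cases the number of queries sets the number of outputs, so the same decoder can produce 128 token predictions or one flow vector per pixel.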

Moving beyond theory, the authors showed that a byte-level model without any tokenizer can match a standard BERT model on GLUE benchmarks. Remarkably, they also achieved state-of-the-art results in optical flow without using traditional techniques like cost volumes or image warping.

The model's visual understanding is impressive, as it successfully propagates flow through occluded areas and captures extremely subtle motions of small objects. This demonstrates that the general-purpose query-based approach can compete with or outperform highly specialized vision algorithms.

Despite its versatility, the model still faces challenges when handling massive inputs that must be seen all at once. For extremely large outputs, the researchers currently rely on sampling techniques during training to keep the memory footprint manageable.
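The sampling trick for large outputs can be illustrated as follows. This is a minimal sketch under assumed sizes (10,000 outputs, 512 sampled per step); the helper name `sample_query_indices` is hypothetical and the arrays are random placeholders rather than real data.

```python
import numpy as np


def sample_query_indices(num_outputs, num_samples, rng):
    """Pick a random subset of output positions, without repeats."""
    return rng.choice(num_outputs, size=num_samples, replace=False)


rng = np.random.default_rng(0)
num_outputs, query_dim, target_dim = 10_000, 16, 2

all_queries = rng.normal(size=(num_outputs, query_dim))  # one query per output
all_targets = rng.normal(size=(num_outputs, target_dim))  # matching ground truth

# During a training step, decode and score only the sampled subset.
idx = sample_query_indices(num_outputs, num_samples=512, rng=rng)
batch_queries = all_queries[idx]
batch_targets = all_targets[idx]

print(batch_queries.shape, batch_targets.shape)  # (512, 16) (512, 2)
```

Because each query is decoded independently of the others, the full set of queries can still be used at inference time, for example by decoding them in chunks.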

Perceiver IO marks a significant step toward a truly domain-agnostic intelligence that can perceive and act across any data structure with a single, elegant design. To dive deeper into this research, head over to EmergentMind.com.