Text2BIM: From Words to Buildings with AI Agents

This presentation explores Text2BIM, a groundbreaking framework that uses Large Language Model agents to transform natural language descriptions into complete 3D building information models. The talk examines how orchestrating specialized AI agents (product owner, architect, programmer, and reviewer) can overcome the cognitive burden of complex BIM authoring tools, with the best-performing models exceeding 99% compliance with architectural rules, while making architectural design accessible through simple text prompts.

Script

Designing a building currently demands mastery of complex software interfaces that stand between intention and creation. Text2BIM collapses that barrier entirely, turning natural language into complete architectural models through coordinated artificial intelligence agents.

Traditional Building Information Modeling software forces architects to navigate layers of menus and commands, spending cognitive energy on tool operation rather than creative problem-solving. The gap between what designers envision and what they can quickly express through software remains frustratingly wide.

Text2BIM bridges this gap through specialized artificial intelligence agents, each performing a distinct role in the design pipeline.

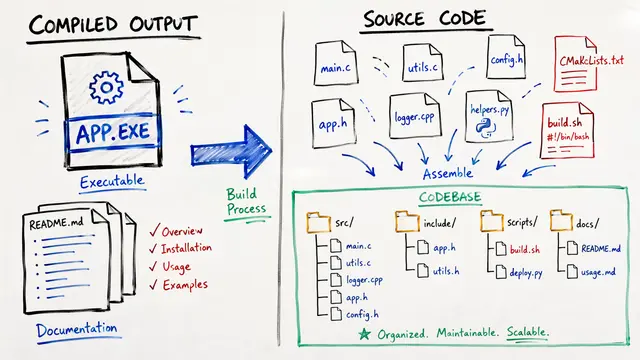

Each agent specializes: one enriches your initial prompt, another creates architectural plans respecting design rules, a third writes the code that generates the actual model, and a fourth checks quality against domain standards. This division of labor mirrors how human design teams collaborate, but operates at machine speed.
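The division of labor described above can be sketched as a simple pipeline. This is a minimal illustration, not the framework's actual code: the function `run_pipeline`, the `PipelineResult` type, and the retry loop that feeds reviewer findings back to the programmer are all assumptions made for the example, and each agent is represented by a plain callable standing in for a language model call.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical stand-ins: each agent is a callable from text to text.
Agent = Callable[[str], str]
# Hypothetical reviewer: takes generated code, returns a list of issue strings.
Checker = Callable[[str], List[str]]


@dataclass
class PipelineResult:
    enriched_prompt: str
    plan: str
    code: str
    issues: List[str] = field(default_factory=list)
    rounds: int = 0  # number of repair rounds that were needed


def run_pipeline(
    user_prompt: str,
    product_owner: Agent,
    architect: Agent,
    programmer: Agent,
    reviewer_check: Checker,
    max_rounds: int = 3,
) -> PipelineResult:
    """Run the four roles in sequence, retrying the programmer on issues."""
    enriched = product_owner(user_prompt)   # 1. enrich the initial prompt
    plan = architect(enriched)              # 2. produce an architectural plan
    code = programmer(plan)                 # 3. write model-generating code
    issues = reviewer_check(code)           # 4. check the result for problems

    rounds = 0
    # Assumed feedback loop: hand the issues back until clean or out of budget.
    while issues and rounds < max_rounds:
        rounds += 1
        feedback = plan + "\nFix these issues:\n" + "\n".join(issues)
        code = programmer(feedback)
        issues = reviewer_check(code)

    return PipelineResult(enriched, plan, code, issues, rounds)
```

Keeping each role behind a plain callable means any of the agents can be swapped for a different model, or for a deterministic stub during testing, without touching the orchestration logic.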

Where traditional workflows demand that architects translate every design decision into precise software commands, Text2BIM accepts plain descriptions and handles the technical translation automatically. The framework encapsulates complex API functions into higher-level tools the language models can invoke, while rule-based checkers catch violations before they propagate.
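To illustrate what wrapping low-level API functions into a higher-level tool might look like, here is a small sketch. Everything in it is hypothetical: `ToyModel` is a stand-in for a BIM authoring API rather than any real product's interface, and the tool name `create_rectangular_room` and the `invoke` dispatcher are invented for the example.

```python
from typing import Callable, Dict, List, Tuple

Point = Tuple[float, float]


class ToyModel:
    """Hypothetical stand-in for a low-level BIM authoring API."""

    def __init__(self) -> None:
        self.elements: List[dict] = []

    def add_element(self, kind: str, **props) -> int:
        """Store one element and return its id (its index in the list)."""
        self.elements.append({"kind": kind, **props})
        return len(self.elements) - 1


# Registry of higher-level tools that a language model could call by name.
TOOLS: Dict[str, Callable] = {}


def tool(fn: Callable) -> Callable:
    """Register a function as an invocable tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def create_rectangular_room(
    model: ToyModel,
    room_name: str,
    origin: Point,
    width: float,
    depth: float,
    height: float = 3.0,
) -> List[int]:
    """Create four walls and a floor slab for one rectangular room."""
    x, y = origin
    corners = [(x, y), (x + width, y), (x + width, y + depth), (x, y + depth)]
    ids = []
    for i in range(4):
        # Each wall runs from one corner to the next, closing the loop.
        ids.append(
            model.add_element(
                "wall",
                room=room_name,
                start=corners[i],
                end=corners[(i + 1) % 4],
                height=height,
            )
        )
    ids.append(model.add_element("slab", room=room_name, outline=corners))
    return ids


def invoke(model: ToyModel, tool_name: str, **kwargs):
    """Dispatch a tool call by name, as an agent's structured output might."""
    if tool_name not in TOOLS:
        raise KeyError(f"Unknown tool: {tool_name}")
    return TOOLS[tool_name](model, **kwargs)
```

With this shape, one tool call replaces five low-level calls, so the language model only has to get a room's name and dimensions right rather than the coordinates of every wall.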

The framework was tested across multiple frontier language models. This comparison reveals how GPT-4o, Gemini 1.5 Pro, and Mistral Large 2 each interpret the same architectural prompt, producing structurally valid but stylistically distinct buildings. The wireframe views expose the underlying geometry and spatial organization, while rendered versions show how semantic information translates to visual output.

When evaluated against architectural standards, the strongest models excelled. GPT-4o and Mistral Large 2 both exceeded 99% compliance with domain-specific rules, a remarkable achievement given the complexity of spatial reasoning and building codes. Gemini 1.5 Pro was less consistent, which suggests that not all language models handle structured design constraints equally well.

Beyond rule compliance, the framework was assessed on requirement satisfaction. This matrix reveals a nuanced picture: agents often generated relevant architectural features even when users didn't explicitly request them, shown by the zero values. The negative scores mark genuine omissions where specified requirements went unmet, highlighting areas where spatial understanding still needs refinement.

Despite these successes, limitations persist. The agents sometimes struggle with intricate floor plans requiring sophisticated spatial logic, like ensuring bathrooms don't open directly into living rooms or that circulation paths make practical sense. Moving from conceptual models to construction-ready designs with full engineering detail remains an open frontier.
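The bathroom example shows the kind of spatial logic that can be written as an explicit rule. The sketch below assumes a very simple representation, with a mapping from room ids to room types and a list of doors given as pairs of room ids. The names `FORBIDDEN_PAIRS` and `check_door_adjacency` are hypothetical and are not taken from the framework.

```python
from typing import Dict, List, Tuple

# Assumed rule set: pairs of room types that must not share a door.
FORBIDDEN_PAIRS = {frozenset({"bathroom", "living_room"})}


def check_door_adjacency(
    room_types: Dict[str, str],
    doors: List[Tuple[str, str]],
) -> List[str]:
    """Return one message per door that links a forbidden pair of room types."""
    violations = []
    for a, b in doors:
        pair = frozenset({room_types[a], room_types[b]})
        if pair in FORBIDDEN_PAIRS:
            violations.append(
                f"Door connects {a} ({room_types[a]}) "
                f"directly to {b} ({room_types[b]})"
            )
    return violations
```

A check like this only catches violations that someone has thought to encode. Judging whether circulation paths make practical sense is harder to reduce to a lookup, which is consistent with the limitation described above.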

Text2BIM demonstrates that the cognitive gap between design intention and technical execution can be narrowed dramatically. When artificial intelligence handles the translation from language to geometry, architects reclaim time for the creative decisions only humans can make. Visit EmergentMind.com to explore this research further and create your own videos.