Embarrassingly Simple Self-Distillation Improves Code Generation

This presentation explores Simple Self-Distillation (SSD), a minimalist post-training method that dramatically improves code generation in large language models without external verifiers, reward models, or reinforcement learning. By training models on their own unfiltered outputs, sampled with controlled temperature and truncation, SSD achieves up to 30% relative improvement on challenging benchmarks. The key insight: SSD resolves a fundamental precision-exploration conflict in token prediction, contextually reshaping distributions to sharpen predictions at unambiguous code positions while preserving diversity at decision points.

Script

What if a code generation model could improve itself by learning from its own raw outputs, with no correctness filter, no reward signal, and no external teacher? Simple Self-Distillation makes this counterintuitive idea work, achieving 30% relative improvement on hard problems.

The method is almost embarrassingly simple. Sample solutions from your own model using specific temperature and truncation settings. Fine-tune on that synthetic data without any correctness verification. Then decode strategically at inference time. No reinforcement learning, no verifiers, no external supervision whatsoever.
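To make the sampling step concrete, here is a minimal sketch of drawing one token with temperature scaling followed by truncation. It assumes truncation means top-k and/or nucleus (top-p) filtering, which the script does not specify; the function names, parameters, and default values are hypothetical and are not taken from the paper.

```python
import math
import random


def truncated_temperature_probs(logits, temperature=1.0, top_k=None, top_p=None):
    """Return sampling probabilities after temperature scaling and truncation.

    Assumption: "truncation" is modeled as top-k and/or top-p filtering.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")

    # Temperature-scaled softmax, shifted by the max for numerical stability.
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Rank token indices from most to least probable.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    keep = order if top_k is None else order[:top_k]

    # Optional nucleus filter: keep the smallest prefix whose mass reaches top_p.
    if top_p is not None:
        kept, cumulative = [], 0.0
        for i in keep:
            kept.append(i)
            cumulative += probs[i]
            if cumulative >= top_p:
                break
        keep = kept

    # Zero out dropped tokens and renormalize the survivors.
    mass = sum(probs[i] for i in keep)
    out = [0.0] * len(probs)
    for i in keep:
        out[i] = probs[i] / mass
    return out


def sample_token(logits, temperature=1.0, top_k=None, top_p=None, rng=random):
    """Draw one token index from the truncated, temperature-scaled distribution."""
    probs = truncated_temperature_probs(logits, temperature, top_k, top_p)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]
```

In a full pipeline, a sampler like this would generate whole solutions that are then used, unfiltered, as fine-tuning data. The fine-tuning step itself is omitted here because it depends on the model and training stack.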

Why does this work when the training data includes so many incorrect solutions?

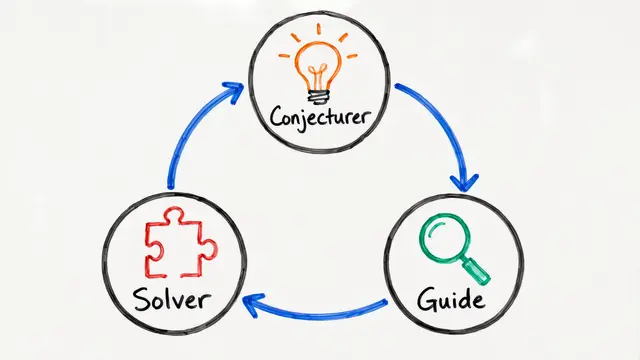

Code generation faces a fundamental tension. At locks, where syntax demands precision, you want sharp probability spikes. At forks, where multiple algorithms work, you need broad coverage. A single global temperature cannot satisfy both requirements, and this is where fixed decoding strategies fail.
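A toy calculation can illustrate why one global temperature struggles here. The logits below are invented for illustration and do not come from the paper: a "lock" position has one correct token and twenty distractors, and a "fork" position has three valid tokens and the same distractor tail.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax with a max shift for numerical stability."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical toy logits, chosen only to illustrate the tension.
N_DISTRACTORS = 20
LOCK_LOGITS = [4.0] + [0.0] * N_DISTRACTORS            # one correct token
FORK_LOGITS = [3.0, 2.5, 2.0] + [0.0] * N_DISTRACTORS  # three valid tokens
N_VALID_FORK = 3


def lock_precision(temperature):
    """Probability assigned to the single correct token at the lock."""
    return softmax(LOCK_LOGITS, temperature)[0]


def fork_summary(temperature):
    """Return (mass on valid tokens, share of that mass held by the top one)."""
    probs = softmax(FORK_LOGITS, temperature)
    valid = probs[:N_VALID_FORK]
    valid_mass = sum(valid)
    return valid_mass, max(valid) / valid_mass


for t in (0.3, 1.0, 1.5):
    valid_mass, top_share = fork_summary(t)
    print(
        f"T={t}: lock correct={lock_precision(t):.3f}, "
        f"fork valid mass={valid_mass:.3f}, fork top share={top_share:.3f}"
    )
```

With these toy numbers, a low temperature keeps the lock sharp but lets one fork option dominate the others, while a high temperature evens out the fork options but leaks probability to distractors at both positions.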

Here is the mechanism. After SSD, fork positions transform into plateaus where several valid continuations remain evenly weighted. Lock positions become sharp spikes where the correct token dominates and distractors vanish. This isn't achievable by tuning temperature alone because SSD reshapes distributions differently depending on context, compressing distractor tails everywhere while preserving diversity only where it matters.

The results are striking. On LiveCodeBench, pass at 1 improves by 30% relative. The gains intensify on harder problems, and, perhaps most surprisingly, SSD still works when trained on data sampled at temperature 2.0 without truncation, where most outputs are syntactically invalid. This proves the effect comes from distributional reshaping, not copying correct examples.

Simple Self-Distillation reveals that the path to better code generation isn't always through more supervision or complex reward engineering. Sometimes the model already knows more than it can consistently express, and the right training signal unlocks that latent capability. Visit EmergentMind.com to explore this paper further and create your own research videos.