Search-o1: Agentic Search-Enhanced Large Reasoning Models

This presentation explores Search-o1, a groundbreaking framework that addresses knowledge gaps in large reasoning models through agentic retrieval-augmented generation. The talk examines how Search-o1 enables models to autonomously retrieve external knowledge during complex reasoning tasks, refine retrieved documents through a Reason-in-Documents module, and achieve superior performance across challenging reasoning benchmarks and open-domain question answering tasks, even surpassing human expert performance on specialized datasets.

Script

What if your reasoning model could pause mid-thought, realize it needs more information, and autonomously search for exactly what it needs? Search-o1 makes this vision a reality by giving large reasoning models the ability to seek knowledge on demand.

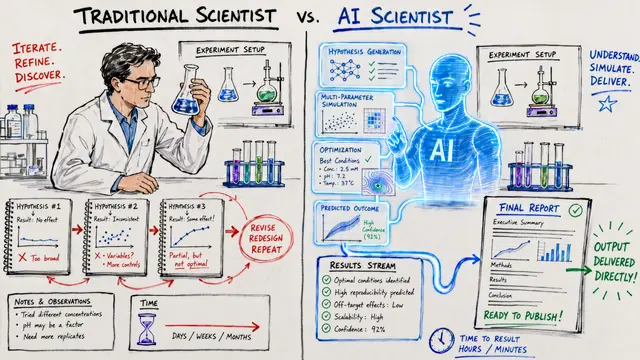

Building on that idea, the authors identified a critical problem: even the most advanced reasoning models hit knowledge walls during complex multi-step reasoning. When models encounter unfamiliar territory, those gaps cascade into errors that derail the entire reasoning chain.

The researchers developed Search-o1 to solve this challenge through intelligent, autonomous knowledge retrieval.

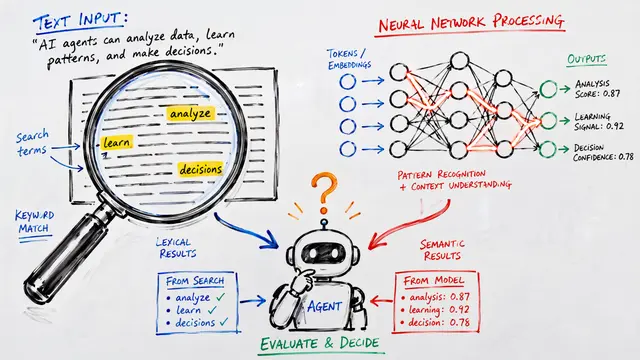

At the heart of Search-o1 lies an agentic retrieval-augmented generation mechanism. The model generates special search queries mid-reasoning, retrieves relevant documents, and then uses the Reason-in-Documents module to extract only the most pertinent information before continuing its reasoning chain.
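The loop just described can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the marker strings, the function name `agentic_reasoning`, and the three callables (`generate`, `search`, `refine`) are hypothetical stand-ins for the reasoning model, the retriever, and the Reason-in-Documents module.

```python
import re
from typing import Callable, List

# Hypothetical marker strings; the exact special tokens are an assumption.
BEGIN_QUERY = "<|begin_search_query|>"
END_QUERY = "<|end_search_query|>"
BEGIN_RESULT = "<|begin_search_result|>"
END_RESULT = "<|end_search_result|>"

QUERY_PATTERN = re.compile(
    re.escape(BEGIN_QUERY) + r"(.*?)" + re.escape(END_QUERY), re.DOTALL
)


def agentic_reasoning(
    question: str,
    generate: Callable[[str], str],                # model: trace so far -> next chunk
    search: Callable[[str], List[str]],            # retriever: query -> documents
    refine: Callable[[str, List[str], str], str],  # stand-in for Reason-in-Documents
    max_searches: int = 3,
) -> str:
    """Alternate between reasoning and retrieval until no query is emitted."""
    trace = question
    searches = 0
    while True:
        step = generate(trace)
        trace += step
        match = QUERY_PATTERN.search(step)
        # Stop when the model asks for nothing, or the search budget is spent.
        if match is None or searches >= max_searches:
            return trace
        query = match.group(1).strip()
        documents = search(query)
        # Condense raw documents to the part relevant to the current reasoning.
        knowledge = refine(query, documents, trace)
        trace += BEGIN_RESULT + knowledge + END_RESULT
        searches += 1


# Toy stand-ins so the sketch runs without a model or a search engine.
def fake_generate(trace: str) -> str:
    if BEGIN_RESULT not in trace:
        return " I need a fact. " + BEGIN_QUERY + "boiling point of water" + END_QUERY
    return " So the answer is 100 C."


def fake_search(query: str) -> List[str]:
    return ["Water boils at 100 C at sea level.", "Unrelated page about tea."]


def fake_refine(query: str, documents: List[str], trace: str) -> str:
    return documents[0]  # a real module would reason over all documents


result = agentic_reasoning(
    "Q: At what temperature does water boil?",
    fake_generate, fake_search, fake_refine,
)
```

In this toy run the model searches once, receives one condensed sentence instead of both raw documents, and then finishes its answer. The design point is that only the refined text is written back into the reasoning trace.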

By contrast, standard RAG methods retrieve everything upfront, flooding the reasoning process with potentially irrelevant information. Search-o1 flips this paradigm by letting the model itself determine exactly when it needs external knowledge and what to search for.

This scaling analysis reveals something remarkable: Search-o1 effectively leverages increasing numbers of retrieved documents across diverse reasoning tasks. Notice how performance consistently improves with more documents on GPQA and multi-hop question answering, while mathematical reasoning tasks show more modest gains, suggesting different knowledge requirements across domains.

The results are striking: Search-o1 doesn't just improve over baseline models, it actually exceeds human expert performance on specialized scientific benchmarks. The framework shows particularly strong gains on multi-hop reasoning tasks where synthesizing information from multiple sources is essential.

Technically, the authors implemented Search-o1 using QwQ-32B-Preview as the foundation, connecting it to live web search for retrieving external documents. The system employs batch processing to handle multiple questions efficiently, making it practical for real-world deployment.
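One plausible way to batch such a workload is to advance all unfinished questions together in rounds, and to drop a question from the batch once it stops asking for searches. The sketch below is an assumption about how batching could work, not a description of the authors' code; `batched_rounds` and its callables are hypothetical names, and the bracketed `[search: ...]` and `[result: ...]` strings are toy markers.

```python
from typing import Callable, List, Optional


def batched_rounds(
    questions: List[str],
    generate_batch: Callable[[List[str]], List[str]],  # one model call per round
    extract_query: Callable[[str], Optional[str]],     # None if no search requested
    search: Callable[[str], str],                      # query -> condensed result
    max_rounds: int = 5,
) -> List[str]:
    """Advance every unfinished question by one generation step per round."""
    traces = list(questions)
    active = list(range(len(traces)))
    for _ in range(max_rounds):
        if not active:
            break
        steps = generate_batch([traces[i] for i in active])
        still_active = []
        for i, step in zip(active, steps):
            traces[i] += step
            query = extract_query(step)
            if query is not None:
                # This question paused to search, so it needs another round.
                traces[i] += " [result: " + search(query) + "]"
                still_active.append(i)
        active = still_active
    return traces


# Toy stand-ins that record how large each batch was.
batch_sizes: List[int] = []


def toy_generate_batch(batch: List[str]) -> List[str]:
    batch_sizes.append(len(batch))
    return [" done" if "[result:" in t else " [search: x]" for t in batch]


def toy_extract_query(step: str) -> Optional[str]:
    return "x" if "[search:" in step else None


def toy_search(query: str) -> str:
    return "found " + query


final_traces = batched_rounds(
    ["A", "B [result: known]"], toy_generate_batch, toy_extract_query, toy_search
)
```

Here the first round processes both questions in one call, and the second round contains only the question that paused to search, so the batch shrinks as questions finish.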

The authors acknowledge important limitations: the agentic search process adds computational overhead, and performance remains tied to the quality of available external documents. Optimizing for faster inference while maintaining reasoning quality remains an open challenge.

Search-o1 represents a fundamental shift toward reasoning models that know when they don't know and can actively seek the knowledge they need. Visit EmergentMind.com to explore the full technical details and dive deeper into this research.