Search-o1: Agentic Search-Enhanced Large Reasoning Models

This presentation explores Search-o1, a framework that addresses knowledge gaps in large reasoning models by integrating an agentic retrieval-augmented generation mechanism. The talk examines how Search-o1 enables models to autonomously retrieve external knowledge during complex reasoning, introduces the Reason-in-Documents module for refining retrieved information, and demonstrates performance improvements across challenging reasoning tasks and open-domain question answering benchmarks, including comparisons with human expert performance.

Script

What happens when a reasoning model encounters a knowledge gap mid-thought? Search-o1 tackles this challenge by giving large reasoning models the ability to search for information exactly when they need it.

The authors identify a fundamental limitation: even powerful reasoning models hit a wall when their internal knowledge runs out. A single missing fact introduces uncertainty that cascades through every later step and compromises the entire reasoning chain.

Search-o1 offers an elegant solution by making retrieval an active part of the reasoning process itself.

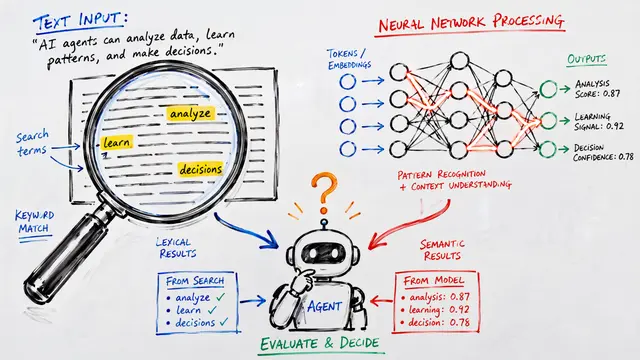

The framework introduces an agentic retrieval mechanism where the model itself determines when it needs more information. Rather than retrieving everything upfront, it strategically searches at moments of uncertainty.
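To make that control flow concrete, here is a minimal Python sketch of a reason-then-search loop. It assumes the model signals a knowledge gap by emitting a query between delimiter tokens; the `<search>`/`<result>` markers, the `agentic_reason` and `scripted_model` names, and the `max_searches` budget are hypothetical choices for illustration. A scripted stand-in model and a dictionary lookup replace a real reasoning model and search engine so the example runs on its own.

```python
import re
from typing import Callable

# Hypothetical delimiter tokens for illustration; the framework's real special tokens may differ.
BEGIN_QUERY, END_QUERY = "<search>", "</search>"
BEGIN_RESULT, END_RESULT = "<result>", "</result>"
QUERY_PATTERN = re.compile(re.escape(BEGIN_QUERY) + r"(.*?)" + re.escape(END_QUERY), re.DOTALL)


def agentic_reason(
    question: str,
    generate: Callable[[str], str],
    search: Callable[[str], str],
    max_searches: int = 5,
) -> str:
    """Interleave reasoning with on-demand retrieval.

    `generate` continues the trace from `context`; it is assumed to stop right
    after emitting END_QUERY when it wants to search, and to return text with
    no query once it has finished reasoning.
    """
    context = question
    searches = 0
    while True:
        chunk = generate(context)
        context += chunk
        queries = QUERY_PATTERN.findall(chunk)
        # No query means no knowledge gap was signalled; the search budget also caps the loop.
        if not queries or searches >= max_searches:
            return context
        # Retrieve for the most recent query and splice the result into the trace.
        result = search(queries[-1].strip())
        context += BEGIN_RESULT + result + END_RESULT
        searches += 1


def scripted_model(steps):
    """Stand-in for a reasoning model: returns pre-written continuations in order."""
    remaining = iter(steps)
    return lambda context: next(remaining)


knowledge = {"boiling point of ethanol": "Ethanol boils at about 78 degrees C at 1 atm."}
trace = agentic_reason(
    "Q: At what temperature does ethanol boil?\n",
    scripted_model([
        "I am not sure of the exact value. <search>boiling point of ethanol</search>",
        " So the answer is about 78 degrees C.",
    ]),
    lambda query: knowledge.get(query, "no results"),
)
print(trace)
```

In a real deployment, `generate` would call the reasoning model with a stop sequence on the closing query marker, and `search` would call an actual search API. The search budget is one simple way to bound how many retrieval round-trips a single question can trigger.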

Retrieved documents are often lengthy and noisy, so the authors developed Reason-in-Documents. This module analyzes the retrieved documents through a two-stage process and produces refined knowledge that integrates seamlessly into the reasoning chain.
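The sketch below mimics only the shape of that refinement step, under heavy simplifying assumptions: stage one scores document sentences against the search query, and stage two keeps the highest-scoring sentences as a compact knowledge snippet. Rather than model-based reasoning over the documents, it uses a simple lexical-overlap heuristic; the `reason_in_documents` name, the stopword list, and the fallback message are hypothetical stand-ins chosen so the example runs without a model.

```python
import re
from typing import List, Set

# Tiny illustrative stopword list (an assumption, not part of Search-o1).
STOPWORDS = {"the", "a", "an", "of", "is", "at", "in", "on", "and", "to", "what", "does", "which"}


def _terms(text: str) -> Set[str]:
    """Lowercased alphanumeric words, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def reason_in_documents(query: str, documents: List[str], max_sentences: int = 2) -> str:
    """Heuristic stand-in: distill retrieved documents into a short, query-focused snippet."""
    query_terms = _terms(query)

    # Stage 1 (analysis): score every distinct sentence by word overlap with the query.
    scored = []
    seen = set()
    for doc in documents:
        for sentence in re.split(r"(?<=[.!?])\s+", doc.strip()):
            overlap = len(query_terms & _terms(sentence))
            if overlap > 0 and sentence not in seen:
                seen.add(sentence)
                scored.append((overlap, sentence))

    # Signal explicitly when nothing relevant was retrieved, instead of injecting noise.
    if not scored:
        return "No helpful information found."

    # Stage 2 (refinement): keep the top-scoring sentences; the stable sort keeps document order on ties.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return " ".join(sentence for _, sentence in scored[:max_sentences])


docs = [
    "Ethanol boils at about 78 degrees C at sea level. It is widely used as a solvent.",
    "Water boils at 100 degrees C. Ethanol is also found in beverages.",
]
print(reason_in_documents("temperature at which ethanol boils", docs, max_sentences=1))
# prints: Ethanol boils at about 78 degrees C at sea level.
```

Word overlap misses paraphrases (a query for "boiling point" would not match "boils"), which is one reason a reasoning-based refinement step is preferable to keyword filtering in practice.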

This contrasts sharply with traditional approaches. While standard retrieval methods front-load everything and hope for the best, Search-o1 retrieves precisely what it needs, exactly when it needs it.

The scaling analysis reveals something notable about how Search-o1 uses retrieved knowledge. As the number of retrieved documents increases, performance improves consistently on challenging tasks like GPQA and MATH500, suggesting that the framework can exploit additional knowledge without being overwhelmed by noise.

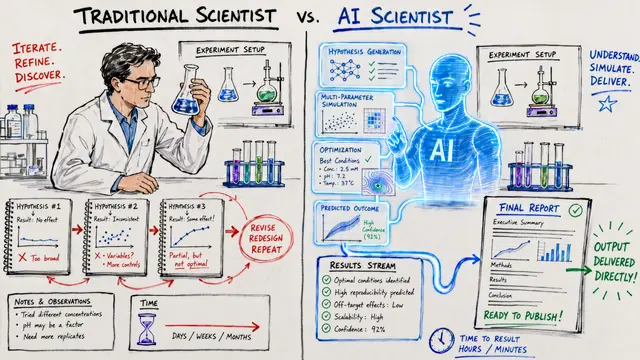

The empirical results are striking. Search-o1 not only outperforms the competing approaches but also exceeds human expert performance on specialized scientific questions. The improvements are particularly pronounced on multi-hop question answering, where reaching an answer requires chaining several inference steps.

The approach does have trade-offs worth noting. Each retrieval operation adds latency, and performance ultimately depends on the underlying search infrastructure. The experiments focused on a specific backbone model, so generalization to other architectures remains an open question.

Search-o1 demonstrates that teaching models when to search is as important as teaching them how to reason. Visit EmergentMind.com to explore this research further and discover more breakthroughs in agentic AI systems.