SANA-WM: Minute-Scale World Modeling on a Single GPU

This lightning talk explores SANA-WM, a breakthrough 2.6B-parameter world model that generates full minute-long 720p videos with precise camera control on a single GPU. We'll examine the hybrid linear attention architecture that makes this efficiency possible, the dual-branch camera conditioning system that achieves metric-scale trajectory accuracy, and the rigorous benchmarking results showing 36x throughput improvements over baselines while maintaining state-of-the-art visual quality and action-following fidelity.

Script

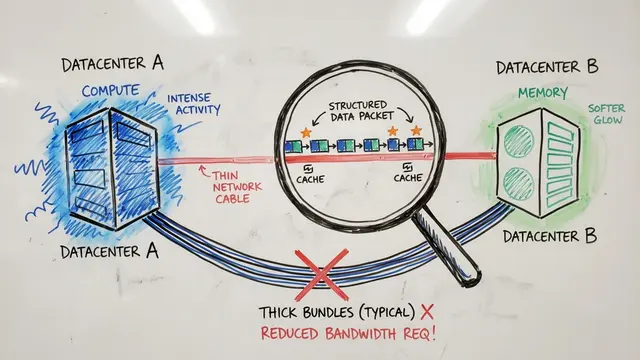

Generating a full minute of high-resolution video with precise camera control used to require massive GPU clusters and days of compute. The researchers behind SANA-WM just compressed that entire workflow onto a single graphics card, producing a 60-second 720p video in 34 seconds of wall-clock time.

The architecture achieves this through hybrid linear attention, interleaving efficient Gated DeltaNet blocks that maintain context in constant memory with periodic full softmax layers that recover exact long-range dependencies. This design scales to minute-long sequences without the memory explosion that breaks pure transformer architectures at 60 seconds.
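To make the constant-memory claim concrete, here is a minimal NumPy sketch of a gated delta rule recurrence, the update that Gated DeltaNet-style layers are built on. It is an illustration under stated assumptions, not SANA-WM's implementation: the function names, the scalar per-step gates `alpha` and `beta`, and the `layer_schedule` helper with its `softmax_every` ratio are all hypothetical, and real models use chunked parallel kernels rather than a Python loop.

```python
import numpy as np

def gated_delta_scan(q, k, v, alpha, beta):
    """Sequential gated delta rule with a fixed-size state (illustrative only).

    q, k: (T, d_k) queries and keys; v: (T, d_v) values;
    alpha, beta: (T,) decay and write-strength gates in [0, 1].
    """
    T, d_k = k.shape
    d_v = v.shape[1]
    S = np.zeros((d_v, d_k))   # memory state: its size does not depend on T
    out = np.empty((T, d_v))
    for t in range(T):
        k_t = k[t] / (np.linalg.norm(k[t]) + 1e-8)   # unit-norm key
        # Decay the old memory and erase what was stored under k_t.
        S = alpha[t] * (S - beta[t] * np.outer(S @ k_t, k_t))
        # Write the new value under k_t.
        S = S + beta[t] * np.outer(v[t], k_t)
        # Read out with the query.
        out[t] = S @ q[t]
    return out, S

def layer_schedule(n_layers, softmax_every):
    """Hypothetical interleaving: every softmax_every-th layer is full attention."""
    return ["softmax" if (i + 1) % softmax_every == 0 else "gdn"
            for i in range(n_layers)]

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    T, d_k, d_v = 1000, 8, 8
    out, S = gated_delta_scan(rng.normal(size=(T, d_k)),
                              rng.normal(size=(T, d_k)),
                              rng.normal(size=(T, d_v)),
                              np.full(T, 0.95), np.full(T, 0.5))
    print(out.shape, S.shape)          # state stays (d_v, d_k) for any T
    print(layer_schedule(8, 4))
```

The state `S` is a single `d_v` by `d_k` matrix however long the sequence gets, which is why these blocks do not need a growing key-value cache. The periodic softmax layers in the schedule are the ones that still attend over the full history.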

Precise camera control comes from a dual-branch conditioning system. A coarse branch encodes the global 6-degree-of-freedom trajectory to anchor consistency across the full minute, while a fine-grained branch uses Plücker ray mixing to restore per-frame motion details within the compressed latent space. The result is rotation error under 5 degrees and metric-scale trajectory adherence even on difficult camera paths.
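For readers unfamiliar with Plücker rays, the sketch below shows one common way to turn a camera pose into a per-pixel 6-channel ray map: a unit direction plus the moment of the ray about the world origin. It assumes a pinhole camera and a camera-to-world pose; the function name `plucker_rays`, the (direction, moment) channel order, and the half-pixel offset are illustrative choices, and how SANA-WM mixes these rays into its latent space is not shown here.

```python
import numpy as np

def plucker_rays(K, R_c2w, cam_center, height, width):
    """Per-pixel Plücker coordinates (d, o x d) for a pinhole camera.

    K: (3, 3) intrinsics; R_c2w: (3, 3) camera-to-world rotation;
    cam_center: (3,) camera position in world coordinates.
    Returns an array of shape (height, width, 6).
    """
    # Pixel centers in homogeneous image coordinates.
    u, v = np.meshgrid(np.arange(width) + 0.5, np.arange(height) + 0.5)
    pix = np.stack([u, v, np.ones_like(u)], axis=-1)        # (H, W, 3)
    # Back-project to camera-frame directions, then rotate into the world.
    dirs_cam = pix @ np.linalg.inv(K).T
    dirs = dirs_cam @ R_c2w.T
    dirs = dirs / np.linalg.norm(dirs, axis=-1, keepdims=True)
    # Moment of each ray about the world origin.
    moment = np.cross(np.broadcast_to(cam_center, dirs.shape), dirs)
    return np.concatenate([dirs, moment], axis=-1)

if __name__ == "__main__":
    K = np.array([[100.0, 0.0, 2.5],
                  [0.0, 100.0, 1.5],
                  [0.0, 0.0, 1.0]])
    rays = plucker_rays(K, np.eye(3), np.array([0.5, 0.0, 0.0]), height=3, width=5)
    print(rays.shape)
```

Because the moment changes when the camera translates and the direction changes when it rotates, a map like this encodes both parts of the pose at every pixel, which is what makes it a natural carrier for fine per-frame motion.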

Benchmarking against recent baselines reveals substantial gains. SANA-WM cuts rotation error to 4.5 degrees and camera motion error to 1.41, outperforming every competing method while delivering 36 times higher throughput and fitting entirely in a single H100's memory where all-softmax architectures fail.
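The talk quotes rotation error in degrees without spelling out the formula. A common convention, assumed here rather than confirmed for this benchmark, is the geodesic angle between the predicted and ground-truth rotation matrices. The helper names `rotation_error_deg` and `rot_z` are hypothetical.

```python
import numpy as np

def rotation_error_deg(R_pred, R_gt):
    """Geodesic angle in degrees between two 3x3 rotation matrices."""
    R_rel = R_pred @ R_gt.T
    cos_theta = (np.trace(R_rel) - 1.0) / 2.0
    # Clip to guard against round-off pushing the cosine outside [-1, 1].
    return float(np.degrees(np.arccos(np.clip(cos_theta, -1.0, 1.0))))

def rot_z(deg):
    """Rotation about the z-axis by the given angle in degrees."""
    a = np.radians(deg)
    return np.array([[np.cos(a), -np.sin(a), 0.0],
                     [np.sin(a),  np.cos(a), 0.0],
                     [0.0,        0.0,       1.0]])

if __name__ == "__main__":
    # A predicted pose that is off by a small yaw relative to ground truth.
    print(rotation_error_deg(rot_z(34.5), rot_z(30.0)))
```

Under this convention, a per-video score would typically average the angle over frames, though the exact aggregation used in the reported numbers is not stated in the talk.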

The system does exhibit drift in rare or highly dynamic settings, and lacks explicit persistent 3D memory for true loop closure. Temporal degradation metrics drop substantially after refinement, but the model still depends on high-quality pose annotation and struggles with uncommon environments outside its training distribution.

SANA-WM fundamentally shifts minute-scale world modeling from an infrastructure problem to an accessible research tool, enabling academic labs and small teams to simulate, test, and iterate on long-horizon embodied AI without cluster-scale compute. Explore the full technical details and create your own video summaries at EmergentMind.com.