Retrieval-Aware Distillation for Transformer-SSM Hybrids

This presentation explores a groundbreaking architectural distillation framework that compresses pretrained Transformers into memory-efficient SSM hybrids by strategically preserving only retrieval-critical attention heads. The research demonstrates that with just 2% of attention heads retained, hybrid models can recover over 95% of teacher performance on retrieval-intensive tasks while achieving 5-6x memory reduction, fundamentally challenging assumptions about uniform attention resource allocation in large language models.Script

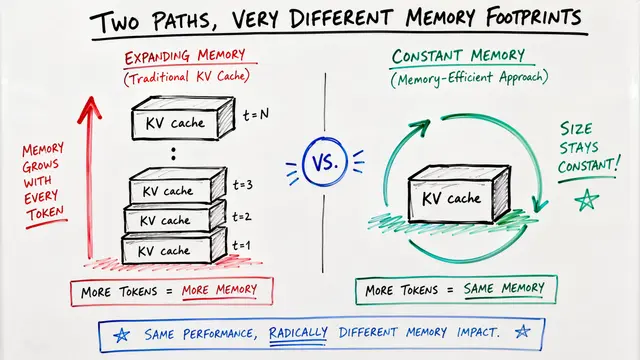

Transformers power today's most capable language models, but their quadratic memory costs create a fundamental barrier. What if we could identify exactly which attention heads matter for retrieval and compress everything else?

Building on this challenge, researchers discovered that state-space models fail at retrieval not because all attention is necessary, but because specific specialized heads are missing. The question becomes: can we identify and preserve only those critical components?

The authors introduce a two-phase framework that systematically identifies and preserves retrieval capacity.

This process contrasts sharply with existing approaches. Instead of guessing where attention should go, the method empirically measures which heads perform retrieval, then surgically replaces only the non-critical components with efficient recurrent modules.

Moving to the empirical findings, the results are striking. Experiments on models distilled from Llama and Qwen demonstrate that extreme sparsity in attention placement actually works when guided by retrieval importance, achieving massive efficiency gains with minimal performance trade-offs.

The underlying mechanism reveals something fundamental about model architecture. The researchers validated that retrieval operations are structurally localized rather than distributed, meaning hybrid models were previously wasting capacity trying to recover retrieval through brute-force SSM parameterization.

These architectural changes unlock practical deployment advantages. By offloading retrieval to preserved attention heads, the framework enables aggressive compression of SSM components while maintaining the capability profile needed for real-world language tasks.

Despite these advances, important questions remain. The concentration finding needs validation at much larger scales, and it is unclear whether heads identified by synthetic probes fully capture the retrieval demands of multi-step reasoning in production scenarios.

The implications extend beyond efficiency gains. This work fundamentally reframes how we should think about hybrid architecture design, suggesting that future models can embrace specialized, modular components rather than uniform resource distribution across layers and heads.

Retrieval-aware distillation demonstrates that strategic architectural sparsity, guided by empirical measurement rather than heuristics, unlocks the efficiency of SSMs while preserving the retrieval strengths of Transformers. Visit EmergentMind.com to explore the full technical details and experimental results.