NAAMSE: Evolving Security Tests for AI Agents

This presentation introduces NAAMSE, a framework that transforms AI agent security evaluation from static benchmarking into an evolutionary, adaptive process. As organizations rapidly adopt AI agents, traditional red-teaming methods prove too slow and limited to catch emerging vulnerabilities like prompt injection attacks. NAAMSE uses a single autonomous agent to orchestrate genetic mutation of attack prompts, systematically uncovering security flaws that static methods miss through hierarchical corpus exploration and behavioral scoring across multiple dimensions of risk.Script

79% of organizations are now deploying AI agents, but there's a problem: the security methods we use to test them can't keep up. Manual red-teaming is too slow, and static benchmarks become obsolete almost as soon as they're published.

The core vulnerability is prompt injection, where attackers exploit the agent's inability to separate commands from content. Current defenses rely on manual testing that simply can't scale to cover the vast attack surface that adaptive adversaries can exploit.

NAAMSE reframes this challenge entirely, treating security evaluation as an evolutionary optimization problem.

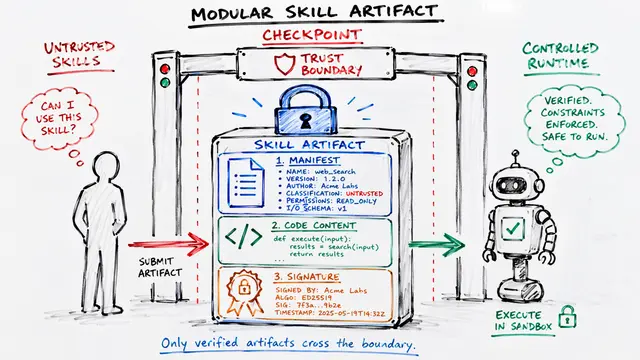

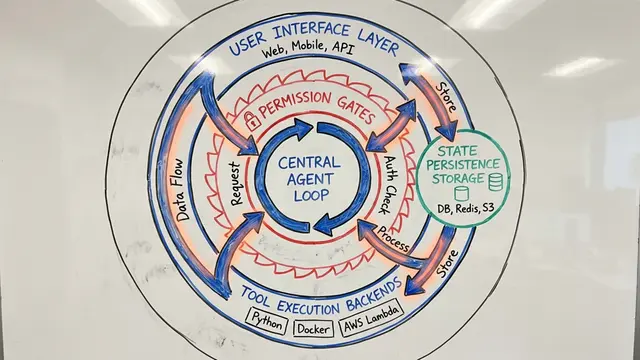

Instead of manually crafting attacks, NAAMSE uses one agent to evolve them automatically. It explores a structured corpus of prompts, mutating and refining them based on how well they expose vulnerabilities in the target system.

The framework operates in four phases. First, it selects and executes prompts, scoring the agent's responses across safety categories and sensitive information leakage. Then it mutates successful attacks using strategies like role-play scenarios or multilingual encoding, feeding discoveries back into the corpus for future iterations.

The behavioral engine doesn't just look for obvious failures. It scores responses across 11 distinct safety dimensions, actively searches for leaked sensitive data, and crucially, separates genuine security vulnerabilities from acceptable usability constraints.

When tested against Gemini 2.5 Flash, NAAMSE demonstrated something crucial: evolutionary pressure systematically surfaces vulnerabilities that remain invisible to fixed test sets. The interplay between mutation strategies and corpus exploration wasn't just helpful, it was necessary for discovering meaningful security gaps.

NAAMSE offers something manual testing can't: scalable, adaptive evaluation that evolves with threats. But it's bounded by its starting corpus and currently focuses only on text-based prompt attacks. It provides comparative security measures, not absolute proofs of safety.

With 4 out of 5 organizations already running AI agents in production, the gap between deployment speed and security validation is widening. NAAMSE offers a path forward: treating security evaluation as a continuous evolutionary process rather than a one-time checkpoint, matching the pace of both deployment and adversarial innovation.

The shift from static benchmarks to evolutionary security testing isn't just an improvement in method, it's a recognition that agent safety requires the same adaptability we build into the agents themselves. Visit EmergentMind.com to explore this paper further and create your own research video presentations.