LLM Routing with Annotation-Free Data

Script

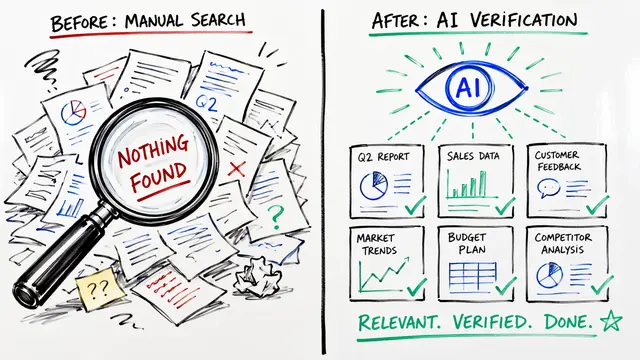

How do you direct traffic to the smartest expert when you do not actually know the correct answers yourself? This paradox defines the challenge of routing prompts without labeled data. The paper 'Routing with Generated Data' introduces a method that keeps the useful generated queries while setting aside their unreliable generated labels.

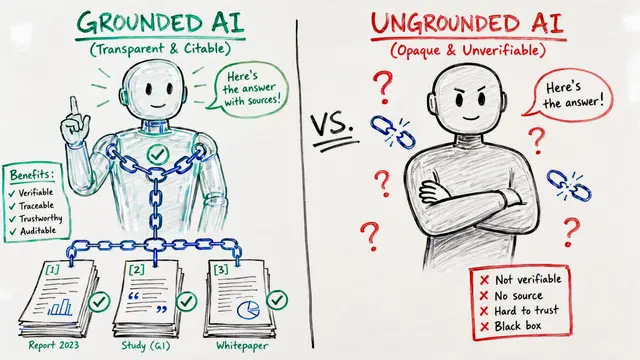

Existing routing systems typically rely on ground-truth data, functioning like a classifier trained on perfect textbooks. However, in the real world, labeled data is scarce, and synthetic data created by weaker models often contains incorrect answers that confuse standard routing algorithms.

To solve this, the researchers uncovered a crucial asymmetry: weak models are surprisingly good at asking relevant questions even when they fail to answer them correctly. Instead of trusting these noisy generated labels, the proposed approach ignores them entirely, relying instead on the confidence-weighted consensus of the model pool to estimate truth.
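To make the consensus idea concrete, here is a minimal sketch of a confidence-weighted vote over one query. It is an illustration under stated assumptions, not the paper's implementation: the function names, the `(model, answer, confidence)` tuple layout, and the toy model names are all hypothetical.

```python
from collections import defaultdict

def weighted_consensus(answers):
    """Pick the answer with the largest summed confidence.

    answers: list of (model_name, answer, confidence) tuples for ONE query.
    Returns (consensus_answer, share_of_total_confidence).
    Hypothetical helper for illustration only.
    """
    totals = defaultdict(float)
    for _model, answer, confidence in answers:
        totals[answer] += confidence
    best = max(totals, key=totals.get)  # ties go to the first answer seen
    total = sum(totals.values())
    share = totals[best] / total if total > 0 else 0.0
    return best, share

def pseudo_correctness(answers):
    """Mark each model as 'correct' if it matches the consensus answer."""
    consensus, _ = weighted_consensus(answers)
    return {model: answer == consensus for model, answer, _ in answers}

# Toy pool of three hypothetical models answering a multiple-choice query.
responses = [
    ("model_a", "B", 0.9),
    ("model_b", "B", 0.7),
    ("model_c", "C", 0.6),
]
print(weighted_consensus(responses))
print(pseudo_correctness(responses))
```

Note that no generated label appears anywhere in this sketch: the only inputs are the pool's own answers and confidences, which is the point of the query-only setup.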

The method, called CASCAL, treats majority agreement as a proxy for correctness. It clusters queries into specific 'skill niches', distinct areas like medical triage or code debugging, and then ranks the expert models by how well they perform near each cluster center.
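The cluster-then-rank step can be sketched roughly as follows. This is a simplified illustration, not CASCAL itself: it assumes the cluster centers have already been computed (for example by k-means over query embeddings), it scores each model by its plain mean consensus-agreement within a cluster, and every name and toy value here is hypothetical.

```python
import math
from collections import defaultdict

def nearest(centroids, vec):
    """Index of the centroid closest to vec (Euclidean distance)."""
    return min(range(len(centroids)), key=lambda i: math.dist(centroids[i], vec))

def rank_models(centroids, embeddings, pseudo_correct):
    """Rank models per cluster by mean agreement with the consensus.

    embeddings[i] is the vector for query i; pseudo_correct[i] maps
    model name -> bool (did the model match the consensus on query i).
    """
    hits = defaultdict(lambda: defaultdict(list))
    for vec, flags in zip(embeddings, pseudo_correct):
        cluster = nearest(centroids, vec)
        for model, ok in flags.items():
            hits[cluster][model].append(1.0 if ok else 0.0)
    rankings = {}
    for cluster, per_model in hits.items():
        scores = {m: sum(v) / len(v) for m, v in per_model.items()}
        rankings[cluster] = sorted(scores, key=scores.get, reverse=True)
    return rankings

def route(centroids, rankings, vec):
    """Send a new query to the top-ranked model of its nearest cluster."""
    return rankings[nearest(centroids, vec)][0]

# Two toy 'skill niches' in a 2-D embedding space.
centroids = [(0.0, 0.0), (10.0, 10.0)]
embeddings = [(0.0, 1.0), (1.0, 0.0), (9.0, 10.0), (10.0, 9.0)]
pseudo_correct = [
    {"med_model": True, "code_model": False},
    {"med_model": True, "code_model": False},
    {"med_model": False, "code_model": True},
    {"med_model": False, "code_model": True},
]
rankings = rank_models(centroids, embeddings, pseudo_correct)
print(route(centroids, rankings, (0.5, 0.5)))
print(route(centroids, rankings, (9.5, 9.5)))
```

In this sketch, `route` raises a `KeyError` if a new query lands in a cluster that had no training queries; a real router would need a fallback for that case.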

This query-only approach proves remarkably robust, maintaining high accuracy even when trained on data from weaker models where traditional routers fail. By focusing on 'variance-inducing' questions where strong models agree but weak ones struggle, the system outperforms baseline methods by over 4 percentage points.

This work demonstrates that we can effectively estimate model expertise without ever seeing a ground-truth label, unlocking efficient deployment in new domains. For more insights on annotation-free machine learning, visit EmergentMind.com.