Group-Evolving Agents: Open-Ended Self-Improvement via Experience Sharing

This lightning talk explores a paradigm-shifting approach to artificial general intelligence where collectives of agents achieve continual self-improvement through experience sharing rather than fixed objectives. The presentation examines how decentralized knowledge exchange drives open-ended behavioral evolution, demonstrates empirical evidence of sustained diversity and adaptation, and discusses the implications for scalable autonomous AI systems that improve without external reward signals.

Script

What if artificial intelligence could improve itself not through rewards or objectives, but simply by agents sharing what they've learned with each other? This paper introduces a radical departure from traditional self-improvement, where collectives evolve through decentralized experience exchange.

Building on that question, let's examine why current approaches fall short.

Traditional reinforcement learning and evolutionary methods hit a wall because they depend on explicit objectives that constrain what agents can discover. The authors argue this fundamentally limits the path to artificial general intelligence.

So how do group-evolving agents overcome these limitations?

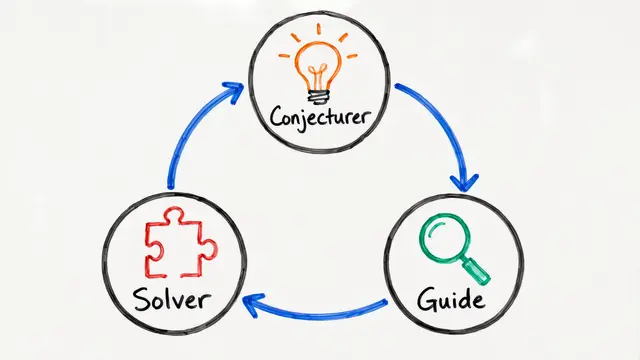

The researchers propose that agents maintain shared experience buffers and exchange knowledge based on emergent criteria rather than fixed objectives. The sharing protocols themselves evolve, creating self-organizing networks of knowledge transfer.

The mechanism works through two complementary processes. Agents intelligently sample experiences from peers based on novelty rather than randomly, then distill that knowledge into transferable skill representations that others can adapt and build upon.
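To make the first of these processes concrete, here is a minimal sketch of novelty-weighted sampling from a peer's experience buffer. It is an illustration under stated assumptions, not the authors' implementation: the talk does not say how novelty is measured, so this sketch assumes experiences are numeric feature vectors and scores novelty as the mean distance to the k nearest experiences the agent already holds. The names `novelty` and `sample_from_peers` are hypothetical, and the distillation step is not covered.

```python
import math
import random


def novelty(candidate, own_experiences, k=3):
    """Mean Euclidean distance from `candidate` to its k nearest own experiences.

    Assumption: experiences are equal-length numeric vectors. The talk does
    not specify a novelty measure; k-nearest-neighbor distance is a stand-in.
    """
    if not own_experiences:
        return 1.0  # with nothing to compare against, treat everything as novel
    dists = sorted(math.dist(candidate, e) for e in own_experiences)
    nearest = dists[:k]
    return sum(nearest) / len(nearest)


def sample_from_peers(own_experiences, peer_experiences, n, rng=None):
    """Draw n peer experiences (with replacement), weighted by novelty."""
    rng = rng or random.Random()
    scores = [novelty(c, own_experiences) for c in peer_experiences]
    if sum(scores) == 0:
        # Every candidate duplicates something already held: fall back to uniform.
        return rng.choices(peer_experiences, k=n)
    return rng.choices(peer_experiences, weights=scores, k=n)
```

With this weighting, a peer experience identical to one the agent already holds gets weight zero and is never drawn, while experiences far from the agent's own buffer are drawn most often.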

These ideas are compelling in theory, but what does the data show?

Tested across multi-agent coordination tasks and procedurally generated environments, group-evolving agents demonstrate sustained diversity without stagnation. Performance improvements scale with group size, and the system resists the degenerative drift that plagues evolutionary methods.

Perhaps most striking, latent representation analysis reveals that agents spontaneously develop specialized yet complementary skills without any explicit coordination mechanism. The collective sustains high information diversity, which the authors take as evidence that explicit reward signals aren't necessary for continual improvement.
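One simple way to put a number on "information diversity" is the Shannon entropy of how agents are distributed across skills. The sketch below is a hedged illustration only: the talk does not state which diversity measure the authors use, and both the function name `skill_entropy` and the idea of assigning each agent a single discrete skill label are assumptions made here.

```python
import math
from collections import Counter


def skill_entropy(skill_labels):
    """Shannon entropy (in bits) of the distribution of skill labels.

    Assumption: each agent is summarized by one discrete skill label,
    for example a cluster index from its latent representation.
    """
    counts = Counter(skill_labels)
    total = len(skill_labels)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())
```

Under this measure, a collective whose agents have all converged on the same skill scores 0 bits, and four agents with four distinct skills score 2 bits. A collective that avoids stagnation would keep this value from collapsing toward zero over time.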

The authors acknowledge several frontiers. As collectives grow, the communication costs of experience sharing need better management, and the system's behavior when genuine novelty is scarce remains an open question for future work.

This work fundamentally reframes self-improvement as an emergent property of collective knowledge dynamics rather than individual optimization against fixed goals. To dive deeper into how decentralized agent collectives might unlock open-ended intelligence, visit EmergentMind.com.