Governance-as-a-Service: Enforcing AI Compliance Without Retraining

This presentation introduces Governance-as-a-Service (GaaS), a modular runtime framework that enforces policy compliance in autonomous AI systems without requiring internal agent modifications. By operating as an interposition layer between agents and their environments, GaaS uses a dynamic Trust Factor mechanism to block, warn, or allow actions based on compliance history. Evaluated across essay writing and financial trading domains, GaaS demonstrates superior precision in preventing harmful behaviors while maintaining system functionality, offering a scalable, model-agnostic solution for AI governance in distributed, agentic ecosystems.

Script

What if you could stop unsafe AI behavior without ever touching the model itself? As autonomous agents proliferate across industries, governance can no longer rely on retraining or hoping agents play nice. This paper introduces a runtime enforcement layer that makes compliance a service, not an afterthought.

Let's first understand why traditional approaches fall short in distributed AI ecosystems.

Building on that challenge, the authors identify three critical gaps. Black-box models resist internal modification, cooperation fails when incentives diverge, and retraining for policy updates simply doesn't scale. What's needed is governance that operates at runtime, independent of agent architecture.

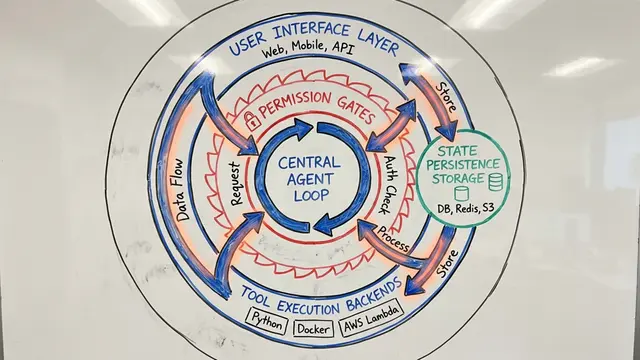

This brings us to how Governance-as-a-Service redesigns the enforcement model.

The architecture is elegantly simple. Agents propose actions based on their internal logic, but those actions never reach the environment directly. Instead, GaaS intercepts every proposal, evaluates it against human-authored policy rules using contextual pattern matching, and decides whether to allow, warn, or block. The Trust Factor, a dynamic score updated with each interaction, ensures enforcement severity adapts to compliance history. Everything is logged for auditability and regulatory reporting.
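To make the interception flow concrete, here is a minimal sketch of an interposition layer in Python. It is an illustration under stated assumptions, not the authors' implementation: the names `Rule`, `Gateway`, `evaluate`, and `submit` are hypothetical, regex matching stands in for the contextual pattern matching described above, and the Trust Factor is left out to keep the flow easy to follow.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Rule:
    """Hypothetical policy rule: a regex plus the enforcement it triggers."""
    name: str
    pattern: str  # regex matched against the proposed action text
    action: str   # "warn" or "block"


@dataclass
class Gateway:
    """Hypothetical interposition layer between agents and the environment."""
    rules: list
    audit_log: list = field(default_factory=list)

    def evaluate(self, agent_id: str, proposal: str) -> str:
        decision, matched = "allow", None
        for rule in self.rules:
            if re.search(rule.pattern, proposal, re.IGNORECASE):
                if rule.action == "block":
                    # A block outranks any earlier warning, so stop here.
                    decision, matched = "block", rule.name
                    break
                if decision == "allow":
                    decision, matched = "warn", rule.name
        # Every decision is recorded, including plain allows.
        self.audit_log.append(
            {"agent": agent_id, "proposal": proposal,
             "decision": decision, "rule": matched}
        )
        return decision

    def submit(self, agent_id: str, proposal: str, environment) -> str:
        """Forward the proposal to the environment unless it is blocked."""
        decision = self.evaluate(agent_id, proposal)
        if decision != "block":
            environment(proposal)
        return decision


# Usage: the "environment" here is just a list that collects executed actions.
executed = []
gateway = Gateway(rules=[
    Rule("no-insider", r"insider", "block"),
    Rule("large-order", r"\b\d{5,}\s+shares", "warn"),
])
gateway.submit("agent-1", "buy 10 shares of ACME", executed.append)
gateway.submit("agent-1", "trade on insider tip", executed.append)
```

The agent only ever calls `submit`, so its internals stay untouched; changing policy means changing the rule list, not the agent.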

So what makes GaaS work? The policy engine uses declarative JSON rules that humans can write and update independently. The Trust Factor mechanism tracks each agent's compliance over time, making governance responsive rather than rigid. The system supports coercive blocking for hard violations, normative warnings for softer issues, and adaptive escalation that intensifies with repeated offenses.
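A small sketch can show how declarative rules and a trust score might combine into adaptive escalation. Everything specific here is an assumption made for illustration: the JSON schema (`id`, `field`, `max`, `mode`), the `TrustTracker` class, the penalty and reward values, and the 0.5 escalation threshold are hypothetical and are not taken from the paper, which defines its own Trust Factor.

```python
import json

# Hypothetical rule schema: "coercive" rules always block, "normative" rules warn.
RULES_JSON = """
[
  {"id": "max-leverage", "field": "leverage",   "max": 5,    "mode": "coercive"},
  {"id": "large-order",  "field": "order_size", "max": 1000, "mode": "normative"}
]
"""
RULES = json.loads(RULES_JSON)


class TrustTracker:
    """Hypothetical per-agent trust score in [0, 1]; the update rule is assumed."""

    def __init__(self, penalty=0.2, reward=0.05, escalate_below=0.5):
        self.penalty = penalty
        self.reward = reward
        self.escalate_below = escalate_below
        self.scores = {}

    def score(self, agent_id):
        # Unknown agents start fully trusted (an assumption of this sketch).
        return self.scores.get(agent_id, 1.0)

    def update(self, agent_id, violated):
        delta = -self.penalty if violated else self.reward
        self.scores[agent_id] = min(1.0, max(0.0, self.score(agent_id) + delta))


def decide(action, agent_id, rules, trust):
    """Return "allow", "warn", or "block" for a proposed action (a dict)."""
    modes = [r["mode"] for r in rules if action.get(r["field"], 0) > r["max"]]
    if "coercive" in modes:
        decision = "block"  # hard violation: always blocked
    elif modes:
        # Soft violation: escalate to a block once trust has dropped too low.
        low_trust = trust.score(agent_id) < trust.escalate_below
        decision = "block" if low_trust else "warn"
    else:
        decision = "allow"
    trust.update(agent_id, violated=bool(modes))
    return decision


# Usage: repeated soft violations erode trust until warnings become blocks.
trust = TrustTracker()
oversized = {"order_size": 2000, "leverage": 1}
history = [decide(oversized, "agent-1", RULES, trust) for _ in range(4)]
```

With these assumed parameters the same oversized order draws a warning while the agent's trust is high and a block once its score has fallen below the threshold, which is the escalation behavior described above.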

The researchers validated this framework across two distinct domains.

They tested GaaS in essay generation and financial trading using open-source models like Llama-3 and Qwen-3. In essay writing, the system blocked unsafe content and maintained argument diversity that unguided agents lacked. In trading, GaaS intercepted risky transactions while preserving system throughput, evidence that governance doesn't have to throttle performance.

This heatmap reveals something fascinating about how different models violate rules. Darker cells show more frequent violations, and you can see distinct patterns emerge for each architecture. These aren't random failures; they reflect model-specific weaknesses that governance needs to account for. The authors used these insights for targeted red-teaming, showing how enforcement data feeds back into security hardening.

Compared to simple keyword filters and commercial moderation tools, GaaS achieved significantly better precision and recall. Adversarial attacks that initially succeeded were blocked after adaptive patches were applied. The Trust Factor proved especially valuable, escalating enforcement for repeat offenders while giving compliant agents more freedom. Crucially, all of this works without touching the agents themselves.

Governance-as-a-Service proves that compliance can be externalized, scalable, and adaptive without sacrificing agent autonomy. By decoupling enforcement from architecture, the authors offer a path toward trustworthy AI ecosystems that can evolve with policy, not against it. To explore the full framework and implementation details, visit EmergentMind.com.