Learning a Generative Meta-Model of LLM Activations

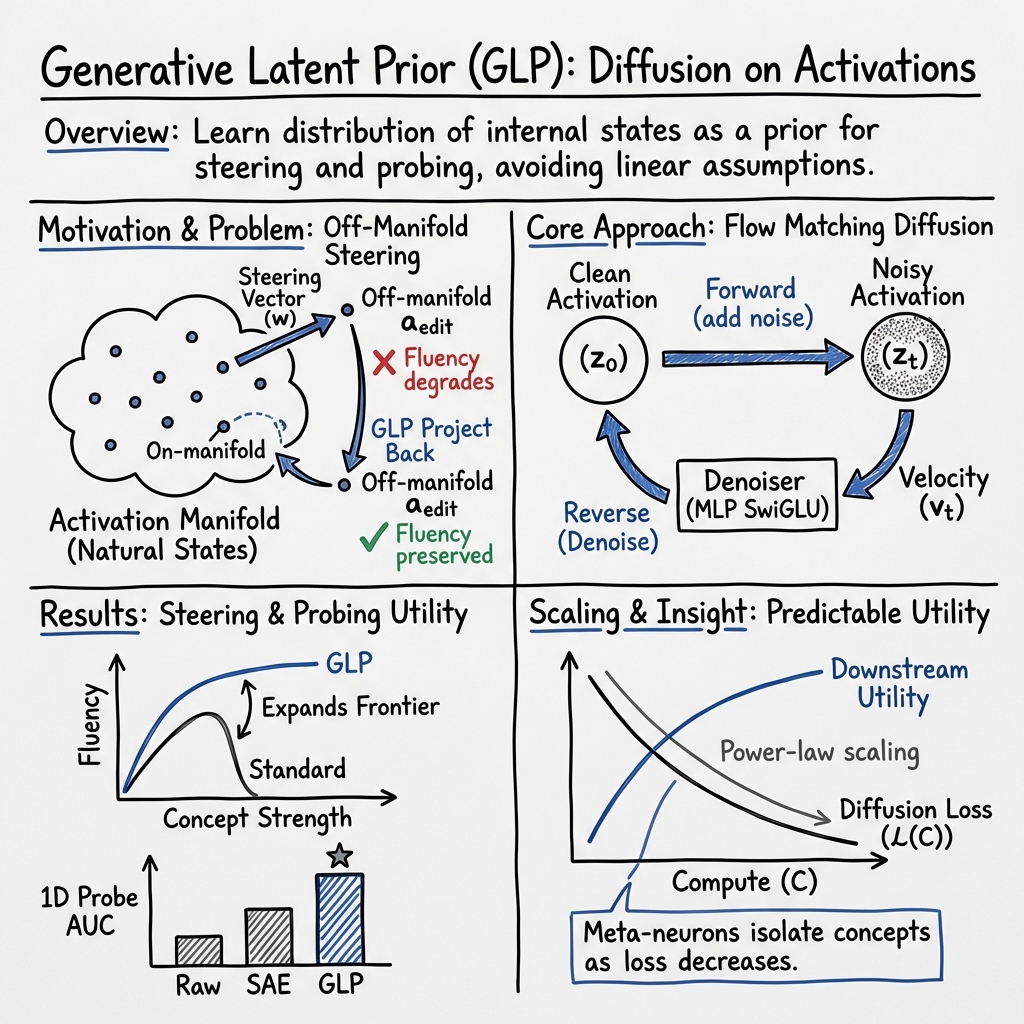

Abstract: Existing approaches for analyzing neural network activations, such as PCA and sparse autoencoders, rely on strong structural assumptions. Generative models offer an alternative: they can uncover structure without such assumptions and act as priors that improve intervention fidelity. We explore this direction by training diffusion models on one billion residual stream activations, creating "meta-models" that learn the distribution of a network's internal states. We find that diffusion loss decreases smoothly with compute and reliably predicts downstream utility. In particular, applying the meta-model's learned prior to steering interventions improves fluency, with larger gains as loss decreases. Moreover, the meta-model's neurons increasingly isolate concepts into individual units, with sparse probing scores that scale as loss decreases. These results suggest generative meta-models offer a scalable path toward interpretability without restrictive structural assumptions. Project page: https://generative-latent-prior.github.io.


Explain it Like I'm 14

Overview

This paper is about teaching a model to understand the “inner signals” of an LLM and use that understanding to make better, safer edits to the LLM’s behavior. Think of an LLM as a very complicated machine whose “brain activity” (called activations) changes as it reads and writes text. The authors train a new model, called a Generative Latent Prior (GLP), that learns what these activations usually look like. Once GLP knows the “natural shape” of those activations, it can help keep edits “on track,” so the LLM stays fluent and makes sense.

Objectives and Research Questions

The paper asks three main questions, in simple terms:

- Can a generative model learn the normal patterns of an LLM’s inner signals without making strong, hand-crafted assumptions?

- If we use this learned pattern as a guide (a “prior”), can we edit the LLM’s behavior (for example, make it more positive or adopt a certain persona) while keeping its writing smooth and natural?

- Do the internal parts (“meta-neurons”) of this new model capture clear, human-meaningful concepts (like “baseball” or “contradiction”) better than existing methods?

Methods and Approach

How they trained their model (GLP):

- What are activations? Imagine pausing the LLM mid-thought as it processes a word; the numbers flowing through its layers at that moment are its activations. The authors collect a huge dataset of these activations (about 1 billion examples) from a standard place inside the LLM (“residual stream” at a middle layer).

- What is a diffusion model? Picture taking a clear photo and slowly adding random noise until it becomes pure static. A diffusion model learns to run this process backward: starting from noisy inputs and gradually “cleaning” them to look like real data. Here, the “photos” are activation vectors (big lists of numbers), not images.

- Training with “noise and cleanup”: The team adds noise to real activations, then trains GLP to predict how to remove that noise so the result looks like a natural activation again. Over time, GLP learns the overall shape of “legal” (natural) activations—the activation manifold—which is like the “road” the LLM’s thoughts usually travel on.
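
The “noise and cleanup” training step can be sketched in a few lines. This is a minimal illustration, not the authors' code: it assumes the common linear-interpolation form of flow matching (noised input `x_t = (1 - t) * x0 + t * eps`, target velocity `eps - x0`), and the names `make_training_pair`, `flow_matching_loss`, and `zero_model` are hypothetical.

```python
import numpy as np

def make_training_pair(x0, rng):
    """Build one flow-matching training example from clean activations x0 (batch, dim)."""
    t = rng.uniform(0.0, 1.0, size=(x0.shape[0], 1))  # one noise level per row
    eps = rng.standard_normal(x0.shape)               # Gaussian noise
    x_t = (1.0 - t) * x0 + t * eps                    # noised activation (assumed linear path)
    target = eps - x0                                 # velocity pointing from data toward noise
    return x_t, t, target

def flow_matching_loss(predict_velocity, x0, rng):
    """Mean squared error between predicted and target velocities."""
    x_t, t, target = make_training_pair(x0, rng)
    pred = predict_velocity(x_t, t)
    return float(np.mean((pred - target) ** 2))

rng = np.random.default_rng(0)
x0 = rng.standard_normal((8, 16))                 # random stand-in for real activations
x_t, t, target = make_training_pair(x0, rng)

zero_model = lambda x_t, t: np.zeros_like(x_t)    # hypothetical untrained "denoiser"
loss = flow_matching_loss(zero_model, x0, rng)
```

In a real setup the `zero_model` placeholder would be the trained denoiser, and the loss would be minimized with gradient descent over the collected activations.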

How they use GLP:

- On-manifold steering: When people edit an LLM (for example, pushing its activations toward “more positive” to get cheerful text), strong edits can shove the activations “off the road,” making outputs less fluent. After making an edit, the authors run a short diffusion “cleanup” with GLP that nudges the activation back onto the natural road while trying to keep the intended change. This is like spell-check for the LLM’s inner state.

- Checking quality: Because you can’t “look” at an activation like a photo, they use statistics:

  - Frechet Distance: a number that tells how close two clouds of points are; lower means closer. They compare GLP-generated activations to real ones.

  - PCA: a way to view high-dimensional data in 2D; they check if generated and real activations overlap.

  - Delta LM Loss: how much worse the LLM gets if you replace its activations with reconstructed ones; lower is better.

- Interpreting concepts with 1-D probing: They test if a single number from inside GLP (a “meta-neuron”) can predict yes/no facts (like “is this about baseball?”). If one number works well, it means the concept is nicely isolated in a single unit—great for interpretability.
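
The cleanup step used in on-manifold steering can be sketched as "partially noise, then denoise". The code below is a hedged illustration under assumptions: it uses a linear noising path and plain Euler integration, and `cleanup` and `toy_velocity` are hypothetical names. The toy velocity field pretends every natural activation is the single point `mu`, which is only useful for checking the integrator; a trained GLP would supply the real velocity prediction. The defaults `t_start=0.5` and `num_steps=20` mirror the example settings mentioned elsewhere in this summary.

```python
import numpy as np

def cleanup(edited, velocity_fn, t_start=0.5, num_steps=20, rng=None):
    """Noise an edited activation to level t_start, then integrate back to t = 0."""
    if rng is None:
        rng = np.random.default_rng(0)
    eps = rng.standard_normal(edited.shape)
    x = (1.0 - t_start) * edited + t_start * eps    # partial noising of the edited activation
    ts = np.linspace(t_start, 0.0, num_steps + 1)   # decreasing noise levels
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        v = velocity_fn(x, t_cur)                   # predicted dx/dt at the current noise level
        x = x + (t_next - t_cur) * v                # Euler step toward t = 0
    return x

# Toy stand-in for a trained model: all "natural" activations equal mu,
# so the exact velocity on the linear path is (x - mu) / t.
mu = np.array([1.0, -2.0, 0.5])
toy_velocity = lambda x, t: (x - mu) / t

steered = mu + np.array([5.0, 0.0, 0.0])            # an edit that pushes far from mu
cleaned = cleanup(steered, toy_velocity)
```

A smaller `t_start` adds less noise and so preserves more of the edit; a larger one pulls harder toward typical activations.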

Main Findings and Why They Matter

Here are the key results, explained simply:

- GLP learns realistic activations. It can generate activation patterns that are statistically very close to real ones (good Frechet Distance), even though it starts from noise. Surprisingly, when used to “reconstruct” activations, GLP hurts the LLM less than a strong baseline method (sparse autoencoders), meaning its edits stay more natural.

- More compute → better GLP → better results. As they make GLP bigger and train it longer, its training loss follows a smooth curve (a power law). This is not just a number: the improvements translate directly into better downstream outcomes (cleaner steering and better concept detection), so the training loss is a reliable predictor of usefulness.

- On-manifold steering improves fluency. When they steer for:

  - Sentiment (e.g., more positive text),

  - Features found by sparse autoencoders (SAEs),

  - Personas (e.g., certain behavioral styles),

  adding the GLP cleanup step keeps the outputs more fluent for the same strength of the intended change. In short: you get the effect you want with fewer weird or broken sentences.

- GLP “meta-neurons” isolate concepts well. In tests where a single number must predict 113 different yes/no tasks, GLP’s internal features beat raw LLM neurons and SAE features. This suggests GLP naturally organizes information into clearer, more interpretable units.

- Practical details: Around 20 diffusion cleanup steps were often enough to make generated activations very close to real ones, and the approach worked across different LLM sizes.
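
To make the meta-neuron finding concrete, here is a minimal sketch of scoring single units on a yes/no concept. It is a simplification, not the paper's protocol: instead of fitting a logistic-regression probe, it computes the AUC of each raw scalar directly (allowing a sign flip), and it scores on the same data it selects on. The function names and the synthetic data are hypothetical.

```python
import numpy as np

def auc_1d(feature, labels):
    """AUC of one scalar feature for binary labels, via pairwise comparisons."""
    pos = feature[labels == 1]
    neg = feature[labels == 0]
    diff = pos[:, None] - neg[None, :]
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()  # ties count as half a win
    auc = wins / (len(pos) * len(neg))
    return max(auc, 1.0 - auc)                         # a 1-D probe may flip the sign

def best_unit(features, labels):
    """Return the index and AUC of the single most predictive unit."""
    scores = np.array([auc_1d(features[:, j], labels)
                       for j in range(features.shape[1])])
    return int(scores.argmax()), float(scores.max())

rng = np.random.default_rng(0)
labels = np.arange(200) % 2                            # synthetic yes/no concept
features = rng.standard_normal((200, 5))               # five synthetic "units"
features[:, 3] = labels + 0.1 * rng.standard_normal(200)  # plant the concept in unit 3

unit, score = best_unit(features, labels)
```

A concept that is cleanly isolated in one unit shows up as a single column with an AUC near 1, while the remaining columns stay near chance.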

Why this matters: Many current tools assume concepts are simple straight-line directions in activation space. That can be limiting and sometimes breaks fluency. GLP avoids those assumptions by learning the actual shape of valid activations, giving a more flexible and reliable foundation for understanding and editing LLMs.

Implications and Potential Impact

- A scalable path to interpretability: Because GLP improves predictably with more compute—and its loss predicts real-world usefulness—researchers have a clear way to make interpretation and control better over time.

- Safer, smoother control: GLP acts like a safety rail for activation edits, helping keep the LLM’s inner state “on the road.” This can make behavior steering more reliable and reduce weird artifacts.

- Clearer concepts without hand-crafted rules: GLP’s meta-neurons often represent concepts cleanly in single units, which can make it easier to analyze what a model knows and how it thinks.

- Future directions: Modeling multiple tokens at once, conditioning GLP on cleaner signals, and covering more layers or activation types could unlock even richer tools. Also, measuring how “typical” an activation is (via GLP’s loss) might help detect unusual or out-of-distribution behavior.

In short, the paper shows that a generative model of an LLM’s inner signals can both explain and improve those signals, giving researchers a powerful, general-purpose tool to understand and gently steer LLMs without breaking their fluency.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what the paper leaves missing, uncertain, or unexplored.

- Multi-token modeling is absent: assess whether modeling cross-position structure (e.g., attention-mediated dependencies across tokens) improves manifold fidelity, steering robustness, and probing performance.

- Unconditional GLP only: evaluate conditioning on clean activations, layer index, token identity/position, or textual context to reduce information loss and enable targeted edits.

- Single-layer focus: train and evaluate GLPs across multiple layers (early, middle, late), different activation types (residual stream, MLP pre/post-activation, attention outputs), and joint multi-layer models to capture inter-layer dependencies.

- Limited model families: test GLP on larger and different architectures (e.g., Mistral, Gemma, GPT-style) to establish generalization and transfer robustness beyond Llama 1B/8B.

- Data distribution robustness: quantify GLP performance under domain shift (e.g., code, scientific text, low-resource languages) and OOD inputs; assess cross-lingual applicability.

- “On-manifold” claim unvalidated: develop and apply manifold-aware metrics (e.g., local intrinsic dimension, likelihood/score-based consistency tests) to verify that post-processed activations lie on the true activation manifold.

- Evaluation metrics limited: FD (Gaussian assumption) and PCA (top-2 visualization) may miss higher-order structure; introduce non-Gaussian, manifold, and neighborhood-preserving metrics and compare across them.

- Semantic preservation not quantified: measure how diffusion post-processing alters intended semantics (e.g., via controlled counterfactuals, invariance tests, and content similarity metrics) in addition to fluency.

- Hyperparameter sensitivity unexplored: systematically ablate t_start, sampling steps, noise schedules, and discretizations; provide principled selection/calibration procedures for different tasks and models.

- Efficiency and latency unmeasured: benchmark inference overhead of 20-step SDEdit-like sampling and explore budgeted variants (few-step samplers, distillation, consistency models) for real-time steering.

- Reconstruction comparison fairness: GLP “reconstruction” uses noised real activations (not a true compression bottleneck); compare against SAEs and other priors under matched information budgets and training data.

- Causal validity of meta-neurons: 1-D probing is correlational; perform causal intervention tests to verify that manipulating specific GLP units reliably induces the target concept in the source LLM.

- Mapping GLP features to LLM circuits: develop methods to align or attribute GLP meta-neurons to specific LLM neurons/features/circuits to increase mechanistic interpretability.

- Concept coverage and interpretability breadth: extend beyond 113 binary tasks with broader, multi-domain, multi-lingual benchmarks and human-in-the-loop evaluation; quantify disentanglement, sparsity, and compositionality of GLP units.

- Scaling law interpretation: the irreducible error floor (E ≈ 0.52) lacks explanation; disentangle limits due to data noise, model capacity, optimization, and layer choice; study data scaling (more than 1B activations) and longer training.

- Alternative generative priors: compare GLP to normalizing flows, VAEs, denoising autoencoders, and score-based models for activation priors in terms of fidelity, steering utility, and runtime.

- Conditioning with side information for editing: test conditional GLPs (e.g., classifier-free guidance on concept labels or prompts) to better preserve/steer desired semantics while correcting artifacts.

- Robustness under strong interventions: characterize failure modes when steering coefficients are extreme, including whether GLP suppresses or distorts the intended concept.

- Transfer across fine-tuning regimes: systematically evaluate base→instruct, SFT, and RLHF variants and other alignment pipelines to quantify transfer degradation and remedies.

- Training stability and reproducibility: report multiple seeds, confidence intervals for scaling exponents and downstream metrics, and sensitivity to architectural choices (width, expansion) and optimizers.

- Multi-layer joint modeling benefits: quantify improvements (if any) from modeling cross-layer correlations for steering and probing; explore parameter sharing and amortized models over layers.

- Beyond LLM-as-a-judge: complement LLM-judge fluency/concept scores with human evaluation and model-agnostic metrics (perplexity, grammaticality, factuality) to reduce judging bias.

- Safety and misuse analysis: GLP improves persona elicitation (including harmful personas); assess risk amplification, create guardrails, and evaluate effects on safety-critical behaviors.

- Theoretical account of “monosemanticity”: explain why diffusion/flow-matching on activations yields more isolated units; test hypotheses (e.g., implicit sparsity, denoising-as-regularization) with controlled experiments.

- Calibration of diffusion loss as utility proxy: verify monotonic relationships across more tasks and models; define thresholds for “good enough” GLP to guide early stopping/model selection.

- Sampling strategy design: explore alternative samplers (e.g., second-order integrators, adaptive step sizes) and their impact on manifold fidelity and semantic preservation.

- Layer-wise or model-wise reusable GLPs: assess whether a single GLP can generalize across neighboring layers or related models via fine-tuning or parameter-efficient adaptation.

Glossary

- 1-D probing: A probing method that tests whether single scalar features can predict binary concepts from activations. "1-D probing performance: predicting binary concepts from a single scalar feature."

- activation diffusion model: A diffusion-based generative model trained over neural activations rather than images or text. "developing the analogous activation diffusion model is not straightforward."

- activation manifold: The low-dimensional structure or subspace that real neural activations naturally occupy. "we need methods that naturally conform to the underlying structure of the activation manifold."

- AUC: Area Under the ROC Curve; a scalar metric for binary classifier performance. "reporting the final test AUC."

- beginning-of-sequence token: A special token that marks the start of an input sequence for sequence models. "except for the beginning-of-sequence token"

- compute-efficient frontier: The set of model checkpoints that achieve the best performance for a given compute budget. "checkpoints on the compute-efficient frontier,"

- cosine schedule: A learning rate schedule that follows a cosine decay pattern over training. "learning rate 5e-5, cosine schedule, and warmup ratio 0.01."

- Delta LM Loss: The increase in an LLM’s loss when original activations are replaced with reconstructed ones. "We next measure Delta LM Loss"

- denoiser: The neural network in diffusion models that predicts the velocity or noise to reverse the noising process. "We formulate our denoiser as a stack of feedforward MLP blocks"

- diffusion loss: The training objective minimized by the diffusion model, typically derived from the mismatch between predicted and target velocities/noise. "diffusion loss follows a smooth power law as a function of compute"

- diffusion sampling: The iterative reverse process that transforms noise (or a noised sample) into a denoised sample. "post-processing via diffusion sampling projects off-manifold activations back onto the natural manifold"

- diffusion steps: The discrete timesteps used during the reverse diffusion sampling process. "we use 1000 diffusion steps."

- DiffMean: A steering baseline that computes concept vectors as the difference in mean activations between contrast sets. "We steer using DiffMean (Marks & Tegmark, 2024; Belrose, 2023; Wu et al., 2025)"

- FLOPs: Floating-point operations; a measure of compute used to quantify training cost. "estimate FLOPs as C = 6ND,"

- flow matching: A diffusion-like training framework where the model learns a velocity field to match paths between data and noise. "We use flow matching (Liu et al., 2023; Albergo & Vanden-Eijnden, 2023; Lipman et al., 2023; Esser et al., 2024; Gao et al., 2024)"

- forward process: The diffusion process that gradually adds noise to data to produce training inputs. "At the core of diffusion is the forward process, which produces training data by adding Gaussian noise to real samples"

- Frechet Distance: A statistical distance between two multivariate Gaussian distributions used to evaluate sample quality. "Frechet Distance confirms this quantitatively."

- gated MLP: An MLP architecture with a multiplicative gate (e.g., SwiGLU) that modulates activations. "the gated MLP's expansion factor to an additional 2x over the model width"

- Generative Latent Prior (GLP): The paper’s diffusion-based meta-model trained to capture the distribution of LLM activations. "We call this model a Generative Latent Prior, or GLP."

- irreducible error: The asymptotic error floor that remains even with infinite compute or perfect modeling capacity. "with an estimated irreducible error of 0.52."

- L-BFGS: A quasi-Newton optimization algorithm often used for fitting logistic regression and other convex models. "using L-BFGS (1000 iterations)"

- L2 regularization: A penalty on the squared magnitude of weights to improve numerical stability and prevent overfitting. "we use L2 regularization which enables numerical stability"

- meta-model: A model trained on data derived from another model (e.g., activations), used as a prior or feature extractor. "the value of a meta-model lies in the trained model itself,"

- meta-neurons: Internal units of the meta-model whose activations correspond to semantically meaningful features. "these "meta-neurons" outperform both SAE features and raw LLM neurons on 1-D probing tasks,"

- mixed precision training: Training that uses lower-precision arithmetic (e.g., FP16/BF16) to improve memory and speed. "We also speed up our consumer via mixed precision training."

- off-manifold: Refers to activations that deviate from the typical distribution learned by the model. "post-processing via diffusion sampling projects off-manifold activations back onto the natural manifold"

- on-manifold steering: Steering that keeps edited activations within the model’s learned activation manifold. "like on-manifold steering"

- Pareto frontier: The set of solutions where improving one objective (e.g., concept strength) worsens another (e.g., fluency). "We plot the Pareto frontier of concept vs. fluency"

- PCA (Principal Component Analysis): A linear dimensionality reduction method used to visualize or analyze structure. "we use the Frechet Distance (Dowson & Landau, 1982) and PCA (Pearson, 1901)"

- Persona Vector: A steering method that adds learned vectors to induce specific behavioral traits in LLMs. "We apply GLP on top of the Persona Vector (Chen et al., 2025)"

- power law: A scaling relationship where performance improves as a power of compute. "diffusion loss follows a smooth power law as a function of compute"

- producer-consumer data pipeline: A data ingestion setup where one process produces and caches data while another consumes it for training. "we therefore implement a producer-consumer data pipeline,"

- residual connections: Skip connections that add a layer’s input to its output, aiding optimization in deep networks. "with residual connections (He et al., 2016)."

- residual stream: The running sum of residual pathways in a transformer layer stack from which activations are taken. "We extract activations from the residual stream at a given intermediate layer"

- SDEdit: A diffusion-based editing technique that starts sampling from a noised version of an input rather than pure noise. "we propose an activation-space analog of SDEdit (Meng et al., 2022)"

- sparse autoencoders (SAEs): Autoencoders trained with sparsity-inducing objectives to learn dictionary-like features. "such as PCA and sparse autoencoders"

- SwiGLU: A gated activation function combining SiLU and a linear unit, used in transformer MLPs. "Each block is a SwiGLU layer (Shazeer, 2020)"

- timestep conditioning: Conditioning the denoiser on the diffusion timestep to guide denoising behavior. "The only diffusion-specific modification needed is timestep conditioning (Ho et al., 2020)."

- unconditional model: A generative model that does not require auxiliary conditioning inputs like class labels. "The models we train are unconditional, meaning they do not need class labels or any other conditioning information during training."

- warmup ratio: The fraction of total training steps used to linearly increase the learning rate from zero to the base rate. "cosine schedule, and warmup ratio 0.01."
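
As a worked example for the Frechet Distance entry, the sketch below fits a Gaussian to each of two sample sets and evaluates the closed-form distance between the fits, $\lVert \mu_x - \mu_y \rVert^2 + \mathrm{Tr}\big(\Sigma_x + \Sigma_y - 2(\Sigma_x \Sigma_y)^{1/2}\big)$. It is an illustrative implementation, not the paper's evaluation code; the function name and the synthetic data are assumptions.

```python
import numpy as np

def frechet_distance(x, y):
    """Frechet distance between Gaussians fitted to sample sets x and y (rows = samples)."""
    mu_x, mu_y = x.mean(axis=0), y.mean(axis=0)
    cov_x = np.cov(x, rowvar=False)
    cov_y = np.cov(y, rowvar=False)
    # Tr((cov_x cov_y)^{1/2}) is the sum of square roots of the eigenvalues of cov_x @ cov_y.
    eigvals = np.linalg.eigvals(cov_x @ cov_y)
    tr_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    mean_term = np.sum((mu_x - mu_y) ** 2)
    return float(mean_term + np.trace(cov_x) + np.trace(cov_y) - 2.0 * tr_sqrt)

rng = np.random.default_rng(0)
real = rng.standard_normal((500, 4))     # stand-in for real activations
shifted = real + 2.0                     # same shape of cloud, shifted mean

fd_same = frechet_distance(real, real)
fd_shifted = frechet_distance(real, shifted)
```

Because only means and covariances enter the formula, two sample sets with matching first and second moments get a distance near zero even if their higher-order structure differs.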

Practical Applications

Practical Applications Derived from “Learning a Generative Meta-Model of LLM Activations”

Below are actionable applications that follow from the paper’s findings and methods. Each item names concrete use cases, links to sectors where relevant, notes potential tools/products/workflows, and calls out assumptions or dependencies that affect feasibility.

Immediate Applications

- On-manifold activation steering to preserve fluency at high control strength (software, consumer AI, customer support, marketing)

- Use GLP as a post-processing module that projects steered activations back onto the activation manifold, improving fluency without sacrificing target concept strength (e.g., sentiment, style, persona).

- Tools/products/workflows: “OnManifoldSteer()” runtime module integrated into inference servers (e.g., vLLM/nnsight plugins); configurable t_start and steps (e.g., 0.5, 20) for strength vs. preservation trade-offs; LLM-as-a-judge evaluation harness for concept–fluency frontier.

- Assumptions/dependencies: Requires activation hooks in the LLM (open weights or instrumented runtime); alignment between GLP’s training layer and intervention layer; modest inference overhead (multi-step denoising passes of an MLP).

- Upgrade existing SAE-based steering with GLP post-processing (interpretability, safety, enterprise AI)

- Wrap SAE decoder-based directions with GLP denoising to mitigate off-manifold artifacts and improve concept–fluency Pareto frontiers.

- Tools/products/workflows: “SAE+GLP Steering” pipeline; Neuronpedia/SAE direction selection → GLP denoising; batch scoring of fluency/concept via LLM judge.

- Assumptions/dependencies: SAE and GLP trained on comparable layers/model; GLP trained on similar activation distribution as the target deployment domain.

- Persona control with higher fluency (consumer AI, education, brand voice)

- Combine Persona Vectors with GLP to maintain fluent, coherent responses while eliciting desired personas (e.g., polite/formal, compassionate, fun).

- Tools/products/workflows: “Persona Control SDK” that calibrates steering coefficients and automatically tunes GLP t_start for minimal degradation.

- Assumptions/dependencies: Activation access; guardrails for disallowed personas; evaluation beyond LLM-as-a-judge for safety-critical domains.

- Sentiment and style control for content generation without retraining (marketing, communications)

- Apply DiffMean-style vectors for positive/neutral tone, adjust reading level or formality, and stabilize outputs using GLP to avoid stilted language at higher control strengths.

- Tools/products/workflows: Writer’s assistant plugins; campaign-specific tone packs; batch post-edit pipelines for large copy sets.

- Assumptions/dependencies: Steering vectors well-estimated for target domain; compute budget for GLP sampling on longer generations.

- Lightweight interpretability probes via GLP meta-neurons (ML research, risk & compliance audit)

- Use GLP’s internal “meta-neurons” for 1-D probing to locate and track concepts with high probe AUC—often outperforming raw neurons and SAEs—enabling targeted audits of model knowledge/biases.

- Tools/products/workflows: “Meta-Neuron Probe Explorer” to discover and visualize high-AUC concept neurons; drift dashboards monitoring probe performance across releases.

- Assumptions/dependencies: Concept labels/datasets for probing (e.g., 113 binary tasks); stable GLP checkpoints tied to base model versions to enable longitudinal tracking.

- Training-quality proxy for interpretability tooling (MLOps for interpretability)

- Use diffusion loss as a single scalar that predicts utility for steering and probing, guiding early stopping, model selection, and compute allocation.

- Tools/products/workflows: “GLP Trainer” with diffusion loss dashboards; power-law fits for planning scaling decisions; alerting when gains flatten.

- Assumptions/dependencies: The reported scaling holds in the user’s data/model regime; careful comparison across checkpoints on the compute-efficient frontier.

- Reconstruction-assisted activation editing with low Delta LM Loss (systems, latency-sensitive applications)

- When replacing or editing activations (e.g., for analysis or controlled transformations), use GLP denoising from noised inputs to keep edits on-manifold and limit perplexity penalties.

- Tools/products/workflows: “Activation Surgery” toolkit that injects controlled noise and GLP-denoises to re-stabilize activations post-edit.

- Assumptions/dependencies: Layer match; validation that small Delta LM Loss correlates with acceptable downstream quality for target tasks.

- Scalable activation data collection pipeline (infrastructure, research labs)

- Adopt the producer–consumer caching workflow (with vLLM and nnsight) to collect billion-scale activation corpora efficiently for GLP/SAE training.

- Tools/products/workflows: “Activation Producer” service with fixed-size buffers, mixed-precision consumer training; scheduling across corpora/layers.

- Assumptions/dependencies: Sufficient storage and GPU availability; data licensing for documents used to elicit activations; privacy/data governance policies.

- Cost-efficient interpretability gains without scaling the base LLM (cost optimization, smaller-model deployments)

- Use a moderately sized GLP to attain strong probing/steering performance on smaller base models, potentially offsetting the need to scale the base LLM for certain control/analysis tasks.

- Tools/products/workflows: “GLP-Boosted Small LLM” bundles; sidecar GLP services running on a single A100-equivalent for mid-sized deployment.

- Assumptions/dependencies: Target tasks amenable to activation-level control/interpretation; latency acceptable with GLP sampling.

- Compliance-ready output conditioning with minimal degradation (governance, policy, safety engineering)

- Apply on-manifold negative steering directions (e.g., for toxicity or unsafe advice reduction) and use GLP denoising to keep responses fluent and on-topic.

- Tools/products/workflows: “Compliance Steering Library” with curated negative directions; audit logs tracking concept–fluency tradeoffs at enforced thresholds.

- Assumptions/dependencies: High-quality, validated steering vectors; human-in-the-loop review for regulated domains; careful measurement beyond LLM judges.
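
Several of the applications above start from a steering vector. A DiffMean-style vector, as defined in the glossary, is the difference in mean activations between two contrast sets. The sketch below shows that computation and a negative steering edit on synthetic data; `diffmean_direction`, `steer`, and the arrays are hypothetical, and in practice the edited activation would then go through GLP denoising.

```python
import numpy as np

def diffmean_direction(pos_acts, neg_acts):
    """Difference between the mean activations of two contrast sets."""
    return pos_acts.mean(axis=0) - neg_acts.mean(axis=0)

def steer(activation, direction, coeff):
    """Add (coeff > 0) or subtract (coeff < 0) a concept direction."""
    return activation + coeff * direction

rng = np.random.default_rng(0)
base = rng.standard_normal((100, 4))                 # synthetic activations without the concept
concept_shift = np.array([3.0, 0.0, 0.0, 0.0])
with_concept = base + concept_shift                  # same activations with the concept added

direction = diffmean_direction(with_concept, base)
h = with_concept[0]
h_reduced = steer(h, direction, coeff=-1.0)          # negative steering removes the shift
```

The coefficient controls the strength of the edit; larger magnitudes are where off-manifold artifacts are most likely and where a cleanup step is most relevant.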

Long-Term Applications

- Multi-token activation modeling for sequence-level control (software, agents)

- Extend GLP to model cross-position structure, enabling edits that jointly consider multiple tokens/layers for more robust and subtle behavior control (e.g., long-horizon agent consistency).

- Tools/products/workflows: “Sequence GLP” with attention layers; sequence-aware on-manifold editing for multi-turn dialogues.

- Assumptions/dependencies: Additional research/training compute; new evaluation protocols for multi-token edits.

- Conditional GLP with clean-activation conditioning (precision editing, low information loss)

- Condition denoising on the clean activation to reduce information loss and achieve more targeted interventions than unconditional GLP.

- Tools/products/workflows: “cGLP Denoiser” for plug-and-play precision steering; adaptive t scheduling.

- Assumptions/dependencies: Architectural and training changes; careful regularization to avoid simply copying inputs.

- Activation typicality and OOD/jailbreak detection via GLP loss (safety, monitoring)

- Use diffusion/flow-matching loss or denoiser residuals as a typicality score to flag unusual activations (e.g., adversarial prompts, jailbreak states, catastrophic policies).

- Tools/products/workflows: “Activation Sentinel” anomaly detector at inference time; risk routing to human reviewers or stricter policies.

- Assumptions/dependencies: Robust mapping from loss to risk; calibration per domain/model; low-latency scoring.

- Cross-model transfer and concept distillation (model compression, safety alignment)

- Train GLP on a teacher model, extract meta-neurons, and transfer concept-localization signals to students for cheaper interpretability and safer behavior.

- Tools/products/workflows: “Interpretability Distiller” aligning student neurons/features to teacher meta-neurons; probe-based regularization during finetuning.

- Assumptions/dependencies: Stability of concept localization across architectures; alignment between layers.

- Automated circuit discovery and targeted patching (mechanistic interpretability)

- Combine high-AUC meta-neurons with causal interventions to map circuits and patch failure modes, aided by on-manifold denoising to avoid corruption during interventions.

- Tools/products/workflows: “Circuit Miner + GLP Surgery” platform; automated search over concepts → causal tests → safe edits.

- Assumptions/dependencies: New experimental suites for causal validation; integration with attribution/patching toolchains.

- Enterprise-grade guardrails that operate at the activation level (safety, compliance)

- Build activation-level policies that constrain models within approved manifolds for domains like finance or healthcare, minimizing performance regressions common with harder refusal policies.

- Tools/products/workflows: “Activation Policy Engine” with policy-specific concept vectors; certified guardrail catalogs per regulation.

- Assumptions/dependencies: Strong domain-specific validation; legal review; robust handling of edge cases and harms.

- KV/activation compression and caching with generative reconstruction (systems efficiency)

- Explore partial/noised logging of activations with GLP-based reconstruction to reduce memory/storage while keeping model quality within acceptable deltas.

- Tools/products/workflows: “Generative Activation Cache” for mid-layer states; knobs for t_start vs. quality.

- Assumptions/dependencies: Demonstrated correlation between Delta LM Loss and end-task quality; potential risks in long-context scenarios.

- Red-teaming via activation exploration (security)

- Systematically push activations along risky directions and use GLP to keep them on-manifold, revealing realistic but dangerous modes for safer training and policy design.

- Tools/products/workflows: “Activation Red Team Harness” generating high-fluency risky outputs under controlled settings; logging and mitigation testing.

- Assumptions/dependencies: Strict containment and governance; curated risky directions; human oversight.

- Cross-layer and multi-activation-type GLPs (richer control and diagnostics)

- Train GLPs for other activation types (e.g., attention outputs, MLP pre-activations) and multiple layers to enable layer-specific diagnostics and edits.

- Tools/products/workflows: “Layered GLP Suite” offering per-layer steering/analysis; dashboards of typicality and probe coverage by layer.

- Assumptions/dependencies: Larger activation datasets and compute; per-layer evaluation standards.

- Vendor-agnostic activation control via standardized hooks (ecosystem/policy)

- Develop standards for safe activation access in proprietary LLM runtimes to enable GLP-like guardrails and audits without full model access.

- Tools/products/workflows: “Activation API” standard; compliance certifications for activation-level control.

- Assumptions/dependencies: Industry collaboration and policy alignment; privacy/security mechanisms for activation telemetry.

- Personalized assistants with stable, auditably controlled personas (consumer AI, education)

- Maintain long-term, consistent, and auditable persona/values in tutors and assistants using on-manifold steering; ensure changes are controlled and revertible.

- Tools/products/workflows: “Persona Ledger” tracking persona vectors and GLP settings; parental/educator controls.

- Assumptions/dependencies: Safeguards against harmful persona drift; user consent and transparency.

- Planning and budgeting for interpretability via scaling laws (MLOps, management)

- Use observed power-law scaling of diffusion loss to forecast compute budgets for interpretability improvements and to set internal KPIs tied to downstream task gains.

- Tools/products/workflows: “Interpretability Scaling Planner” that links loss targets to expected steering/probing performance.

- Assumptions/dependencies: Stability of scaling coefficients across domains/models; ongoing empirical validation.
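
A budgeting calculation of this kind might look like the following sketch. The compute estimate `C = 6ND` and the irreducible error of 0.52 come from the paper as quoted in this summary; the saturating form `loss = E + A * C**(-alpha)` is an assumed functional form, the values of `scale` and `exponent` are placeholders rather than fitted coefficients, and reading `D` as the number of training examples is also an assumption.

```python
import math

def training_flops(num_params, num_examples):
    """Estimate training compute as C = 6 * N * D."""
    return 6.0 * num_params * num_examples

def predicted_loss(compute, irreducible=0.52, scale=1.0e3, exponent=0.2):
    """Assumed saturating power law; scale and exponent are placeholder values."""
    return irreducible + scale * compute ** (-exponent)

small_budget = training_flops(num_params=1e8, num_examples=1e9)
large_budget = training_flops(num_params=1e9, num_examples=1e9)

loss_small = predicted_loss(small_budget)
loss_large = predicted_loss(large_budget)
```

With coefficients fitted to real checkpoints, the same two functions would turn a target loss into a required compute budget, and the irreducible term marks the floor that extra compute cannot cross.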

Notes on Cross-Cutting Assumptions and Dependencies

- Model access: Most applications require read/write hooks into internal activations at specific layers; closed APIs may be incompatible without vendor support.

- Distribution match: GLP performance depends on training data (e.g., FineWeb) and the target domain; retraining or domain-adaptive finetuning may be needed for specialized sectors (healthcare, finance, robotics).

- Compute and latency: GLP denoising adds per-token compute (e.g., ~20 denoising steps); acceptable for many server-side deployments but requires profiling and potential optimization.

- Evaluation robustness: LLM-as-a-judge scoring should be complemented with human evaluation and domain-specific metrics, especially in safety-critical and regulated settings.

- Safety and governance: Activation steering can increase or decrease risky behaviors; deployment should enforce approved directions and thresholds, with monitoring and human oversight.
