Doc-to-LoRA: Learning to Instantly Internalize Contexts

This presentation introduces Doc-to-LoRA, a novel hypernetwork approach that enables language models to instantly internalize long documents without the memory and latency costs of traditional in-context learning. By generating LoRA adapters in a single forward pass, this method allows models to handle contexts beyond their native window limits while dramatically reducing inference overhead. The talk explores the core mechanism, experimental validation across multiple QA tasks, and practical implications for real-time document understanding and personalized AI applications.

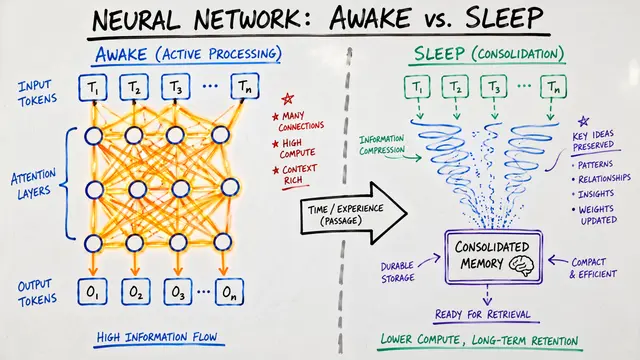

Language models consume long documents the way we might read a book while holding every page open at once. The memory cost is staggering, the latency punishing. Doc-to-LoRA offers a different path: distill the entire context into a compact adapter in a single pass, then answer questions without the document in the prompt at all.

The researchers identified a fundamental bottleneck. Every time you query a language model about a document, it reprocesses the entire context through its attention mechanism. For documents exceeding the native context window, this becomes not just slow but infeasible. Standard context distillation offers a solution but demands expensive per-document training.

Doc-to-LoRA eliminates that training step entirely.

The approach is elegantly simple. Feed the document through the language model once to extract token activations. A hypernetwork, based on the Perceiver architecture, consumes those activations and predicts a low-rank adapter for every layer of the model. Apply those adapters, and the model behaves as though the document is still in context, but without the memory or latency overhead.
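To make that pipeline concrete, here is a toy sketch in plain Python. It is not the authors' implementation: the class `ToyHypernetwork`, the helper `apply_lora`, the use of one set of latent queries per layer, and the way the attended summary is split into the two low-rank factors are all assumptions made for illustration, and the weights are random rather than trained. What it does show is the shape of the idea: a fixed set of latent queries cross-attends over token activations of any length and emits adapters of a fixed size, which are then added to a frozen weight as a low-rank update.

```python
import math
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def softmax(row):
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def rand_matrix(rows, cols, rng, scale=0.1):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


class ToyHypernetwork:
    """Hypothetical Perceiver-style hypernetwork with untrained, random latents."""

    def __init__(self, n_layers, d_model, rank, seed=0):
        rng = random.Random(seed)
        self.d_model = d_model
        self.rank = rank
        # Assumption: 2 * rank latent queries per layer,
        # the first `rank` produce factor A and the rest produce factor B.
        self.latents = [rand_matrix(2 * rank, d_model, rng) for _ in range(n_layers)]

    def predict_adapters(self, activations):
        """activations: n_tokens x d_model. Returns one (A, B) pair per layer."""
        keys_t = transpose(activations)  # d_model x n_tokens
        scale = math.sqrt(self.d_model)
        adapters = []
        for latents in self.latents:
            # Cross-attention: latent queries attend over every token activation.
            scores = matmul(latents, keys_t)  # 2*rank x n_tokens
            weights = [softmax([s / scale for s in row]) for row in scores]
            summary = matmul(weights, activations)  # 2*rank x d_model
            a = summary[: self.rank]  # rank x d_model
            b = transpose(summary[self.rank:])  # d_model x rank
            adapters.append((a, b))
        return adapters


def apply_lora(w, a, b, x):
    """Return (W + B A) x for a column vector x, without forming W + B A."""
    base = matmul(w, x)
    low_rank = matmul(b, matmul(a, x))
    return [[p[0] + q[0]] for p, q in zip(base, low_rank)]
```

Because the number of latent queries is fixed, a 5-token document and a 50-token document yield adapters of identical shape. That is the property that lets the document drop out of the prompt once the adapters have been generated.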

The results are striking. On question answering tasks like SQuAD, Doc-to-LoRA matches or exceeds the accuracy of full context distillation while using a fraction of the context length. The middle panel reveals the real advantage: update latency measured in milliseconds, not seconds. On the right, memory overhead barely registers compared to the baseline. Prompt compression methods like LLMLingua-2 require progressively more context to maintain accuracy, shown by the gray dots marching rightward. Doc-to-LoRA holds steady.

This opens practical doors. Imagine a personalized assistant that updates its understanding of you in real time, or a mobile device that handles long legal documents without cloud infrastructure. The method excels at text but has not yet been extended to images or audio. The authors see this as a starting point, not a final form.

Doc-to-LoRA proves that context does not need to live in the prompt to shape the answer. By learning to distill documents into adapters instantly, the approach collapses the cost of long-context understanding into a single forward pass. Visit EmergentMind.com to explore the full paper and create your own research videos.