The World Is Bigger: Embedded Agents and Continual Learning

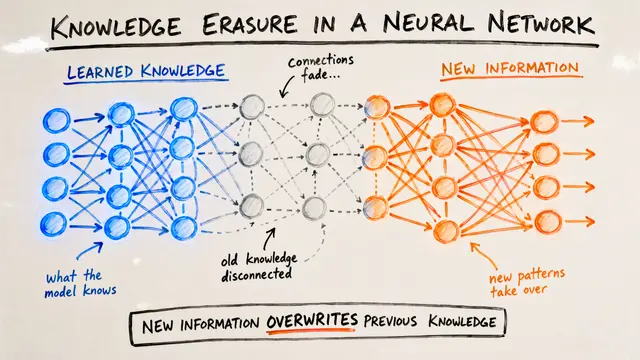

This presentation explores a rigorous formalization of continual learning through the 'big world hypothesis,' where agents are modeled as finite automata embedded within computationally universal environments. The work introduces interactivity, a capacity-relative measure derived from algorithmic complexity, to quantify the necessity of continual adaptation. Through formal proofs and empirical evaluation, the authors demonstrate that agents who cease learning are provably suboptimal and reveal surprising differences in how deep linear versus nonlinear networks sustain adaptive behavior.Script

No matter how powerful an agent becomes, the environment it inhabits will always be vastly more complex. This paper formalizes that intuition by embedding agents as finite automata within computationally universal environments, proving that the world really is bigger.

The authors model agents as local automata whose entire state fits within a finite region, interacting with the environment only through a bounded input-output interface. Because the agent's capacity is always finite while the environment's state space remains unbounded, we get a structural instantiation of the big world hypothesis, no ad hoc constraints required.

Interactivity measures the algorithmic complexity of an agent's future behavior minus the complexity when conditioned on its past. High interactivity means the agent produces unpredictable sequences that remain predictable from history, formalizing the plasticity-stability trade-off at the heart of continual learning.

Because algorithmic complexity is uncomputable, the authors approximate interactivity using prediction error from a learned value function. A bi-level meta-gradient process updates the agent's policy to maximize the difference between static and dynamic prediction error, self-generating the non-stationarity needed for continual adaptation.

Deep nonlinear networks fail catastrophically in this setting, with interactivity collapsing rapidly under bi-level optimization. Deep linear networks, however, sustain high interactivity and benefit measurably from increased capacity, revealing that continual adaptation imposes unique representational demands beyond standard reinforcement learning tasks.

By proving that agents who stop learning are provably suboptimal in a big world, this work offers a rigorous foundation for continual reinforcement learning without relying on external task boundaries. To explore the full paper and generate your own video summaries, visit EmergentMind.com.