Refute-or-Promote: An Adversarial Stage-Gated Multi-Agent Review Methodology for High-Precision LLM-Assisted Defect Discovery

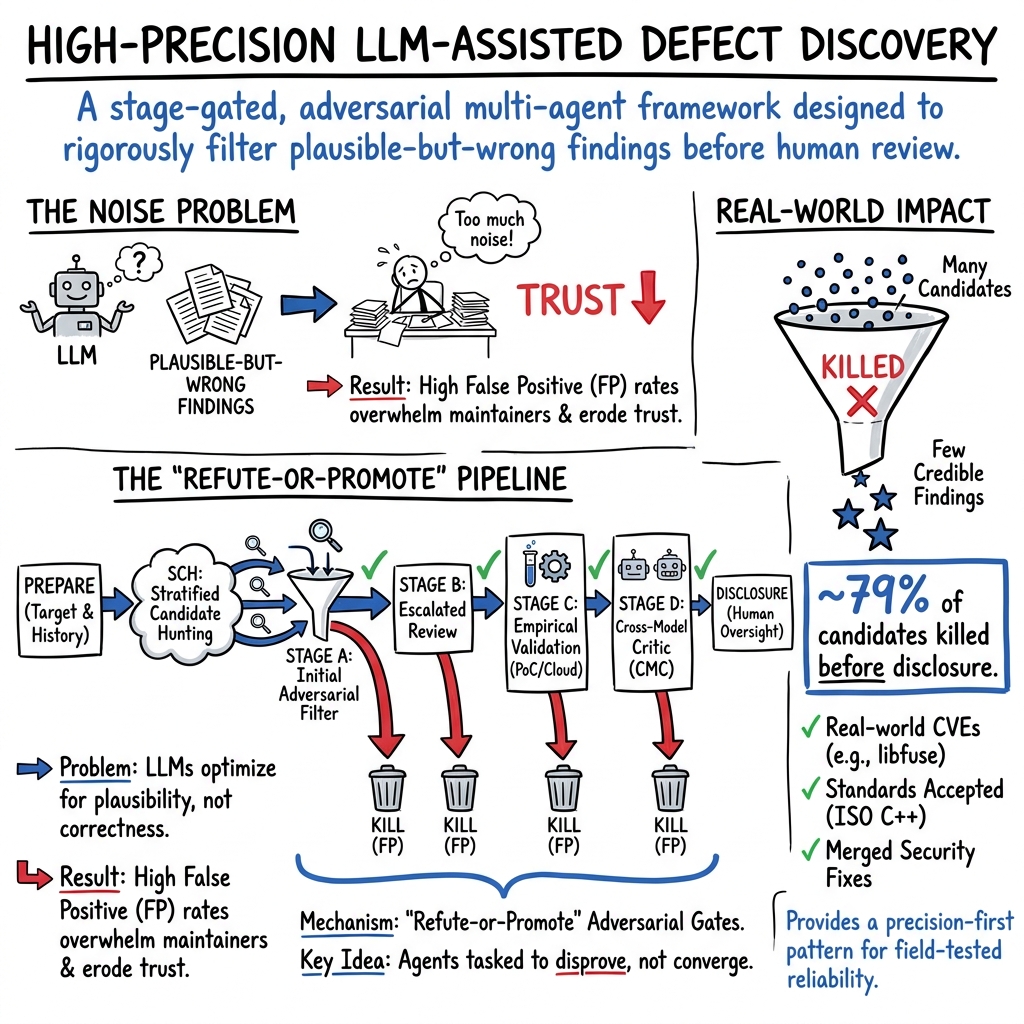

Abstract: LLM-assisted defect discovery has a precision crisis: plausible-but-wrong reports overwhelm maintainers and degrade credibility for real findings. We present Refute-or-Promote, an inference-time reliability pattern combining Stratified Context Hunting (SCH) for candidate generation, adversarial kill mandates, context asymmetry, and a Cross-Model Critic (CMC). Adversarial agents attempt to disprove candidates at each promotion gate; cold-start reviewers are intended to reduce anchoring cascades; cross-family review can catch correlated blind spots that same-family review misses. Over a 31-day campaign across 7 targets (security libraries, the ISO C++ standard, major compilers), the pipeline killed roughly 79% of 171 candidates before advancing to disclosure (retrospective aggregate); on a consolidated-protocol subset (lcms2, wolfSSL; n=30), the prospective kill rate was 83%. Outcomes: 4 CVEs (3 public, 1 embargoed); LWG 4549 accepted to the C++ working paper; 5 merged C++ editorial PRs; 3 compiler conformance bugs; 8 merged security-related fixes without CVE; an RFC 9000 errata filed under committee review; and 1+ FIPS 140-3 normative compliance issues under coordinated disclosure -- all evaluated by external acceptance, not benchmarks. The most instructive failure: ten dedicated reviewers unanimously endorsed a non-existent Bleichenbacher padding oracle in OpenSSL's CMS module; it was killed only by a single empirical test, motivating the mandatory empirical gate. No vulnerability was discovered autonomously; the contribution is external structure that filters LLM agents' persistent false positives. As a preliminary transfer test beyond defect discovery, a simplified cross-family critique variant also solved five previously unsolved SymPy instances on SWE-bench Verified and one SWE-rebench hard task.

Explain it Like I'm 14

Plain-English Summary of “Refute-or-Promote: An Adversarial Stage-Gated Multi-Agent Review Methodology for High-Precision LLM-Assisted Defect Discovery”

1) What is this paper about?

This paper is about making AI better at finding real bugs in important software without wasting people’s time with false alarms. Today, large language models (LLMs) can point out possible problems in code, but they also make a lot of believable mistakes. The authors introduce a careful, step-by-step review process called “Refute-or-Promote” in which AI agents must try to disprove each candidate bug report before any claim is sent to real developers.

2) What questions are the authors trying to answer?

The paper focuses on simple, practical questions:

- How can we reduce the number of false bug reports from AI so that only strong, real findings reach developers?

- Can we make AI reviewers challenge each other in a smart way that catches common mistakes?

- Does using different types of AI models (not just copies of the same one) make reviews more reliable?

- Do real-world tests (like actually running code) catch errors that AI “reasoning” misses?

3) How does their method work?

Think of their process like a science fair mixed with a courtroom and MythBusters:

- First, “hunters” propose possible bugs.

- Then, at several “gates,” other agents act like tough judges whose job is to prove the bug claim wrong. A claim moves forward only if it survives the attack.

Here’s the approach in everyday terms:

- Stratified Context Hunting (SCH): Instead of one AI looking everywhere, several AIs look in different places and ways. One studies past bugs. Another focuses on “hot” files that changed a lot. Another reads the official rules/specs. They also look at different parts of the code so they don’t duplicate work. After a round, they learn from what passed or failed and try again, smarter than before.

- Adversarial kill mandates: Reviewers are told: “Your job is to kill this claim if you can.” This reduces “agreeableness” and forces hard checks rather than friendly nods.

- Context asymmetry: Some reviewers get only the basic claim (cold-start), not the earlier arguments. This helps avoid “anchoring,” where people (or AIs) get stuck believing the first strong-sounding explanation.

- Cross-Model Critic (CMC): A different AI model family (like a second opinion from a totally different expert) does a final check to catch errors that similar models tend to make together.

- Mandatory empirical test: Before any report goes public, they must actually run tests or proofs of concept (PoCs). Smart arguments aren’t enough; evidence is required.
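The kill mandate and context asymmetry described above can be pictured as two different prompts built from the same candidate. The following is a minimal sketch, not the authors' implementation: the `Candidate` class, its fields, and `reviewer_prompt` are hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    """A candidate finding plus what earlier agents said about it (hypothetical shape)."""
    claim: str                                   # the bare claim under review
    location: str                                # where in the code it applies
    prior_arguments: list[str] = field(default_factory=list)  # earlier reasoning


# Wording taken from the summary's description of the kill mandate.
KILL_MANDATE = "Your job is to kill this claim if you can."


def reviewer_prompt(candidate: Candidate, cold_start: bool) -> str:
    """Build a reviewer prompt; cold-start reviewers never see prior arguments."""
    lines = [
        KILL_MANDATE,
        f"Claim: {candidate.claim}",
        f"Location: {candidate.location}",
    ]
    if not cold_start:
        # Informed reviewers also receive the earlier discussion.
        lines += [f"Earlier argument: {arg}" for arg in candidate.prior_arguments]
    return "\n".join(lines)


example = Candidate(
    claim="length field is trusted without a bounds check",   # made-up claim
    location="parser.c",                                      # made-up location
    prior_arguments=["An earlier agent argued the caller never validates it."],
)
print(reviewer_prompt(example, cold_start=True))
```

Because the cold-start prompt leaves out the earlier arguments, a persuasive but wrong explanation from a previous stage cannot anchor that reviewer.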

To make the stages easy to picture, here’s a small table introducing them:

| Stage | Who’s involved | What they do | Why it helps |
|---|---|---|---|
| Candidate Generation (SCH) | Multiple “hunters” with different info and scopes | Suggest possible bugs and self-criticize | Diversity reduces blind spots; early self-checks trim weak ideas |
| Stage A & B (Adversarial review) | Creative agents vs. adversarial agents | One side argues “it’s real,” the other tries to disprove | Challenge prevents easy mistakes and groupthink |
| Stage C (Validation) | Test runners + severity reviewers | Actually run code/tests; adjust severity (CVSS) | Real-world checks catch reasoning errors |
| Stage D (Cross-Model Critic) | A different model family | Independent final critique with minimal hints | Catches correlated errors shared by similar models |
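The stage order in the table can be sketched as a chain of gates in which any single refutation stops promotion. This is an illustrative sketch under stated assumptions: the gate functions below are hypothetical stand-ins that return fixed verdicts, whereas real gates would call LLM agents or run tests.

```python
from typing import Callable, NamedTuple


class Verdict(NamedTuple):
    killed: bool   # True if the gate refuted the candidate
    reason: str    # short explanation for the log


# A gate receives the candidate claim and tries to refute it.
Gate = Callable[[str], Verdict]


def refute_or_promote(claim: str, gates: list[tuple[str, Gate]]) -> tuple[bool, list[str]]:
    """Run the gates in order; one successful refutation stops promotion."""
    log: list[str] = []
    for stage, gate in gates:
        verdict = gate(claim)
        log.append(f"{stage}: {'KILL' if verdict.killed else 'pass'} ({verdict.reason})")
        if verdict.killed:
            return False, log      # killed: later stages never run
    return True, log               # survived every gate


# Stand-in gates (hypothetical, fixed answers for demonstration only).
def adversarial_review(claim: str) -> Verdict:
    return Verdict(False, "no refutation found")


def empirical_validation(claim: str) -> Verdict:
    return Verdict(True, "proof of concept did not reproduce")


def cross_model_critic(claim: str) -> Verdict:
    return Verdict(False, "different model family found no flaw")


GATES = [
    ("Stage A/B adversarial review", adversarial_review),
    ("Stage C validation", empirical_validation),
    ("Stage D cross-model critic", cross_model_critic),
]

promoted, log = refute_or_promote("made-up out-of-bounds read claim", GATES)
print(promoted)
for line in log:
    print(line)
```

In this run the candidate passes adversarial review but is killed at validation, so the cross-model critic is never consulted.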

Important definitions explained simply:

- CVE: A public ID for a confirmed security problem, used worldwide to track and fix vulnerabilities.

- Severity (CVSS): A standard way to score how dangerous a bug is (higher = worse).

4) What did they find, and why does it matter?

Over 31 days across 7 real targets (security libraries, the ISO C++ standard text, and compilers), their process:

- Filtered out about 79% of 171 initial bug candidates before any disclosure (on the consolidated-protocol subset of two targets, lcms2 and wolfSSL, with 30 candidates, the prospective kill rate was 83%).

- Produced real-world outcomes, including:
  - 4 CVEs (3 public, 1 still under embargo),
  - a defect report accepted into the C++ working paper (LWG 4549),
  - 5 merged editorial fixes to the C++ draft,
  - 3 compiler correctness bugs,
  - and 8 merged security-related fixes without CVEs.

- Showed that adversarial review often reduced overblown severity scores to more realistic levels.

- Showed preliminary transfer beyond defect discovery: a simplified cross-family critique variant solved five previously unsolved SymPy instances on SWE-bench Verified and one SWE-rebench hard task.
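As a rough check on the headline numbers, the rounded kill rates can be turned back into approximate counts. The source gives only rounded percentages, so the counts below are implied estimates rather than reported figures, and `implied_counts` is a helper name introduced here.

```python
def implied_counts(total: int, kill_rate: float) -> tuple[int, int]:
    """Return (killed, surviving) counts implied by a rounded kill rate."""
    killed = round(total * kill_rate)
    return killed, total - killed


# Full campaign: about 79% of 171 candidates killed.
print(implied_counts(171, 0.79))   # (135, 36)

# Consolidated-protocol subset: 83% of 30 candidates killed.
print(implied_counts(30, 0.83))    # (25, 5)
```

So roughly 36 of the 171 candidates, and about 5 of the 30 in the subset, would have survived to disclosure.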

The biggest lesson came from a failure: more than 80 AI agents (including 10 specifically assigned as reviewers) all agreed there was a serious cryptography bug in OpenSSL’s CMS module. It turned out not to exist. One simple real test proved them all wrong. This led the authors to make testing a mandatory step.

Why this matters:

- Developers and security teams are overwhelmed by AI-generated noise. High-precision filtering keeps trust high and work manageable.

- “Everyone agrees” is not proof. Even many AIs can share the same wrong bias. Independent tests and cross-model checks are critical.
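The lesson that agreement is not proof can be written as a small decision rule: reviewer votes never promote a finding on their own, and unanimity is recorded as a warning flag. This is a minimal sketch of that idea only; the `decide` function and `Decision` names are hypothetical, and it models just the final empirical gate, not the earlier review stages.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    PROMOTE = "promote"
    KILL = "kill"
    NEEDS_TEST = "needs empirical test"


def decide(reviewer_votes: list[bool], test_confirms: Optional[bool]) -> tuple[Decision, bool]:
    """Return (decision, unanimity_flag).

    reviewer_votes: True means that reviewer believes the finding is real.
    test_confirms:  outcome of actually running a test or PoC, or None if not run.
    """
    # Unanimous agreement is flagged for extra scrutiny, never treated as proof.
    unanimous = len(reviewer_votes) > 1 and len(set(reviewer_votes)) == 1
    if test_confirms is None:
        return Decision.NEEDS_TEST, unanimous
    return (Decision.PROMOTE if test_confirms else Decision.KILL), unanimous


# Ten reviewers endorse the finding, but the empirical test fails.
print(decide([True] * 10, test_confirms=False))
# Same votes with no test run yet: the finding cannot be promoted.
print(decide([True] * 10, test_confirms=None))
```

With ten endorsements and a failed test, the rule still returns a kill, which mirrors the OpenSSL CMS case described above.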

5) What’s the bigger impact?

If we want to use AI responsibly in serious areas (security, standards, safety-critical software), we need more than clever outputs—we need reliable processes that:

- Encourage skepticism (try to disprove, not just improve),

- Mix different viewpoints (different contexts and different model families),

- Require real-world evidence (don’t ship without a test),

- Keep a human in charge for judgment calls.

The authors argue this reliability pattern could help in other AI tasks where truth can be checked (for example, automated code repairs, scientific claims with experiments, or formal spec conformance). The key takeaway is simple but powerful: don’t trust AI consensus alone. Design the system so disagreement and testing are built-in.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored, framed so future researchers can act on it.

- Causal attribution of components: perform preregistered leave-one-out and combination ablation studies of SCH, kill mandates, context asymmetry, CMC, and the empirical gate to quantify each component’s effect on FP/TP/precision/recall and time-to-decision.

- Recall measurement: establish recall on corpora with ground-truth vulnerabilities (e.g., seeded bugs, held-out CVEs, time-sliced repos) to quantify what the precision-oriented pipeline misses.

- Controlled baselines: compare against cooperative debate, ensemble vote, verifier–patch loops, and single-agent systems under identical targets, budgets, and acceptance criteria.

- Stage specialization hypothesis: test whether Stages A–D truly target distinct failure classes via controlled experiments and inter-rater agreement on stage-attribution of kills.

- Decision policy transparency: formalize, publish, and evaluate the orchestrator’s routing criteria and promotion/kill thresholds to enable reproducible decision-making.

- Operator dependence: replicate the study with multiple independent operators (varying expertise) and blinded orchestration to quantify human-in-the-loop variance and bias.

- Run-to-run variance: assess nondeterminism by re-running the same targets with different seeds, contexts, and sampling parameters; report variance in outcomes and stage decisions.

- Model/version drift: evaluate reproducibility across LLM versions and vendors; quantify robustness of outcomes to model changes and specify version pinning best practices.

- Cross-family critic design: systematically evaluate which model-family pairings most reduce correlated errors; quantify inter-family error correlation and “critic diversity” requirements.

- CMC cost–benefit: measure marginal precision gains versus added cost/latency and derive policies for when to invoke cross-family critique (e.g., only on high-severity or high-uncertainty cases).

- Context asymmetry efficacy: run randomized controlled trials comparing cold-start vs informed reviewers to quantify anchor-bias reduction and net impact on FP/TN/latency.

- SCH effectiveness: isolate and benchmark Stratified Context Hunting against alternative candidate-generation methods (random sampling, pure RAG, hotspot-only) on yield and quality.

- Unanimity-as-warning: develop and test an automatic “unanimity risk” detector that triggers extra scrutiny or resurrection attempts when same-family agreement is unanimous.

- Resurrection agent: implement and evaluate the proposed context-isolated confirm-mandate agent to recover false negatives after unanimous kills; measure rescue rate and false resurrection rate.

- Partial-kill handling: study convergence, cycling risk, and stopping rules when partially refuted candidates re-enter earlier stages; propose formal termination criteria.

- CVSS calibration reliability: benchmark LLM-based severity calibration against human experts and canonical scoring; measure inter-annotator agreement and common miscalibration patterns.

- False-positive/false-negative taxonomy: map observed failure modes to detection mechanisms and create diagnostic triage rules that dynamically allocate reviewers/tests by predicted failure class.

- Negative transfer controls: design guardrails to prevent cross-domain reasoning habits (e.g., spec-only reasoning) from contaminating security workflows; test domain-adaptive prompts/checks.

- Empirical gate scope: define policies for findings that are hard to reproduce locally (e.g., platform-specific, resource-heavy); evaluate remote testbed fidelity and false confirmation risks.

- PoC correctness checks: automate detection of “PoC self-contamination” and other empirical pitfalls (e.g., measuring target signals vs PoC artifacts) with invariant checks and differential tests.

- Coverage complementarity: quantify how this precision-first pipeline integrates with coverage-oriented sweeps (e.g., AISLE) by running coordinated studies on shared targets and measuring union/intersection of findings.

- Target selection bias: analyze how target characteristics (size, language, churn) affect outcomes; create a standardized target suite to reduce selection confounds.

- Domain generalization: test beyond C/C++ crypto/specs—e.g., Rust, Java, web frameworks, kernel subsystems, protocol state machines—to assess transferability and required adaptations.

- Language/toolchain diversity: evaluate pipeline behavior on memory-safe languages and with hybrid inputs (static analysis alerts, fuzzing discoveries) to see if kill gates still add value.

- Ethics and safety of PoCs: specify and evaluate sandboxing, isolation, and data-handling policies for cloud VM execution; quantify risks and mitigation effectiveness.

- Refusal asymmetry: measure over-refusal systematically across model tiers and vendors, tasks, and prompts; study its impact on latency and propose authorization-propagation protocols.

- External acceptance as metric: validate “maintainer merge/CVE/committee acceptance” as a proxy for correctness by independent expert audit; analyze lag and non-technical rejection confounds.

- Human effort accounting: report end-to-end human time, review depth per stage, and training required; derive cost–precision–throughput tradeoff curves (not just API spend).

- Scaling studies: evaluate orchestration at team scale and with higher compute budgets (e.g., parallel reviewers, larger cross-family panels); characterize diminishing returns.

- Resource allocation policies: design and test scheduling heuristics (e.g., allocate senior-tier reviews to high-severity/high-uncertainty candidates) and measure overall pipeline efficiency.

- Robustness to adversarial model behavior: articulate a threat model for agent collusion, prompt injection in retrieved context, and jailbreaks; perform red-team evaluations of gate robustness.

- Data leakage checks: ensure findings are not memorized from training data by using post-cutoff commits and tracking novelty; quantify leakage risk across model families.

- Benchmark alignment: extend preliminary SWE-bench results to full leaderboards with standard splits; report comparable metrics and ablations to situate the approach among peers.

- Interoperability with static/dynamic tools: rigorously evaluate how kill gates filter SAST/DAST/fuzzer outputs versus LLM-proposed candidates, including precision gains and triage cost.

- Stage calibration across domains: define domain-specific validation oracles (e.g., conformance tests for specs, formal checks for proofs) and measure how stage thresholds should adapt.

- Decision logging and reproducibility: release more complete scrubbed logs and synthetic replicas (with seeded vulnerabilities) to enable third-party reproduction without embargo constraints.

- Time-to-disclosure metrics: track and optimize elapsed time per successfully disclosed issue, including iteration counts per stage and wait states (e.g., cloud VM provisioning).

- Model selection policies: develop principled criteria for choosing workhorse vs senior-tier agents per stage and per class of candidate; quantify ROI of tier upgrades.

- Parameter sensitivity: ablate context length, RAG quality, and prompt formats to identify minimum viable context and the conditions under which context asymmetry breaks down.

- Failure analysis of killed campaigns: provide detailed post-mortems for V8/Chrome and Langflow runs to extract generalizable lessons and rules for future targets.

- Governance and stopping criteria: define formal policies for when to cease investigation on a candidate (or a target) to avoid sunk-cost fallacy while minimizing false negatives.
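The first item in the list above asks for leave-one-out and combination ablations over five components. As a sketch of how such an experiment grid might be enumerated (the component labels are shorthand chosen here, and nothing below reflects an experiment the authors ran):

```python
from itertools import combinations

# Shorthand labels for the five components named in the ablation item.
COMPONENTS = ("SCH", "kill_mandate", "context_asymmetry", "CMC", "empirical_gate")


def leave_one_out(components=COMPONENTS):
    """Yield (removed, remaining) for each single-component ablation."""
    for removed in components:
        yield removed, tuple(c for c in components if c != removed)


def all_subsets(components=COMPONENTS):
    """Yield every combination of components, from none enabled to all enabled."""
    for k in range(len(components) + 1):
        yield from combinations(components, k)


for removed, remaining in leave_one_out():
    print(f"without {removed}: {remaining}")

print(len(list(all_subsets())))   # 32 configurations in the full grid
```

Five leave-one-out runs are cheap compared with the 32-configuration full grid, which is one reason a study might start with the former.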

Practical Applications

Immediate Applications

The following use cases can be deployed with today’s tools and infrastructure, using the paper’s stage-gated adversarial pipeline (Stratified Context Hunting, kill mandates, Cross-Model Critic, and mandatory empirical validation).

- Software security engineering (industry, software)
  - Deploy an adversarial LLM triage pipeline for vulnerability discovery to cut false positives before responsible disclosure.
  - Concrete implementations: a “Refute-or-Promote Orchestrator” service; a GitHub/GitLab Action that routes candidate findings through Stage A–D; CI tasks that spin up sandboxed VMs to run PoCs; Jira integrations for promotion/kill decisions.
  - Dependencies/assumptions: access to at least two LLM model families; sandboxed PoC infrastructure (cloud VMs, containers); a test harness or repro path; human orchestrator oversight; organizational CVD (coordinated vulnerability disclosure) process.

- Bug bounty and vulnerability intake (industry, policy)
  - Platform-side pre-triage for submissions using adversarial kill-gates and empirical proof requirements to reduce maintainer burden and spam.
  - Concrete implementations: a HackerOne/Bugcrowd plugin that enforces a validation gate and cross-family critique before forwarding to vendors.
  - Dependencies/assumptions: legal authorization to run PoCs; standardized submission templates that require repro artifacts; policies allowing automated pre-triage.

- DevSecOps/SAST vendors: false-positive reduction modules (software, security)
  - Add “adversarial validation” and “cross-model critic” stages to filter SAST/LLM alerts and recalibrate severity (CVSS) downward when warranted.
  - Concrete implementations: a pipeline component that re-checks tool findings under cold-start and cross-family reviewers; severity calibration agents integrated into ticketing.
  - Dependencies/assumptions: reproducible build/test environments; model-family diversity; minimal-context prompts to avoid anchoring; integration with existing CI/CD.

- Open-source project governance (industry, daily practice)
  - Project-side submission policies requiring empirical validation and cold-start reviewer sign-off for AI-generated bug reports.
  - Concrete implementations: CONTRIBUTING.md templates mandating PoC evidence; a lightweight reviewer bot that applies Stage A/B with cross-family checks on PRs/issues.
  - Dependencies/assumptions: access to at least one alternative model family (can be OSS or API-based); maintainers’ willingness to require validation artifacts.

- Standards/specification engineering (academia, policy, software)
  - Introduce an “adversarial review” track for standards text and editorial changes; apply divergence-hunting across multiple implementations to surface conformance defects.
  - Concrete implementations: a CI job that submits auto-generated tests to GCC/Clang/MSVC (or N implementations) and flags disagreements for committee review; “spec-diff targeting” that prioritizes recently changed sections.
  - Dependencies/assumptions: access to multiple implementations and their CI; committee acceptance of adversarial roles; known oracles (normative text, reference tests).

- Compiler/toolchain QA (industry, software)
  - Use LLM-generated tests plus adversarial critics to detect conformance bugs and to validate fixes with cross-family reviewers.
  - Concrete implementations: an automated “divergence hunter” harness via Compiler Explorer or internal runners; a patch-validation gate requiring CMC sign-off.
  - Dependencies/assumptions: stable test harnesses; compute budget; multiple compilers or build modes; human-in-the-loop for final triage.

- General software QA and code review (industry, software)
  - Stage-gated adversarial reviewers for AI-authored patches: require a passing empirical gate (tests, benchmarks) and a cross-family critic prior to merge.
  - Concrete implementations: PR checks that run tests and solicit a fresh-context review from a different model family before allowing merge.
  - Dependencies/assumptions: reliable unit/integration tests; API access to at least two model families; change-management policies.

- Compliance auditing in regulated domains (finance, security, government)
  - Apply adversarial multi-agent review to normative compliance (e.g., FIPS 140-3) to pre-validate evidence packages and detect gaps before audits.
  - Concrete implementations: audit-prep pipelines that check requirements with cold-start critics and seek empirical artifacts (logs, test runs, cert outputs).
  - Dependencies/assumptions: access to compliance artifacts; domain-specific oracles; authorization to execute tests on controlled systems.

- Security operations and PoC execution (industry, software)
  - Automated cloud VM provisioning for empirical validation as a mandatory gate in vuln workflows.
  - Concrete implementations: Terraform/Ansible recipes invoked by the orchestrator to run PoCs in ephemeral, isolated environments; results fed back to triage.
  - Dependencies/assumptions: cloud accounts; sandboxing and egress controls; legal authorization.

- Education and training (academia, industry upskilling)
  - Falsification-first labs that teach adversarial review, context asymmetry, and “unanimity-as-warning”; use the released 56-rule playbook in coursework.
  - Concrete implementations: classroom modules where students run Stage A–D on safe open-source targets with scrubbed data.
  - Dependencies/assumptions: LLM access for students; sanitized datasets; instructional oversight.

- Risk and severity calibration (industry, policy)
  - Structured CVSS calibration via adversarial agents to avoid overclaiming severity in disclosures and advisories.
  - Concrete implementations: a severity-check workflow that re-argues preconditions and impact under cold-start and senior-tier review.
  - Dependencies/assumptions: access to relevant environmental metrics; disclosure coordination norms.
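The “divergence hunter” idea from the standards and compiler items above comes down to comparing what several implementations do with the same test and flagging any disagreement for human review. A minimal sketch follows, assuming the per-implementation outcomes have already been collected by some harness; the outcome strings and the sample data are made up for illustration.

```python
from collections import defaultdict


def group_by_outcome(results: dict[str, str]) -> dict[str, list[str]]:
    """Group implementation names by the outcome each one produced."""
    groups: dict[str, list[str]] = defaultdict(list)
    for implementation, outcome in results.items():
        groups[outcome].append(implementation)
    return dict(groups)


def needs_review(results: dict[str, str]) -> bool:
    """A test is flagged when implementations disagree on the outcome."""
    return len(group_by_outcome(results)) > 1


# Made-up outcomes for one auto-generated test case.
sample = {"GCC": "accepts", "Clang": "accepts", "MSVC": "rejects"}

print(group_by_outcome(sample))
print(needs_review(sample))
```

A disagreement does not say which implementation is wrong; it only marks the test as a candidate, which still has to pass the adversarial and validation stages against the normative text.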

Long-Term Applications

These use cases require further research, evaluation, or ecosystem scaling (e.g., higher-fidelity oracles, cross-organizational standards, regulatory alignment).

- Safety-critical AI pre-action verification (healthcare, robotics, transportation, energy)
  - Use kill-mandated, cross-family critics and simulation-based empirical gates to verify AI plans or recommendations before execution.
  - Potential products: “pre-flight adversarial gate” for surgical planning, robot motion plans, grid control actions, or AV maneuvers.
  - Dependencies/assumptions: high-fidelity simulators and testbeds; access to protected data under compliance; rigorous incident response; regulator buy-in.

- Cross-domain reliability primitive for LLM outputs (media, finance, legal)
  - Adopt kill-gate ensembles and context isolation as a standard reliability pattern for high-stakes content generation (reports, filings, forecasts).
  - Potential products: newsroom fact-checker agents; financial report validators; legal drafting gates with cross-family critics.
  - Dependencies/assumptions: access to independent data oracles (market feeds, statutes, case law); strong logging and audit trails.

- Proof-assistant and formal methods integration (software, hardware)
  - Replace or augment empirical gates with proof oracles (Coq/Lean/Isabelle) as part of Stage C where formal specs exist.
  - Potential products: “LLM-to-proof” validators that discharge obligations against formal specs; proof-guided patch generators with adversarial refutation.
  - Dependencies/assumptions: formalizable specifications; proof engineering capacity; trustworthy extraction to executables.

- Automated “resurrection agent” to reduce false negatives (software, security)
  - A context-isolated confirm agent that triggers after unanimous kills to attempt recovery of true positives (addressing unidirectional bias).
  - Potential products: pipeline modules that auto-resurrect and re-test borderline candidates with alternative parameter spaces.
  - Dependencies/assumptions: additional compute and time budget; careful thresholding to avoid reintroducing noise; empirical evaluation.

- Unified disclosure and triage platform (industry, policy)
  - An end-to-end enterprise product that orchestrates SCH, adversarial gates, CMC, PoC execution, CVSS calibration, and disclosure packaging with legal guardrails.
  - Potential products: “Adversarial Gate” SaaS for security teams and bug bounty platforms with audit logs and policy templates.
  - Dependencies/assumptions: partnerships with platforms/vendors; hardened sandboxing; detailed compliance and logging.

- Standardization of FP metrics and minority-veto protocols (policy, academia, industry consortia)
  - Sector-wide benchmarks and guidelines that require adversarial validation and cross-family critics for AI-generated vulnerability reports or conformance findings.
  - Potential products: standards from ISO/IEC, IETF, or industry groups specifying validation gates and acceptance criteria.
  - Dependencies/assumptions: multi-stakeholder consensus; reference implementations; funding for shared infrastructure.

- Cross-industry compliance assistants (finance, healthcare, government)
  - Adversarial multi-agent audits for SOC 2, ISO 27001, SOX, and HIPAA that combine rule libraries, cold-start critics, and evidence-oracle gates.
  - Potential products: audit copilots that pre-validate controls evidence and flag ambiguous interpretations with cross-family dissent.
  - Dependencies/assumptions: secure evidence access; domain-specific oracles; auditor acceptance.

- Refusal-calibrated agent frameworks (industry, AI platforms)
  - Systematic propagation of authorization context to subagents to reduce over-refusal in authorized cybersecurity tasks while maintaining safety.
  - Potential products: agent SDKs with built-in trust-context propagation, justification logging, and refusal metrics.
  - Dependencies/assumptions: LLM vendor support for context signals and policy hooks; organizational governance to define authorizations.

- Decentralized adversarial verification consortia (industry, open-source)
  - Cross-organizational networks that share cross-model critics to counter correlated errors and provide external validation signals.
  - Potential products: federated “CMC-as-a-service” with privacy-preserving protocols and artifact escrow for embargoed issues.
  - Dependencies/assumptions: legal data-sharing frameworks, privacy-preserving computation, trust arrangements and SLAs.

- Sector-specific simulators and oracles (healthcare, energy, robotics, finance)
  - Build high-quality empirical oracles that enable strong Stage C validation beyond software (e.g., clinical emulators, grid simulators, market replay).
  - Potential products: domain “oracle packs” that plug into the adversarial gate.
  - Dependencies/assumptions: data access, validation against real-world outcomes, ongoing maintenance to prevent drift.

Glossary

- Adversarial kill mandate: A reviewer directive that prioritizes disproving a candidate finding rather than improving it. "The critic carries a kill mandate rather than an improve/evaluate mandate"

- Anchoring cascades: A bias where early information or framing causes subsequent reviewers to converge prematurely. "cold-start reviewers are intended to reduce anchoring cascades"

- Bleichenbacher padding oracle: A class of RSA PKCS#1 v1.5 attacks where padding validity leaks information enabling decryption. "a non-existent Bleichenbacher padding oracle in OpenSSL's CMS module"

- Cold-start reviewers: Reviewers who are given minimal prior context to avoid bias from earlier analyses. "cold-start reviewers are intended to reduce anchoring cascades"

- Coordinated disclosure: A process where vulnerabilities are privately reported and handled with vendors before public release. "under coordinated disclosure"

- Context asymmetry: Deliberately giving reviewers different amounts or views of context to reduce shared errors. "context asymmetry, and a Cross-Model Critic (CMC)"

- Context isolation: Separating production and review contexts to prevent persuasive bias or contamination between agents. "Refute-or-Promote's context isolation was designed to prevent"

- Compiler conformance bugs: Defects where a compiler deviates from the language specification’s required behavior. "3 compiler conformance bugs"

- Cross-family review: Using models from different families to reduce correlated error patterns and shared blind spots. "cross-family review can catch correlated blind spots that same-family review misses"

- Cross-Model Critic (CMC): A critique stage using models from a different family with minimal context to detect correlated errors. "We name this stage's mechanism the Cross-Model Critic (CMC)"

- CVE (Common Vulnerabilities and Exposures): A standardized identifier system for publicly known cybersecurity issues. "4 CVEs [3 public, 1 embargoed]"

- CVSS (Common Vulnerability Scoring System): A framework for rating the severity of security vulnerabilities. "CVSS 7.8"

- CVSS calibration: Adjusting claimed severity using CVSS metrics during adversarial review. "CVSS calibration data"

- Embargo: A temporary restriction on public disclosure of a vulnerability until coordinated processes complete. "under embargo"

- Empirical validation gate: A mandatory stage requiring runtime tests or proofs-of-concept before promoting a candidate. "Empirical validation gate: no candidate reaches disclosure without empirical confirmation."

- Execution-grade oracle: A reliable, testable ground-truth mechanism (e.g., running code/tests) used to adjudicate findings. "an execution-grade oracle"

- FIPS 140-3 normative compliance: Conformance with the U.S. Federal standard for cryptographic modules and their validation. "FIPS 140-3 normative compliance issues"

- GCM check: The integrity/authentication verification step in AES-GCM that validates the authentication tag. "fails the GCM check"

- Git log hotspot analysis: Identifying high-churn code regions via version-control history to prioritize review focus. "a git log hotspot analysis"

- Godbolt Compiler Explorer API: An online tool/API for compiling and testing code across multiple compilers. "via the Godbolt Compiler Explorer API"

- ISO C++ Working Paper: The current draft of the ISO C++ standard under active development. "accepted to the ISO C++ Working Paper"

- Kill gate: A promotion checkpoint where a single strong refutation can veto a candidate from advancing. "Our kill-gate is the architectural analogue"

- LWG (Library Working Group): The ISO C++ committee subgroup responsible for the standard library. "LWG 4549 accepted to the ISO C++ Working Paper"

- ML-DSA zeroisation: Securely erasing sensitive key material for the ML-DSA (a digital signature scheme) to prevent leakage. "ML-DSA zeroisation"

- Nonce: A “number used once,” commonly a unique per-operation value in cryptographic protocols. "its own nonce computation"

- N-version programming: A fault-tolerance approach using independently developed program versions to reduce correlated failures. "N-version programming"

- Orchestrator agent: The coordinating agent that routes candidates and controls stage progression in the pipeline. "Inter-stage routing is executed by the orchestrator agent"

- PKCS#1 padding: The RSA padding scheme defined in PKCS#1; its validation behavior affects oracle vulnerabilities. "valid PKCS#1 padding"

- PoC self-contamination: A failure mode where a proof-of-concept measures its own artifacts instead of the target system. "PoC self-contamination"

- Popperian falsification: Testing by aggressive attempts to refute (falsify) claims rather than confirm them. "Popperian falsification framework"

- RAG+ReAct ensemble: A multi-agent pattern combining Retrieval-Augmented Generation with ReAct-style reasoning and action. "as a cooperative RAG+ReAct ensemble"

- RFC errata: Official corrections to published IETF Request for Comments documents. "RFC 9000 Errata"

- SAST (Static Application Security Testing): Automated analysis of source code to detect potential security issues without executing it. "SAST tool alerts"

- Stratified Context Hunting (SCH): A candidate-generation strategy stratified by source, scope, and iteration waves. "Stratified Context Hunting (SCH)"

- Training-data priors: Shared biases induced by model training data that can cause correlated errors across agents. "shared training-data priors"

- Triage capacity: The practical ability of maintainers to process and evaluate incoming reports. "overwhelming triage capacity"

- Unanimity-as-warning: The observation that unanimous agent agreement can indicate shared bias rather than truth. "unanimity-as-warning in both directions"

- Validation oracle: The authoritative mechanism used to determine correctness (e.g., runtime tests or committee decisions). "validation oracles (committee acceptance rather than runtime tests)"
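The glossary entry on git log hotspot analysis describes ranking files by churn. One way to sketch it, assuming the input is the text printed by `git log --name-only --pretty=format:` (one changed path per line, blank lines between commits); the function names and the sample paths are hypothetical.

```python
from collections import Counter


def churn_counts(git_log_output: str) -> Counter:
    """Count how many commits touched each path in the given log text."""
    paths = (line.strip() for line in git_log_output.splitlines())
    return Counter(path for path in paths if path)   # skip blank separator lines


def hotspots(git_log_output: str, top: int = 3) -> list[tuple[str, int]]:
    """Return the most frequently changed paths, highest churn first."""
    return churn_counts(git_log_output).most_common(top)


# Made-up log text standing in for real repository history.
sample_log = """
src/parser.c
src/util.c

src/parser.c

src/parser.c
docs/README.md
src/util.c
"""

print(hotspots(sample_log, top=2))   # [('src/parser.c', 3), ('src/util.c', 2)]
```

High churn is only a prioritization signal for where hunters look first; it says nothing about whether a file actually contains a defect.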
