- The paper introduces a unified transformer that processes detection, lane marking, trajectory planning, and reasoning tasks in a single token-centric architecture.

- It achieves state-of-the-art performance on nuScenes and NAVSIM benchmarks with superior trajectory precision, safety metrics, and a 40% reduction in inference latency.

- The approach leverages pretrained vision-language backbones, specialized feedforward networks, and curriculum training to enable joint optimization across multiple driving paradigms.

OneDrive: Unified Multi-Paradigm Driving with Vision-Language-Action Models

Introduction and Motivation

The complexity inherent to autonomous driving stems from its multi-paradigm nature: perception, semantic reasoning, and decision-making operate over distinct modalities and output formats. Previous paradigms in vision-language-action (VLA) models partitioned these tasks with isolated or cascaded decoders, often at the cost of information bottleneck and hampered backbone sharing. OneDrive proposes a unified transformer-based architecture that reconciles the dichotomy between autoregressive (text generation, reasoning) and parallel (perception, planning) decoding, remaining close to the backbone of pretrained vision-LLMs (VLMs).

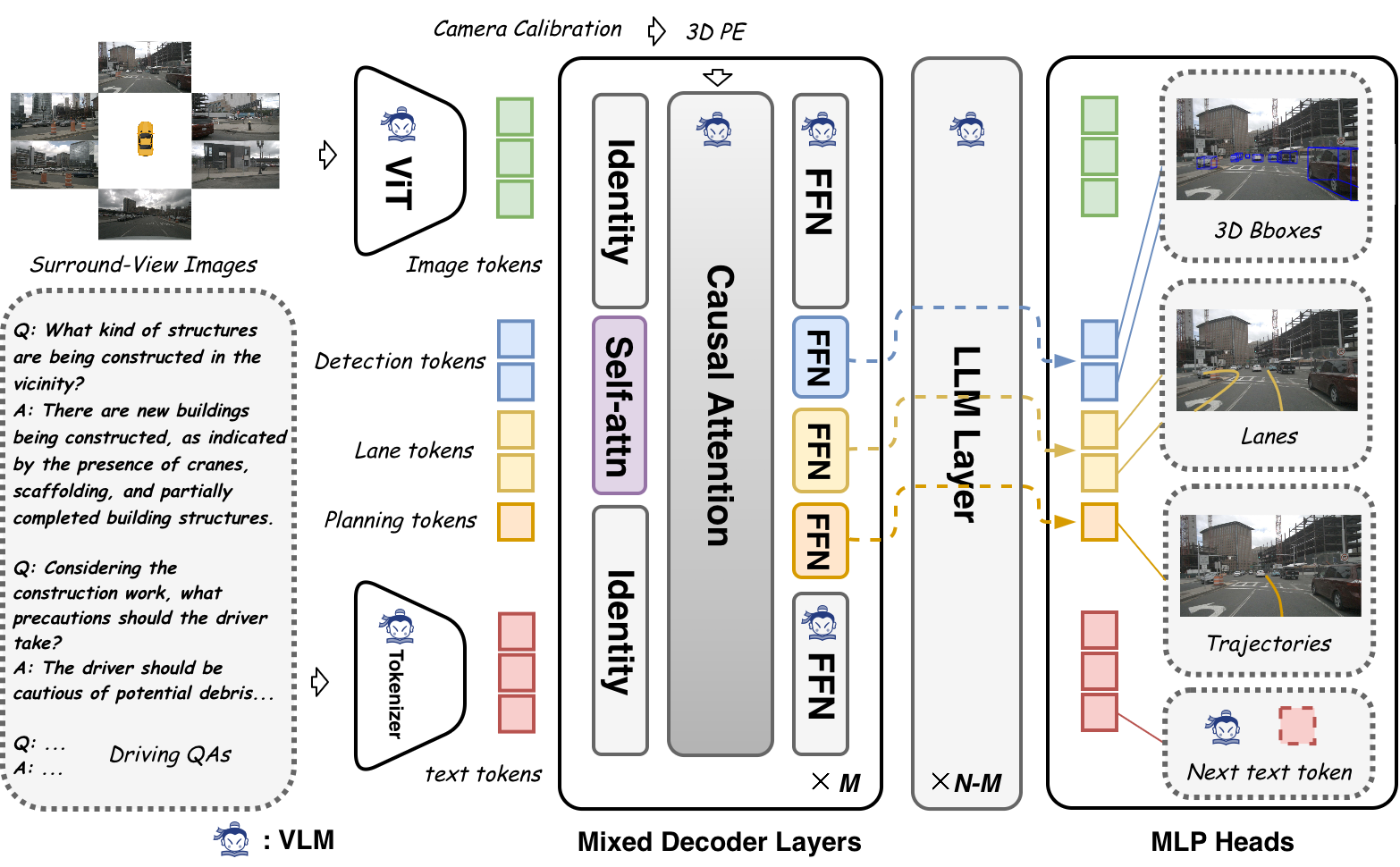

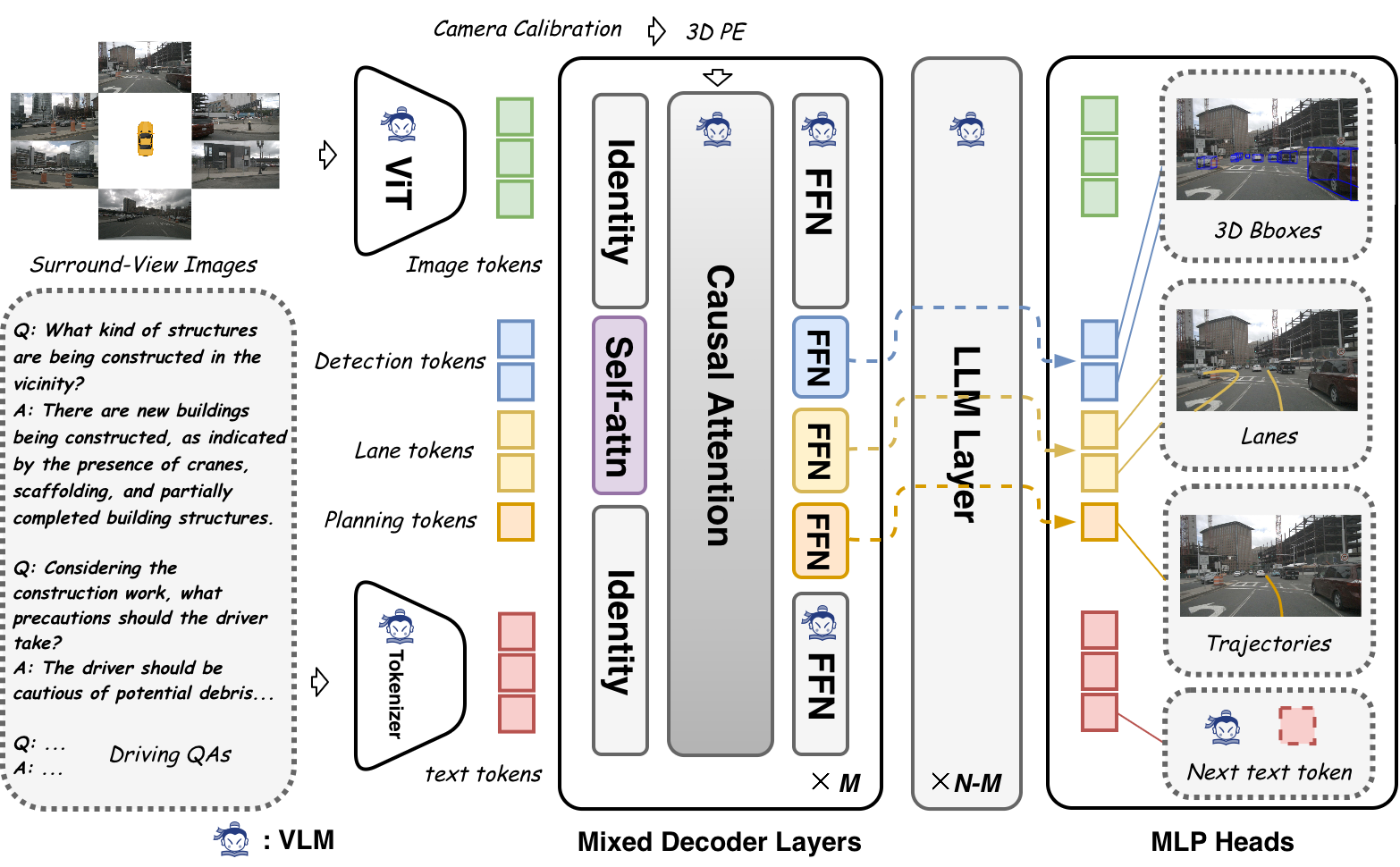

Recent evidence indicates strong transferability of pretrained VLM causal attention beyond conventional language modeling, but highlights a critical deficiency in transferring feed-forward networks (FFNs) to multi-paradigm driving tasks. OneDrive leverages this by keeping causal attention as a universal modeling interface, while inserting task-specific self-attention and FFNs at shallow decoder layers to support structured predictions. This architecture enables planning, perception, and reasoning to jointly optimize within a unified token-centric transformer, eliminating the need for specialized decoder branches.

Unified Architecture

OneDrive organizes heterogeneous task outputs (detection, lanes, trajectories, and language tokens) into a single causal sequence. Surround-view images are tokenized via a ViT, concatenated with query tokens for perception and planning (modeled as learnable tokens following DETR/StreamPETR conventions), then followed by text tokens for reasoning/generation. The sequence is processed by a mixed transformer architecture anchored in a frozen pretrained LLM attention backbone. Perceptual queries undergo additional self-attention—preserving parallelism and spatial context—while their feature extraction is task-specific via dedicated FFNs. The final MLP heads decode structured outputs (e.g., 3D bounding boxes, lane structures, continuous trajectory vectors) in parallel. Textual reasoning is performed in an autoregressive manner at the sequence tail.

Figure 1: The OneDrive architecture, highlighting unified token sequencing and parallel decoding within a single causal transformer backbone.

Critically, task order determines conditional dependencies: putting perception before planning ensures that planned trajectory queries can integrate detailed semantic and geometric context, while retaining the capacity to backpropagate through shared attention. Joint optimization is achieved via three-stage curriculum: initial perception- and language-focused pretraining, followed by planning adaptation using anchor-based queries, and finally joint tuning over all objectives. This design fully exploits backbone reusability and inter-task synergy.

Experimental Validation

Empirical results on nuScenes and NAVSIM benchmarks establish the efficacy of OneDrive, both in open-loop and closed-loop settings. In nuScenes open-loop planning (no ego-vehicle state, image-only input), OneDrive attains state-of-the-art accuracy:

- L2 displacement error: 0.28 m (avg)

- Collision rate: 0.18% (avg)

Both results surpass competitive baselines, demonstrating strong trajectory precision and safety. On NAVSIM closed-loop evaluation, OneDrive reaches 86.8 PDMS—a composite metric incorporating safety, rule-compliance, and efficiency—demonstrating its superior interaction stability and long-term planning. Notably, the architecture's unified decoder reduces inference latency by ~40% compared to comparable systems that need full LLM decoding or custom attention masking.

Ablation studies confirm that:

- Retaining causal attention is essential for all task types; transferring FFNs from language-centric pretraining is detrimental to perception/planning stability.

- Integrating text supervision during training regularizes the unified architecture and reduces collision rate without hurting language generation performance.

- Curriculum training and ordering of perception/planning tokens is central to stabilizing optimization and achieving optimal closed-loop metrics.

Theoretical and Practical Implications

The OneDrive formulation demonstrates that pretrained vision-LLM (VLM) backbones can be straightforwardly adapted for unified multi-paradigm structured output, provided the interface for conditional attention is carefully designed. The resultant hardware efficiency—due to standard attention masking and elimination of cascading—offers a practical deployment advantage, supporting latency-sensitive and high-throughput settings critical for autonomous vehicles.

Theoretically, this decouples the architectural pressure to bias models toward either pure language or perception-planning centricity. Instead, it reframes joint reasoning as a token-centric sequence manipulation problem, with domain adaptation occurring primarily through plugging in lightweight task-specific heads. This suggests that future VLMs pre-trained on general web-scale data could be efficiently adapted to a wide spectrum of embodied agent control domains, provided their attention interface is sufficiently expressive and regularized during downstream task adaptation.

Future Research Directions

The observed underperformance—relative to BEV-specialized vision models—for pure perception tasks underscores a core limitation: current VLM ViTs sacrifice spatial fidelity due to aggressive downsampling and pretraining on language-centric objectives. Research extending pretraining targets to object-centric and geometric tasks, increasing token resolution, or employing cross-scale fusion strategies could further close the performance gap. Additionally, systematic study of model/data scaling for unified VLA models is needed to clarify generalization boundaries, robustness, and reasoning depth across challenging open-world scenarios.

Conclusion

OneDrive introduces a unified end-to-end architecture for autonomous driving, reconciling parallel and autoregressive output via a single pretrained VLM transformer. By structurally decoupling attention and feedforward adaptation, it establishes state-of-the-art performance on driving benchmarks while maintaining hardware efficiency and multi-modal output flexibility. The results validate the feasibility of token-centric, unified modeling for VLA tasks, and point the way to future research on scaling, training, and representational capacity in foundation models for embodied autonomous agents.

Reference: "OneDrive: Unified Multi-Paradigm Driving with Vision-Language-Action Models" (2604.17915)